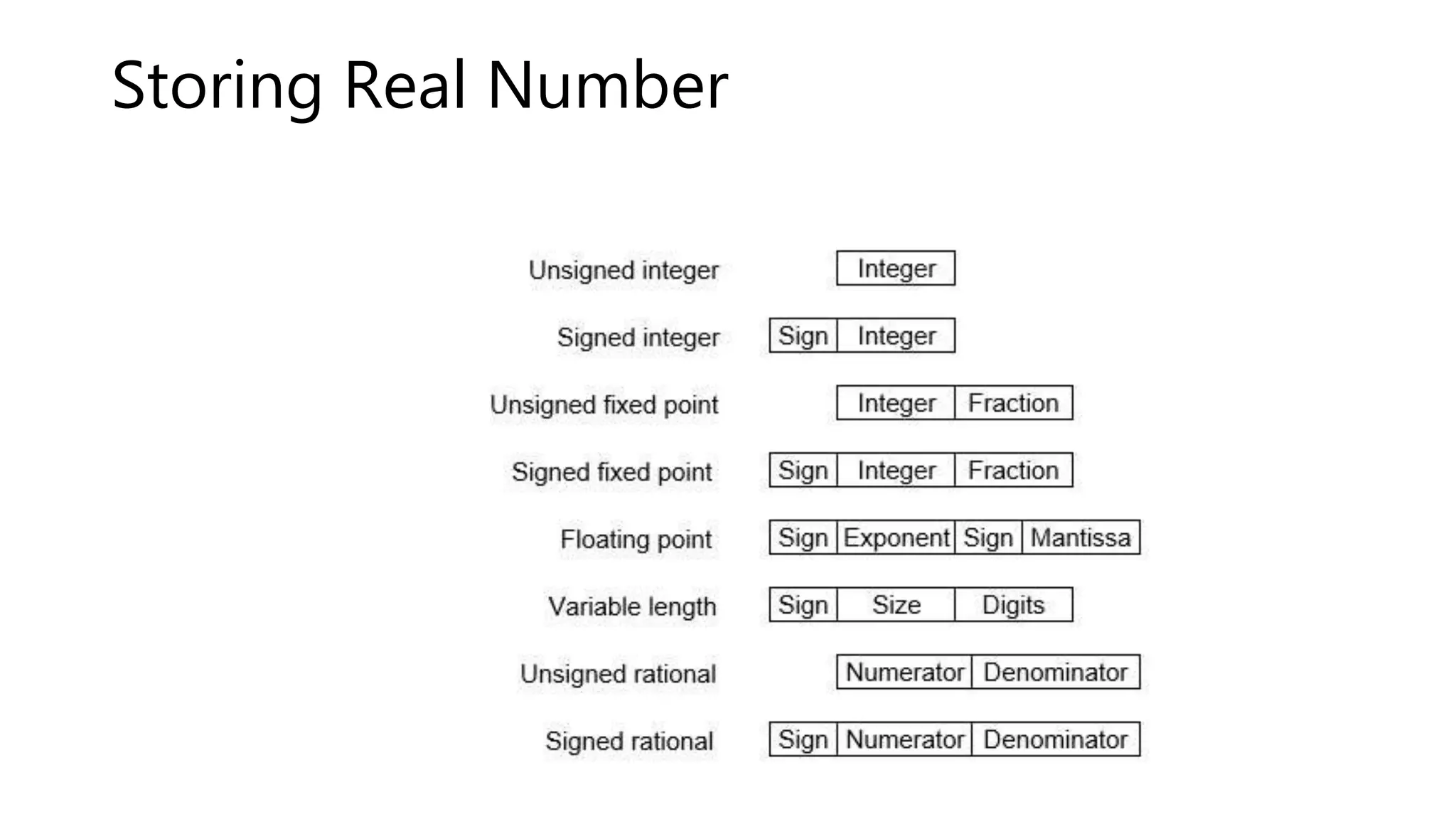

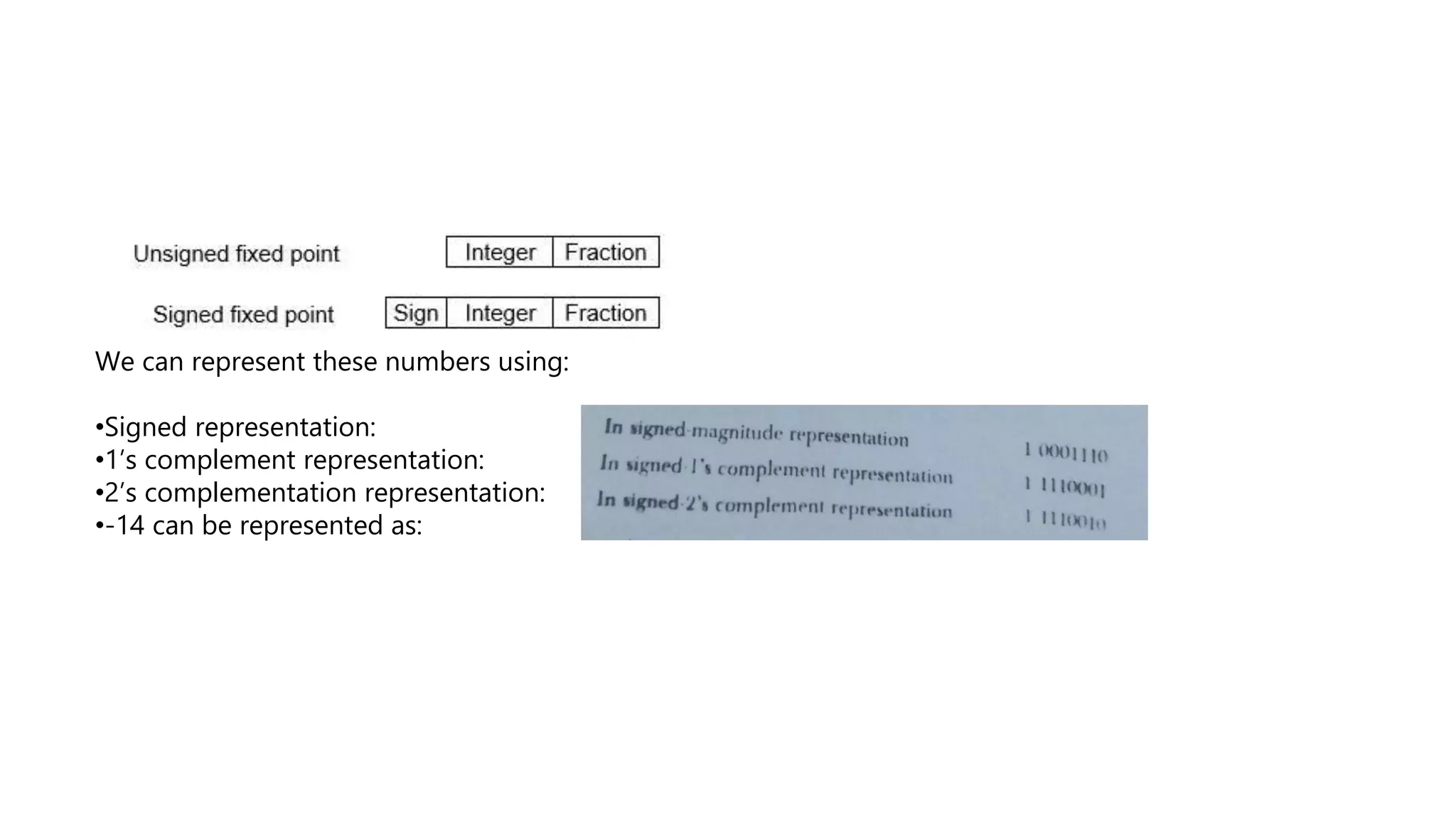

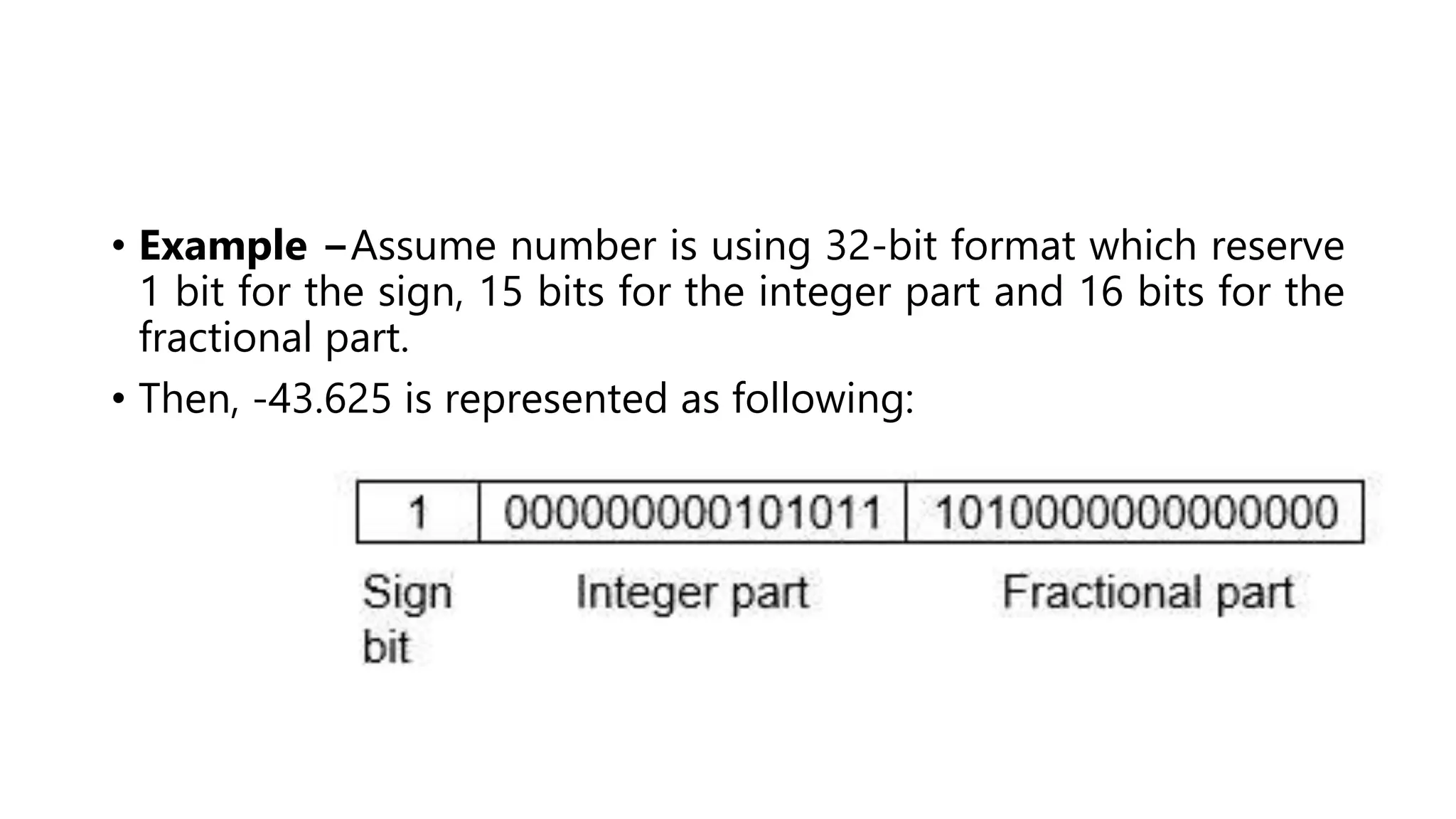

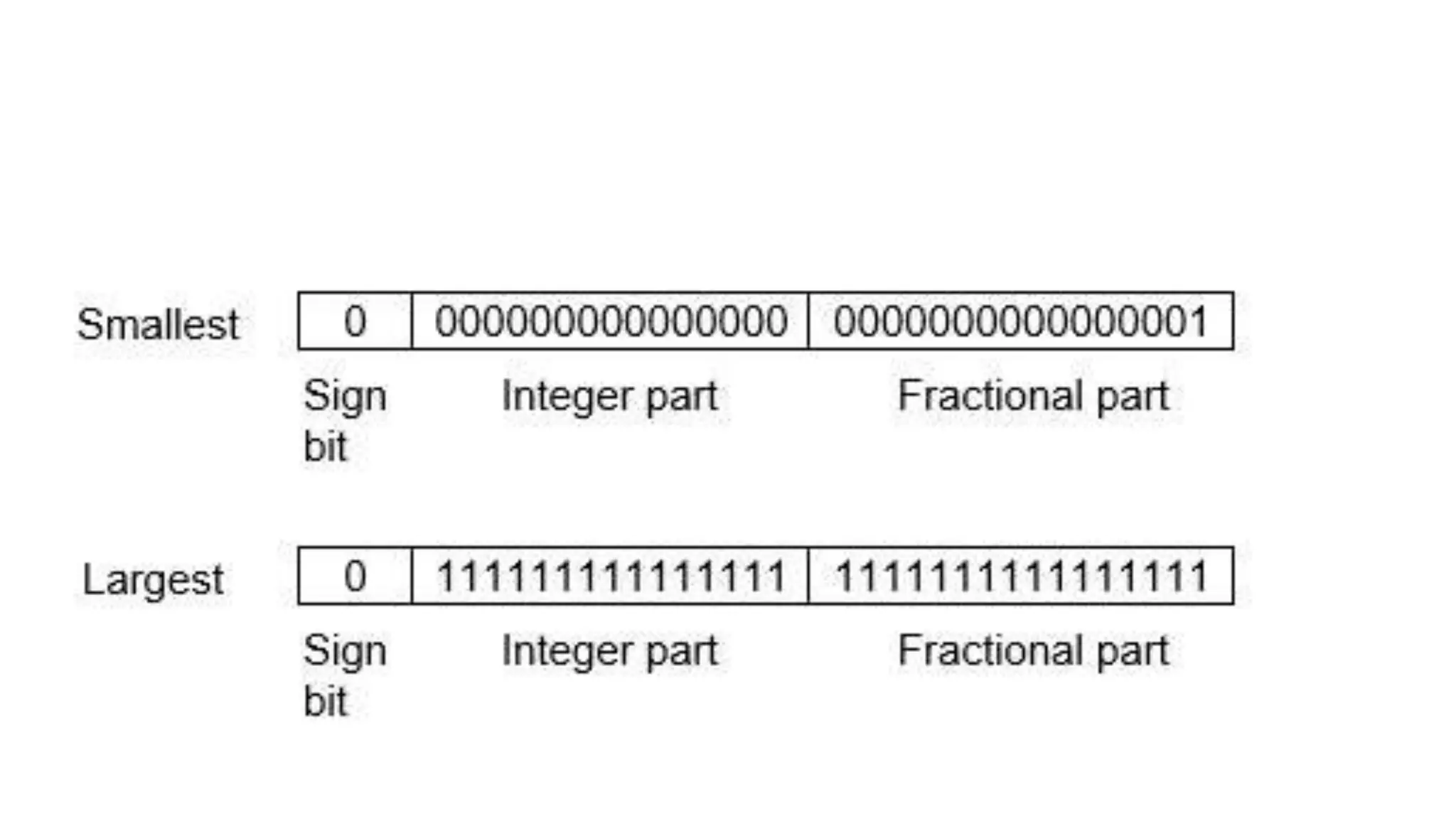

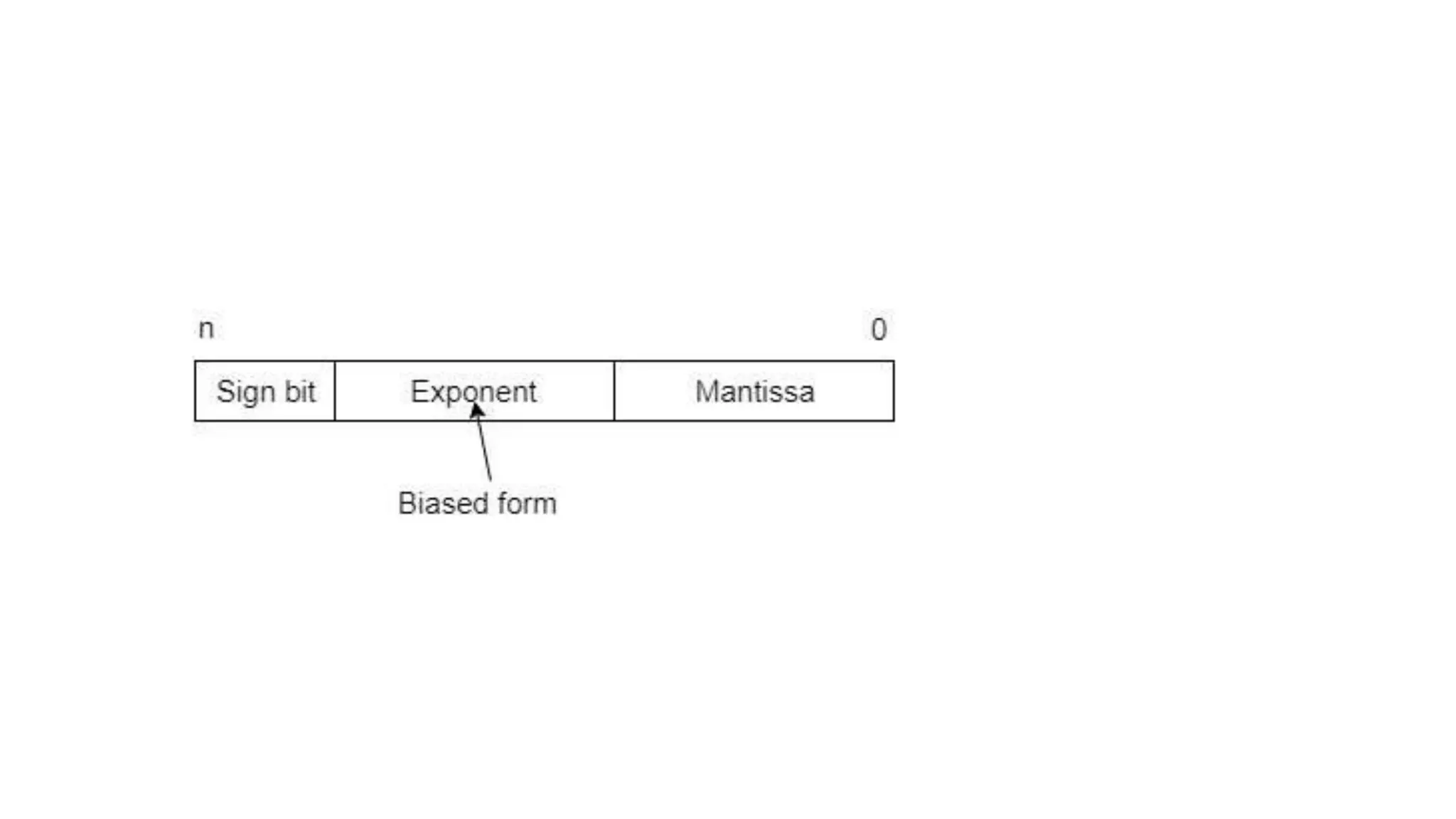

There are two main ways to represent real numbers in computing: fixed-point and floating-point notation. Fixed-point notation reserves a fixed number of bits for the integer part and a fixed number for the fractional part, so both the range and the resolution of representable values are set in advance. Floating-point notation instead reserves bits for the sign, the exponent, and the significand (mantissa). This covers a much wider range of values, but at the cost of precision: the gap between adjacent representable values grows with the magnitude of the number. A floating-point value is (-1)^s * m * 2^e, where s is the sign bit, m is the normalized significand (a binary fraction; in IEEE 754, 1 <= m < 2), and e is the exponent applied to the base 2.
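To make the two representations concrete, here is a minimal Python sketch. The function names `decompose`, `to_fixed`, and `from_fixed` are hypothetical helpers introduced for illustration, and the choice of 8 fractional bits is an arbitrary assumption. `decompose` recovers s, m, and e using the IEEE 754 style normalization 1 <= m < 2; the fixed-point helpers simply scale by a power of two and round.

```python
import math


def decompose(x):
    """Split a nonzero finite float into (s, m, e) such that
    x == (-1)**s * m * 2**e with 1 <= m < 2."""
    if x == 0 or not math.isfinite(x):
        raise ValueError("expects a nonzero finite value")
    s = 1 if x < 0 else 0
    # math.frexp returns (frac, exp) with abs(x) == frac * 2**exp
    # and 0.5 <= frac < 1, so rescale to get the range 1 <= m < 2.
    frac, exp = math.frexp(abs(x))
    return s, frac * 2, exp - 1


def to_fixed(x, frac_bits=8):
    """Encode x as an integer with frac_bits fractional bits
    (assumed format; rounds to the nearest representable step)."""
    return round(x * (1 << frac_bits))


def from_fixed(q, frac_bits=8):
    """Decode an integer produced by to_fixed back to a float."""
    return q / (1 << frac_bits)


s, m, e = decompose(-6.5)
print(s, m, e)                  # 1 1.625 2, since -6.5 == -1.625 * 2**2

q = to_fixed(3.14159)
print(q, from_fixed(q))         # 804 3.140625
```

With 8 fractional bits the fixed-point step size is always 1/256, regardless of magnitude, whereas the floating-point step size scales with 2^e. That is the range versus precision trade-off described above.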