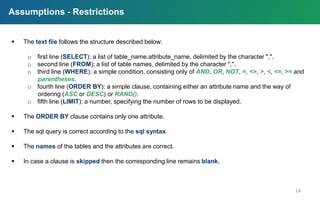

This document compares Microsoft SQL Server, a relational database management system, with Redis, an in-memory key-value data structure store. It outlines the key features, installation processes, and coding requirements for transitioning from a relational database to a key-value system. Additionally, it includes specific coding examples, assumptions, and references for further understanding.

![SQL Server vs. Redis](https://image.slidesharecdn.com/redisprojectpresentation-170829124151/85/Redis-Project-Relational-databases-Key-Value-systems-2-320.jpg)

| | Microsoft SQL Server | Redis |
| --- | --- | --- |
| Description | Microsoft's relational DBMS | In-memory data structure store, used as database |
| Database model | Relational DBMS | Key-value store |
| Implementation language | C++ | C |
| Data scheme | yes | schema-free |
| Triggers | yes | no |
| Replication methods | yes, depending on the SQL Server edition | Master-slave replication |
| Partitioning methods | tables can be distributed across several files, sharding through federation | Sharding |

Project: from a relational database to a key-value system

References: [1]
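The model difference in the comparison above (relational rows versus schema-free key-value entries) can be illustrated with a minimal sketch. It assumes each row becomes one Redis hash stored under a `table:primary-key` key; that naming scheme and the `row_to_hash` helper are hypothetical, since the slides do not state the layout actually used.

```python
def row_to_hash(table, primary_key, row):
    """Map one relational row to a (key, field-value mapping) pair.

    The "table:primary-key-value" key layout is an assumption made for
    illustration; the slides do not state the naming scheme used.
    """
    key = f"{table}:{row[primary_key]}"
    # Redis hash values are flat strings, so every column is stringified.
    fields = {column: str(value) for column, value in row.items()}
    return key, fields


key, fields = row_to_hash("student", "id", {"id": 7, "name": "Maria", "grade": 9.5})
print(key)     # student:7
print(fields)  # {'id': '7', 'name': 'Maria', 'grade': '9.5'}
```

With the redis-py client, a pair like this could then be written with `client.hset(key, mapping=fields)`.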

![Required installations](https://image.slidesharecdn.com/redisprojectpresentation-170829124151/85/Redis-Project-Relational-databases-Key-Value-systems-5-320.jpg)

Redis installation

Check if Redis is working

Required Python packages installation

References: [2]
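The "check if Redis is working" step can also be done from Python. The sketch below is one possible way, not the authors' procedure: `redis_is_working` is a hypothetical helper that accepts any client object exposing `ping()`, and `FakeClient` is a stand-in so the example runs without a server.

```python
def redis_is_working(client):
    """Return True if the server behind `client` answers PING."""
    try:
        return bool(client.ping())
    except Exception:  # connection refused, timeout, auth failure, ...
        return False


class FakeClient:
    """Hypothetical stand-in so the sketch runs without a Redis server."""

    def __init__(self, alive):
        self.alive = alive

    def ping(self):
        if not self.alive:
            raise ConnectionError("server not reachable")
        return True


print(redis_is_working(FakeClient(alive=True)))   # True
print(redis_is_working(FakeClient(alive=False)))  # False
```

Against a real installation, and assuming the `redis` package has been installed with `pip install redis`, the same function can be called with `redis.Redis(host="localhost", port=6379)`. This mirrors running `redis-cli ping` and getting `PONG` back.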

![Python coding [1] – table parsing](https://image.slidesharecdn.com/redisprojectpresentation-170829124151/85/Redis-Project-Relational-databases-Key-Value-systems-7-320.jpg)

Relational Data Insertion

References: [3]
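Since the slide's code survives only as an image, here is a rough sketch of what table parsing for relational data insertion might look like. The input format (table name on the first line, `;`-separated column names on the second, one record per following line, first column as primary key) and the `parse_table` helper are assumptions for illustration, not the project's actual format.

```python
def parse_table(text, delimiter=";"):
    """Turn a small text table into (redis_key, fields) pairs.

    Assumed layout (hypothetical): line 1 is the table name, line 2 the
    column names, the remaining lines are records whose first column is
    the primary key.
    """
    lines = [line.strip() for line in text.splitlines() if line.strip()]
    if len(lines) < 2:
        raise ValueError("expected a table name and a header line")
    table, columns = lines[0], lines[1].split(delimiter)
    pairs = []
    for record in lines[2:]:
        values = record.split(delimiter)
        if len(values) != len(columns):
            raise ValueError(f"malformed record: {record!r}")
        key = f"{table}:{values[0]}"
        pairs.append((key, dict(zip(columns, values))))
    return pairs


sample = """student
id;name;grade
1;Maria;9.5
2;Nikos;7.0
"""
for key, fields in parse_table(sample):
    print(key, fields)
# student:1 {'id': '1', 'name': 'Maria', 'grade': '9.5'}
# student:2 {'id': '2', 'name': 'Nikos', 'grade': '7.0'}
```

Each resulting pair corresponds to one hash that an insertion step could write to Redis.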

![Python coding [2] – query parsing](https://image.slidesharecdn.com/redisprojectpresentation-170829124151/85/Redis-Project-Relational-databases-Key-Value-systems-8-320.jpg)

References: [3]
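To give a feel for the query parsing step, the following sketch splits a simple SQL-like query into its parts. The supported grammar (a single-table `SELECT ... FROM ... [WHERE column = value]`) and the `parse_query` helper are assumptions; the slides do not show which query syntax the project accepts.

```python
import re

# Hypothetical grammar: SELECT <columns> FROM <table> [WHERE <column> = <value>]
QUERY_PATTERN = re.compile(
    r"^\s*SELECT\s+(?P<columns>.+?)\s+FROM\s+(?P<table>\w+)"
    r"(?:\s+WHERE\s+(?P<column>\w+)\s*=\s*(?P<value>.+?))?\s*;?\s*$",
    re.IGNORECASE,
)


def parse_query(query):
    """Split a simple query into columns, table and optional equality filter."""
    match = QUERY_PATTERN.match(query)
    if match is None:
        raise ValueError(f"unsupported query: {query!r}")
    columns = [column.strip() for column in match.group("columns").split(",")]
    where = None
    if match.group("column") is not None:
        # Drop surrounding quotes so 'Maria' and Maria compare equal later.
        where = (match.group("column"), match.group("value").strip("'\""))
    return {"columns": columns, "table": match.group("table"), "where": where}


print(parse_query("SELECT name, grade FROM student WHERE id = 7"))
# {'columns': ['name', 'grade'], 'table': 'student', 'where': ('id', '7')}
```

A regular expression keeps the sketch short. A fuller parser would also need to handle joins, multiple conditions and ordering.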

![Python coding [3] – query parsing](https://image.slidesharecdn.com/redisprojectpresentation-170829124151/85/Redis-Project-Relational-databases-Key-Value-systems-9-320.jpg)

Query Execution

References: [3]
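Query execution over key-value data can be sketched as a scan over the hashes of one table followed by filtering and projection. In this illustration a plain dictionary stands in for Redis, and both the `table:pk` key layout and the `execute_select` helper are assumptions, not the project's code.

```python
def execute_select(store, table, columns, where=None):
    """Run a single-table SELECT over hashes kept in a plain dict.

    `store` maps "table:pk" keys to field dictionaries and stands in for
    Redis here (an assumption made so the sketch runs without a server).
    """
    prefix = f"{table}:"
    results = []
    for key in sorted(store):
        if not key.startswith(prefix):
            continue  # hash belongs to another table
        row = store[key]
        if where is not None and row.get(where[0]) != where[1]:
            continue  # equality filter not satisfied
        if columns == ["*"]:
            results.append(dict(row))
        else:
            results.append({column: row[column] for column in columns})
    return results


store = {
    "student:1": {"id": "1", "name": "Maria", "grade": "9.5"},
    "student:2": {"id": "2", "name": "Nikos", "grade": "7.0"},
    "course:1": {"id": "1", "title": "Databases"},
}
print(execute_select(store, "student", ["name"], where=("id", "2")))
# [{'name': 'Nikos'}]
print(execute_select(store, "course", ["*"]))
# [{'id': '1', 'title': 'Databases'}]
```

Against a live server, the loop could instead iterate over `client.scan_iter(match=f"{table}:*")` and fetch each row with `client.hgetall(key)` in redis-py. Note that values come back as bytes unless the client is created with `decode_responses=True`.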

![Python coding [4] – query parsing](https://image.slidesharecdn.com/redisprojectpresentation-170829124151/85/Redis-Project-Relational-databases-Key-Value-systems-10-320.jpg)

Query Execution

References: [3]

![Python coding [5] – query parsing](https://image.slidesharecdn.com/redisprojectpresentation-170829124151/85/Redis-Project-Relational-databases-Key-Value-systems-11-320.jpg)

Query Execution

References: [3]

![Python coding [6] – query parsing](https://image.slidesharecdn.com/redisprojectpresentation-170829124151/85/Redis-Project-Relational-databases-Key-Value-systems-12-320.jpg)

Query Execution

References: [3]

![Python coding [7] – code metrics](https://image.slidesharecdn.com/redisprojectpresentation-170829124151/85/Redis-Project-Relational-databases-Key-Value-systems-13-320.jpg)

Query Execution

Unit Testing

Relational Data Insertion

![Query examples / results [1]](https://image.slidesharecdn.com/redisprojectpresentation-170829124151/85/Redis-Project-Relational-databases-Key-Value-systems-15-320.jpg)

Examples 1, 2 and 3

![Query examples / results [2]](https://image.slidesharecdn.com/redisprojectpresentation-170829124151/85/Redis-Project-Relational-databases-Key-Value-systems-16-320.jpg)

Examples 4 and 5

![Query examples / results [3]](https://image.slidesharecdn.com/redisprojectpresentation-170829124151/85/Redis-Project-Relational-databases-Key-Value-systems-17-320.jpg)

Examples 6 and 7

![Style check, code analysis & unit testing](https://image.slidesharecdn.com/redisprojectpresentation-170829124151/85/Redis-Project-Relational-databases-Key-Value-systems-18-320.jpg)

(1) pep8 (2) flake8 (3) pylint

![References](https://image.slidesharecdn.com/redisprojectpresentation-170829124151/85/Redis-Project-Relational-databases-Key-Value-systems-19-320.jpg)

[1] Db-engines.com. Memcached vs. Microsoft SQL Server vs. Redis Comparison. https://db-engines.com/en/system/Memcached%3bMicrosoft+SQL+Server%3bRedis [Accessed 13 Apr. 2017].

[2] Redis.io. Redis Quick Start. https://redis.io/topics/quickstart [Accessed 12 Apr. 2017].

[3] Peter Cooper. Redis 101 - A whirlwind tour of the next big thing in NoSQL data storage. https://www.scribd.com/document/33531219/Redis-Presentation [Accessed 12 Apr. 2017].

Lamprini Koutsokera (8130074) | lkoutsokera@gmail.com

Stratos Gounidellis (8130029) | stratos.gounidellis@gmail.com

BDSMasters