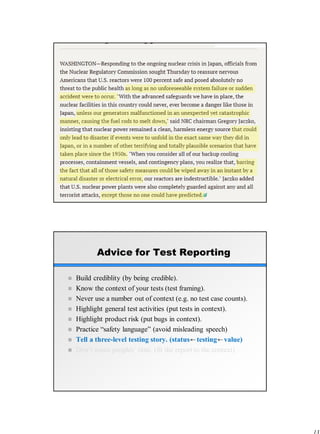

The document discusses rapid software testing and emphasizes the importance of effective test reporting in software projects. Key principles include building credibility, understanding the context of the tests, and presenting the testing process as a narrative, so that clients are accurately informed about product status and risks. The author offers several reporting strategies that improve clarity and avoid conveying misleading information, and shows why a report must convey not just the facts but the story behind the test results.