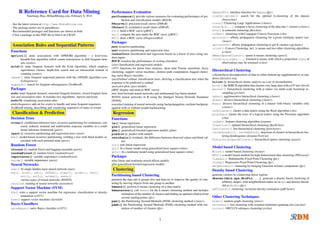

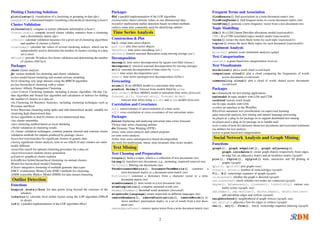

This document summarizes the data mining and text mining packages and functions available in R. It lists popular packages and functions for association rule mining, classification and prediction with decision trees and random forests, clustering, outlier detection, time series analysis, text cleaning and preparation, topic modeling, and social network analysis, along with others for evaluating model performance and visualizing results.