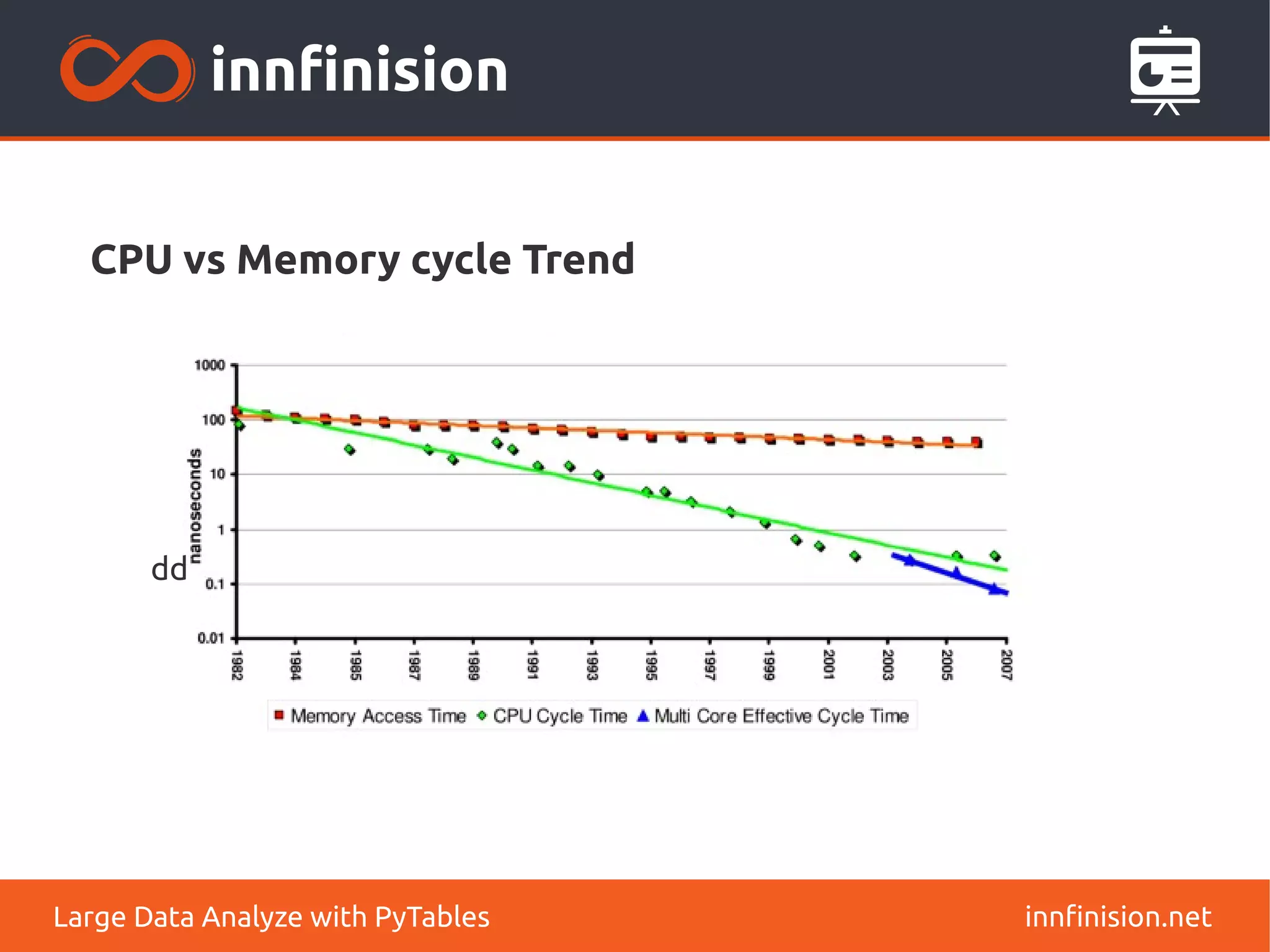

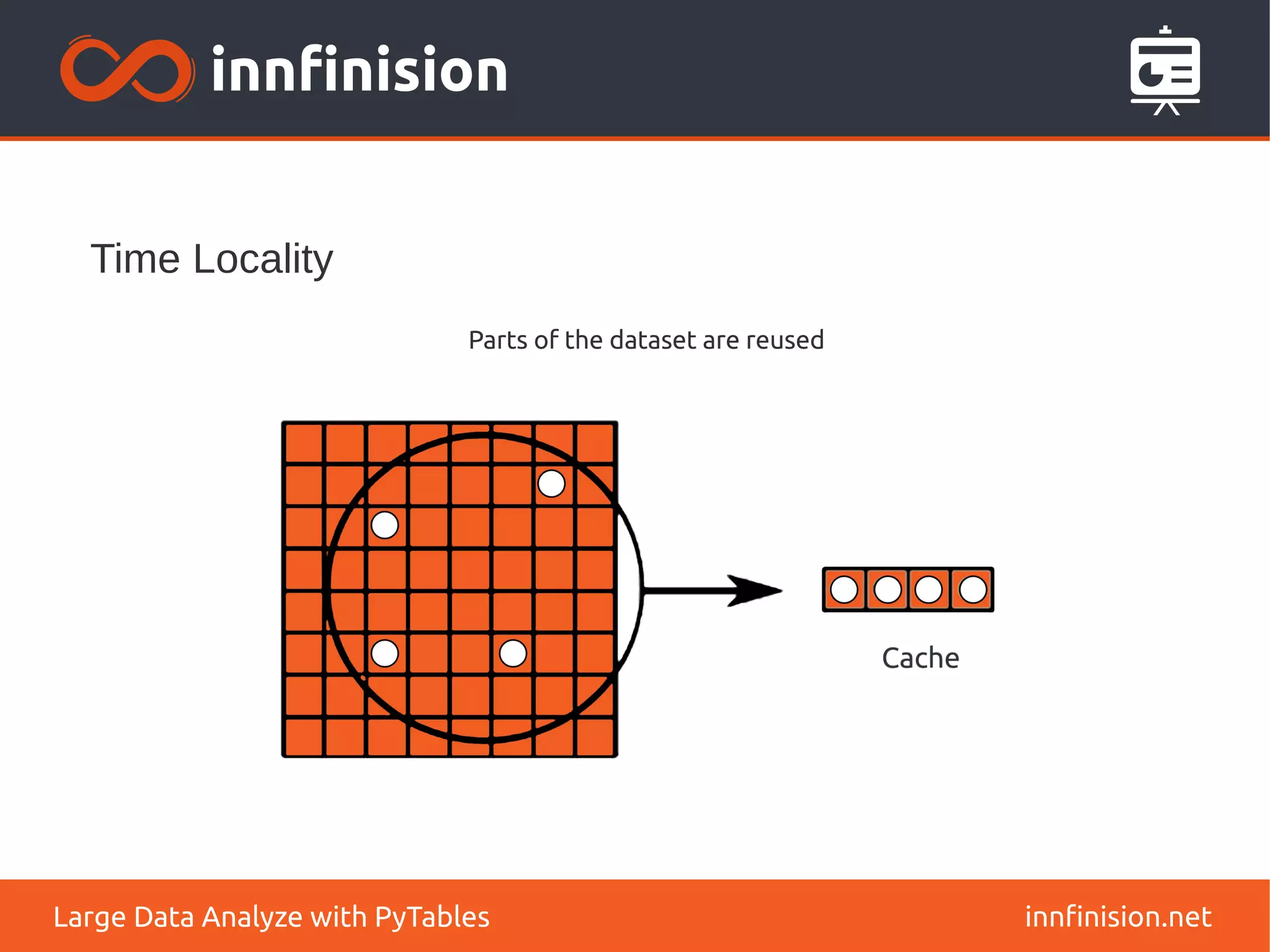

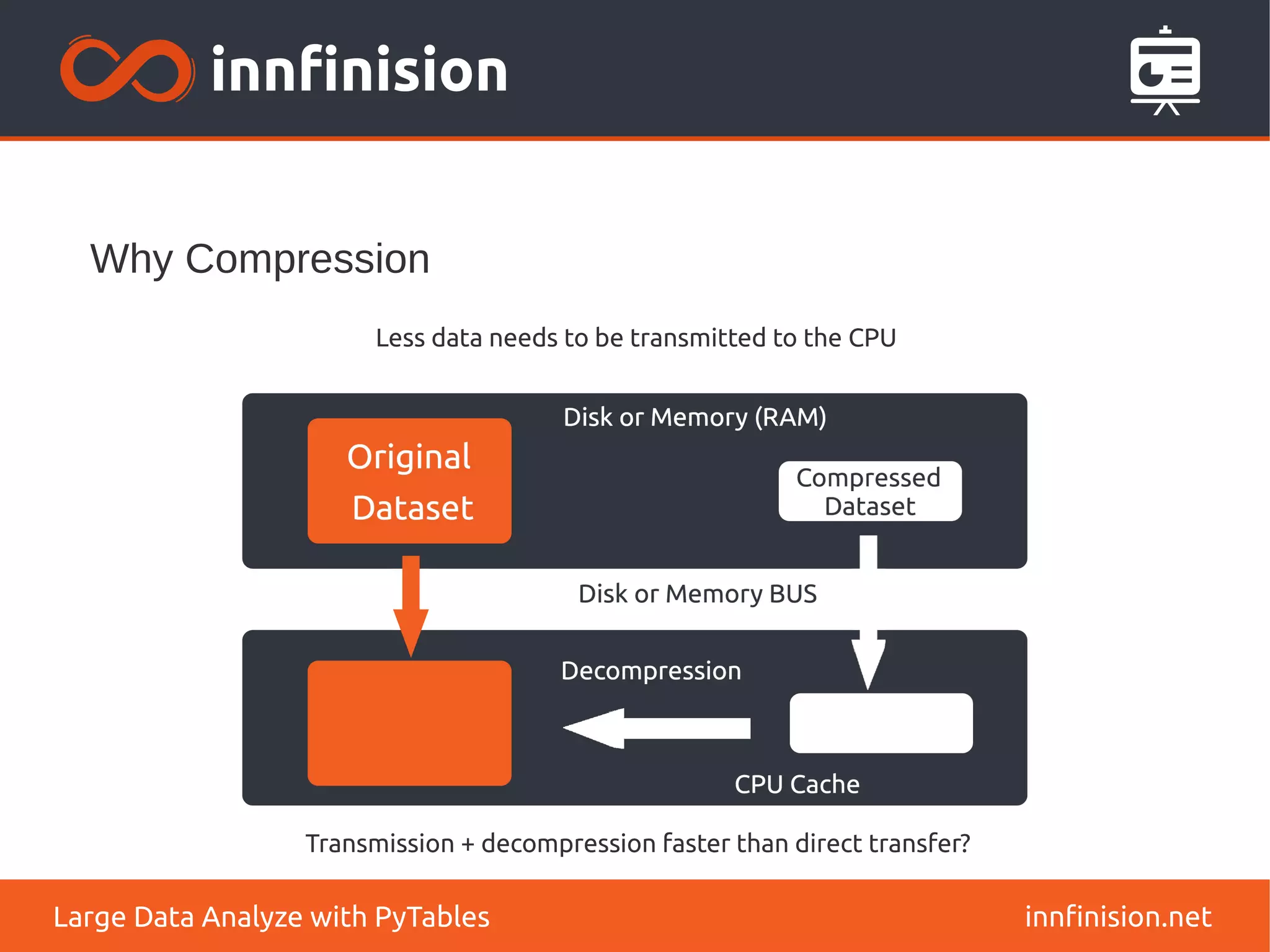

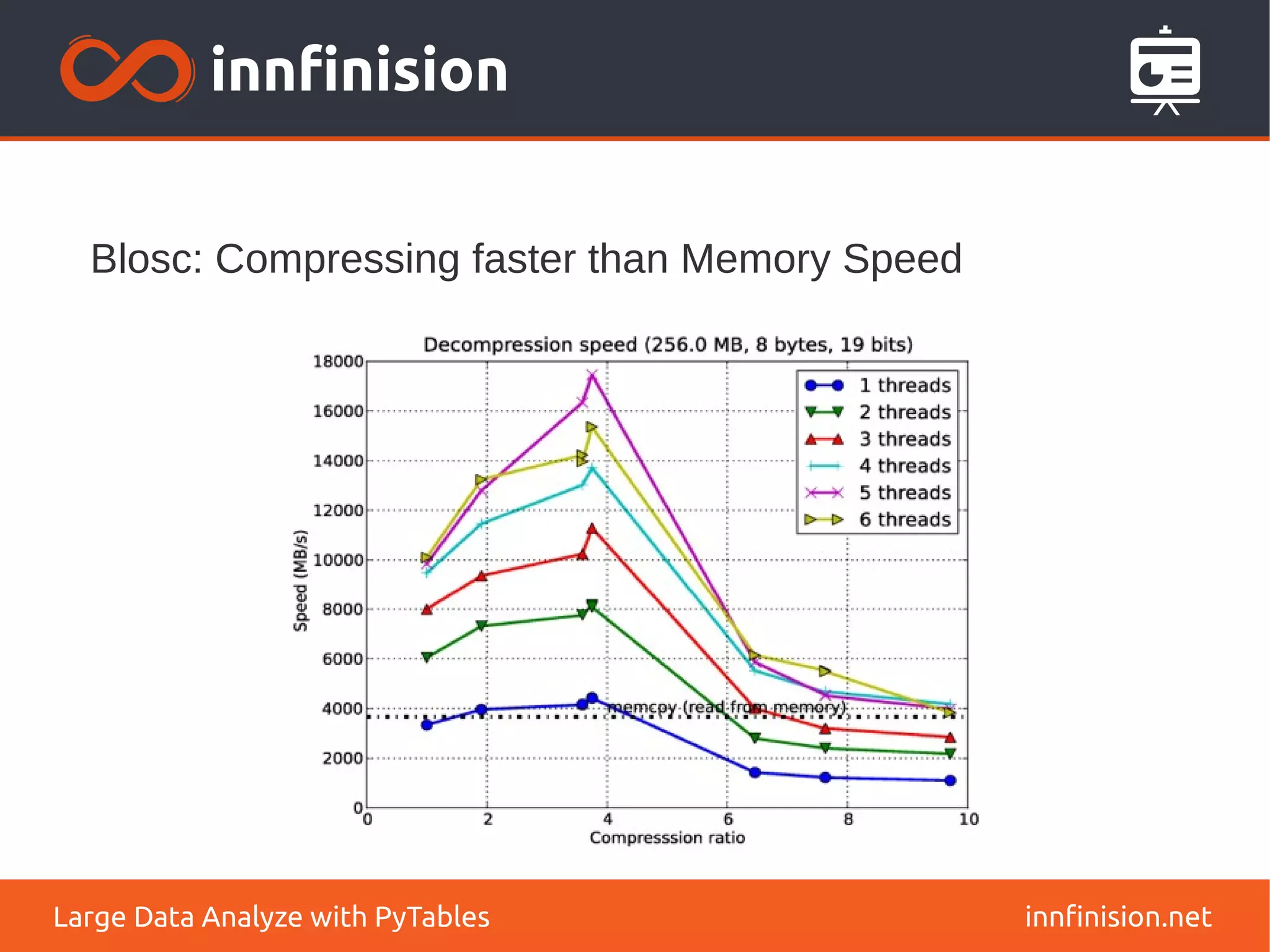

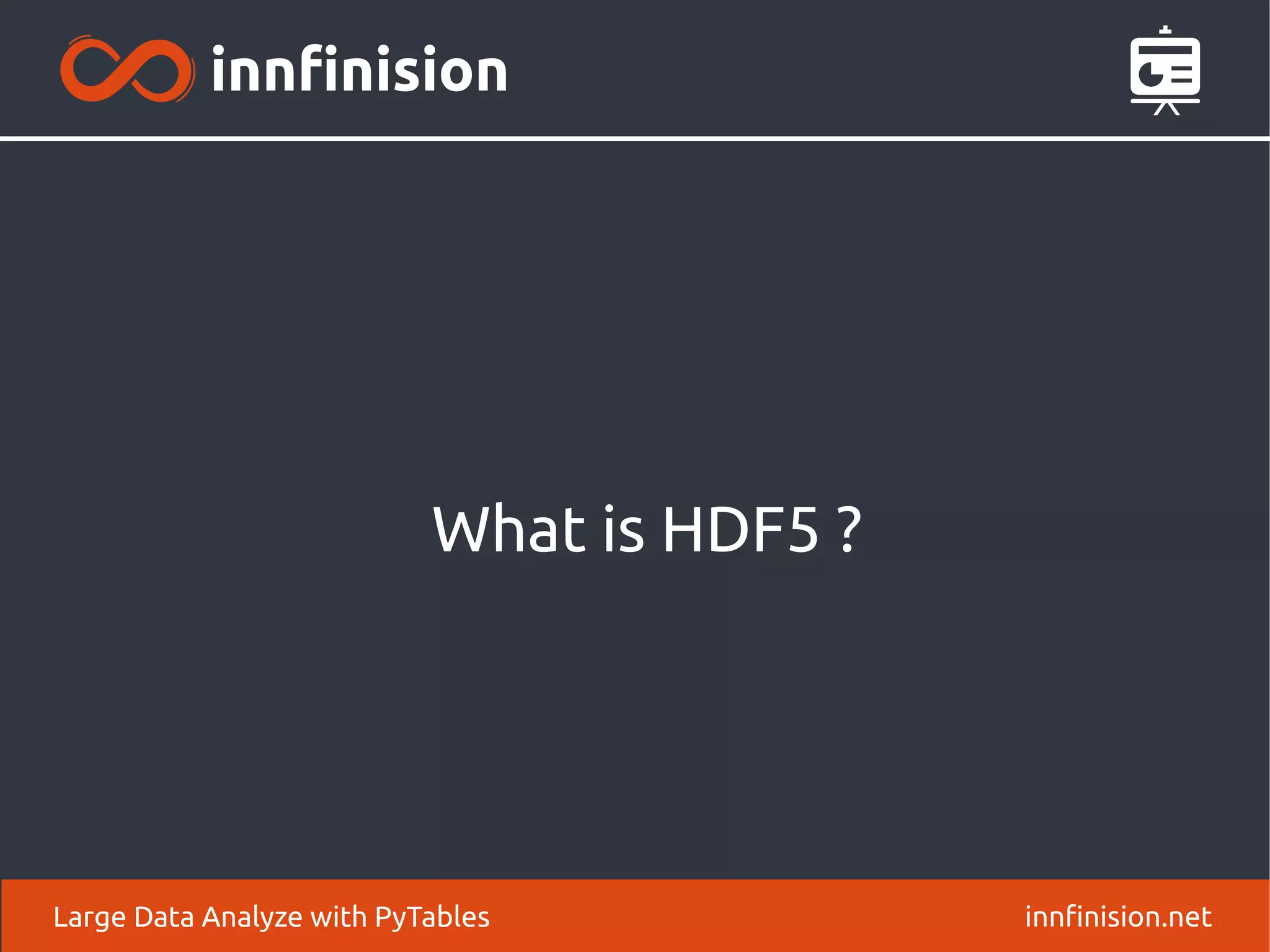

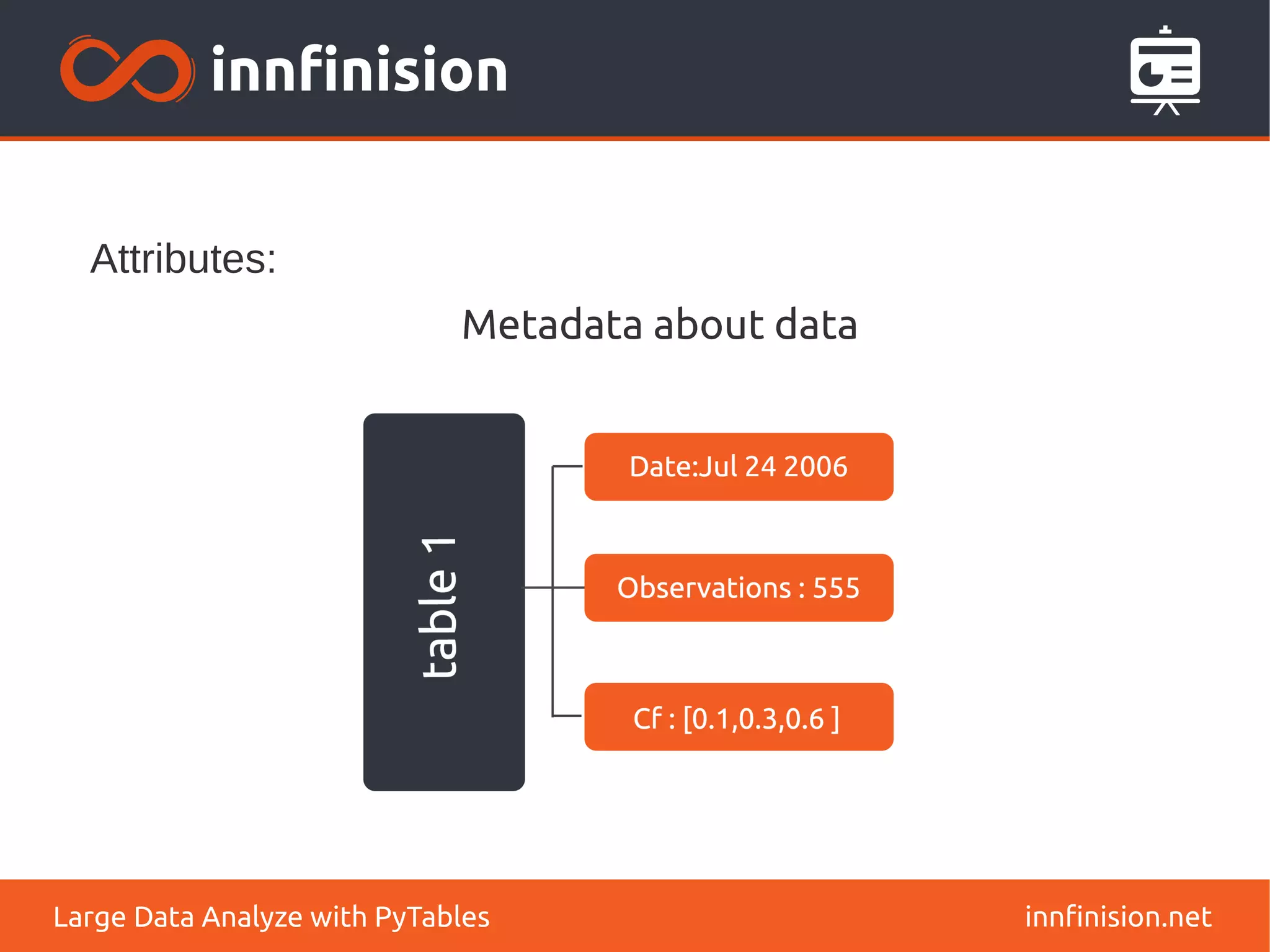

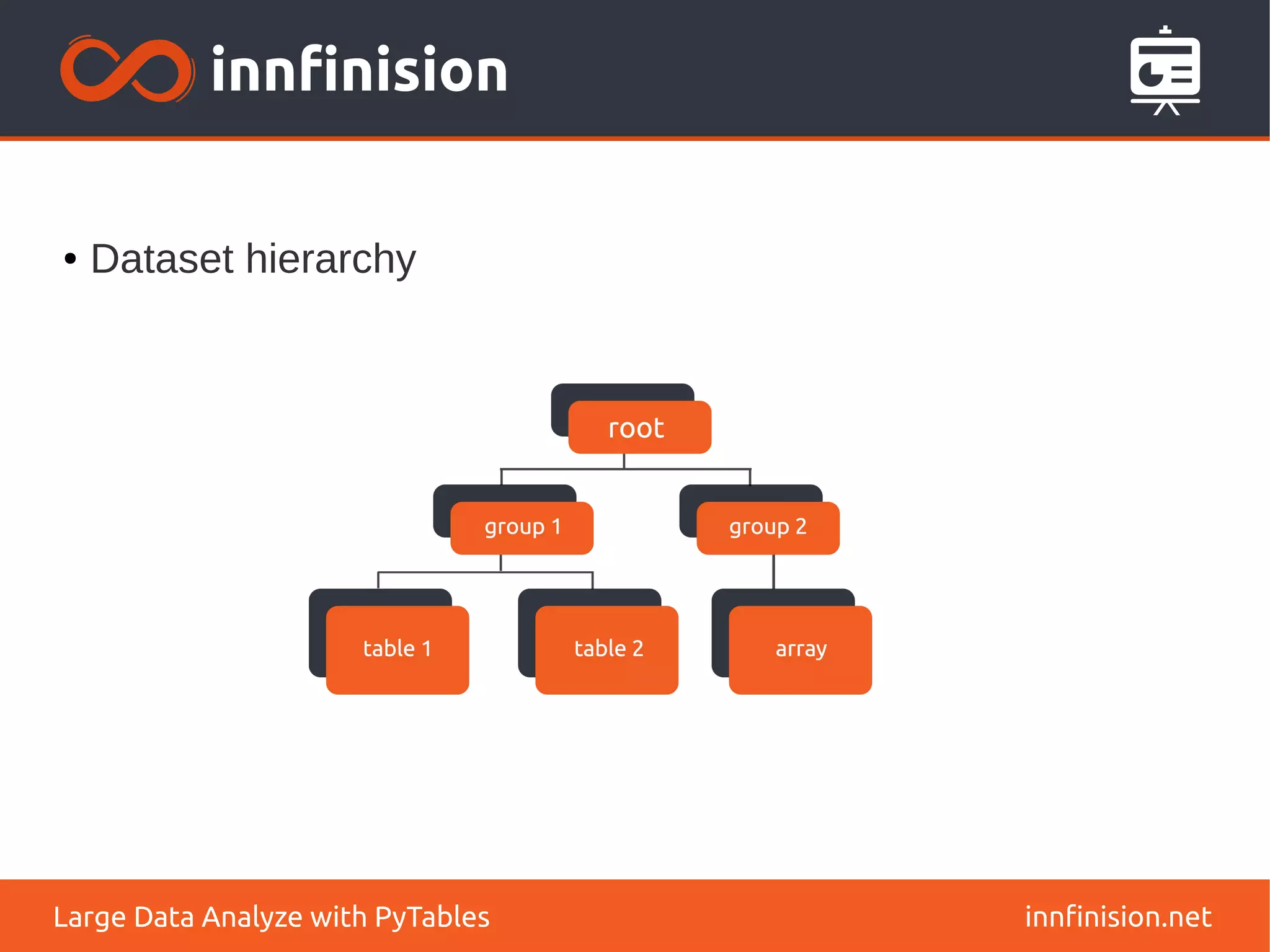

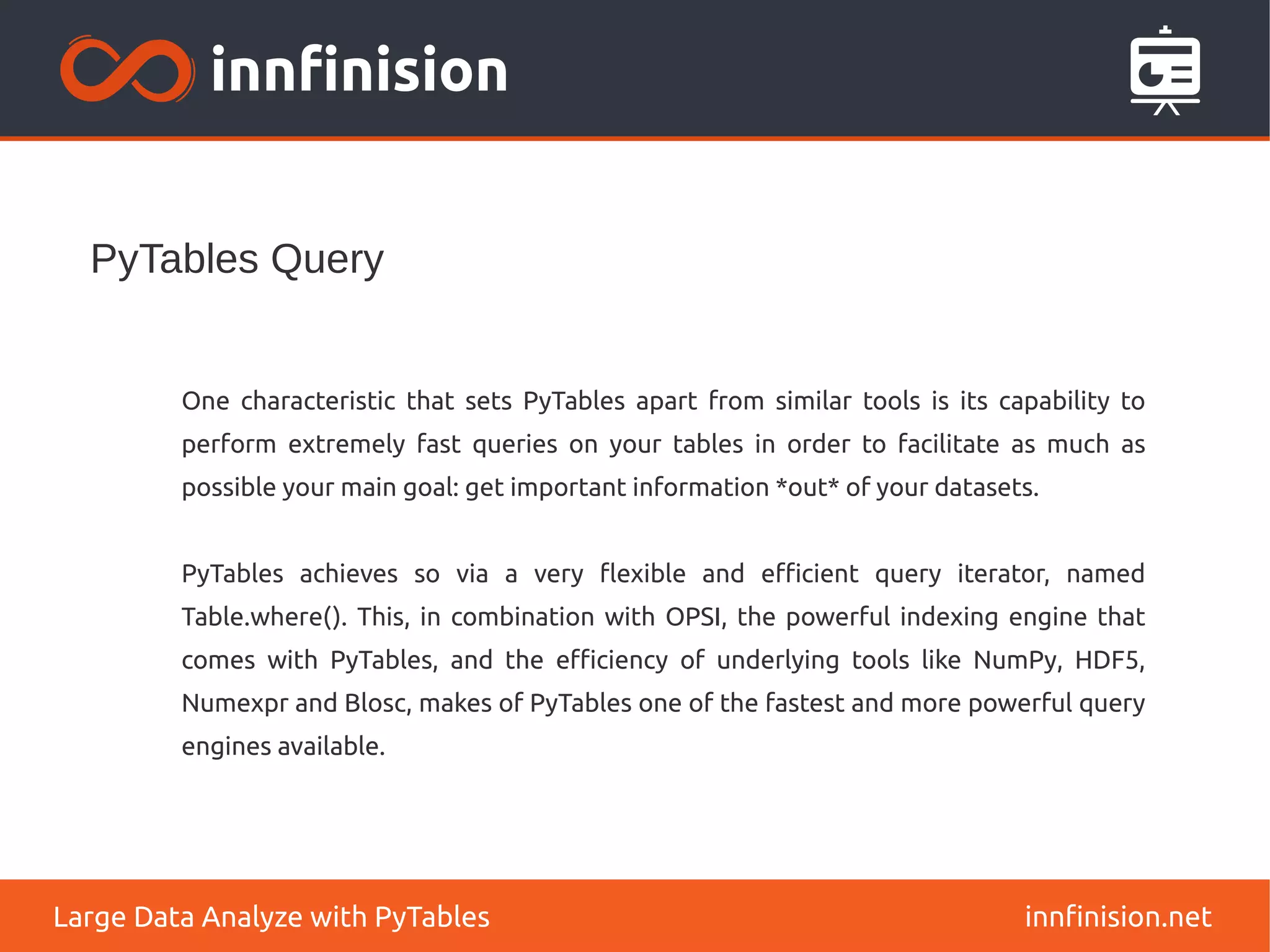

This document discusses the use of PyTables for large data analysis, detailing its capabilities for managing hierarchical datasets and efficient data manipulation. It highlights the challenges posed by CPU, memory, and disk speed mismatches, introduces high-performance libraries, and explains the advantages of using NumPy and data compression techniques. Additionally, it describes the HDF5 format and emphasizes PyTables' fast querying capabilities, enabling effective data retrieval and analysis.

![NumPy: A Powerful Data Container for Python](https://image.slidesharecdn.com/pytables-150201024554-conversion-gate02/75/PyTables-22-2048.jpg)

innfinision.net

Large Data Analyze with PyTables

NumPy provides a very powerful, object-oriented, multidimensional data container:

● `array[index]`: retrieves a portion of a data container

● `(array1**3 / array2) - sin(array3)`: evaluates potentially complex expressions

● `numpy.dot(array1, array2)`: access to optimized BLAS (`*GEMM`) functions
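The three NumPy operations listed on this slide can be tried with a minimal sketch like the one below. The array contents and the helper name `numpy_container_demo` are made up for illustration and are not taken from the slides.

```python
import numpy as np


def numpy_container_demo():
    # Hypothetical sample data, chosen only to keep the arithmetic easy to follow.
    array1 = np.array([1.0, 2.0, 3.0, 4.0])
    array2 = np.array([2.0, 4.0, 6.0, 8.0])
    array3 = np.zeros(4)

    # array[index]: a slice retrieves a portion of the container.
    portion = array1[1:3]

    # An element-wise expression evaluated over whole arrays, with no Python loop.
    result = (array1**3 / array2) - np.sin(array3)

    # numpy.dot on 2-D float arrays performs a matrix product; NumPy normally
    # hands this to the BLAS library it was built against.
    a = np.arange(6.0).reshape(2, 3)
    b = np.arange(6.0).reshape(3, 2)
    product = np.dot(a, b)

    return portion, result, product


portion, result, product = numpy_container_demo()
print(portion)
print(result)
print(product)
```

Each operation works on the whole array at once, which is what makes NumPy a convenient in-memory container for the on-disk data PyTables manages.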

![Different query modes](https://image.slidesharecdn.com/pytables-150201024554-conversion-gate02/75/PyTables-39-2048.jpg)

Regular query:

● `[r['c1'] for r in table if r['c2'] > 2.1 and r['c3'] == True]`

In-kernel query:

● `[r['c1'] for r in table.where('(c2 > 2.1) & (c3 == True)')]`

Indexed query:

● `table.cols.c2.create_index()` (spelled `createIndex()` in PyTables 2.x)

● `table.cols.c3.create_index()`

● `[r['c1'] for r in table.where('(c2 > 2.1) & (c3 == True)')]`
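The three query modes can be compared side by side with a sketch along these lines. It assumes PyTables 3.x naming (`open_file`, `create_table`, `create_index`; the slide's `createIndex()` is the older spelling). The `Record` description, the `run_queries` helper, the file name, and the sample values are all hypothetical.

```python
import os
import tempfile

import tables as tb


class Record(tb.IsDescription):
    # Hypothetical schema with the three columns used on the slide.
    c1 = tb.Int32Col()
    c2 = tb.Float64Col()
    c3 = tb.BoolCol()


def run_queries(n_rows=20):
    with tempfile.TemporaryDirectory() as tmp:
        path = os.path.join(tmp, "query_demo.h5")
        with tb.open_file(path, mode="w") as h5:
            table = h5.create_table("/", "demo", Record)
            row = table.row
            for i in range(n_rows):
                row["c1"] = i
                row["c2"] = i * 0.5        # exact in binary floating point
                row["c3"] = (i % 2 == 0)   # True on even rows
                row.append()
            table.flush()

            # Regular query: every row is tested in the Python interpreter.
            regular = [int(r["c1"]) for r in table
                       if r["c2"] > 2.1 and r["c3"]]

            # In-kernel query: the condition string is evaluated by PyTables
            # itself instead of by Python code running once per row.
            cond = "(c2 > 2.1) & (c3 == True)"
            in_kernel = [int(r["c1"]) for r in table.where(cond)]

            # Indexed query: the same condition after indexing both columns.
            table.cols.c2.create_index()
            table.cols.c3.create_index()
            indexed = [int(r["c1"]) for r in table.where(cond)]

    return regular, in_kernel, indexed


regular, in_kernel, indexed = run_queries()
# Sort the indexed result before comparing, in case row order differs.
print(regular == in_kernel == sorted(indexed))
```

All three modes select the same rows; what changes is where the condition is evaluated and whether an index lets PyTables skip rows that cannot match. On a table this small the timing difference is negligible, so the sketch only demonstrates that the results agree.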