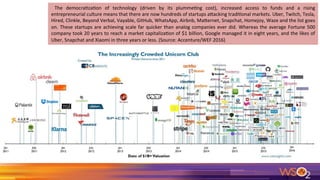

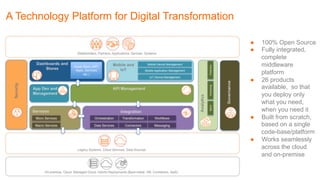

The document outlines an agenda for a session on digital transformation, emphasizing the key trends and technology platforms that support this shift, including the rise of customer-centric businesses and the integration of big data. It cites several startups that have scaled rapidly compared with traditional companies and discusses why innovative technology platforms matter for achieving digital transformation. The session will cover ten steps to building a successful platform and the changing dynamics of competition and collaboration in the tech landscape.