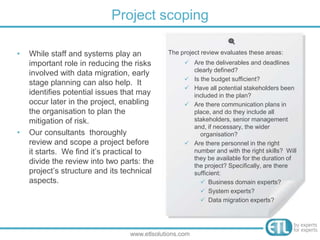

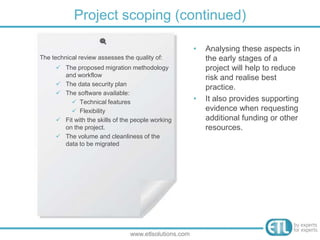

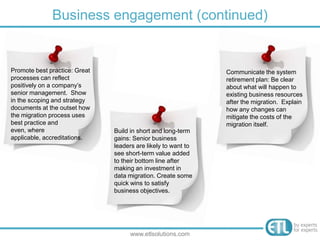

This document provides guidance on preparing a data migration plan. It covers the importance of project scoping, methodology, data preparation, and data security in migration planning. In particular, it recommends thoroughly reviewing every aspect of the project and its data during the planning stage so that risks and issues are identified early, which reduces risk and helps ensure the migration follows best practices.