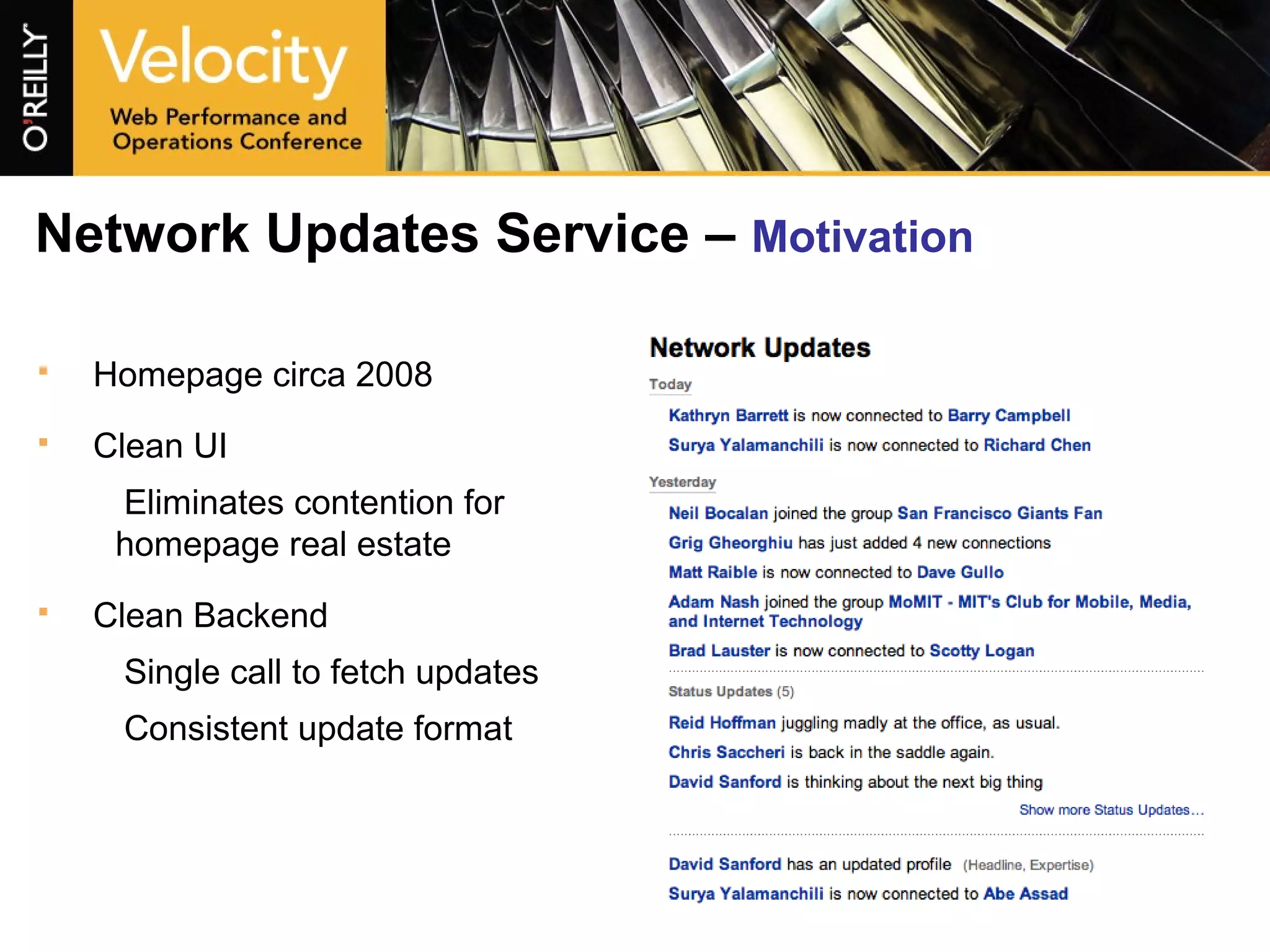

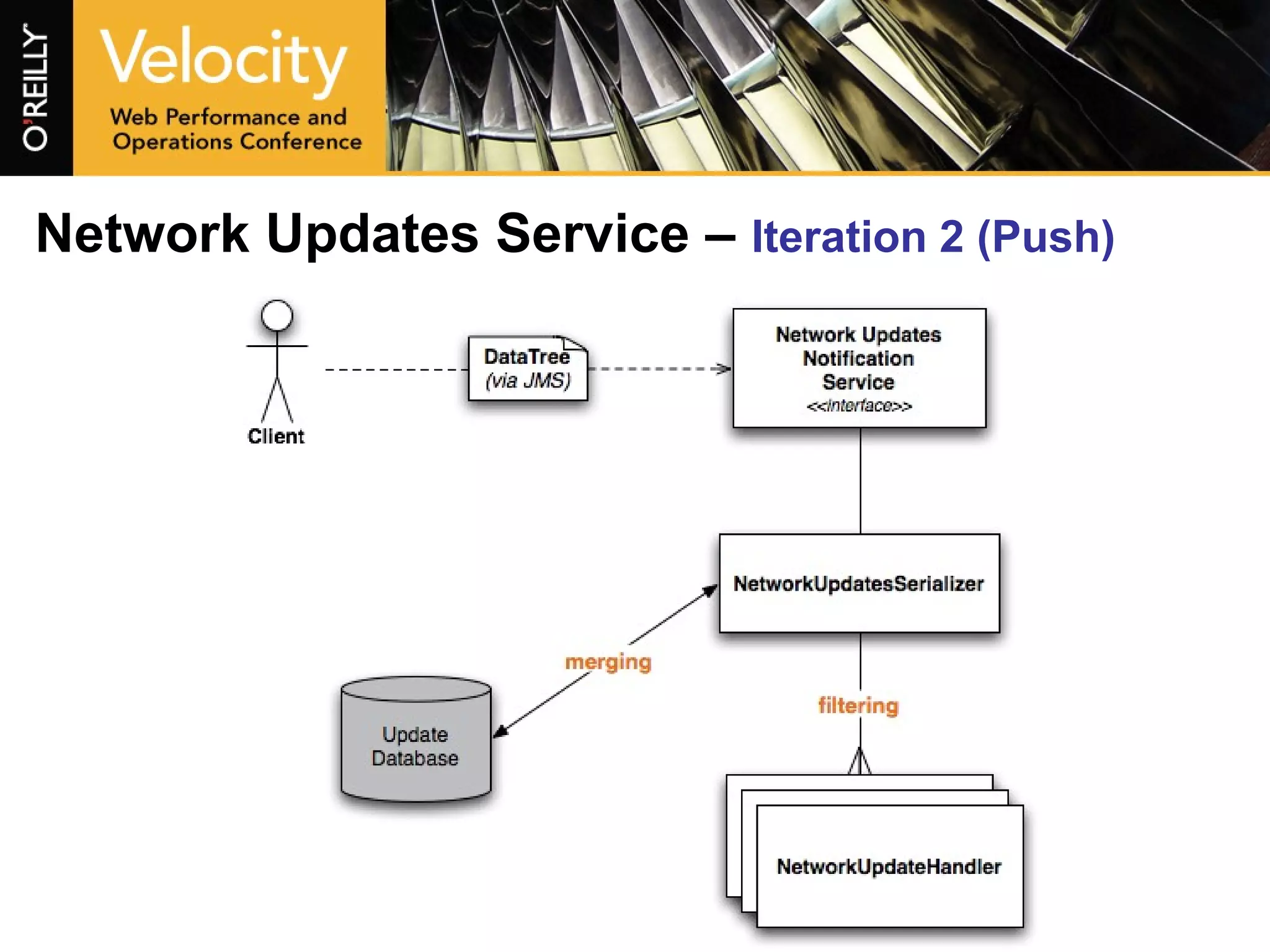

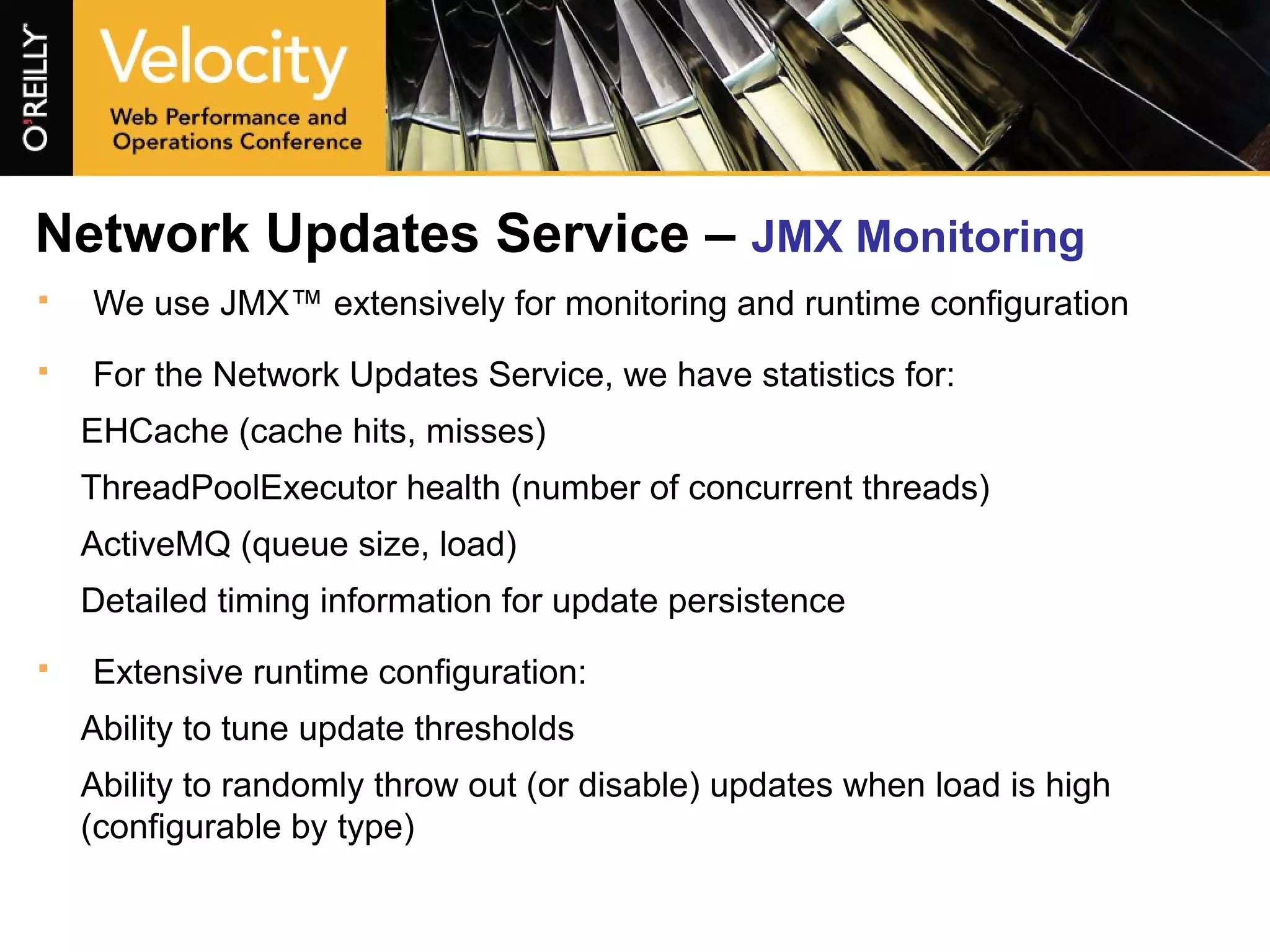

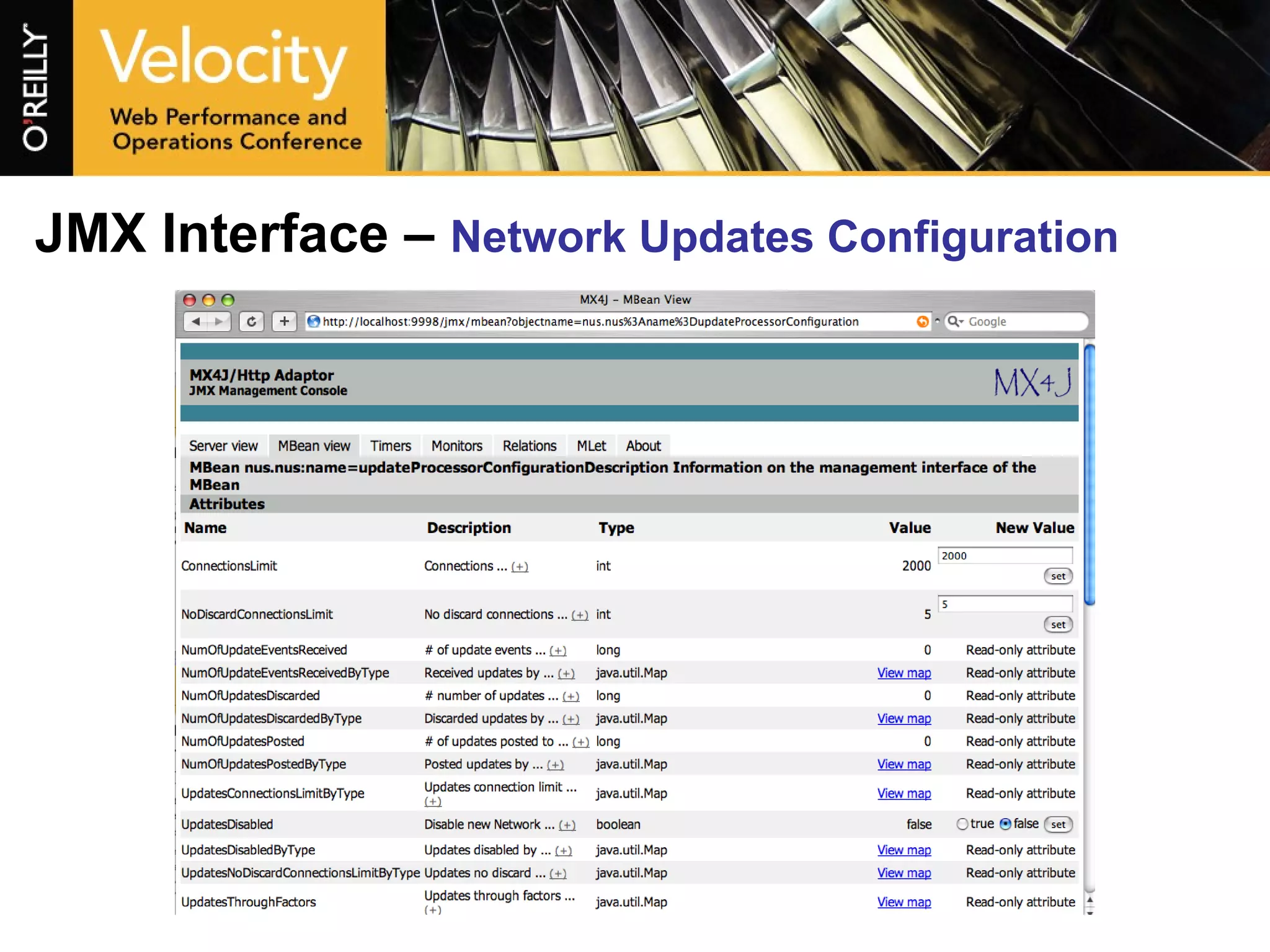

The document traces the evolution of LinkedIn's communication platform from its early days, with 23 million members, to a system that handles millions of messages and connections daily. It explains how the communication service was scaled to absorb increased traffic by moving to a push-based architecture in which event notifications are distributed across groups. It also outlines how the platform was optimized, using techniques such as adding buffer columns to reduce expensive database updates and caching.
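
To make the push-based idea concrete, here is a minimal in-memory sketch in Python. It is not LinkedIn's implementation: the `GroupEventBus` class, the group names, and the event fields are all hypothetical, chosen only to illustrate how a publisher can push an event to every subscriber of a group instead of having each subscriber poll for changes.

```python
from collections import defaultdict
from typing import Callable, DefaultDict, Dict, List

Event = Dict[str, str]
Handler = Callable[[Event], None]


class GroupEventBus:
    """Hypothetical in-memory notifier: events are pushed to handlers per group."""

    def __init__(self) -> None:
        # Maps a group name to the handlers subscribed to that group.
        self._handlers: DefaultDict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, group: str, handler: Handler) -> None:
        """Register a handler that is called for every event in `group`."""
        self._handlers[group].append(handler)

    def publish(self, group: str, event: Event) -> int:
        """Push `event` to every handler in `group`; return the delivery count."""
        handlers = self._handlers.get(group, [])
        for handler in handlers:
            handler(event)
        return len(handlers)


# Illustrative subscribers: each inbox is just a list that collects events.
inbox_alice: List[Event] = []
inbox_bob: List[Event] = []

bus = GroupEventBus()
bus.subscribe("group-1", inbox_alice.append)
bus.subscribe("group-1", inbox_bob.append)
bus.subscribe("group-2", inbox_bob.append)

# One publish call fans the event out to both subscribers of "group-1".
delivered = bus.publish("group-1", {"type": "new_message", "from": "carol"})
```

In this sketch the cost of a notification is paid once at publish time, and subscribers with nothing new to read do no work. A production system would presumably replace the in-process handlers with a durable queue or messaging layer; that part is outside what the summary describes.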