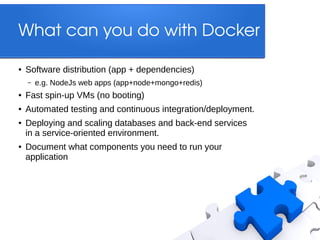

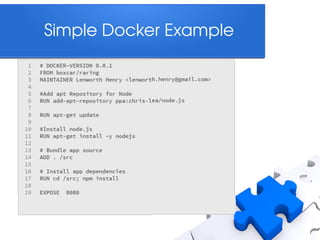

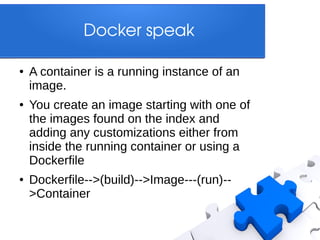

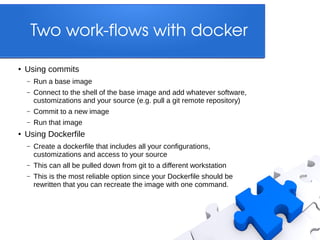

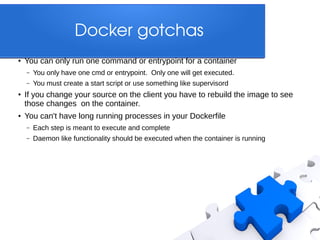

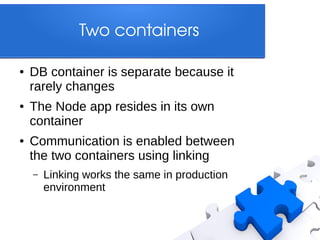

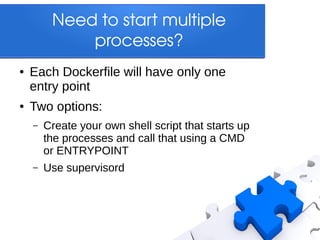

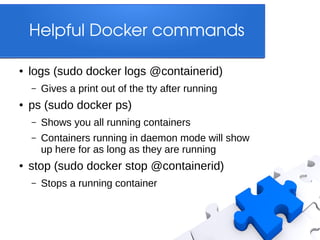

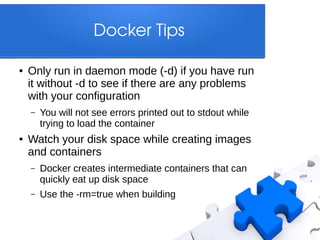

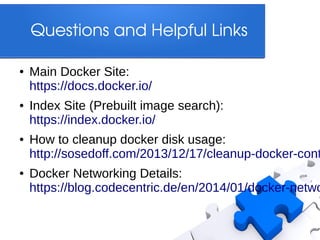

Docker is a platform that lets developers package applications into lightweight containers, so that development and production run in consistent environments. It simplifies software distribution, automated testing, and deployment, and it addresses the synchronization problems that arise when developers' local setups drift apart. Key features include containerized applications, custom Dockerfiles that define how an image is configured, and efficient management of resources and dependencies.
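To show what "custom Dockerfiles for configuration" looks like in practice, the sketch below uses a hypothetical Python helper, `render_dockerfile`, to assemble a minimal Dockerfile for a Python application. The helper, the base image `python:3.12-slim`, and the file names `requirements.txt` and `app.py` are illustrative assumptions and do not come from the original notes. The Dockerfile instructions it emits (`FROM`, `WORKDIR`, `COPY`, `RUN`, `CMD`) are standard Dockerfile syntax.

```python
import json


def render_dockerfile(base_image: str, workdir: str, requirements: str,
                      cmd: list[str]) -> str:
    """Return the text of a minimal Dockerfile (hypothetical helper for illustration)."""
    lines = [
        f"FROM {base_image}",      # base image the container is built on
        f"WORKDIR {workdir}",      # working directory inside the image
        f"COPY {requirements} .",  # copy the dependency list first so this layer can be cached
        f"RUN pip install --no-cache-dir -r {requirements}",  # install dependencies into the image
        "COPY . .",                # copy the rest of the application source
        f"CMD {json.dumps(cmd)}",  # exec-form command (a JSON array) run when the container starts
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(render_dockerfile("python:3.12-slim", "/app", "requirements.txt",
                            ["python", "app.py"]), end="")
```

If you save the printed output as `Dockerfile`, you could build and start it with `docker build -t myapp .` followed by `docker run myapp`, where `myapp` is a placeholder tag. The dependency file is copied before the rest of the source so that Docker's layer cache can skip reinstalling packages when only application code changes.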