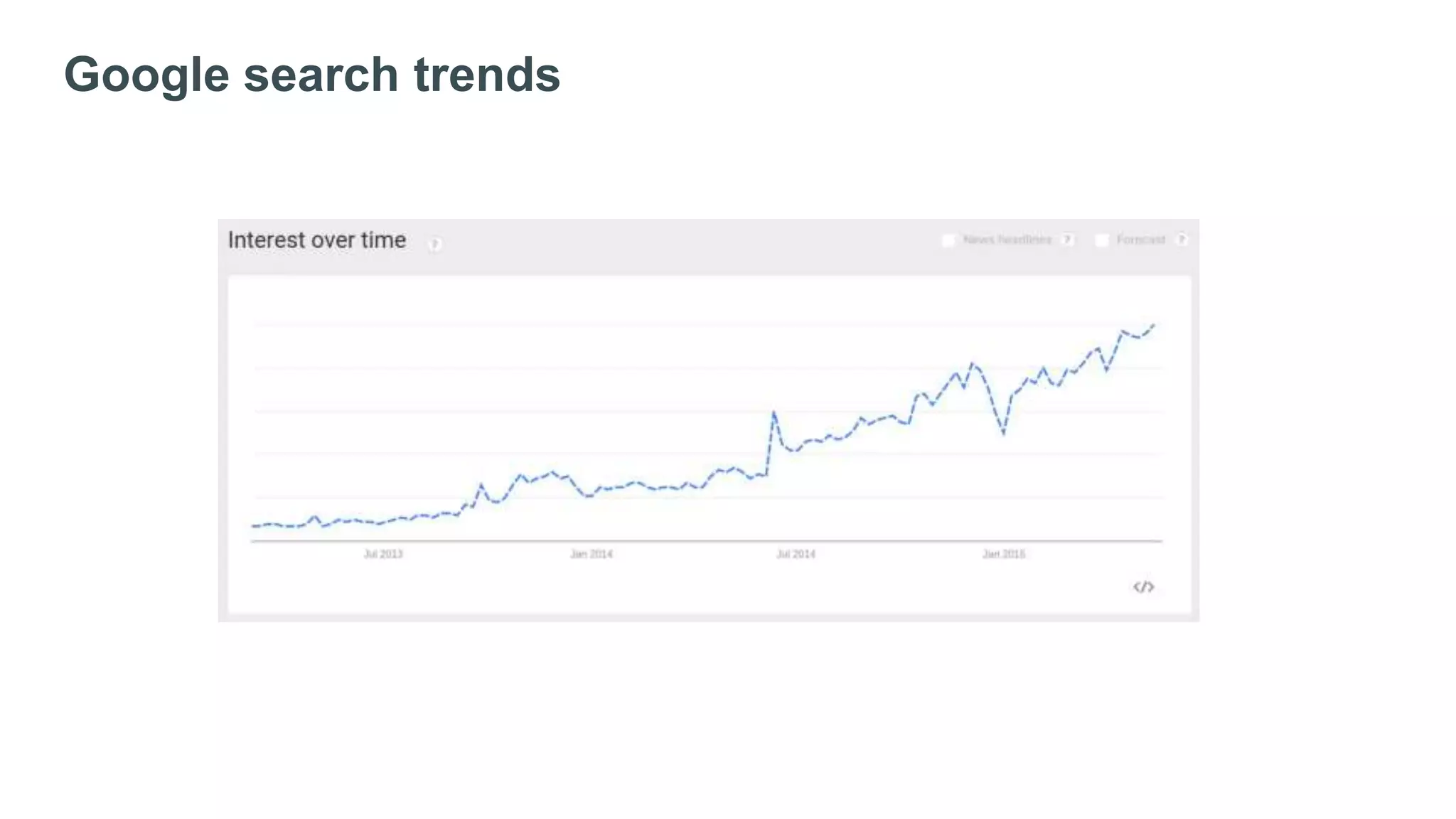

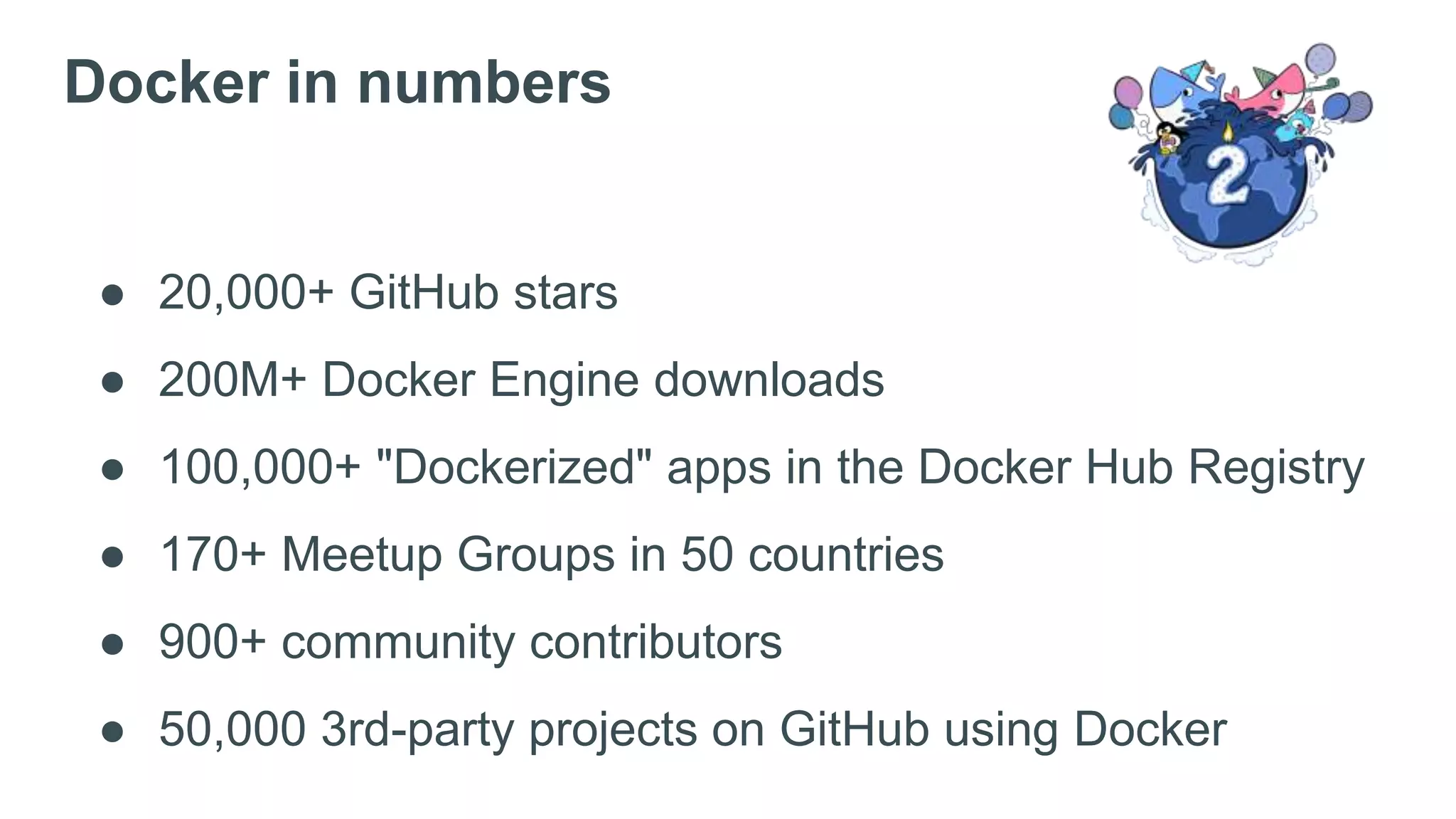

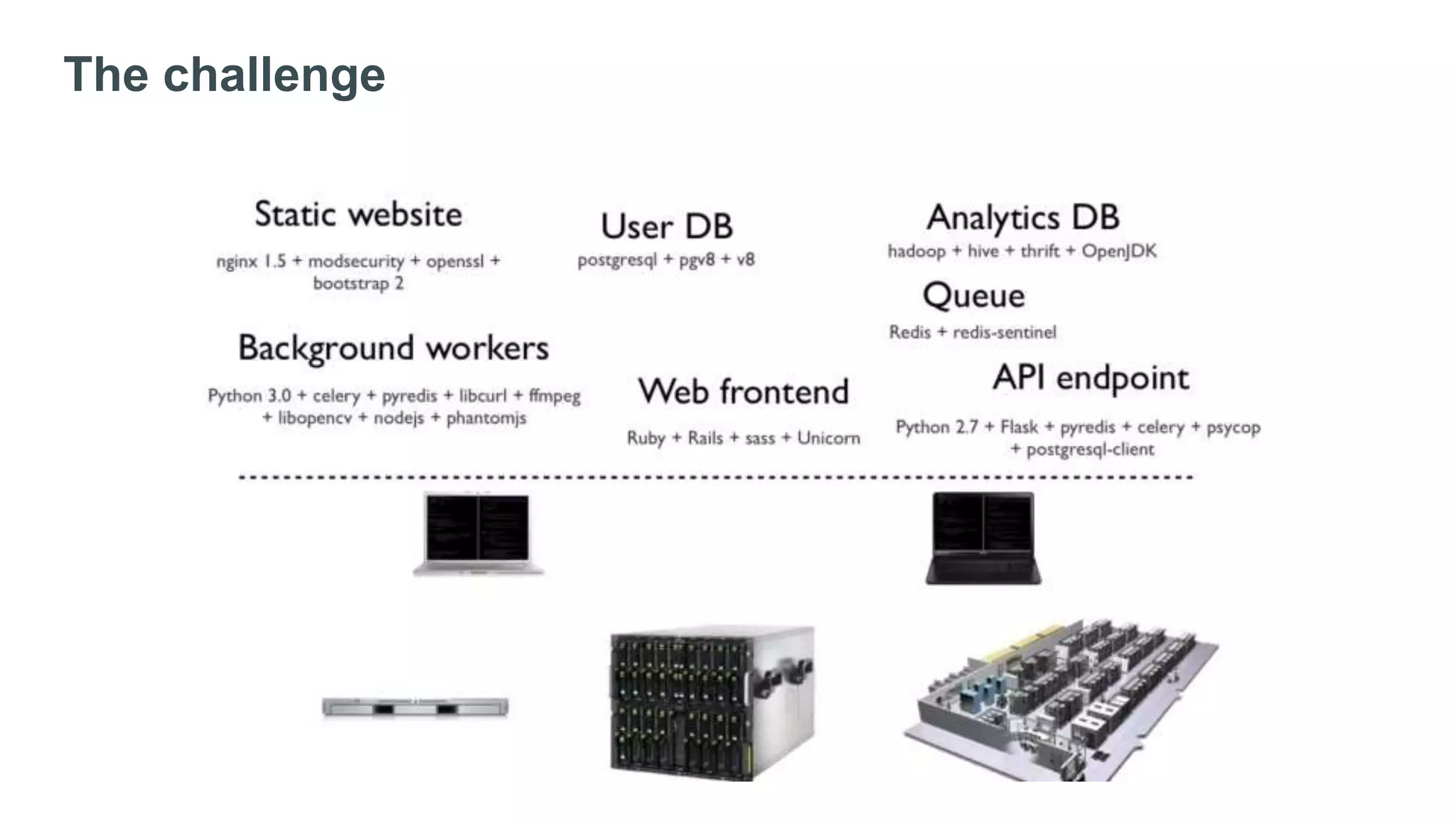

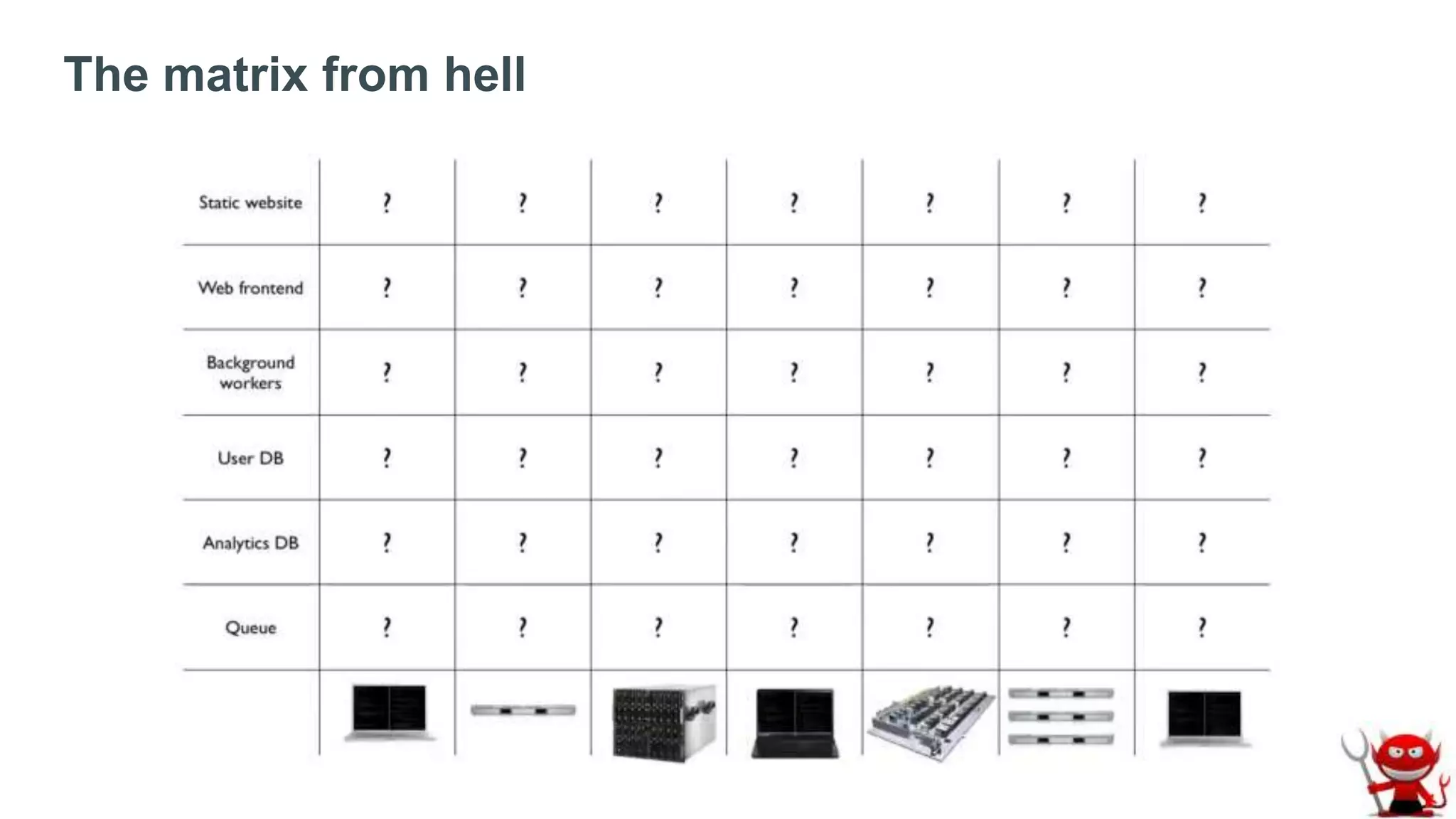

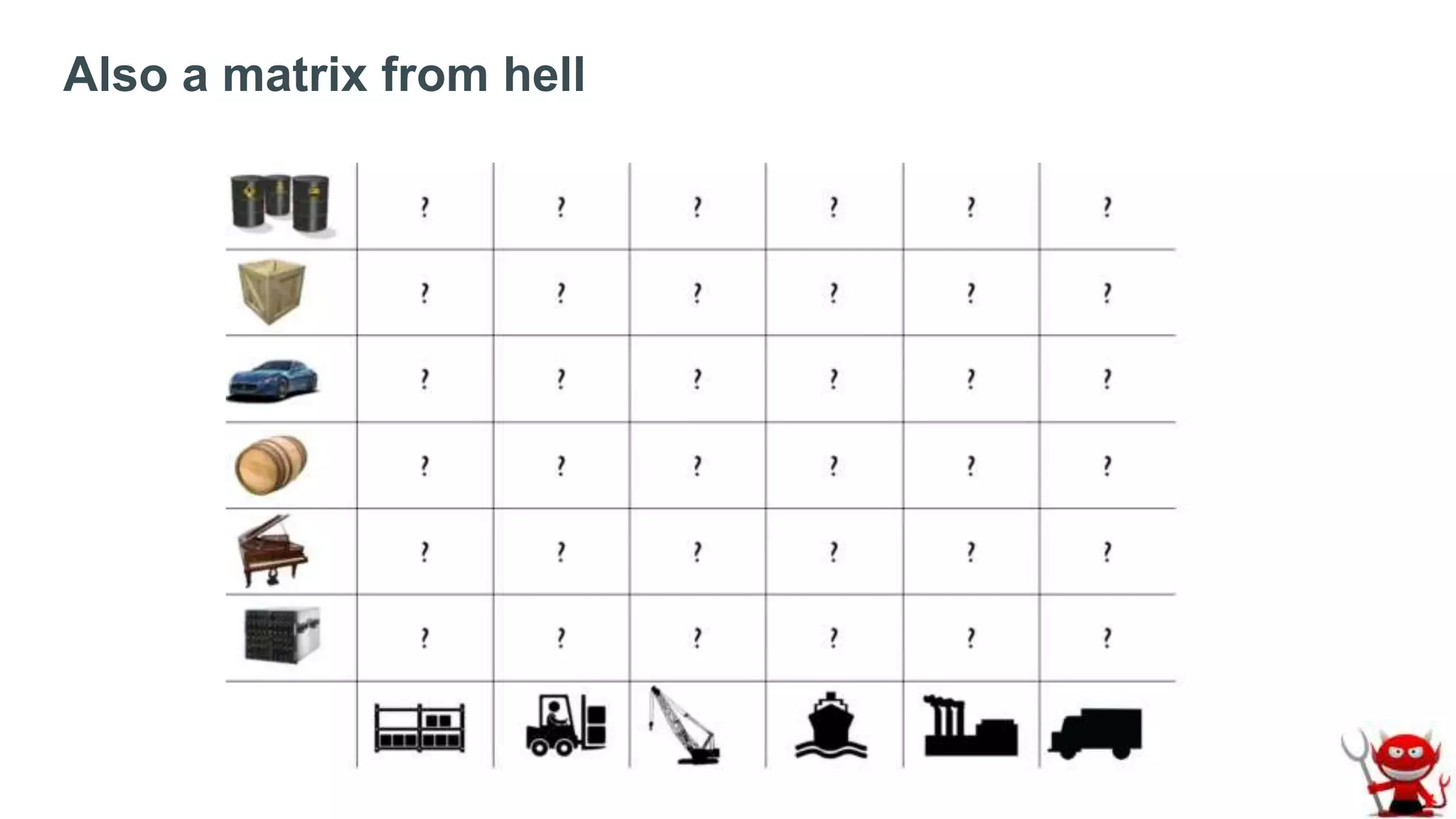

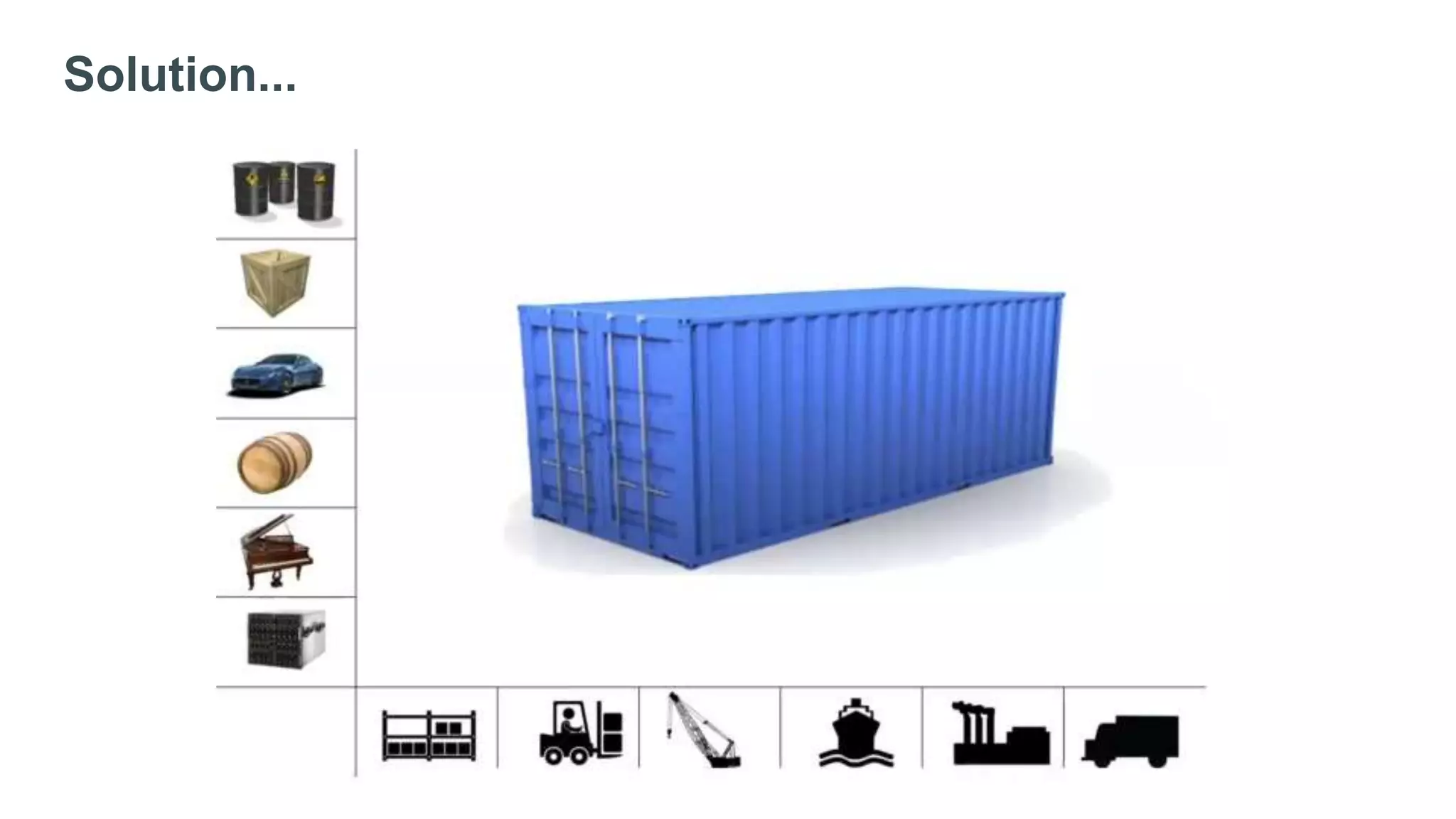

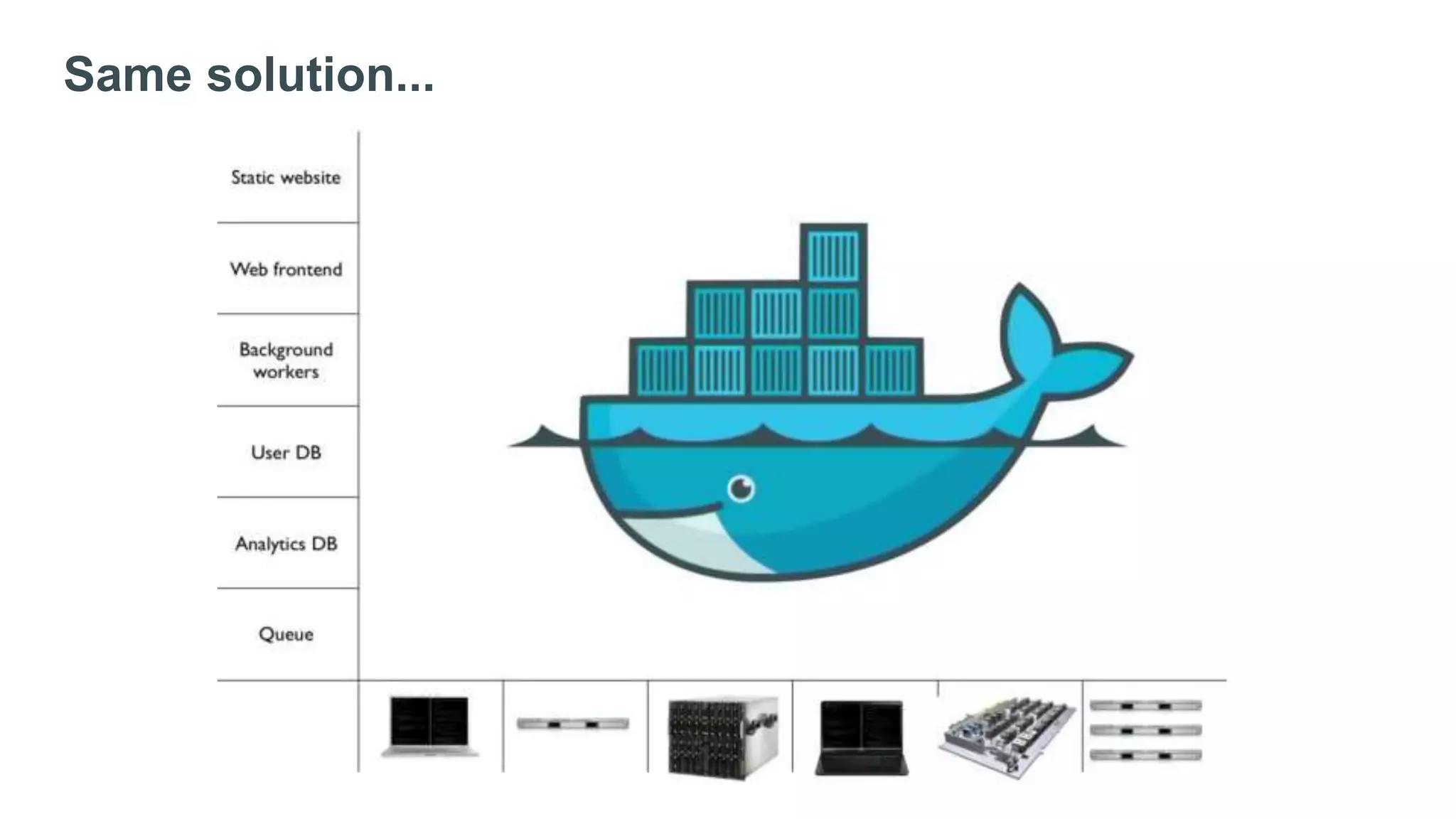

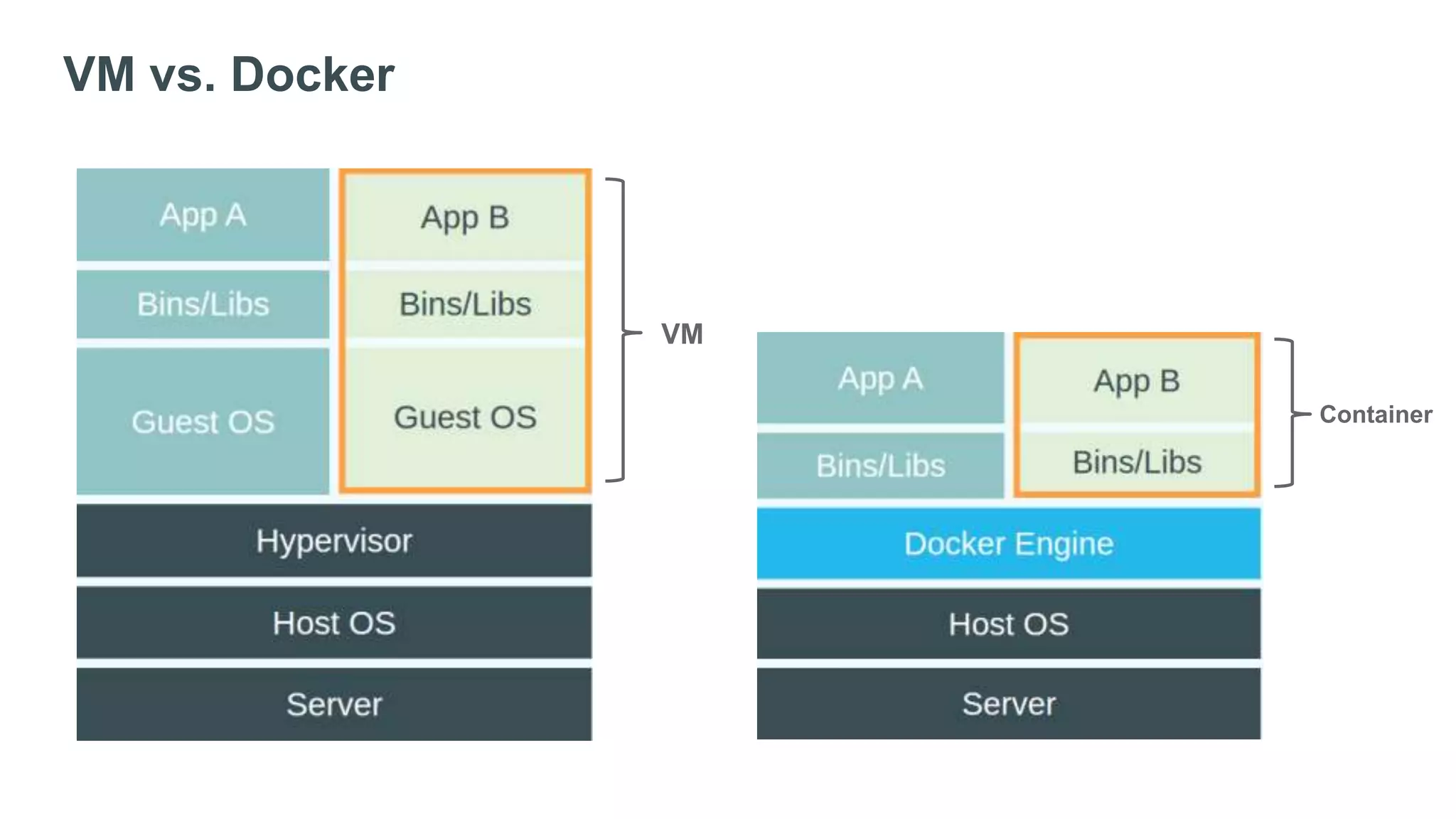

Docker is growing rapidly, with over 20,000 GitHub stars, 200 million downloads, and 100,000 'dockerized' apps. It tackles the problem of shipping applications between environments, and its container technology supports a 'build once, run anywhere' workflow. The ecosystem is expanding, backed by a large community and projects across a wide range of use cases.