Network Challenge: Error and Sensitivity Analysis

SDM-Networks 2015: The Second SDM Workshop on Mining Networks and Graphs: A Big Data Analytic Challenge

Recommended

Agile Data Science

We are witnessing technological innovations that make it possible to use data science without large upfront investments. This context facilitates the adoption of agile practices and values that encourage early insights and continuous learning. In this talk, we will address topics such as cross-functional teams, agile software engineering practices, and iterative, incremental, and collaborative development in the context of data science products and solutions.

Agile Data Science

Is Agile Data Science just two buzzwords put together? I argue that agile is a very practical and applicable methodology that works well in the real world for all sorts of analytics and data science workflows.

http://theinnovationenterprise.com/summits/digital-web-analytics-summit-london-2015/schedule

Time series forecasting

The case needs methods and tools for displaying and analyzing univariate time series forecasts, including exponential smoothing via state space models and automatic ARIMA modelling. Explore the gas dataset (Australian monthly gas production).

Read the data as a time series object in R. Plot the data.

Identify which components of the time series are present in this dataset.

Check the periodicity of the dataset.

Partition the dataset so that the data from 1994 onwards forms the test data.

Inspect the series visually and conduct an ADF test.

Write down the null and alternative hypotheses for the stationarity test. De-seasonalise the series if seasonality is present.

Develop an initial forecast for the next 20 periods and check it using the various metrics. After finalising the model, develop a final forecast for the 12 time periods. Use both manual ARIMA and auto.arima (show and explain all the steps).

Report the accuracy of the model.
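The partition-and-accuracy steps above can be sketched in a few lines. This is a minimal illustration only, written in Python rather than R. The series is synthetic placeholder data, not the gas dataset. The helper names (`split_by_year`, `seasonal_naive`, `rmse`, `mape`) are hypothetical, and a seasonal-naive baseline stands in for the ARIMA models the exercise asks for.

```python
import math

def split_by_year(series, start_year, test_from_year, freq=12):
    """Split a monthly series so observations from test_from_year onward form the test set."""
    cut = (test_from_year - start_year) * freq
    return series[:cut], series[cut:]

def seasonal_naive(train, horizon, freq=12):
    """Baseline forecast: repeat the value from the same month of the last observed year."""
    last_season = train[-freq:]
    return [last_season[h % freq] for h in range(horizon)]

def rmse(actual, forecast):
    """Root mean squared error."""
    return math.sqrt(sum((a - f) ** 2 for a, f in zip(actual, forecast)) / len(actual))

def mape(actual, forecast):
    """Mean absolute percentage error, in percent (assumes no zero actual values)."""
    return 100.0 * sum(abs((a - f) / a) for a, f in zip(actual, forecast)) / len(actual)

# Synthetic placeholder data (NOT the gas dataset): linear trend plus a
# 12-month cycle, assumed to run from January 1990 to December 1995.
series = [100 + 2 * t + 10 * math.sin(2 * math.pi * t / 12) for t in range(72)]

train, test = split_by_year(series, start_year=1990, test_from_year=1994)
forecast = seasonal_naive(train, horizon=12)

print(len(train), len(test))
print(rmse(test[:12], forecast), mape(test[:12], forecast))
```

A baseline like this gives a reference point: a fitted ARIMA model is only worth keeping if it beats the seasonal-naive error on the same held-out period.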

AI and Applications

Current state of AI in 2017/2018, intended to dispel the hype. Based on broad research on the topic of AI and also the excellent 2017 publication by McKinsey titled "Current State of AI".

K-12 Module in TLE - ICT Grade 9 [All Gradings]

==========================================

K-12 Module in TLE-9 ICT [All Gradings]

Want to Download?

Click the Download at the bottom of the Slideshare :)

==========================================

The R of War

Data Science. .Net/C# Monte Carlo modeling. The R Programming language. See it all come together in one place in this talk. Presentation date 6/13 at Lake County .NET User Group.

Graph Analysis Trends and Opportunities -- CMG Performance and Capacity 2014

High-performance graph analysis is unlocking knowledge in problems like anomaly detection in computer security, community structure in social networks, and many other data integration areas. While graphs provide a convenient abstraction, real-world problems' sparsity and lack of locality challenge current systems. This talk will cover current trends ranging from massive scales to low-power, low-latency systems and summarize opportunities and directions for graphs and computing systems.

Target Leakage in Machine Learning

Target leakage is one of the most difficult problems in developing real-world machine learning models. Leakage occurs when the training data gets contaminated with information that will not be known at prediction time. Additionally, there can be multiple sources of leakage, from data collection and feature engineering to partitioning and model validation. As a result, even experienced data scientists can inadvertently introduce leaks and become overly optimistic about the performance of the models they deploy. In this talk, we will look through real-life examples of data leakage at different stages of the data science project lifecycle, and discuss various countermeasures and best practices for model validation.

Operations-Driven Web Services at Rent the Runway

From a meetup on 8/26: Rent the Runway's operations-driven service infrastructure, including an overview of why we use Dropwizard.

Machine Learning for dummies!

Currently, hundreds of tools promise to make artificial intelligence accessible to the masses, including DataRobot, H2O Driverless AI, Amazon SageMaker, and Microsoft Azure Machine Learning Studio.

These tools promise to accelerate the time-to-value of data science projects by simplifying model building.

In the workshop we will approach the AI topic head-on!

What is AI? What can AI do today? What do I need to start my own project?

We do all this using Microsoft's Machine Learning Studio.

Trainer: Philipp von Loringhoven - Chef, Designer, Developer, Marketer - Data Nerd!

He has acquired a lot of expertise in marketing, business intelligence and product development during his time at the Rocket Internet startups (Wimdu, Lamudi) and Projekt-A (Tirendo).

Today he supports customers of the Austrian digitisation agency TOWA as Director Data Consulting, helping them generate added value from their data.

PyData 2015 Keynote: "A Systems View of Machine Learning"

Despite the growing abundance of powerful tools, building and deploying machine-learning frameworks into production continues to be a major challenge, in both science and industry. I'll present some particular pain points and cautions for practitioners as well as recent work addressing some of the nagging issues. I advocate for a systems view, which, when expanded beyond the algorithms and codes to the organizational ecosystem, places some interesting constraints on the teams tasked with development and stewardship of ML products.

About: Dr. Joshua Bloom is an astronomy professor at the University of California, Berkeley where he teaches high-energy astrophysics and Python for data scientists. He has published over 250 refereed articles largely on time-domain transient events and telescope/insight automation. His book on gamma-ray bursts, a technical introduction for physical scientists, was published recently by Princeton University Press. He is also co-founder and CTO of wise.io, a startup based in Berkeley. Josh has been awarded the Pierce Prize from the American Astronomical Society; he is also a former Sloan Fellow, Junior Fellow at the Harvard Society, and Hertz Foundation Fellow. He holds a PhD from Caltech and degrees from Harvard and Cambridge University.

Data Science, what even?!

Presented an abridged version of my "What is data science" talk at #websummit 2013.

This talk goes over the required skillset as defined by Drew Conway and his famous Venn diagram, and also outlines the Data Scientific Method brought by Dr. Patil. The talk has two main parts, and the second part goes over some of the packages and technologies we use, minus the storage part.

Data Science as a Career and Intro to R

A basic intro to R and discussions on Data Science as a career option

Lean Digital | Data Driven Factory

Data topics regularly make headlines. The richness and value of information derived from algorithms are now well known. It is used, for example, in marketing, in modeling behavior to trigger a choice, a purchase, or even a vote. The world of sport is also a heavy user of statistics in its constant pursuit of better collective and individual performance.

Triggering the right reaction at the right moment in order to optimize efficiency: isn't that one of the goals of Operational Excellence? And a company generates data continuously!

Yet before data-driven management can be directed toward the value stream, some obstacles remain, technical and above all human. The various information systems may have been designed on the model of isolated, siloed organizations. Accessing and consolidating the different data sources is therefore not always simple. The company may also lack the necessary skills in-house. Once these first obstacles are overcome, even a powerful tool will not replace collective intelligence. Statistical models made visible in this way are real catalysts for optimization. Let us nevertheless take care not to lose touch with the ground. The gains achieved will be the result of robust actions in the field, where those who do the work hold all the cards to manage their process.

Presentation outline:

- The stakes around data and the findings

- Integrating data into performance improvement

- The tools of descriptive and analytical statistics

- Going further into AI and Big Data with new professions and tools

Principal Component Analysis

Slides used to present an overview of Principal Component Analysis during an analytics group meeting at TWBR.

Machine Learning Project - Default credit card clients

- The model built here uses all available factors to predict which customers will default next month and which will not.

- The goal is to determine whether clients will be able to pay next month's credit amount.

- Identify potential customers for the bank who can settle their credit balance.

- Determine whether customers can make their credit card payments on time.

- Default is the failure to pay interest or principal on a loan or credit card payment.

Visualizing your data in JavaScript

Visualizing your results accurately can reveal hidden insights, catch errors, and inspire your audience to investigate further. During this workshop, we’ll cover types of data visualizations and when they’re most effective, different JavaScript charting libraries such as D3, Google Charts, and Dygraphs, and how to get started on a simple dashboard.

Predictive Testing

An introduction to how machine learning can help you determine how much testing is enough. Covers the risk formula, with references on how to assess impact and calculate probabilities across a complex domain.

Introduction to Data Science.pptx

Data science is an interdisciplinary field that uses algorithms, procedures, and processes to examine large amounts of data in order to uncover hidden patterns, generate insights, and direct decision making.

Target Leakage in Machine Learning (ODSC East 2020)

Target leakage is one of the most difficult problems in developing real-world machine learning models. Leakage occurs when the training data gets contaminated with information that will not be known at prediction time. Additionally, there can be multiple sources of leakage, from data collection and feature engineering to partitioning and model validation. As a result, even experienced data scientists can inadvertently introduce leaks and become overly optimistic about the performance of the models they deploy. In this talk, we will look through real-life examples of data leakage at different stages of the data science project lifecycle, and discuss various countermeasures and best practices for model validation.

Sage FAS for Sage ERP

Easily manage assets and depreciation with Sage FAS which integrates naturally with Sage 100, Sage 500, and Sage X3.

Machine Learning for Dummies

Introduction to Machine Learning, The ML Philosophy, Advantages, Applications and ML Algorithms

big-data-anallytics.pptx

Presented the hands-on session on “Introduction to Big Data Analysis” at Dayananda Sagar University. More than 150 university students benefitted from this session.

Lucata at the HPEC GraphBLAS BoF

A five minute piece to introduce the Lucata architecture to the GraphBLAS BoF.

LAGraph 2021-10-13

Introducing folks to the Lucata platform for high-performance, massive-scale graph analysis.

More from Jason Riedy

Reproducible Linear Algebra from Application to Architecture

All computing must be parallel to take advantage of modern systems like multicore processors, GPUs, and distributed systems. Results that are not bit-wise reproducible introduce doubt on many levels. Sometimes that is appropriate. Reproducibility limitations occur because underlying libraries do not specify their reproducibility requirements. New advances in interfaces, algorithms, and architectures allow selecting among those requirements in the future. This talk covers many of the upcoming options and their trade-offs.

PEARC19: Wrangling Rogues: A Case Study on Managing Experimental Post-Moore A...

The Rogues Gallery is a new experimental testbed that is focused on tackling "rogue" architectures for the Post-Moore era of computing. While some of these devices have roots in the embedded and high-performance computing spaces, managing current and emerging technologies poses challenges for system administration that are not always foreseen in traditional data center environments.

We present an overview of the motivations and design of the initial Rogues Gallery testbed and cover some of the unique challenges that we have seen and foresee with upcoming hardware prototypes for future post-Moore research. Specifically, we cover the networking, identity management, scheduling of resources, and tools and sensor access aspects of the Rogues Gallery and techniques we have developed to manage these new platforms. We argue that current tools like the Slurm resource manager can support new rogues without major infrastructure changes.

ICIAM 2019: Reproducible Linear Algebra from Application to Architecture

All computing must be parallel to take advantage of modern systems like multicore processors, GPUs, and distributed systems. Results that are not bit-wise reproducible introduce doubt on many levels. Sometimes that is appropriate. Reproducibility limitations occur because underlying libraries do not specify their reproducibility requirements. New advances in interfaces, algorithms, and architectures allow selecting among those requirements in the future. This talk covers many of the upcoming options and their trade-offs.

ICIAM 2019: A New Algorithm Model for Massive-Scale Streaming Graph Analysis

Applications in many areas analyze an ever-changing environment. On billion-vertex graphs, providing snapshots imposes a large performance cost. We propose the first formal model for graph analysis running concurrently with streaming data updates. We consider an algorithm valid if its output is correct for the initial graph plus some implicit subset of concurrent changes. We show theoretical properties of the model, demonstrate the model on various algorithms, and extend it to updating results incrementally.

Novel Architectures for Applications in Data Science and Beyond

Describing new HPDA applications and architectures with a focus on Georgia Tech's CRNCH Rogues Gallery

Characterization of Emu Chick with Microbenchmarks

Presenting current and recent performance results on the Emu Chick looking towards graph and data analysis.

CRNCH 2018 Summit: Rogues Gallery Update

In one classic sense a rogue is someone who goes their own way, who breaks away from the crowd. The CRNCH Rogues Gallery aims to support computer architecture rogues by being a physical and virtual space providing access to novel computing architectures. Researchers find applications, and architects discover what happens when their prototypes hit reality. Our goals are to help kick-start software ecosystems, train students in novel system evaluation and use, and provide rapid feedback to architects. By exposing students and researchers to this set of unique hardware, we foster cross-cutting discussions about hardware designs that will drive future performance improvements in computing long after the Moore’s Law era of “cheap transistors” ends. We provide a brief description of the current Rogues Gallery along with successes and research highlights over the last year.

Augmented Arithmetic Operations Proposed for IEEE-754 2018

Algorithms for extending arithmetic precision through compensated summation or arithmetics like double-double rely on operations commonly called twoSum and twoProduct. The current draft of the IEEE 754 standard specifies these operations under the names augmentedAddition and augmentedMultiplication. These operations were included after three decades of experience because of a motivating new use: bitwise reproducible arithmetic. Standardizing the operations provides a hardware acceleration target that can provide at least a 33% speed improvement in reproducible dot product, placing reproducible dot product almost within a factor of two of common dot product. This paper provides history and motivation for standardizing these operations. We also define the operations, explain the rationale for all the specific choices, and provide parameterized test cases for new boundary behaviors.
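For readers unfamiliar with twoSum, here is a minimal Python sketch of the classic software formulation (Knuth's branch-free version) and a simple compensated summation built on it. It is an illustration only, not the standardized augmentedAddition, whose exact rounding rules may differ. The function names `two_sum` and `compensated_sum` are chosen here for illustration.

```python
from fractions import Fraction

def two_sum(a, b):
    """Classic twoSum: return (s, e) where s is the rounded sum a + b
    and e is the rounding error, so that s + e equals a + b exactly
    (barring overflow)."""
    s = a + b
    b_virtual = s - a          # the part of b that actually made it into s
    a_virtual = s - b_virtual  # the part of a that actually made it into s
    e = (a - a_virtual) + (b - b_virtual)
    return s, e

def compensated_sum(values):
    """Sum floats while accumulating the rounding errors separately."""
    total = 0.0
    correction = 0.0
    for v in values:
        total, e = two_sum(total, v)
        correction += e
    return total + correction

# The pair (s, e) represents the sum exactly; check with rational arithmetic.
s, e = two_sum(0.1, 0.2)
assert Fraction(s) + Fraction(e) == Fraction(0.1) + Fraction(0.2)

# Plain left-to-right addition loses the 1.0; the compensated sum recovers it.
naive = (1e16 + 1.0) + -1e16
print(naive)                                 # prints 0.0
print(compensated_sum([1e16, 1.0, -1e16]))   # prints 1.0
```

The six floating-point operations in `two_sum` are what a single hardware instruction could replace, which is where the speed-up described above would come from.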

CRNCH Rogues Gallery: A Community Core for Novel Computing Platforms

Version from the CSE Strategic Partner Program meeting

CRNCH Rogues Gallery: A Community Core for Novel Computing Platforms

The Rogues Gallery is a new concept focused on developing our understanding of next-generation hardware with a focus on unorthodox and uncommon technologies. This project, initiated by Georgia Tech's Center for Research into Novel Computing Hierarchies (CRNCH), will acquire new and unique hardware (i.e., the aforementioned "rogues") from vendors, research labs, and startups and make this hardware available to students, faculty, and industry collaborators within a managed data center environment. By exposing students and researchers to this set of unique hardware, we hope to foster cross-cutting discussions about hardware designs that will drive future performance improvements in computing long after the Moore's Law era of "cheap transistors" ends.

A New Algorithm Model for Massive-Scale Streaming Graph Analysis

Applications in computer network security, social media analysis, and other areas rely on analyzing a changing environment. The data is rich in relationships and lends itself to graph analysis. Traditional static graph analysis cannot keep pace with network security applications analyzing nearly one million events per second and social networks like Facebook collecting 500 thousand comments per second. Streaming frameworks like STINGER support ingesting up to three million edge changes per second, but there are few streaming analysis kernels that keep up with these rates. Here we present a new algorithm model for applying complex metrics to a changing graph. In this model, many more algorithms can be applied without having to stop the world.

High-Performance Analysis of Streaming Graphs

Graph-structured data in social networks, finance, network security, and other areas are not only massive but also under continual change. These changes often are scattered across the graph. Stopping the world to run a single, static query is infeasible. Repeating complex global analyses on massive snapshots to capture only what has changed is inefficient. We discuss requirements for single-shot queries on changing graphs as well as recent high-performance algorithms that update rather than recompute results. These algorithms are incorporated into our software framework for streaming graph analysis, STINGER.

High-Performance Analysis of Streaming Graphs

Graph-structured data in social networks, finance, network security, and others not only are massive but also under continual change. These changes often are scattered across the graph. Stopping the world to run a single, static query is infeasible. Repeating complex global analyses on massive snapshots to capture only what has changed is inefficient. We discuss requirements for single-shot queries on changing graphs as well as recent high-performance algorithms that update rather than recompute results. These algorithms are incorporated into our software framework for streaming graph analysis, STING (Spatio-Temporal Interaction Networks and Graphs).

Updating PageRank for Streaming Graphs

An algorithm for efficiently and accurately updating PageRank as the graph changes under a stream of updates. Also covers what the upcoming GraphBLAS needs in order to support high-performance streaming graph analysis.

More from Jason Riedy (20)

Reproducible Linear Algebra from Application to Architecture

PEARC19: Wrangling Rogues: A Case Study on Managing Experimental Post-Moore A...

ICIAM 2019: Reproducible Linear Algebra from Application to Architecture

ICIAM 2019: A New Algorithm Model for Massive-Scale Streaming Graph Analysis

Novel Architectures for Applications in Data Science and Beyond

Characterization of Emu Chick with Microbenchmarks

Augmented Arithmetic Operations Proposed for IEEE-754 2018

Graph Analysis: New Algorithm Models, New Architectures

CRNCH Rogues Gallery: A Community Core for Novel Computing Platforms

A New Algorithm Model for Massive-Scale Streaming Graph Analysis

Recently uploaded

The Building Blocks of QuestDB, a Time Series Database

Talk Delivered at Valencia Codes Meetup 2024-06.

Traditionally, databases have treated timestamps as just another data type. However, when performing real-time analytics, timestamps should be first-class citizens, and we need rich time semantics to get the most out of our data. We also need to deal with ever-growing datasets while staying performant, which is as fun as it sounds.

It is no wonder time-series databases are now more popular than ever before. Join me in this session to learn about the internal architecture and building blocks of QuestDB, an open source time-series database designed for speed. We will also review a history of some of the changes we have gone through over the past two years to deal with late and unordered data, non-blocking writes, read-replicas, or faster batch ingestion.

Chatty Kathy - UNC Bootcamp Final Project Presentation - Final Version - 5.23...

SlideShare Description for "Chatty Kathy - UNC Bootcamp Final Project Presentation"

Title: Chatty Kathy: Enhancing Physical Activity Among Older Adults

Description:

Discover how Chatty Kathy, an innovative project developed at the UNC Bootcamp, aims to tackle the challenge of low physical activity among older adults. Our AI-driven solution uses peer interaction to boost and sustain exercise levels, significantly improving health outcomes. This presentation covers our problem statement, the rationale behind Chatty Kathy, synthetic data and persona creation, model performance metrics, a visual demonstration of the project, and potential future developments. Join us for an insightful Q&A session to explore the potential of this groundbreaking project.

Project Team: Jay Requarth, Jana Avery, John Andrews, Dr. Dick Davis II, Nee Buntoum, Nam Yeongjin & Mat Nicholas

Enhanced Enterprise Intelligence with your personal AI Data Copilot.pdf

Recently we have observed the rise of open-source Large Language Models (LLMs) that are community-driven or developed by the AI market leaders, such as Meta (Llama3), Databricks (DBRX) and Snowflake (Arctic). On the other hand, there is a growth in interest in specialized, carefully fine-tuned yet relatively small models that can efficiently assist programmers in day-to-day tasks. Finally, Retrieval-Augmented Generation (RAG) architectures have gained a lot of traction as the preferred approach for LLMs context and prompt augmentation for building conversational SQL data copilots, code copilots and chatbots.

In this presentation, we will show how we built upon these three concepts a robust Data Copilot that can help to democratize access to company data assets and boost performance of everyone working with data platforms.

Why do we need yet another (open-source) Copilot?

How can we build one?

Architecture and evaluation

06-04-2024 - NYC Tech Week - Discussion on Vector Databases, Unstructured Dat...

06-04-2024 - NYC Tech Week - Discussion on Vector Databases, Unstructured Data and AI

Round table discussion of vector databases, unstructured data, AI, big data, real-time, robots and Milvus.

A lively discussion with NJ Gen AI Meetup Lead, Prasad and Procure.FYI's Co-Found

Analysis insight about a Flyball dog competition team's performance

Insights from my analysis of a Flyball dog competition team's performance over the last year. Find more: https://github.com/rolandnagy-ds/flyball_race_analysis/tree/main

Global Situational Awareness of A.I. and where its headed

You can see the future first in San Francisco.

Over the past year, the talk of the town has shifted from $10 billion compute clusters to $100 billion clusters to trillion-dollar clusters. Every six months another zero is added to the boardroom plans. Behind the scenes, there’s a fierce scramble to secure every power contract still available for the rest of the decade, every voltage transformer that can possibly be procured. American big business is gearing up to pour trillions of dollars into a long-unseen mobilization of American industrial might. By the end of the decade, American electricity production will have grown tens of percent; from the shale fields of Pennsylvania to the solar farms of Nevada, hundreds of millions of GPUs will hum.

The AGI race has begun. We are building machines that can think and reason. By 2025/26, these machines will outpace college graduates. By the end of the decade, they will be smarter than you or I; we will have superintelligence, in the true sense of the word. Along the way, national security forces not seen in half a century will be unleashed, and before long, The Project will be on. If we’re lucky, we’ll be in an all-out race with the CCP; if we’re unlucky, an all-out war.

Everyone is now talking about AI, but few have the faintest glimmer of what is about to hit them. Nvidia analysts still think 2024 might be close to the peak. Mainstream pundits are stuck on the wilful blindness of “it’s just predicting the next word”. They see only hype and business-as-usual; at most they entertain another internet-scale technological change.

Before long, the world will wake up. But right now, there are perhaps a few hundred people, most of them in San Francisco and the AI labs, that have situational awareness. Through whatever peculiar forces of fate, I have found myself amongst them. A few years ago, these people were derided as crazy—but they trusted the trendlines, which allowed them to correctly predict the AI advances of the past few years. Whether these people are also right about the next few years remains to be seen. But these are very smart people—the smartest people I have ever met—and they are the ones building this technology. Perhaps they will be an odd footnote in history, or perhaps they will go down in history like Szilard and Oppenheimer and Teller. If they are seeing the future even close to correctly, we are in for a wild ride.

Let me tell you what we see.

ViewShift: Hassle-free Dynamic Policy Enforcement for Every Data Lake

Dynamic policy enforcement is becoming an increasingly important topic in today’s world where data privacy and compliance is a top priority for companies, individuals, and regulators alike. In these slides, we discuss how LinkedIn implements a powerful dynamic policy enforcement engine, called ViewShift, and integrates it within its data lake. We show the query engine architecture and how catalog implementations can automatically route table resolutions to compliance-enforcing SQL views. Such views have a set of very interesting properties: (1) They are auto-generated from declarative data annotations. (2) They respect user-level consent and preferences (3) They are context-aware, encoding a different set of transformations for different use cases (4) They are portable; while the SQL logic is only implemented in one SQL dialect, it is accessible in all engines.

#SQL #Views #Privacy #Compliance #DataLake

Adjusting OpenMP PageRank : SHORT REPORT / NOTES

For massive graphs that fit in RAM, but not in GPU memory, it is possible to take advantage of a shared memory system with multiple CPUs, each with multiple cores, to accelerate pagerank computation. If the NUMA architecture of the system is properly taken into account with good vertex partitioning, the speedup can be significant. To take steps in this direction, experiments are conducted to implement pagerank in OpenMP using two different approaches, uniform and hybrid. The uniform approach runs all primitives required for pagerank in OpenMP mode (with multiple threads). On the other hand, the hybrid approach runs certain primitives in sequential mode (i.e., sumAt, multiply).

My burning issue is homelessness K.C.M.O.

My burning issue is homelessness in Kansas City, MO

To: Tom Tresser

From: Roger Warren

Levelwise PageRank with Loop-Based Dead End Handling Strategy : SHORT REPORT ...

Abstract: Levelwise PageRank is an alternative method of PageRank computation which decomposes the input graph into a directed acyclic block-graph of strongly connected components, and processes them in topological order, one level at a time. This enables calculation of ranks in a distributed fashion without per-iteration communication, unlike the standard method where all vertices are processed in each iteration. It does, however, require that the input graph contain no dead ends. Here, the native non-distributed performance of Levelwise PageRank was compared against Monolithic PageRank on a CPU as well as a GPU. To ensure a fair comparison, Monolithic PageRank was also performed on a graph where vertices were split by components. Results indicate that Levelwise PageRank is about as fast as Monolithic PageRank on the CPU, but quite a bit slower on the GPU. The slowdown on the GPU is likely caused by submitting a large number of small workloads, and is expected to be a non-issue when the computation is performed on massive graphs.

Recently uploaded (20)

The Building Blocks of QuestDB, a Time Series Database

Chatty Kathy - UNC Bootcamp Final Project Presentation - Final Version - 5.23...

Enhanced Enterprise Intelligence with your personal AI Data Copilot.pdf

06-04-2024 - NYC Tech Week - Discussion on Vector Databases, Unstructured Dat...

Analysis insight about a Flyball dog competition team's performance

Global Situational Awareness of A.I. and where its headed

ViewShift: Hassle-free Dynamic Policy Enforcement for Every Data Lake

Machine learning and optimization techniques for electrical drives.pptx

Levelwise PageRank with Loop-Based Dead End Handling Strategy : SHORT REPORT ...

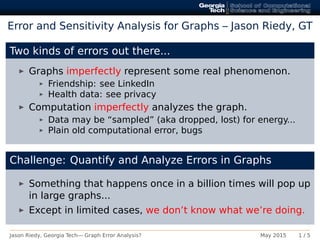

Network Challenge: Error and Sensitivity Analysis

- 1. Error and Sensitivity Analysis for Graphs (Jason Riedy, GT)
  - Two kinds of errors out there...
    - Graphs imperfectly represent some real phenomenon.
      - Friendship: see LinkedIn
      - Health data: see privacy
    - Computation imperfectly analyzes the graph.
      - Data may be “sampled” (aka dropped, lost) for energy...
      - Plain old computational error, bugs
  - Challenge: Quantify and Analyze Errors in Graphs
    - Something that happens once in a billion times will pop up in large graphs...
    - Except in limited cases, we don’t know what we’re doing.
  - Jason Riedy, Georgia Tech: Graph Error Analysis? May 2015

- 2. Quick Example: Global Clustering Coefficient
  - [Figure: error (− to +) versus fraction of graph used, kinda (− to +)]
  - From Zakrzewska & Bader, “Measuring the Sensitivity of Graph Metrics to Missing Data,” PPAM 2013

- 3. Quick Example: Local Clustering Coefficients
  - [Figure: error (− to +) versus fraction of graph used, kinda (− to +)]
  - From Zakrzewska & Bader, “Measuring the Sensitivity of Graph Metrics to Missing Data,” PPAM 2013
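The two clustering-coefficient examples above ask how a metric drifts as less of the graph is observed. A minimal sketch of that kind of experiment follows. It assumes missing data means dropping each edge independently with a fixed probability, which is only one possible model and is not taken from the cited paper; the function names and the toy graph are hypothetical.

```python
import random
from itertools import combinations


def build_adjacency(edges):
    """Undirected adjacency sets; self-loops are ignored."""
    adj = {}
    for u, v in edges:
        if u == v:
            continue
        adj.setdefault(u, set()).add(v)
        adj.setdefault(v, set()).add(u)
    return adj


def global_clustering(adj):
    """Closed wedges divided by all wedges (0.0 when there are no wedges)."""
    closed = 0
    wedges = 0
    for nbrs in adj.values():
        k = len(nbrs)
        wedges += k * (k - 1) // 2
        closed += sum(1 for a, b in combinations(nbrs, 2) if b in adj[a])
    return closed / wedges if wedges else 0.0


def local_clustering(adj):
    """Per-vertex fraction of neighbor pairs that are themselves linked."""
    result = {}
    for v, nbrs in adj.items():
        k = len(nbrs)
        pairs = k * (k - 1) // 2
        links = sum(1 for a, b in combinations(nbrs, 2) if b in adj[a])
        result[v] = links / pairs if pairs else 0.0
    return result


def drop_edges(edges, keep_fraction, rng):
    """Assumed sampling model: keep each edge independently."""
    return [e for e in edges if rng.random() < keep_fraction]


# Toy graph: a triangle (0, 1, 2) with a pendant vertex 3 attached to 2.
edges = [(0, 1), (1, 2), (0, 2), (2, 3)]
full = build_adjacency(edges)
full_global = global_clustering(full)
full_local = local_clustering(full)

rng = random.Random(0)
for keep in (1.0, 0.75, 0.5):
    sampled = build_adjacency(drop_edges(edges, keep, rng))
    error = global_clustering(sampled) - full_global
    print(f"keep={keep:.2f} global clustering error={error:+.3f}")
```

On a real graph the loop would be repeated over many random seeds per fraction, so that the spread of the error can be seen as well as its mean.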

- 4. Quick Example: Streaming Magnifies Errors
  - Updating PageRank via simple linear algebra:
    - [Figure: relative residual (1-norm), axis ticks at 1e-06 and 1e-05, versus number of updates (0 to 5000); panels: caidaRouterLevel, coPapersCiteseer, power]
    - Algorithms compared:
      - Restarted
      - $P_\Delta \Delta x = (A_\Delta^T D_\Delta^{-1} - A^T D^{-1}) x$
      - $P_\Delta \Delta x = (A_\Delta^T D_\Delta^{-1} - A^T D^{-1}) x + r$
  - Ranking looks just fine! Until everything falls apart...
  - Paying attention to the initial error works.
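The difference between the two update formulas on this slide can be illustrated with a small sketch. It rests on assumptions the slide does not state: that $P_\Delta = I - \alpha A_\Delta^T D_\Delta^{-1}$ with damping factor $\alpha = 0.85$, that the right-hand side carries the same factor $\alpha$, that the teleportation vector is uniform, and that every vertex has at least one out-edge. The solver is a plain fixed-point iteration chosen for brevity, and all function names and the toy graph are hypothetical.

```python
ALPHA = 0.85  # assumed damping factor; the slide does not give one


def apply_m(out_nbrs, x):
    """Return A^T D^{-1} x: each vertex splits its value among its out-neighbors."""
    y = [0.0] * len(x)
    for i, nbrs in out_nbrs.items():
        share = x[i] / len(nbrs)
        for j in nbrs:
            y[j] += share
    return y


def residual(out_nbrs, x):
    """r = (1 - alpha) v - (I - alpha A^T D^{-1}) x, with uniform v."""
    n = len(x)
    mx = apply_m(out_nbrs, x)
    return [(1 - ALPHA) / n - x[i] + ALPHA * mx[i] for i in range(n)]


def solve(out_nbrs, b, iters=50):
    """Approximate (I - alpha A^T D^{-1}) z = b by iterating z <- b + alpha M z."""
    z = [0.0] * len(b)
    for _ in range(iters):
        mz = apply_m(out_nbrs, z)
        z = [b[i] + ALPHA * mz[i] for i in range(len(b))]
    return z


def stream_updates(out_nbrs, x, new_edges, keep_residual):
    """Insert edges one at a time, correcting x by a solved-for delta each time."""
    g = {i: set(nbrs) for i, nbrs in out_nbrs.items()}
    x = list(x)
    n = len(x)
    for u, v in new_edges:
        old_mx = apply_m(g, x)
        r = residual(g, x) if keep_residual else [0.0] * n
        g[u].add(v)
        new_mx = apply_m(g, x)
        rhs = [ALPHA * (new_mx[i] - old_mx[i]) + r[i] for i in range(n)]
        dx = solve(g, rhs)
        x = [x[i] + dx[i] for i in range(n)]
    return g, x


def norm1(vec):
    return sum(abs(t) for t in vec)


graph = {0: {1, 2}, 1: {2}, 2: {0}, 3: {0, 2}}
x0 = [0.25] * 4  # deliberately crude start, so the initial residual is large
inserts = [(1, 3), (2, 3), (0, 3)]

g_plain, x_plain = stream_updates(graph, x0, inserts, keep_residual=False)
g_with_r, x_with_r = stream_updates(graph, x0, inserts, keep_residual=True)
res_plain = norm1(residual(g_plain, x_plain))
res_with_r = norm1(residual(g_with_r, x_with_r))
print("residual without r:", res_plain)
print("residual with r:   ", res_with_r)
```

Under these assumptions, the update without $r$ never removes the error already present in $x$, so the starting residual is carried through every update. Folding $r$ into the right-hand side lets each solve absorb it.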

- 5. Challenge: Build Error & Sensitivity Analysis for Graphs
  - Possible starting points: how do you measure or model error in...
    - connected components?
      - Is the graph a window into the “real” network?
      - Can you leverage link prediction between components?
      - Measure precision and recall against... what?
    - linear-algebra-ish metrics like PageRank?
      - Is this easier?
      - Mapping backward error analysis to a discrete matrix...
  - What is success?
    - Building mental and formal methods for addressing error and sensitivity that can be condensed to rules of thumb.
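One possible reading of "precision and recall" for connected components is sketched below. It assumes, purely for illustration, that a fuller graph is available as the reference and that error is measured over vertex pairs that share a component. The slide leaves the choice of reference open, so this is one option rather than an answer. The function names are hypothetical, and the pair enumeration is quadratic, so it suits only small graphs.

```python
from itertools import combinations


def components(n, edges):
    """Label vertices 0..n-1 by component using union-find with path halving."""
    parent = list(range(n))

    def find(a):
        while parent[a] != a:
            parent[a] = parent[parent[a]]
            a = parent[a]
        return a

    for u, v in edges:
        ru, rv = find(u), find(v)
        if ru != rv:
            parent[ru] = rv
    return [find(i) for i in range(n)]


def same_component_pairs(labels):
    """All unordered vertex pairs that share a component label."""
    return {
        (a, b)
        for a, b in combinations(range(len(labels)), 2)
        if labels[a] == labels[b]
    }


def pair_precision_recall(n, reference_edges, observed_edges):
    """Pairwise precision and recall of observed components against the reference."""
    ref = same_component_pairs(components(n, reference_edges))
    obs = same_component_pairs(components(n, observed_edges))
    hits = len(ref & obs)
    precision = hits / len(obs) if obs else 1.0
    recall = hits / len(ref) if ref else 1.0
    return precision, recall


# Reference: a path 0-1-2-3 plus a separate edge 4-5.
reference = [(0, 1), (1, 2), (2, 3), (4, 5)]
# Observed: the same graph with edge (1, 2) lost.
observed = [(0, 1), (2, 3), (4, 5)]
precision, recall = pair_precision_recall(6, reference, observed)
print("precision:", precision, "recall:", recall)
```

When the observed edges are a subset of the reference edges, precision is always 1.0 and only recall carries information. That is one reason the choice of reference matters.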