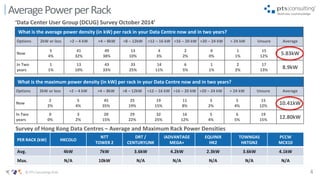

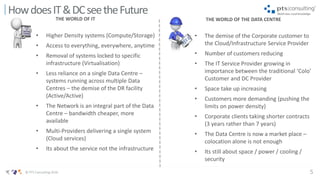

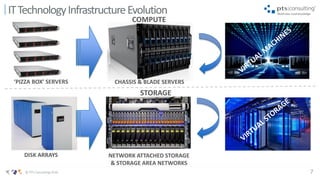

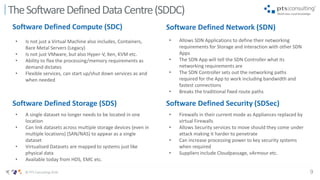

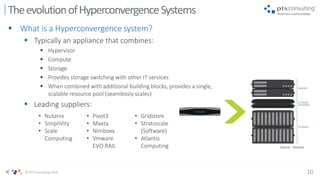

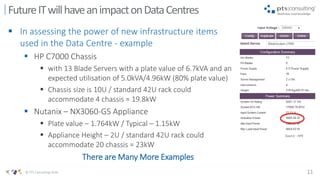

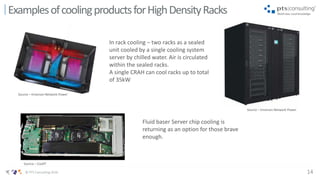

This document provides an overview of, and predictions for, enterprise data center trends from 2020 onwards. It discusses how IT requirements, including higher power densities and greater virtualization, are evolving faster than traditional data center design timelines allow. This mismatch is driving a shift toward software-defined infrastructure, hyperconverged systems, and, as average rack power increases, new cooling solutions. Data centers will need to adapt their cooling methods to handle densities of 10 kW per rack and beyond. The pace of IT evolution makes it difficult for data center operators to keep up with changing power and infrastructure demands.