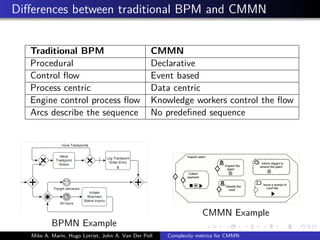

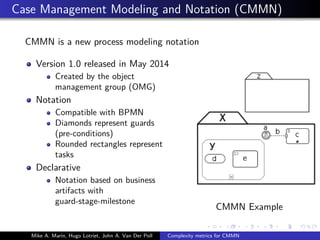

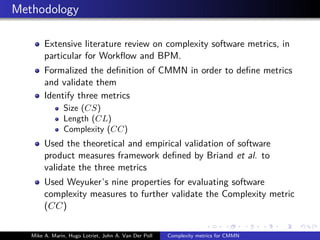

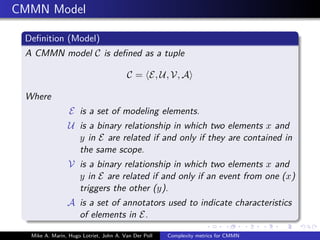

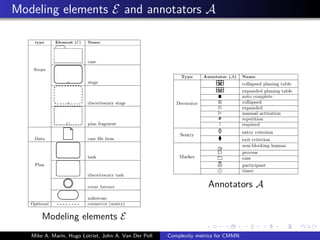

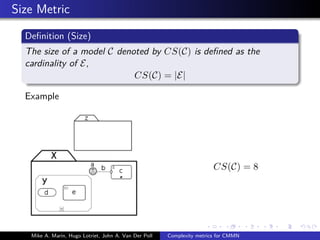

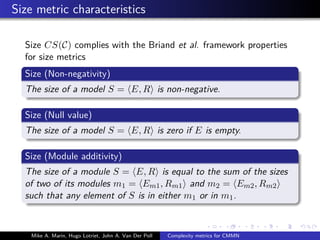

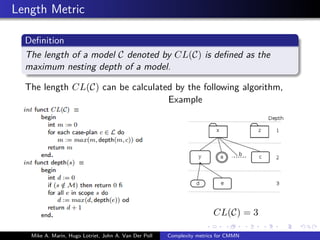

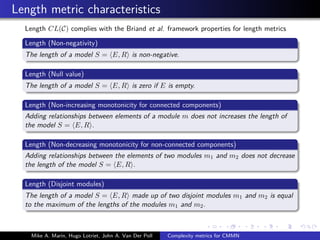

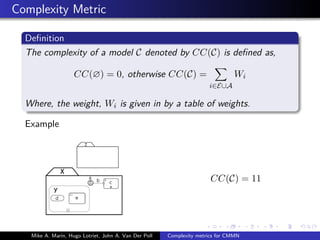

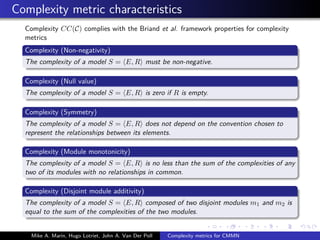

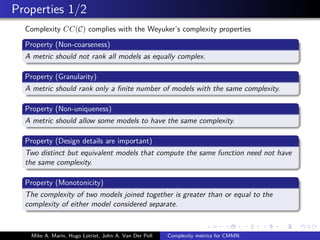

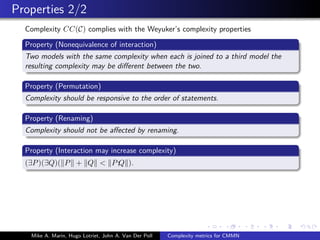

The document explores complexity metrics for Case Management Model and Notation (CMMN), with the aim of understanding how the complexity of CMMN models compares with that of traditional Business Process Management (BPM) models. It identifies three metrics (size, length, and complexity), derives them from a literature review, and validates them through formal definitions. Empirical validation of these metrics remains future work, as does adapting existing frameworks to better fit declarative notations such as CMMN.