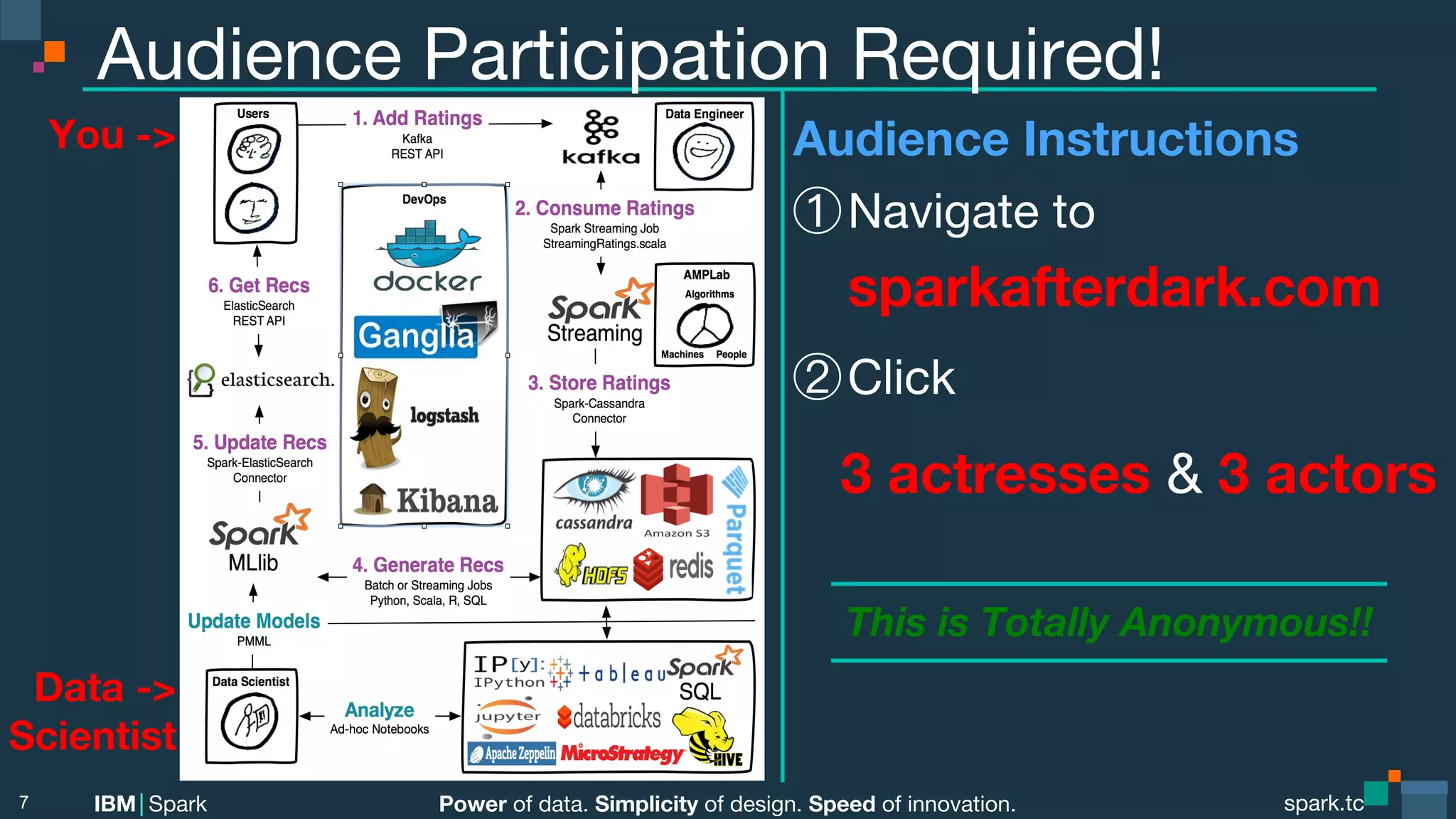

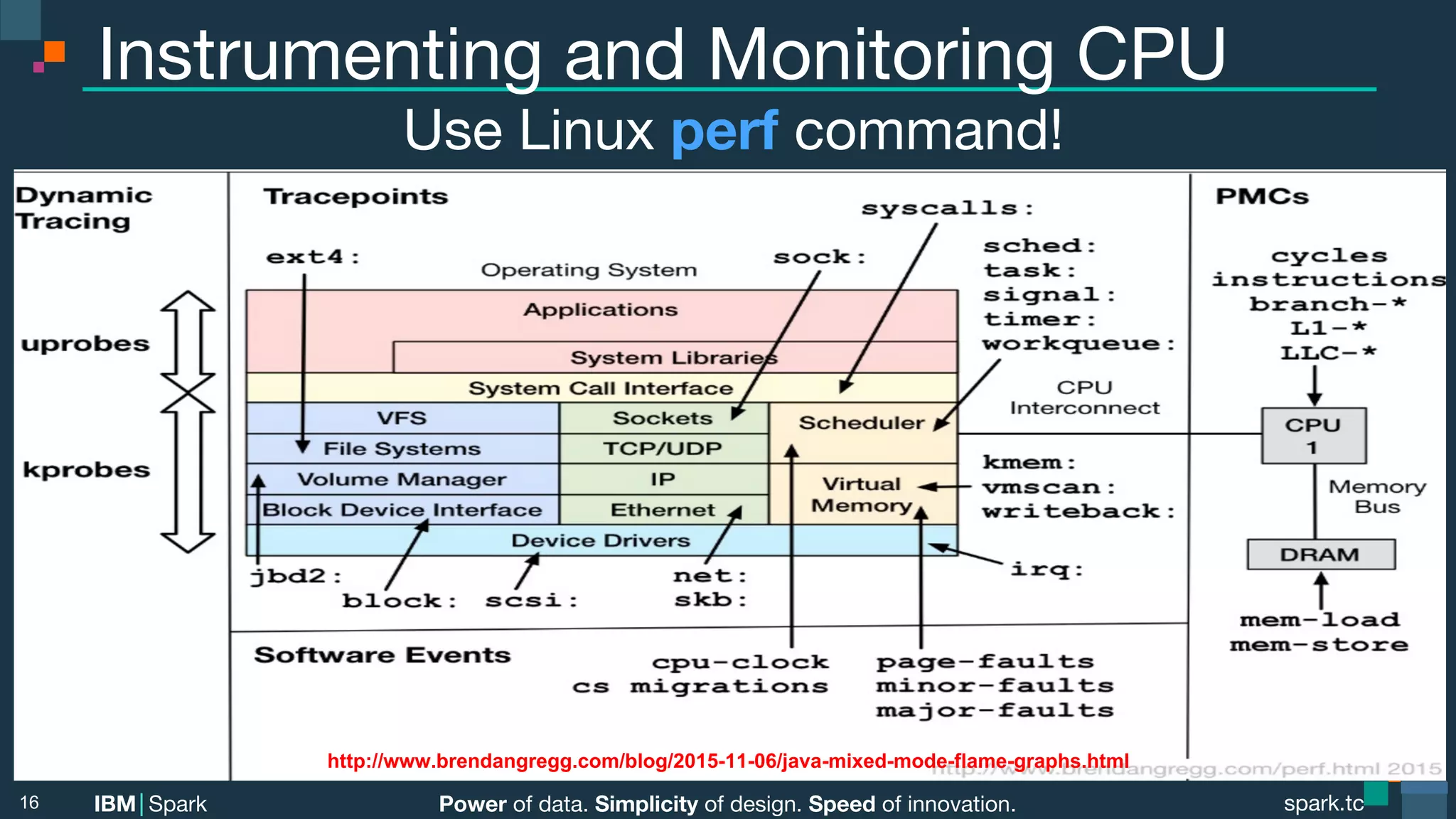

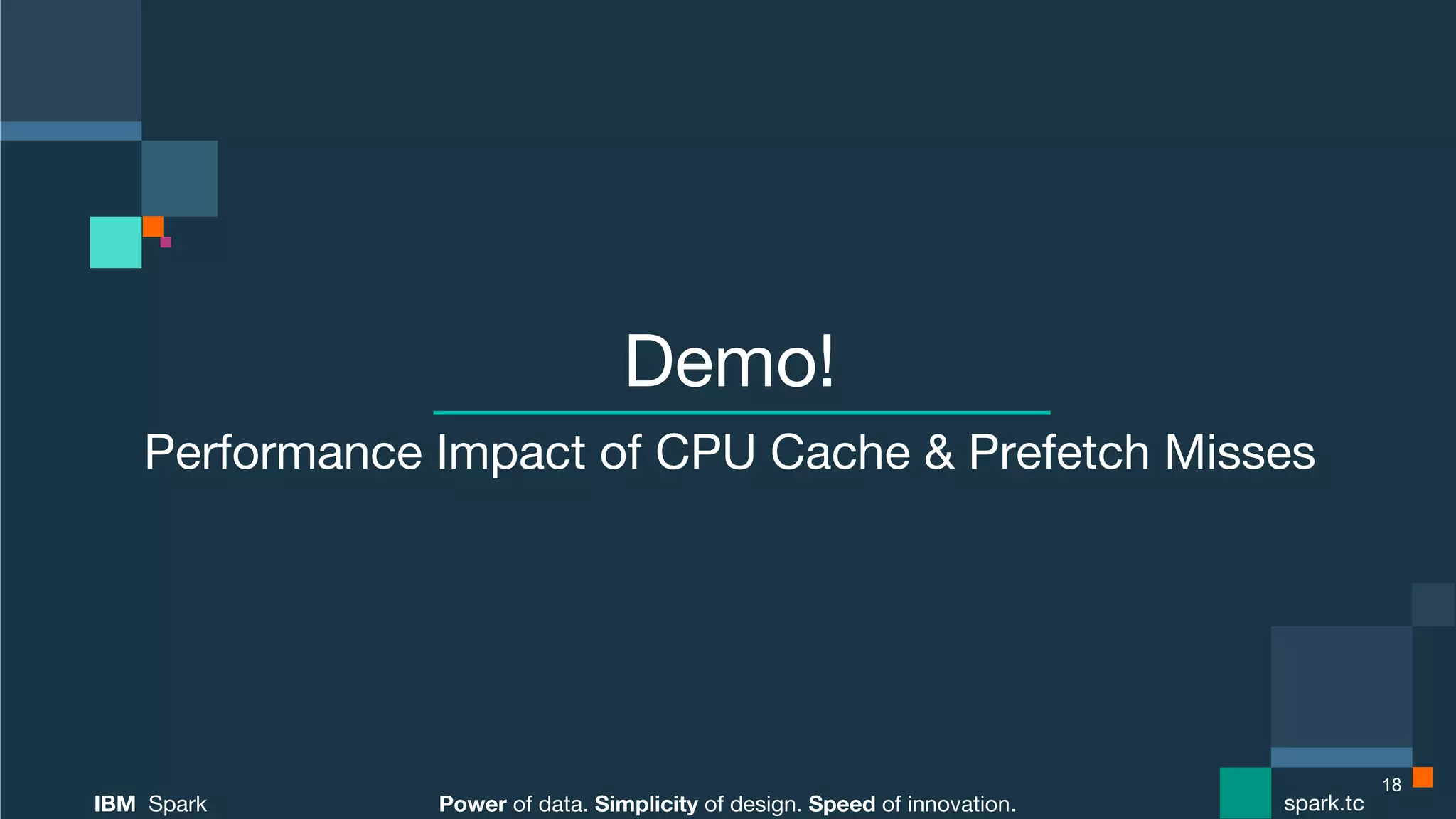

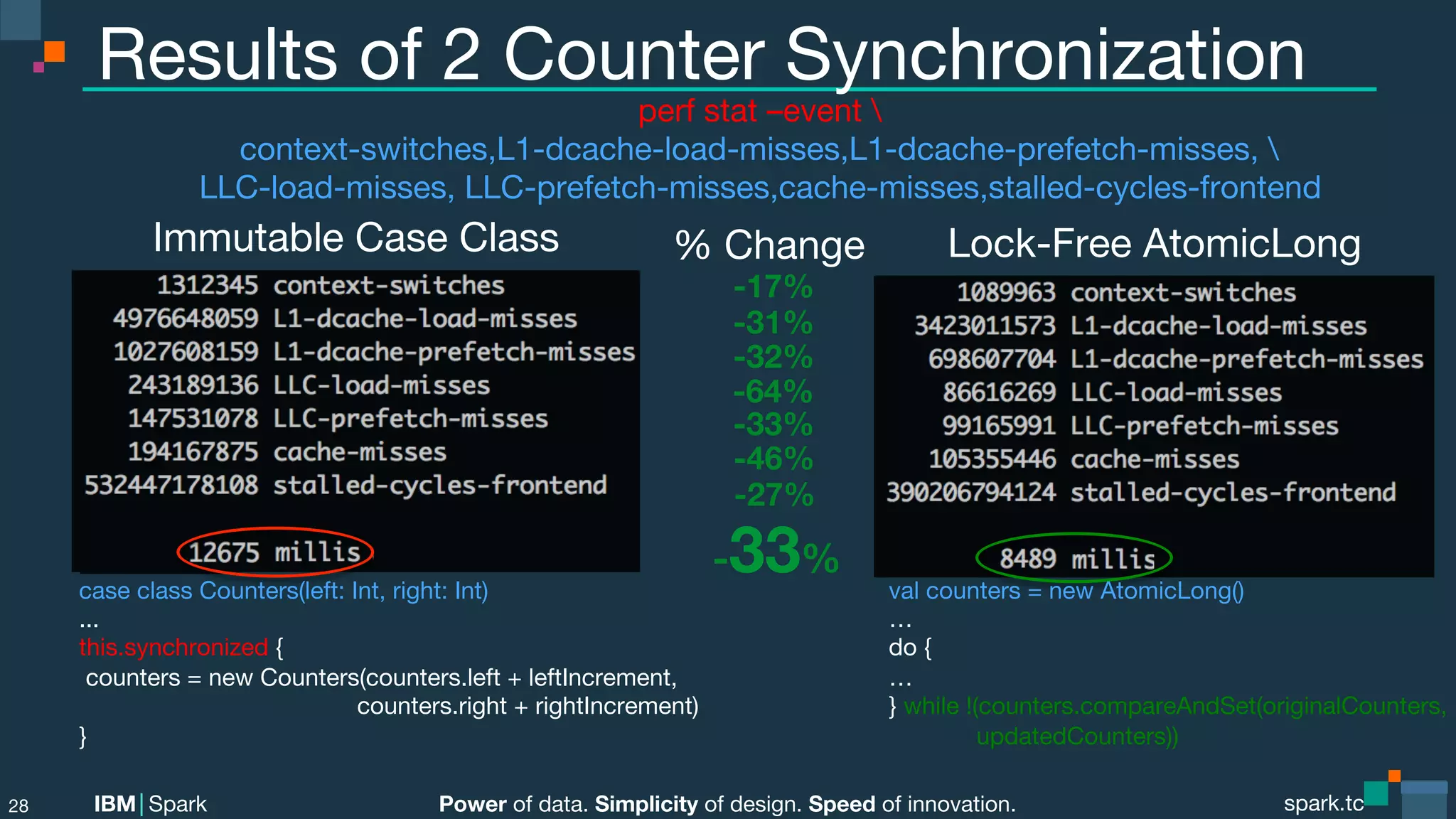

1. The document discusses techniques for improving Apache Spark performance through mechanical sympathy, which means optimizing for hardware performance by considering factors like CPU cache usage and minimizing random memory access.

2. It provides examples of how to improve sorting, matrix multiplication, and thread synchronization by making them more cache-friendly and reducing cache misses and context switches.

3. The speaker demonstrates performance improvements from these techniques using Linux perf and flame graph profiling tools. Optimizations like Project Tungsten that customize Spark for the hardware are also discussed.

![Power of data. Simplicity of design. Speed of innovation.

IBM Spark

spark.tc

CPU Cache Naïve Matrix Multiplication

// Dot product of each row & column vector

for (i <- 0 until numRowsA)

for (j <- 0 until numColsB)

for (k <- 0 until numColsA)

res(i)(j) += matA(i)(k) * matB(k)(j)

Bad: the inner loop steps to a different row of matB on every iteration of k, so the full CPU cache line goes unused and pre-fetching is ineffective](https://image.slidesharecdn.com/melbournesparkmeetupdec092015-151211033419/75/Melbourne-Spark-Meetup-Dec-09-2015-19-2048.jpg)

![CPU Cache Friendly Matrix Multiplication

// Transpose B

for (i <- 0 until numColsB)

for (j <- 0 until numRowsB)

matBT(i)(j) = matB(j)(i)

// Modify algo for Transpose B (OLD: matB(k)(j))

for (i <- 0 until numRowsA)

for (j <- 0 until numColsB)

for (k <- 0 until numColsA)

res(i)(j) += matA(i)(k) * matBT(j)(k)

Good: Full CPU Cache Line, Effective Prefetching](https://image.slidesharecdn.com/melbournesparkmeetupdec092015-151211033419/75/Melbourne-Spark-Meetup-Dec-09-2015-20-2048.jpg)
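The two loops from the slides can be put side by side in plain Scala. This is only a sketch under a few assumptions: the object name `MatMul` and its method names are illustrative and not from the talk, matrices are non-empty `Array[Array[Double]]` values, and the inner dimensions already match.

```scala
object MatMul {
  type Matrix = Array[Array[Double]]

  // Naive version: the inner loop reads matB(k)(j), so every step of k
  // lands in a different row array of matB.
  def multiplyNaive(matA: Matrix, matB: Matrix): Matrix = {
    val numRowsA = matA.length
    val numColsA = matA(0).length
    val numColsB = matB(0).length
    val res = Array.ofDim[Double](numRowsA, numColsB)
    for (i <- 0 until numRowsA; j <- 0 until numColsB; k <- 0 until numColsA)
      res(i)(j) += matA(i)(k) * matB(k)(j)
    res
  }

  // The transpose has numColsB rows and numRowsB columns.
  def transpose(matB: Matrix): Matrix = {
    val numRowsB = matB.length
    val numColsB = matB(0).length
    val matBT = Array.ofDim[Double](numColsB, numRowsB)
    for (i <- 0 until numColsB; j <- 0 until numRowsB)
      matBT(i)(j) = matB(j)(i)
    matBT
  }

  // Cache-friendly version: both matA(i) and matBT(j) are read
  // sequentially along k.
  def multiplyTransposed(matA: Matrix, matB: Matrix): Matrix = {
    val matBT = transpose(matB)
    val numRowsA = matA.length
    val numColsA = matA(0).length
    val numColsB = matBT.length
    val res = Array.ofDim[Double](numRowsA, numColsB)
    for (i <- 0 until numRowsA; j <- 0 until numColsB; k <- 0 until numColsA)
      res(i)(j) += matA(i)(k) * matBT(j)(k)
    res
  }
}
```

Both methods return the same product; only the memory access pattern of the inner loop differs. Any speed-up depends on matrix size and hardware, so it should be measured rather than assumed.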

…

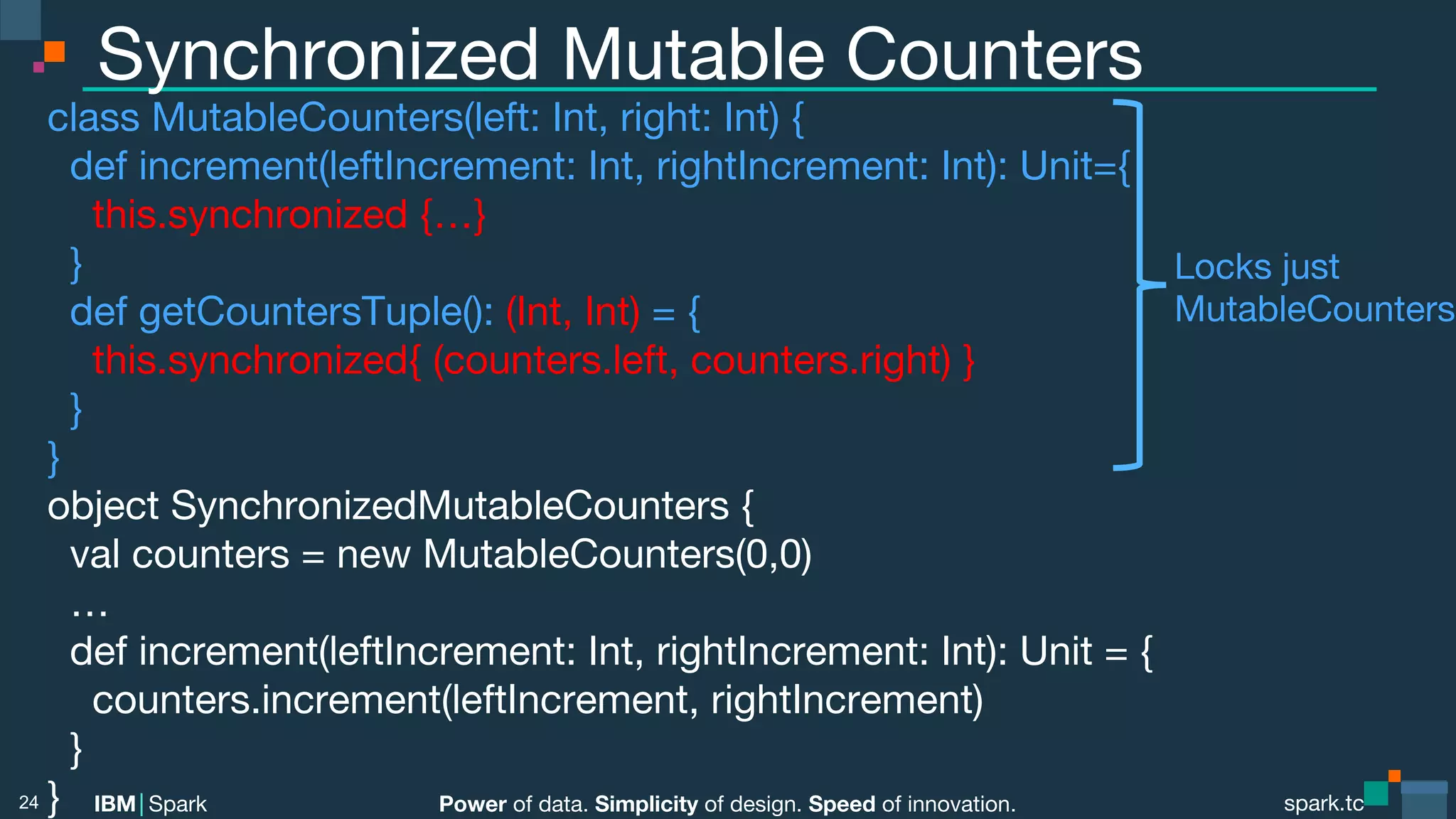

def increment(leftIncrement: Int, rightIncrement: Int): Unit = {

var originalCounters: Counters = null

var updatedCounters: Counters = null

do {

originalCounters = getCounters()

updatedCounters = new Counters(originalCounters.left + leftIncrement,

originalCounters.right + rightIncrement)

}

// Retry lock-free, optimistic compareAndSet() until AtomicRef updates

while (!counters.compareAndSet(originalCounters, updatedCounters))

}

Lock Free!!](https://image.slidesharecdn.com/melbournesparkmeetupdec092015-151211033419/75/Melbourne-Spark-Meetup-Dec-09-2015-25-2048.jpg)
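The retry loop above can be sketched as a complete class. The slide does not show how `Counters` or `counters` are declared, so the immutable case class and the `AtomicReference` field below are assumptions, and the class name `LockFreeCounters` is hypothetical. A plain `while` loop is used in place of `do ... while` so the sketch also compiles on Scala 3.

```scala
import java.util.concurrent.atomic.AtomicReference

// Immutable pair of counters; every update creates a new instance.
final case class Counters(left: Int, right: Int)

class LockFreeCounters {
  private val counters = new AtomicReference(Counters(0, 0))

  def getCounters(): Counters = counters.get()

  def increment(leftIncrement: Int, rightIncrement: Int): Unit = {
    var updated = false
    while (!updated) {
      val original = counters.get()
      val next = Counters(original.left + leftIncrement,
                          original.right + rightIncrement)
      // Succeeds only if no other thread replaced the reference since get();
      // otherwise the loop re-reads the current value and retries.
      updated = counters.compareAndSet(original, next)
    }
  }
}
```

Because both counters live in one immutable object behind a single reference, readers always see a consistent pair without taking a lock.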

![Custom Data Structs & Algos: Sorting

o.a.s.util.collection.unsafe.sort.

UnsafeSortDataFormat: SortDataFormat<RecordPointerAndKeyPrefix, Long[]>. Note: mixing multiple subclasses of SortDataFormat simultaneously will prevent JIT inlining.

UnsafeExternalSorter: in-place external sorting of spilled BytesToBytes data

UnsafeShuffleWriter: supports merging compressed records (if the compression codec supports it, e.g. LZF)

UnsafeInMemorySorter: in-place sorting of BytesToBytesMap data

RecordPointerAndKeyPrefix: pointer (Ptr) plus key prefix; AlphaSort-based, 8-byte aligned sort key; 2x CPU cache-line friendly!](https://image.slidesharecdn.com/melbournesparkmeetupdec092015-151211033419/75/Melbourne-Spark-Meetup-Dec-09-2015-33-2048.jpg)
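The pointer plus key-prefix idea can be illustrated without Spark. The sketch below is an assumption-laden simplification, not Spark's implementation: `PrefixSort`, `PointerAndPrefix`, and `keyPrefix` are hypothetical names, an array index stands in for a memory pointer, keys are assumed to be ASCII strings, and the entries are ordinary objects rather than values packed into a long array.

```scala
object PrefixSort {
  // Pack the first 8 bytes of the key into a Long (zero-padded), so that an
  // unsigned comparison of two prefixes matches byte-wise key order.
  def keyPrefix(key: String): Long = {
    val bytes = key.getBytes("UTF-8")
    var prefix = 0L
    var i = 0
    while (i < 8) {
      val b = if (i < bytes.length) bytes(i) & 0xffL else 0L
      prefix = (prefix << 8) | b
      i += 1
    }
    prefix
  }

  final case class PointerAndPrefix(pointer: Int, prefix: Long)

  // Returns record indices in sorted key order.
  def sortedPointers(records: Array[String]): Array[Int] = {
    val entries: Array[PointerAndPrefix] =
      records.indices.map(p => PointerAndPrefix(p, keyPrefix(records(p)))).toArray
    val ordering = new Ordering[PointerAndPrefix] {
      def compare(a: PointerAndPrefix, b: PointerAndPrefix): Int = {
        val byPrefix = java.lang.Long.compareUnsigned(a.prefix, b.prefix)
        if (byPrefix != 0) byPrefix
        // Only on a prefix tie is the pointer followed to the full record.
        else records(a.pointer).compareTo(records(b.pointer))
      }
    }
    scala.util.Sorting.quickSort(entries)(ordering)
    entries.map(_.pointer)
  }
}
```

Most comparisons are settled by the 8-byte prefix stored next to the pointer, so the sort rarely has to follow a pointer to a record elsewhere in memory.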

![Pushdowns

aka Predicate or Filter Pushdowns

A predicate is a function that returns true or false for a given row

Filters rows deep inside the data source

Reduces the number of rows returned

Data Source must implement PrunedFilteredScan

def buildScan(requiredColumns: Array[String],

filters: Array[Filter]): RDD[Row]](https://image.slidesharecdn.com/melbournesparkmeetupdec092015-151211033419/75/Melbourne-Spark-Meetup-Dec-09-2015-41-2048.jpg)
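To make the `buildScan` contract concrete, here is a Spark-free sketch of what a data source might do with the two arguments. Everything in it is a simplified stand-in: the `Filter`, `EqualTo`, and `GreaterThan` types only imitate the ones in `org.apache.spark.sql.sources`, a row is modelled as a `Map`, and the function returns a `Seq` where Spark expects an `RDD[Row]`.

```scala
object PushdownSketch {
  // Simplified stand-ins for the filters Spark hands to a data source.
  sealed trait Filter
  final case class EqualTo(attribute: String, value: Any) extends Filter
  final case class GreaterThan(attribute: String, value: Int) extends Filter

  type Row = Map[String, Any]

  private def matches(row: Row, filter: Filter): Boolean = filter match {
    case EqualTo(attr, value) => row.get(attr).contains(value)
    case GreaterThan(attr, value) => row.get(attr) match {
      case Some(n: Int) => n > value
      case _            => false
    }
  }

  // Apply every filter at the source, then keep only the required columns,
  // so fewer rows and fewer columns are returned to the caller.
  def buildScan(source: Seq[Row],
                requiredColumns: Array[String],
                filters: Array[Filter]): Seq[Seq[Any]] =
    source
      .filter(row => filters.forall(f => matches(row, f)))
      .map(row => requiredColumns.toSeq.map(row))
}
```

For example, scanning for `gender = "F"` and `age > 20` with required columns `id` and `age` returns only the matching rows, each reduced to those two values.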

![Parquet Data Source

Configuration

spark.sql.parquet.filterPushdown=true

spark.sql.parquet.mergeSchema=false (unless your schema is evolving)

spark.sql.parquet.cacheMetadata=true (requires sqlContext.refreshTable())

spark.sql.parquet.compression.codec=[uncompressed,snappy,gzip,lzo]

DataFrames

val gendersDF = sqlContext.read.format("parquet")

.load("file:/root/pipeline/datasets/dating/genders.parquet")

gendersDF.write.format("parquet").partitionBy("gender")

.save("file:/root/pipeline/datasets/dating/genders.parquet")

SQL

CREATE TABLE genders USING parquet

OPTIONS

(path "file:/root/pipeline/datasets/dating/genders.parquet")](https://image.slidesharecdn.com/melbournesparkmeetupdec092015-151211033419/75/Melbourne-Spark-Meetup-Dec-09-2015-52-2048.jpg)

![Spark SQL API

Datasets: a type-safe API (similar to RDDs) utilizing Tungsten

val ds = sqlContext.read.text("ratings.csv").as[String]

val df = ds.flatMap(_.split(",")).filter(_ != "").toDF() // RDD API, convert to DF

val agg = df.groupBy($"rating").agg(count("*") as "ct").orderBy($"ct" desc)

Typed Aggregators used alongside UDFs and UDAFs

val simpleSum = new Aggregator[Int, Int, Int] with Serializable {

def zero: Int = 0

def reduce(b: Int, a: Int) = b + a

def merge(b1: Int, b2: Int) = b1 + b2

def finish(b: Int) = b

}.toColumn

val sum = Seq(1,2,3,4).toDS().select(simpleSum)

Query files directly without registerTempTable()

%sql SELECT * FROM json.`/datasets/movielens/ml-latest/movies.json`](https://image.slidesharecdn.com/melbournesparkmeetupdec092015-151211033419/75/Melbourne-Spark-Meetup-Dec-09-2015-67-2048.jpg)
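The four methods of the typed aggregator in the slide (`zero`, `reduce`, `merge`, `finish`) are easier to follow when run by hand. The sketch below assumes nothing from Spark: `SimpleAggregator` and `run` are hypothetical names that mirror only the shape of the slide's `Aggregator`, and a `Seq` of `Seq`s stands in for the partitions of a distributed dataset.

```scala
object AggregatorSketch {
  // Hypothetical, Spark-free mirror of the four methods shown in the slide.
  trait SimpleAggregator[I, B, O] {
    def zero: B                  // initial buffer value
    def reduce(b: B, a: I): B    // fold one input into a buffer
    def merge(b1: B, b2: B): B   // combine buffers from two partitions
    def finish(b: B): O          // turn the final buffer into the output
  }

  val simpleSum = new SimpleAggregator[Int, Int, Int] {
    def zero: Int = 0
    def reduce(b: Int, a: Int): Int = b + a
    def merge(b1: Int, b2: Int): Int = b1 + b2
    def finish(b: Int): Int = b
  }

  // reduce runs within each partition, merge runs across partitions.
  def run[I, B, O](agg: SimpleAggregator[I, B, O], partitions: Seq[Seq[I]]): O = {
    val partials = partitions.map(_.foldLeft(agg.zero)(agg.reduce))
    agg.finish(partials.foldLeft(agg.zero)(agg.merge))
  }
}
```

Splitting `Seq(1, 2, 3, 4)` into two partitions produces partial sums that `merge` then combines, which is why `reduce` and `merge` are defined separately.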