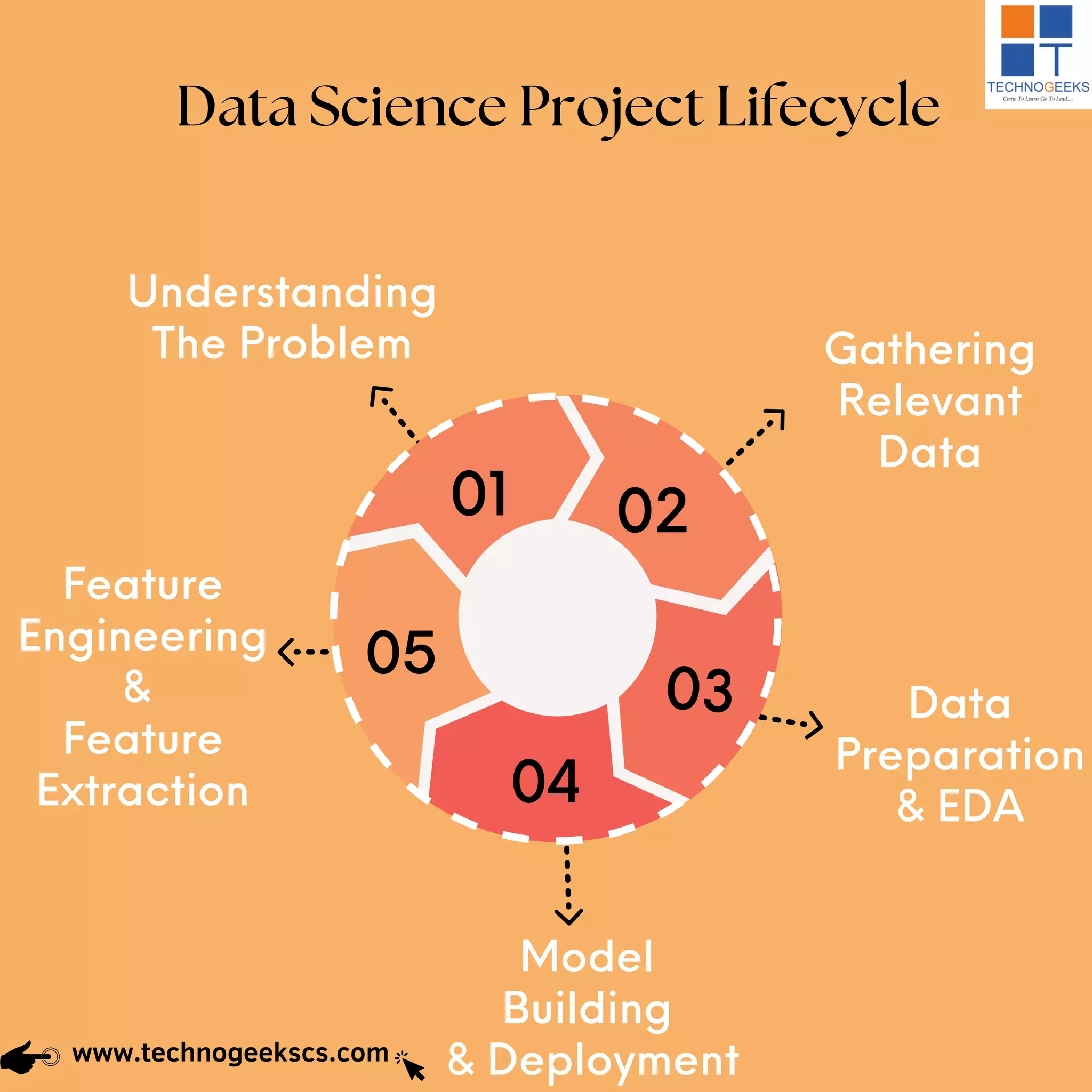

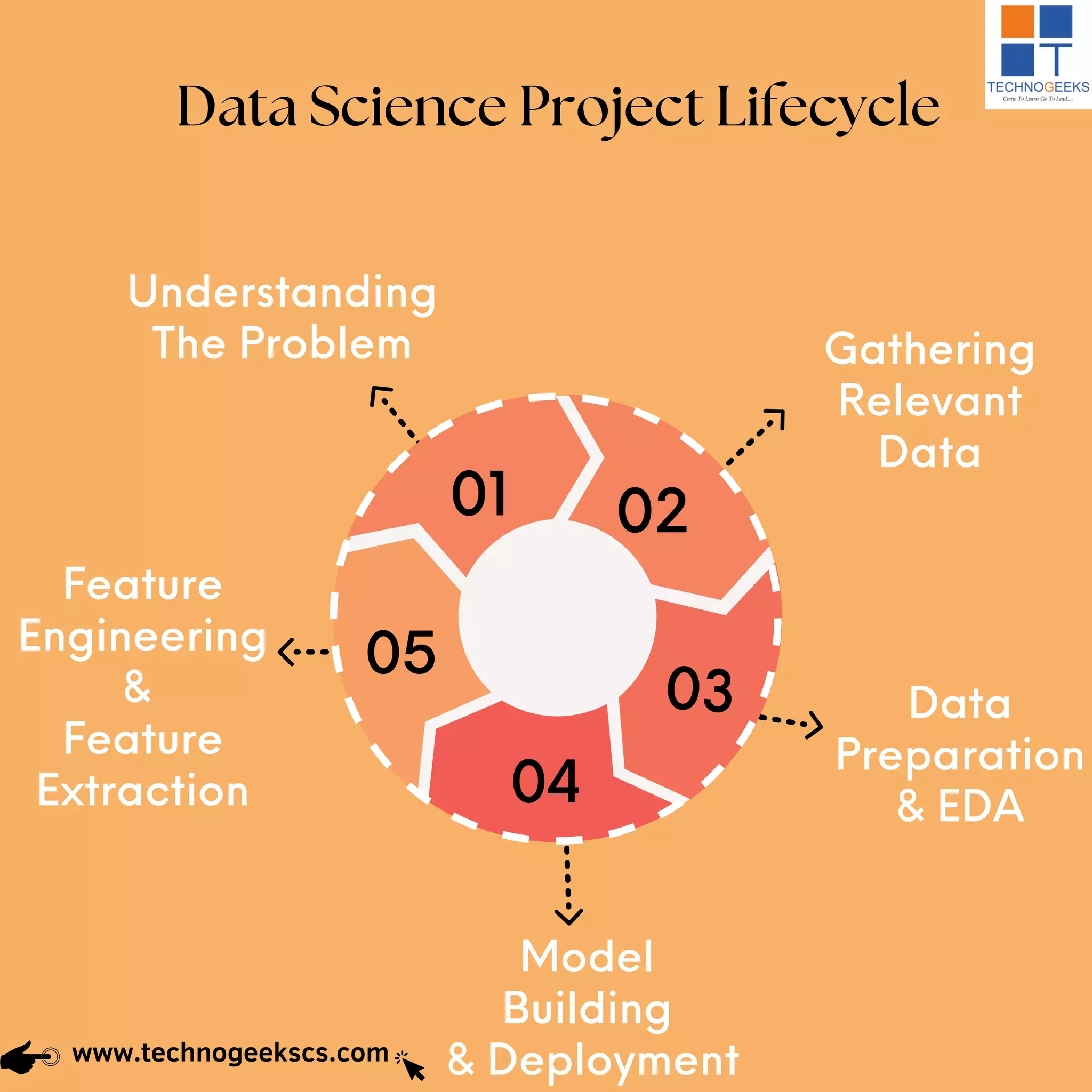

The document outlines the data science project lifecycle in six stages: understanding the problem, gathering relevant data, preparing the data, feature engineering, model building, and deployment. The early stages break the business problem down into a question that data can answer, and the middle stages get the data into a form suitable for analysis and modeling. The model should be evaluated thoroughly before deployment so that the solution put into production actually addresses the original problem.
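
To make the order of the stages concrete, here is a minimal Python sketch of the lifecycle as a chain of functions. It is an illustration only, not something taken from the slides: the toy dataset `RAW`, every function name, the threshold rule standing in for a model, and the 0.8 deployment bar are all assumptions chosen for brevity.

```python
from statistics import mean

# Hypothetical toy records: (hours_studied, passed_exam). Purely illustrative.
RAW = [(1.0, 0), (2.0, 0), (None, 1), (4.0, 1), (5.0, 1), (6.0, 1)]


def understand_problem():
    # Stage 1: state the business question as a measurable target.
    return {"target": "passed_exam", "metric": "accuracy"}


def gather_data():
    # Stage 2: a real project would pull from databases, files, or APIs.
    return list(RAW)


def prepare_data(rows):
    # Stage 3: drop records with a missing feature value.
    return [r for r in rows if r[0] is not None]


def engineer_features(rows):
    # Stage 4: derive a model-ready feature (hours centred on the mean).
    avg = mean(h for h, _ in rows)
    return [(h - avg, y) for h, y in rows]


def build_model(rows):
    # Stage 5: a trivial threshold rule standing in for a trained model.
    return lambda x: 1 if x > 0 else 0


def evaluate(model, rows):
    # Evaluation step: fraction of correct predictions.
    # Scored on the same rows for brevity; a real project would hold out data.
    return sum(model(x) == y for x, y in rows) / len(rows)


def run_lifecycle(min_score=0.8):
    spec = understand_problem()
    rows = engineer_features(prepare_data(gather_data()))
    model = build_model(rows)
    score = evaluate(model, rows)
    # Stage 6: deploy only if evaluation clears the (assumed) bar.
    deployed = score >= min_score
    return spec, score, deployed


if __name__ == "__main__":
    print(run_lifecycle())
```

Each function consumes the output of the previous stage, which mirrors how the lifecycle is described: a weak problem definition or poorly prepared data propagates into every later step, and the evaluation result gates deployment.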