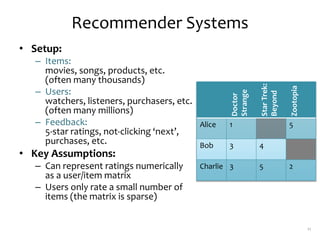

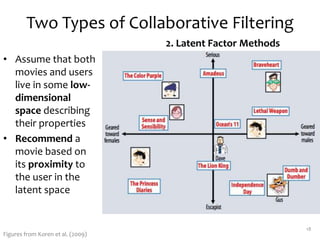

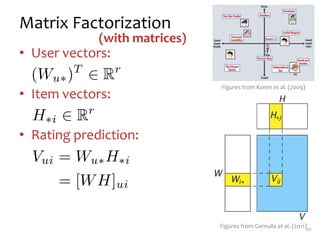

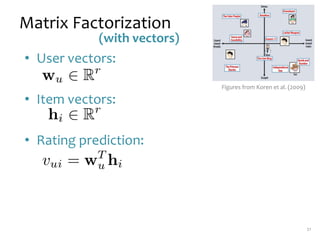

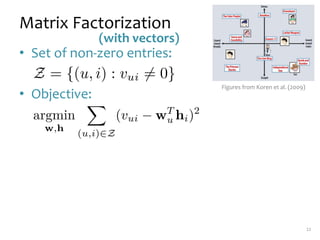

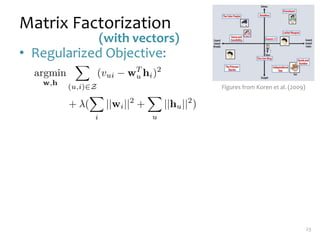

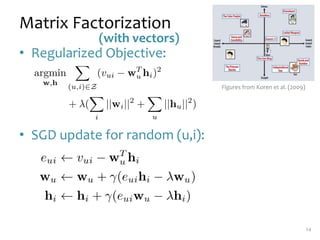

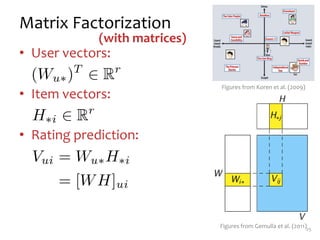

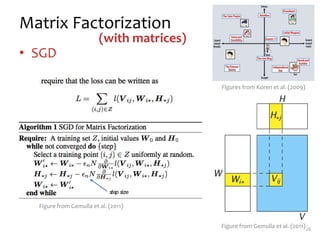

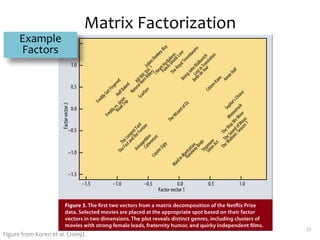

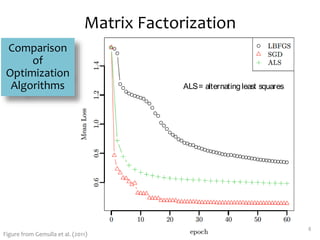

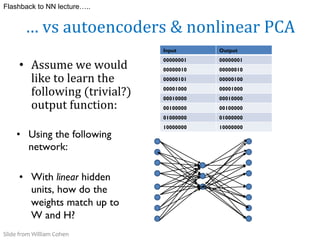

Matrix factorization is a technique used in collaborative filtering recommender systems. It represents both users and items as vectors in a low-dimensional latent factor space and predicts a user's rating of an item as the dot product of the two vectors. The model is typically trained with stochastic gradient descent to minimize the regularized squared error between actual and predicted ratings. The slides also relate the same factorization to linear regression (including many outputs at once), PCA, and clustering.
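The training procedure described above can be sketched in a few lines of NumPy. This is a minimal illustration, not the method from the slides: the function name `factorize_sgd`, the hyperparameters (`rank`, `lr`, `reg`, `epochs`), and the toy ratings are all assumptions chosen for the example.

```python
import numpy as np

def factorize_sgd(ratings, n_users, n_items, rank=2, lr=0.02, reg=0.05,
                  epochs=500, seed=0):
    """Fit V ~ W @ H from (user, item, rating) triples with plain SGD.

    Hypothetical helper: minimizes squared error on the observed entries
    plus an L2 penalty (weight `reg`) on the factors involved.
    """
    rng = np.random.default_rng(seed)
    W = 0.1 * rng.standard_normal((n_users, rank))  # user factors, n x r
    H = 0.1 * rng.standard_normal((rank, n_items))  # item factors, r x m
    ratings = list(ratings)
    for _ in range(epochs):
        # Visit the observed ratings in a fresh random order each epoch.
        for idx in rng.permutation(len(ratings)):
            i, j, v = ratings[idx]
            w_i = W[i].copy()             # keep the old user vector for H's update
            err = v - w_i @ H[:, j]       # residual of the dot-product prediction
            W[i] += lr * (err * H[:, j] - reg * w_i)
            H[:, j] += lr * (err * w_i - reg * H[:, j])
    return W, H

# Toy data (made up): 4 users, 3 movies, 8 observed ratings.
ratings = [(0, 0, 5.0), (0, 1, 3.0), (1, 0, 4.0), (1, 2, 1.0),
           (2, 1, 1.0), (2, 2, 5.0), (3, 0, 1.0), (3, 2, 4.0)]
W, H = factorize_sgd(ratings, n_users=4, n_items=3)
pred = W @ H  # dense matrix of predicted ratings, including unobserved cells
```

Only the observed entries contribute to the updates, so the unobserved cells of `pred` are the model's recommendations rather than reconstructions.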

![Recovering latent factors in a matrix: V (n users × m movies), with V[i,j] = user i's rating of movie j, is approximated by W (n × r, rows x1 y1 … xn yn) times H (r × m, rows a1 … am and b1 … bm). Slide from William Cohen](https://image.slidesharecdn.com/lecture26-mf-220424155810/85/lecture244-mf-pptx-29-320.jpg)

![… is like linear regression: Y ~ W H with m = 1 regressors. W holds n instances (e.g., 150) by r features (e.g., 4: pl, pw, sl, sw), H holds the weights w1 … w4, and Y[i,1] = instance i's prediction. Slide from William Cohen](https://image.slidesharecdn.com/lecture26-mf-220424155810/85/lecture244-mf-pptx-30-320.jpg)

![… for many outputs at once: Y ~ W H with m regressors. W holds n instances (e.g., 150) by r features (e.g., 4), H holds the weights w11, w12, …, and Y[i,j] = instance i's prediction for regression task j … where we also have to find the dataset! Slide from William Cohen](https://image.slidesharecdn.com/lecture26-mf-220424155810/85/lecture244-mf-pptx-31-320.jpg)
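The regression analogy can be made concrete: when W is a known feature matrix rather than something to be learned, recovering H is an ordinary least-squares problem, and one call solves all m regression tasks at once. The sketch below uses synthetic data; the sizes and the names `H_true` and `H_hat` are assumptions for illustration only.

```python
import numpy as np

rng = np.random.default_rng(0)
n, r, m = 150, 4, 3                      # instances, features, regression tasks

W = rng.standard_normal((n, r))          # known features (synthetic stand-in)
H_true = rng.standard_normal((r, m))     # hypothetical ground-truth weights
Y = W @ H_true + 0.01 * rng.standard_normal((n, m))  # noisy targets

# With W fixed, each column of H is an independent least-squares fit;
# lstsq accepts a 2-D right-hand side and solves all columns together.
H_hat, *_ = np.linalg.lstsq(W, Y, rcond=None)
```

Matrix factorization differs in that W is unknown as well, so both factors have to be estimated from the target matrix alone.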

![… vs PCA: V ~ W H as before (n users × m movies, V[i,j] = user i's rating of movie j). Minimize squared reconstruction error and force the "prototype" users to be orthogonal → PCA. Slide from William Cohen](https://image.slidesharecdn.com/lecture26-mf-220424155810/85/lecture244-mf-pptx-32-320.jpg)
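One way to see the PCA connection is through the truncated SVD, which gives the rank-r factorization with the smallest squared reconstruction error and orthonormal rows in H. The sketch below assumes a fully observed, column-centered matrix (centering is the usual PCA convention, not something stated on the slide) and uses random data as a stand-in for ratings.

```python
import numpy as np

rng = np.random.default_rng(0)
V = rng.standard_normal((6, 5))          # synthetic stand-in for a ratings matrix
Vc = V - V.mean(axis=0)                  # center each column, as PCA usually does

U, s, Vt = np.linalg.svd(Vc, full_matrices=False)
r = 2
W = U[:, :r] * s[:r]                     # n x r: each user's coordinates
H = Vt[:r]                               # r x m: orthonormal "prototype" rows

gram = H @ H.T                           # close to the r x r identity
recon_err = np.linalg.norm(Vc - W @ H) ** 2   # equals the sum of discarded s**2
```

Unlike the SGD approach, this requires every entry of the matrix to be observed, which is rarely the case for ratings data.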

![Recovering latent factors in a matrix: V (n users × m movies), with V[i,j] = user i's rating of movie j, is approximated by W (n × r, rows x1 y1 … xn yn) times H (r × m, rows a1 … am and b1 … bm). Slide from William Cohen](https://image.slidesharecdn.com/lecture26-mf-220424155810/85/lecture244-mf-pptx-35-320.jpg)