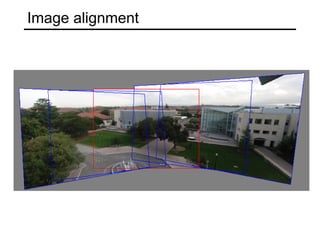

- Image alignment is used for tasks such as panorama stitching and object recognition. It means estimating the geometric transformation (for example a translation, an affine map, or a homography) that maps one image onto the other.

- There are two main approaches. Direct (pixel-based) alignment searches for the transformation under which the pixels of the two images agree. Feature-based alignment searches for the transformation under which extracted features agree; its result can then be verified with pixel-based alignment.
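To make the direct approach concrete, here is a minimal sketch that assumes the transformation is a pure integer translation and that "pixel agreement" is measured by the mean squared difference over the overlapping region. Both choices, and the names `mean_sq_diff` and `align_translation`, are illustrative assumptions rather than anything these notes prescribe.

```python
def mean_sq_diff(a, b, dx, dy):
    """Mean squared difference between a[y][x] and b[y + dy][x + dx],
    computed only over pixels where the shifted position lies inside b."""
    total, count = 0.0, 0
    for y in range(len(a)):
        for x in range(len(a[0])):
            yy, xx = y + dy, x + dx
            if 0 <= yy < len(b) and 0 <= xx < len(b[0]):
                diff = a[y][x] - b[yy][xx]
                total += diff * diff
                count += 1
    # No overlap at all: treat as the worst possible score.
    return total / count if count else float("inf")


def align_translation(a, b, max_shift=3):
    """Brute-force search over integer shifts in [-max_shift, max_shift].

    Returns (dx, dy, error) for the shift with the lowest error, where
    pixel (x, y) of image a is compared with pixel (x + dx, y + dy) of b.
    Images are lists of rows of grayscale values.
    """
    best = None
    for dy in range(-max_shift, max_shift + 1):
        for dx in range(-max_shift, max_shift + 1):
            err = mean_sq_diff(a, b, dx, dy)
            if best is None or err < best[0]:
                best = (err, dx, dy)
    return best[1], best[2], best[0]
```

Exhaustive search like this is only practical for small search ranges and simple transformations, which is one reason feature-based alignment is attractive when the motion between images is large.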

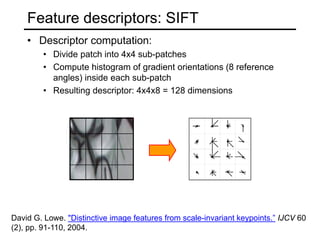

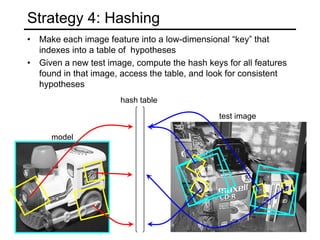

- Feature-based alignment first extracts features from both images, then computes putative matches between them, and finally loops over two steps: hypothesize a transformation from a small group of matches, then verify it against the other matches.
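The hypothesize-and-verify loop can be sketched as follows. This is one common realization, a RANSAC-style loop; the notes above do not name a specific algorithm, so treat that choice as an assumption. The sketch also assumes a 2D similarity transform (rotation, uniform scale, translation), written in complex form as `z -> a*z + b`, and assumes the putative matches are already given as pairs of `(x, y)` points. All function names and the `tol` and `iters` parameters are hypothetical.

```python
import random


def fit_similarity(m1, m2):
    """Hypothesize: similarity z -> a*z + b from two matches ((x, y), (x2, y2))."""
    (p1, q1), (p2, q2) = m1, m2
    zp1, zp2 = complex(*p1), complex(*p2)
    zq1, zq2 = complex(*q1), complex(*q2)
    if zp1 == zp2:
        return None  # degenerate sample: both source points coincide
    a = (zq1 - zq2) / (zp1 - zp2)  # rotation and scale
    b = zq1 - a * zp1              # translation
    return a, b


def find_inliers(model, matches, tol):
    """Verify: keep matches whose mapped source point lands within tol of the target."""
    a, b = model
    return [(p, q) for p, q in matches
            if abs(a * complex(*p) + b - complex(*q)) <= tol]


def ransac_similarity(matches, iters=200, tol=2.0, seed=0):
    """Repeat hypothesize and verify; return the model with the most inliers."""
    rng = random.Random(seed)
    best_model, best_inliers = None, []
    for _ in range(iters):
        m1, m2 = rng.sample(matches, 2)  # minimal group: two matches
        model = fit_similarity(m1, m2)
        if model is None:
            continue
        inliers = find_inliers(model, matches, tol)
        if len(inliers) > len(best_inliers):
            best_model, best_inliers = model, inliers
    return best_model, best_inliers
```

Sampling the smallest group that determines the transformation (two matches for a similarity) keeps the chance high that a sample contains no wrong matches. A richer model such as a homography would need a larger minimal group and a different fitting step.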