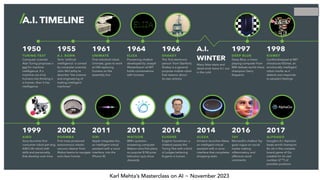

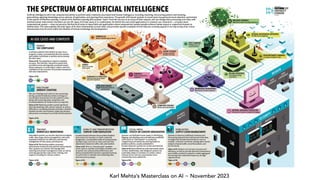

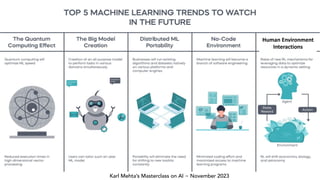

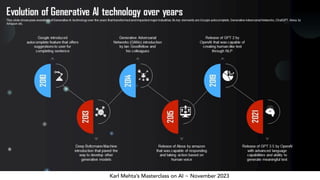

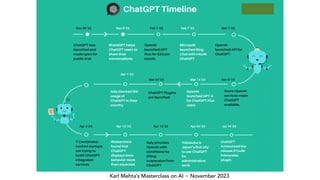

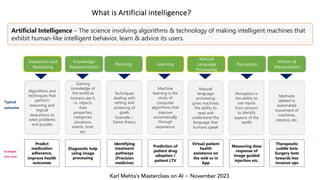

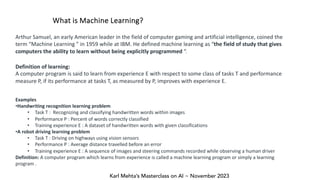

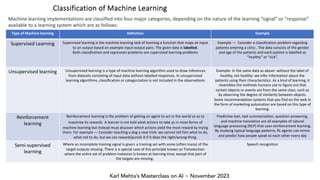

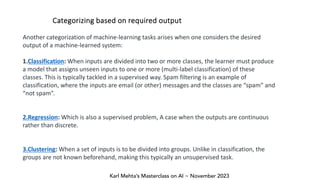

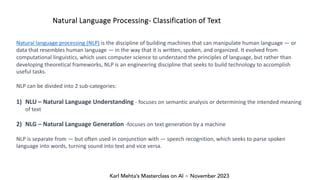

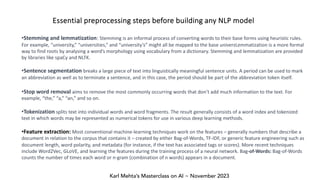

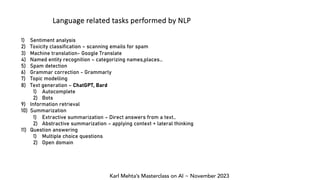

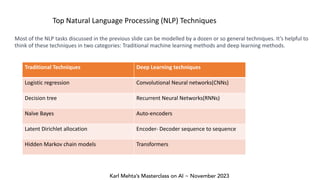

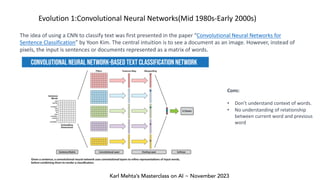

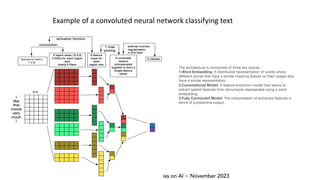

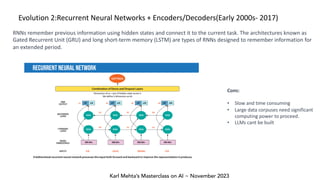

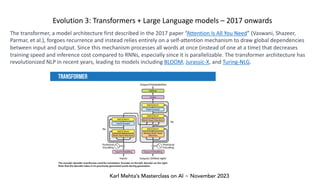

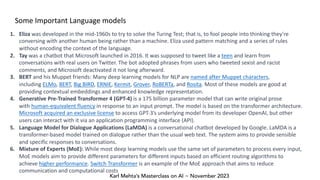

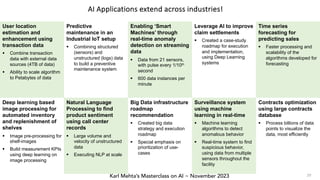

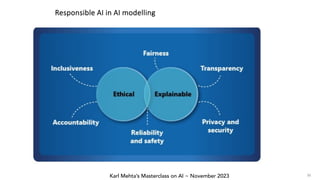

Karl Mehta's masterclass on AI, presented at the Slush’d AI conference in November 2023, covers core concepts in artificial intelligence: machine learning, natural language processing (NLP), and neural networks. He walks through the main categories of machine learning (supervised, unsupervised, and reinforcement learning) along with essential NLP tasks and techniques. The masterclass also traces the evolution of neural networks, emphasizing the impact of transformer architectures and large language models on natural language processing.