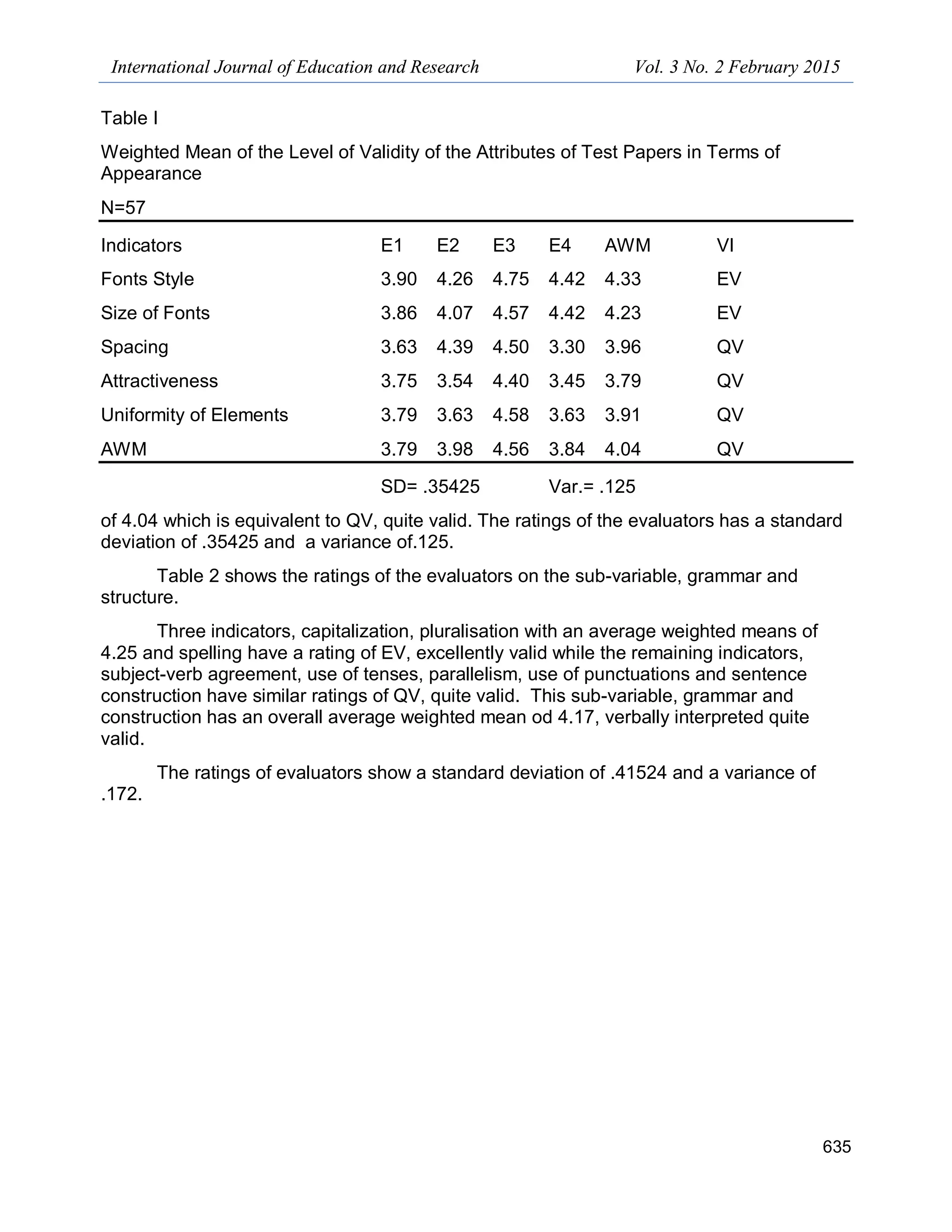

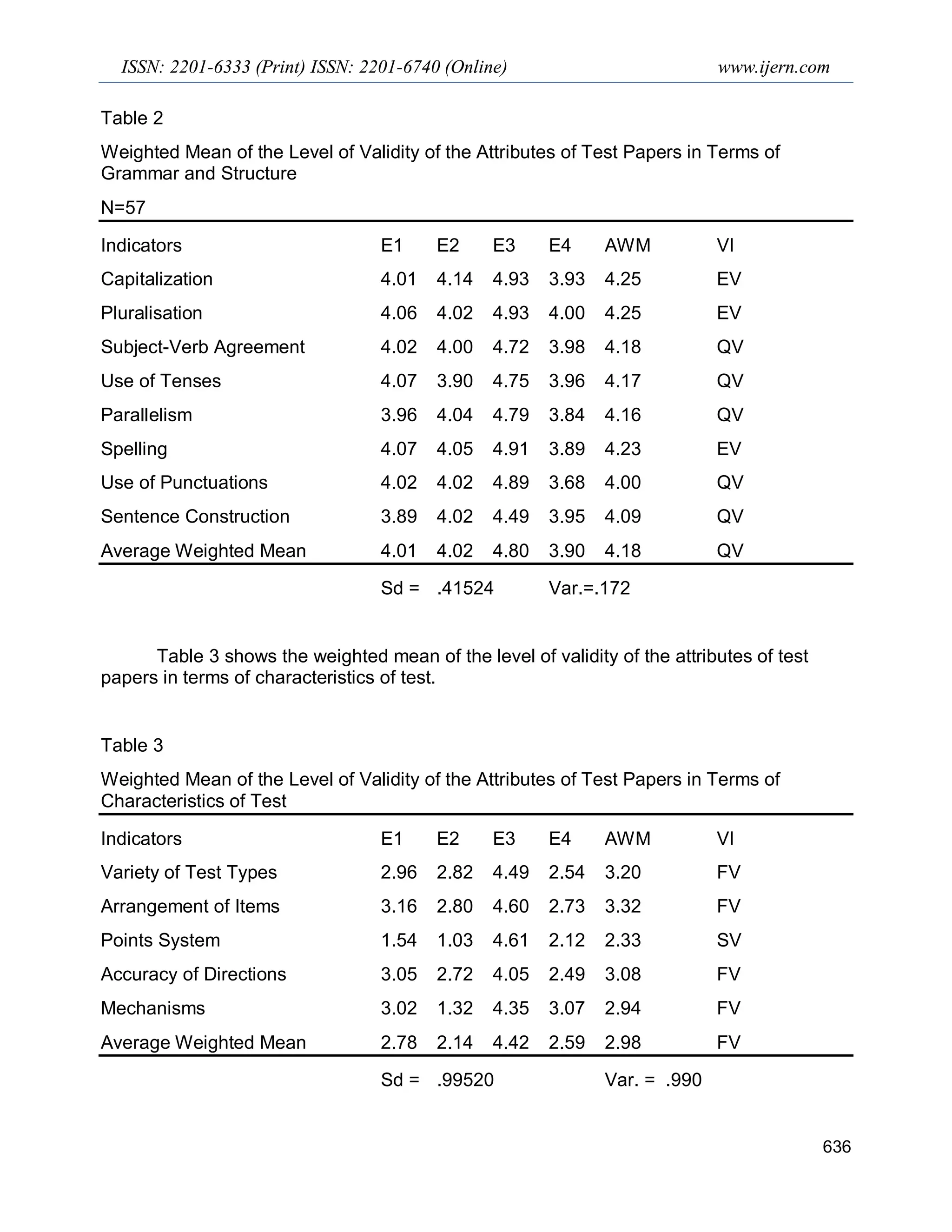

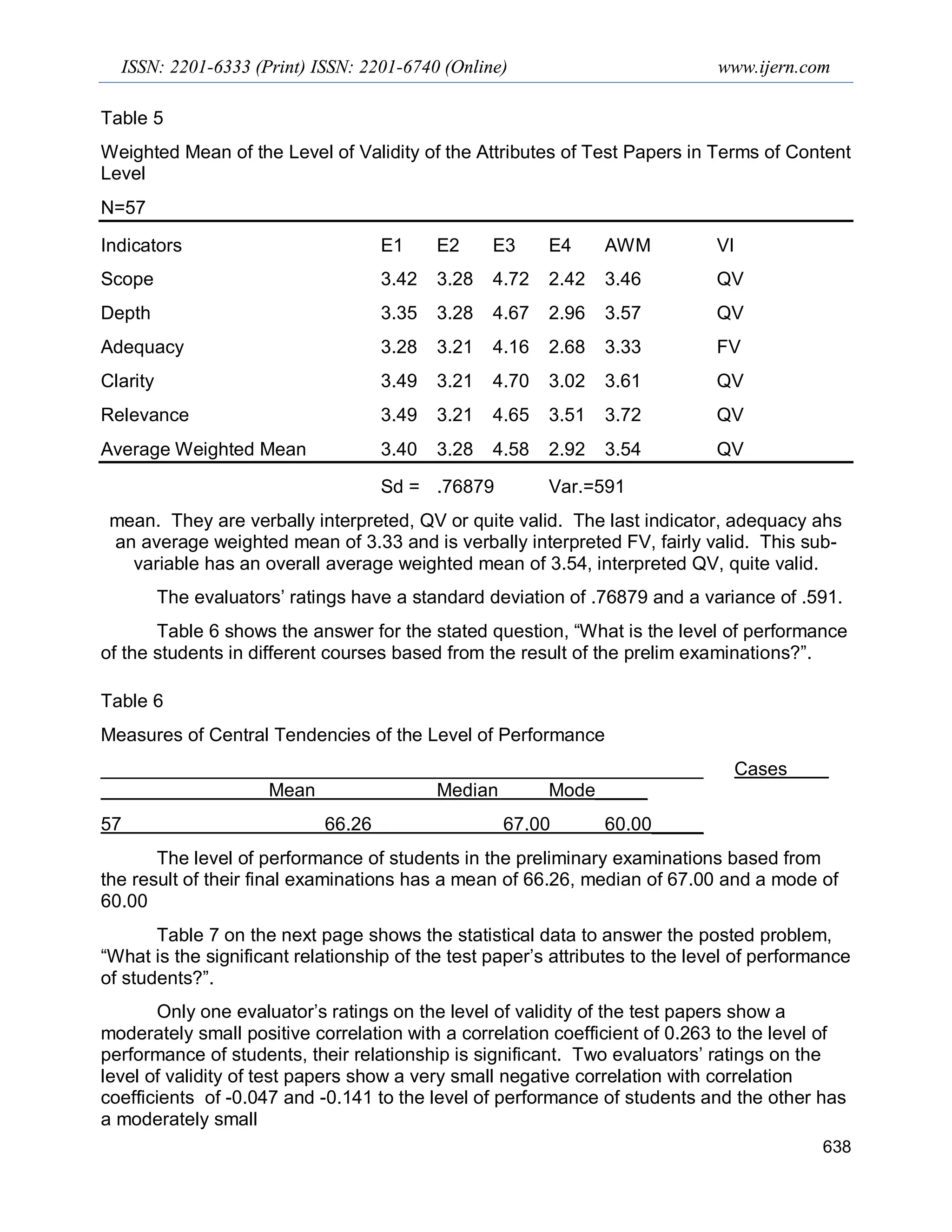

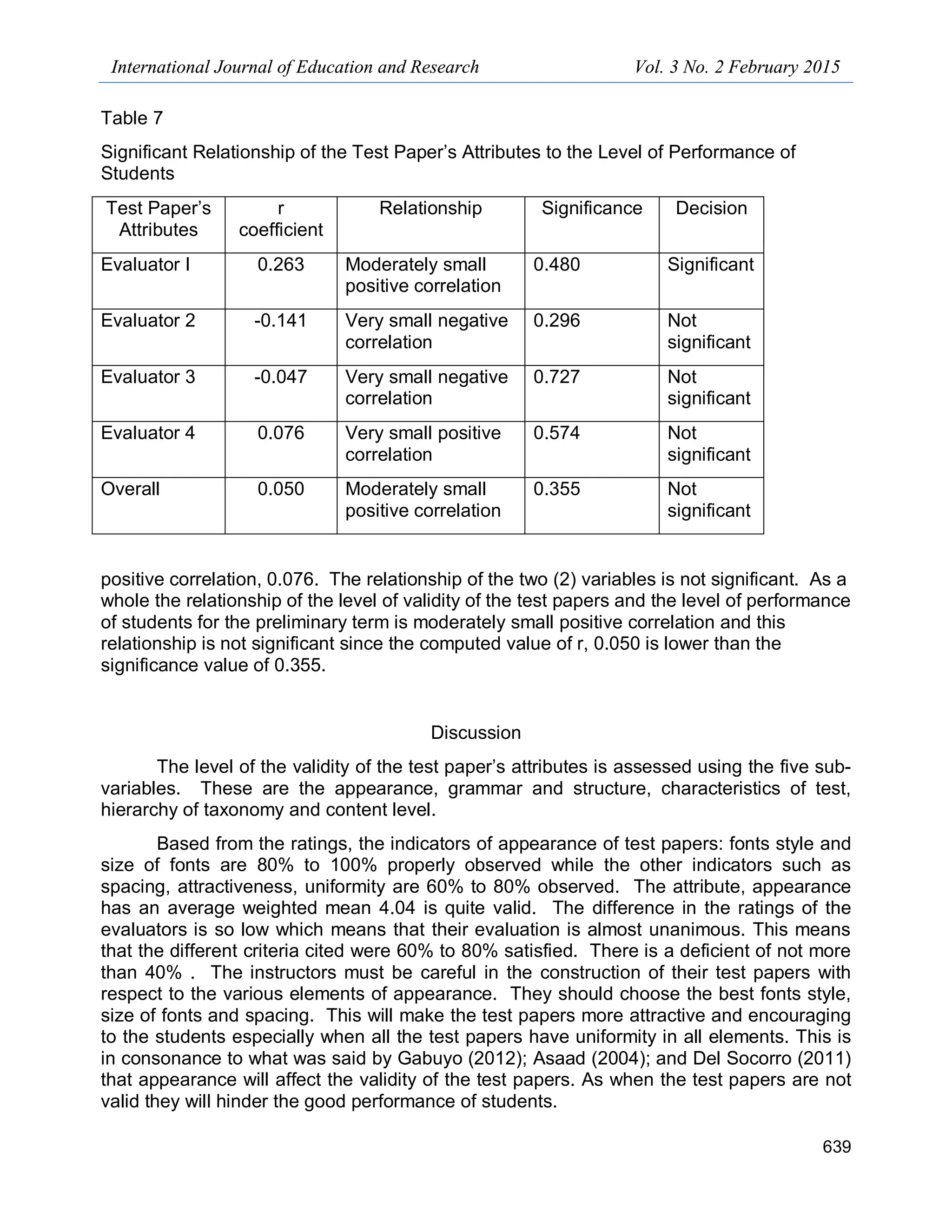

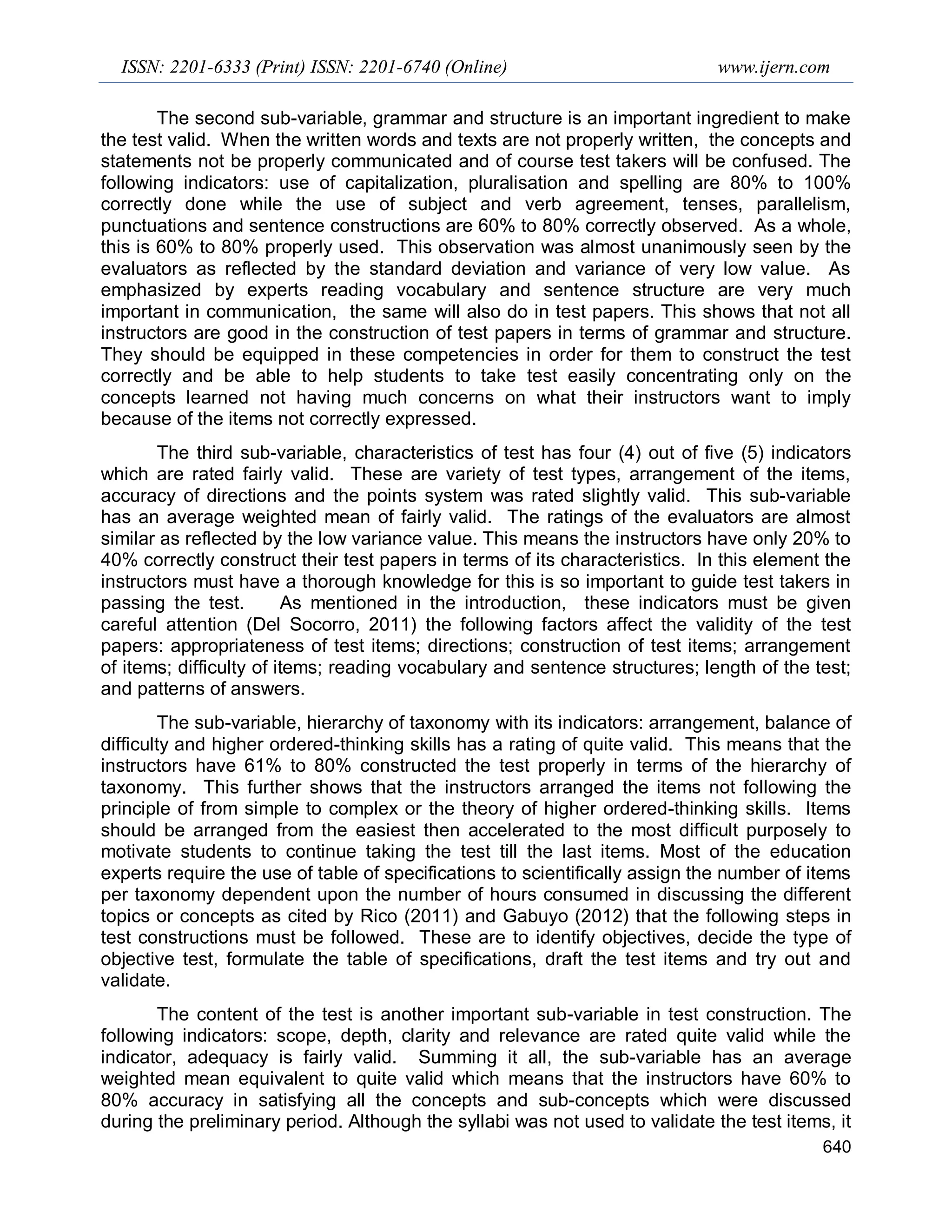

The document reports a study that validated test papers used at Saint Paul School of Business and Law and related their validity to student performance. Experts analyzed 50% of the test papers from the previous term using a checklist. The validity of the test papers showed a small-to-moderate positive correlation with student performance. Based on these results, guidelines for standardized test construction were formulated to improve the quality of assessment at the institution; they set different requirements for theory-based and skill-based subjects. The study aims to establish best practices and standards for test development and administration at the school.
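
To make the correlation finding concrete, the sketch below shows one way a relationship between test-paper validity and student performance could be quantified. The summary does not say which coefficient the study used, so Pearson's r is an assumption here. The function name `pearson_r` and both data lists are hypothetical and exist only for illustration; they are not the study's data or results.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Return Pearson's correlation coefficient for two equal-length samples."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two samples of equal length with at least 2 values")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sum of cross-products of deviations from the means.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    # Sums of squared deviations for each sample.
    ss_x = sum((x - mean_x) ** 2 for x in xs)
    ss_y = sum((y - mean_y) ** 2 for y in ys)
    if ss_x == 0 or ss_y == 0:
        raise ValueError("correlation is undefined when a sample has no variance")
    return cov / sqrt(ss_x * ss_y)


# Hypothetical data: one expert validity rating per test paper (checklist score)
# and the mean student score on that same paper. Not taken from the study.
validity_ratings = [3.2, 4.1, 2.8, 3.9, 4.5, 3.0]
mean_student_scores = [71.0, 78.5, 74.0, 69.5, 82.0, 76.0]

r = pearson_r(validity_ratings, mean_student_scores)
print(f"Pearson r = {r:.2f}")
```

A value of r near 0 indicates little linear relationship and a value near 1 a strong positive one. If the checklist ratings are ordinal rather than interval-scaled, a rank-based coefficient such as Spearman's rho might be the more appropriate choice.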