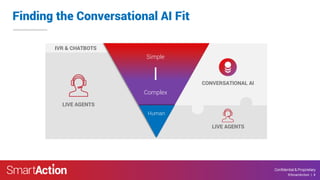

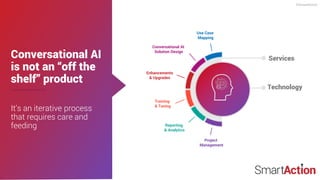

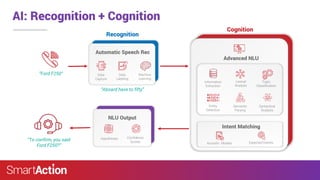

SmartAction provides AI-powered virtual agents for omnichannel self-service, aiming to simplify customer interactions across industries. Its solutions automate customer service while keeping a personalized touch, using natural language processing and machine learning to improve outcomes. The company anticipates that by 2021, 15% of all customer service interactions will be handled entirely by AI.