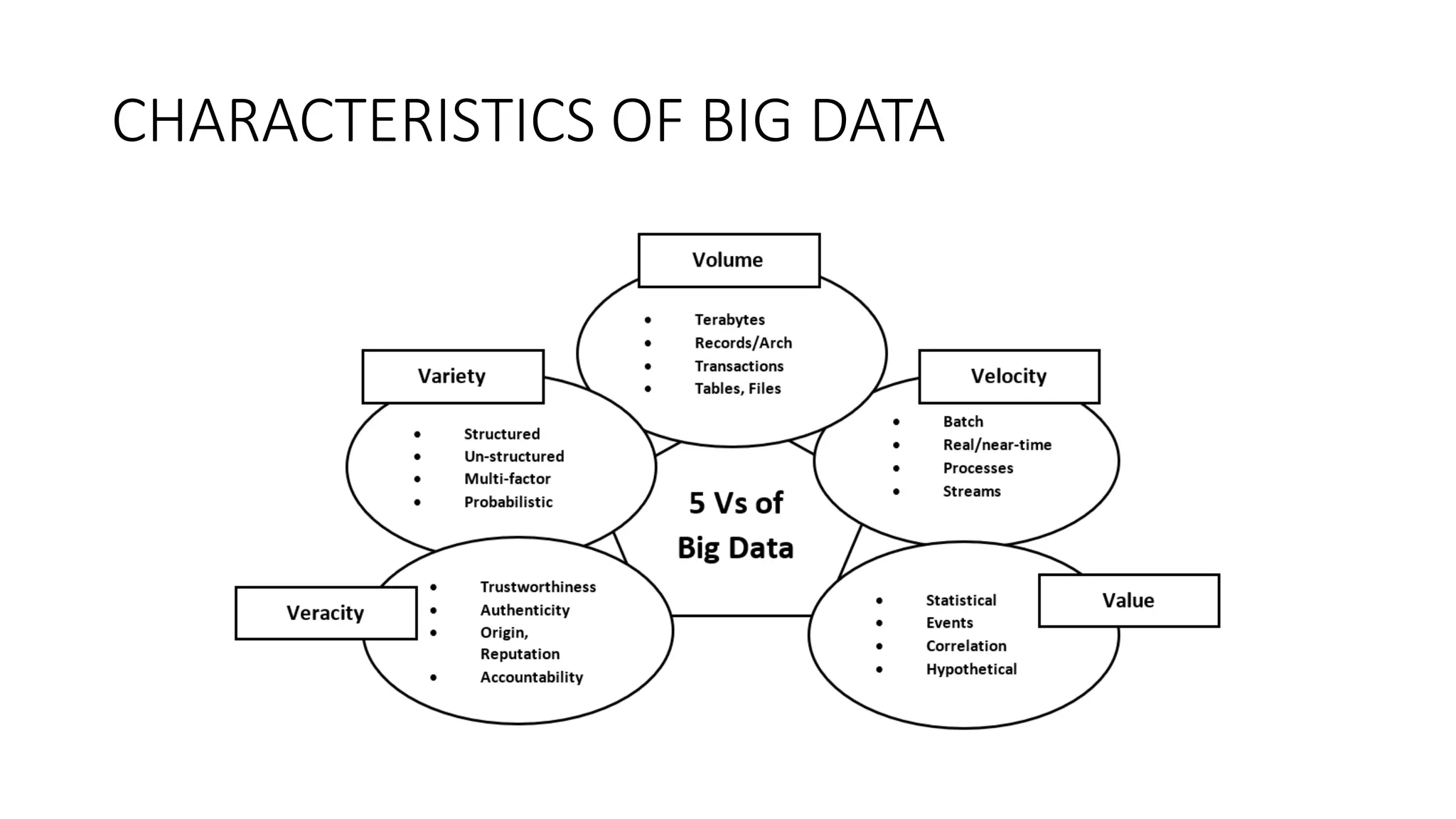

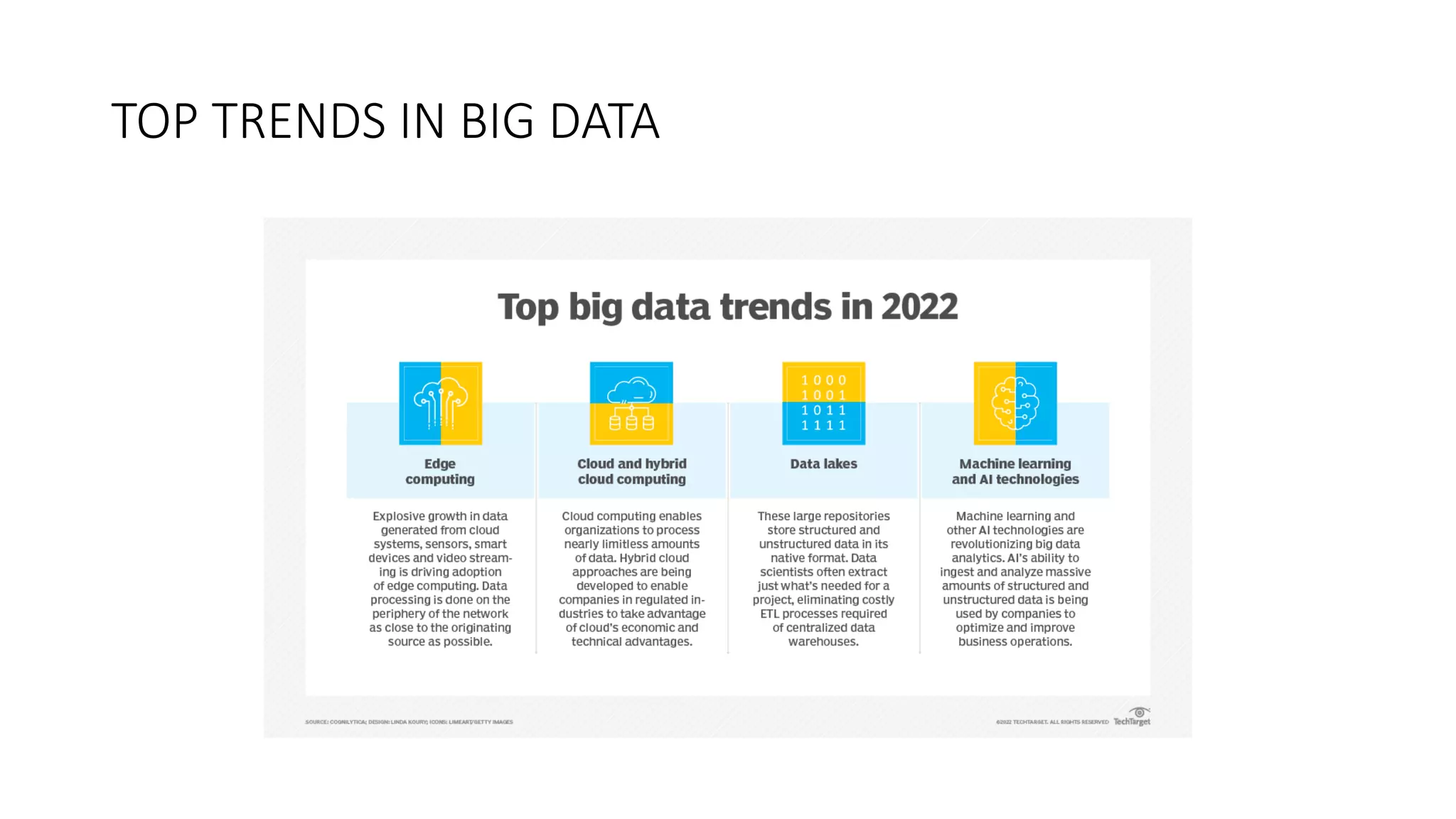

The document gives an overview of big data: its definition, significance, types, characteristics, sources, and analytics. It describes big data as massive datasets that traditional tools struggle to manage, and uses examples from sectors such as finance and healthcare to illustrate its growth. It also covers the tools used to manage big data and the current trends shaping its evolution.