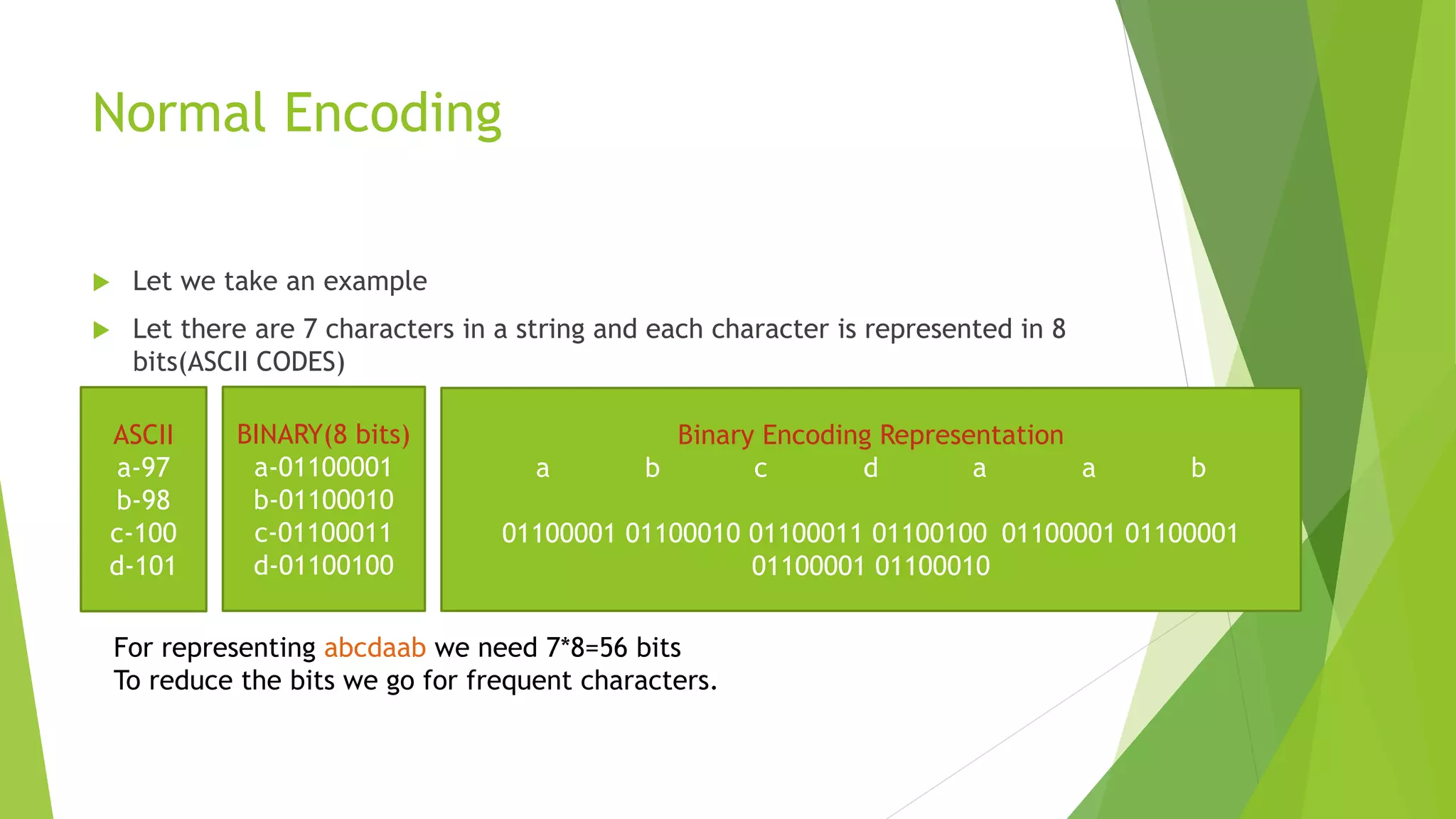

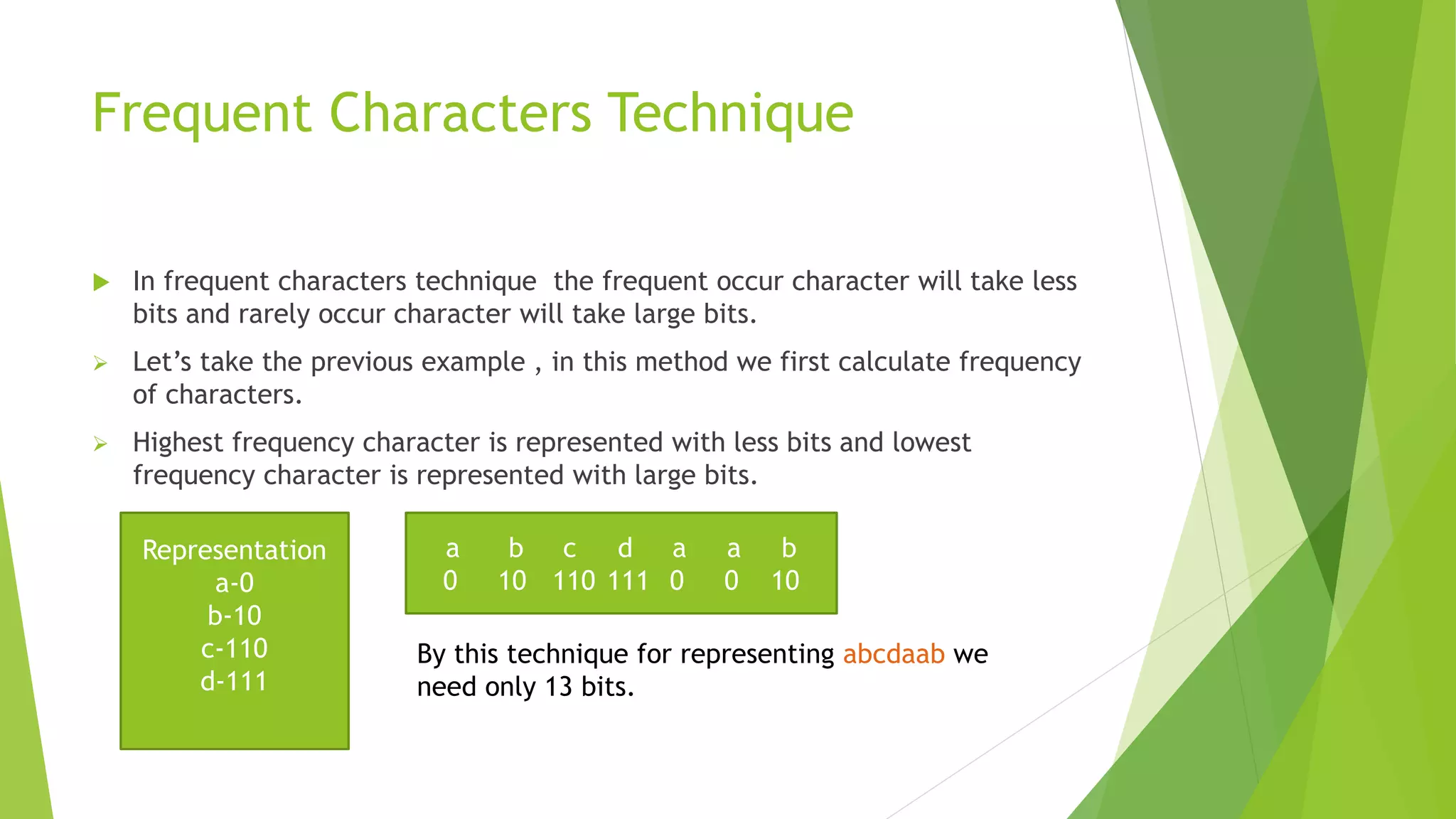

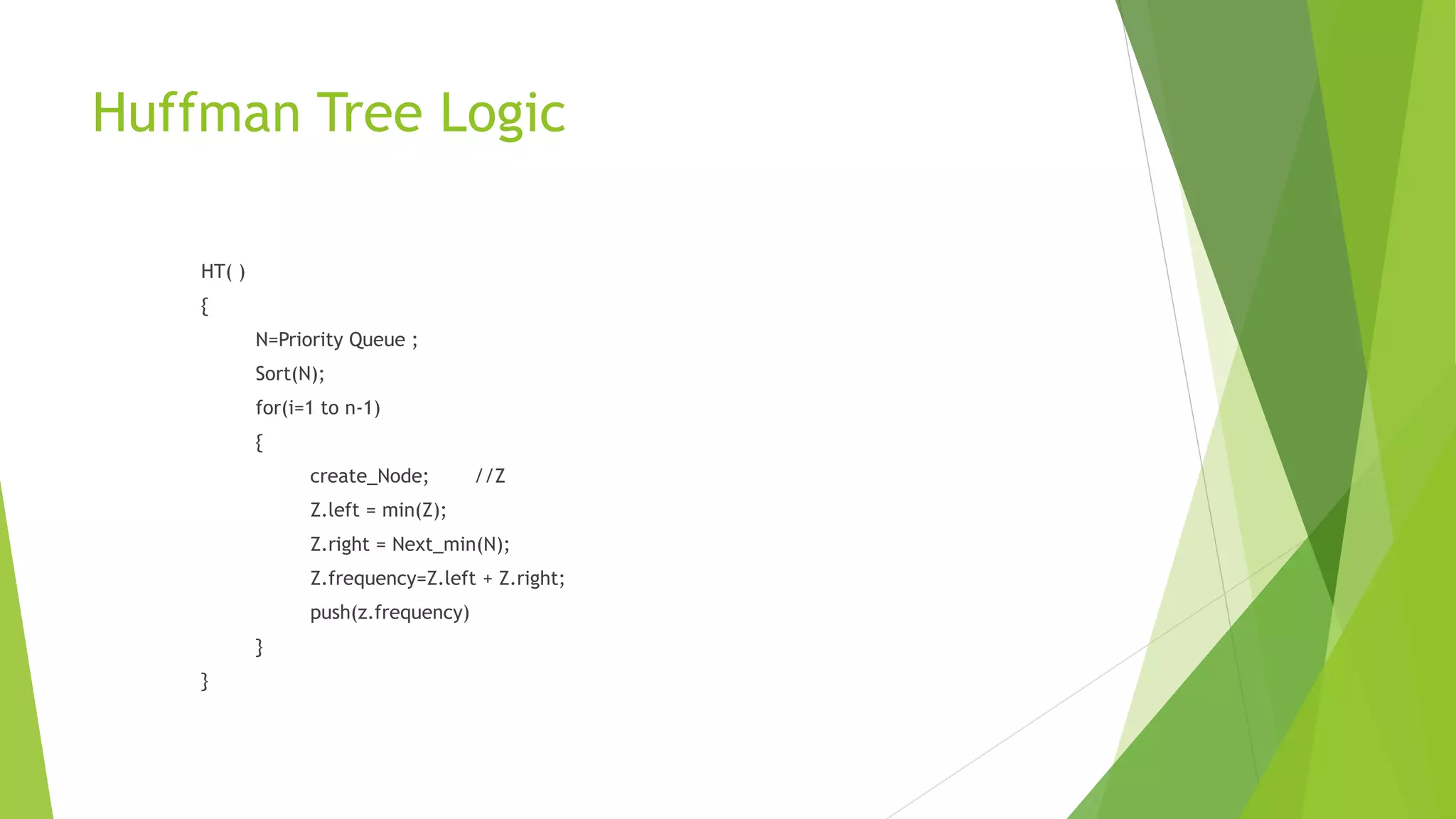

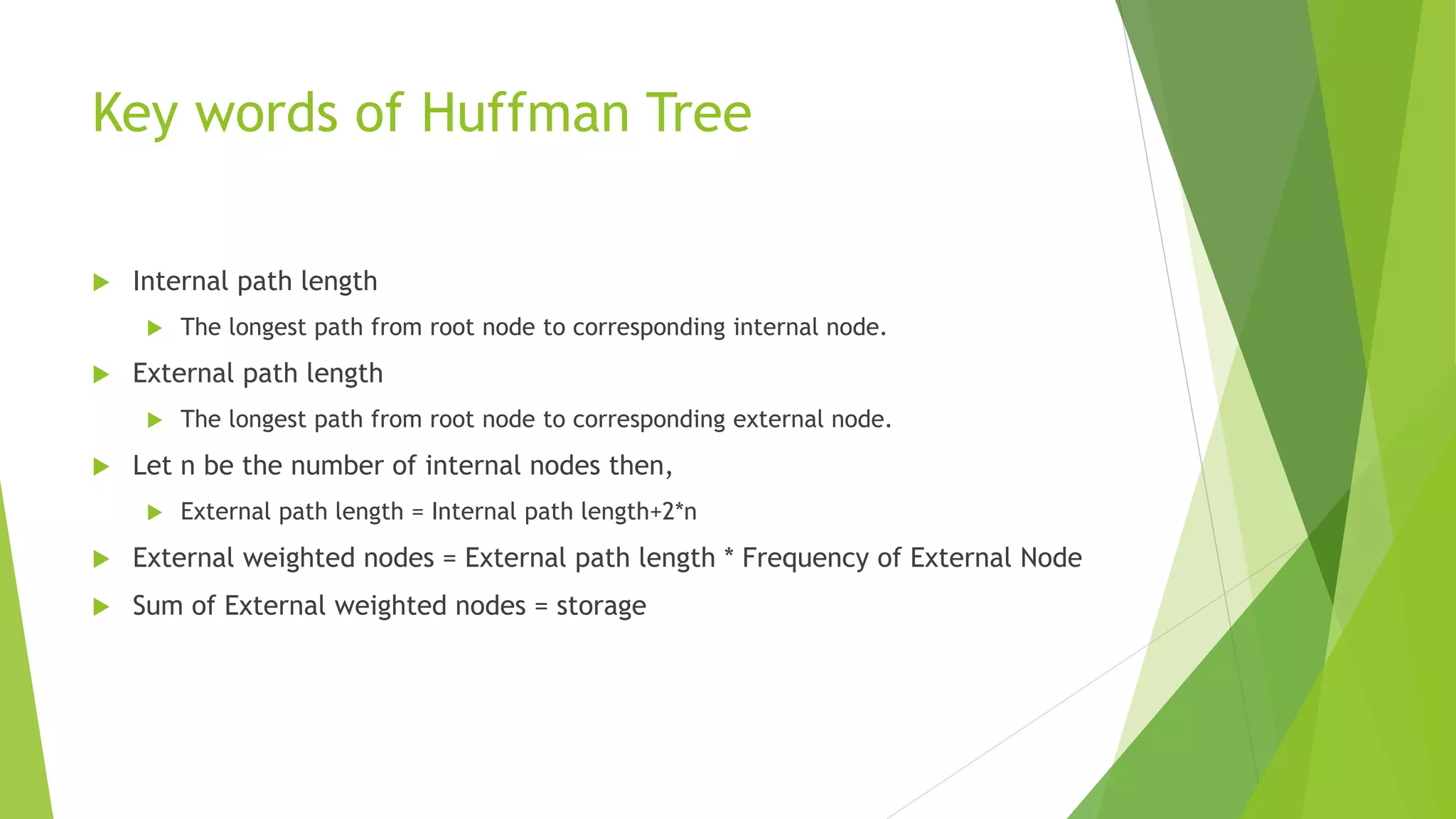

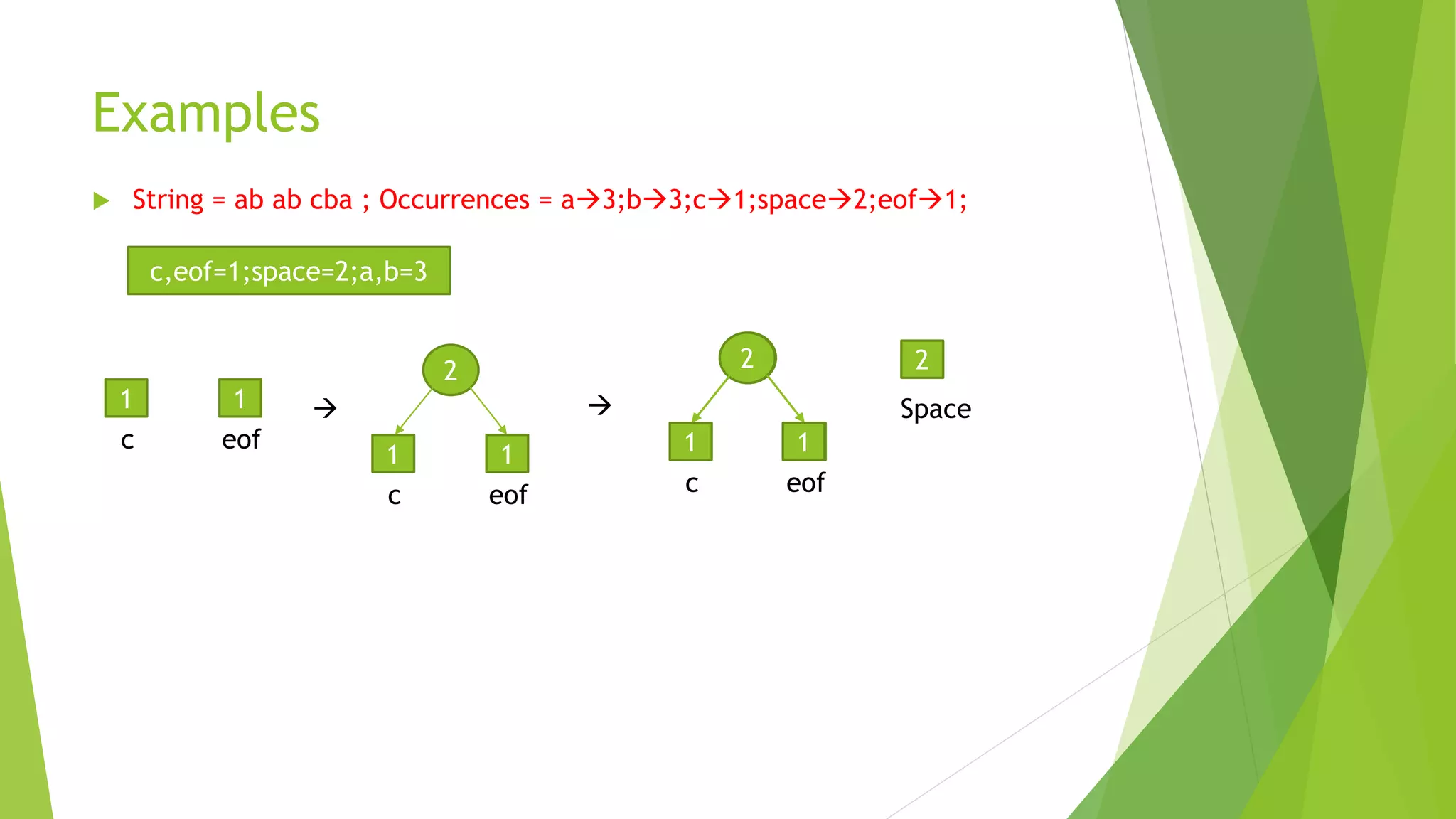

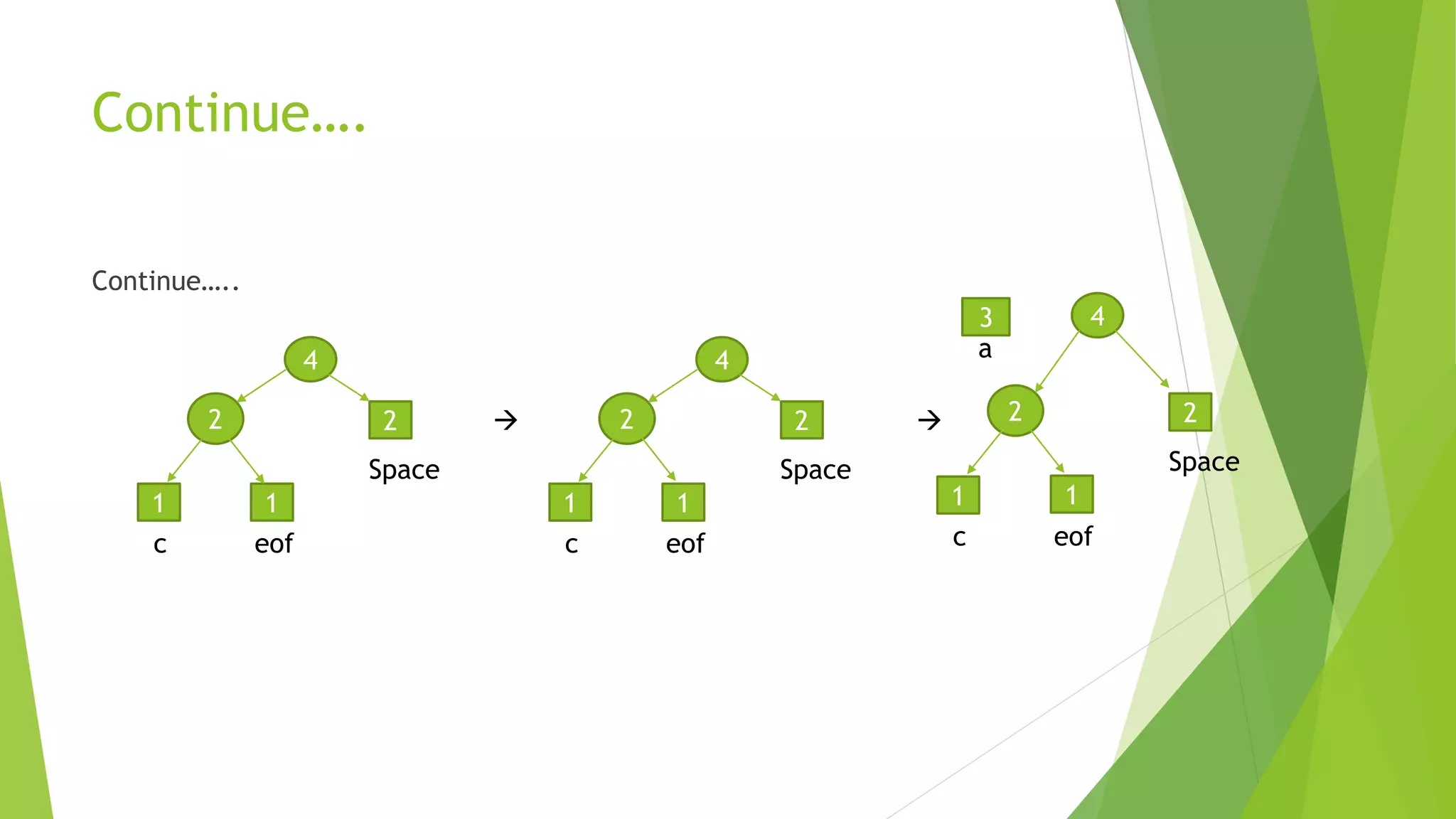

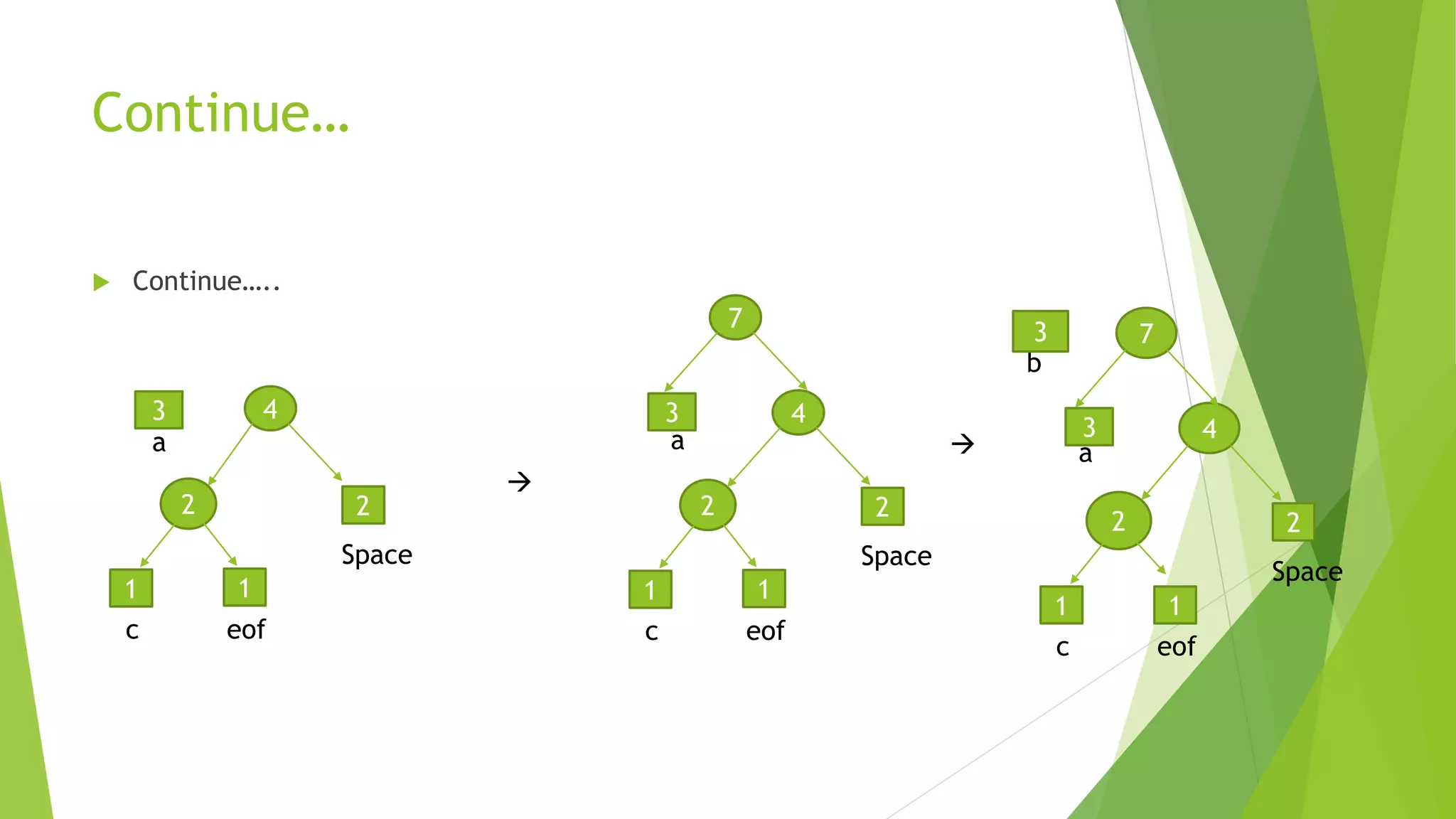

The document discusses Huffman coding, a lossless data compression technique that assigns variable-length binary codes to symbols according to how often they occur: the more frequent a symbol, the shorter its code. It details how a Huffman tree is constructed from character frequencies, so that the most frequent characters sit closest to the root and receive the shortest codes. Examples demonstrate how characters are encoded using a Huffman tree and how the storage size is calculated from each character's path length (its code length in bits) and its frequency.
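
The tree construction and storage-size calculation summarized above can be illustrated with a minimal Python sketch. It is not taken from the document: the function names `huffman_codes` and `encoded_size_bits`, the sample string, and the tie-breaking order are assumptions made for illustration. It uses the standard approach of repeatedly merging the two least frequent nodes with a priority queue.

```python
import heapq
from collections import Counter


def huffman_codes(text):
    """Return a dict mapping each character in text to a Huffman bit string."""
    freq = Counter(text)
    if not freq:
        return {}
    # Heap entries are (frequency, tie_breaker, tree). A tree is either a
    # single character (leaf) or a (left, right) tuple (internal node).
    # The unique tie_breaker keeps comparisons from ever reaching the tree.
    heap = [(f, i, ch) for i, (ch, f) in enumerate(sorted(freq.items()))]
    heapq.heapify(heap)
    if len(heap) == 1:
        # Only one distinct symbol: give it a 1-bit code (an assumed convention).
        return {heap[0][2]: "0"}
    next_id = len(heap)
    while len(heap) > 1:
        # Merge the two least frequent trees into one internal node.
        f1, _, left = heapq.heappop(heap)
        f2, _, right = heapq.heappop(heap)
        heapq.heappush(heap, (f1 + f2, next_id, (left, right)))
        next_id += 1

    codes = {}

    def walk(node, prefix):
        # Left edges append "0", right edges append "1".
        if isinstance(node, tuple):
            walk(node[0], prefix + "0")
            walk(node[1], prefix + "1")
        else:
            codes[node] = prefix

    walk(heap[0][2], "")
    return codes


def encoded_size_bits(text, codes):
    """Storage size = sum over characters of frequency * code length."""
    freq = Counter(text)
    return sum(freq[ch] * len(codes[ch]) for ch in freq)


sample = "aaaabbc"  # hypothetical input: a=4, b=2, c=1
codes = huffman_codes(sample)
size = encoded_size_bits(sample, codes)
print(codes, size)
```

For this sample, `a` (the most frequent character) gets a 1-bit code and `b` and `c` get 2-bit codes, so the encoded size is 4·1 + 2·2 + 1·2 = 10 bits, compared with 56 bits at a fixed 8 bits per character. The exact bit patterns depend on how ties are broken, but the total size for an optimal Huffman code does not.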