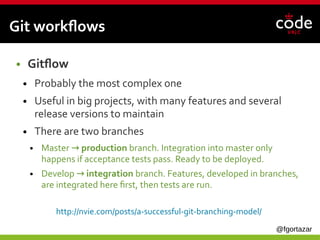

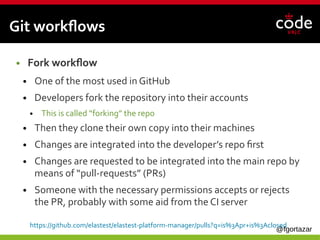

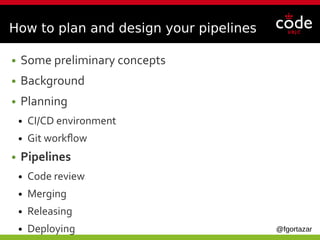

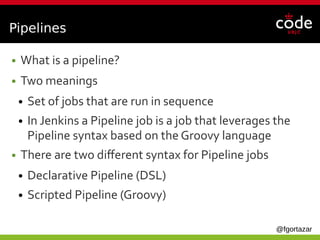

The document outlines the fundamentals of planning and defining CI/CD pipelines, emphasizing continuous integration, code review, and structured git workflows. It covers planning considerations for CI/CD environments, compares git workflow models, and explains how to implement pipelines with merge and release jobs. It also stresses the need for good log management and suggests using shared libraries to keep CI/CD processes efficient.

![@fgortazar

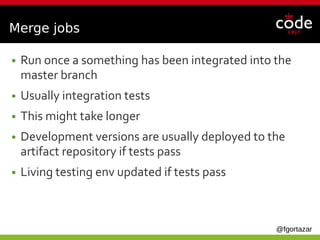

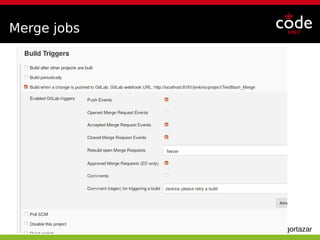

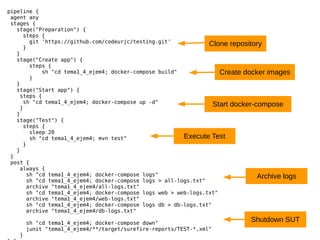

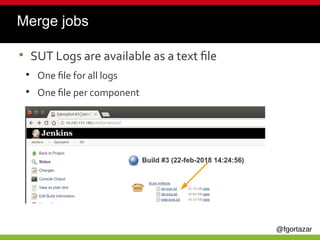

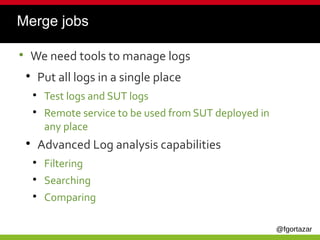

Merge jobs

node{

elastest(tss: ['EUS']) {

stage("Preparation") {

git "https://github.com/elastest/full-teaching-experiment.git"

}

stage("Start SUT") {

sh "cd docker-compose/full-teaching-env; docker-compose up -d"

sh "sleep 20"

}

stage("Test") {

def sutIp = containerIp("fullteachingenv_full-teaching_1")

try {

sh "mvn -Dapp.url=https://" + sutIp +":5001/ test"

} finally {

sh "cd docker-compose/full-teaching-env; docker-compose down"

}

}

}

}

Use ElasTest Plugin with Browsers

Start SUT

Get web container IP

Teardown SUT

Execute Tests](https://image.slidesharecdn.com/howtoplananddefineyourci-cdpipeline-190409080958/85/How-to-plan-and-define-your-CI-CD-pipeline-43-320.jpg)
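The fixed `sleep 20` in the Start SUT stage can be too short on a slow agent and needlessly long on a fast one. A minimal sketch of one alternative, assuming `curl` is installed on the agent and the SUT answers HTTPS requests on port 5001 at its root path (neither is stated on the slide): poll with the standard `waitUntil` and `timeout` pipeline steps until the application responds. `containerIp` is the helper used on the slide; its definition is not shown there.

```groovy
stage("Start SUT") {
    sh "cd docker-compose/full-teaching-env; docker-compose up -d"

    // Same helper as on the slide; assumed to be defined elsewhere in the Jenkinsfile.
    def sutIp = containerIp("fullteachingenv_full-teaching_1")

    // Abort the build if the SUT is still unreachable after 2 minutes
    // (the limit is an illustrative value, not taken from the slides).
    timeout(time: 2, unit: 'MINUTES') {
        // waitUntil re-runs the closure until it returns true.
        waitUntil {
            // -k: accept self-signed certificates, -s: silent, -f: non-zero exit on HTTP errors.
            def status = sh(
                script: "curl -ksf -o /dev/null https://${sutIp}:5001/",
                returnStatus: true
            )
            return status == 0
        }
    }
}
```

This stage would replace the original Start SUT stage inside the `elastest` block; the Test stage can stay as it is.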

![@fgortazar

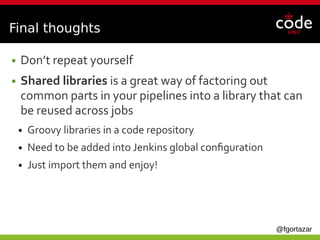

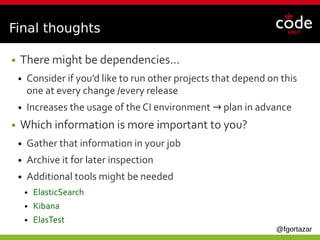

Final thoughts

● Jobs can get really big…

● Separate them into different jobs

● Add a new job to orchestrate these new jobs

● Jobs can be called from other jobs

● Better visibility

stage('Update ci environments') {

steps {

build job: 'Development/run_ansible',

propagate: false

build job: 'Development/kurento_ci_build',

propagate: false,

parameters: [string(name: 'GERRIT_REFSPEC', value: 'master')]

}

}](https://image.slidesharecdn.com/howtoplananddefineyourci-cdpipeline-190409080958/85/How-to-plan-and-define-your-CI-CD-pipeline-51-320.jpg)
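With `propagate: false`, a failing downstream job does not fail the orchestrating job, so its outcome has to be checked explicitly if it matters. A hedged sketch of how that might look: the `build` step returns an object whose `result` property holds the downstream status. Marking the orchestrator as unstable is an illustrative policy chosen for this example, not something the slides prescribe.

```groovy
stage('Update ci environments') {
    steps {
        // Variables and conditionals need a script block in a declarative pipeline.
        script {
            def ansibleRun = build(job: 'Development/run_ansible', propagate: false)
            echo "run_ansible finished with status ${ansibleRun.result}"

            // Illustrative policy: flag the orchestrator instead of failing it outright.
            if (ansibleRun.result != 'SUCCESS') {
                unstable(message: "run_ansible ended with ${ansibleRun.result}")
            }
        }
    }
}
```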

![@fgortazar

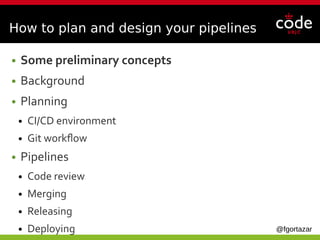

stage('Testing new environment') {

steps {

parallel (

"kurento_api_audit" : {

build job: 'Development/kurento_api_audit', propagate: false,

parameters: [string(name: 'GERRIT_REFSPEC', value: 'master')]

},

"kurento_app_audit" : {

build job: 'Development/kurento_app_audit', propagate: false,

parameters: [string(name: 'GERRIT_REFSPEC', value: 'master')]

},

"capability_functional_audit" : {

build job: 'Development/capability_functional_audit', propagate: false,

parameters: [string(name: 'GERRIT_REFSPEC', value: 'master')]

},

"capability_stability_audit" : {

build job: 'Development/capability_stability_audit', propagate: false,

parameters: [string(name: 'GERRIT_REFSPEC', value: 'master')]

},

"webrtc_audit" : {

build job: 'Development/webrtc_audit', propagate: false,

parameters: [string(name: 'GERRIT_REFSPEC', value: 'master')]

}

)

}

}

Final thoughts

● They can be run in parallel](https://image.slidesharecdn.com/howtoplananddefineyourci-cdpipeline-190409080958/85/How-to-plan-and-define-your-CI-CD-pipeline-52-320.jpg)
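The five parallel branches differ only in the job name, so the branch map can also be generated from a list. The following is a sketch of that idea rather than the author's pipeline; the variable names `auditJobs` and `branches` are made up for the example, while the job names and parameters are the ones from the slide.

```groovy
stage('Testing new environment') {
    steps {
        script {
            def auditJobs = [
                'kurento_api_audit',
                'kurento_app_audit',
                'capability_functional_audit',
                'capability_stability_audit',
                'webrtc_audit'
            ]

            // Map of branch name -> closure, the form the parallel step expects.
            def branches = [:]
            for (name in auditJobs) {
                // Copy into a local variable so each closure captures its own job name.
                def jobName = name
                branches[jobName] = {
                    build job: "Development/${jobName}", propagate: false,
                          parameters: [string(name: 'GERRIT_REFSPEC', value: 'master')]
                }
            }

            parallel branches
        }
    }
}
```

Adding a new audit then means adding one entry to the list instead of copying a whole branch.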