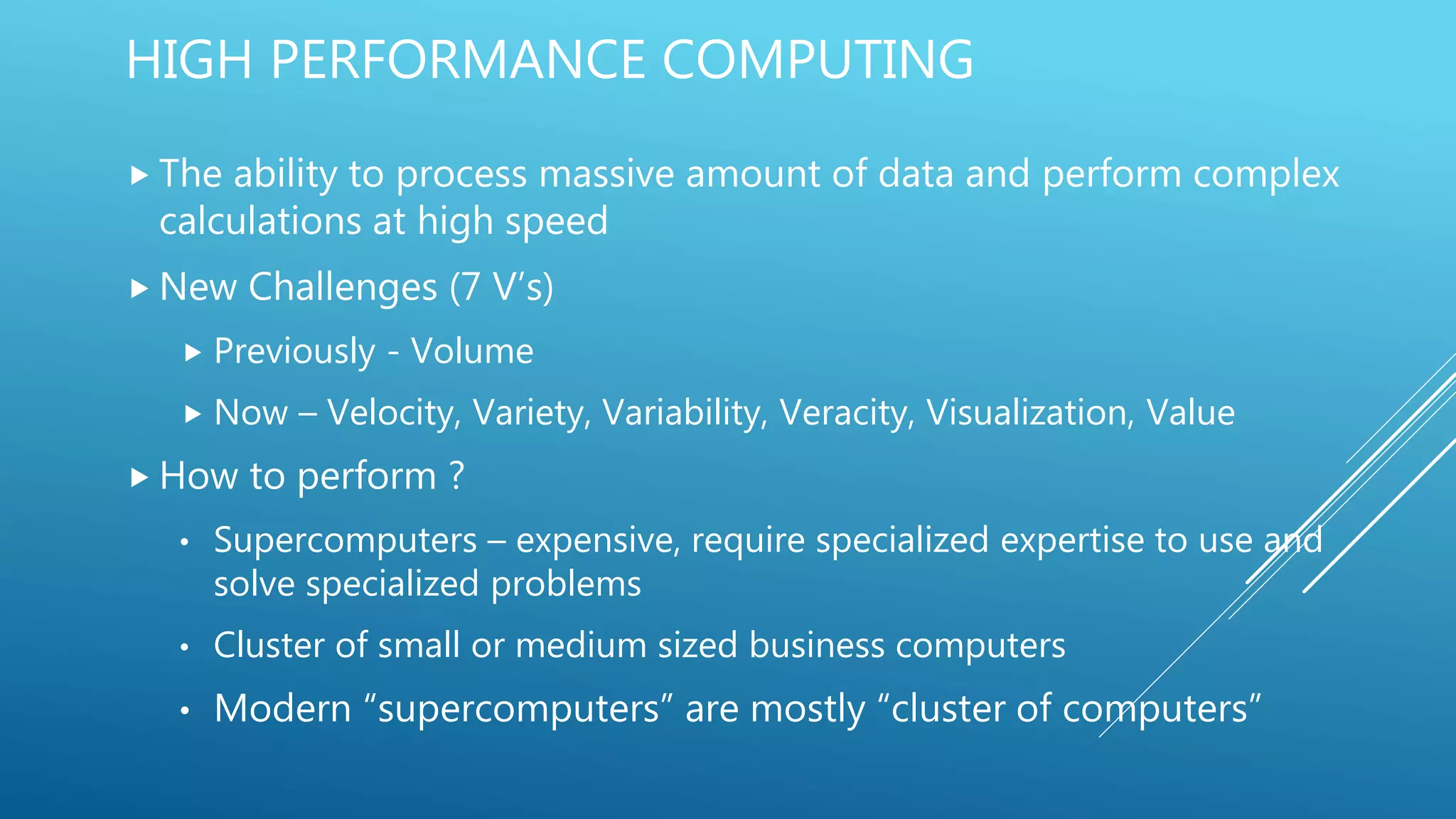

This document describes methods for extracting insights from the New York City Yellow Taxi dataset and explains why high-performance computing matters when handling big data, which is characterized by volume, velocity, and variety. It compares frameworks such as MapReduce, Pig, Hive, and Spark, covering how they operate, how code is written for each, and how they perform on data-processing tasks. It also gives example queries for each framework and notes the challenges each one raises, showing what modern analytics engines can do with very large datasets.