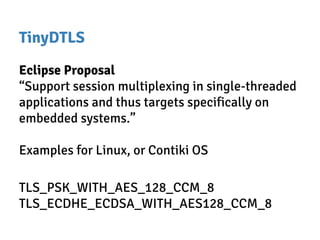

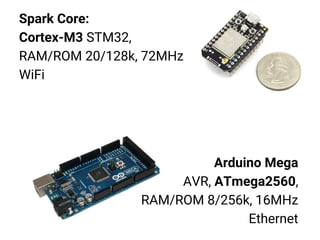

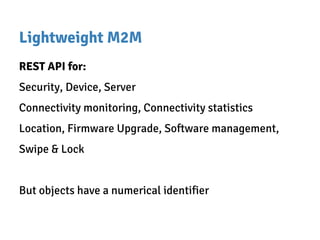

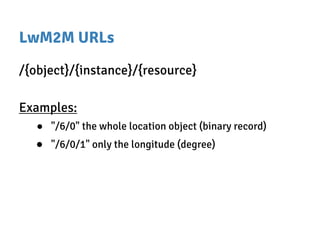

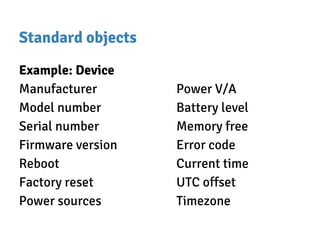

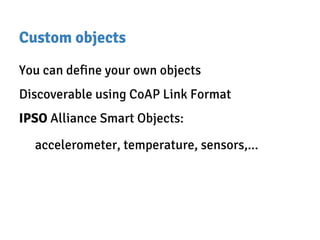

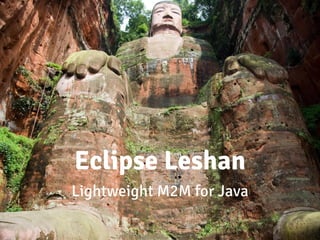

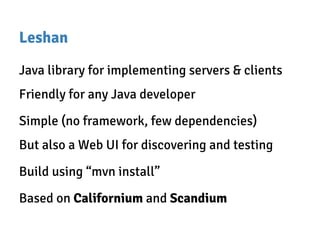

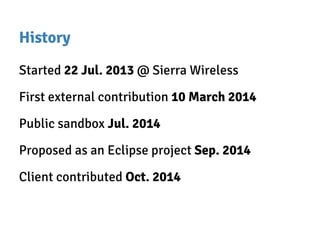

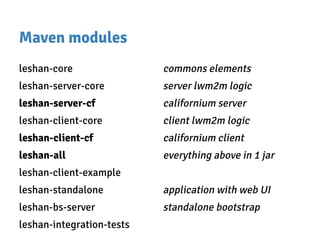

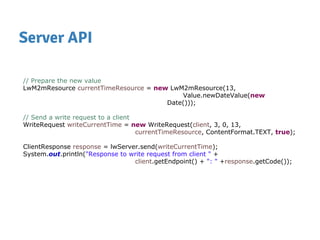

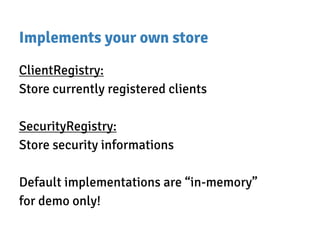

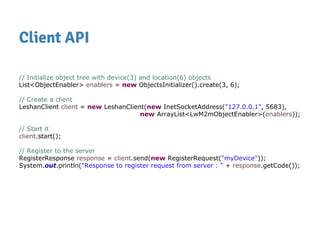

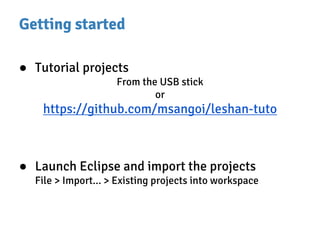

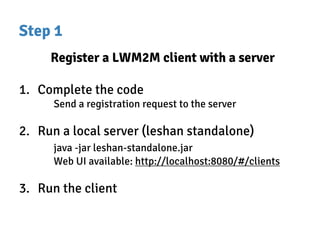

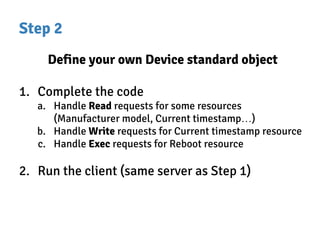

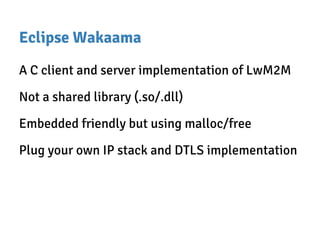

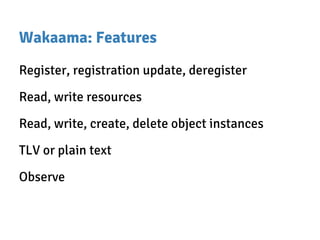

The document discusses Lightweight M2M (LwM2M), a device management protocol for IoT that runs over the Constrained Application Protocol (CoAP) for efficient communication. It details the structure and features of LwM2M, including device monitoring, firmware updates, and the management of objects and their resources, and it provides a hands-on demonstration with a smartwatch. It also introduces Eclipse Leshan, a Java library for implementing LwM2M servers and clients, and outlines tutorial steps for setting up and customizing the protocol in applications.
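To make the idea of "objects and resources" concrete: LwM2M addresses data with numeric paths of the form `/object/instance/resource`. For example, `/3/0/0` is the Manufacturer resource of the Device object (ID 3), and object ID 5 is Firmware Update. The following is a minimal sketch of parsing such a path. The names `example_path_t` and `example_parse_path` are hypothetical and are not part of Wakaama or Leshan. The sketch assumes at most three numeric segments and treats a bare `/` as malformed.

```c
#include <stdlib.h>

/* Hypothetical type for this sketch: the IDs addressed by a path such as
 * "/3/0/0". A value of -1 means the segment was not present. */
typedef struct {
    long object_id;
    long instance_id;
    long resource_id;
} example_path_t;

/* Parse "/object[/instance[/resource]]".
 * Returns 0 on success and -1 on malformed input. */
int example_parse_path(const char *text, example_path_t *out)
{
    long ids[3] = { -1, -1, -1 };
    int count = 0;
    const char *p = text;

    if (text == NULL || out == NULL || text[0] != '/') {
        return -1;
    }

    while (*p == '/') {
        char *end;
        long value;

        p++;                                  /* skip the separator */
        if (count == 3) {
            return -1;                        /* more than three segments */
        }
        if (*p < '0' || *p > '9') {
            return -1;                        /* empty or non-numeric segment */
        }
        value = strtol(p, &end, 10);
        if (value > 65535) {
            return -1;                        /* IDs are 16-bit values */
        }
        ids[count++] = value;
        p = end;
    }

    if (*p != '\0') {
        return -1;                            /* trailing garbage, e.g. "/3x" */
    }

    out->object_id = ids[0];
    out->instance_id = ids[1];
    out->resource_id = ids[2];
    return 0;
}
```

Calling `example_parse_path("/3/0/0", &path)` fills `path` with object 3, instance 0, resource 0, while `"/5"` leaves the instance and resource fields at -1.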

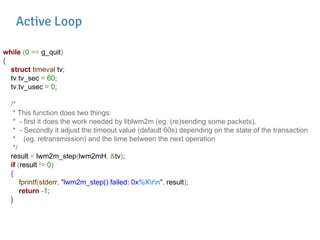

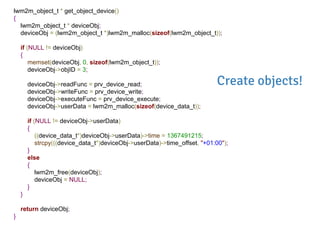

![Configure Wakaama!](https://image.slidesharecdn.com/hands-onwithlightweightm2m-150623115612-lva1-app6891/85/Hands-on-with-lightweight-m2m-and-Eclipse-Leshan-44-320.jpg)

    objArray[0] = get_object_device();
    if (NULL == objArray[0])
    {
        fprintf(stderr, "Failed to create Device object\r\n");
        return -1;
    }

    objArray[1] = get_object_firmware();
    if (NULL == objArray[1])
    {
        fprintf(stderr, "Failed to create Firmware object\r\n");
        return -1;
    }

    objArray[2] = get_test_object();
    if (NULL == objArray[2])
    {
        fprintf(stderr, "Failed to create test object\r\n");
        return -1;
    }

    lwm2mH = lwm2m_init(prv_connect_server, prv_buffer_send, &data);
    if (NULL == lwm2mH)
    {
        fprintf(stderr, "lwm2m_init() failed\r\n");
        return -1;
    }

    result = lwm2m_configure(lwm2mH, "testlwm2mclient", BINDING_U, NULL, OBJ_COUNT, objArray);
    ...
    result = lwm2m_start(lwm2mH);
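
The slide's code repeats the same create-then-check step for each object. As an illustration only, that step can be driven by a table of factory functions. Everything in this sketch is a hypothetical stand-in: `example_object_t` models only an object ID rather than Wakaama's real object type, and the `example_make_*` functions stand in for `get_object_device()` and its siblings. No Wakaama API is called.

```c
#include <stddef.h>
#include <stdio.h>

/* Hypothetical stand-in for an LwM2M object; only the object ID is modelled. */
typedef struct {
    unsigned short id;
} example_object_t;

/* A factory returns a pointer to an object, or NULL on failure. */
typedef example_object_t *(*example_factory_t)(void);

static example_object_t example_device = { 3 };    /* Device object ID */
static example_object_t example_firmware = { 5 };  /* Firmware Update object ID */

/* Hypothetical factories standing in for the slide's get_object_*() calls. */
example_object_t *example_make_device(void) { return &example_device; }
example_object_t *example_make_firmware(void) { return &example_firmware; }
example_object_t *example_make_failing(void) { return NULL; }

/* Call each factory in order and store its result in out[i].
 * Returns 0 if all succeed; on the first failure, reports it on stderr
 * and returns -1, mirroring the early returns on the slide. */
int example_build_objects(const example_factory_t *factories,
                          const char *const *names,
                          size_t count,
                          example_object_t **out)
{
    size_t i;

    for (i = 0; i < count; i++) {
        out[i] = factories[i]();
        if (out[i] == NULL) {
            fprintf(stderr, "Failed to create %s object\r\n", names[i]);
            return -1;
        }
    }
    return 0;
}
```

With this shape, adding another object means adding one table entry instead of another copy of the NULL check. The populated array would then be handed to the configuration call, as on the slide.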