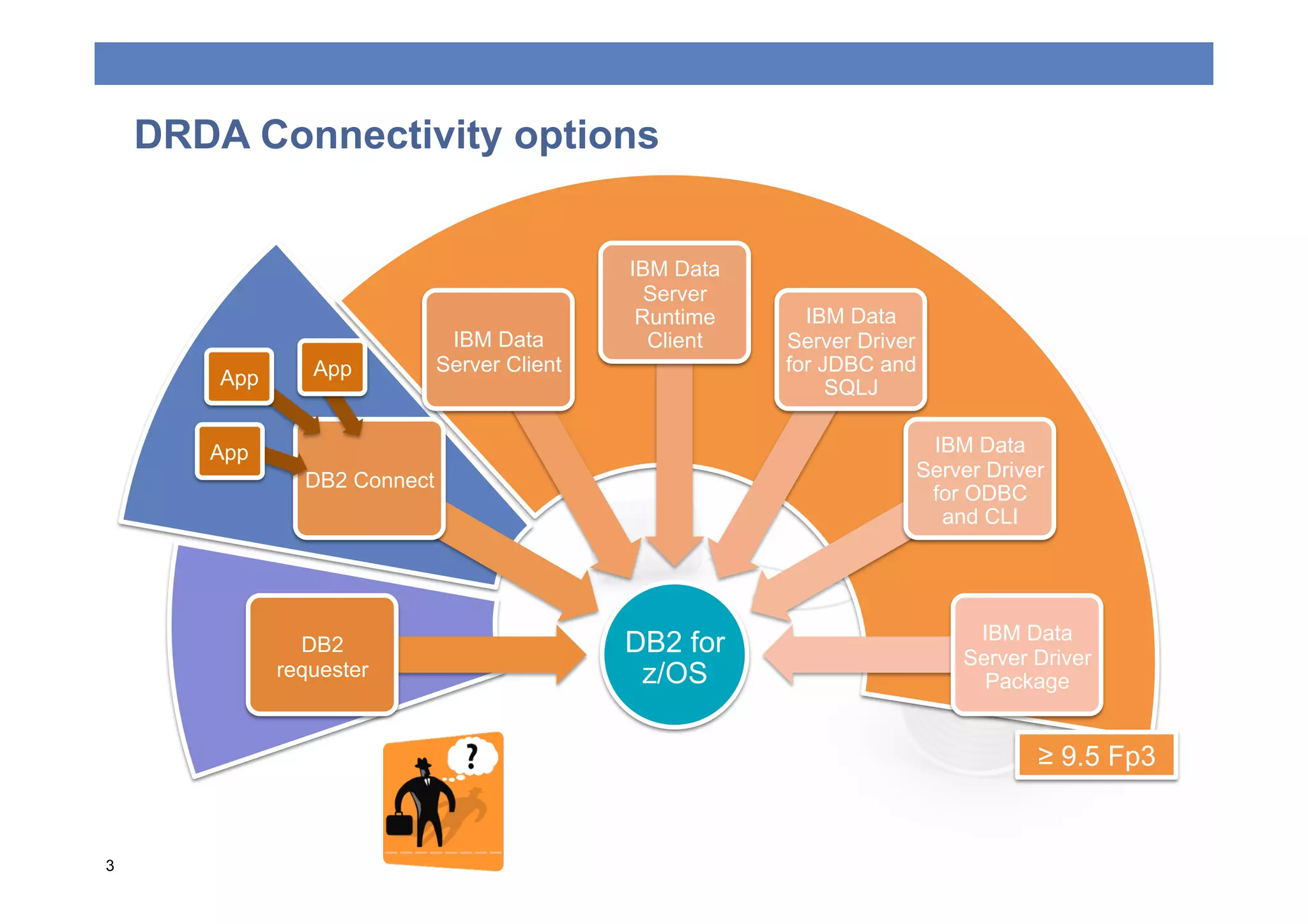

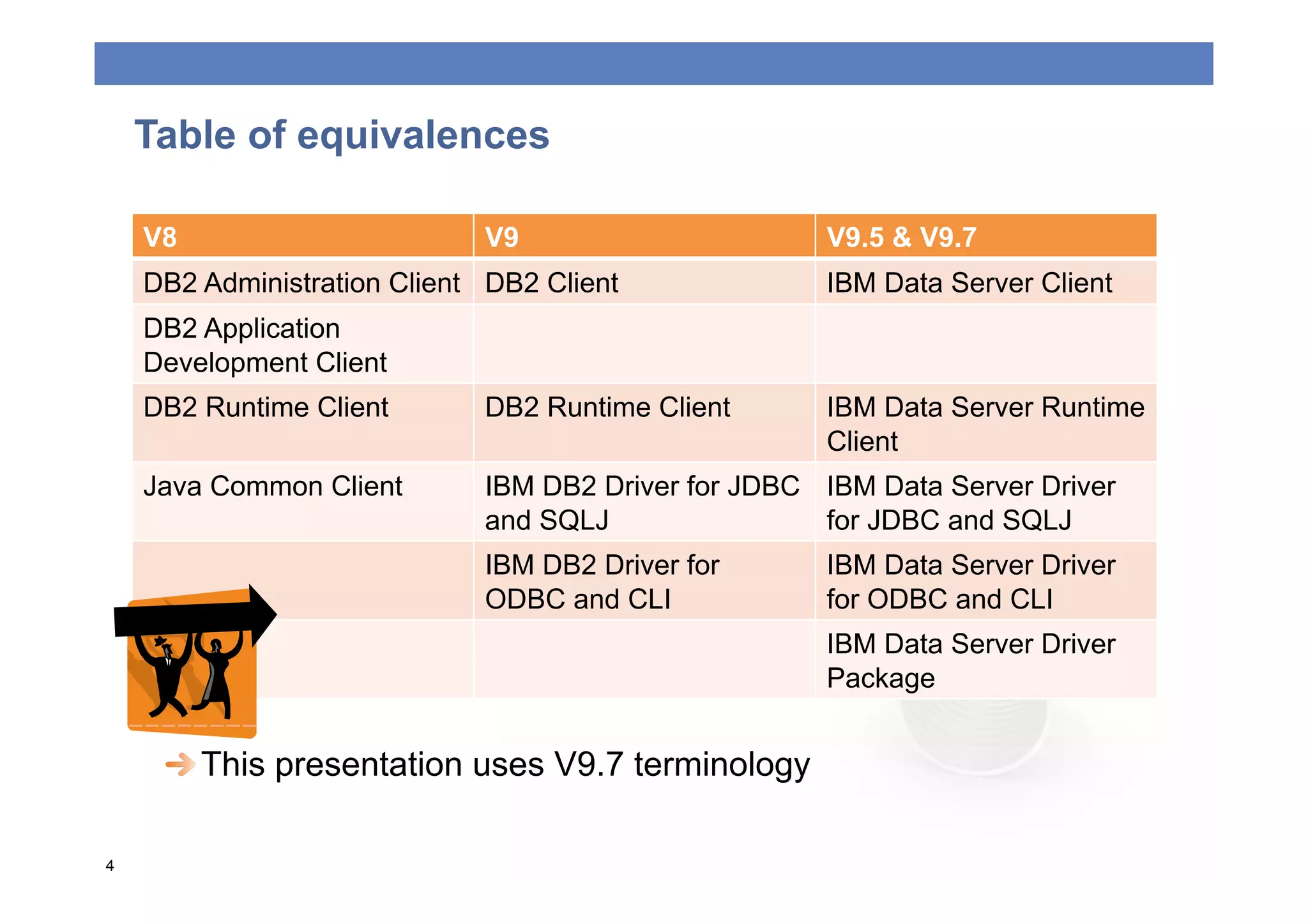

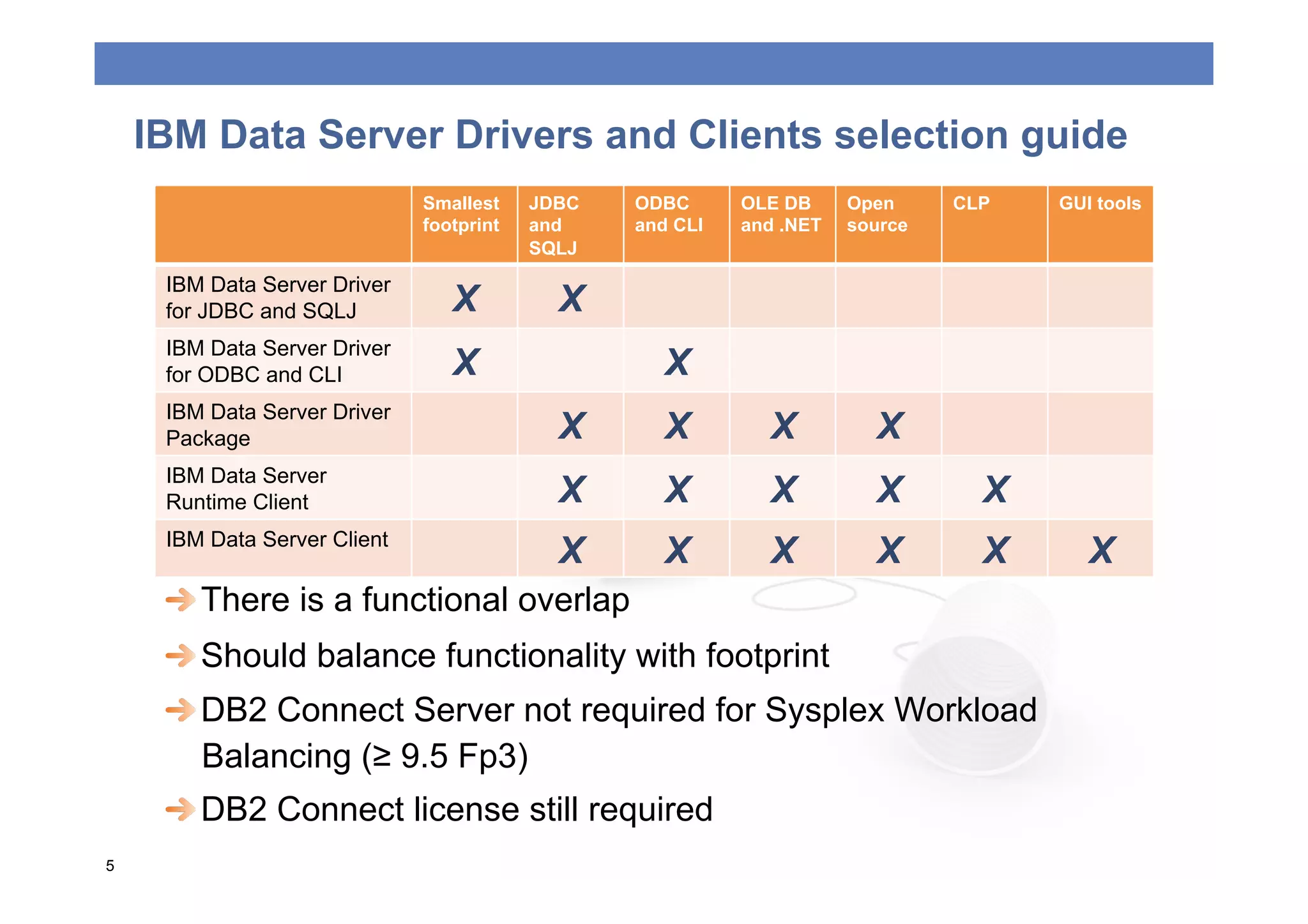

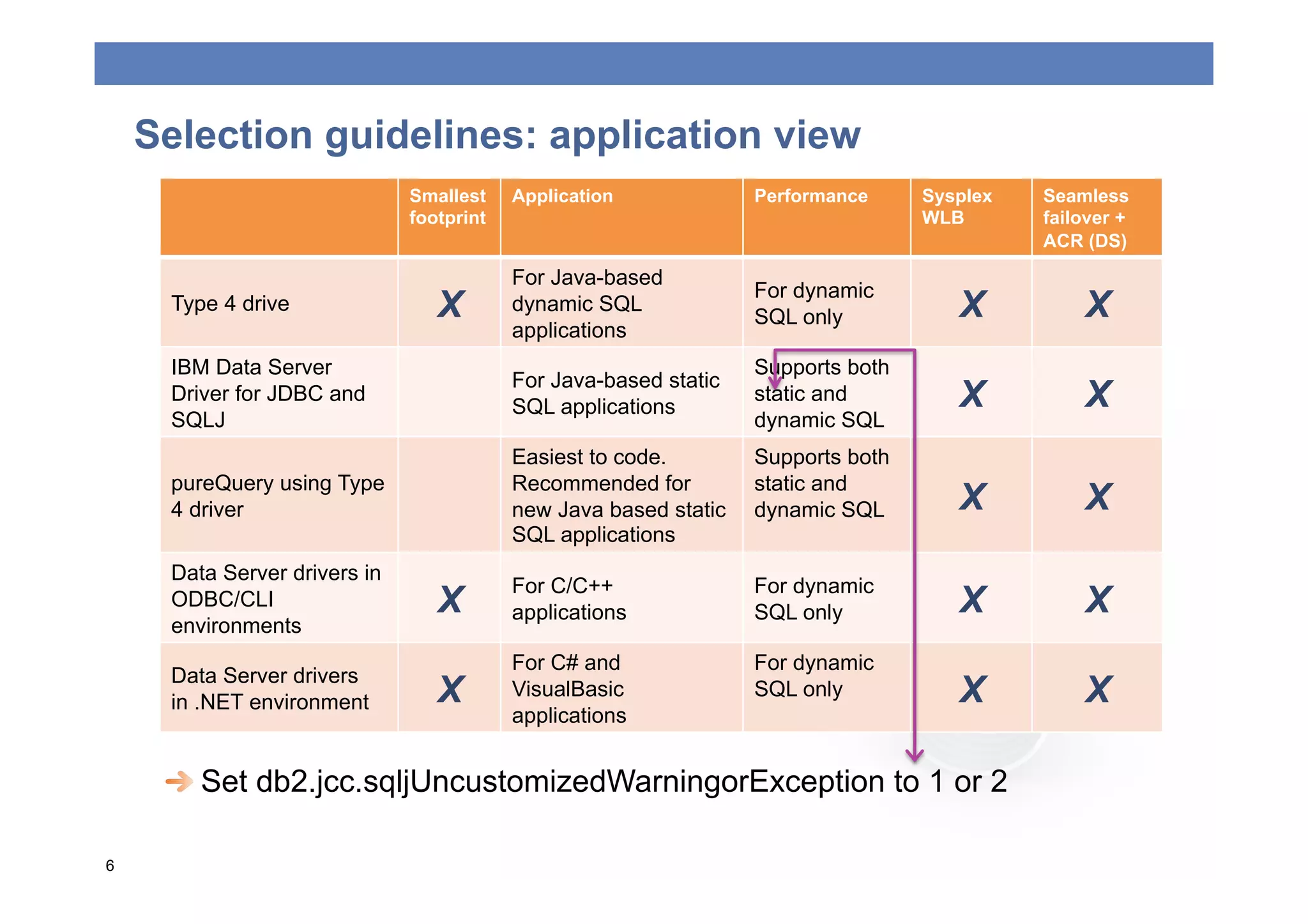

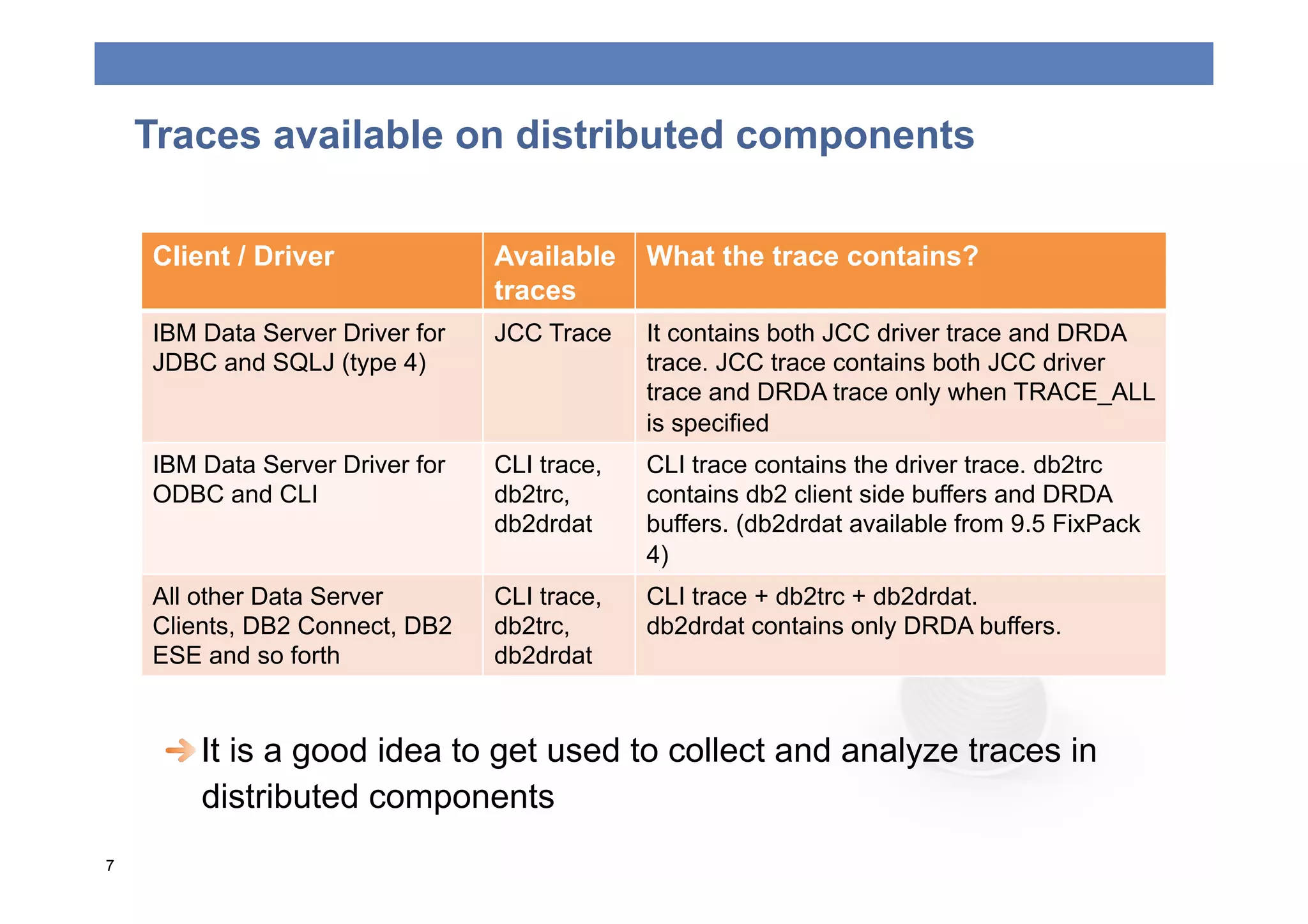

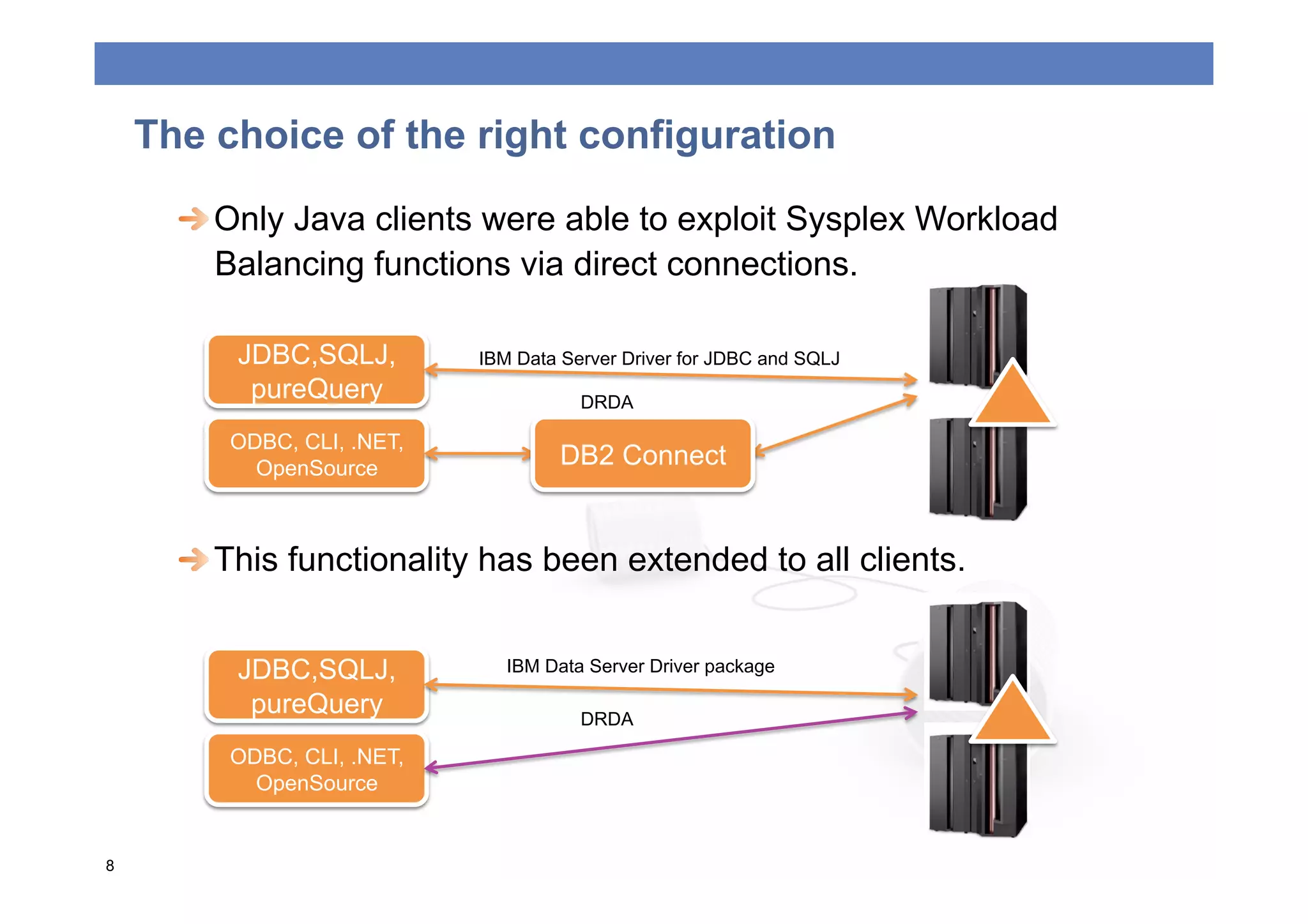

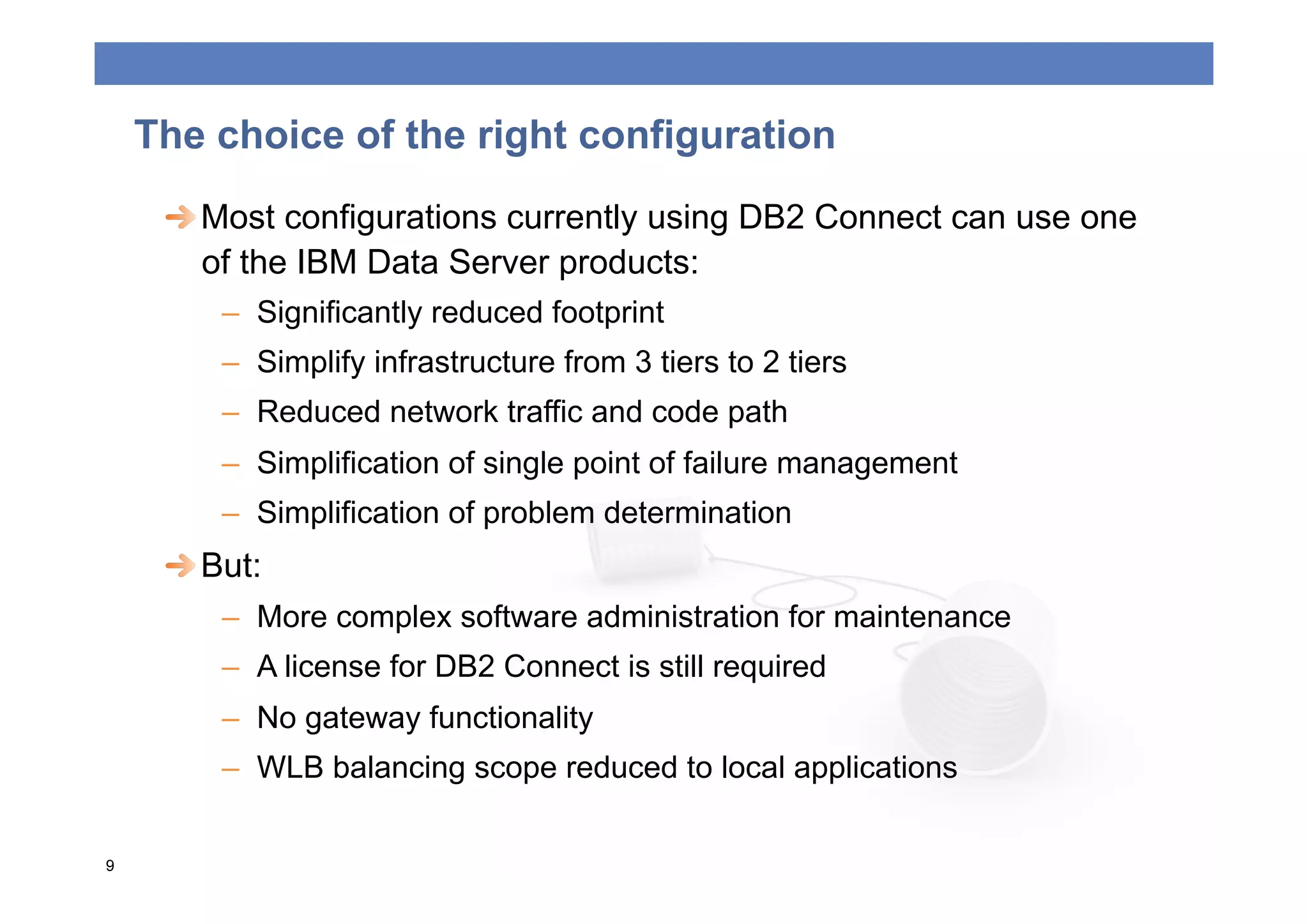

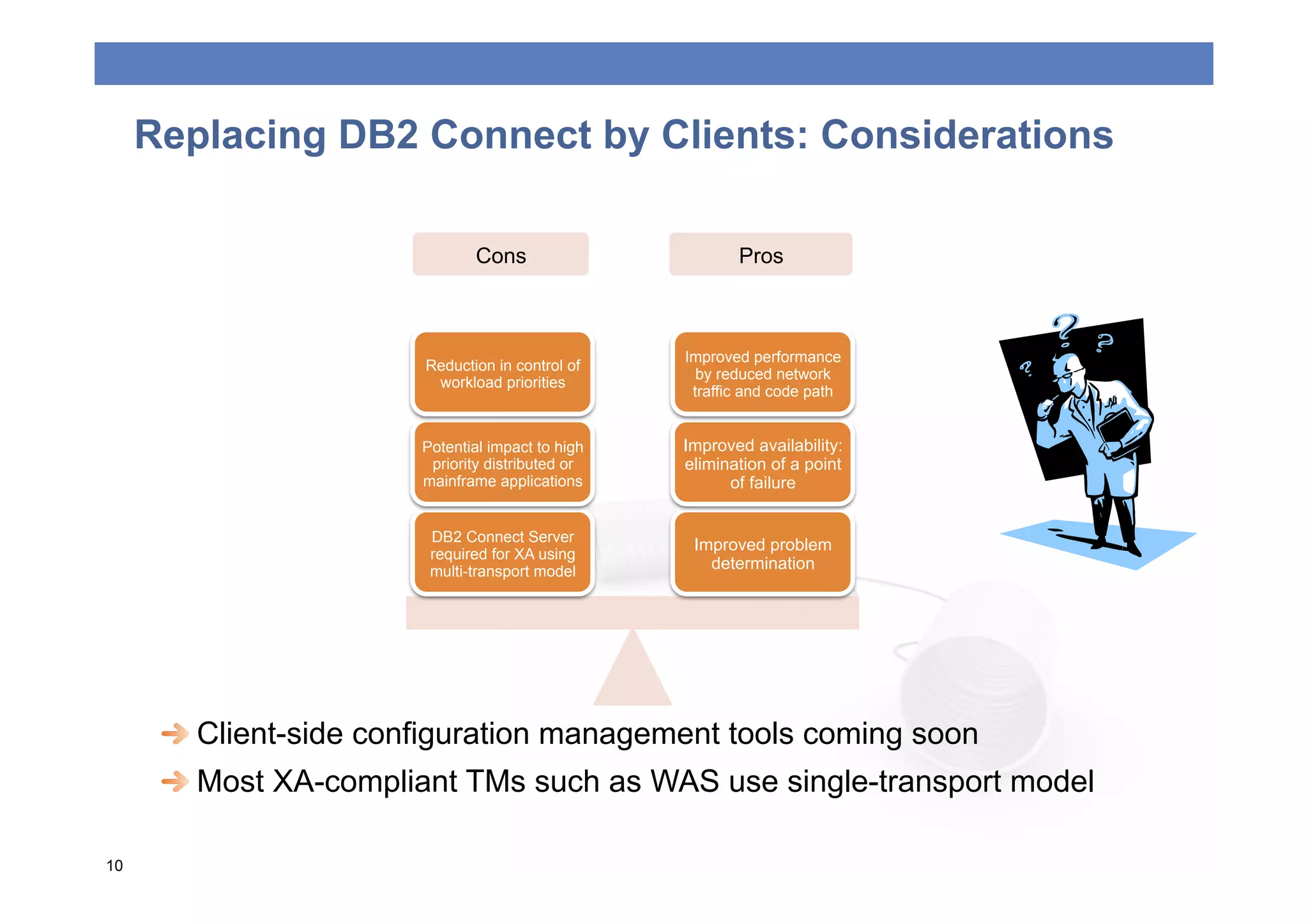

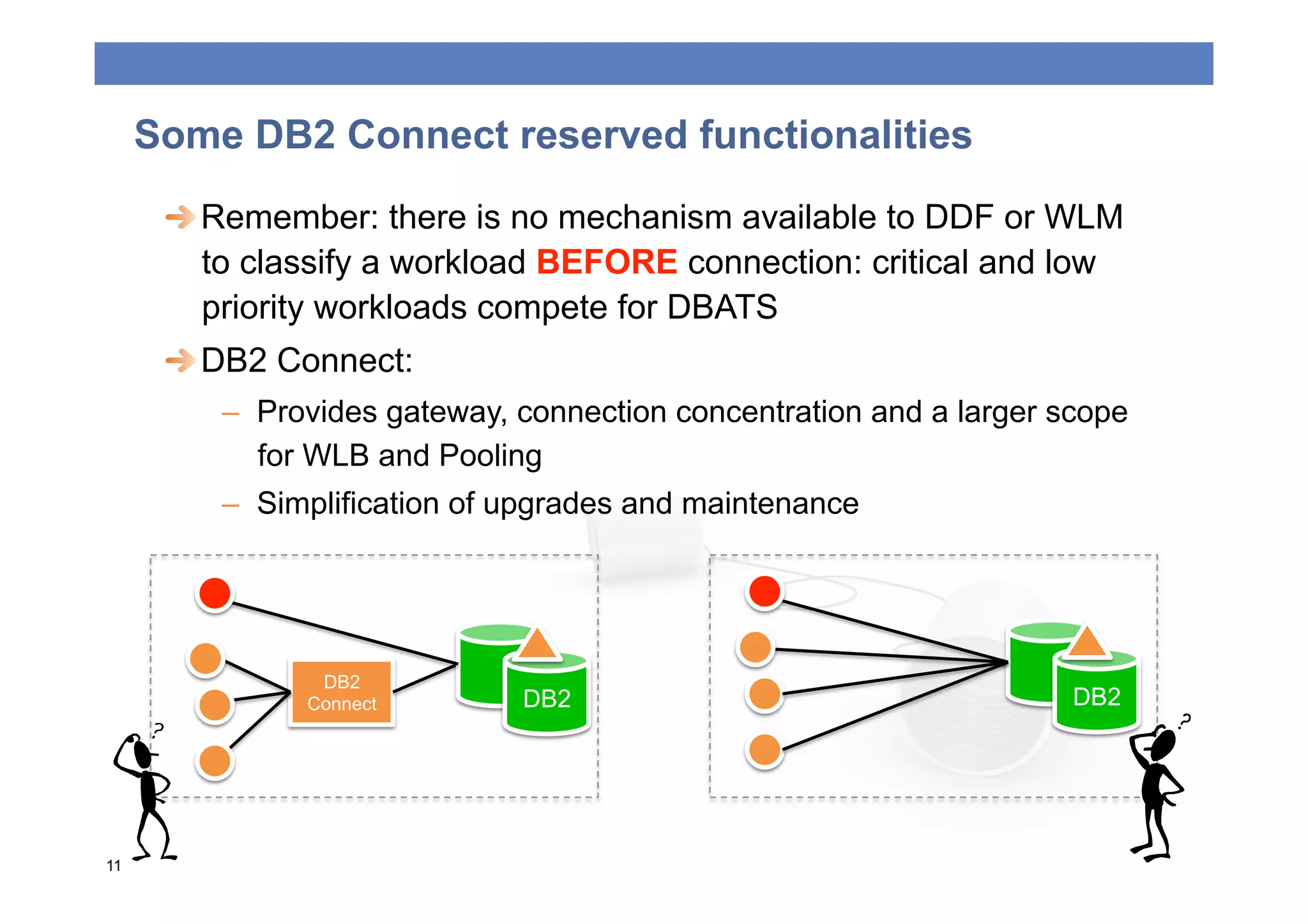

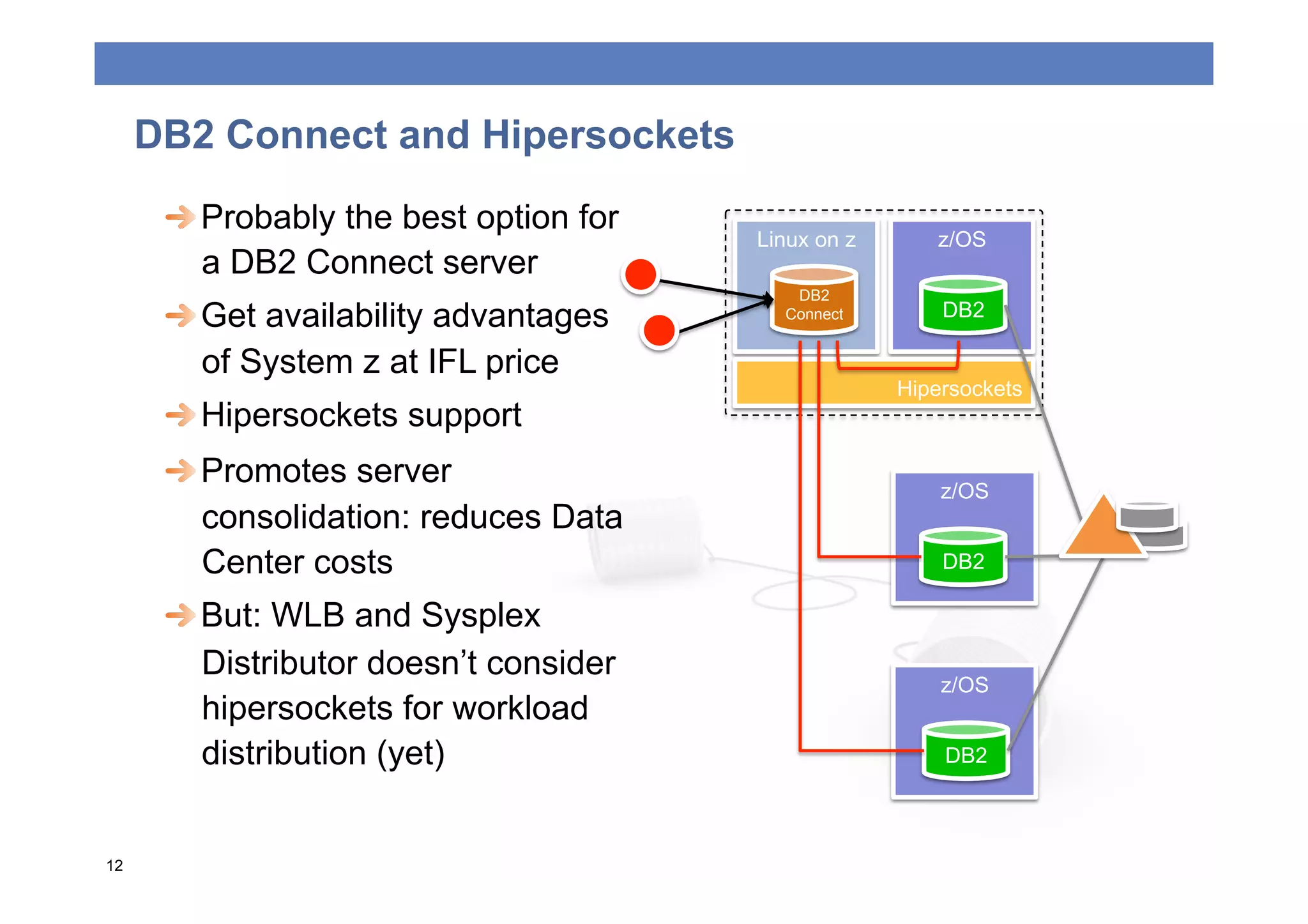

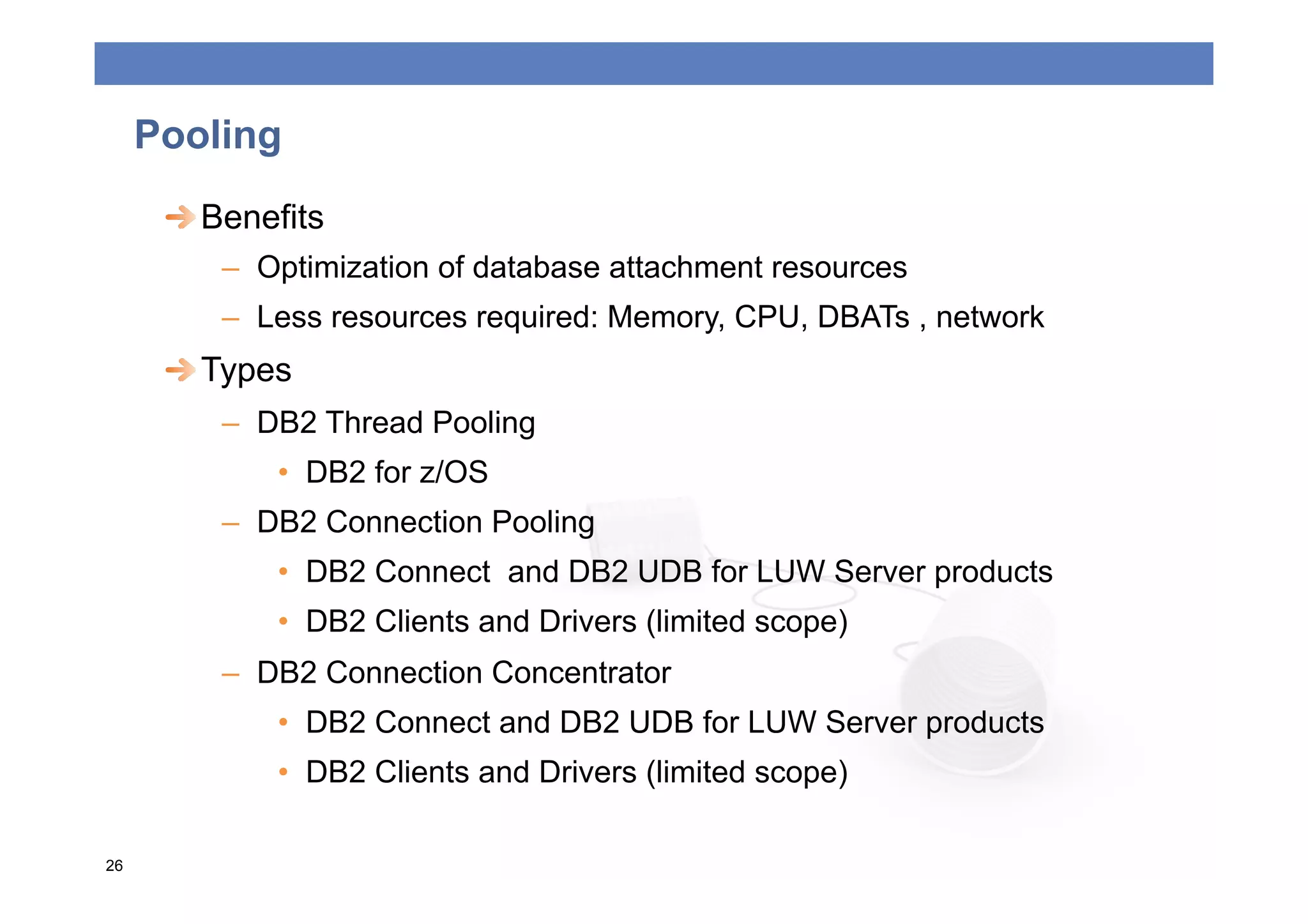

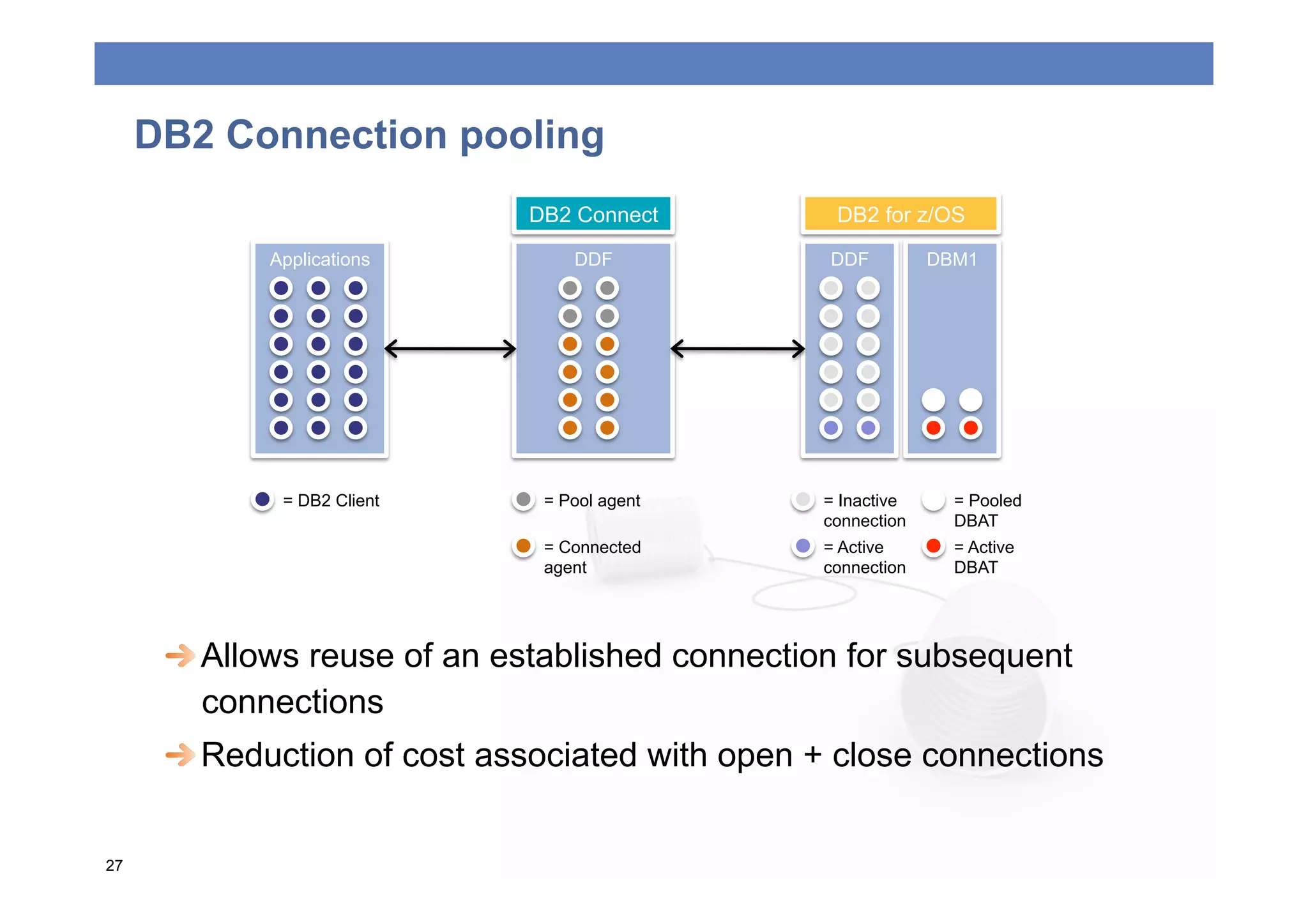

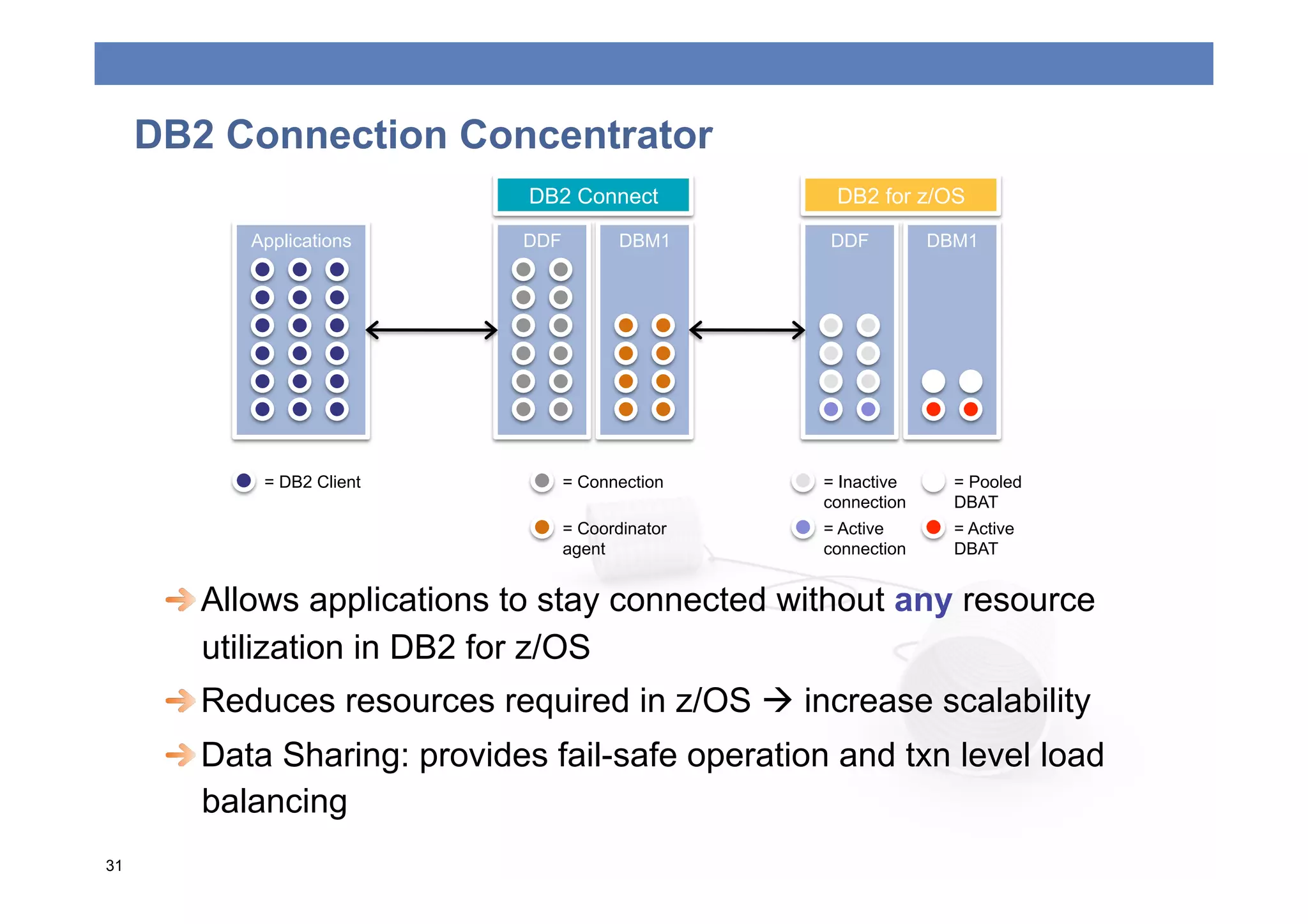

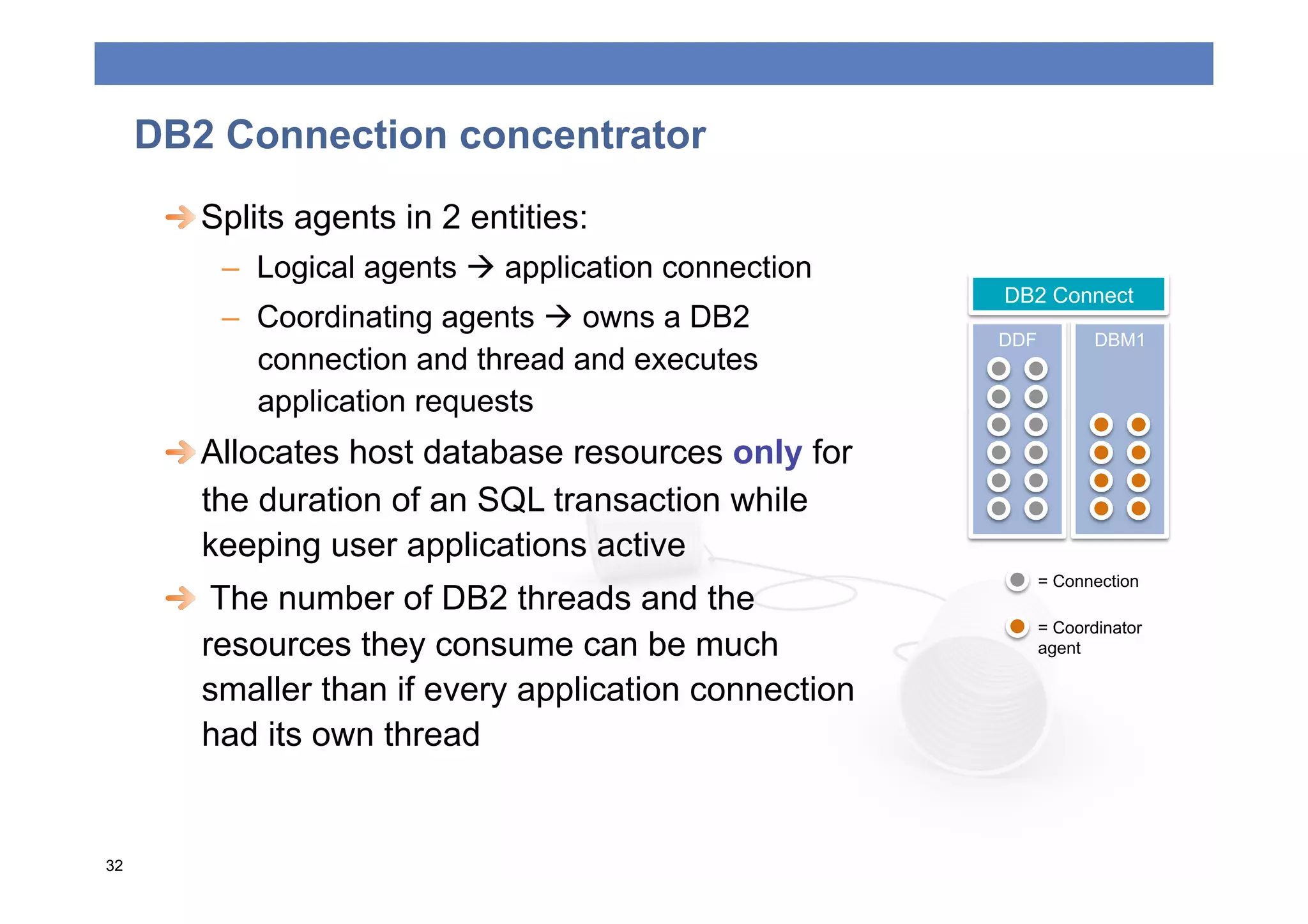

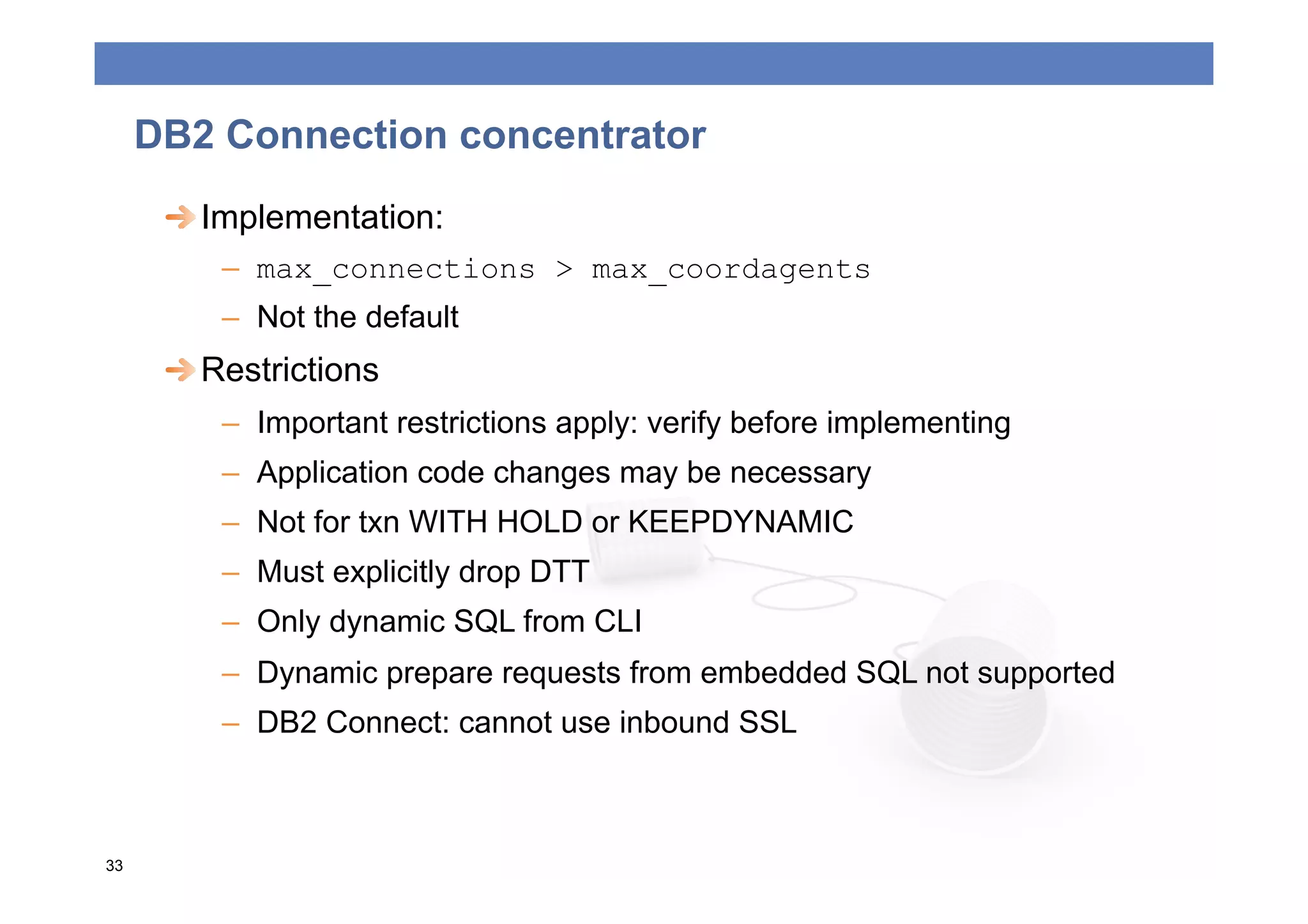

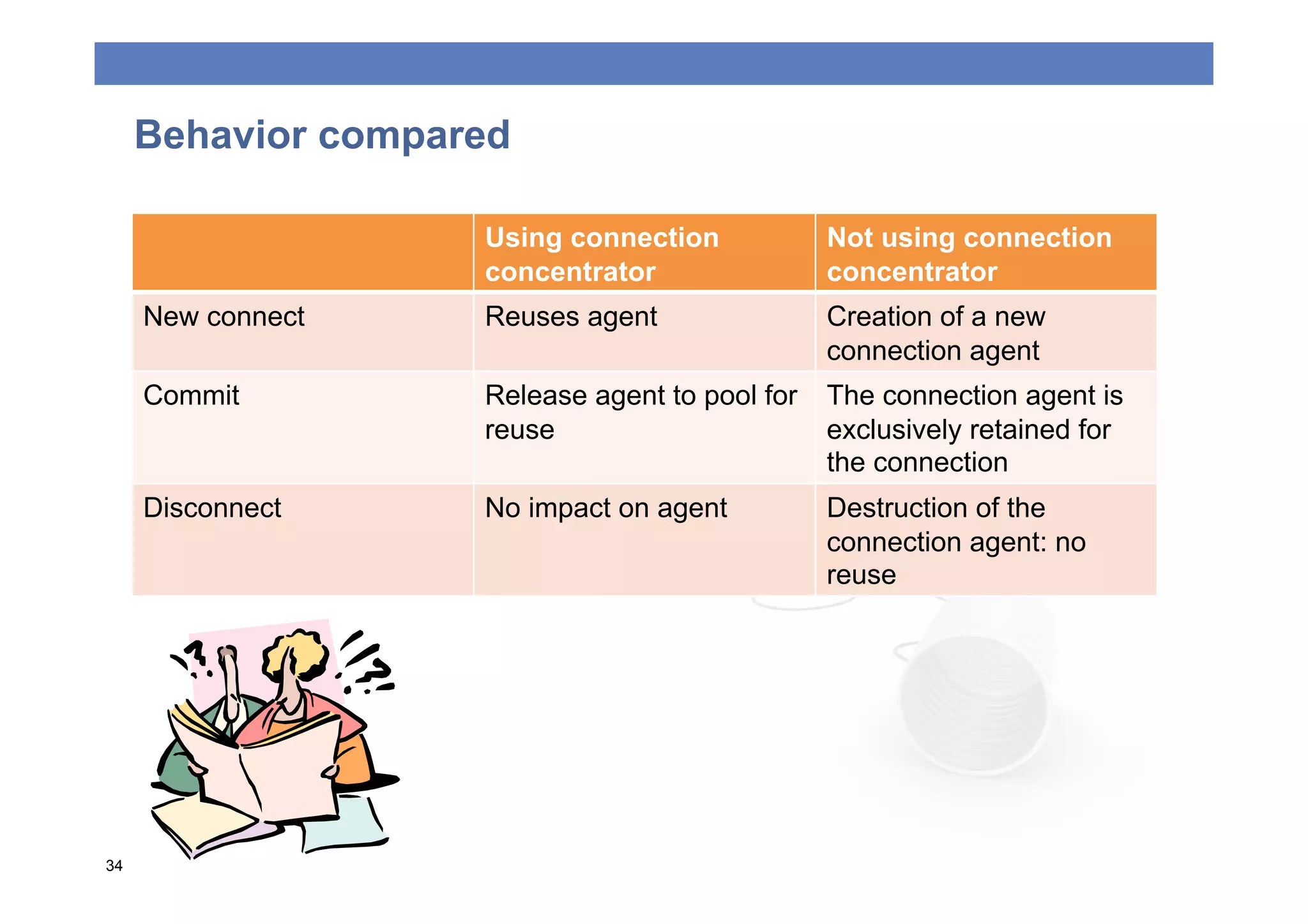

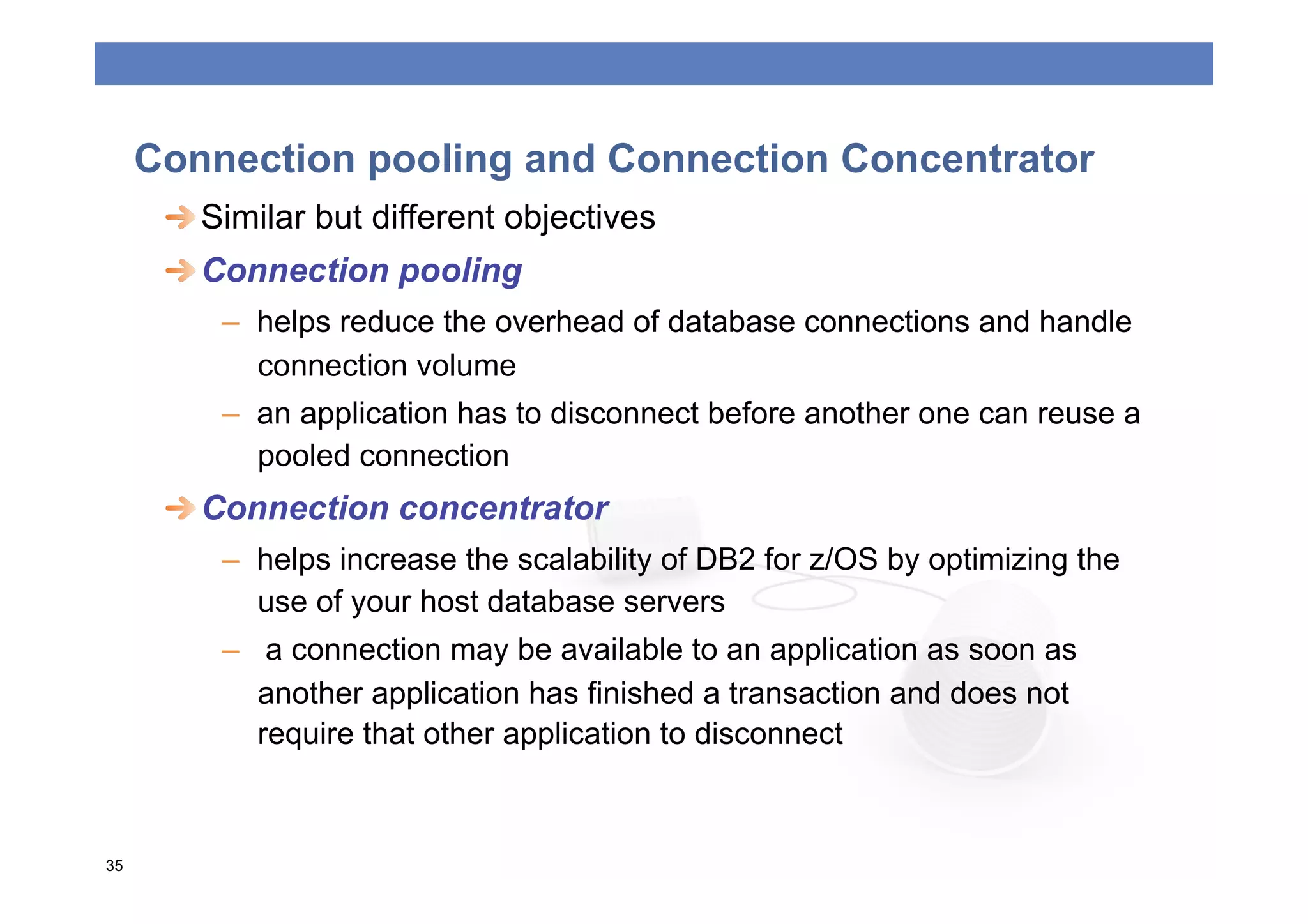

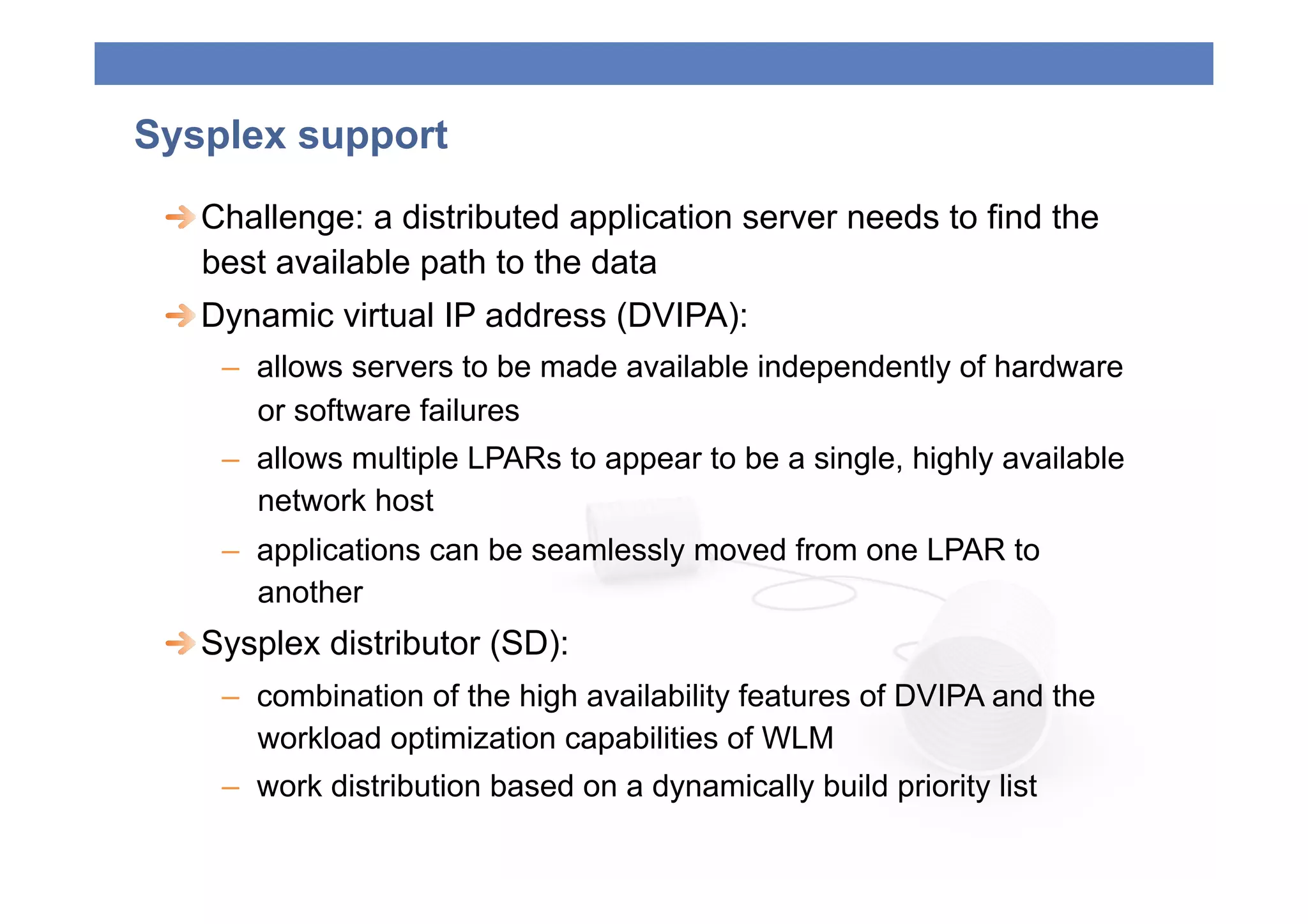

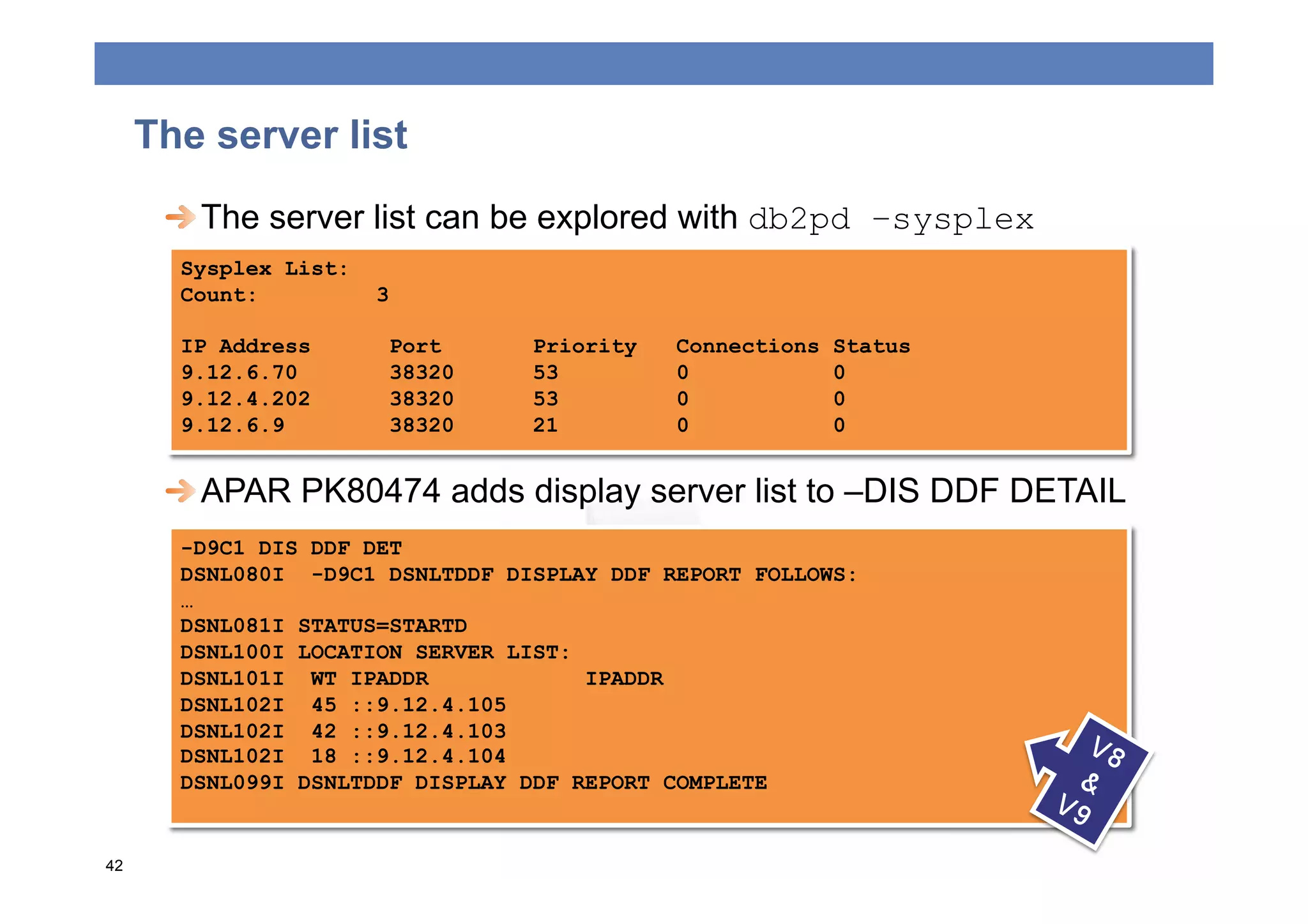

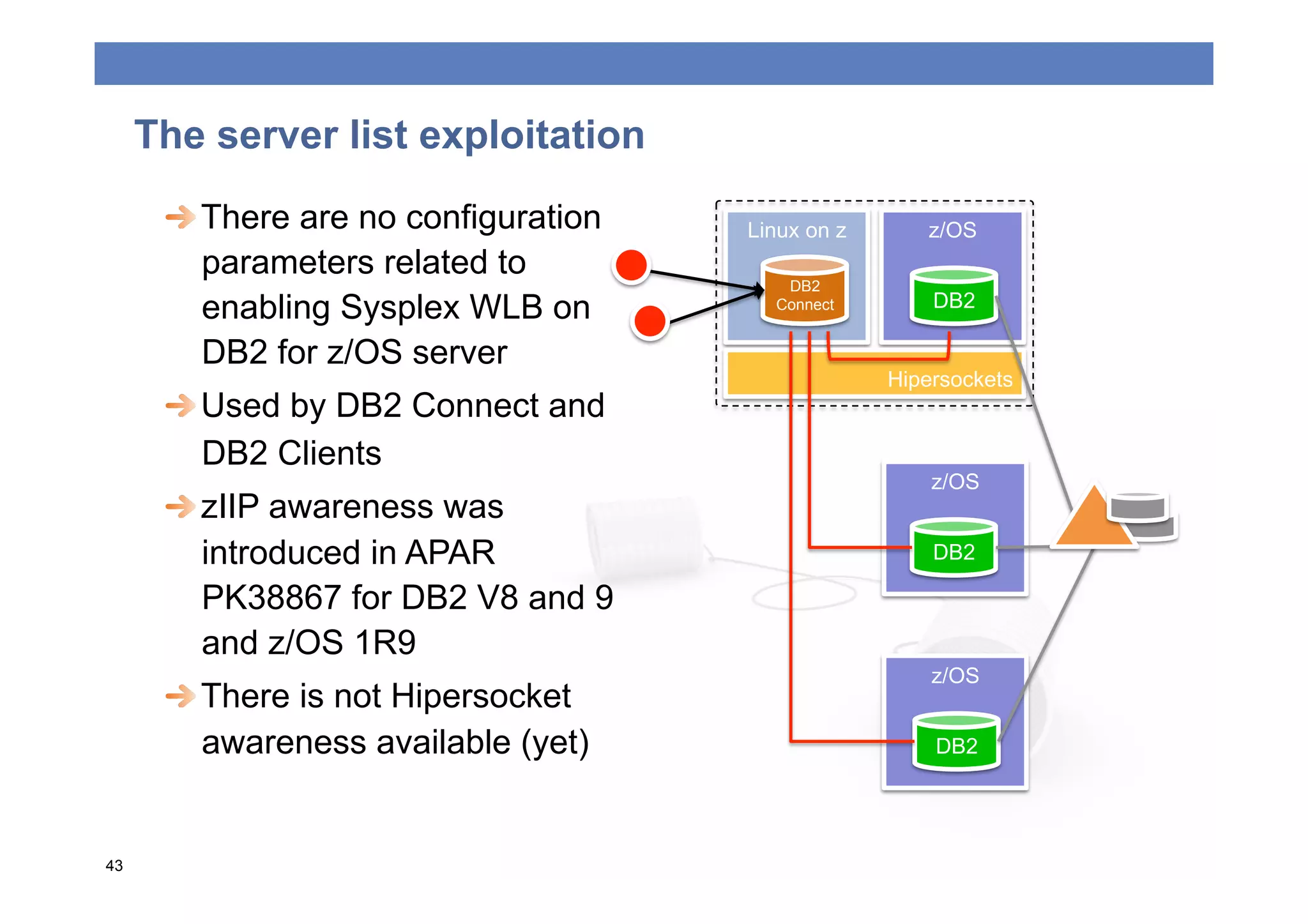

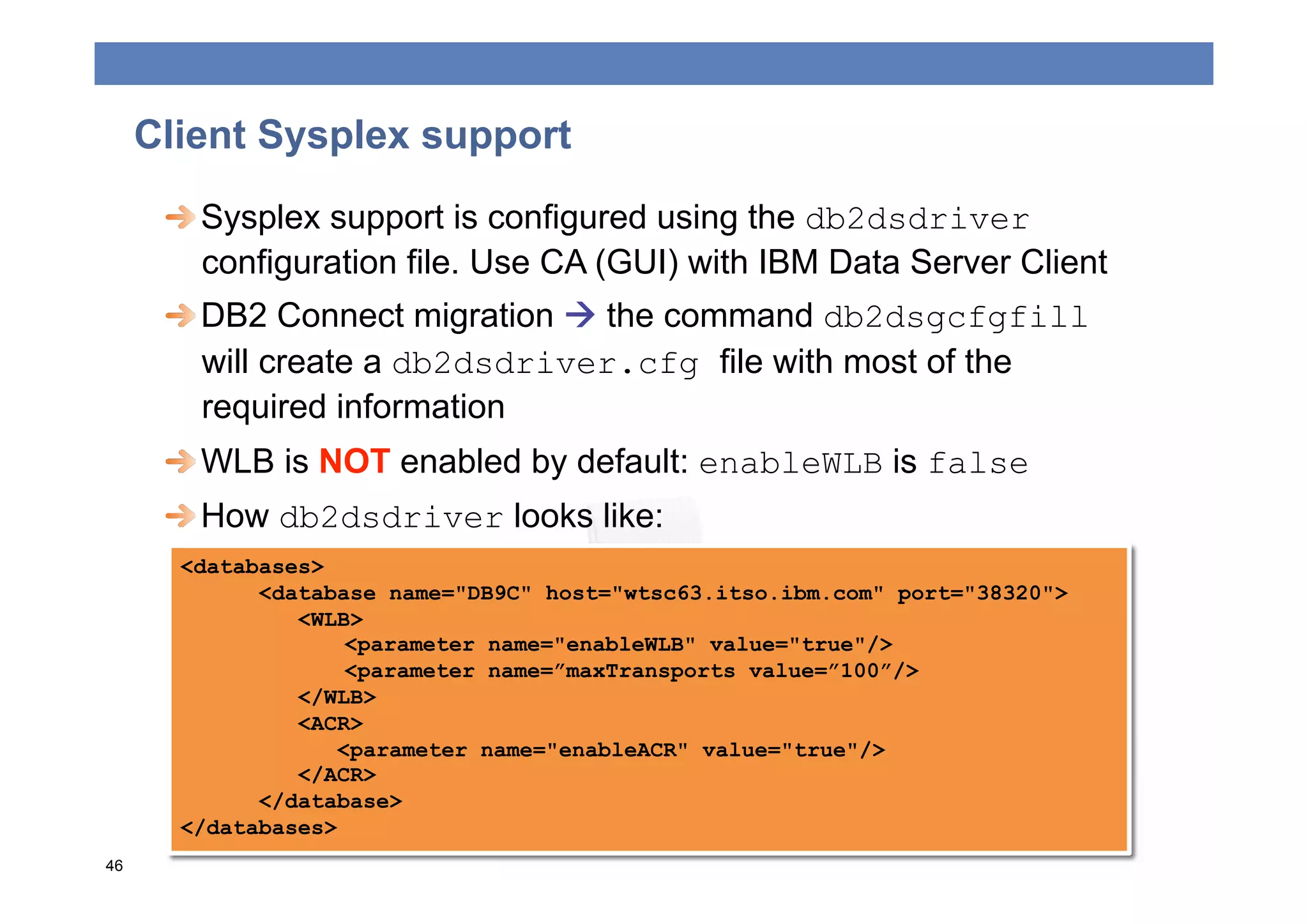

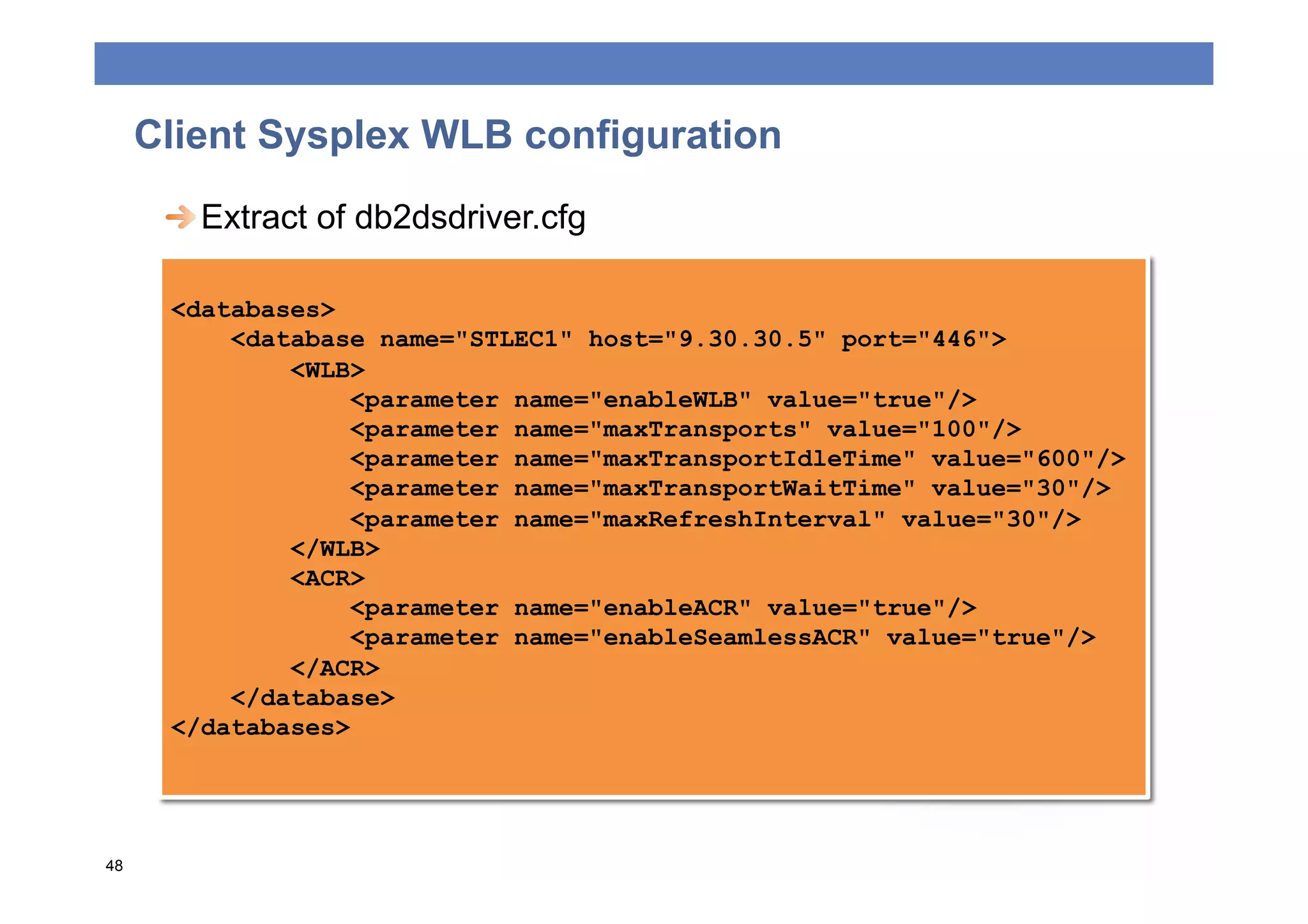

This document covers the options for connecting applications to DB2: drivers, clients, and protocols. It gives an overview of the DB2 connectivity drivers for JDBC, ODBC, CLI, and .NET, and shows how they map to their counterparts in older versions. It offers guidelines for selecting a driver based on application needs, weighing footprint, performance, high-availability features, and workload balancing. It also summarizes the roles of DB2 Connect and of connectivity protocols such as DRDA, the private protocol, and HiperSockets.
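As a rough illustration of how the driver choice surfaces at the connection level, the sketch below builds the two connection-target formats most commonly seen with these drivers: a type 4 JDBC URL and a CLI/ODBC-style keyword string. It only assembles strings and does not load any DB2 driver. The helper names (`jdbc_type4_url`, `cli_connection_string`), the host, port, database, and credentials are hypothetical placeholders, and the `enableSysplexWLB` property is shown only as an example of how a workload-balancing option might be appended; check the driver documentation for the properties your version supports.

```python
def jdbc_type4_url(host, port, database, properties=None):
    """Build a type 4 JDBC URL of the form
    jdbc:db2://host:port/database[:key=value;...]

    `properties` is an optional dict of driver properties; each one is
    appended as key=value followed by a semicolon.
    """
    url = f"jdbc:db2://{host}:{port}/{database}"
    if properties:
        url += ":" + "".join(f"{key}={value};" for key, value in properties.items())
    return url


def cli_connection_string(host, port, database, user, password):
    """Build a CLI/ODBC-style keyword connection string for a TCP/IP connection."""
    parts = {
        "DATABASE": database,
        "HOSTNAME": host,
        "PORT": port,
        "PROTOCOL": "TCPIP",
        "UID": user,
        "PWD": password,
    }
    return "".join(f"{key}={value};" for key, value in parts.items())


if __name__ == "__main__":
    # Hypothetical server and database names, for illustration only.
    print(jdbc_type4_url("db.example.com", 50000, "SAMPLE"))
    print(jdbc_type4_url("db.example.com", 50000, "SAMPLE",
                         {"enableSysplexWLB": "true"}))
    print(cli_connection_string("db.example.com", 50000, "SAMPLE",
                                "dbuser", "secret"))
```

Keeping the connection target in one small helper like this makes it easier to switch drivers or add high-availability properties later without touching application code.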