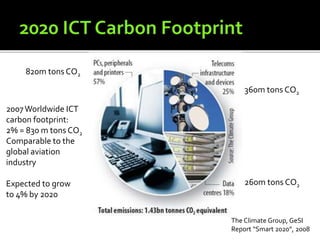

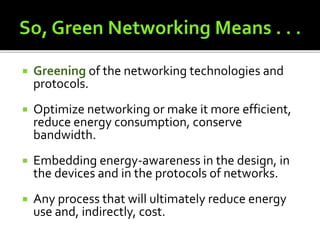

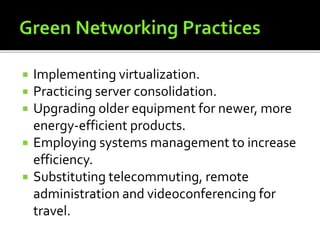

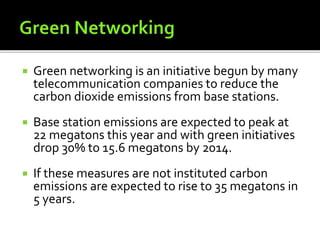

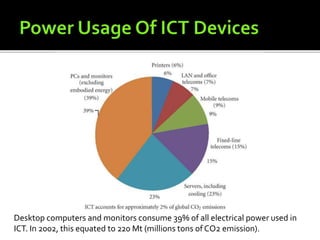

Green networking aims to reduce the carbon footprint of information and communication technology (ICT) networks by improving their energy efficiency. Key strategies include making better use of existing network infrastructure through technologies such as virtualization, improving the energy efficiency of equipment, and locating network resources closer to renewable energy sources. Measuring energy savings is important for tracking progress toward a lower-carbon "Green Network".
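
As a rough illustration of what measuring energy savings could look like, the sketch below compares energy use before and after an efficiency change and converts the difference into avoided emissions. The function names, the sample figures, and the grid carbon-intensity value are all hypothetical and chosen only for illustration; none of them come from the source.

```python
def energy_saved_kwh(baseline_kwh: float, optimized_kwh: float) -> float:
    """Energy saved over a period: baseline use minus use after the change."""
    return baseline_kwh - optimized_kwh


def savings_percent(baseline_kwh: float, optimized_kwh: float) -> float:
    """Savings expressed as a percentage of the baseline energy use."""
    if baseline_kwh <= 0:
        raise ValueError("baseline_kwh must be positive")
    return 100.0 * (baseline_kwh - optimized_kwh) / baseline_kwh


def avoided_emissions_kg(saved_kwh: float, intensity_kg_per_kwh: float) -> float:
    """Avoided CO2 in kg, given an assumed grid carbon intensity (kg CO2 per kWh)."""
    return saved_kwh * intensity_kg_per_kwh


if __name__ == "__main__":
    # Hypothetical monthly figures for a single network site.
    baseline = 1000.0   # kWh before the change (assumed)
    optimized = 750.0   # kWh after the change (assumed)
    intensity = 0.4     # kg CO2 per kWh (assumed grid average)

    saved = energy_saved_kwh(baseline, optimized)
    print(f"Saved: {saved:.1f} kWh ({savings_percent(baseline, optimized):.1f}%)")
    print(f"Avoided emissions: {avoided_emissions_kg(saved, intensity):.1f} kg CO2")
```

Tracking the percentage alongside the absolute figure makes it easier to compare sites of different sizes, while the carbon-intensity factor reflects why moving load toward renewable energy sources can matter even when energy use itself is unchanged.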