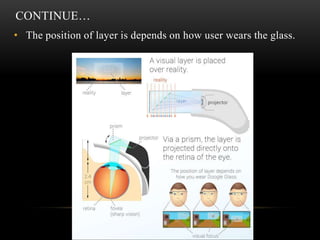

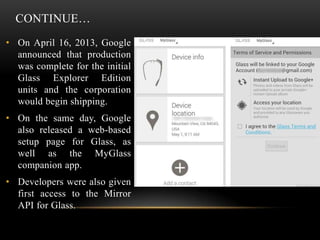

Project Glass is a Google initiative to develop augmented reality head-mounted displays that give the wearer hands-free access to information and let them interact with the internet through voice commands. The device integrates several components, including a mini projector, a camera, and a touchpad, and offers features such as voice recognition, navigation, and social media sharing. Its advantages include portability and a broad range of smartphone functions, but it also raises concerns about privacy and social acceptance.