Fundamental computing algorithms

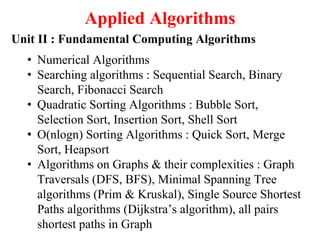

- 1. Applied Algorithms Unit II : Fundamental Computing Algorithms • Numerical Algorithms • Searching algorithms : Sequential Search, Binary Search, Fibonacci Search • Quadratic Sorting Algorithms : Bubble Sort, Selection Sort, Insertion Sort, Shell Sort • O(nlogn) Sorting Algorithms : Quick Sort, Merge Sort, Heapsort • Algorithms on Graphs & their complexities : Graph Traversals (DFS, BFS), Minimal Spanning Tree algorithms (Prim & Kruskal), Single Source Shortest Paths algorithms (Dijkstra’s algorithm), all pairs shortest paths in Graph

- 2. Unit II : Fundamental Computing Algorithms Numerical Algorithms: • All mathematical problems like Solving Equations or System of equations, Computing Definite Integrals, Evaluating Functions etc. • These algorithms play a critical role in many scientific and engineering applications. Examples : Finding Square root of a given number, to find factorial n, Solution of a quadratic equation, Solution of simultaneous equations, Matrix manipulations etc.

- 3. Unit II : Fundamental Computing Algorithms Searching Algorithms: Sequential Search: Used when elements are unsorted. Elements are scanned sequentially for searching the given element. Algorithm SeqSearch(a, x, n) // Search for x in a[1:n]. a[0] is used as additional space { i = n; a[0] = x; while (a[i] != x) do i = i – 1; return i; } Analysis : Best case : element to be searched is the last element in an array a[1:n]. Time complexity = O(1) and Space complexity = O(1)

- 4. Unit II : Fundamental Computing Algorithms Searching Algorithms: Sequential Search: Analysis : Worst case : element to be searched is the first element in an array a[1:n], or it is absent. Time complexity = O(n) Or by using recurrence equation T(n) = T(n - 1) + 1 Given, T(1) = 1 After solving this recurrence we get, T(n) = O(n) Space complexity = O(1) Average Case : For Successful Search time required is = (1 + 2 + … + n) / n = (n + 1) / 2 = O(n) For Unsuccessful Search time required is = O(n) Time complexity = O(n) Space complexity = O(1)
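
The sentinel version of SeqSearch can be sketched in Python as follows. This is a minimal illustration, assuming the caller passes a list whose slot 0 is scratch space (the name `seq_search` is hypothetical); positions are 1-based as on the slide and 0 means "not found".

```python
def seq_search(a, x):
    """Sentinel sequential search over a[1..n]; a[0] is scratch space.

    Returns the 1-based position of x, or 0 when x is absent.
    """
    n = len(a) - 1
    a[0] = x          # sentinel: guarantees the loop below terminates
    i = n
    while a[i] != x:  # scan from the last element toward the front
        i -= 1
    return i


data = [None, 7, 3, 9, 3]
print(seq_search(data, 9))  # position of 9
print(seq_search(data, 5))  # 0 because 5 is absent
```

Because the scan runs from the end, the last occurrence of a repeated value is reported.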

- 5. Unit II : Fundamental Computing Algorithms Searching Algorithms: Binary Search: Used when elements are in sorted order. A given element x is compared with the middle element in the partition. If x = middle element then the search is successful; else if x < middle element then x is searched in the half of the partition that precedes the middle element (the smaller values); else it is searched in the half that follows the middle element. Binary Search is an example of Divide and Conquer strategy.

- 6. Unit II : Fundamental Computing Algorithms Searching Algorithms: Binary Search : Recursive algorithm : Algorithm binsearch-rec (a, i, l, x) // given an array a[i:l] of elements in non-decreasing order, 1<=i<=l, determine // whether x is present and if so return j such that x = a[j]; else return 0. { if (i >= l) // at most one element left { if ((i = l) and (x = a[i])) then return i; else return 0; } else { mid = floor((i + l) / 2); if x = a[mid] then return mid; else if x < a[mid] return binsearch-rec (a, i, mid-1, x); else return binsearch-rec (a, mid+1, l, x); } }

- 7. Unit II : Fundamental Computing Algorithms Searching Algorithms: Binary Search : Non-recursive or Iterative algorithm : Algorithm binsearch-nrec (a, i, l, x) // given an array a[i:l] of elements in non-decreasing order, 1<=i<=l, // determine whether x is present and if so return j // such that x = a[j]; else // return 0. { low = i; high = l; while (low <= high) do { mid = floor((low + high) / 2); if x = a[mid] then return mid; else if x < a[mid] high = mid - 1; else low = mid + 1; } return 0; // x is not present }
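
A minimal Python sketch of the iterative binary search. It assumes 0-based indexing and returns -1 (rather than 0) for "not found", since 0 is a valid Python index; the function name is illustrative.

```python
def binary_search(a, x):
    """Return an index j with a[j] == x in sorted list a, or -1 if absent."""
    low, high = 0, len(a) - 1
    while low <= high:
        mid = (low + high) // 2   # floor of the midpoint
        if a[mid] == x:
            return mid
        if x < a[mid]:
            high = mid - 1        # continue in the part before mid
        else:
            low = mid + 1         # continue in the part after mid
    return -1


print(binary_search([1, 3, 5, 7, 9], 7))
```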

- 8. Unit II : Fundamental Computing Algorithms Searching Algorithms: Binary Search : Analysis : Best case : element to be searched is the middle element in an array a[1:n]. Time complexity = O(1) and Space complexity is storage required for variables low, high and mid = O(1) Worst case: element to be searched is the last element in an array a[1:n] or it is absent in the array. Time complexity = O(log n) By using recurrence equation T(n) = T(n/2) + 1 Given, T(1) = 1 After solving this recurrence we get, T(n) = O(log n) Space complexity = O(1)

- 9. Unit II : Fundamental Computing Algorithms Searching Algorithms: Binary Search : Analysis : Average Case : For Unsuccessful Search time required is = Au = O(log n) For Successful search time required = As = O(log n) By using equations : E = I + 2n As = (I + n) / n Au = E / (n + 1) We get As = (1 + 1/n) Au - 1 = O(log n) for large values of n. Time complexity = O(log n) Space complexity = O(1)

- 10. Unit II : Fundamental Computing Algorithms Searching Algorithms: Fibonacci Search: Used when elements are in sorted order and density of elements increases from top to down for non-decreasing order. It is similar to binary search. The only difference is that the probe element is the array element positioned at the (k-1)th Fibonacci number, where (k-1)th Fibonacci number < n <= kth Fibonacci number. Analysis : Best case time is O(1). Worst case time is O(log n). Average case time is O(log n). Space complexity is O(1). Refer to “Fundamentals of Data Structures” by Horowitz and Sahni.
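
The slide gives no pseudocode for Fibonacci search, so the following is only a sketch of one common formulation, not necessarily the textbook's. It assumes 0-based indexing, returns -1 when x is absent, and probes at an offset of F(k-2) from the last eliminated position; all names are illustrative.

```python
def fibonacci_search(a, x):
    """Search sorted list a for x using Fibonacci-sized probes; -1 if absent."""
    n = len(a)
    fib2, fib1 = 0, 1            # F(k-2), F(k-1)
    fib = fib2 + fib1            # F(k): smallest Fibonacci number >= n
    while fib < n:
        fib2, fib1 = fib1, fib
        fib = fib2 + fib1
    offset = -1                  # everything at or before offset is eliminated
    while fib > 1:
        i = min(offset + fib2, n - 1)
        if a[i] < x:             # discard the front part, step down one Fibonacci
            fib, fib1, fib2 = fib1, fib2, fib1 - fib2
            offset = i
        elif a[i] > x:           # discard the back part, step down two Fibonaccis
            fib, fib1, fib2 = fib2, fib1 - fib2, fib2 - (fib1 - fib2)
        else:
            return i
    if fib1 and offset + 1 < n and a[offset + 1] == x:
        return offset + 1        # one candidate may remain
    return -1
```

Only additions and subtractions are used to pick the probe position, which is the usual motivation for this method over binary search.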

- 11. Unit II : Fundamental Computing Algorithms Sorting Algorithms: Quadratic sorting algorithms : Bubble Sort The basic step of bubble sort (or exchange sort) is to compare the ith and (i+1)th elements and swap them if they are out of order. Algorithm BubbleSort (a, n) // sorts the array a[1:n] into non-decreasing order { for i = 1 to n-1 do { for k = 1 to n-i do { if a[k] > a[k+1] { temp = a[k]; a[k] = a[k+1]; a[k+1] = temp; } } } }

- 12. Unit II : Fundamental Computing Algorithms Sorting Algorithms: Bubble Sort : Analysis Best case : Already sorted input. The recurrence equation is: T(n) = T(n-1) + n, given that T(1) = 1. Time complexity = O(n^2) Space complexity = O(1) Number of exchanges = 0 = O(1) Number of passes = n-1 = O(n) Worst case : Sorted input in reverse order. The recurrence equation is: T(n) = T(n-1) + n, given that T(1) = 1. Time complexity = O(n^2) Space complexity = O(1) Number of exchanges = O(n^2) Number of passes = n-1 = O(n)

- 13. Unit II : Fundamental Computing Algorithms Sorting Algorithms: Bubble Sort : Analysis Average case : Any random input. The recurrence equation is: T(n) = T(n-1) + n, given that T(1) = 1. Time complexity = O(n^2) Space complexity = O(1) Number of exchanges = O(n^2) Number of passes = n-1 = O(n) Note : With a small modification of the algorithm (stop as soon as a pass makes no exchange), the best case can be improved. Time complexity = O(n) Space complexity = O(1) Number of exchanges = 0 = O(1) Number of passes = 1 = O(1)
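
One way to realise the improved best case is to stop when a pass performs no exchange. The sketch below assumes that modification (the slides do not spell it out) and returns the number of passes so the O(n) best case can be observed; the `swapped` flag and the return value are illustrative additions.

```python
def bubble_sort(a):
    """Sort list a in place; return the number of passes performed."""
    n = len(a)
    passes = 0
    for i in range(n - 1):
        swapped = False
        passes += 1
        for k in range(n - 1 - i):      # the last i elements are already in place
            if a[k] > a[k + 1]:
                a[k], a[k + 1] = a[k + 1], a[k]
                swapped = True
        if not swapped:                 # no exchange: the array is sorted
            break
    return passes


data = [4, 3, 2, 1]
print(bubble_sort(data), data)
```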

- 14. Unit II : Fundamental Computing Algorithms Sorting Algorithms: Quadratic sorting algorithms : Selection Sort The basic step of Selection sort is to find the smallest element (the jth) among the ith to nth elements of the array, then swap the ith and jth elements. Algorithm SelectionSort (a, n) // sorts the array a[1:n] into non-decreasing order { for i = 1 to n do { j = i; for k = i+1 to n do { if a[k] < a[j] j = k; } t = a[i]; a[i] = a[j]; a[j] = t; } }

- 15. Unit II : Fundamental Computing Algorithms Sorting Algorithms: Quadratic sorting algorithms : Selection Sort : Analysis Best case : Already sorted input. The recurrence equation is: T(n) = T(n-1) + n, given that T(1) = 1. Time complexity = O(n^2) Space complexity = O(1) Number of exchanges = 0 = O(1) Number of passes = n = O(n) Worst case : Sorted input in reverse order. The recurrence equation is: T(n) = T(n-1) + n, given that T(1) = 1. Time complexity = O(n^2) Space complexity = O(1) Number of exchanges = n = O(n) Number of passes = n = O(n)

- 16. Unit II : Fundamental Computing Algorithms Sorting Algorithms: Quadratic sorting algorithms : Selection Sort : Analysis Average case : Any random input. The recurrence equation is: T(n) = T(n-1) + n, given that T(1) = 1. Time complexity = O(n^2) Space complexity = O(1) Number of exchanges = O(n) Number of passes = n = O(n) Note : With little modification of algorithm, best case analysis can be improved. Time complexity = O(n) Space complexity = O(1) Number of exchanges = 0 = O(1) Number of passes = 1 = O(1)

- 17. Unit II : Fundamental Computing Algorithms Sorting Algorithms: Quadratic sorting algorithms : Insertion Sort Basic step of Insertion sort is that ith element is inserted in a sorted array such that original order is preserved. Used when very few elements are Left-Out-of-Order (LOO). Algorithm InsertionSort (a, n) // sorts the array a[1:n] into non-decreasing order, n>=1 { for j = 2 to n do { item = a[j]; i = j - 1; // a[1:j-1] is already sorted while ((i >= 1) and (item < a[i])) do { a[i+1] = a[i]; i = i – 1; } a[i+1] = item; } }
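
A direct Python transcription of InsertionSort, given as a sketch with 0-based indexing (so the outer loop starts at index 1 instead of 2).

```python
def insertion_sort(a):
    """Sort list a in place into non-decreasing order and return it."""
    for j in range(1, len(a)):
        item = a[j]
        i = j - 1                      # a[0..j-1] is already sorted
        while i >= 0 and item < a[i]:
            a[i + 1] = a[i]            # push the larger element down
            i -= 1
        a[i + 1] = item
    return a


print(insertion_sort([5, 2, 4, 6, 1, 3]))
```

The strict comparison `item < a[i]` keeps equal elements in their original order, so this version is stable.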

- 18. Unit II : Fundamental Computing Algorithms Sorting Algorithms: Quadratic sorting algorithms : Insertion Sort Analysis : Best case : Already sorted input. Time complexity = O(n) Space complexity = O(1) Number of pushdowns = 0 = O(1) Number of passes = 1 = O(1) Worst case : Sorted input in reverse order. Total comparisons = 1+2+…+(n-1) = n(n-1)/2 Time complexity = O(n^2) Space complexity = O(1) Total pushdowns = 1+2+…+(n-1) = n(n-1)/2 Number of pushdowns = O(n^2) Number of passes = 1 = O(1)

- 19. Unit II : Fundamental Computing Algorithms Sorting Algorithms: Quadratic sorting algorithms : Insertion Sort : Analysis Average case : Any random input. Average number of comparisons to insert the ith element at the kth position in the sorted array a[1:i-1]: $C_i = \frac{1}{i}\sum_{k=1}^{i}(i-k+1) = \frac{i+1}{2}$. To insert elements 2 to n, the total number of comparisons is $\sum_{i=2}^{n} C_i = \frac{(n-1)(n+4)}{4} = O(n^2)$. Time complexity = O(n^2) Space complexity = O(1) Number of pushdowns = O(n^2) Number of passes = 1 = O(1)

- 20. Unit II : Fundamental Computing Algorithms Sorting Algorithms: Quadratic sorting algorithms : Shell Sort Shell sort is similar to insertion sort. The algorithm starts with step k i.e. elements at a distance of k are ordered first by using insertion sort and this is repeated by reducing step size to k/2 and so on till step size is equal to one. Analysis : Best case : Already sorted input. Time complexity = O(n) Space complexity = O(1) Number of pushdowns = 0 = O(1) Number of passes = log k = O(1)

- 21. Unit II : Fundamental Computing Algorithms Sorting Algorithms: Quadratic sorting algorithms : Shell Sort Analysis : Worst case : Sorted input in reverse order. Time complexity = O(n^2) Space complexity = O(1) Number of pushdowns = O(n^2), with a smaller constant factor than insertion sort Number of passes = log k = O(1) Average case : Time complexity = O(n^2) Space complexity = O(1) Number of pushdowns = O(n^2), with a smaller constant factor than insertion sort Number of passes = log k = O(1)
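
The slides describe Shell sort only in words. The sketch below assumes the initial step is n/2 and that the step is halved after each pass, which is one of several possible step sequences.

```python
def shell_sort(a):
    """Sort list a in place using insertion sort over shrinking gaps."""
    gap = len(a) // 2                  # assumed starting step k = n/2
    while gap > 0:
        for j in range(gap, len(a)):   # gapped insertion sort
            item = a[j]
            i = j - gap
            while i >= 0 and item < a[i]:
                a[i + gap] = a[i]
                i -= gap
            a[i + gap] = item
        gap //= 2                      # reduce step size: k, k/2, ..., 1
    return a


print(shell_sort([9, 8, 3, 7, 5, 6, 4, 1]))
```

The final pass with gap 1 is an ordinary insertion sort, which is what guarantees the result is fully sorted.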

- 22. Unit II : Fundamental Computing Algorithms Sorting Algorithms: O(nlog n) sorting algorithms : Quick Sort Basic step of Quick sort : Generally the 1st element is selected as the pivot. Scanning forward from the 2nd position, index i stops at the first element with a[i] >= pivot. Scanning backward from the end of the partition, index j stops at the first element with a[j] <= pivot. If i < j, the ith and jth elements are interchanged and both scans resume; this is repeated until i >= j. Finally the pivot and the jth element are interchanged, which places the pivot at its final position in the sorted output and divides the input into two partitions [1 : j-1] and [(j+1) : n]. The same procedure is applied recursively to sort these partitions independently so that we get the final output in sorted order. Quick sort is an example of Divide and Conquer strategy.

- 23. Unit II : Fundamental Computing Algorithms Sorting Algorithms: O(nlog n) sorting algorithms : Quick Sort Algorithm partition (a, m, p) // a[m:p] is a global array. 1st element in the array is a[m] and last //element in the array is a[p-1] and a[p] >= a[m,…,p-1]. Pivot is // the 1st element i.e. a[m]. After completion of algorithm it returns // position where the pivot is placed in an array { v = a[m]; i = m; j = p repeat { repeat i = i +1; until (a[i] >= v); repeat j = j -1; until (a[j] <= v);

- 24. Unit II : Fundamental Computing Algorithms Sorting Algorithms: O(nlog n) sorting algorithms : Quick Sort Algorithm partition (a, m, p) … contd. if (i < j) { temp = a[i]; a[i] = a[j]; a[j] = temp; } // a separate temporary keeps the parameter p intact } until (i >= j); a[m] = a[j]; a[j] = v; return j; }

- 25. Unit II : Fundamental Computing Algorithms Sorting Algorithms: O(nlog n) sorting algorithms : Quick Sort Algorithm quicksort (p, q) // a[1:n+1] is a global array. Sorts elements a[p], … , a[q] of the array a[1:n] into // non-decreasing order. a[n+1] is a sentinel that is >= all elements in a[1:n]. { if (p < q) // there is more than 1 item { j = partition (a, p, q+1); // divides into two partitions quicksort (p, j-1); quicksort (j+1, q); } }
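
A Python sketch of partition and quicksort under two stated assumptions: ranges are half-open (`a[m:p]`, 0-based), and an explicit bounds check on `i` replaces the sentinel a[n+1] used on the slides.

```python
def partition(a, m, p):
    """Partition a[m:p] around the pivot a[m]; return the pivot's final index."""
    v = a[m]
    i, j = m, p
    while True:
        i += 1
        while i < p and a[i] < v:   # bounds check stands in for the sentinel
            i += 1
        j -= 1
        while a[j] > v:             # stops at m at the latest, since a[m] == v
            j -= 1
        if i >= j:
            break
        a[i], a[j] = a[j], a[i]
    a[m], a[j] = a[j], a[m]         # place the pivot at its final position
    return j


def quicksort(a, lo, hi):
    """Sort a[lo:hi] in place."""
    if hi - lo > 1:                 # more than one item
        j = partition(a, lo, hi)
        quicksort(a, lo, j)
        quicksort(a, j + 1, hi)


data = [5, 3, 8, 1, 9, 2, 5]
quicksort(data, 0, len(data))
print(data)
```

Choosing the first element as pivot keeps the sketch close to the slides, at the cost of the quadratic behaviour on already sorted input.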

- 26. Unit II : Fundamental Computing Algorithms Sorting Algorithms: O(nlog n) sorting algorithms : Quick Sort Analysis : Best case : Every time the partition is divided into two partitions of equal size. Recurrence equation for this is: T(n) = 2T(n/2) + n given that T(1) = 0 Time complexity = O(n log n) Space complexity is storage required for variables i, j, p, v and space required for implicit stack = O(log n) Worst case : Instance is already in sorted order. Recurrence equation for this is: T(n) = T(n - 1) + n given that T(1) = 0 Time complexity = O(n^2) Space complexity is storage required for variables i, j, p, v and space required for implicit stack = O(n)

- 27. Unit II : Fundamental Computing Algorithms Sorting Algorithms: O(nlog n) sorting algorithms : Quick Sort Analysis : Average case : Any random input. Average computation time is given by: $t(n) = \frac{1}{n}\sum_{k=1}^{n}\bigl(g(n) + t(k-1) + t(n-k)\bigr)$, where g(n) = time required to partition = O(n), (k-1) and (n-k) are the sizes of the two partitions created, and k takes each value from 1 to n to consider all n possibilities. Time complexity = O(n log n) Space complexity is storage required for variables i, j, p, v and space required for implicit stack = O(log n) on average, O(n) in the worst case Note : Quick sort is not a stable sort.

- 28. Unit II : Fundamental Computing Algorithms Sorting Algorithms: O(nlog n) sorting algorithms : Merge Sort Basic step of Merge sort : In merge sort, a partition is divided (divide) into two partitions of equal size. This process of division is continued until partition size is 1, i.e. finally we get all partitions of size 1 which are sorted (conquer). Then the solution to original problem is obtained by combining (combine) these sorted partitions, to get the final partition of size n in sorted order. Merge sort is an example of Divide and Conquer strategy.

- 29. Unit II : Fundamental Computing Algorithms Sorting Algorithms: O(nlog n) sorting algorithms : Merge Sort Algorithm merge (low, mid, high) // array a[low : high] is a global array containing two sorted subsets in // a[low : mid] and a[mid+1 : high]. This algorithm merges these sets into a // single set residing in a[low : high]. b[ ] is an auxiliary global array. { h = low; i = low; j = mid + 1; while ((h <= mid) and (j <= high)) do { if a[h] <= a[j] { b[i] = a[h]; h = h + 1; } else { b[i] = a[j]; j = j + 1; } i = i + 1; }

- 30. Unit II : Fundamental Computing Algorithms Sorting Algorithms: O(nlog n) sorting algorithms : Merge Sort Algorithm merge (low, mid, high) … contd. if (h > mid) { for k = j to high do { b[i] = a[k]; i = i +1; } } else { for k = h to mid do { b[i] = a[k]; i = i +1; } } for k = low to high do a[k] = b[k]; }

- 31. Unit II : Fundamental Computing Algorithms Sorting Algorithms: O(nlog n) sorting algorithms : Merge Sort Algorithm mergesort (low, high) // a[low:high] is a global array to be sorted { if (low < high) // more than one element { // create sub-partitions mid = floor((low + high) / 2); // solve subproblems mergesort (low, mid); mergesort (mid+1, high); // combine merge (low, mid, high); } }
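
The merge and mergesort procedures can be sketched in Python as below. The sketch assumes 0-based inclusive bounds and passes the array as an argument; a local list `b` plays the role of the auxiliary global array.

```python
def merge(a, low, mid, high):
    """Merge sorted runs a[low..mid] and a[mid+1..high] back into a[low..high]."""
    b = []                              # auxiliary storage, O(n)
    h, j = low, mid + 1
    while h <= mid and j <= high:
        if a[h] <= a[j]:                # <= keeps the sort stable
            b.append(a[h])
            h += 1
        else:
            b.append(a[j])
            j += 1
    b.extend(a[h:mid + 1])              # leftovers of the first run, if any
    b.extend(a[j:high + 1])             # leftovers of the second run, if any
    a[low:high + 1] = b


def merge_sort(a, low, high):
    """Sort a[low..high] in place."""
    if low < high:                      # more than one element
        mid = (low + high) // 2
        merge_sort(a, low, mid)
        merge_sort(a, mid + 1, high)
        merge(a, low, mid, high)


data = [38, 27, 43, 3, 9, 82, 10]
merge_sort(data, 0, len(data) - 1)
print(data)
```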

- 32. Unit II : Fundamental Computing Algorithms Sorting Algorithms: O(nlog n) sorting algorithms : Merge Sort Analysis : Mergesort works blindly while dividing, conquering and combining the solution. The order of elements in an instance is immaterial. Therefore there is no distinct best, worst or average case: the computation time has the same order in all cases. Merge sort is stable. The recurrence equation for mergesort is: T(n) = 2 T(n/2) + n given that T(1) = 0. Substituting $n = 2^i$ and $t_i = T(2^i)$ reduces it to $t_i = 2t_{i-1} + 2^i$, whose characteristic equation is $x^2 - 4x + 4 = (x-2)^2 = 0$. The general solution is $t_i = c_1 2^i + c_2\, i\, 2^i$, i.e. $T(n) = c_1 n + c_2\, n \log_2 n$. The conditions T(1) = 0 and T(2) = 2 give $c_1 = 0$ and $c_2 = 1$, so $T(n) = n \log_2 n = O(n \log n)$ … best, worst and average case

- 33. Unit II : Fundamental Computing Algorithms Sorting Algorithms: O(nlog n) sorting algorithms : Heap Sort Heap : A max (or min) heap is a complete binary tree with the property that the value at each node is at least as large as (or at most as large as) the values at its children (if they exist). This property is called the heap property. The heap data structure is used in the implementation of priority queues and in heap sort. It takes O(log n) time to insert an element in a heap or to delete the max (or min) element from a heap.

- 34. Unit II : Fundamental Computing Algorithms Sorting Algorithms: O(nlog n) sorting algorithms : Heap Sort Algorithm adjust (a, i, n) // The complete binary trees with roots 2i and 2i + 1 are combined // with node i to form a heap rooted at i. No node has an address // greater than n or less than 1. { j = 2i; item = a[i]; while (j <= n) do { if ((j < n) and (a[j] < a[j+1])) j = j + 1; // pick the larger child if (item >= a[j]) then break; a[floor(j/2)] = a[j]; j = 2j; } a[floor(j/2)] = item; }

- 35. Unit II : Fundamental Computing Algorithms Sorting Algorithms: O(nlog n) sorting algorithms : Heap Sort Analysis of algorithm adjust : Worst case run time of adjust is proportional to the height of binary tree. If there are n nodes in binary tree then worst case time is O(log n).

- 36. Unit II : Fundamental Computing Algorithms Sorting Algorithms: O(nlog n) sorting algorithms : Heap Sort Algorithm heapify(a, n) // readjust the elements in a[1:n] to form a heap { for i = floor(n/2) to 1 step -1 do adjust (a, i, n); } Analysis of algorithm heapify : In the worst case, let $2^{k-1} \le n < 2^k$, where $k = \lceil \log_2 (n+1) \rceil$ = number of levels in the complete binary tree. The number of iterations of the adjust algorithm is at most (k - i) for a node at level i, and there are at most $2^{i-1}$ nodes at level i. Total time for heapify in the worst case = $\sum_{i=1}^{k} 2^{i-1}(k-i) = O(n)$

- 37. Unit II : Fundamental Computing Algorithms Sorting Algorithms: O(nlog n) sorting algorithms : Heap Sort Algorithm heapsort (a, n) // array a[1:n] contains n elements, algorithm sorts them // into non-decreasing order. { heapify (a, n); // transforms the array into a heap: O(n) for i = n to 2 step -1 do // executed n-1 times: O(n) { // interchange the current maximum with the element at the end of the heap t = a[i]; a[i] = a[1]; a[1] = t; adjust (a, 1, i-1); // O(log n) } }
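
A Python sketch of adjust, heapify and heapsort. It assumes 0-based indexing, so the children of node i are 2i+1 and 2i+2 and the parent of j is (j-1)//2; heapify is folded into the first loop of `heap_sort`.

```python
def adjust(a, i, n):
    """Sift a[i] down within a[0:n], assuming both subtrees of i are max-heaps."""
    item = a[i]
    j = 2 * i + 1                           # left child of i
    while j < n:
        if j + 1 < n and a[j] < a[j + 1]:
            j += 1                          # pick the larger child
        if item >= a[j]:
            break
        a[(j - 1) // 2] = a[j]              # move the child up to its parent
        j = 2 * j + 1
    a[(j - 1) // 2] = item


def heap_sort(a):
    """Sort list a in place into non-decreasing order and return it."""
    n = len(a)
    for i in range(n // 2 - 1, -1, -1):     # heapify: O(n)
        adjust(a, i, n)
    for end in range(n - 1, 0, -1):         # n-1 iterations
        a[0], a[end] = a[end], a[0]         # move the current maximum to the end
        adjust(a, 0, end)                   # restore the heap on a[0:end]
    return a


print(heap_sort([4, 10, 3, 5, 1]))
```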

- 38. Unit II : Fundamental Computing Algorithms Sorting Algorithms: O(nlog n) sorting algorithms : Heap Sort Analysis of algorithm heapsort : Time Complexity : • Computation time for heapify is O(n) • Computation time for adjust is O(log n) • For loop is executed for (n – 1) times • Worst case computation time for heapsort is O(nlog n) Space Complexity : Storage space required is O(1)

- 39. Unit II : Fundamental Computing Algorithms Algorithms on Graphs : Graph traversing algorithms : DFS or DFT Depth First Search or Depth First Traversal : Given an undirected graph G = (V, E) and a vertex v in V(G). Traversing a graph means visiting all vertices in G that are reachable from v, i.e. all vertices connected to v. The edges selected while traversing a connected graph form a spanning tree. Algorithm DFS (v) // Given an undirected graph G = (V, E), with n vertices. Graph G and // visited[1:n] are global and array visited[1:n] is initialized to 0. Set T // accumulates the selected edges. { visited[v] = 1; for each vertex w adjacent to v do { if (visited[w] = 0) { T = T U {(v, w)}; // T is a spanning tree call DFS (w); } } }
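
A minimal Python sketch of DFS that collects the spanning-tree edges. It assumes the graph is given as a dictionary mapping each vertex to a list of adjacent vertices; the function and variable names are illustrative.

```python
def dfs_tree(graph, start):
    """Return the spanning-tree edges chosen by a depth-first traversal."""
    visited = {start}
    tree = []

    def visit(v):
        for w in graph[v]:
            if w not in visited:
                visited.add(w)
                tree.append((v, w))   # edge (v, w) joins the spanning tree
                visit(w)

    visit(start)
    return tree


graph = {1: [2, 3], 2: [1, 4], 3: [1, 4], 4: [2, 3]}
print(dfs_tree(graph, 1))
```

The recursion depth can reach n, which corresponds to the O(n) stack space counted in the analysis.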

- 40. Unit II : Fundamental Computing Algorithms Algorithms on Graphs : Graph traversing algorithms : DFS or DFT Depth First Search or Depth First Traversal : Analysis : Case 1: Graph G is represented as an adjacency list. Each list node is examined exactly once: 2e nodes for e edges. Each vertex is visited exactly once: n vertices. Time complexity = O(n + e) Space complexity = O(n) ... for array visited & stack Case 2: Graph G is represented as an adjacency matrix. The time required to determine all vertices adjacent to v is O(n), and at most n vertices are visited, so the total time is Time complexity = O(n^2) Space complexity = O(n) ... for array visited & stack

- 41. Unit II : Fundamental Computing Algorithms Algorithms on Graphs : Graph traversing algorithms : BFS or BFT Breadth First Search or Breadth First Traversal : Algorithm BFS (v) // Graph G and array visited[1:n] are global and array visited[1:n] is // initialized to zero { visited[v] = 1; add vertex v to queue Q while Q is not empty do { call deleteQ(Q, v); for each vertex w adjacent to v { if visited[w] = 0 { T = T U {(v, w)}; // T is spanning tree addQ ( Q, w); visited[w] = 1;} } } }
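
The BFS pseudocode maps onto Python's `collections.deque` as sketched below. The adjacency-dictionary representation and the function name are assumptions made for illustration.

```python
from collections import deque


def bfs_tree(graph, start):
    """Return the spanning-tree edges chosen by a breadth-first traversal."""
    visited = {start}
    queue = deque([start])
    tree = []
    while queue:
        v = queue.popleft()           # deleteQ
        for w in graph[v]:
            if w not in visited:
                visited.add(w)
                tree.append((v, w))   # edge (v, w) joins the spanning tree
                queue.append(w)       # addQ
    return tree


graph = {1: [2, 3], 2: [1, 4], 3: [1, 4], 4: [2, 3]}
print(bfs_tree(graph, 1))
```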

- 42. Unit II : Fundamental Computing Algorithms Algorithms on Graphs : Graph traversing algorithms : BFS or BFT Breadth First Search or Breadth First Traversal : Analysis : Case 1: Graph G is represented as an adjacency list. Each list node is examined exactly once: 2e nodes for e edges. Each vertex is visited exactly once: n vertices. Time complexity = O(n + e) Space complexity = O(n) ... for array visited & queue Case 2: Graph G is represented as an adjacency matrix. The time required to determine all vertices adjacent to v is O(n), and at most n vertices are visited, so the total time is Time complexity = O(n^2) Space complexity = O(n) ... for array visited & queue

- 43. Unit II : Fundamental Computing Algorithms Algorithms on Graphs : Minimum-cost Spanning Tree : • We have seen that by using the DFT or BFT algorithms we obtain a spanning tree of a given connected graph. • Spanning trees can be used to obtain an independent set of circuit equations for an electric network. Adding an edge from the set of back edges (edges not included in the spanning tree) forms a circuit or cycle. Kirchhoff’s second law can then be applied to form the independent circuit equation. • In practice we are interested in finding a spanning tree of a connected graph G with minimum cost. Since finding an MST involves the selection of a subset of the edges of G, it fits into the subset paradigm.

- 44. Unit II : Fundamental Computing Algorithms Algorithms on Graphs : Minimum-cost Spanning Tree : • Consider the problem of setting up a communication network links between the cities with a minimum cost. • If we represent the cities as vertices and the communication links between cities with the associated costs as weighted edges, then the problem gets resolved to finding a minimum spanning tree whose total weight is equal to the sum of the weights of all branches of a spanning tree (Minimum cost Spanning Tree – MST). • We can obtain MST by applying either Kruskal’s algorithm or Prim’s algorithm.

- 45. Unit I : Analysis of Algorithms Greedy strategy : Minimum-cost Spanning Tree Prim’s Algorithm : • A greedy method to obtain a minimum cost spanning tree builds it edge by edge. The next edge to include is chosen according to some optimization criterion. • The simplest such criterion is to choose an edge that results in a minimum increase in the sum of the costs of the edges included so far. • Prim’s algorithm starts with a given vertex and then selects the edge (u, v) such that u is among the vertices already included, v is among the remaining vertices, and the cost of edge (u, v) is minimum. If these constraints are satisfied the edge (u, v) is included in the MST. Vertex v is added to the set of already selected vertices.

- 46. Unit I : Analysis of Algorithms Greedy strategy : Minimum-cost Spanning Tree Prim’s Algorithm : Problem Example : Obtain MST for the following graph G using Prim’s Algorithm starting with vertex 1. [Figure: weighted undirected graph G on vertices 1 to 7]

- 47. Unit I : Analysis of Algorithms Greedy strategy : Minimum-cost Spanning Tree Prim’s Algorithm : Steps • Let G = (V, E) & T = (A, B) • A = B = ∅ • Let i = v // starting vertex • A = A ∪ { i } • while A ≠ V do • { find edge (u, v) ∈ E such that • u ∈ A & v ∈ (V - A) AND cost of (u, v) is minimum • A = A ∪ { v } • B = B ∪ {(u, v)} • Delete (u, v) from E • } • stop

- 48. Unit I : Analysis of Algorithms Greedy strategy : Minimum-cost Spanning Tree Prim’s Algorithm : Solution Example contd. : Minimum Spanning tree for above graph. Total weight of MST = 17 = cost of MST [Figure: the resulting minimum spanning tree on vertices 1 to 7]

- 49. Unit I : Analysis of Algorithms Greedy strategy : Minimum-cost Spanning Tree Prim’s Algorithm : Analysis • Maximal connected graph is the worst case for this algorithm. To select an edge (u, v) from undirected maximum connected graph, the number of comparisons would be = (d1) + (d1+d2 –2) + (d1+d2+d3 – 4) + … where di = degree of the vertex = n- 1 = (n-1)d1 + (n-2)d2 + (n-3)d3 + … + dn – {2 + 4 + 6 + … + 2(n-1)} = (n-1)[(n-1) + (n-2) + …. + 1] – 2(1 + 2 + 3 + … + n-1) = [(n-1)(n-1)(n)/2] – [2(n-1)(n)/2] = n(n-1)(n-3)/2 = O( n3)

- 50. Unit II : Fundamental Computing Algorithms Algorithms on Graphs : Minimum-cost Spanning Tree : Prim’s Algorithm Algorithm Prim (E, cost, n, t) // E is the set of edges in G. cost[1:n, 1:n] is the cost adjacency matrix: cost[i, j] is either a positive real number or ∞ // if no edge (i, j) exists. An MST is computed and stored as a set of edges in the array // t[1:n-1, 1:2]. ( t[i,1], t[i,2] ) is an edge in the MST. The final cost is returned. { Let (k, l) be an edge of minimum cost in E; // O(|E|) mincost = cost[k, l]; t[1, 1] = k; t[1, 2] = l; for i = 1 to n do // initialize near (nearest tree vertex): O(n) { if cost[i, l] < cost[i, k] near[i] = l; else near[i] = k; } near[k] = near[l] = 0; // k and l are already in the tree for i = 2 to n-1 do // find n-2 additional edges for MST t: O(n) iterations { let j be an index such that near[j] != 0 and cost[j, near[j]] is minimum; // O(n) t[i, 1] = j; t[i, 2] = near[j];

- 51. Unit II : Fundamental Computing Algorithms Algorithms on Graphs : Minimum-cost Spanning Tree : Prim’s Algorithm Algorithm Prim (E, cost, n, t) … contd. mincost = mincost + cost[j, near[j]]; near[j] = 0; for k = 1 to n do // update near (nearest tree vertex): O(n) { if ((near[k] != 0) and (cost[k, near[k]] > cost[k, j])) near[k] = j; } } return mincost; } Analysis : Prim’s algorithm runs in O(n^2) time and has space complexity O(n) for the arrays near and t, each of size O(n).
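
An O(n^2) Python sketch of Prim's algorithm on a cost adjacency matrix. Unlike the pseudocode, which seeds the tree with the cheapest edge, this sketch starts from a chosen vertex as in the "Steps" slide; vertices are 0-based, `math.inf` marks a missing edge, and the small test graph is made up for illustration.

```python
import math


def prim(cost, start=0):
    """Return (total cost, tree edges) of an MST of the graph in matrix cost."""
    n = len(cost)
    in_tree = [False] * n
    near = [start] * n               # nearest tree vertex for each outside vertex
    dist = list(cost[start])         # cost of the edge to that nearest vertex
    in_tree[start] = True
    edges, total = [], 0
    for _ in range(n - 1):
        # pick the outside vertex with the cheapest edge into the tree: O(n)
        j = min((k for k in range(n) if not in_tree[k]), key=lambda k: dist[k])
        if dist[j] == math.inf:
            raise ValueError("graph is not connected")
        in_tree[j] = True
        edges.append((near[j], j))
        total += dist[j]
        for k in range(n):           # update near: O(n)
            if not in_tree[k] and cost[k][j] < dist[k]:
                dist[k] = cost[k][j]
                near[k] = j
    return total, edges


INF = math.inf
cost = [
    [INF, 1, 4, INF],
    [1, INF, 2, 5],
    [4, 2, INF, 3],
    [INF, 5, 3, INF],
]
print(prim(cost))
```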

- 52. Unit II : Fundamental Computing Algorithms Algorithms on Graphs : Minimum-cost Spanning Tree : Kruskal’s Algorithm 1. List all the edges of a graph ‘G’ in non-decreasing order of weights of edges. 2. Select the edge having minimum weight from the list and add it to the spanning tree (which is initially empty), only if the inclusion of the edge does not make a circuit otherwise selected edge is rejected. 3. Repeat step 2 for the remaining edges in the sorted list until (|V|-1) edges are included in tree or the sorted list of edges is empty. 4. If the tree contains less than |V|-1 edges and the sorted list of edges is empty then display “No MST possible” otherwise print MST Example

- 53. Unit II : Fundamental Computing Algorithms Algorithms on Graphs : Minimum-cost Spanning Tree : Kruskal’s Algorithm Algorithm Kruskal (E, cost, n, t) // E is the set of edges in G. G has n vertices. cost[u, v] is the cost of // edge (u, v). t is the set of edges in the MST. The final cost is returned. { Construct a heap out of the edge costs using Heapify; // O(|E|) for i = 1 to n do // O(n) parent[i] = -1; i = 0; mincost = 0; while ((i < n-1) and (heap not empty)) do // O(|E|) iterations { delete a minimum cost edge (u, v) from the heap and reheapify using adjust; // O(log |E|) j = Find(u); k = Find(v); // O(log |E|)

- 54. Unit II : Fundamental Computing Algorithms Algorithms on Graphs : Minimum-cost Spanning Tree : Kruskal’s Algorithm Algorithm Kruskal (E, cost, n, t) … contd. if (j != k) // u and v lie in different trees, so (u, v) creates no cycle { i = i + 1; t[i, 1] = u; t[i, 2] = v; mincost = mincost + cost[u, v]; Union (j, k); } } if (i != n-1) write (“No spanning tree”); else return mincost; } Analysis : Computing time = O(|E| log |E|) Space complexity is O(n) to store array parent, in addition to the heap of edges.
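
Kruskal's algorithm can be sketched in Python with the standard `heapq` module as the min-heap and a small union-find. The edge-list format `(u, v, w)`, the 0-based vertices, the path-halving `find` and the `None` return for a disconnected graph are all assumptions of this sketch.

```python
import heapq


def kruskal(n, edges):
    """Return (total cost, tree edges) of an MST, or None if none exists.

    edges is a list of (u, v, w) tuples over vertices 0..n-1.
    """
    heap = [(w, u, v) for u, v, w in edges]
    heapq.heapify(heap)                      # O(|E|)
    parent = list(range(n))                  # each vertex starts as its own set

    def find(x):
        while parent[x] != x:
            parent[x] = parent[parent[x]]    # path halving
            x = parent[x]
        return x

    tree, total = [], 0
    while heap and len(tree) < n - 1:
        w, u, v = heapq.heappop(heap)        # cheapest remaining edge
        ru, rv = find(u), find(v)
        if ru != rv:                         # no cycle: accept the edge
            parent[ru] = rv                  # union
            tree.append((u, v))
            total += w
    if len(tree) != n - 1:
        return None                          # no spanning tree
    return total, tree


edges = [(0, 1, 1), (0, 2, 4), (1, 2, 2), (1, 3, 5), (2, 3, 3)]
print(kruskal(4, edges))
```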

- 55. Unit II : Fundamental Computing Algorithms Algorithms on Graphs : Shortest Paths : Let G = (V, E, w) be a weighted graph, where w is a function from E to the set of positive real numbers. Consider V as a set of cities and E as a set of highways connecting these cities. The weight of an edge (i, j) is w(i, j), usually referred to as the length of the edge (i, j), which has the obvious interpretation as the distance between adjacent cities i and j. The length of a path in G is defined to be the sum of the lengths of the edges in the path. The problem is to determine a shortest path from one vertex to another vertex in V. A solution to this problem was discovered by E. W. Dijkstra and is known as Dijkstra’s algorithm.

- 56. Unit I : Analysis of Algorithms Greedy strategy : Single Source Shortest Paths Dijkstra’s Algorithm : Example Find the shortest paths from vertex 1 to all other vertices in the following directed graph using Dijkstra’s algorithm. [Figure: directed graph on vertices 1 to 5 with edge weights 10, 50, 100, 30, 10, 20, 5, 50]

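- 57. Unit I : Analysis of Algorithms Greedy strategy : Single Source Shortest Paths Dijkstra’s Algorithm : Example Trace from vertex 1 (P = vertices already selected, T = vertices remaining; the distances D and the previous vertices are listed for vertices 2, 3, 4, 5 in that order):

  | Step | P | T | Distance D to {2, 3, 4, 5} | Previous vertex for {2, 3, 4, 5} |
  |---|---|---|---|---|
  | Initialize | {1} | {2, 3, 4, 5} | {50, 30, 100, 10} | {1, 1, 1, 1} |
  | 1 | {1, 5} | {2, 3, 4} | {50, 30, 20, 10} | {1, 1, 5, 1} |
  | 2 | {1, 4, 5} | {2, 3} | {40, 30, 20, 10} | {4, 1, 5, 1} |
  | 3 | {1, 3, 4, 5} | {2} | {35, 30, 20, 10} | {3, 1, 5, 1} |

  Shortest paths, obtained by tracing the previous vertices back from each destination: 1 to 2 : back-trace 2-3-1, path 1-3-2. 1 to 3 : back-trace 3-1, path 1-3. 1 to 4 : back-trace 4-5-1, path 1-5-4. 1 to 5 : back-trace 5-1, path 1-5.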

- 58. Unit II : Fundamental Computing Algorithms Algorithms on Graphs : Single Source Shortest Paths : Dijkstra’s Algorithm The procedure (algorithm) for computing the shortest path from vertex a to any other vertex in a graph G. 1. Initially let P = {a} and T = V - {a}, and for every vertex t in T let D(t) = w(a, t). Record vertex a as the previous vertex for all vertices in T adjacent to vertex a. 2. Select the vertex x in T that has the smallest index D(x), i.e. the shortest known distance from a going only through vertices in P. 3. If x is the vertex we want to reach from a then STOP; otherwise let P = P U {x} and T = T - {x}, and for every vertex t in T compute its index D(t) = min [D(t), D(x) + w(x, t)]. 4. Record vertex x as the previous vertex for every vertex t in T whose index D(t) decreased in step 3. 5. Repeat steps 2 to 4 until T becomes empty.

- 59. Unit II : Fundamental Computing Algorithms Algorithms on Graphs : Single Source Shortest Paths : Dijkstra’s Algorithm Algorithm ShortestPaths (v, cost, dist, n) // dist [ j], 1<=j<=n, is set to the lengths of the shortest path from vertex v to // vertex j in a digraph G with n vertices. dist [v] is set to zero. G is represented // by its cost adjacency matrix cost [1:n, 1:n]. { for i = 1 to n do // O(n) { S[i] = 0; dist[i] = cost [v, i]; } S[v] = 1; dist [v] = 0 // include v in S for num = 2 to n do // determine n-1 paths from v // O(n) { choose u from among those vertices not in S s.t. dist[u] is minimum; S[u] = 1; // include u in S // O(n) for (each w adjacent to u with S(w) = 0) do // update distances if (dist [w] > (dist [u] + cost [u, w])) // O(n) dist [w] = dist [u] + cost [u, w]; } }
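
A Python sketch of ShortestPaths on a cost adjacency matrix, extended with a `prev` list so paths can be traced back (an addition not present in the pseudocode). The test graph is inferred from the distance table of the worked example, with vertices renumbered 0 to 4; the eighth edge of the figure (weight 50) is left out because its endpoints cannot be recovered from the text.

```python
import math


def shortest_paths(cost, v):
    """Return (dist, prev) for single-source shortest paths from vertex v."""
    n = len(cost)
    in_s = [False] * n
    dist = list(cost[v])
    prev = [v] * n
    in_s[v] = True
    dist[v] = 0
    for _ in range(n - 1):               # determine n-1 paths from v
        # choose the closest vertex not yet in S: O(n)
        u = min((k for k in range(n) if not in_s[k]), key=lambda k: dist[k])
        in_s[u] = True
        for w in range(n):               # update distances through u: O(n)
            if not in_s[w] and dist[u] + cost[u][w] < dist[w]:
                dist[w] = dist[u] + cost[u][w]
                prev[w] = u
    return dist, prev


INF = math.inf
# Assumed edges (0-based): 0->1:50, 0->2:30, 0->3:100, 0->4:10, 4->3:10, 3->1:20, 2->1:5
cost = [
    [INF, 50, 30, 100, 10],
    [INF, INF, INF, INF, INF],
    [INF, 5, INF, INF, INF],
    [INF, 20, INF, INF, INF],
    [INF, INF, INF, 10, INF],
]
dist, prev = shortest_paths(cost, 0)
print(dist, prev)
```

With these assumed edges, the distances to vertices 2, 3, 4, 5 of the slides come out as 35, 30, 20, 10, matching the last row of the table.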

- 60. Unit II : Fundamental Computing Algorithms Algorithms on Graphs : Single Source Shortest Paths : Dijkstra’s Algorithm Analysis of ShortestPaths (v, cost, dist, n) Time complexity = O(n^2) Space complexity = O(n) All Pairs Shortest Paths : For this, Dijkstra’s Algorithm may be used n times, once with each vertex as the source. Analysis of AllpairsPaths (v, cost, dist, n) Time complexity = n x O(n^2) = O(n^3) Space complexity = O(n) working space per run, plus O(n^2) if the distances for all pairs are stored