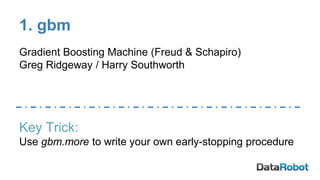

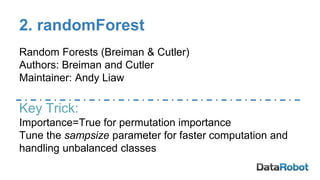

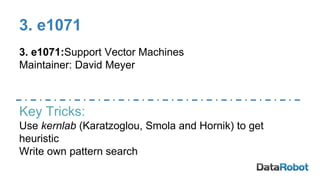

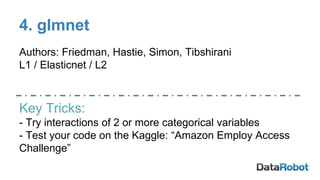

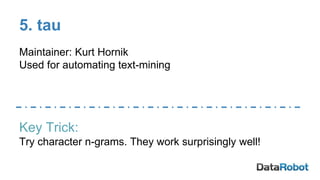

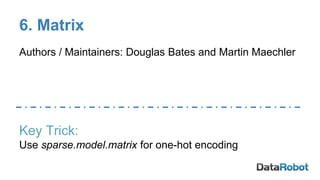

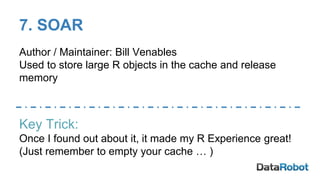

This document discusses 10 R packages that are useful for winning Kaggle competitions, either by helping to capture complexity in the data or by making code more efficient. The packages covered are gbm and randomForest for gradient boosting and random forests, e1071 for support vector machines, glmnet for regularization, tau for text mining, Matrix and SOAR for efficient coding, foreach and doMC for parallel processing, and data.table for fast data manipulation. The document provides tips for using each package and emphasizes letting machine learning algorithms find complexity in the data while using intuition to guide the models.