This document describes a cloud computing framework that uses image recognition and remote assistance to help blind individuals identify products while grocery shopping. A blind person takes a photo of a product with their smartphone, which is sent to a cloud server for image matching. The top 5 matches are then sent to a sighted assistant for verification. If a match is incorrect, the assistant provides the right name either by voice or text. The system was tested in the lab and a supermarket, showing it can help blind people identify products independently with help from remote sighted assistants.

![Eyesight Sharing in Blind Grocery Shopping: Remote

P2P Caregiving through Cloud Computing

Vladimir Kulyukin, Tanwir Zaman, Abhishek Andhavarapu,

and Aliasgar Kutiyanawala

Department of Computer Science

Utah State University

Logan, UT, USA

{vladimir.kulyukin}@usu.edu

Abstract. Product recognition continues to be a major access barrier for visual-

ly impaired (VI) and blind individuals in modern supermarkets. R&D ap-

proaches to this problem in the assistive technology (AT) literature vary from

automated vision-based solutions to crowdsourcing applications where VI cli-

ents send image identification requests to web services. The former struggle

with run-time failures and scalability while the latter must cope with concerns

about trust, privacy, and quality of service. In this paper, we investigate a mo-

bile cloud computing framework for remote caregiving that may help VI and

blind clients with product recognition in supermarkets. This framework empha-

sizes remote teleassistance and assumes that clients work with dedicated care-

givers (helpers). Clients tap on their smartphones’ touchscreens to send images

of products they examine to the cloud where the SURF algorithm matches each

incoming image against its image database. Images along with the names of the

top 5 matches are sent to remote sighted helpers via push notification services.

A helper confirms the product’s name, if it is in the top 5 matches, or speaks or

types the product’s name, if it is not. Basic quality of service is ensured through

human eyesight sharing even when image matching does not work well. We

implemented this framework in a module called EyeShare on two Android

2.3.3/2.3.6 smartphones. EyeShare was tested in three experiments with one

blindfolded subject: one lab study and two experiments in Fresh Market, a su-

permarket in Logan, Utah. The results of our experiments show that the pro-

posed framework may be used as a product identification solution in supermar-

kets.

1 Introduction

The term teleassistance covers a wide range of technologies that enable VI and blind

individuals to transmit video and audio data to remote caregivers and receive audio

assistance [1]. Research evidence suggests that the availability of remote caregiving

reduces the psychological stress on VI and blind individuals when they perform vari-

ous tasks in different environments [2].](https://image.slidesharecdn.com/eyesharecamerareadyicchp2012-120424180312-phpapp02/75/Eyesight-Sharing-in-Blind-Grocery-Shopping-Remote-P2P-Caregiving-through-Cloud-Computing-1-2048.jpg)

![A typical example of how teleassistance is used for blind navigation is the system

developed by Bujacz et al. [1]. The system consists of two notebook computers: one

is carried by the VI traveler in a backpack and the other used by the remote sighted

caregiver. The traveler transmits video through a chest-mounted USB camera. The

traveler wears a headset (an earphone and a microphone) to communicate with the

caregiver. Several indoor navigation experiments showed that VI travelers walked

faster, at a steadier pace, and were able to navigate more easily when assisted by

remote guides than when they navigated the same routes by themselves.

Our research group has applied teleassistance to blind shopping in ShopMobile, a

mobile shopping system for VI and blind individuals [3]. Our end objective is to ena-

ble VI and blind individuals to shop independently using only their smartphones.

ShopMobile is our most recent system for accessible blind shopping that follows

RoboCart and ShopTalk [4]. The system has three software modules: an eyes-free

barcode scanner, an OCR engine, and a teleassistance module called TeleShop. The

eyes-free barcode scanner allows VI shoppers to scan UPC barcodes on products and MSI

barcodes on shelves. The OCR engine is being developed to extract nutrition facts

from nutrition tables available on many product packages. TeleShop provides a

teleassistance backup in situations when the barcode scanner or the OCR engine

malfunctions.

The current implementation of TeleShop consists of a server running on the VI

shopper's smartphone (Google Nexus One with Android 2.3.3/2.3.6) and a client GUI

module running on the remote caregiver's computer. All client-server communication

occurs over UDP. Images from the phone camera are continuously transmitted to the

client GUI. The caregiver can start, stop, and pause the incoming image stream and

change image resolution and quality. Images of high resolution and quality provide

more reliable detail but may cause the video stream to become choppy. Lower resolu-

tion images result in smoother video streams but provide less detail. The pause option

is for holding the current image on the screen.

TeleShop has so far been evaluated in two laboratory studies with Wi-Fi and 3G

[3]. The first study was done with two sighted students, Alice and Bob. The second

study was done with a married couple: a completely blind person (Carl) and his sight-

ed wife (Diana). For both studies, we assembled four plastic shelves in our laboratory

and stocked them with empty boxes, cans, and bottles to simulate an aisle in a grocery

store. The shopper and the caregiver were in separate rooms. In the first study, we

blindfolded Bob to act as a VI shopper. The studies were done on two separate days.

The caregivers were given a list of nine products and were asked to help the shoppers

find the products and read the nutrition facts on the products' packages or bottles. A

voice connection was established between the shopper and the caregiver via a regular

phone call. Alice and Bob took an average of 57.22 and 86.5 seconds to retrieve a

product from the shelf and to read its nutrition facts, respectively. The corresponding

times for Carl and Diana were 19.33 and 74.8 seconds, respectively [3].

In this paper, we present an extension of TeleShop, called EyeShare, that leverages

cloud computing to assist VI and blind shoppers (clients) with product recognition in

supermarkets. The client takes a still image of the product that he or she currently

examines and sends it to the cloud. The image is processed by an open source object](https://image.slidesharecdn.com/eyesharecamerareadyicchp2012-120424180312-phpapp02/75/Eyesight-Sharing-in-Blind-Grocery-Shopping-Remote-P2P-Caregiving-through-Cloud-Computing-2-2048.jpg)

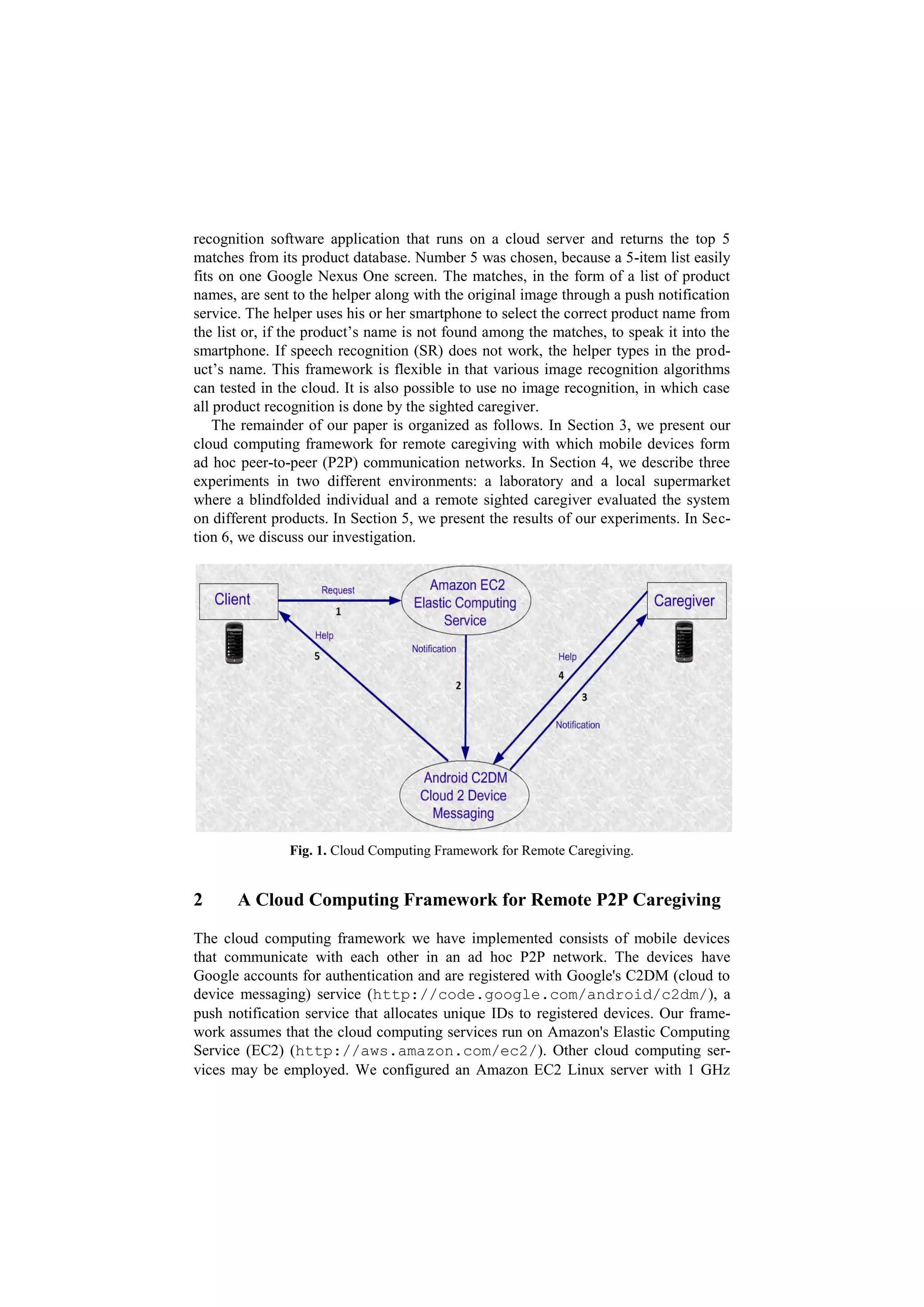

![processor and 512 MB RAM. The server runs an OpenCV 2.3.3

(http://opencv.willowgarage.com/wiki/) image matching application.

Product images are saved in a MySQL database. The use of this framework requires

that clients and helpers download the client and caregiver applications on their

smartphones. The clients and helpers subsequently find each other and form an ad hoc

P2P network via C2DM registration IDs.

Figure 1 shows this framework in action. A client sends a help request (Step 1). In

EyeShare, this request consists of a product image. However, in principle, this request

can be anything transmittable over available wireless channels such as Wi-Fi, 3G, 4G,

Bluetooth, etc. The image is received by the Amazon EC2 Linux server where it is

matched against the images in the MySQL database.

Our image matching application uses the SURF algorithm [5]. The matching op-

eration returns the top 5 matches and sends the names of the corresponding products

along with the URL that contains the client’s original image to the C2DM service

(Step 2). Thus, the image is transmitted only once – in the help request. C2DM for-

wards the message to the caregiver's smartphone (Step 3). The helper confirms the

product’s name by selecting it from the list of the top 5 matches. If the top matches

are incorrect, the helper uses speech recognition (SR) to speak the product’s name or, if SR does not work

or is not available, types it in on the touchscreen. If the helper cannot determine the

product’s name from the image, the helper sends a resend request to the client. The

helper’s message goes back to the C2DM service (Step 4) and then on to the client's

smartphone (Step 5). The helper application is designed in such a way that the helper

does not have to interrupt his or her smartphone activities for too long to render assistance.
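The helper-side choices described above (confirm a listed match, speak the name, type the name, or ask for a new image) can be sketched as a small decision function. This is only an illustration under stated assumptions: the names `HelperReply`, `build_helper_reply`, `recognize_speech`, and `read_typed_name` are hypothetical and are not part of EyeShare, and the limit of three SR attempts is borrowed from the laboratory protocol rather than from the framework itself.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Sequence

# Assumption: three SR attempts, as in the laboratory study protocol.
MAX_SR_ATTEMPTS = 3


@dataclass
class HelperReply:
    """Hypothetical reply sent from the helper back to the client."""
    kind: str                      # "confirmed", "spoken", "typed", or "resend"
    product_name: Optional[str] = None


def build_helper_reply(
    top_matches: Sequence[str],
    identified_name: Optional[str],
    recognize_speech: Callable[[], Optional[str]],
    read_typed_name: Callable[[], str],
) -> HelperReply:
    """Choose the helper's reply for one help request.

    identified_name is what the helper reads off the image, or None when
    the image is not clear enough to name the product.
    """
    if identified_name is None:
        # The helper cannot tell what the product is: ask for another image.
        return HelperReply("resend")
    if identified_name in top_matches:
        # The automated matcher was right: the helper only confirms.
        return HelperReply("confirmed", identified_name)
    # The matcher was wrong: try speech recognition first.
    for _ in range(MAX_SR_ATTEMPTS):
        heard = recognize_speech()
        if heard:
            return HelperReply("spoken", heard)
    # SR failed or is unavailable: fall back to the touchscreen keyboard.
    return HelperReply("typed", read_typed_name())
```

In this sketch the two input channels are passed in as callables, so the same logic can be exercised with stubs instead of a real speech recognizer or keyboard.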

2.1 Android Cloud to Device Messaging (C2DM) Framework

C2DM (http://code.google.com/android/c2dm/) takes care of message

queuing and delivery. Push notifications ensure that the application does not need to

keep polling the cloud server for new incoming requests. C2DM wakes up the An-

droid application when messages are received through intent broadcasts. However,

the application must be set up with the proper C2DM broadcast receiver permissions.

In EyeShare, C2DM is used in two separate activities. First, C2DM forwards the mes-

sage from the server to the helper application. This message consists of a formatted

string of the client registration ID, the names of the top 5 product matches, and the

URL containing the client’s image. Clients’ images are temporarily saved on the

cloud-based Linux server and removed as soon as the corresponding help requests are

processed. Second, C2DM is used when helper messages are sent back to clients.
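The exact layout of the formatted string is not specified here. As a rough sketch, assuming a JSON encoding with hypothetical field names (`client`, `matches`, `image_url`), the message that the server hands to the push service and the helper application later parses might look like this:

```python
import json
from typing import List, Tuple

MAX_MATCHES = 5  # the server forwards at most the top 5 product names


def encode_help_message(client_reg_id: str, top_matches: List[str], image_url: str) -> str:
    """Pack a help request into one compact string (assumed JSON layout)."""
    if len(top_matches) > MAX_MATCHES:
        raise ValueError("at most 5 product names are forwarded")
    payload = {
        "client": client_reg_id,      # registration ID used to reply to the client
        "matches": list(top_matches),  # candidate product names, best first
        "image_url": image_url,        # where the helper fetches the client's image
    }
    # Compact separators keep the push payload small.
    return json.dumps(payload, separators=(",", ":"))


def decode_help_message(message: str) -> Tuple[str, List[str], str]:
    """Unpack a help request on the helper's side."""
    data = json.loads(message)
    return data["client"], data["matches"], data["image_url"]
```

Sending the image URL rather than the image itself keeps the push message short, which is consistent with the image being transmitted only once, in the original help request.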

2.2 Image Matching

We have used SURF (Speeded Up Robust Features) [5] as a black box image match-

ing algorithm in our cloud server. SURF extracts unique key points and descriptors

from images and later uses them to match indexed images against the incoming image.

SURF uses an intermediate image representation called Integral Image that is com-

puted from the input image. This intermediate representation speeds up the calcula-](https://image.slidesharecdn.com/eyesharecamerareadyicchp2012-120424180312-phpapp02/75/Eyesight-Sharing-in-Blind-Grocery-Shopping-Remote-P2P-Caregiving-through-Cloud-Computing-4-2048.jpg)

![tions in rectangular areas. Each entry at coordinates (x, y) is formed by summing up the

pixel values in the rectangle between the origin and (x, y). This makes the computation time

of a rectangular sum invariant to the rectangle's size and is useful in matching large images. The SURF detector is

based on the determinant of the Hessian matrix. The SURF descriptor describes how

pixel intensities are distributed within a scale dependent neighborhood of each interest

point detected by Fast Hessian. Object detection using SURF is scale and rotation

invariant and does not require long training. The fact that SURF is rotation invariant

makes the algorithm useful in situations where image matching works with object

images taken at different orientations than the images of the same objects used in

training.
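The integral image idea can be illustrated independently of SURF. The following is a minimal pure-Python sketch, not the OpenCV implementation used on our server; the function names `integral_image` and `box_sum` are hypothetical. It shows why the sum over any rectangular area costs four table lookups regardless of the rectangle's size.

```python
from typing import List


def integral_image(img: List[List[int]]) -> List[List[int]]:
    """Return a (h+1) x (w+1) table where ii[y][x] is the sum of all
    pixels in rows 0..y-1 and columns 0..x-1 of img."""
    h = len(img)
    w = len(img[0]) if h else 0
    ii = [[0] * (w + 1) for _ in range(h + 1)]
    for y in range(h):
        row_sum = 0  # running sum of the current row
        for x in range(w):
            row_sum += img[y][x]
            # Sum above (previous row of the table) plus the current row so far.
            ii[y + 1][x + 1] = ii[y][x + 1] + row_sum
    return ii


def box_sum(ii: List[List[int]], top: int, left: int, bottom: int, right: int) -> int:
    """Sum of pixels in rows top..bottom-1 and columns left..right-1,
    computed with four lookups whatever the box size."""
    return ii[bottom][right] - ii[top][right] - ii[bottom][left] + ii[top][left]


if __name__ == "__main__":
    image = [[1, 2, 3],
             [4, 5, 6],
             [7, 8, 9]]
    table = integral_image(image)
    print(box_sum(table, 1, 1, 3, 3))  # lower-right 2x2 block: 5 + 6 + 8 + 9
```

The extra row and column of zeros avoid special cases for boxes that touch the top or left image border.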

3 Experiments

We evaluated EyeShare in product recognition experiments at two locations. The first

study was conducted in our laboratory. The second and third studies were conducted

at Fresh Market, a local supermarket in Logan, Utah.

3.1 A Laboratory Study

We assembled four shelves in our laboratory and placed on them 20 products: bottles,

boxes, and cans. The same setup was successfully used in our previous experiments

on accessible blind shopping [3, 4]. We created a database of 100 images. Each of the

20 products on the shelves had 5 images taken at different orientations. The SURF

algorithm was trained on these 100 images. A blindfolded individual was given a

Google Nexus One smartphone (Android 2.3.3) with the EyeShare client application

installed on it. A sighted helper was given another Google Nexus One (Android 2.3.3)

with the EyeShare helper app installed on it.

The blindfolded client was asked to take each product from the assembled shelves

and recognize it. The client took a picture of the product by tapping the touchscreen.

The image was sent to the cloud Linux server where it was processed by the SURF

algorithm. The names of the top 5 matched products were sent to the helper for verifi-

cation along with the URL with the original image through C2DM. The helper, locat-

ed in a different room in the same building, selected the product’s name from the list

of the top matches and sent the product’s name back to the client. If the product’s

name was not in the list, the helper spoke the name of the product or, if SR did not

recognize it after three attempts, typed in the product’s name on the virtual

touchscreen keyboard. The run for an individual product was considered completed

when the product’s name was spoken on the client’s smartphone through text-to-speech (TTS). Thus,

the total run time (in seconds) for each run included all five steps given in Fig. 1.

3.2 Store Experiments

The next two experiments were executed in Fresh Market, a local supermarket in

Logan, Utah. Prior to the experiments we added 270 images to our image database](https://image.slidesharecdn.com/eyesharecamerareadyicchp2012-120424180312-phpapp02/75/Eyesight-Sharing-in-Blind-Grocery-Shopping-Remote-P2P-Caregiving-through-Cloud-Computing-5-2048.jpg)

![study 1, there were three cases when the helper requested the client to send another

image of a product because he could not identify the product’s name from the original

image. In supermarket study 1, there was one brief (several seconds) loss of Wi-Fi

connection on the helper’s smartphone.

Table 1. Experimental results.

| Environment | # Products | Mean Time (s) | STD | Top 5 | Mean SR | SR Failures |
|---|---|---|---|---|---|---|
| Lab | 16 | 40 | .00021 | 8 | 1.1 | 0 |
| Store 1 | 16 | 60 | .00033 | 0 | 1.2 | 2 |
| Store 2 | 17 | 60 | .00081 | 0 | 1.1 | 3 |

5 Discussion

Our study contributes to the recent body of research that addresses various aspects

of independent blind shopping through mobile and cloud computing (e.g., [6, 7, 8]).

Our approach differs from these studies in its emphasis on dedicated remote caregiv-

ing. Our approach addresses, at least to some extent, both image recognition failures

of fully automated solutions and the concerns about trust, privacy, and basic quality of

service of pure crowdsourcing approaches. Dedicated caregivers alleviate image

recognition failures through human eyesight sharing. Since dedicated caregiving is

more personal and trustworthy, clients are not required to post image recognition

requests on open web forums, which allows them to preserve more privacy. Interested

readers may watch our research videos at www.youtube.com/csatlusu for

more information on our accessible shopping experiments and projects.

The experiments show that the average product recognition time is within one minute.

The results demonstrate that SR is a viable option for product naming. We attribute

the poor performance of SURF in the first supermarket study to our failure to properly

parameterize the algorithm. As we gain more experience with SURF, we may be able

to improve the performance of automated image matching. However, database

maintenance may be a more serious long-term concern for automated image matching

unless there is direct access to the supermarket’s inventory control system.

Our findings should be interpreted with caution, because we used only one blind-

folded subject in the experiments. Nonetheless, our findings may serve as a basis for

future research on remote teleassisted caregiving in accessible blind shopping. Our

experience with the framework suggests that teleassistance may be a feasible option

for VI individuals in modern supermarkets. Dedicated remote caregiving can be ap-

plied not only to product recognition but also to assistance with cash payments and

supermarket navigation. It is a relatively inexpensive solution, because the only re-

quired hardware device is a smartphone with a data plan.](https://image.slidesharecdn.com/eyesharecamerareadyicchp2012-120424180312-phpapp02/75/Eyesight-Sharing-in-Blind-Grocery-Shopping-Remote-P2P-Caregiving-through-Cloud-Computing-7-2048.jpg)