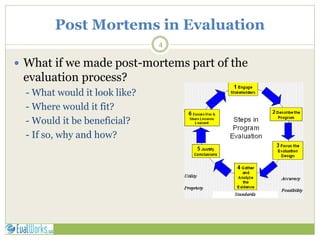

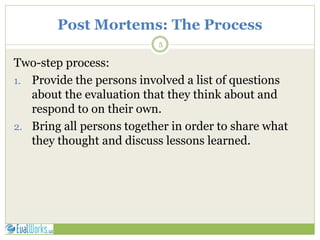

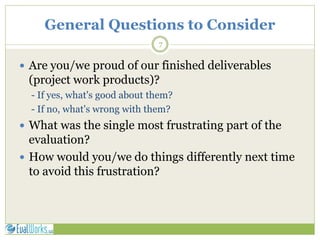

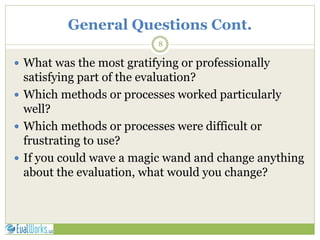

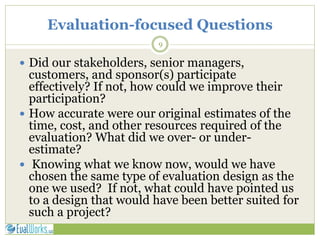

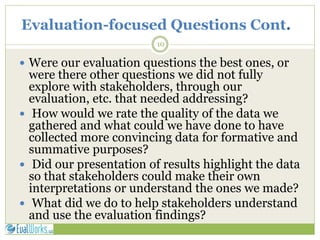

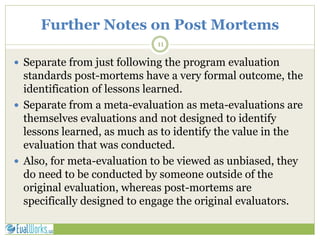

This document discusses evaluation post-mortems: formal analyses of what succeeded and what failed in an evaluation project. A post-mortem brings all stakeholders together to discuss the lessons learned from the evaluation process. The discussion covers general questions about what went well and what could be improved. It also covers evaluation-specific questions about the design, the methods, stakeholder participation, and how the findings were used. Conducting post-mortems builds a shared knowledge base that can be drawn on to improve future evaluations.