The document outlines the setup of etcd clusters on AWS using Terraform and CoreOS, focusing on key aspects such as configuration, bootstrapping nodes, and ensuring stable hostnames for service discovery. It highlights the manual nature of etcd operations and the use of Route53 for DNS records. Best practices and challenges in deploying distributed applications like etcd are also discussed, along with resources for further exploration.

![TERRAFORM + CoreOS

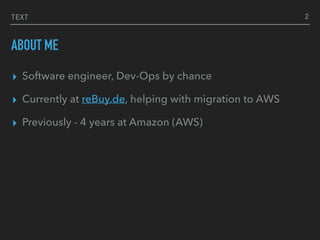

resource "aws_route53_record" "etcd_srv_discover" {

name = "_etcd-server._tcp"

type = "SRV"

records = ["${formatlist("0 0 2380 %s", aws_route53_record.etc_a_nodes.*.fqdn)}"]

ttl = “300"

zone_id = "${aws_route53_zone.etcd_zone.id}"

}

resource "aws_route53_record" "etc_a_nodes" {

count = "${var.node_count}"

type = "A" name = "node-${count.index}"

records = ["${aws_instance.etcd_node.*.private_ip[count.index]}"]

ttl = 300

zone_id = "${aws_route53_zone.etcd_zone.id}"

}

STABLE HOST NAMES

8

$ dig _etcd-server._tcp.cluster.etcd SRV

_etcd-server._tcp.cluster.etcd. 183 IN SRV 0 0 2380 node-0.cluster.etcd.

_etcd-server._tcp.cluster.etcd. 183 IN SRV 0 0 2380 node-1.cluster.etcd.

_etcd-server._tcp.cluster.etcd. 183 IN SRV 0 0 2380 node-2.cluster.etcd.](https://image.slidesharecdn.com/etcd-terraform-161115090737/85/Etcd-terraform-by-Alex-Somesan-8-320.jpg)

![TERRAFORM + CoreOS

LAUNCH NODES

11

resource "aws_instance" "etcd_node" {

count = "${var.node_count}"

ami = "${data.aws_ami.coreos_ami.id}"

instance_type = "t2.medium"

subnet_id = "${aws_subnet.az_subnet.*.id[count.index]}"

key_name = "${aws_key_pair.ssh-key.id}"

user_data = "${data.template_file.userdata.*.rendered[count.index]}"

}

$ terraform apply

core@node-1 ~ $ etcdctl cluster-health

member 5bea3befcd2b527d is healthy: got healthy result from http://node-2.cluster.etcd:2379

member bfc4d7d3459cc4cb is healthy: got healthy result from http://node-1.cluster.etcd:2379

member d1b3f464b49063ac is healthy: got healthy result from http://node-0.cluster.etcd:2379

cluster is healthy](https://image.slidesharecdn.com/etcd-terraform-161115090737/85/Etcd-terraform-by-Alex-Somesan-11-320.jpg)