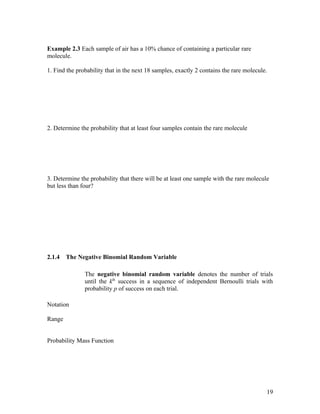

This document provides an overview of engineering statistics and probability concepts taught in an EN505 Engineering Statistics course. It defines key terms like random variables, sample spaces, events, probability, and distributions. Random variables can be either discrete or continuous, depending on whether they take countable or uncountable values. Probability is used to quantify the likelihood of events and is governed by rules like the addition rule and multiplication rule. Conditional probability and Bayes' theorem are also introduced to relate the probabilities of events given other information. Important discrete and continuous probability distributions are discussed.

![Slide 50](https://image.slidesharecdn.com/en505engineeringstatisticsstudentnotes-110114115730-phpapp02/85/En505-engineering-statistics-student-notes-50-320.jpg)

c) Find $P[(X, Y) \in A]$, where $A = \{(x, y) \mid 0 \le x \le 1/2,\ 1/4 \le y \le 1/2\}$.

Theorem 4.2 Marginal Probability Density Function

Let X and Y be continuous random variables having probability density function $f(x, y)$. Let $f_X(x)$ denote the marginal probability density function of X, and let $f_Y(y)$ denote the (marginal) probability density function of Y. Then,
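
The extracted slide text stops at "Then," and the formulas that follow are not present in this excerpt. As a hedged reconstruction, the standard textbook result (not the slide's own wording) is that each marginal density is obtained by integrating the joint density over the other variable. Part (c) relies on the same joint density: the probability of the rectangle A is the double integral of f over A. The density used in the exercise is not shown here, so the integrand is left as the generic $f(x, y)$.

```latex
% Assumed completion of Theorem 4.2: the standard marginal densities
\[
  f_X(x) = \int_{-\infty}^{\infty} f(x, y)\, dy,
  \qquad
  f_Y(y) = \int_{-\infty}^{\infty} f(x, y)\, dx .
\]

% Part (c): probability that (X, Y) falls in the rectangle
% A = {(x, y) | 0 <= x <= 1/2, 1/4 <= y <= 1/2}
\[
  P[(X, Y) \in A] = \int_{0}^{1/2} \int_{1/4}^{1/2} f(x, y)\, dy\, dx .
\]
```

Once the joint density for the exercise is known, substituting it for $f(x, y)$ in the second expression gives the numerical answer to part (c).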