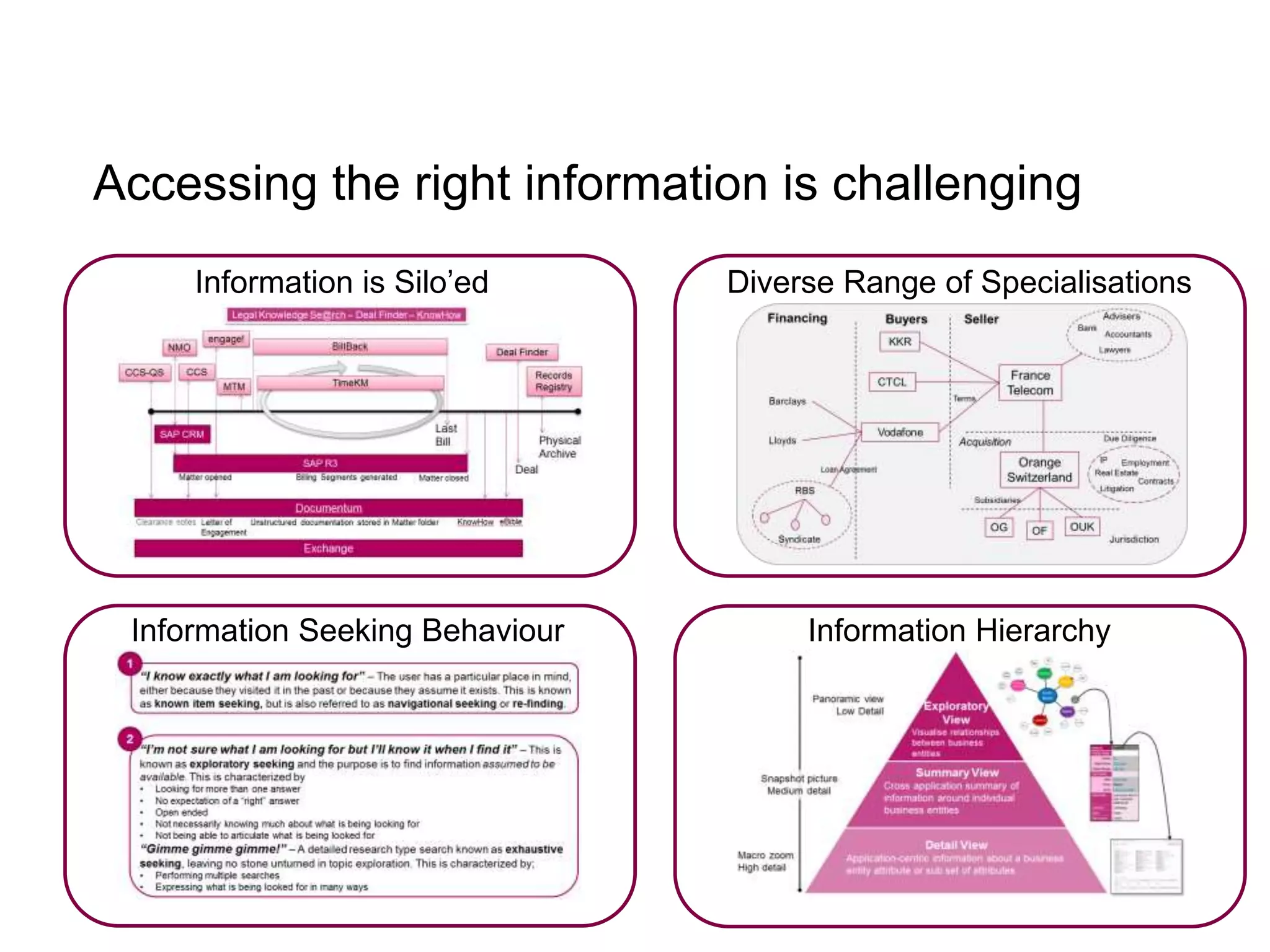

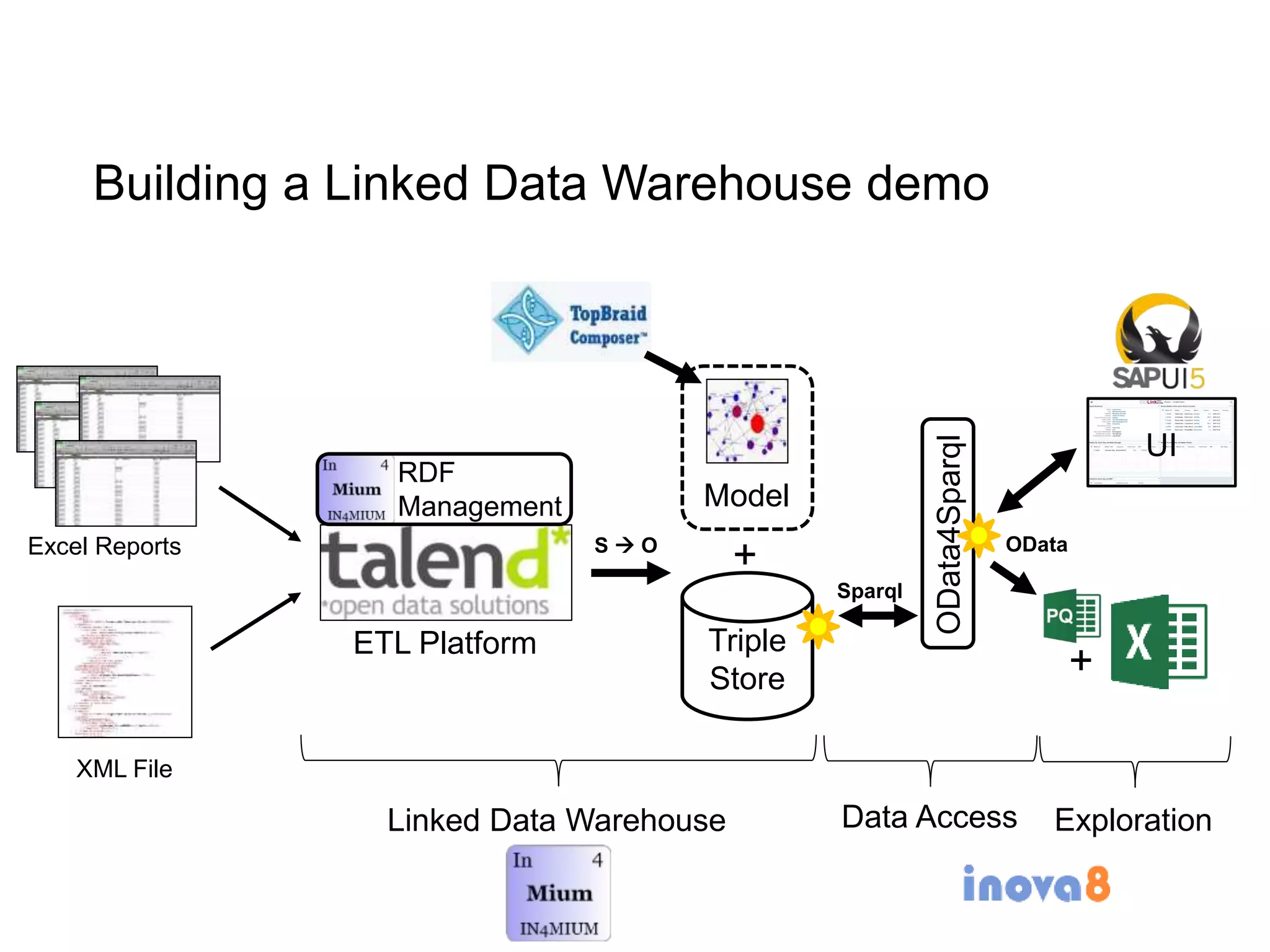

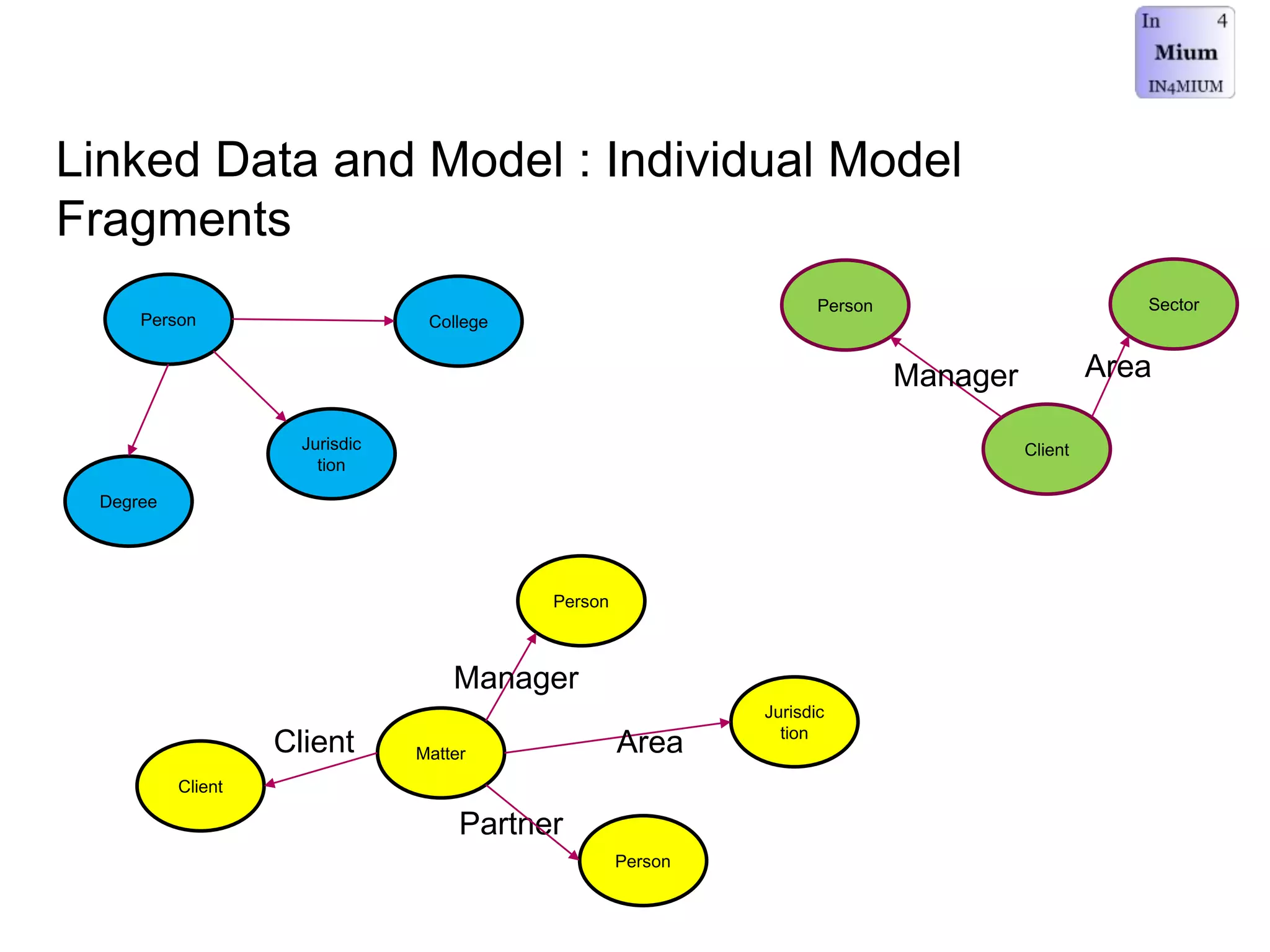

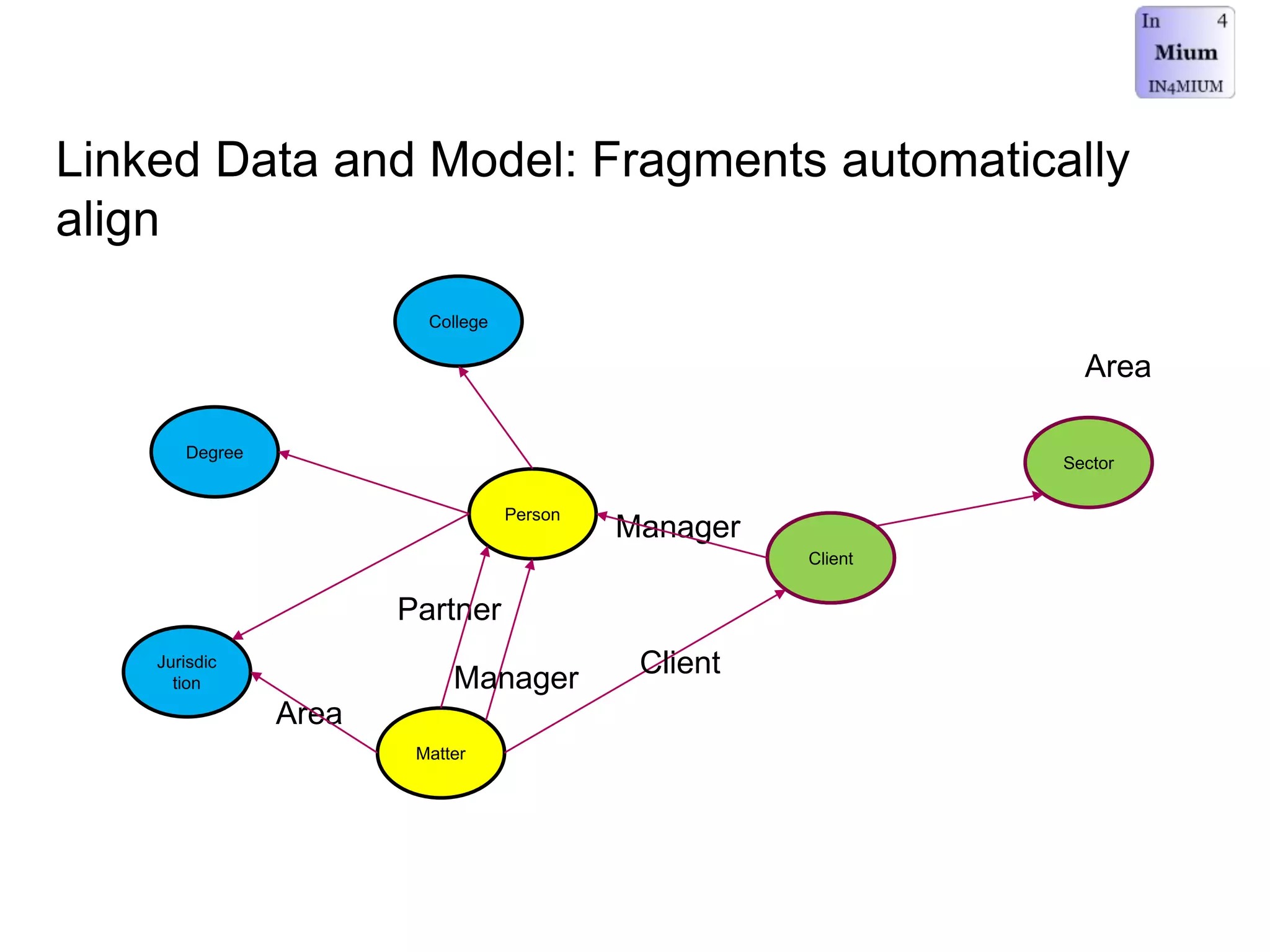

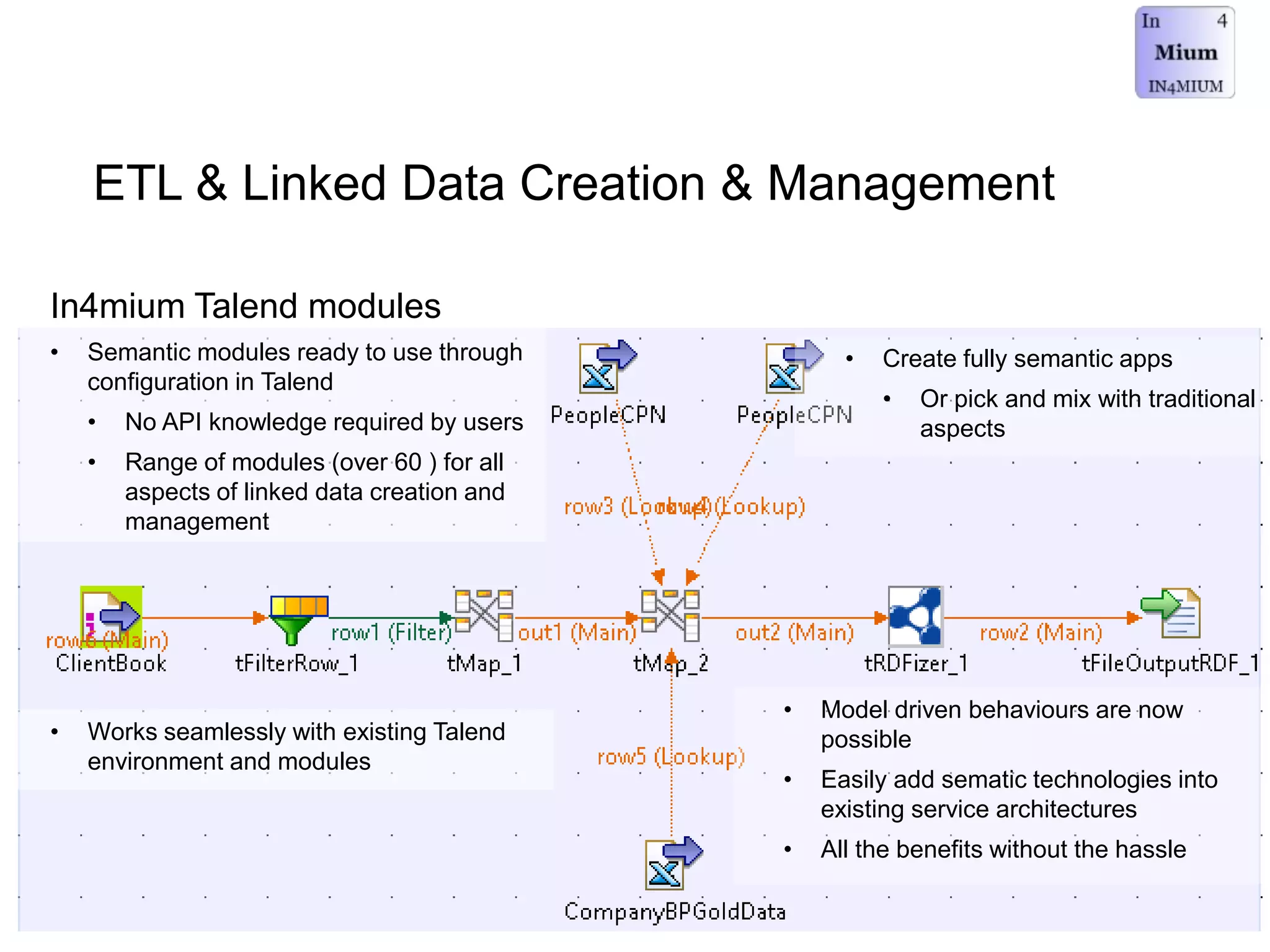

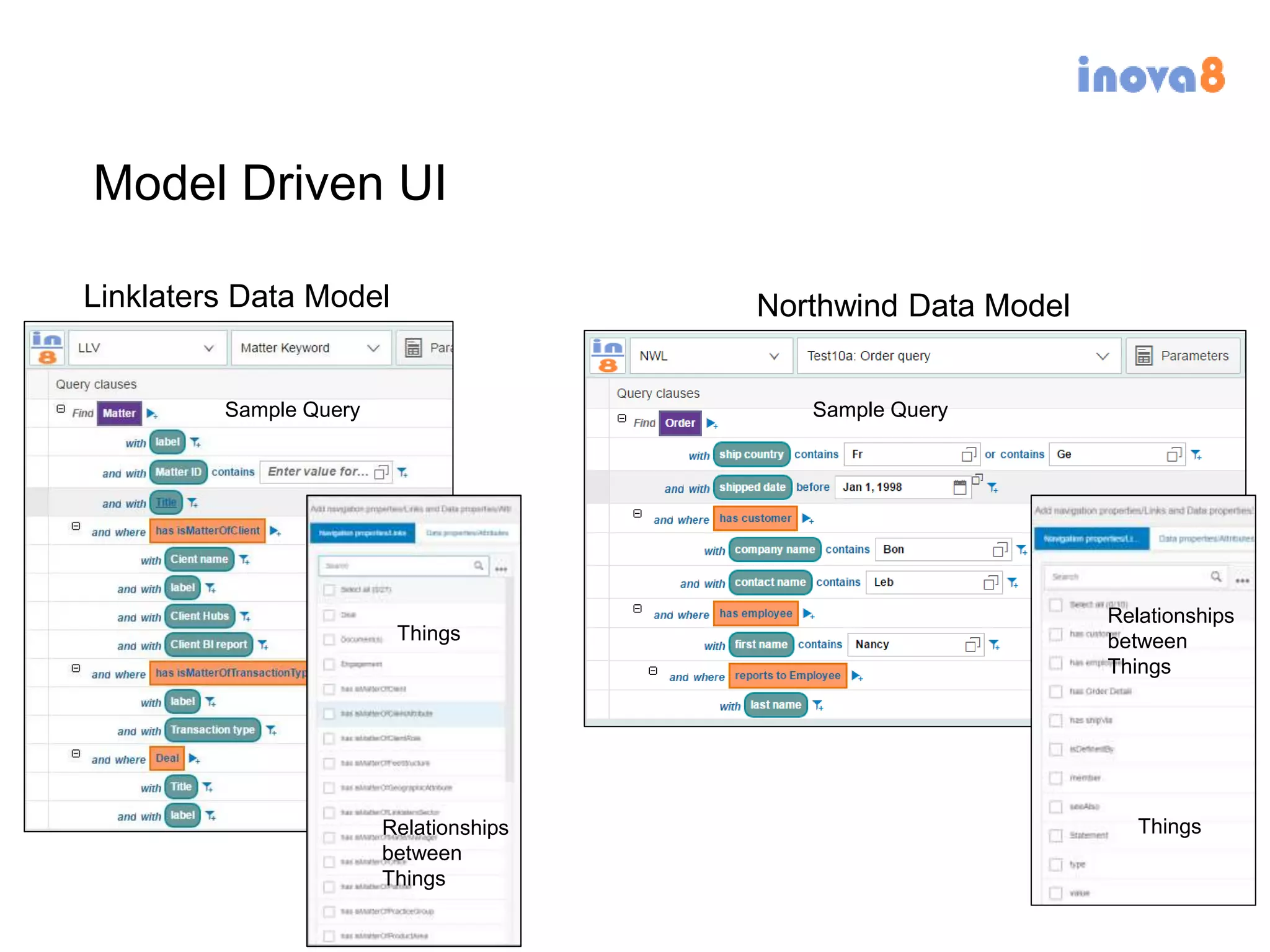

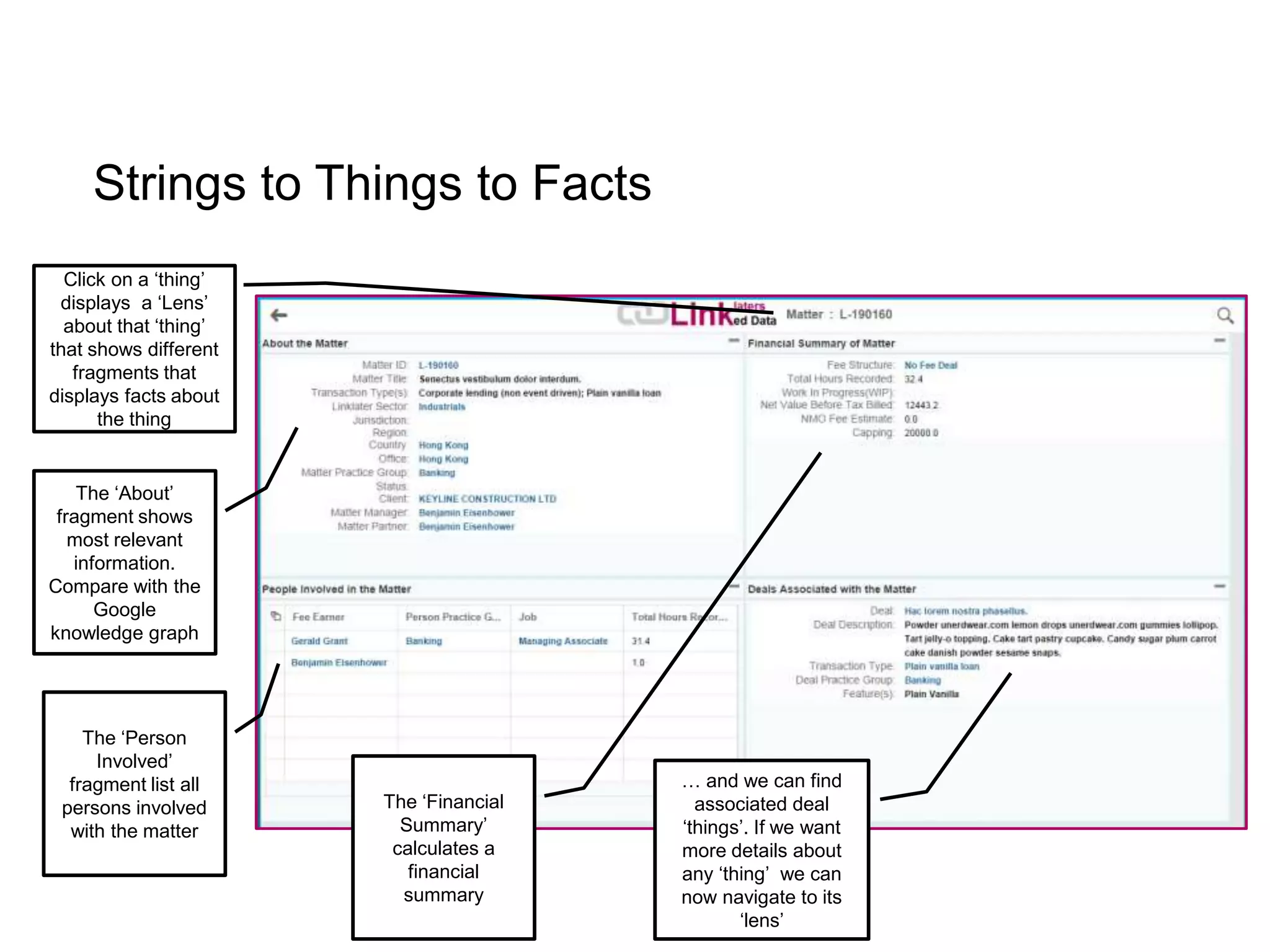

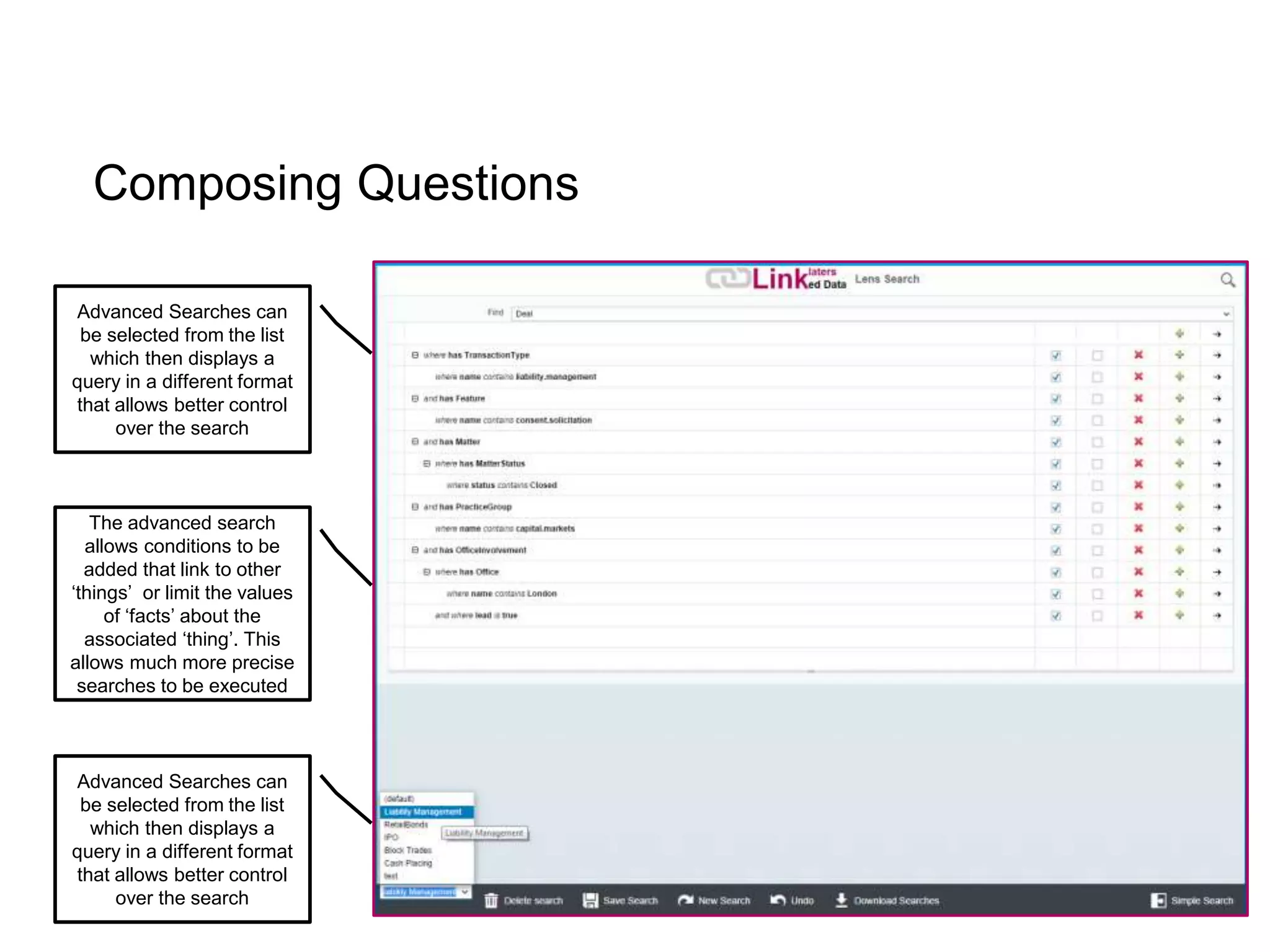

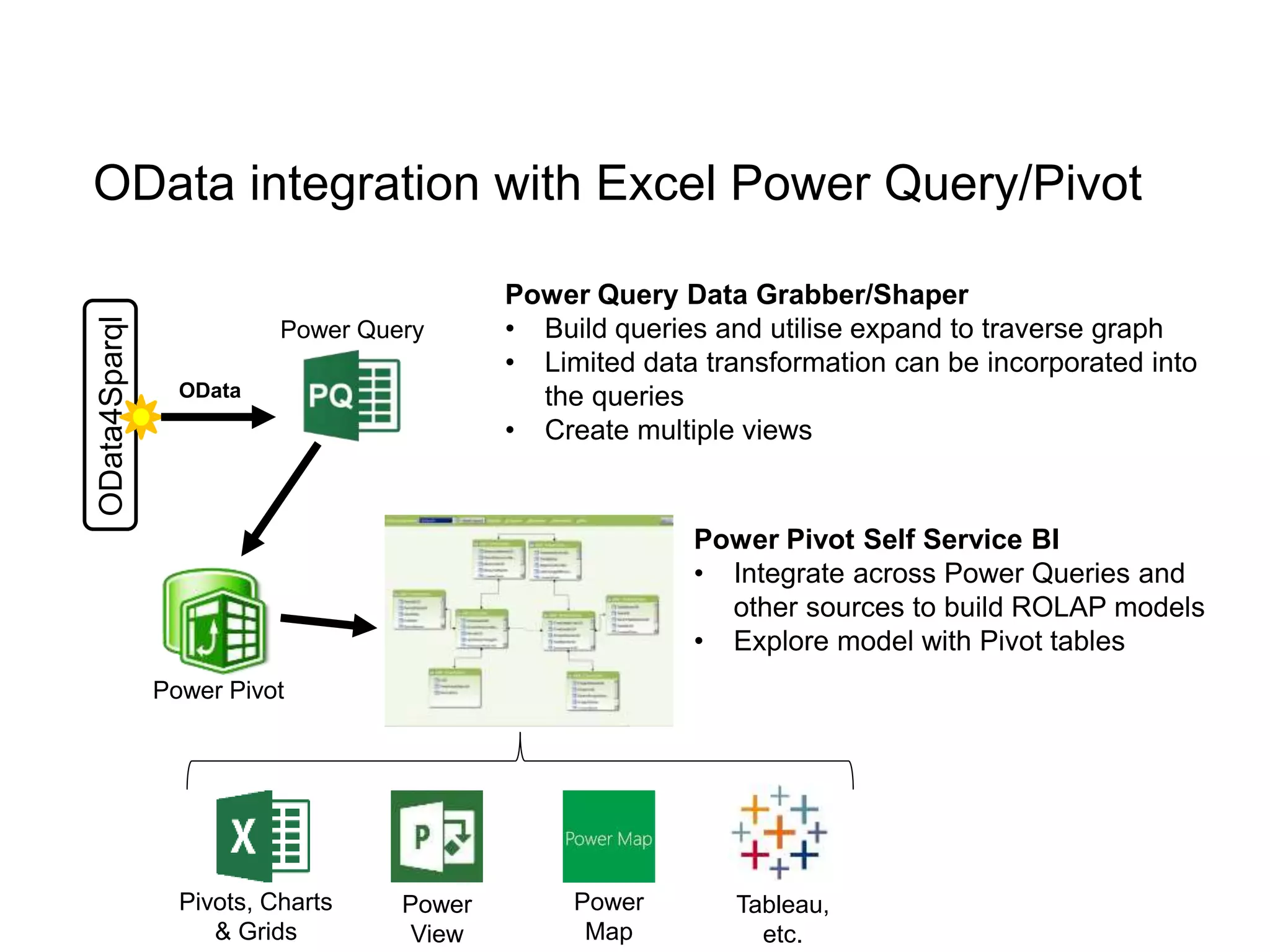

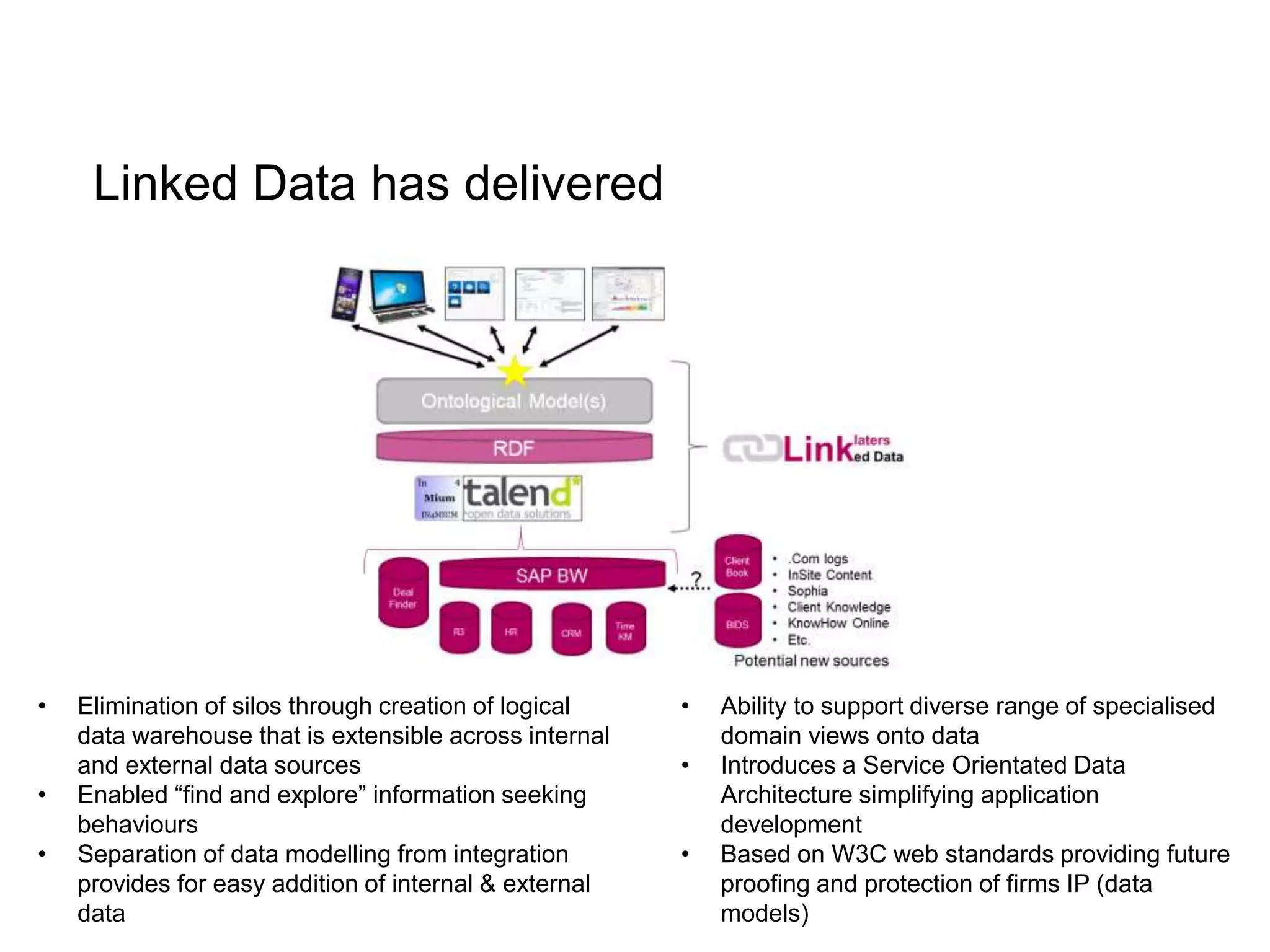

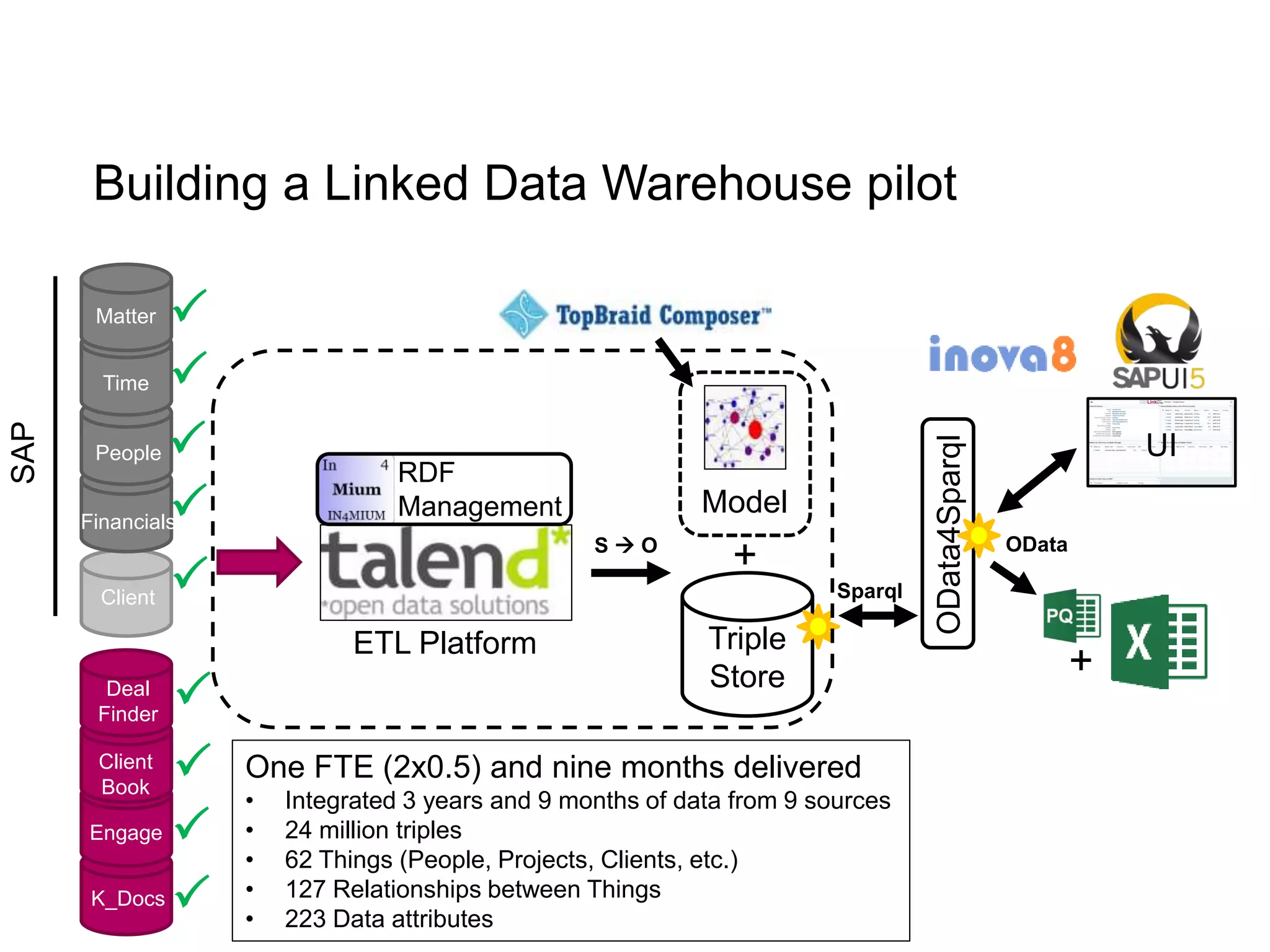

The document describes the implementation of a linked data warehouse at Linklaters LLP. It highlights how data silos and diverse specializations make information hard to access, then details the approach taken: semantic technologies, ETL processes, and user-friendly interfaces such as odata4sparql that enable data exploration and discovery. The conclusion emphasizes three benefits: eliminating silos, supporting specialized domain views, and facilitating application development through a service-oriented data architecture.
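To make the silo-elimination idea concrete, here is a minimal sketch in plain Python, not the implementation used at Linklaters. It assumes two hypothetical source systems (an HR table and a matters table), a placeholder namespace `http://example.org/`, and invented predicate names. Each ETL step maps rows to subject-predicate-object triples that share identifiers, so one query can traverse data that originally lived in separate silos.

```python
from typing import Dict, Iterable, Iterator, List, Optional, Set, Tuple

Triple = Tuple[str, str, str]

BASE = "http://example.org/"  # placeholder namespace, an assumption

# Hypothetical silos: the same lawyer appears in both, keyed by staff id.
HR_ROWS: List[Dict[str, str]] = [
    {"staff_id": "42", "name": "A. Example", "office": "London"},
]
MATTER_ROWS: List[Dict[str, str]] = [
    {"matter": "M-1", "lead_staff_id": "42", "practice": "Banking"},
]


def hr_to_triples(rows: Iterable[Dict[str, str]]) -> Iterator[Triple]:
    """ETL step for the HR silo: one person node per row."""
    for row in rows:
        person = f"{BASE}person/{row['staff_id']}"
        yield (person, f"{BASE}name", row["name"])
        yield (person, f"{BASE}office", row["office"])


def matters_to_triples(rows: Iterable[Dict[str, str]]) -> Iterator[Triple]:
    """ETL step for the matters silo: links each matter to the same person node."""
    for row in rows:
        matter = f"{BASE}matter/{row['matter']}"
        yield (matter, f"{BASE}ledBy", f"{BASE}person/{row['lead_staff_id']}")
        yield (matter, f"{BASE}practice", row["practice"])


def match(graph: Set[Triple], s: Optional[str] = None,
          p: Optional[str] = None, o: Optional[str] = None) -> List[Triple]:
    """Return triples matching a pattern; None acts as a wildcard."""
    return [t for t in graph
            if (s is None or t[0] == s)
            and (p is None or t[1] == p)
            and (o is None or t[2] == o)]


def offices_for_practice(graph: Set[Triple], practice: str) -> Set[str]:
    """Cross-silo query: offices of the people leading matters in a practice."""
    offices: Set[str] = set()
    for matter, _, _ in match(graph, p=f"{BASE}practice", o=practice):
        for _, _, person in match(graph, s=matter, p=f"{BASE}ledBy"):
            for _, _, office in match(graph, s=person, p=f"{BASE}office"):
                offices.add(office)
    return offices


# Load both silos into one graph; shared person identifiers join them.
GRAPH: Set[Triple] = set(hr_to_triples(HR_ROWS)) | set(matters_to_triples(MATTER_ROWS))
```

Calling `offices_for_practice(GRAPH, "Banking")` walks from a matter to its lead lawyer to that lawyer's office, a question neither source table could answer alone. In a real deployment a triple store and SPARQL would replace the in-memory set and the `match` helper, with an interface layer such as odata4sparql on top.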