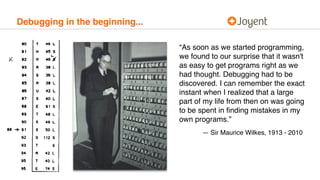

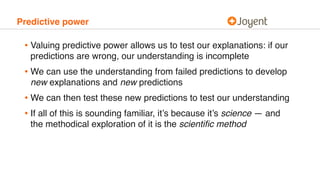

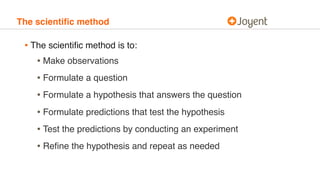

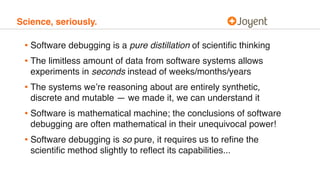

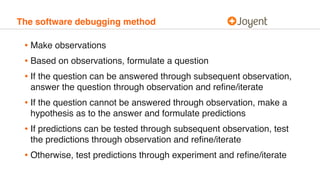

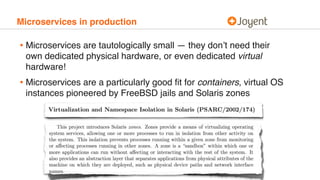

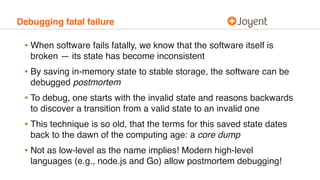

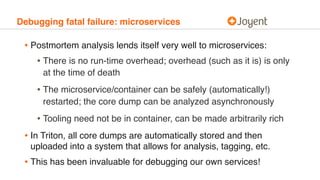

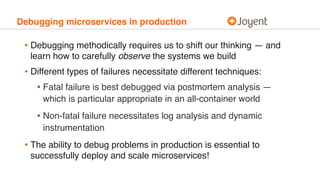

This document discusses debugging microservices in production environments. It begins with context on the history of debugging and introduces some common debugging anti-patterns. It then argues that debugging should be approached methodically, using the scientific method, and outlines a procedure it calls the software debugging method. Finally, it discusses the challenges that the distributed nature of microservices creates for debugging and argues that containers can help address them.