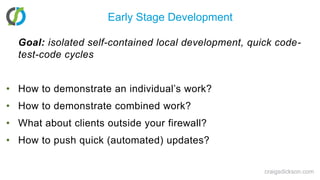

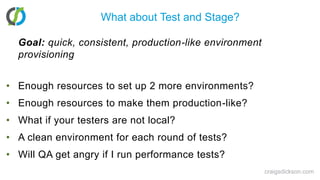

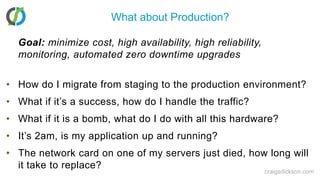

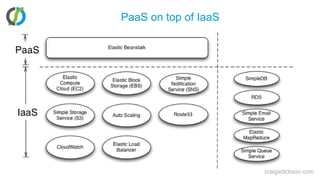

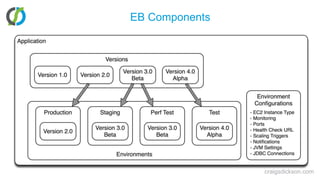

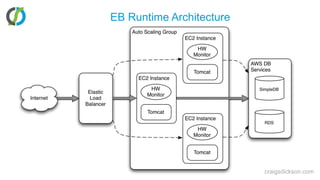

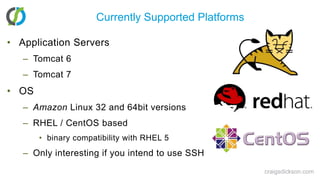

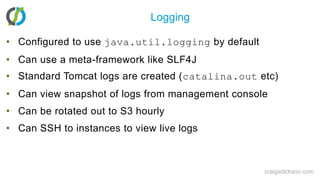

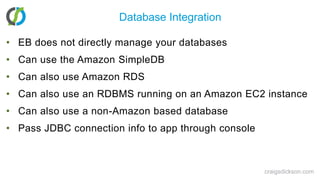

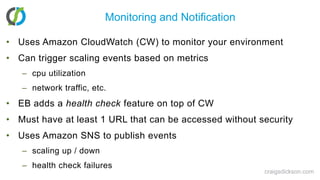

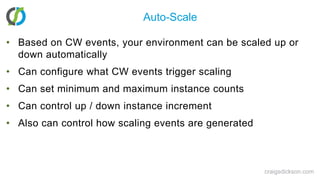

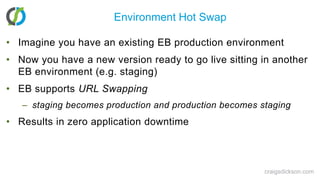

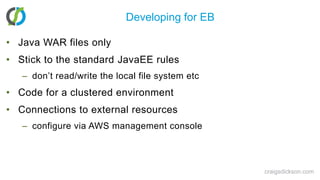

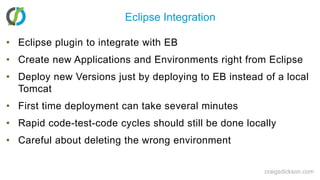

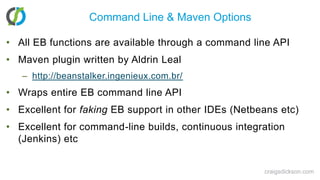

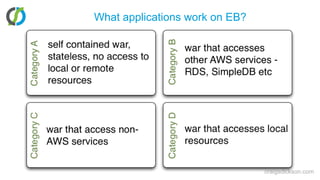

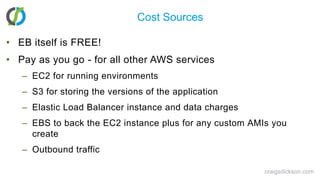

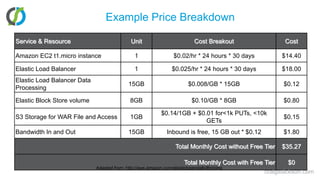

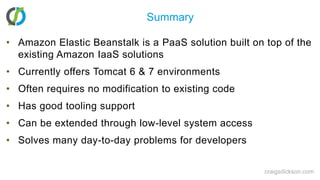

The presentation discusses Amazon Elastic Beanstalk, a platform-as-a-service (PaaS) offering that AWS launched in 2011 for deploying Java web applications with minimal setup and maintenance effort. It highlights how Elastic Beanstalk addresses common developer challenges, such as resource scaling and environment management, without requiring significant modifications to existing applications. The presentation also covers costs, available integrations, and alternatives to Elastic Beanstalk in the cloud computing space.