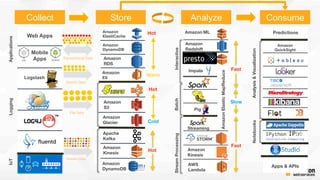

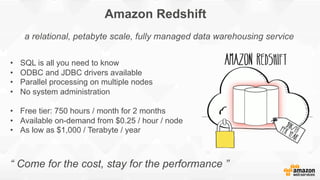

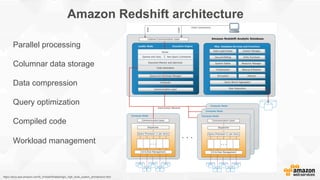

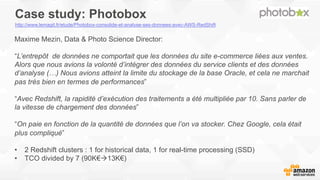

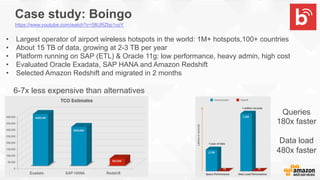

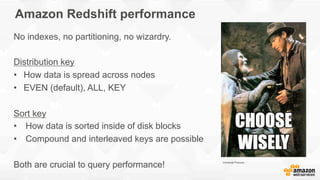

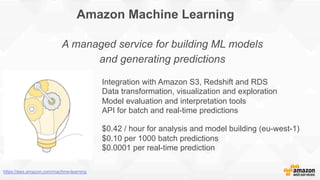

This document summarizes a presentation on building data warehouses with Amazon Redshift and on using Amazon Machine Learning. The presentation explains how Amazon Redshift can be used to build a petabyte-scale data warehouse with SQL and no system administration. It presents case studies of companies that reduced their total cost of ownership by migrating to Amazon Redshift, and it briefly introduces Amazon Machine Learning for building predictive models with managed services. The demos show loading data into Redshift, then using Amazon Machine Learning to train a regression model and create a real-time prediction API.