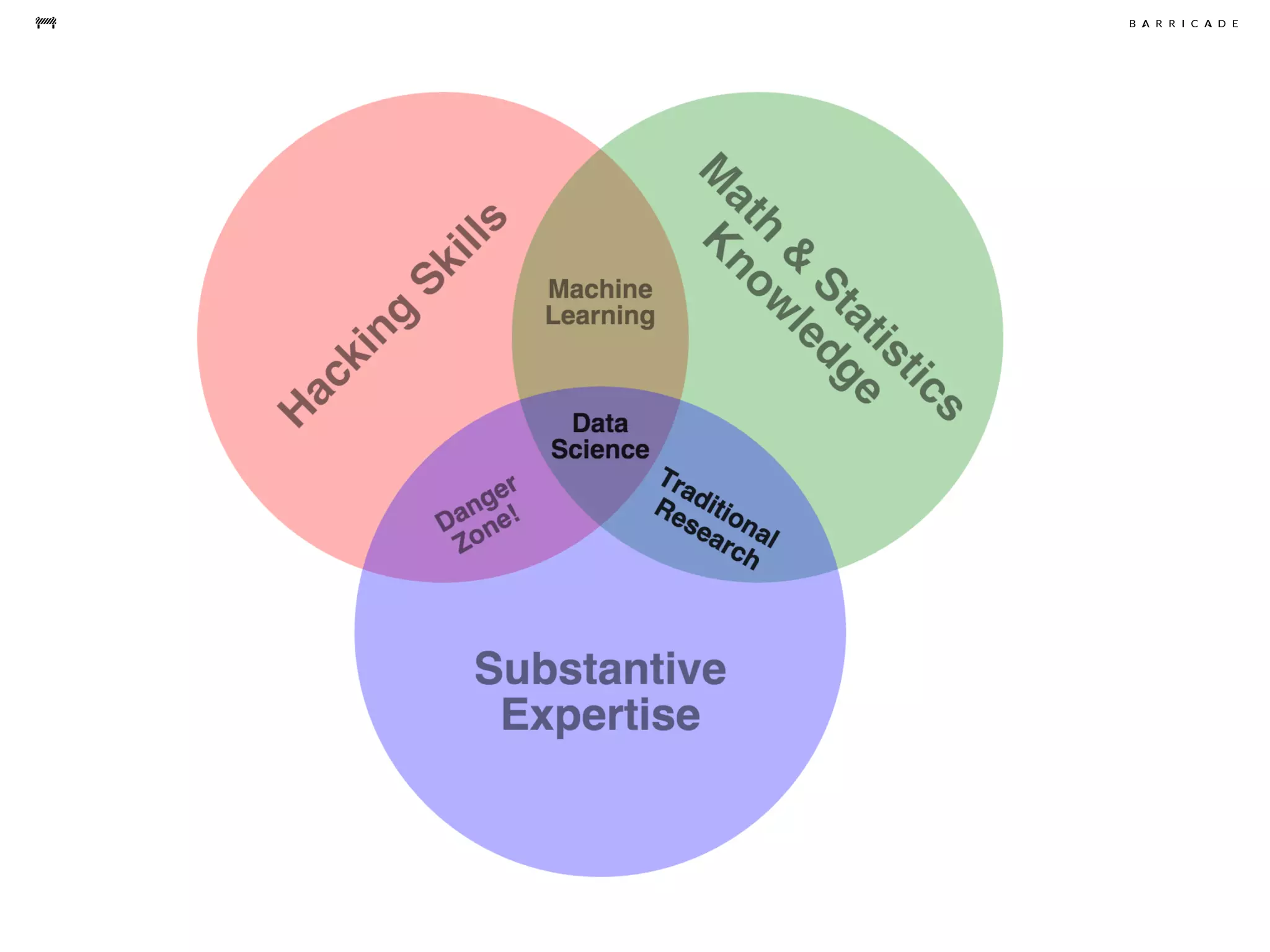

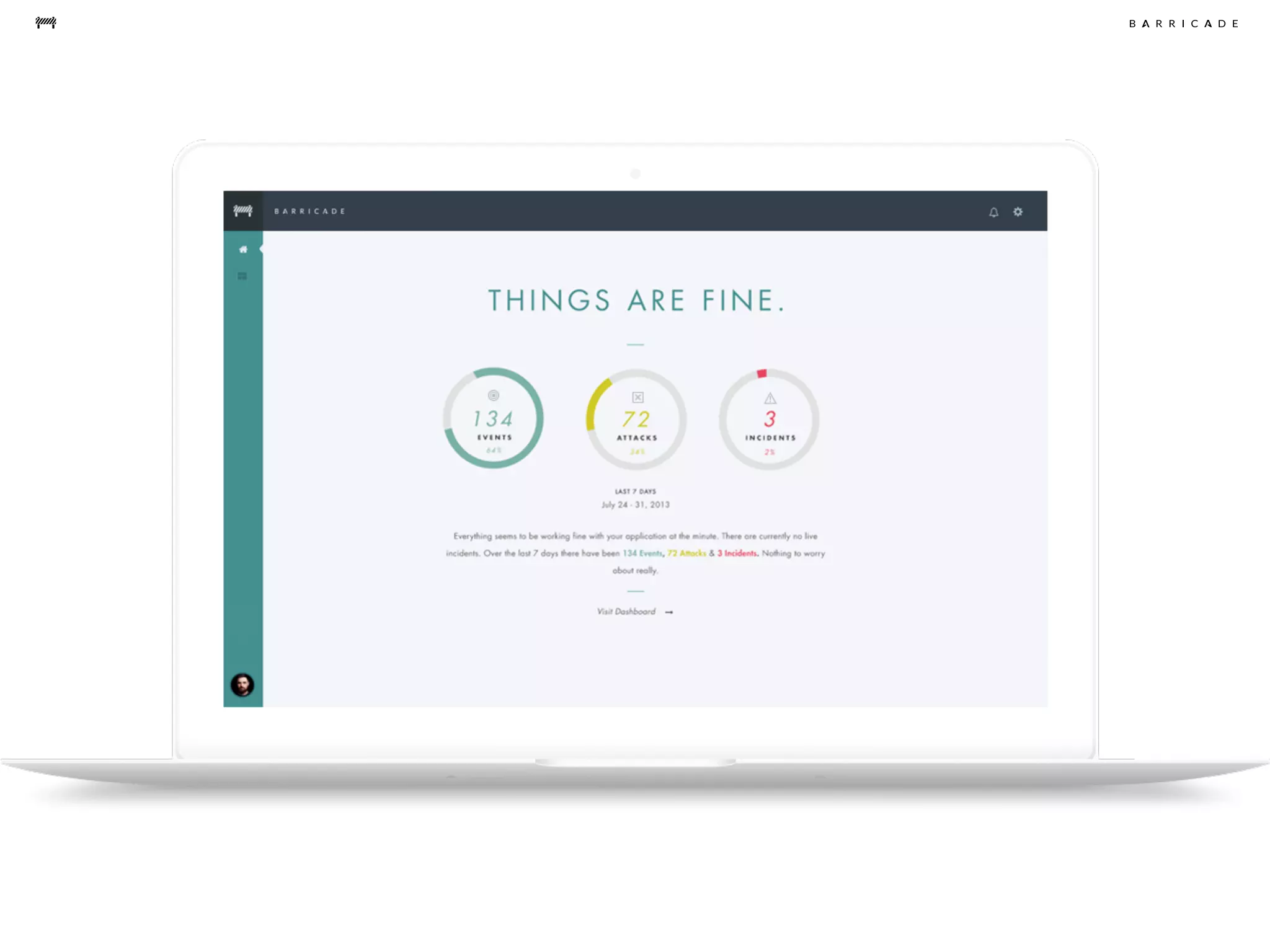

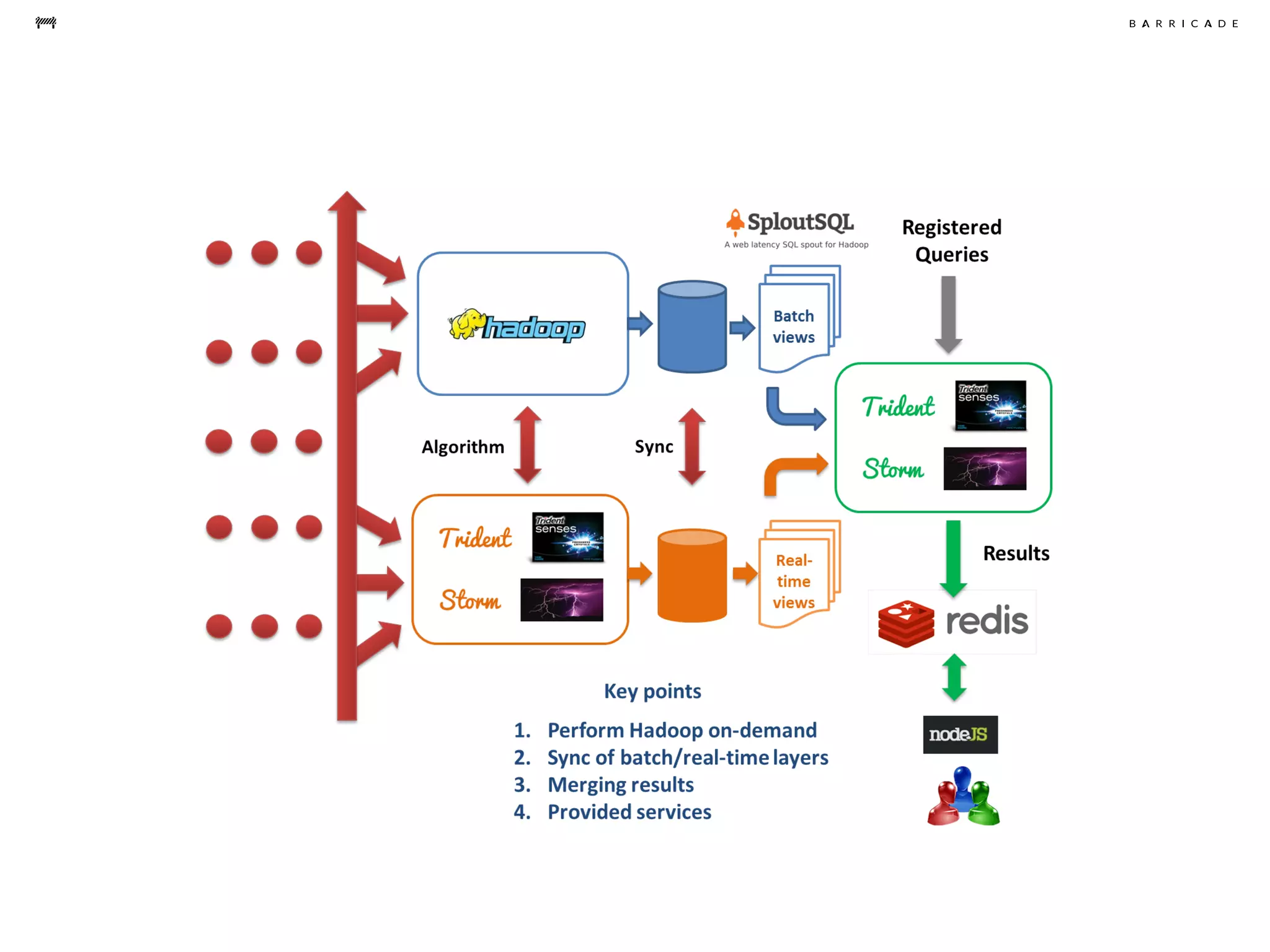

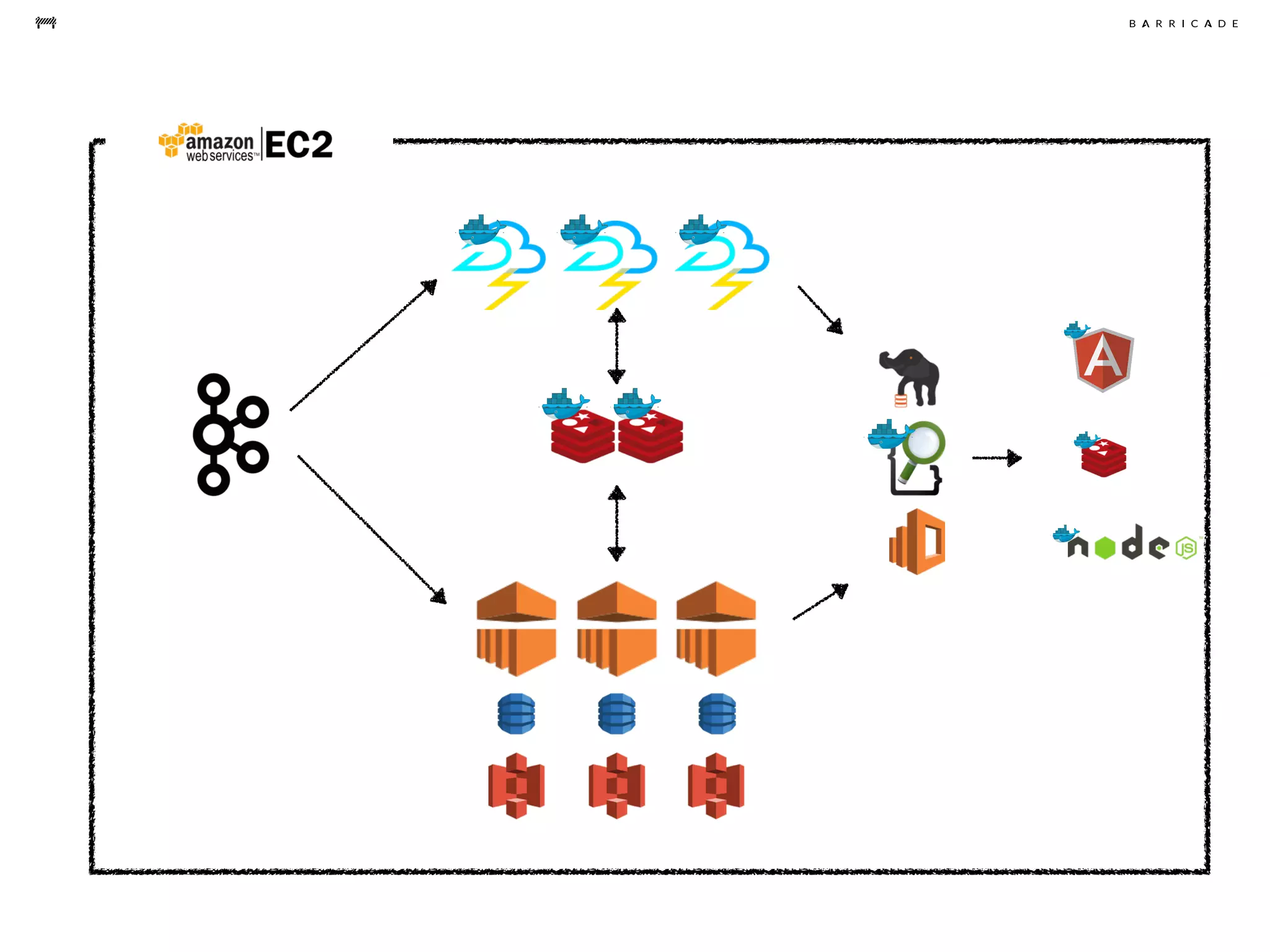

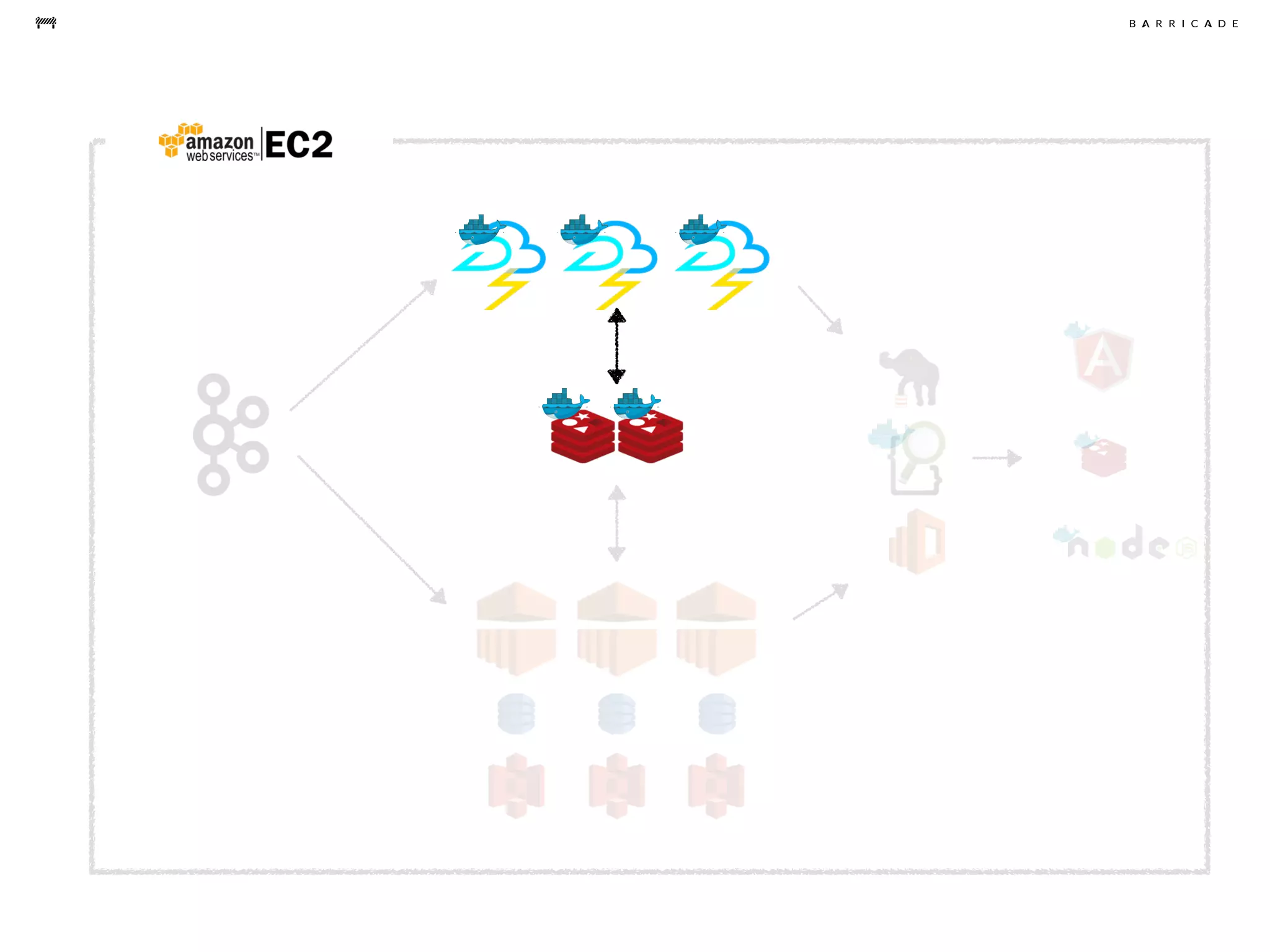

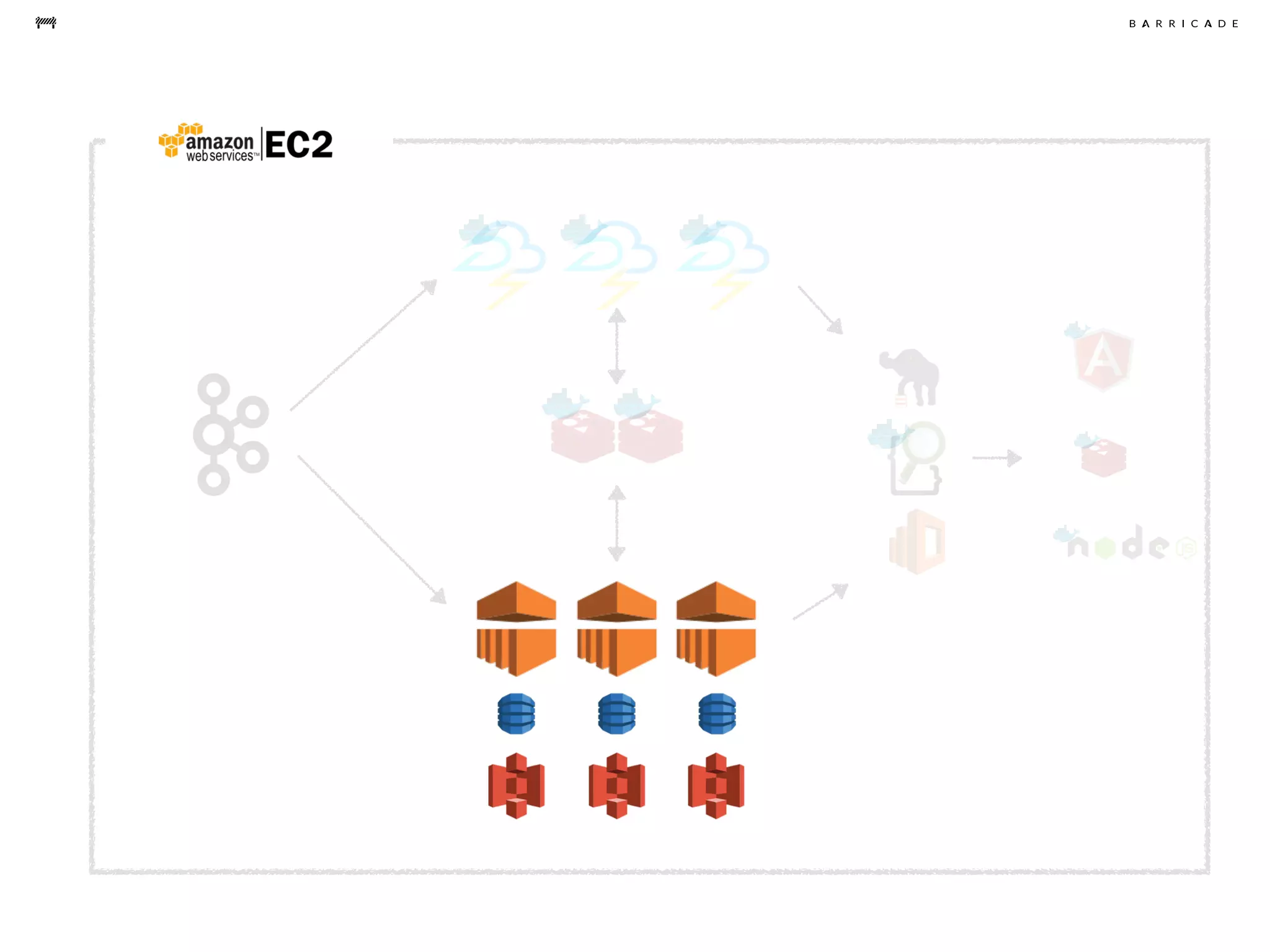

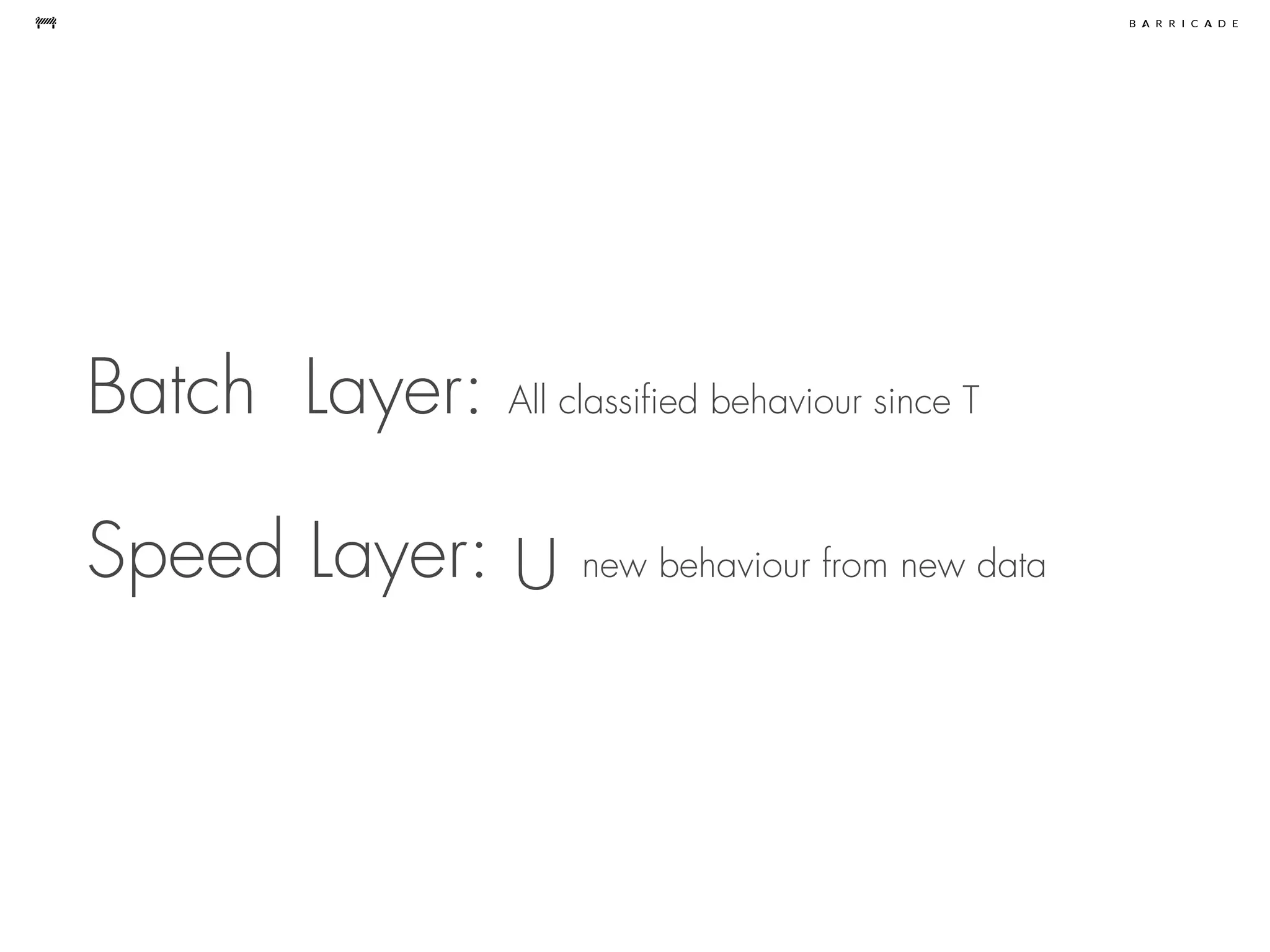

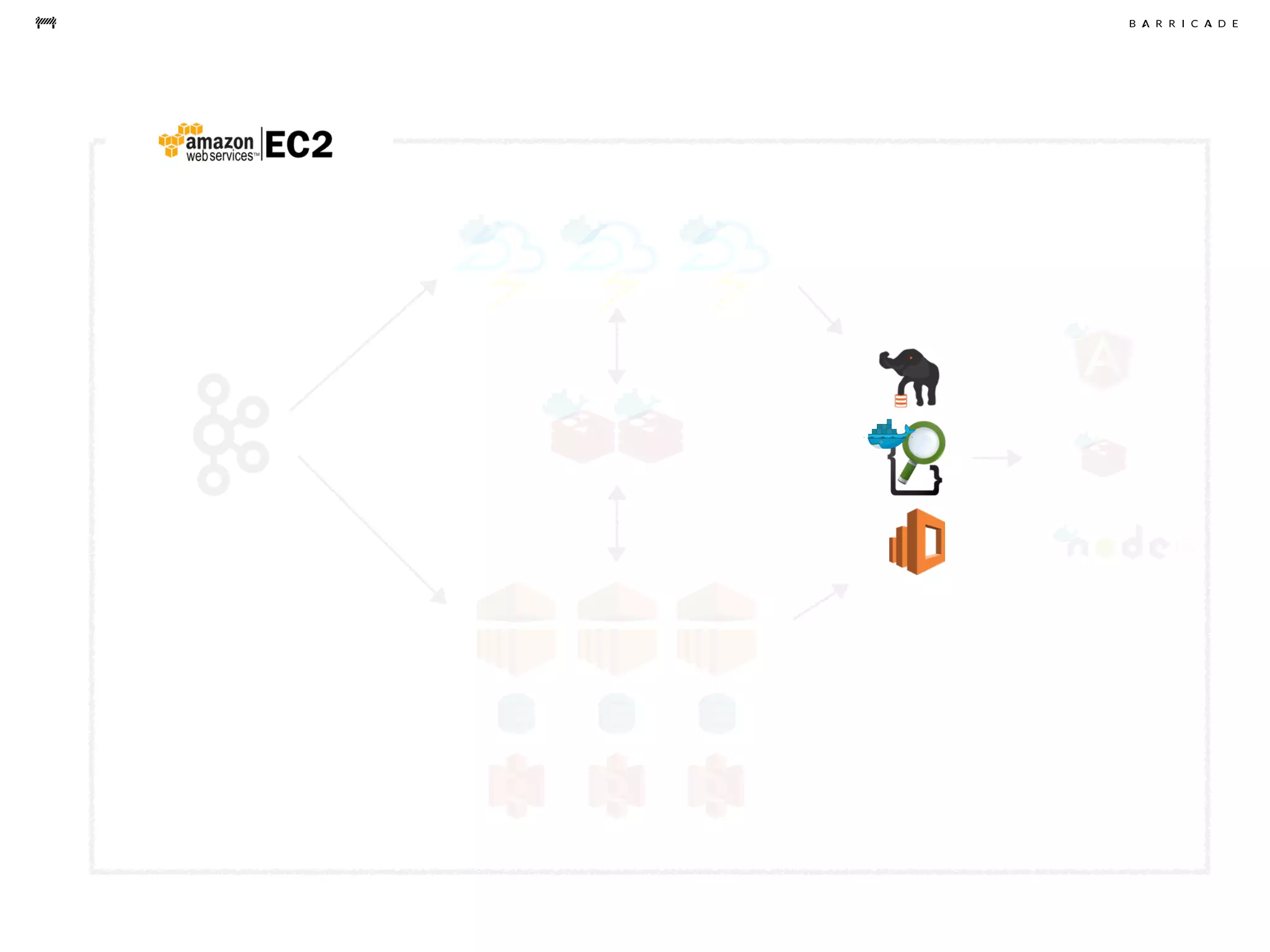

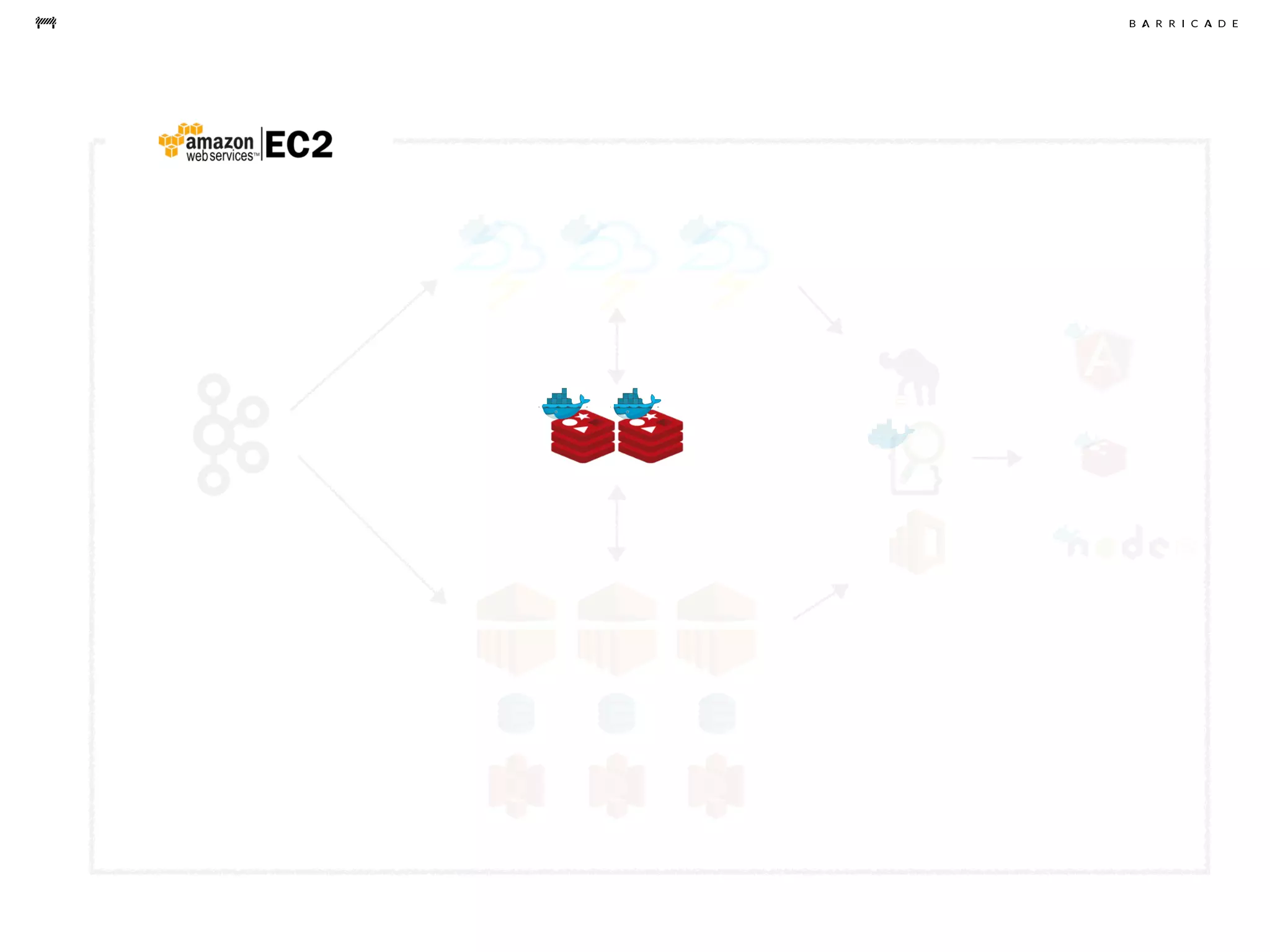

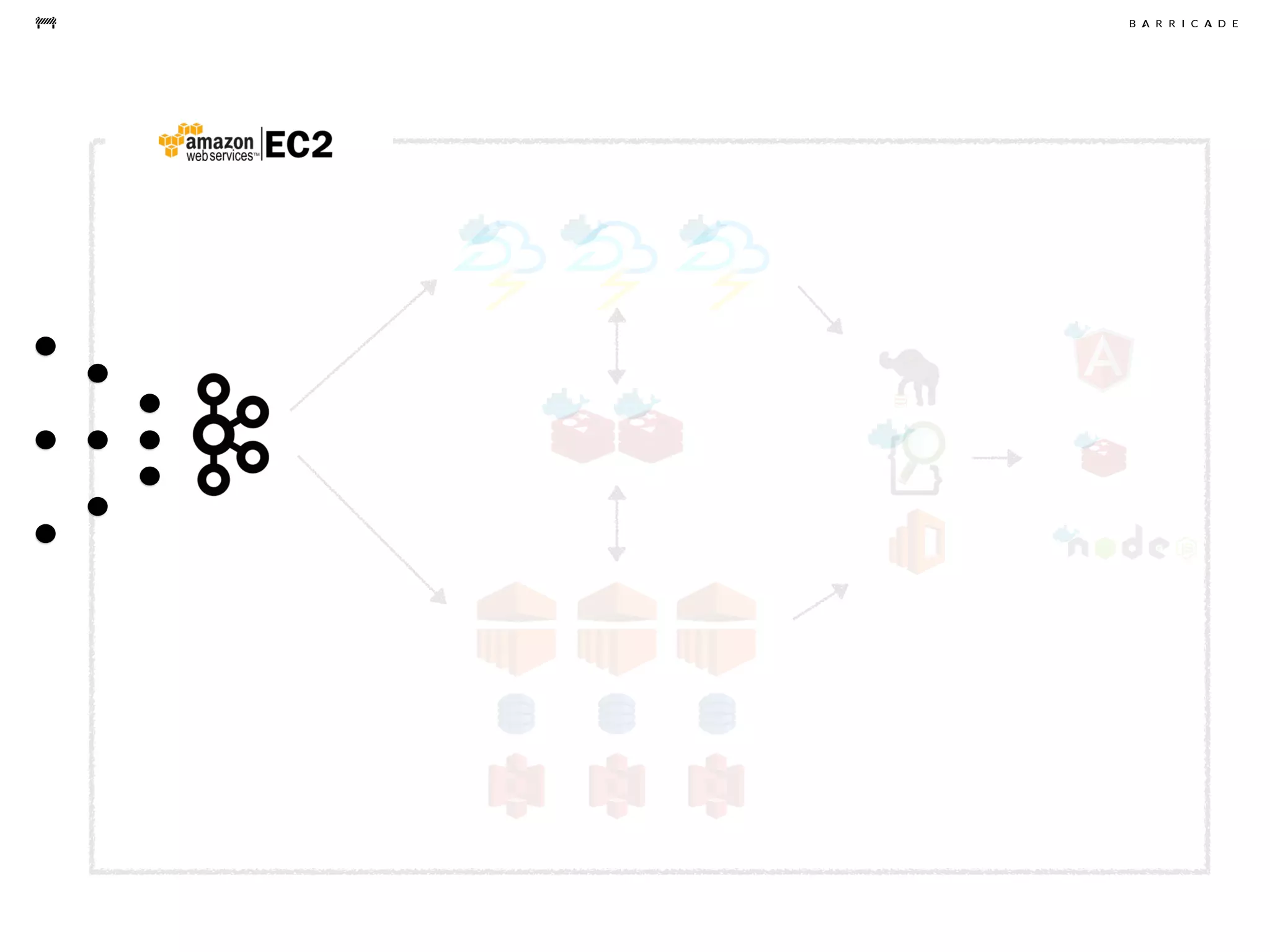

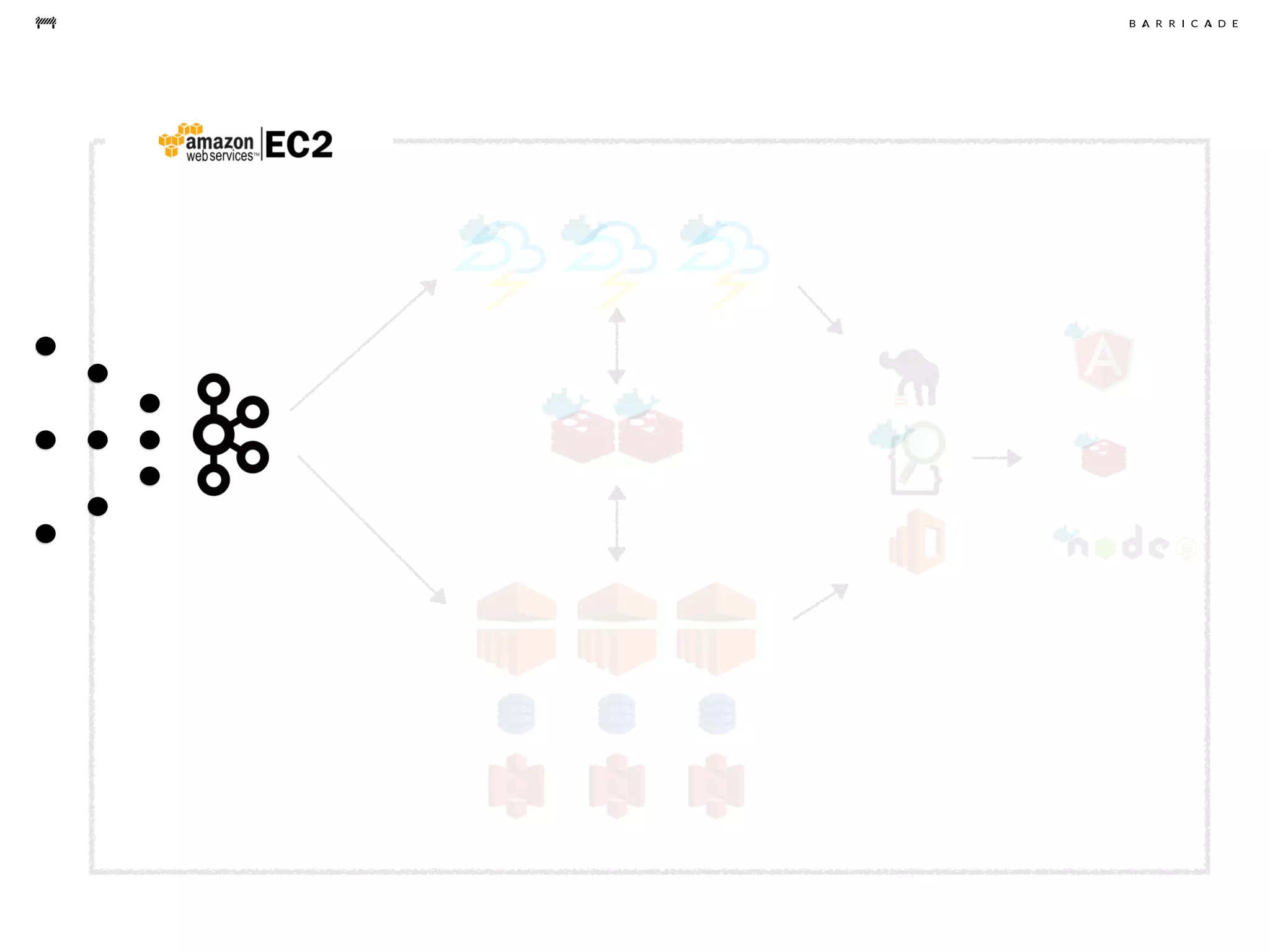

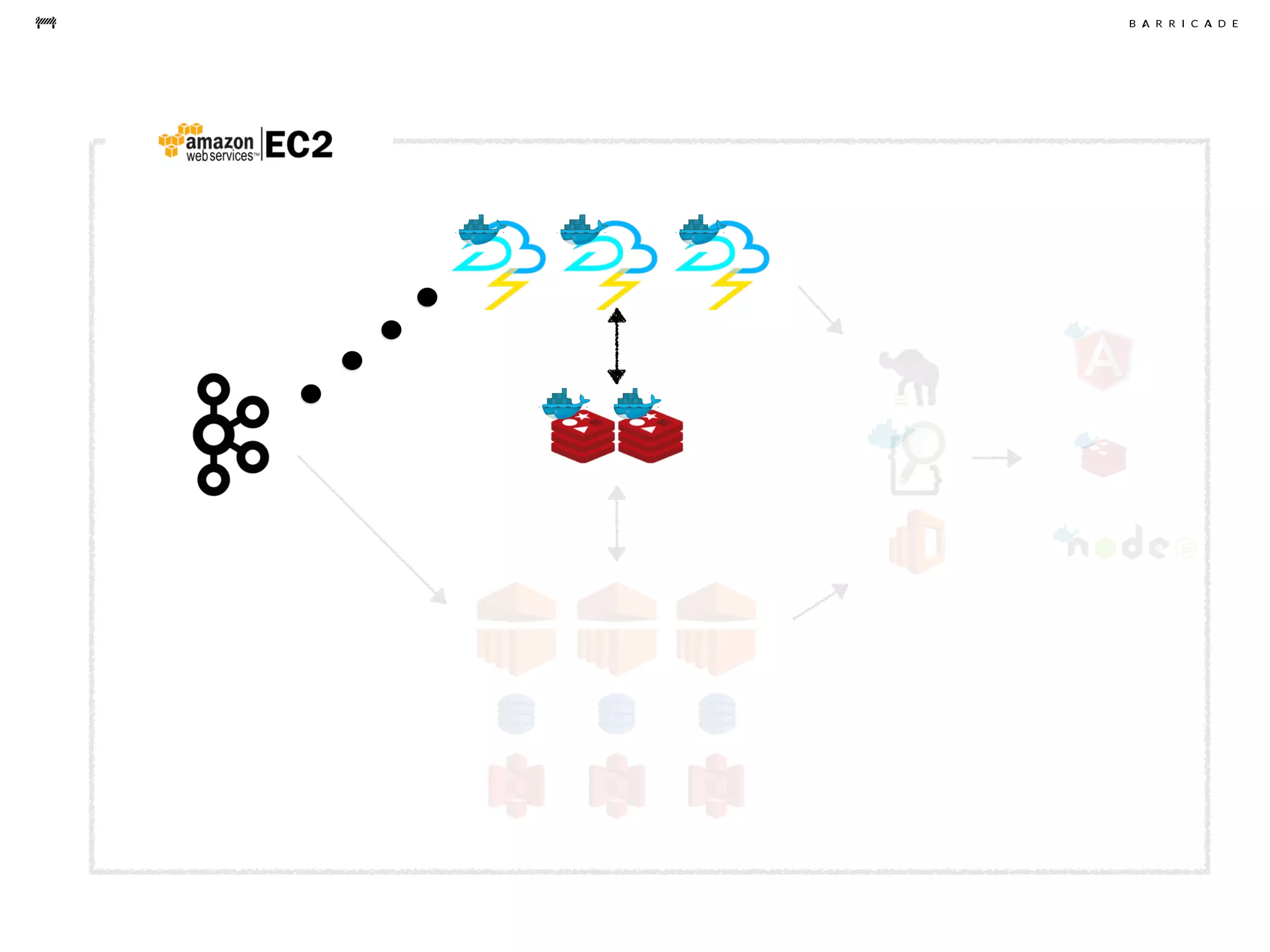

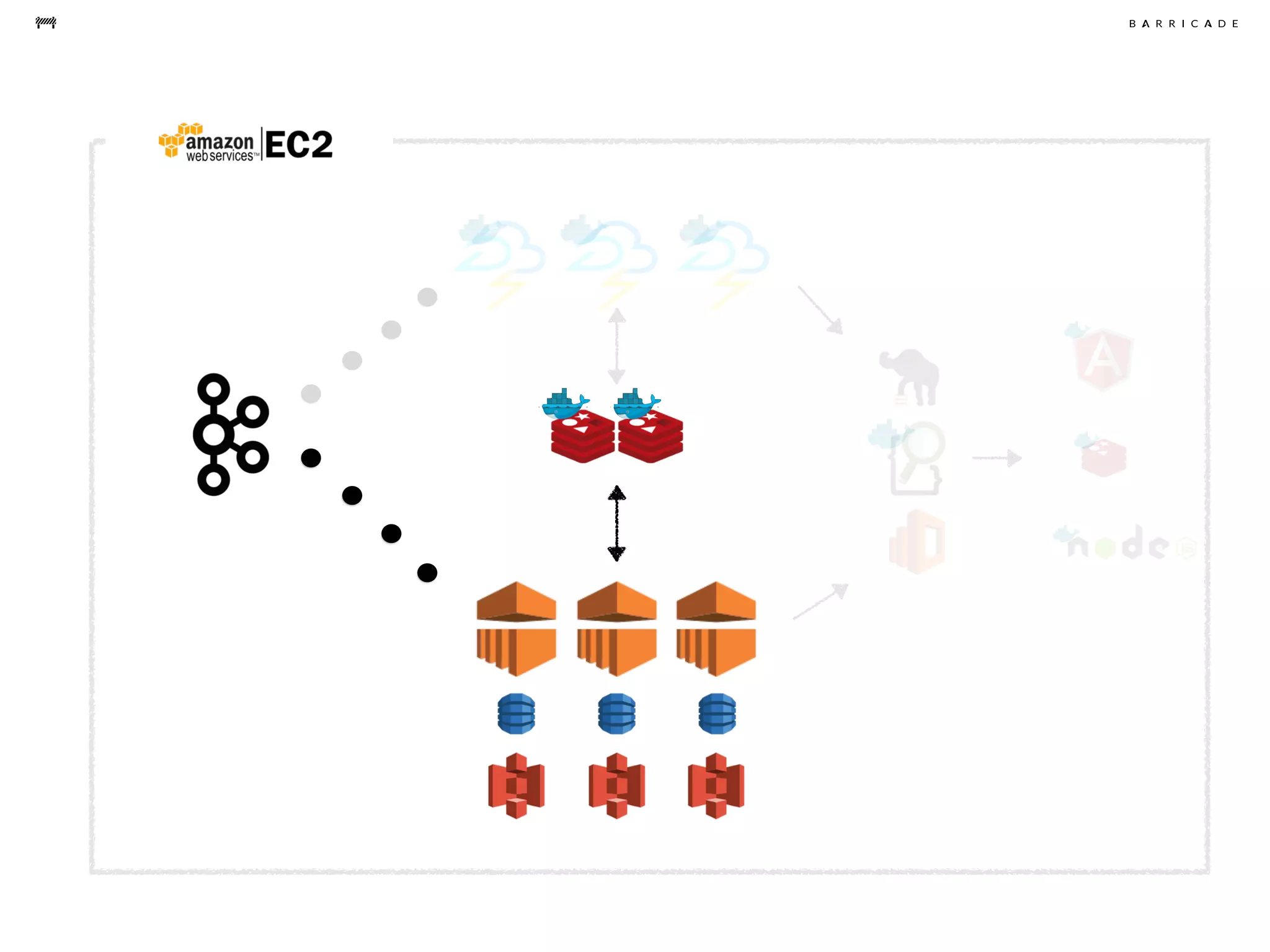

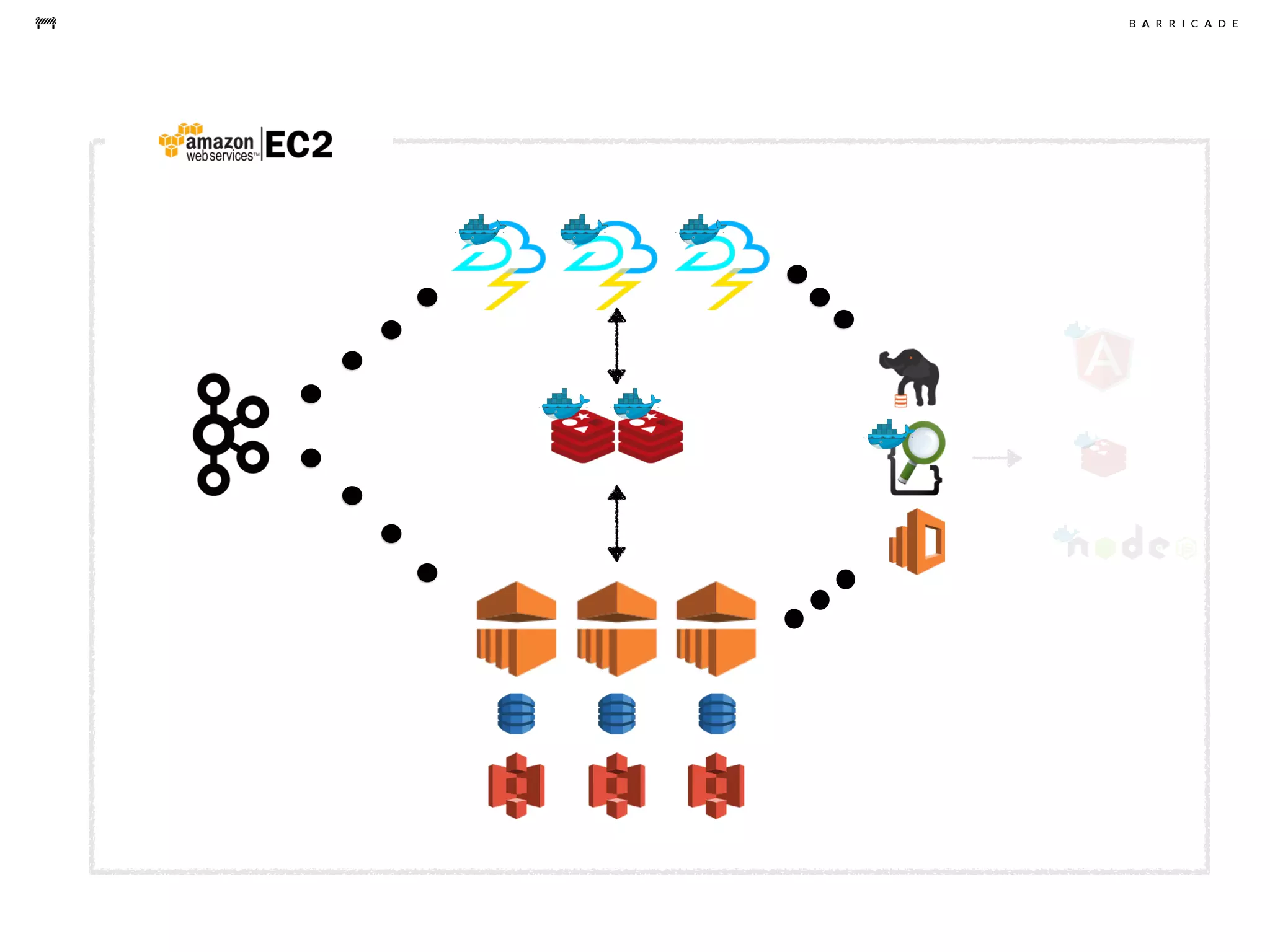

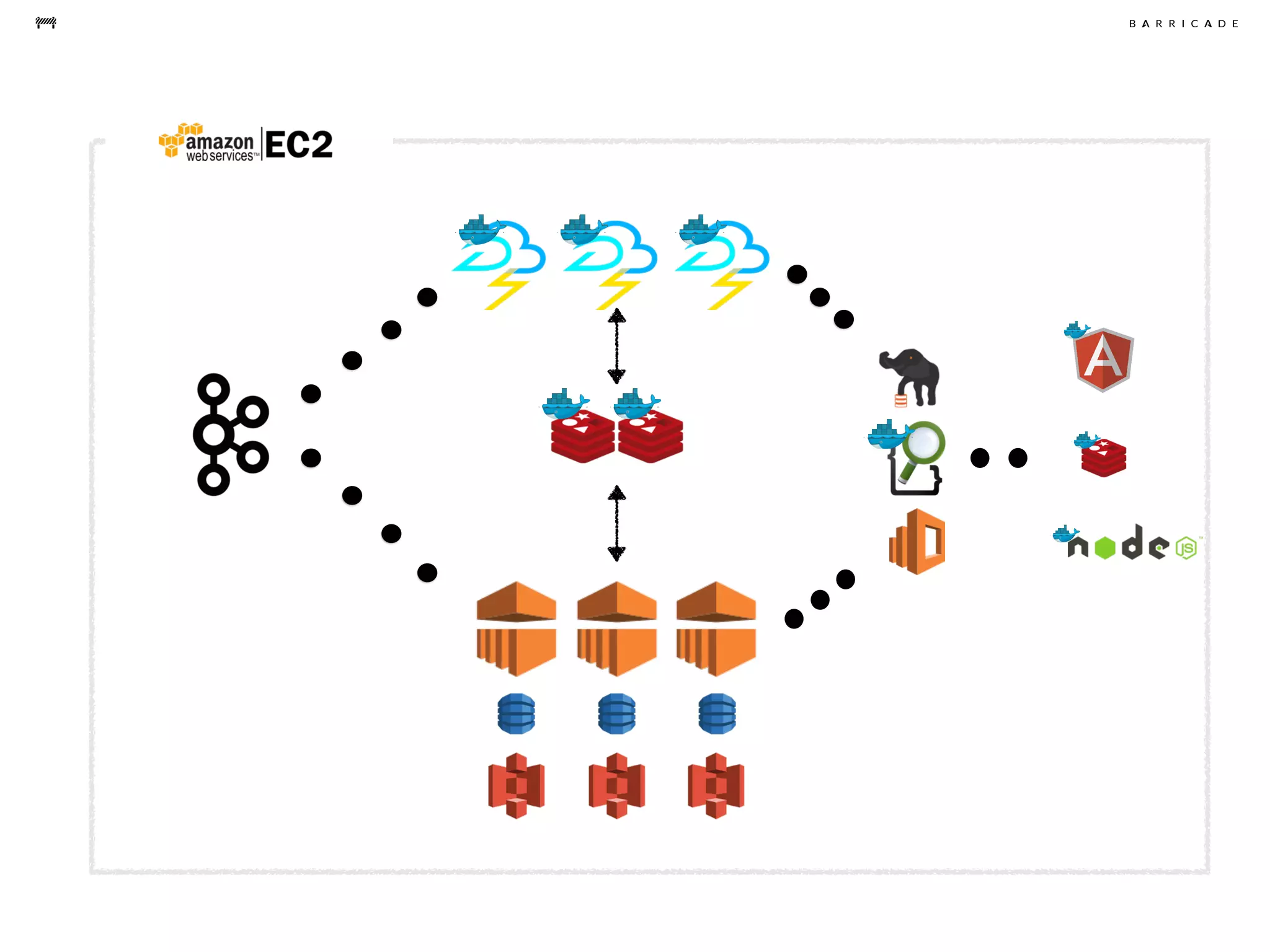

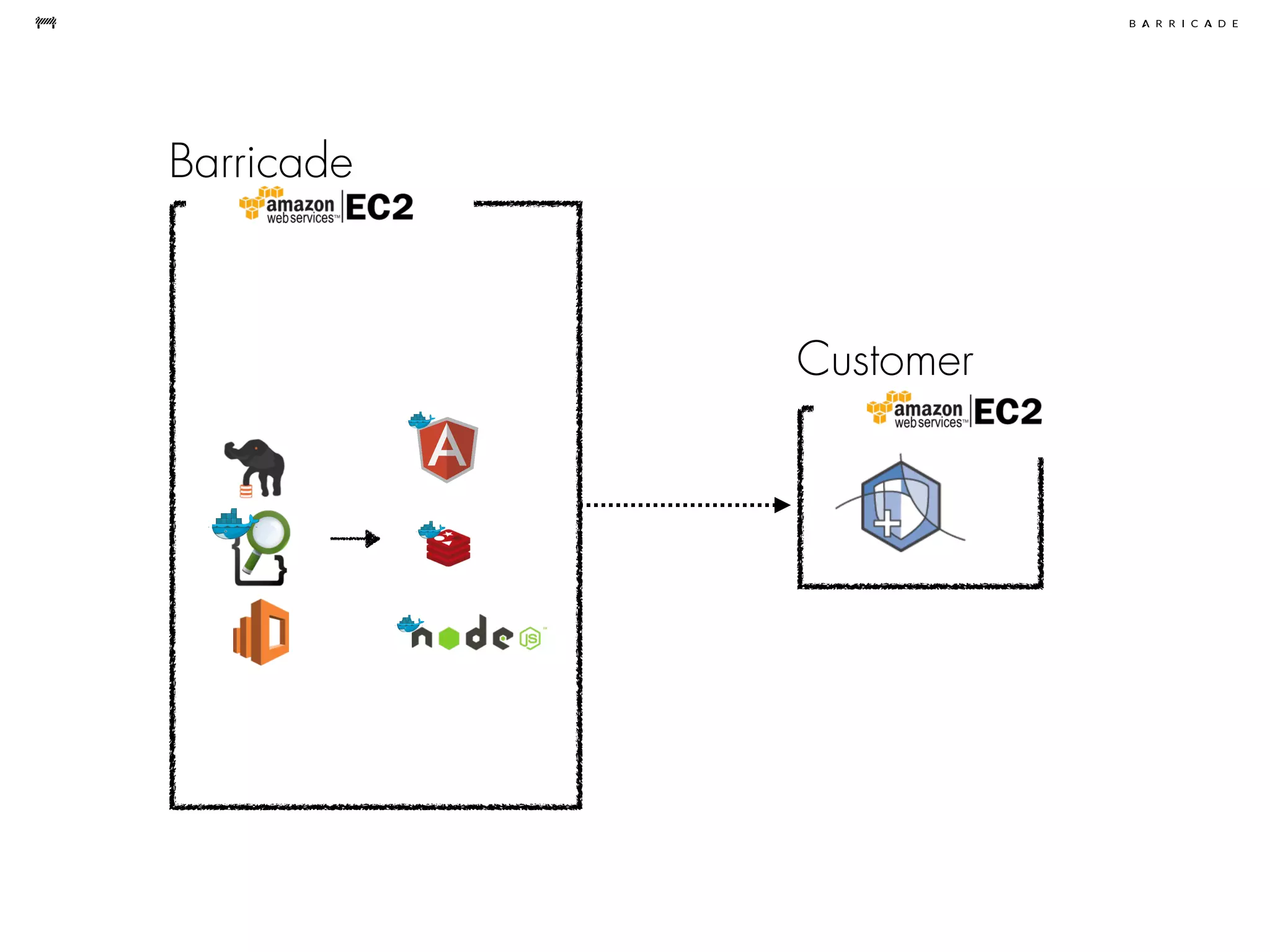

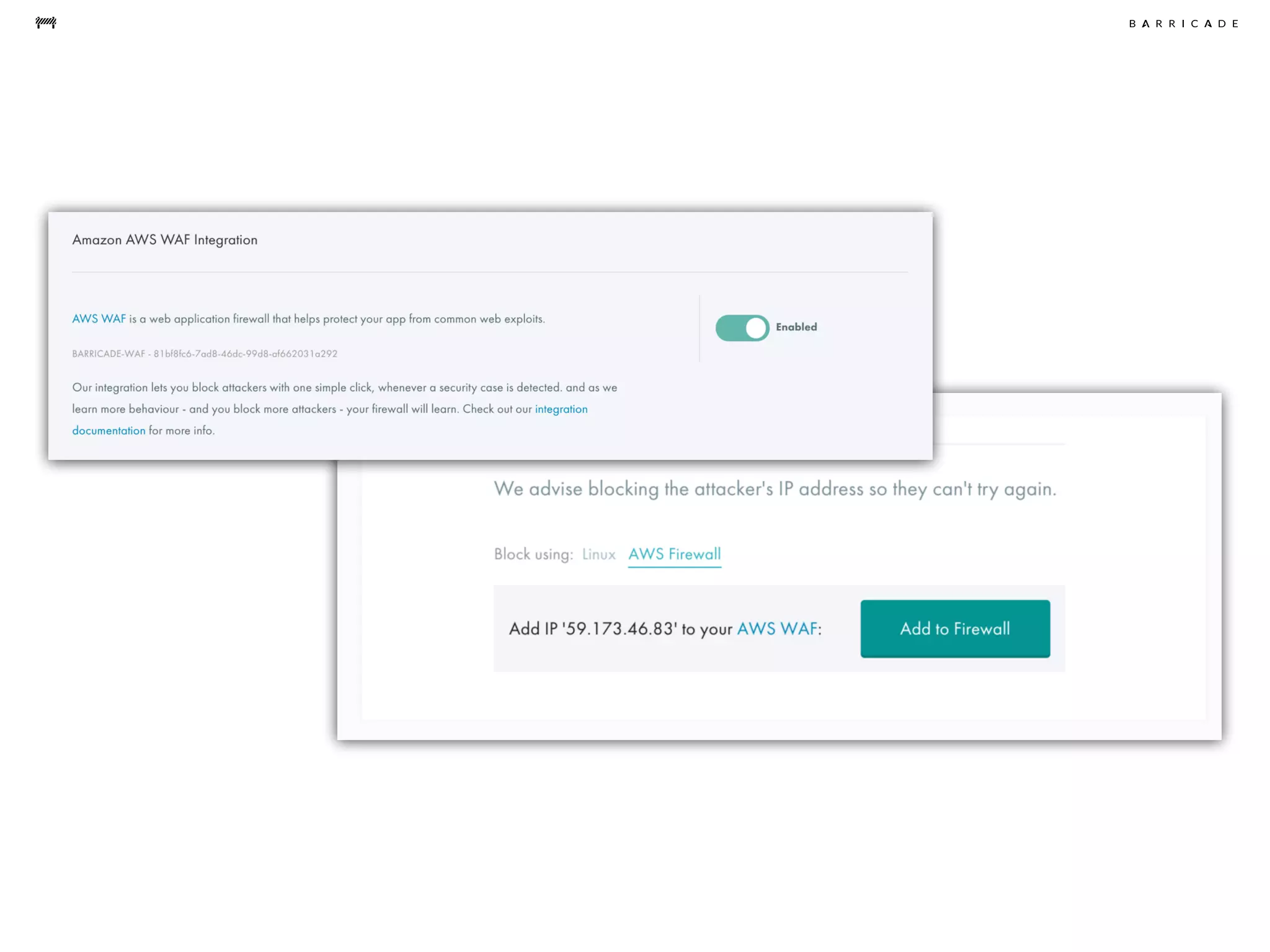

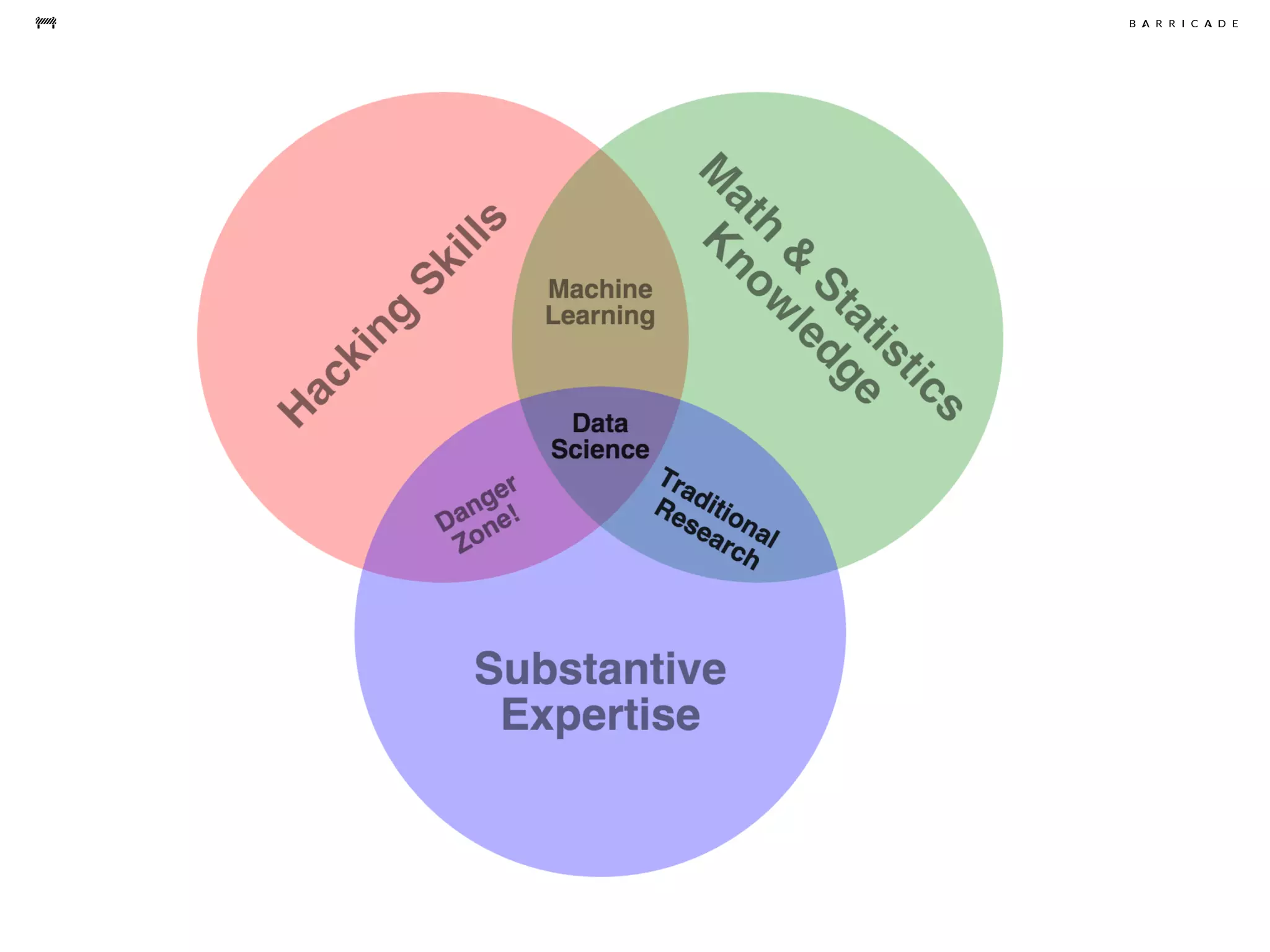

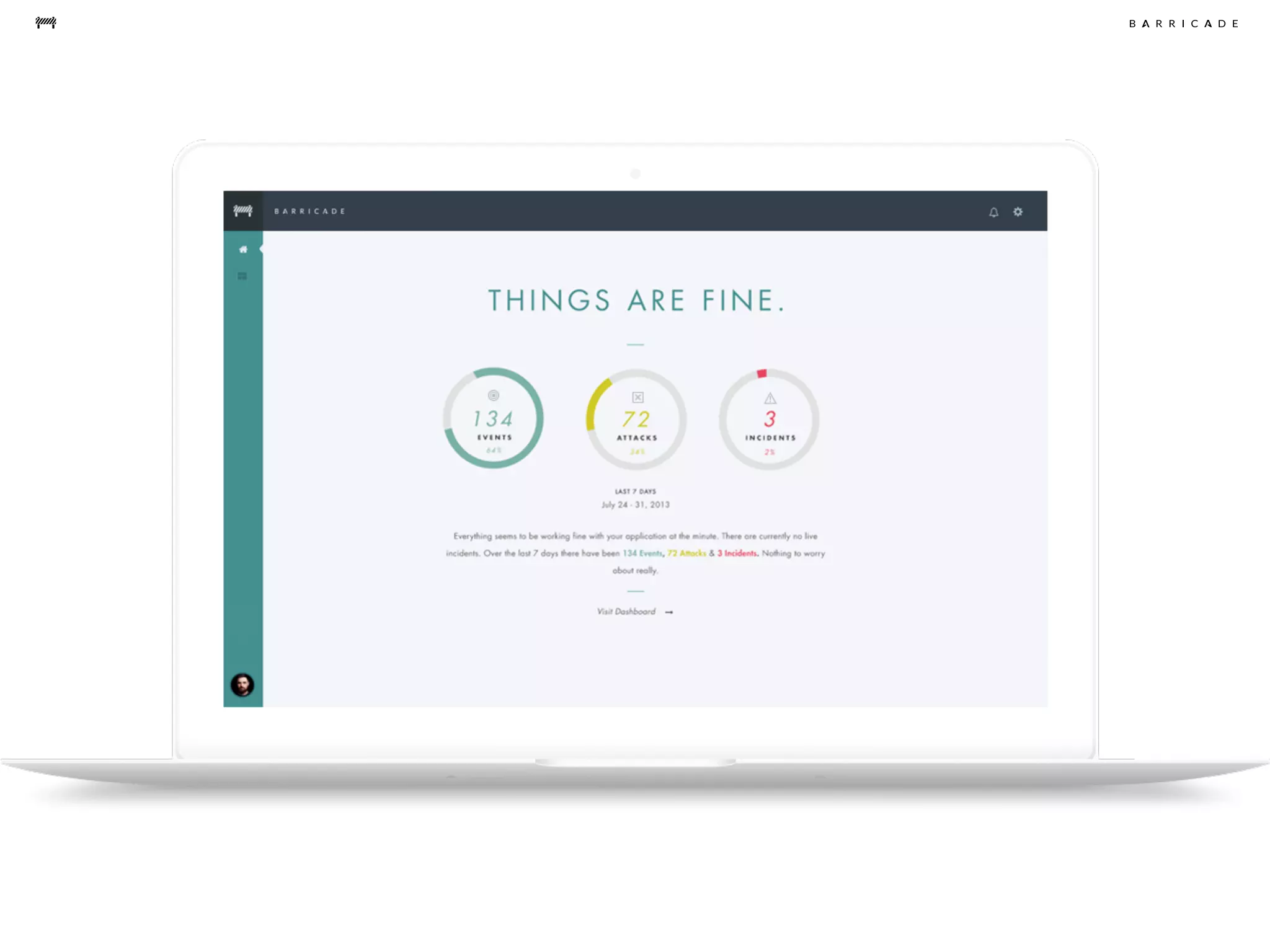

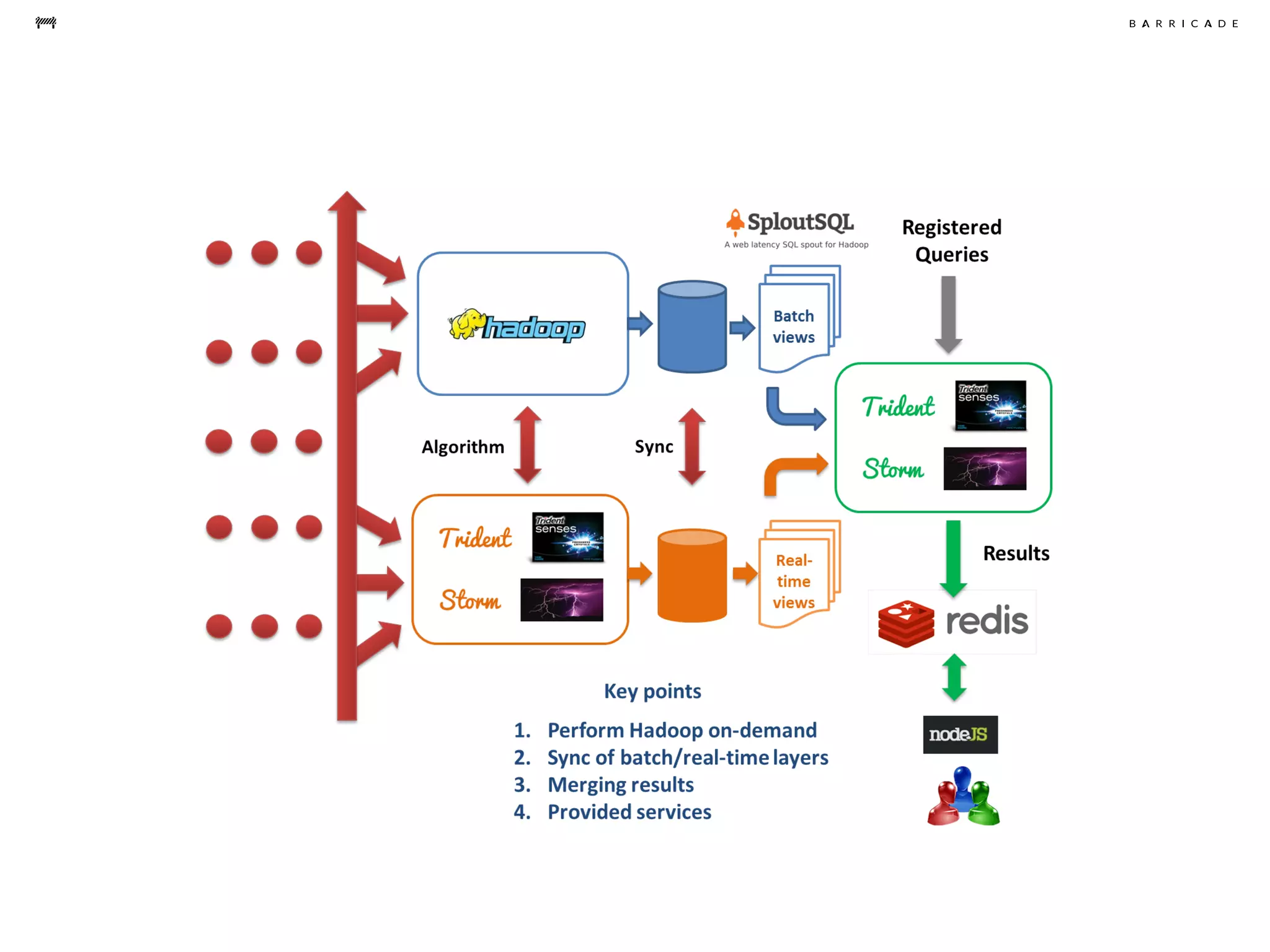

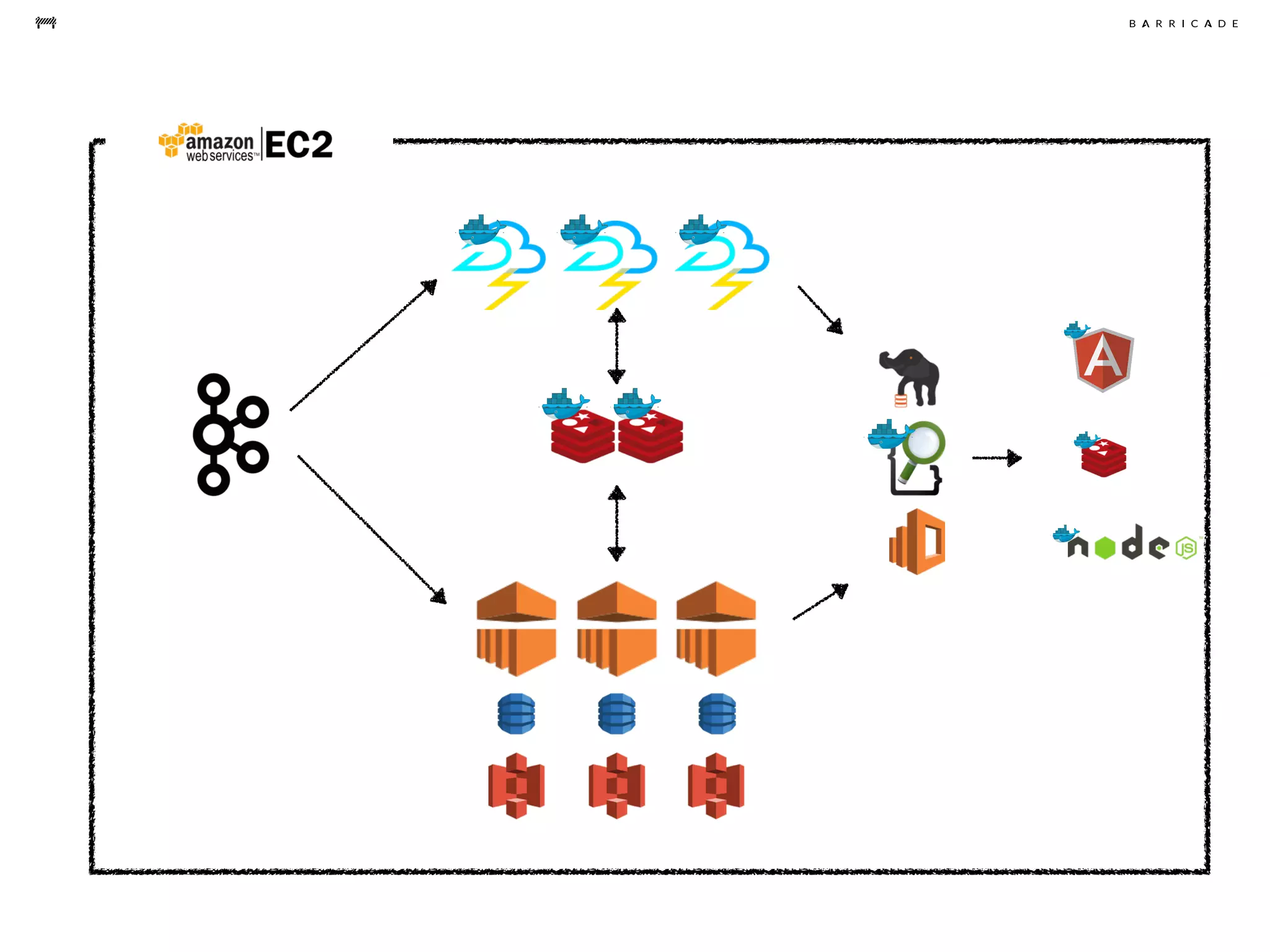

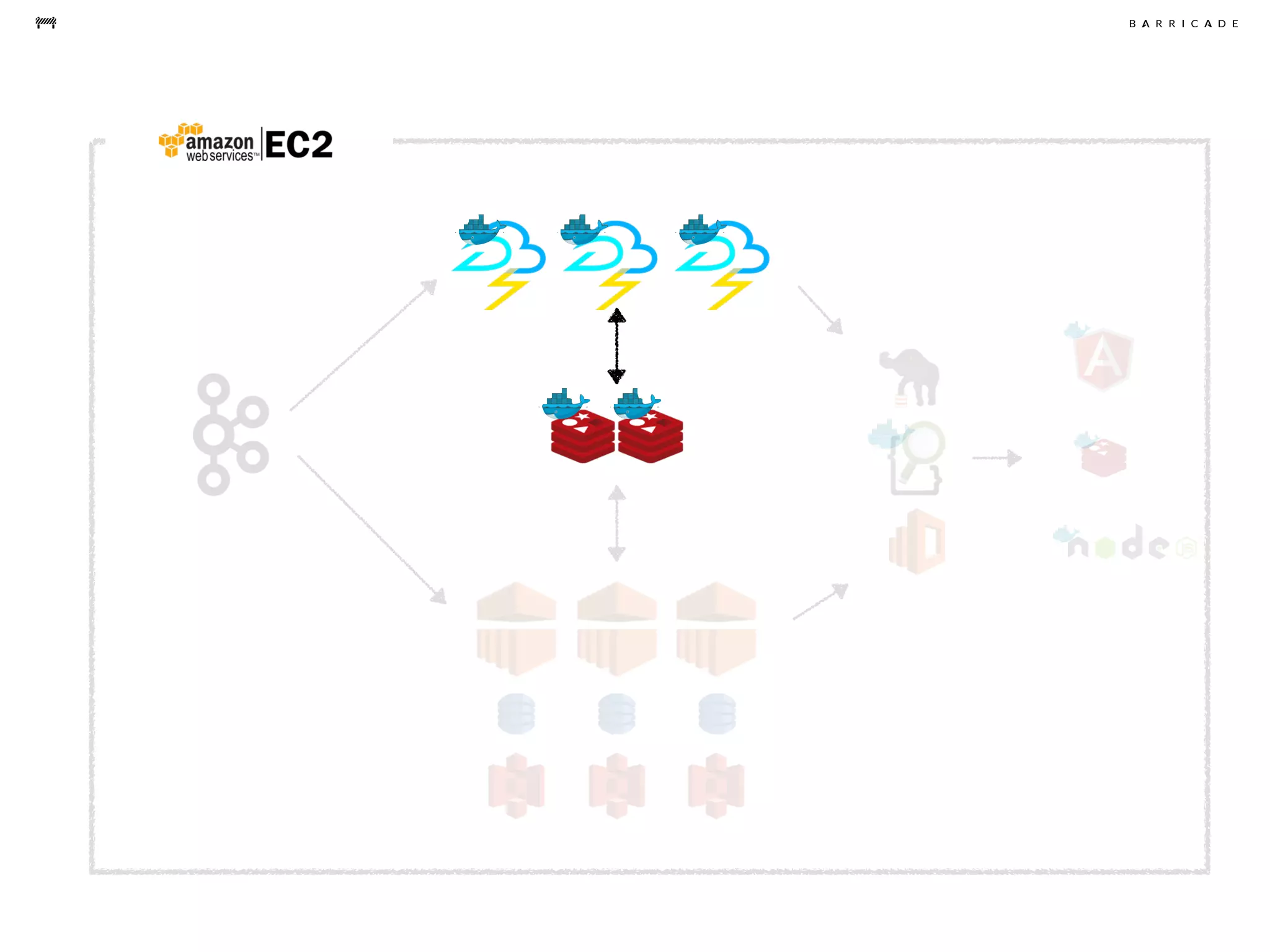

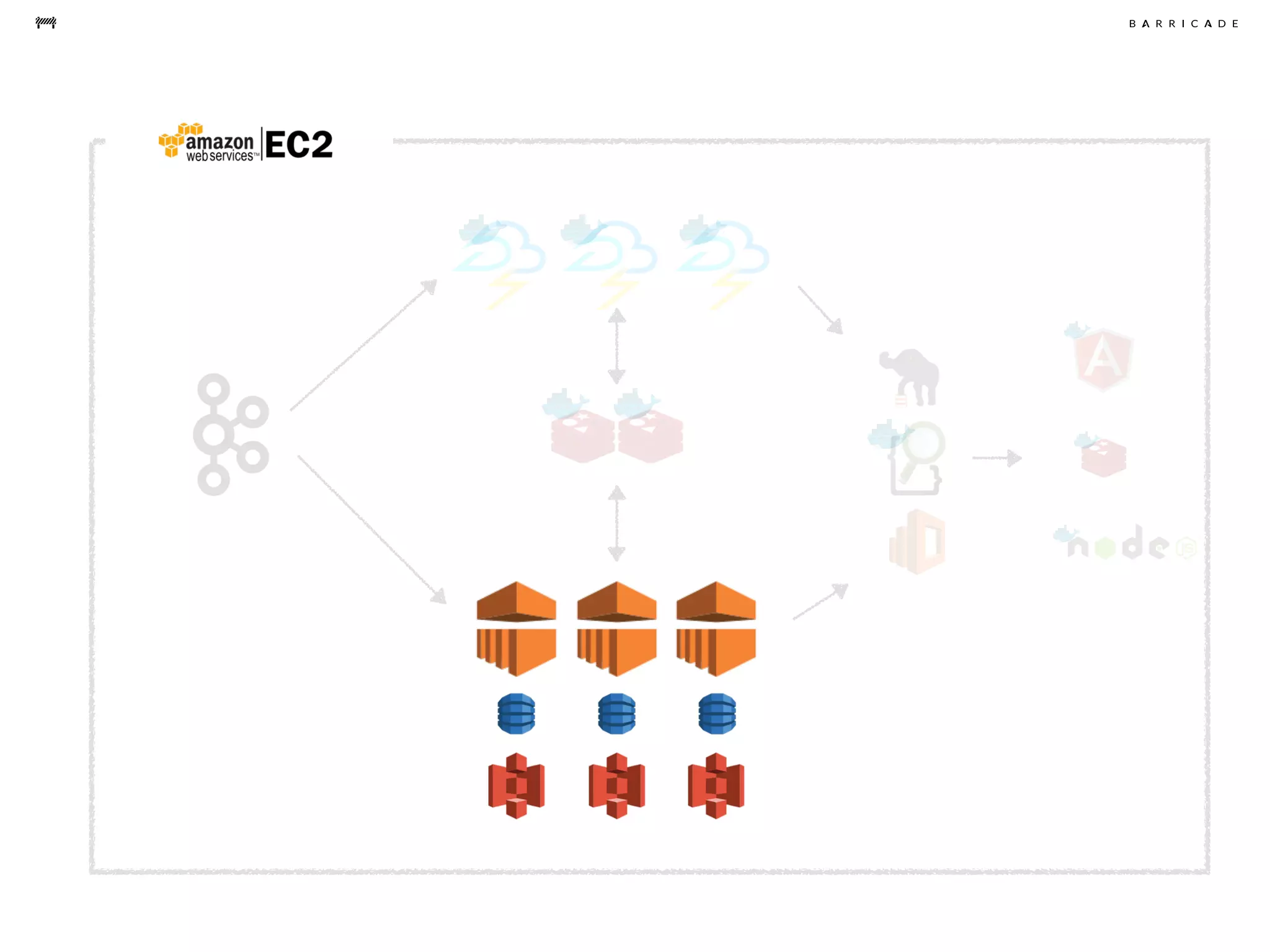

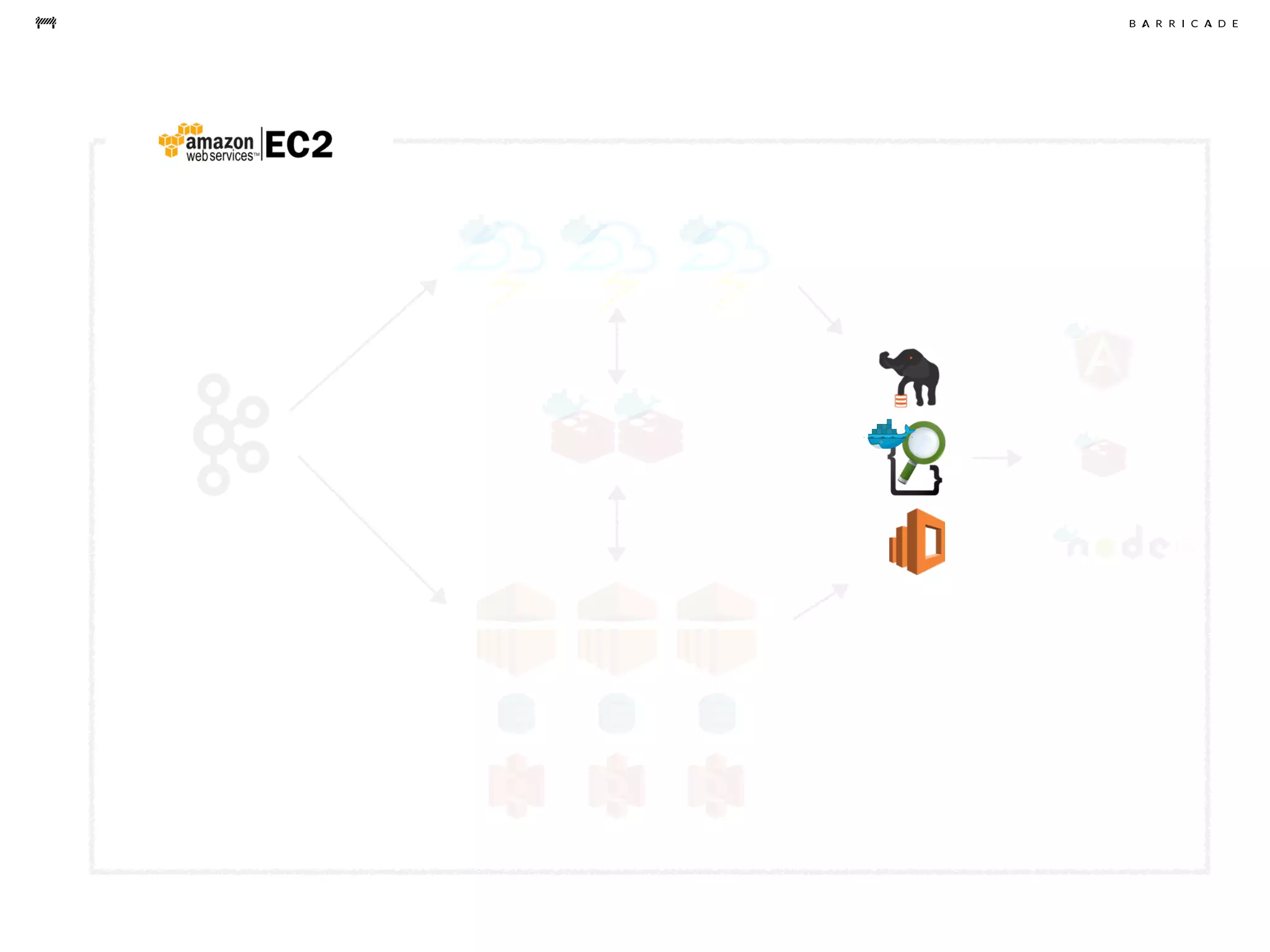

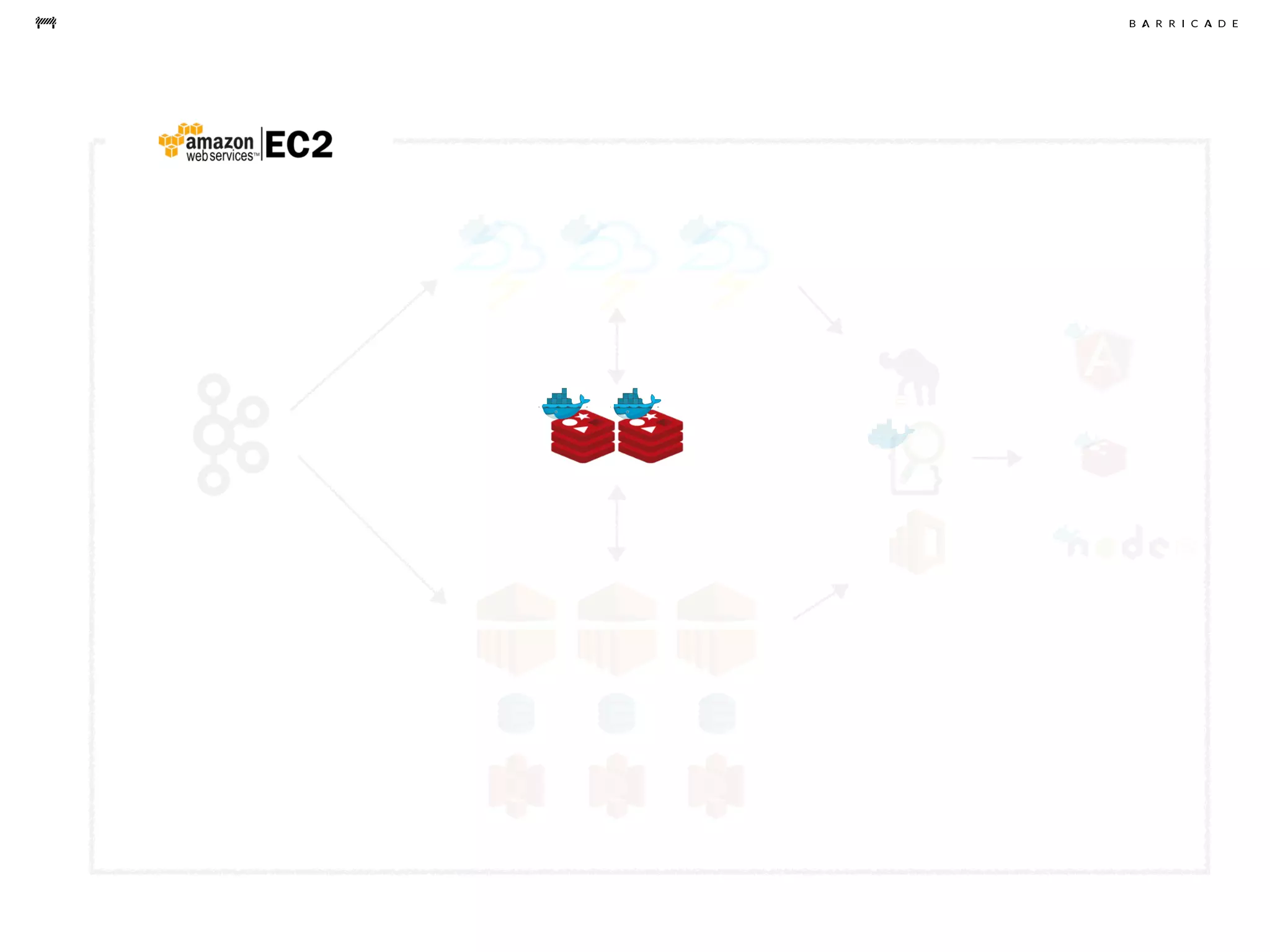

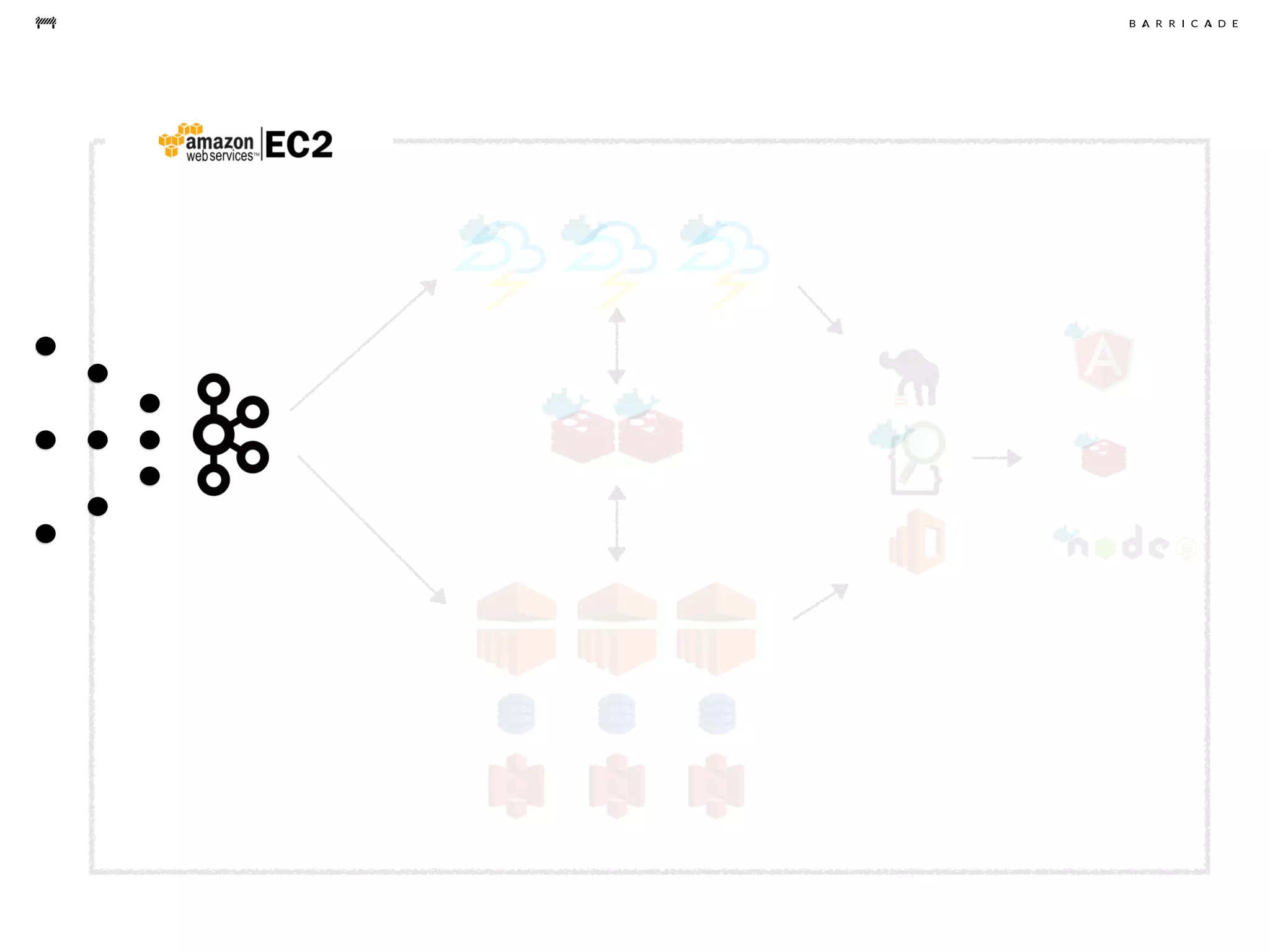

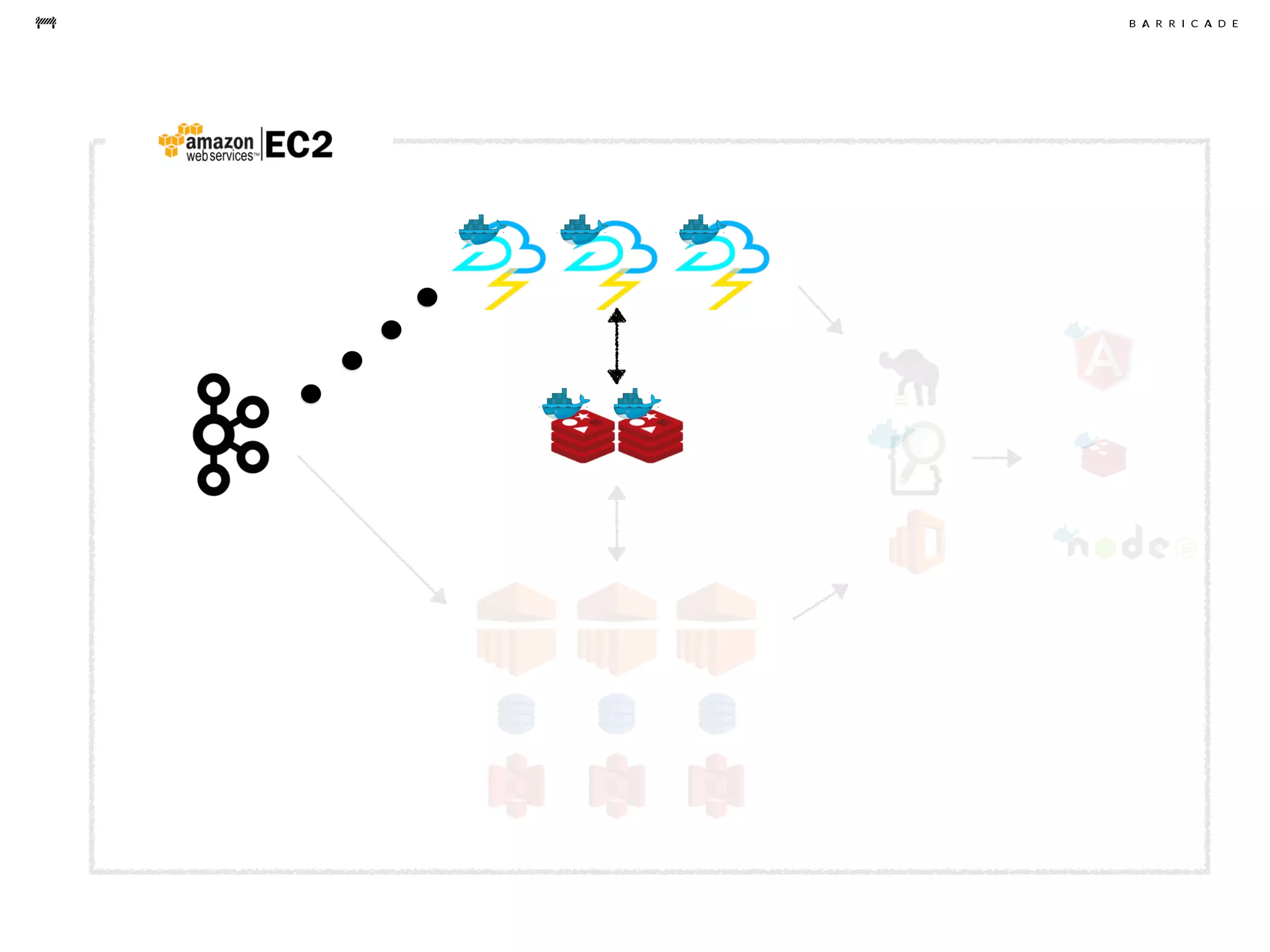

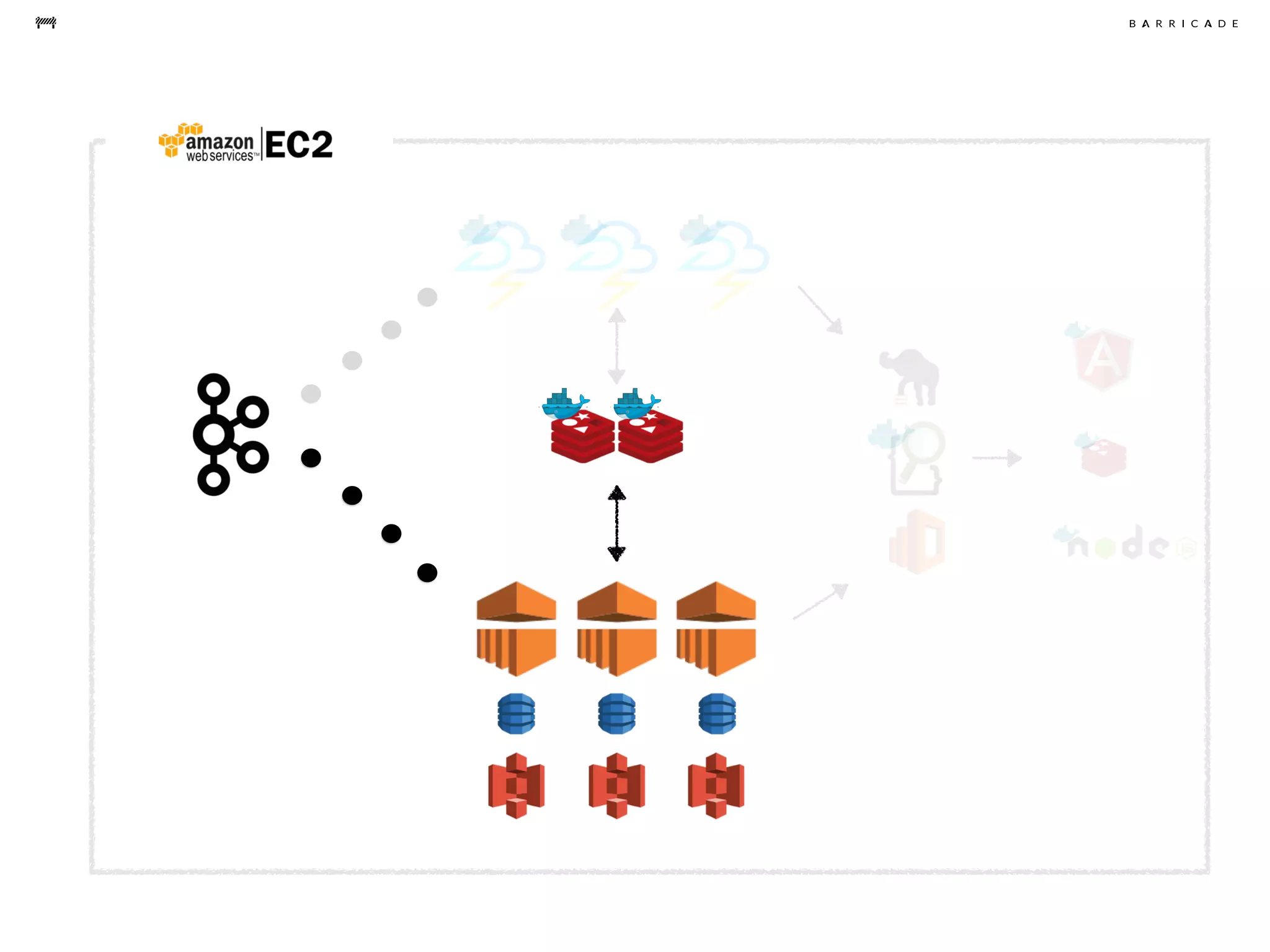

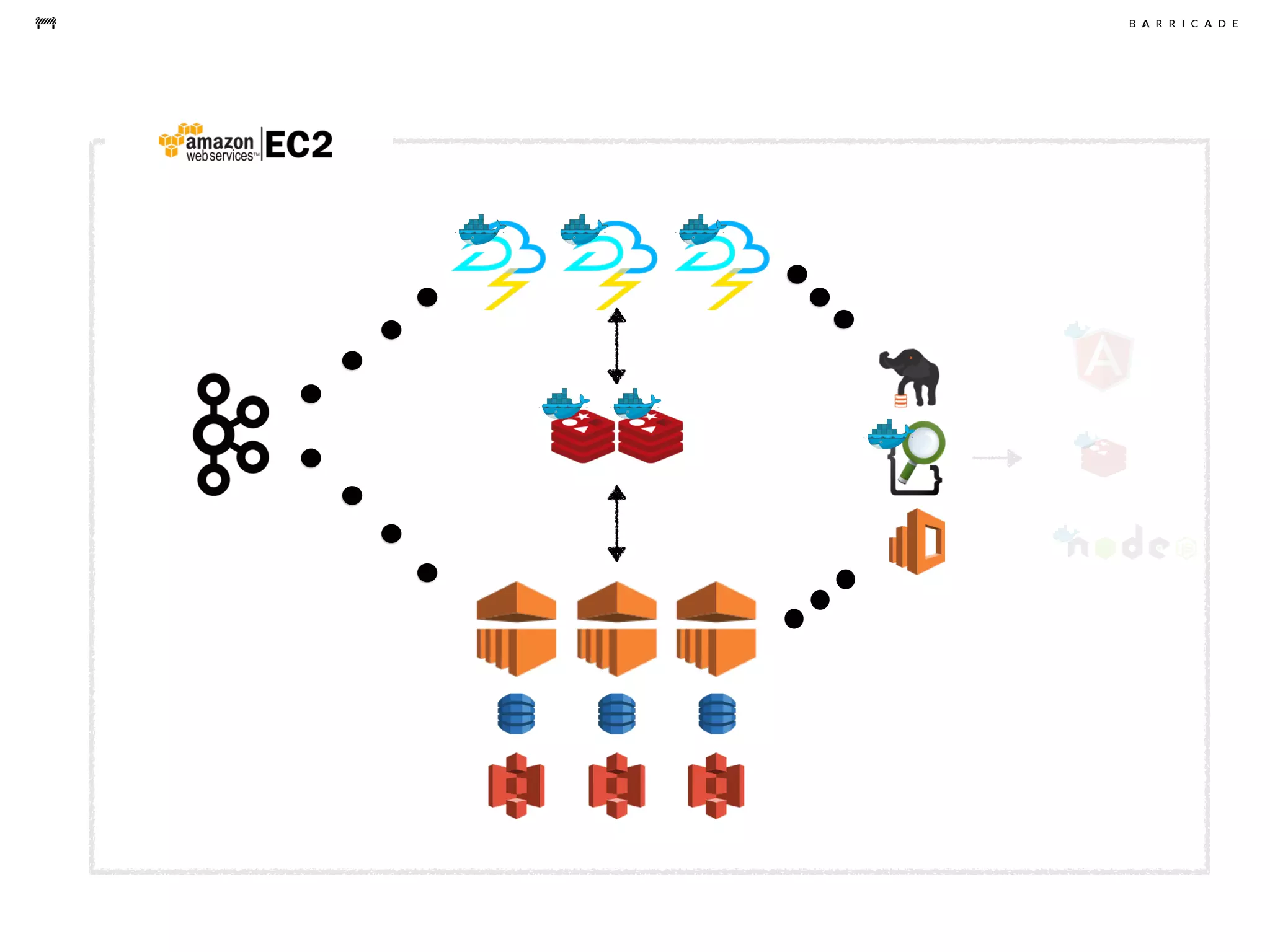

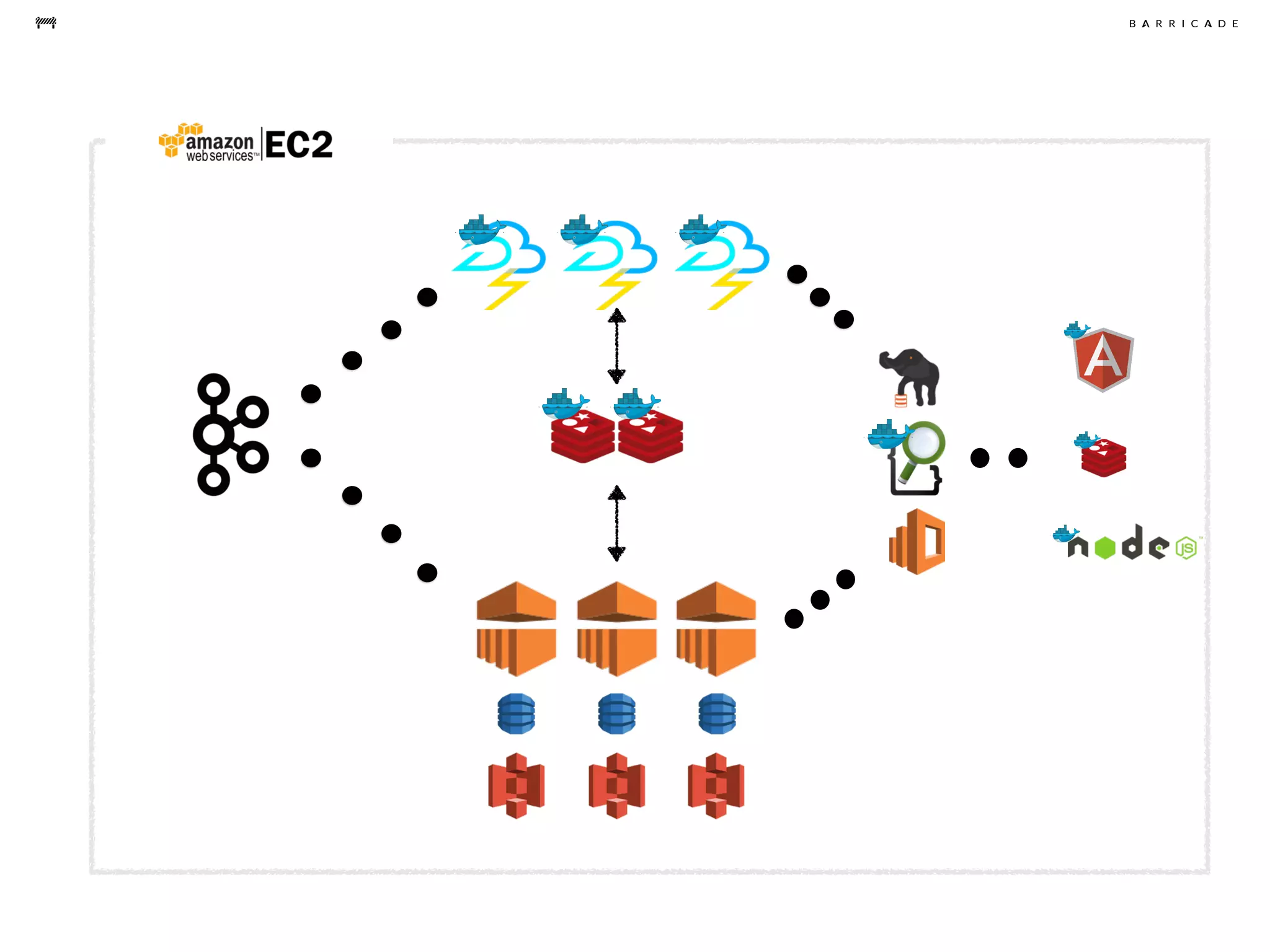

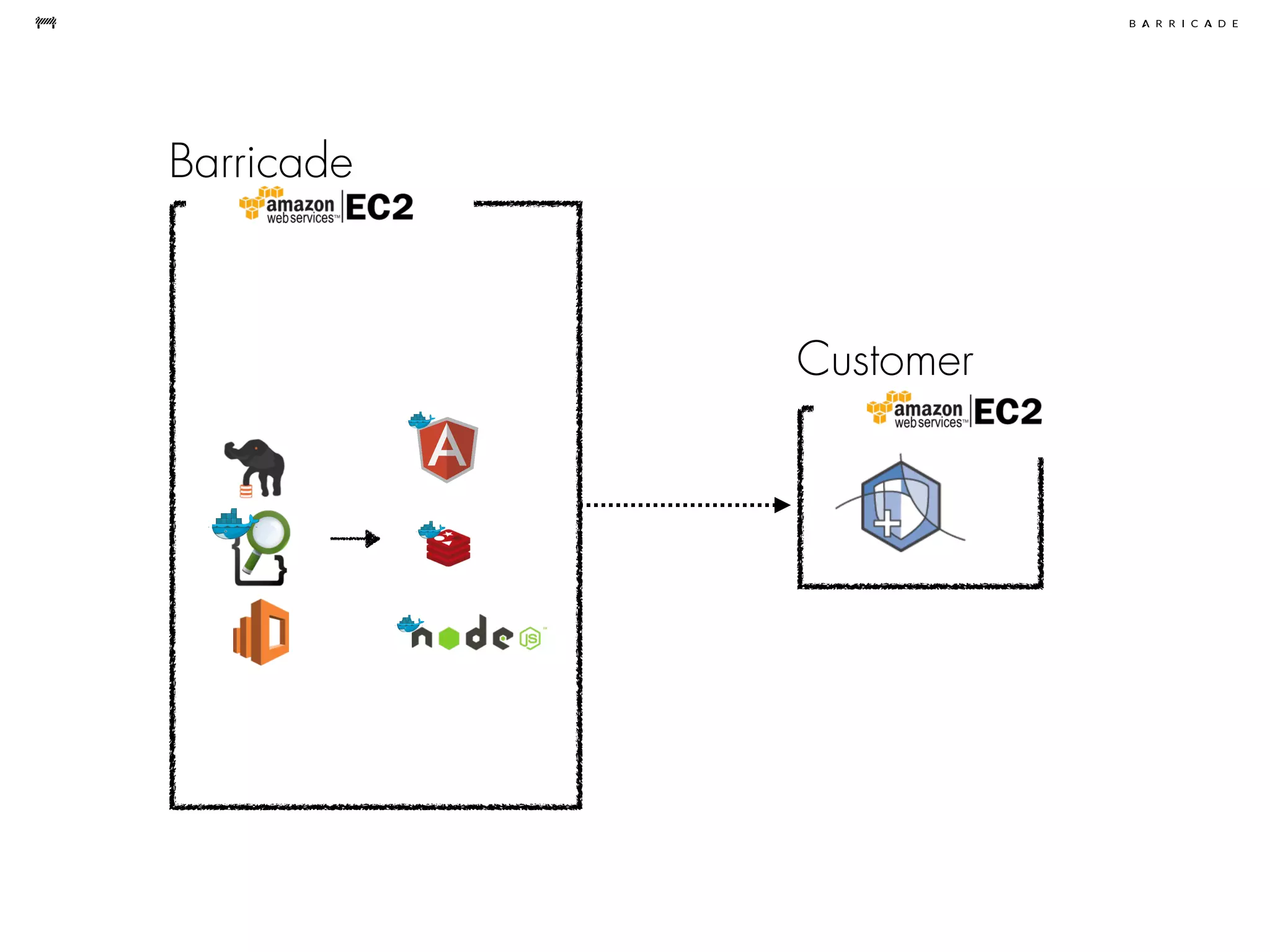

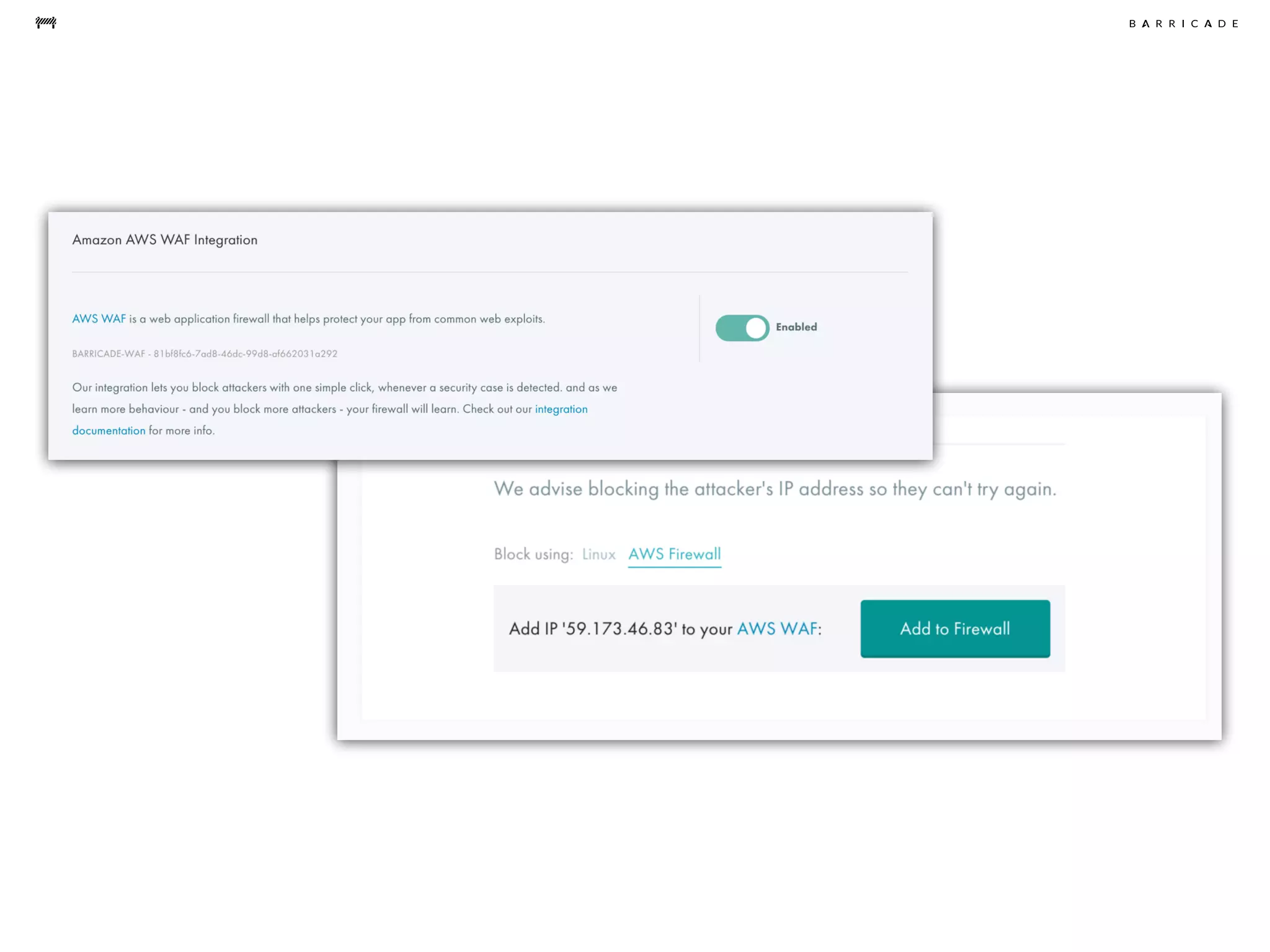

The presentation discusses the challenges of data science, emphasizing the need to combine data hacking, analysis, and domain expertise while staying focused on purpose. It outlines a pragmatic approach of asking questions, analyzing data, and sharing findings within a collaborative team structure, particularly in the context of fraud prevention. The author highlights the complexities of scaling data science at barricade.io, mentioning the use of a lambda architecture and distributed messaging systems for effective data handling.