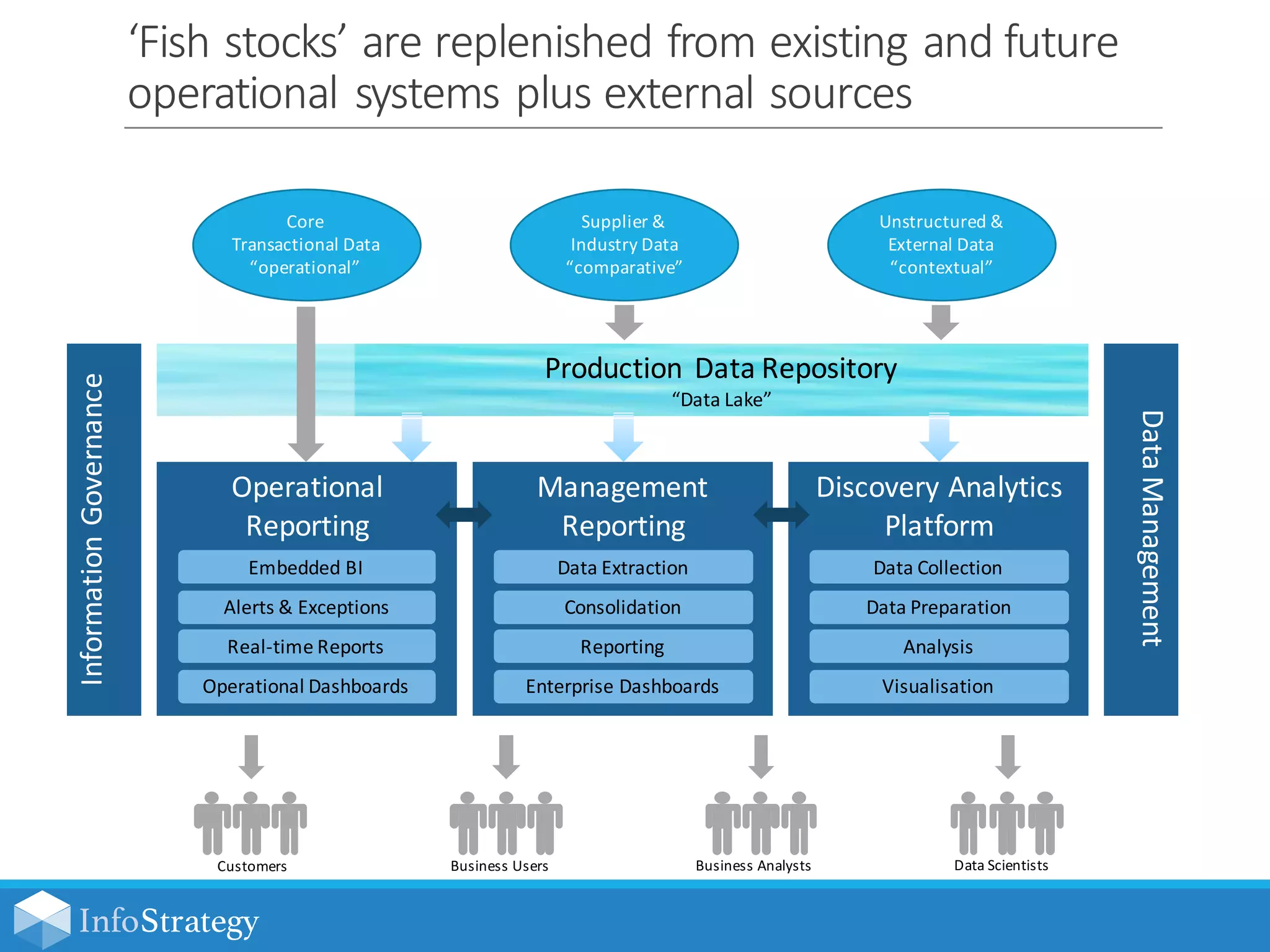

The document discusses the concept of a data lake: a vast repository designed to store big data with all of its attributes, so that the data remains available for future exploration and analysis. It emphasizes the role of advanced analytics in optimizing business operations through insight, supported by a flexible, multi-platform architecture that provides rapid access to data. The text also highlights the need for information governance and security standards to manage large data sets effectively and extract value from them.