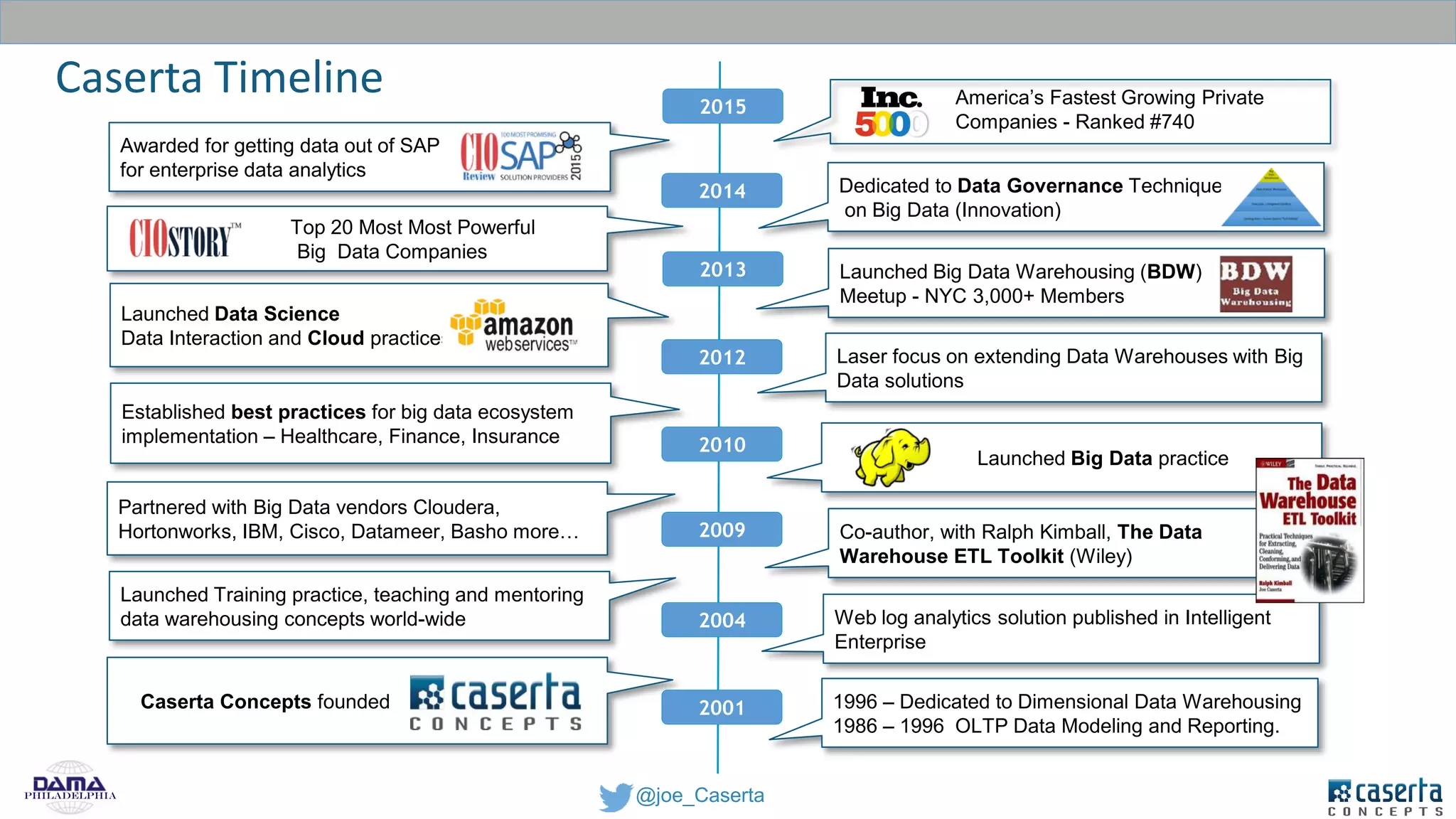

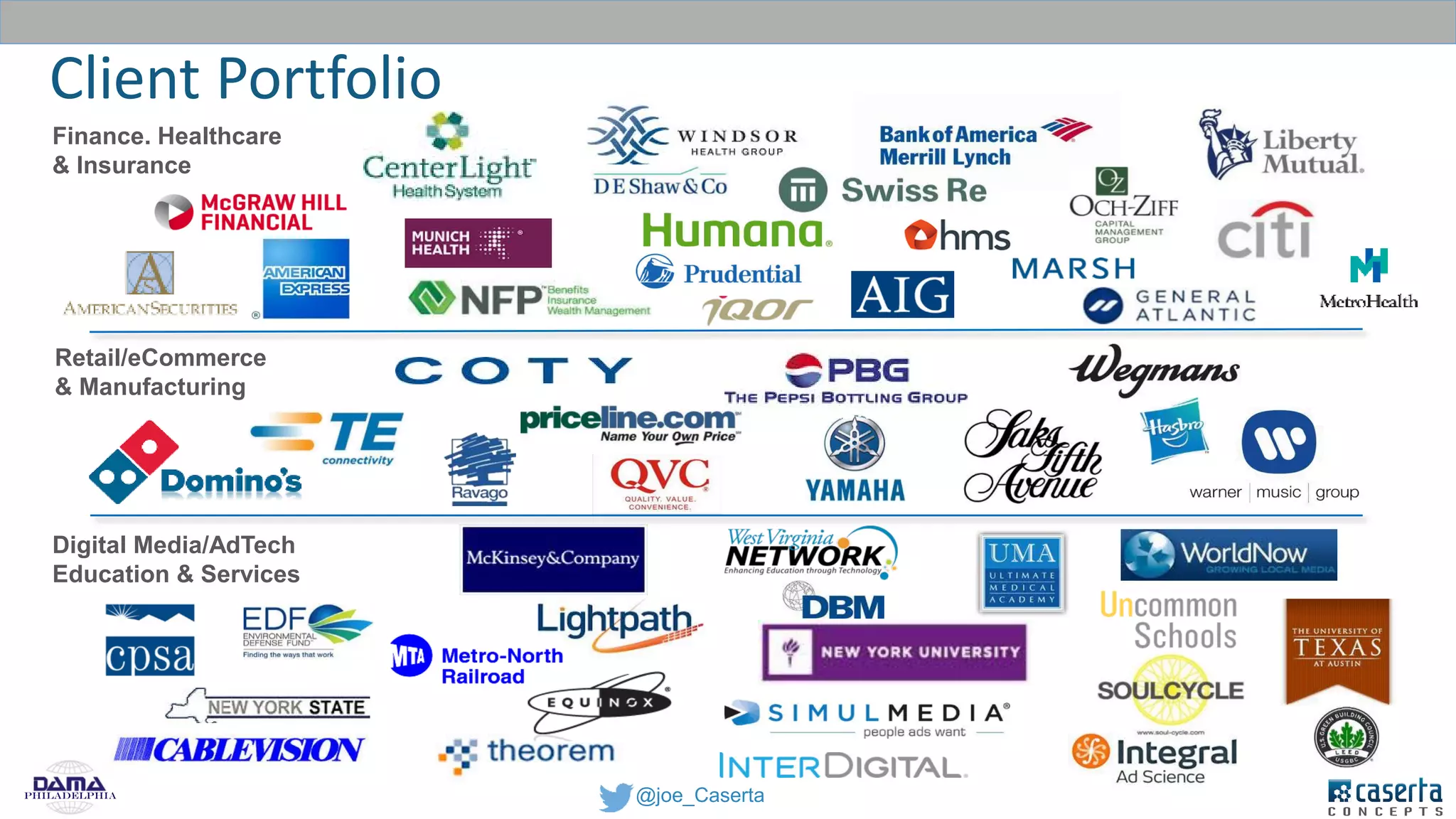

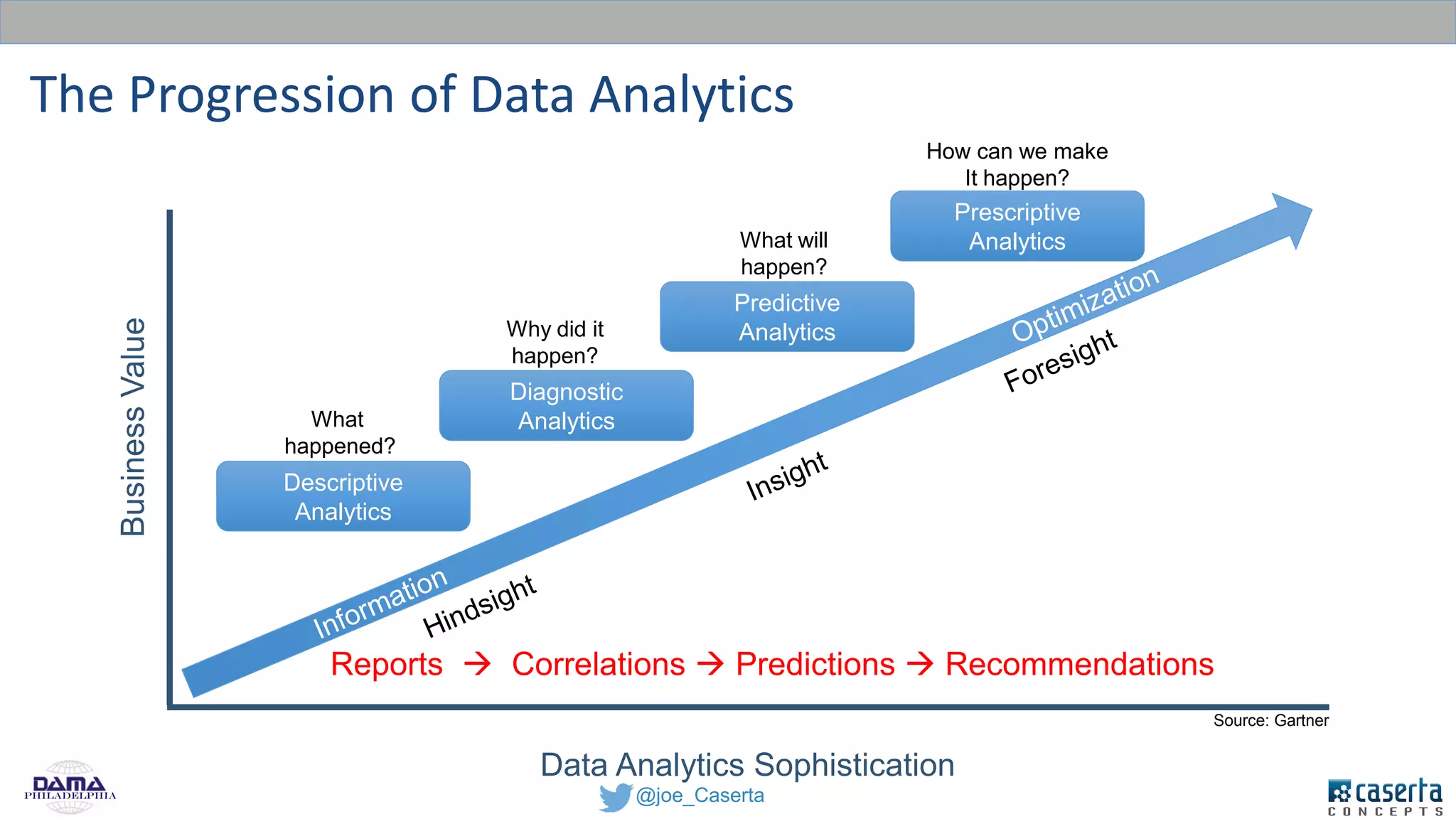

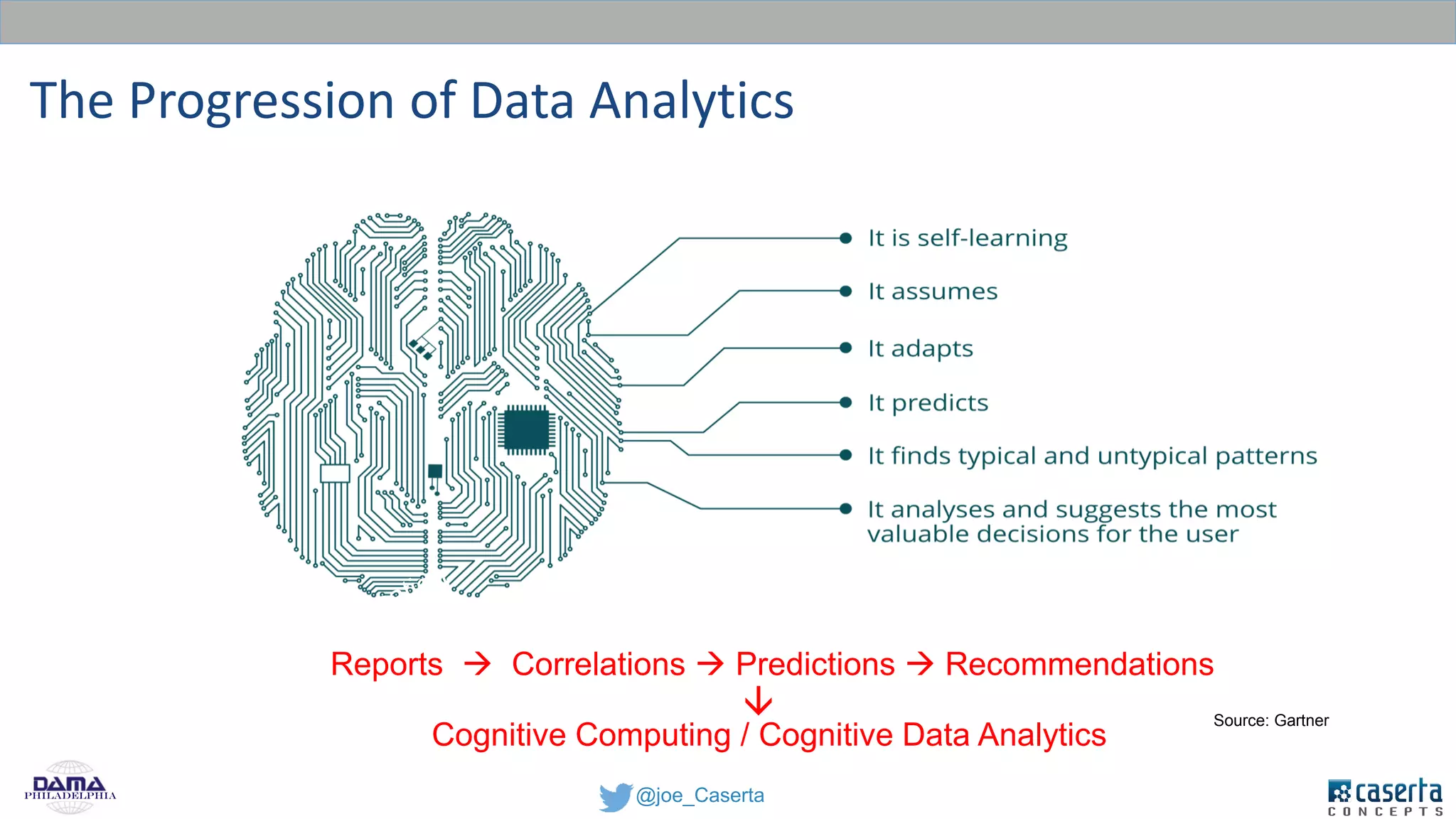

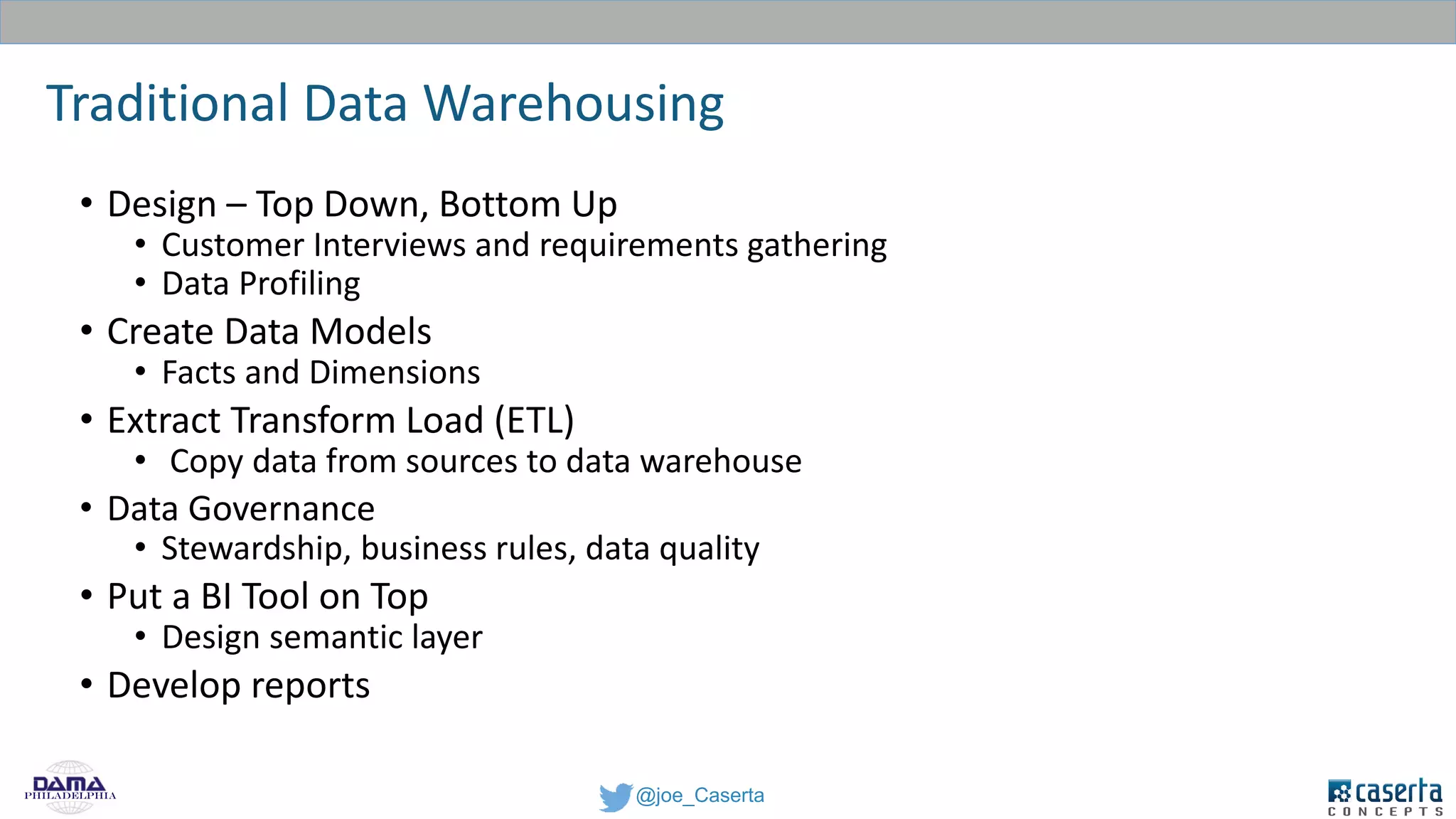

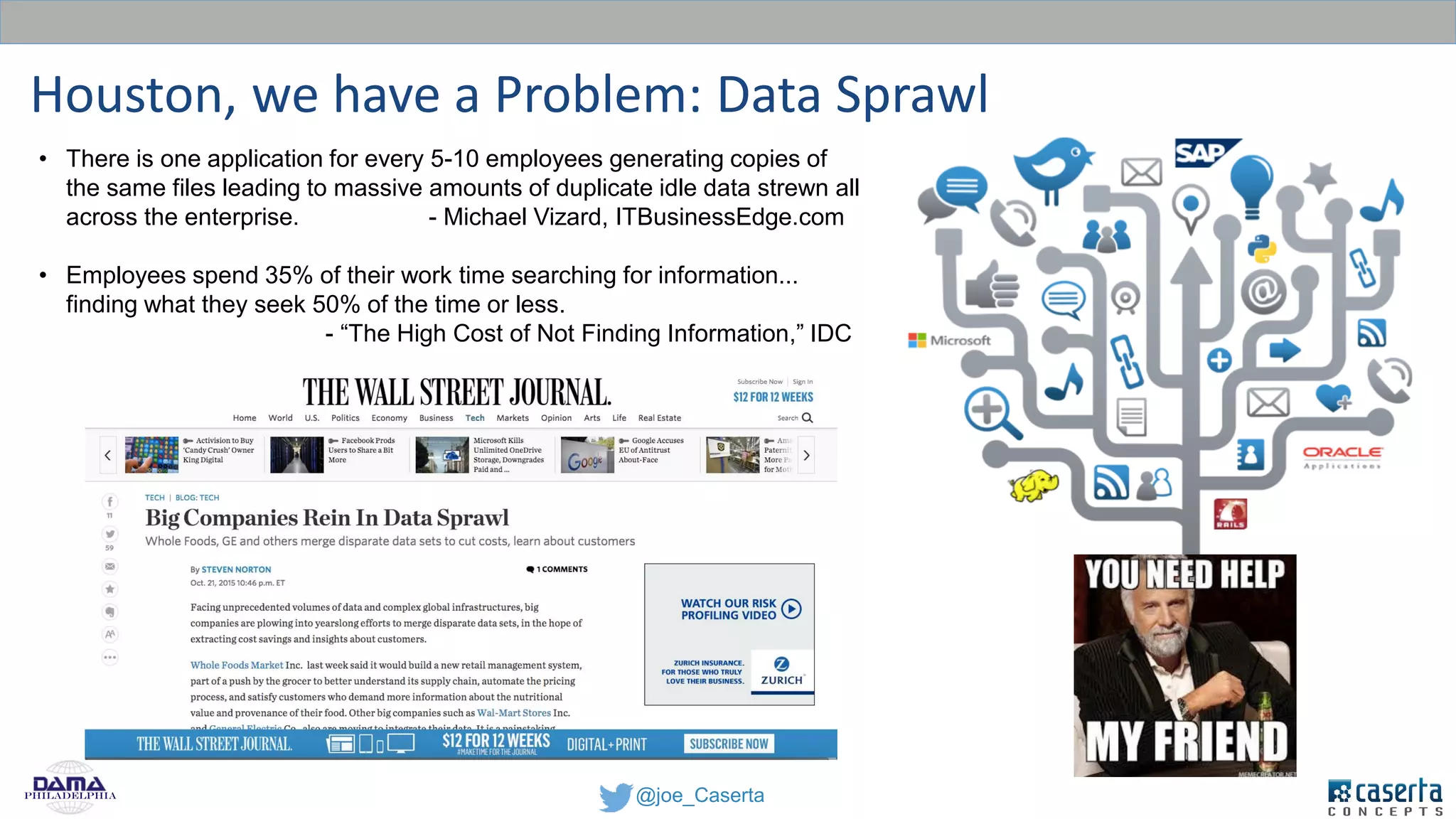

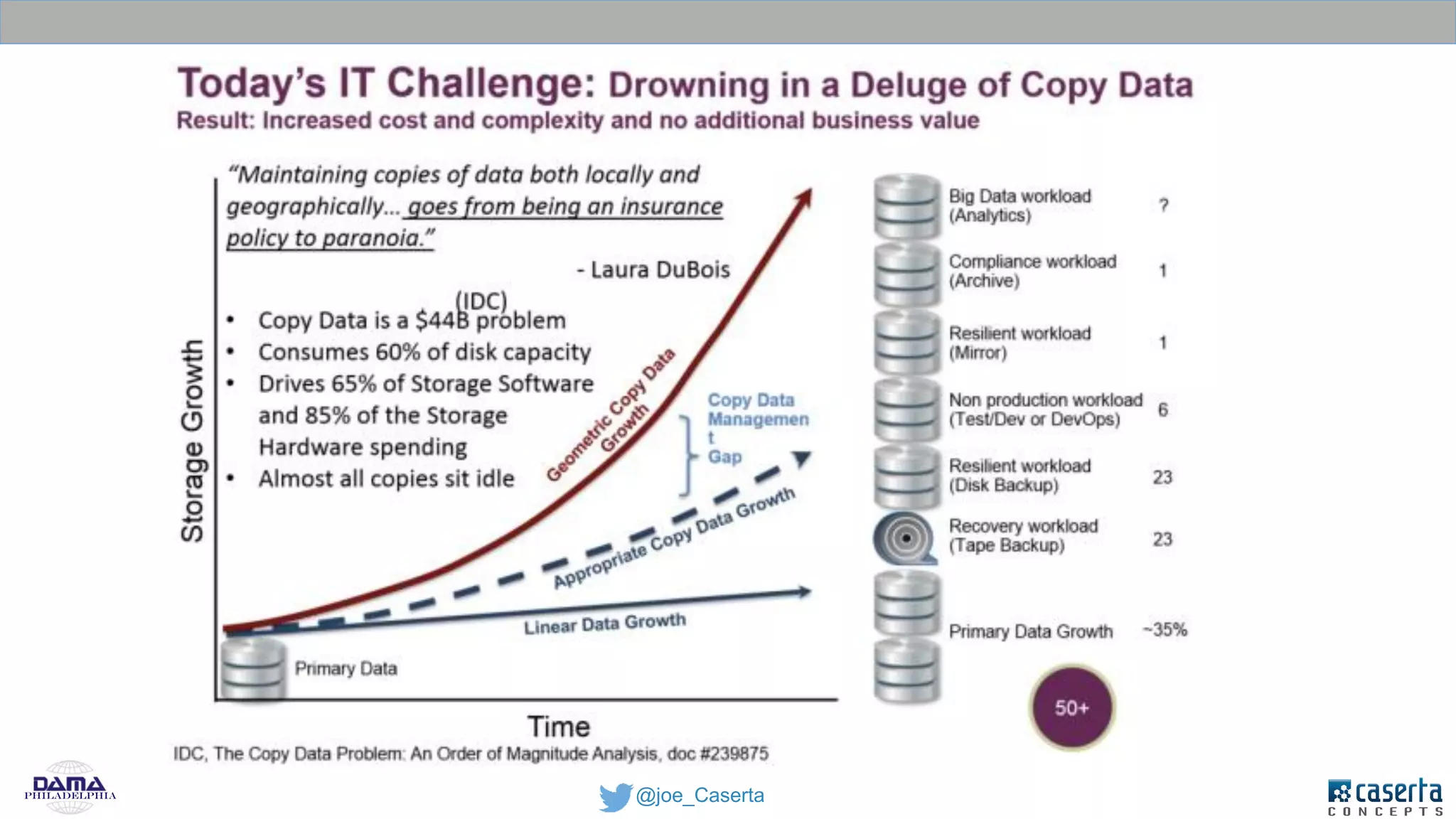

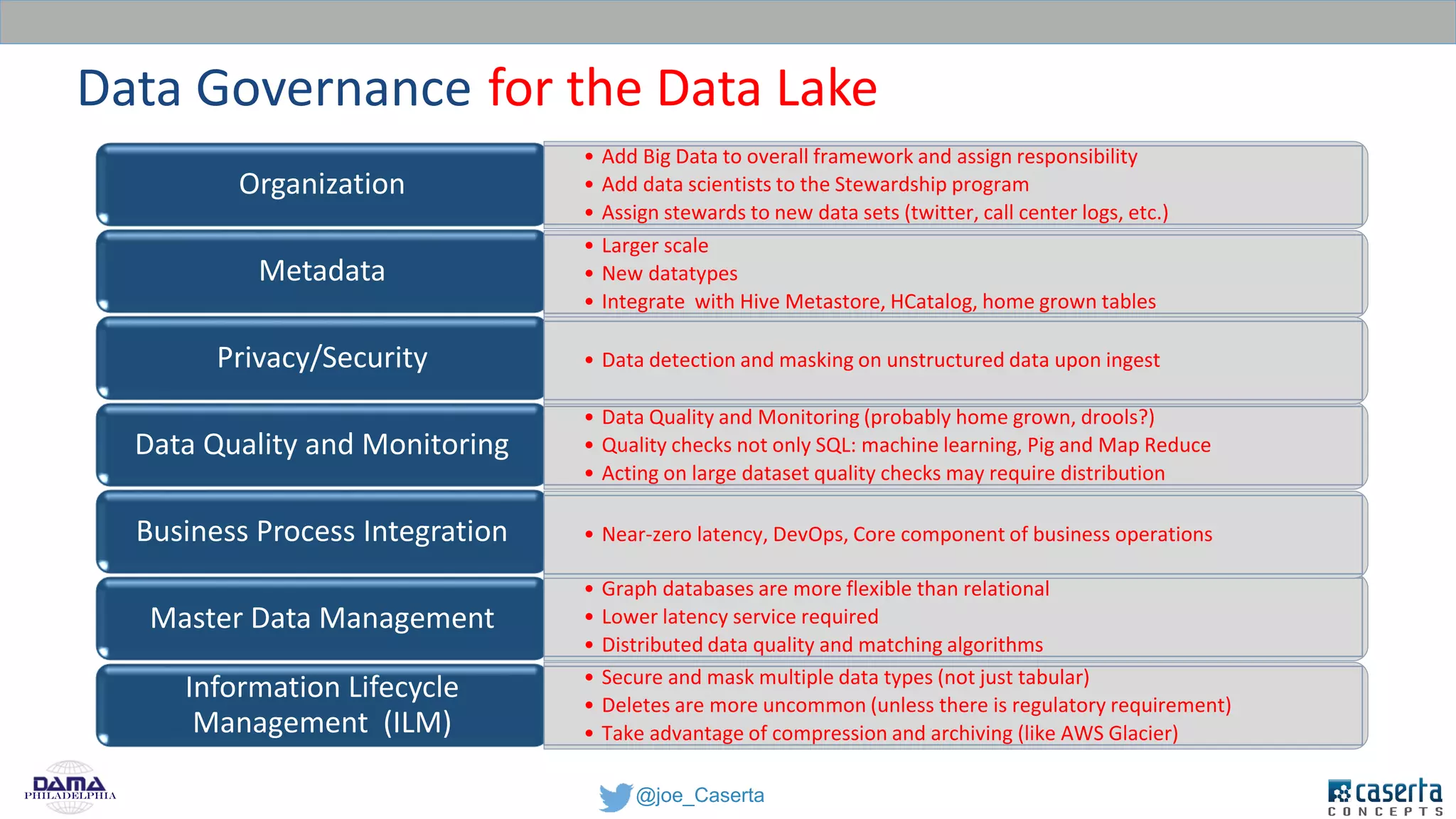

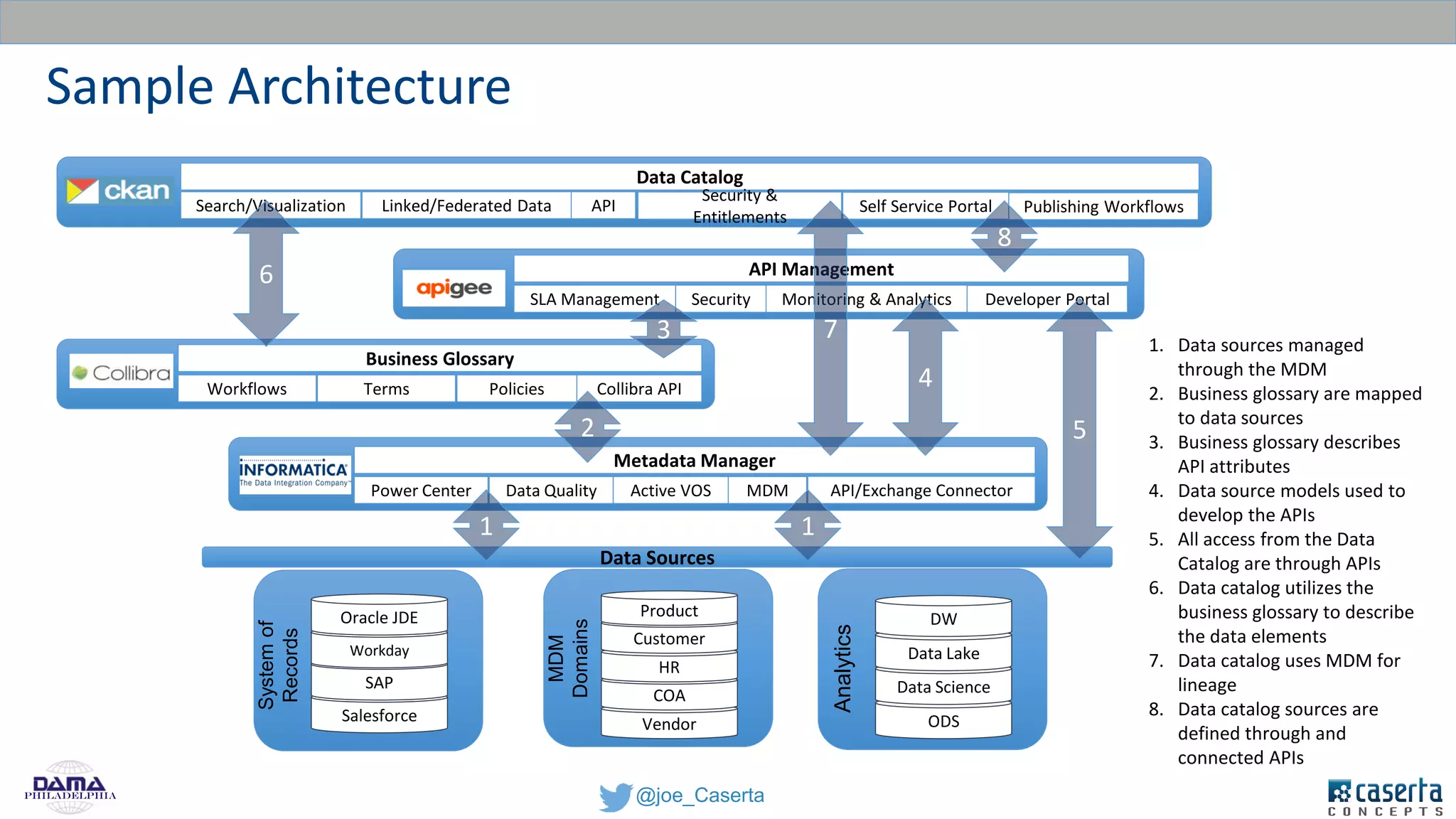

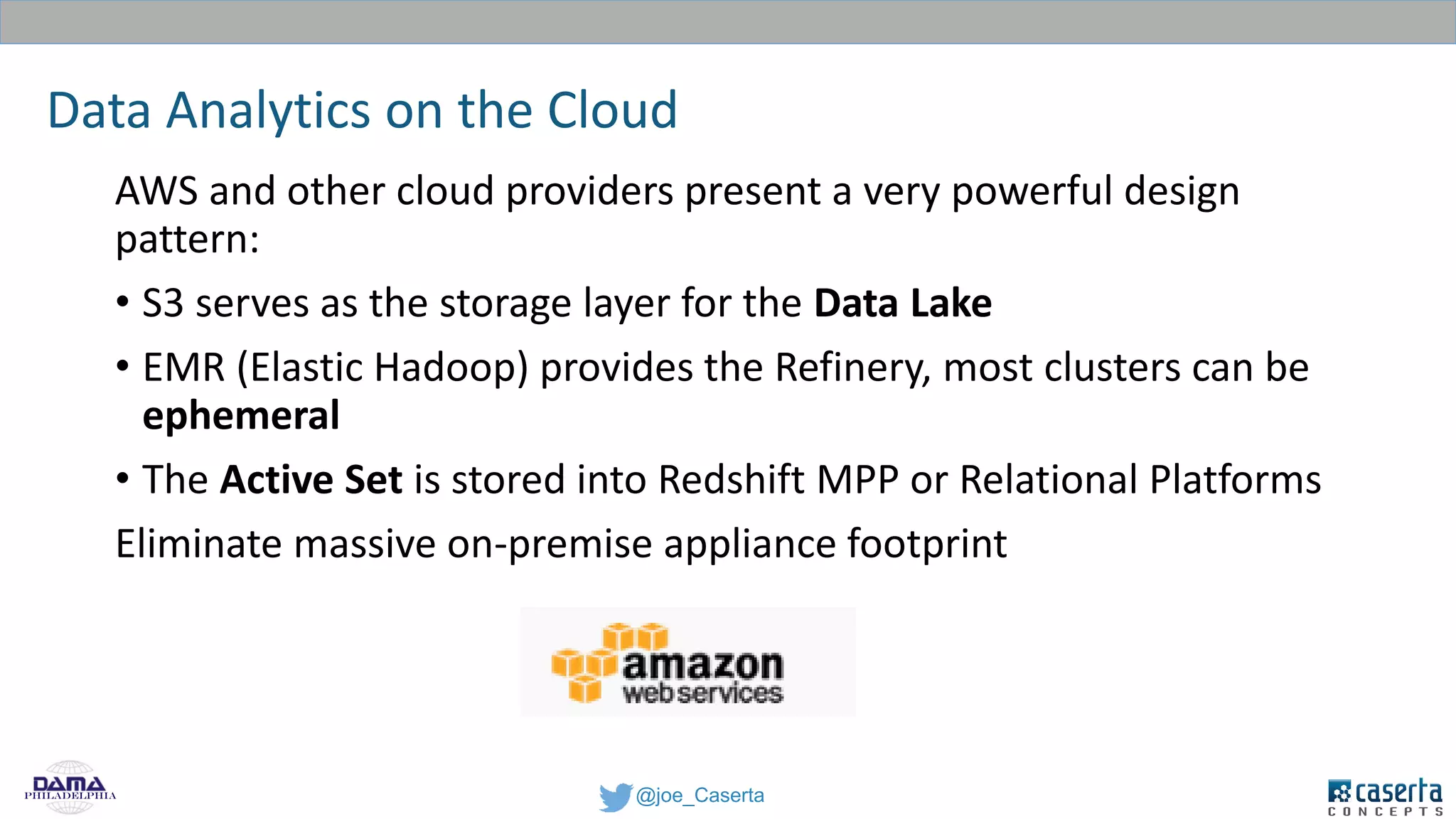

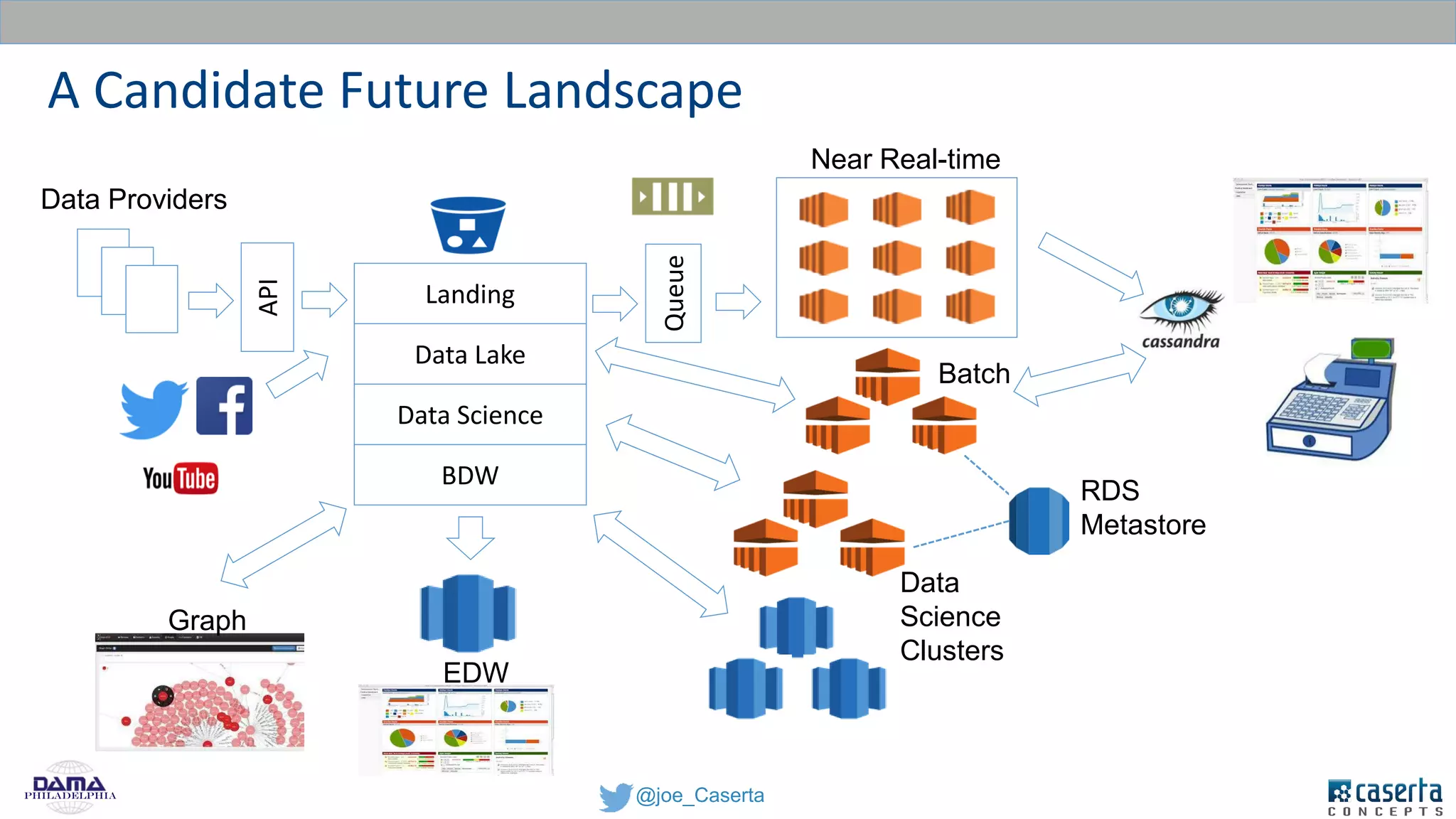

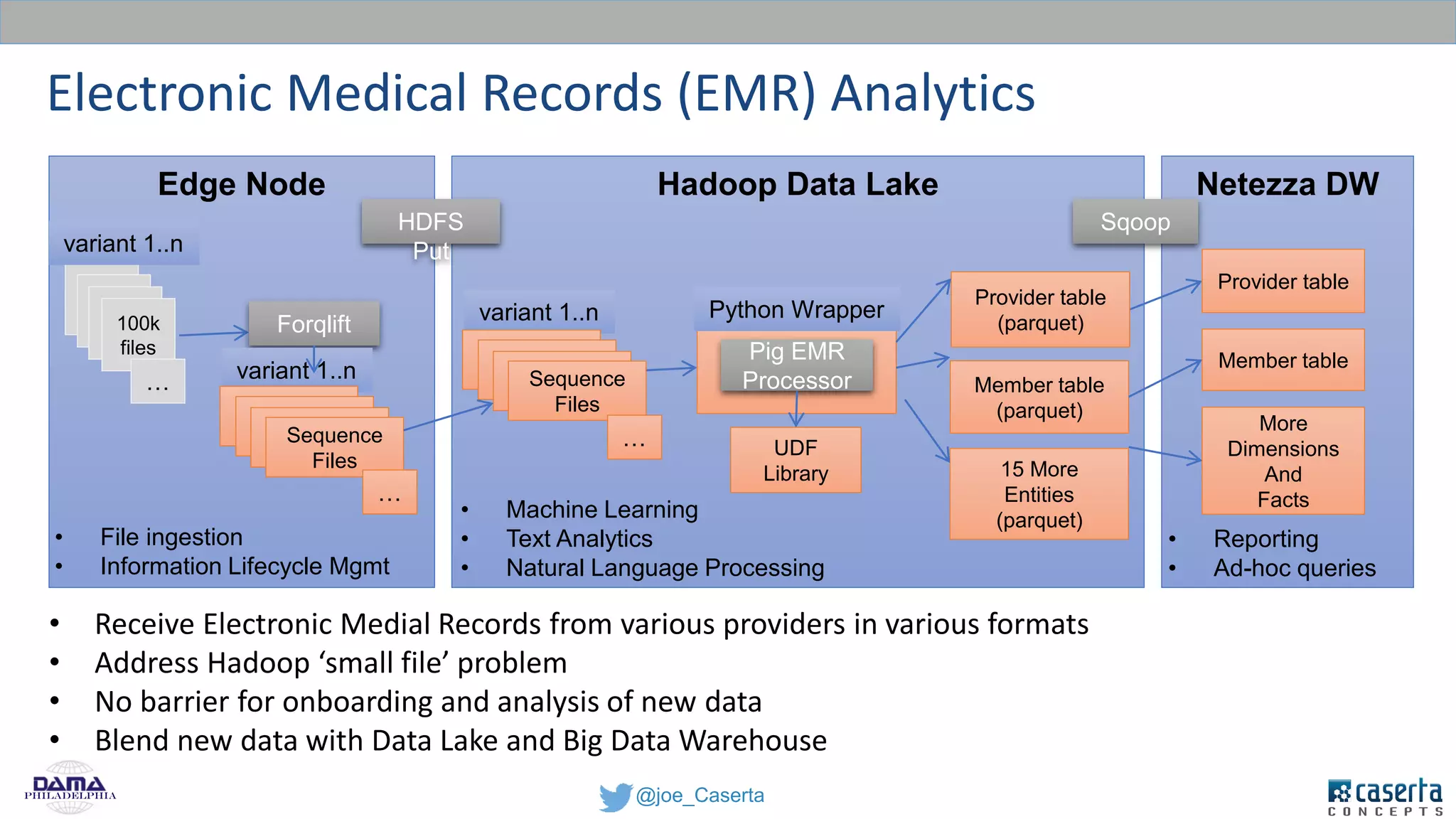

Caserta Concepts, founded by Joe Caserta, is a consulting firm that specializes in data innovation and modern data engineering and helps businesses solve complex data challenges. The company has launched several initiatives, including a big data practice and training programs, and is recognized for its work in data governance, analytics, and cloud solutions. With a focus on evolving data analytics and governance techniques, Caserta Concepts aims to address issues such as data sprawl and to improve data integration across sectors.