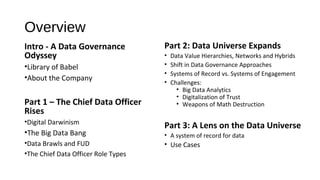

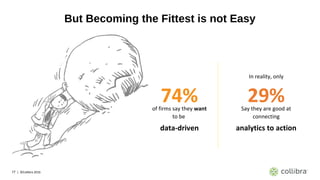

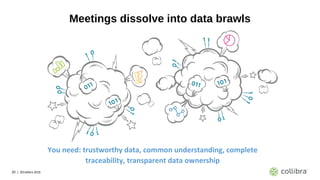

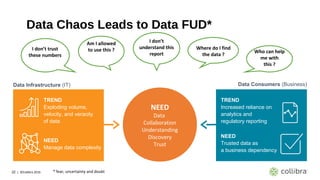

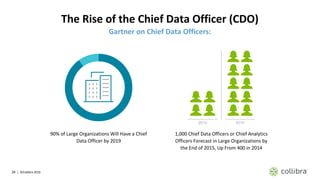

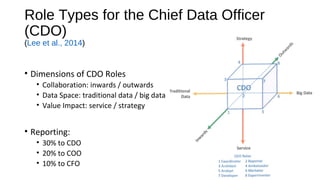

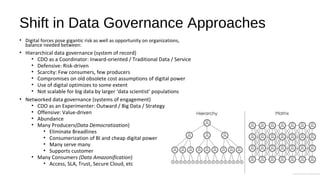

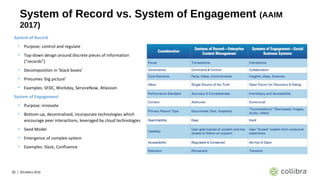

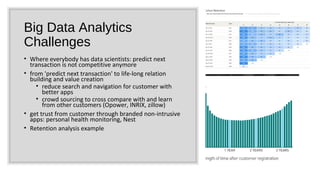

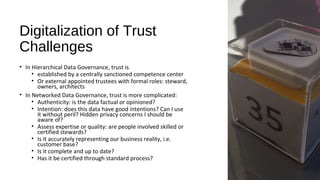

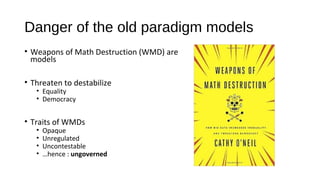

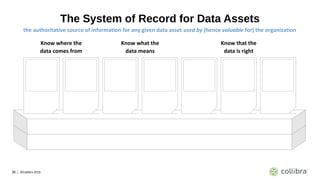

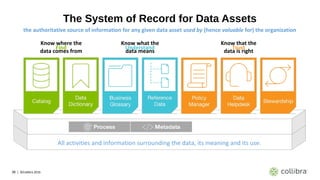

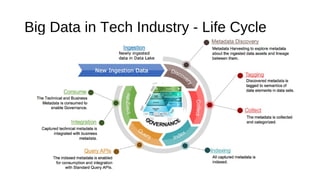

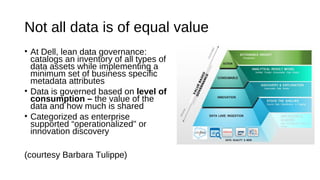

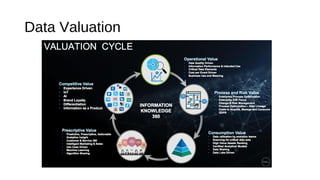

This document discusses data governance challenges in the era of big data and proposes solutions. It begins by outlining the rise of data-driven businesses and the data quality, access, and trust issues they face, which have driven the emergence of the Chief Data Officer role. The document then argues that data governance must shift from hierarchical systems of record toward networked systems of engagement to manage the growing volume and variety of data from sources such as IoT devices and big data analytics. Key challenges discussed include digitalizing trust in data and addressing the risks posed by opaque big data models. The document proposes a hybrid governance approach and a system of record for data assets that provides findability, understandability, and trust for all organizational data. Example use