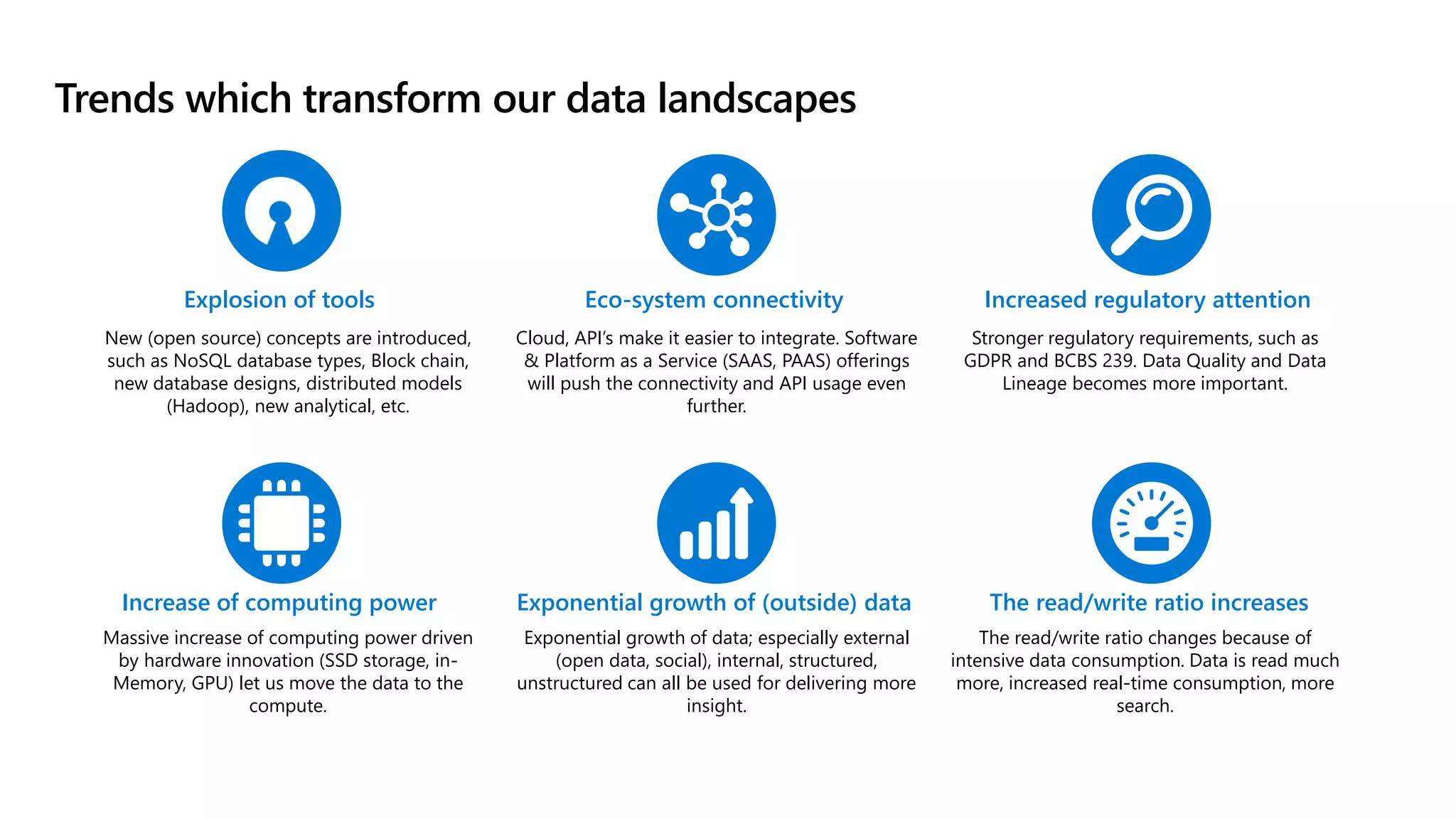

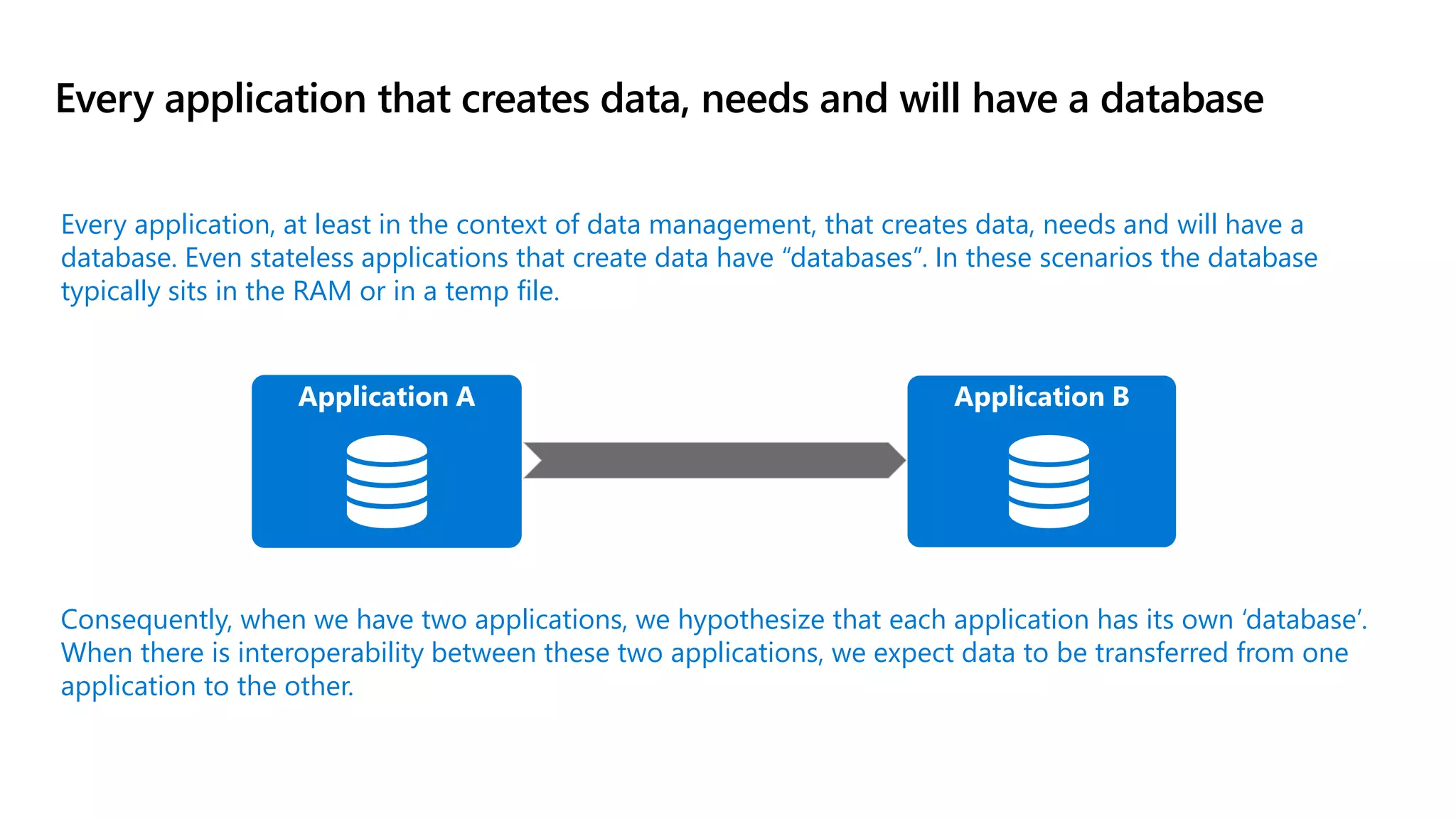

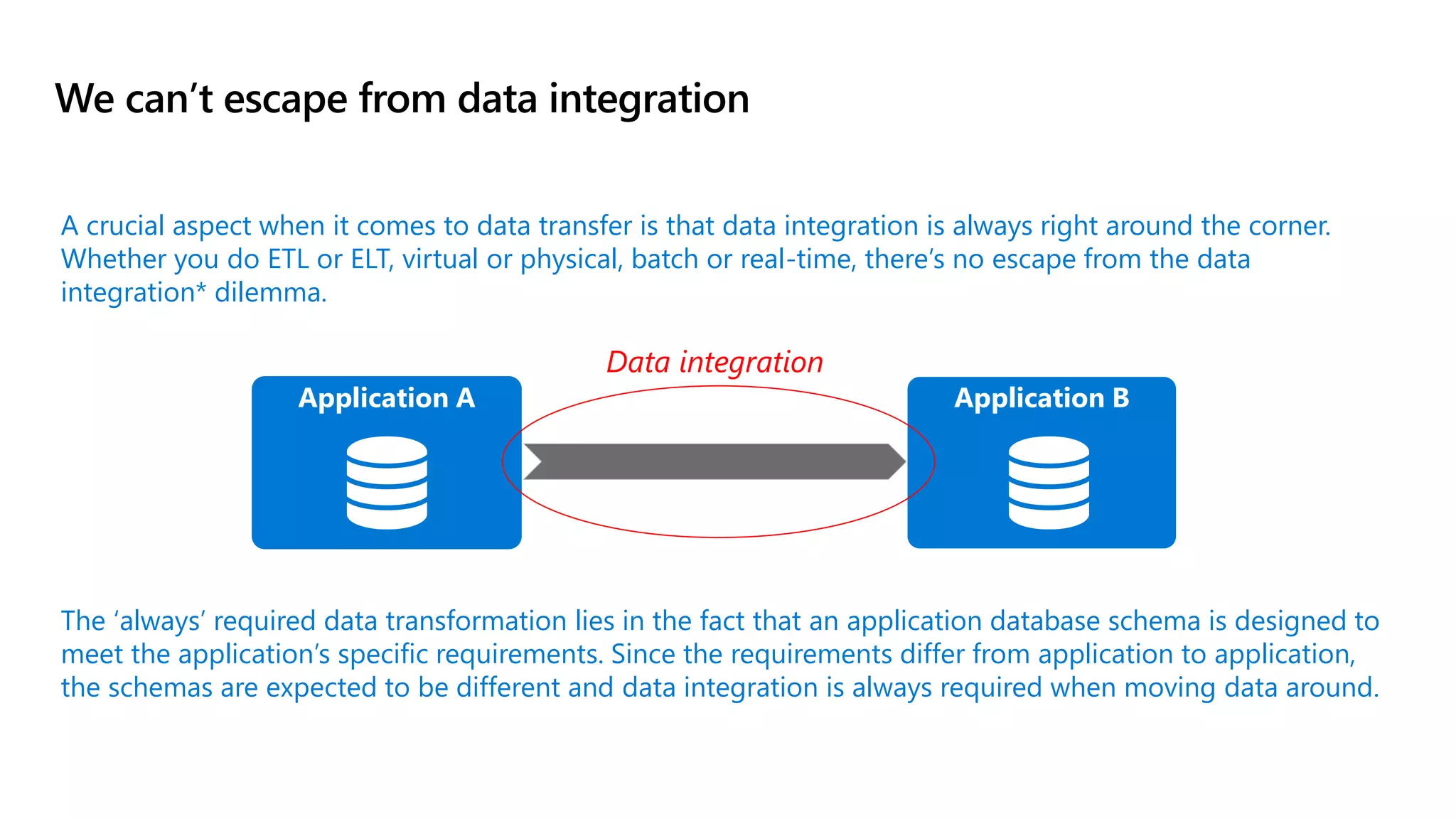

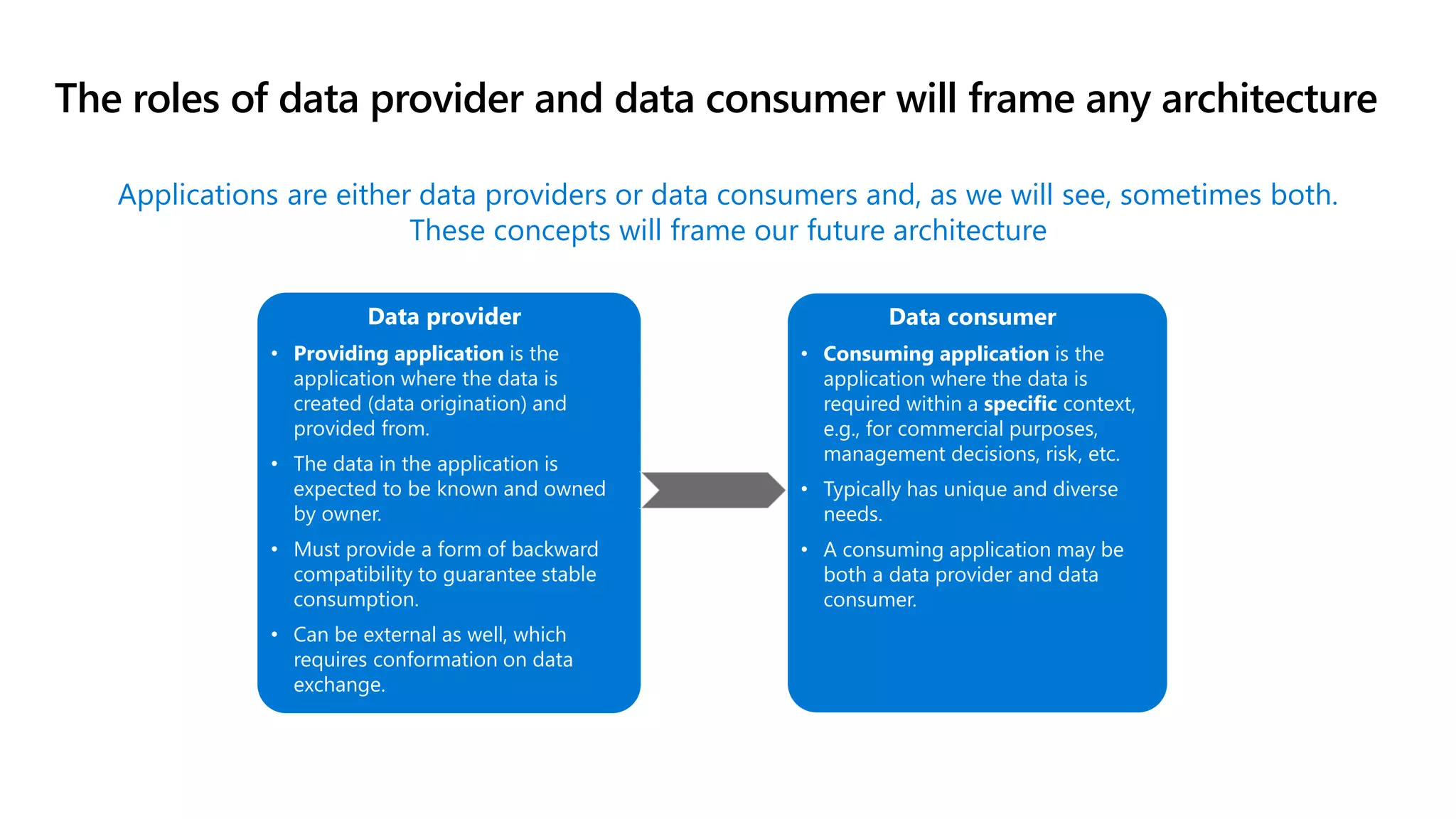

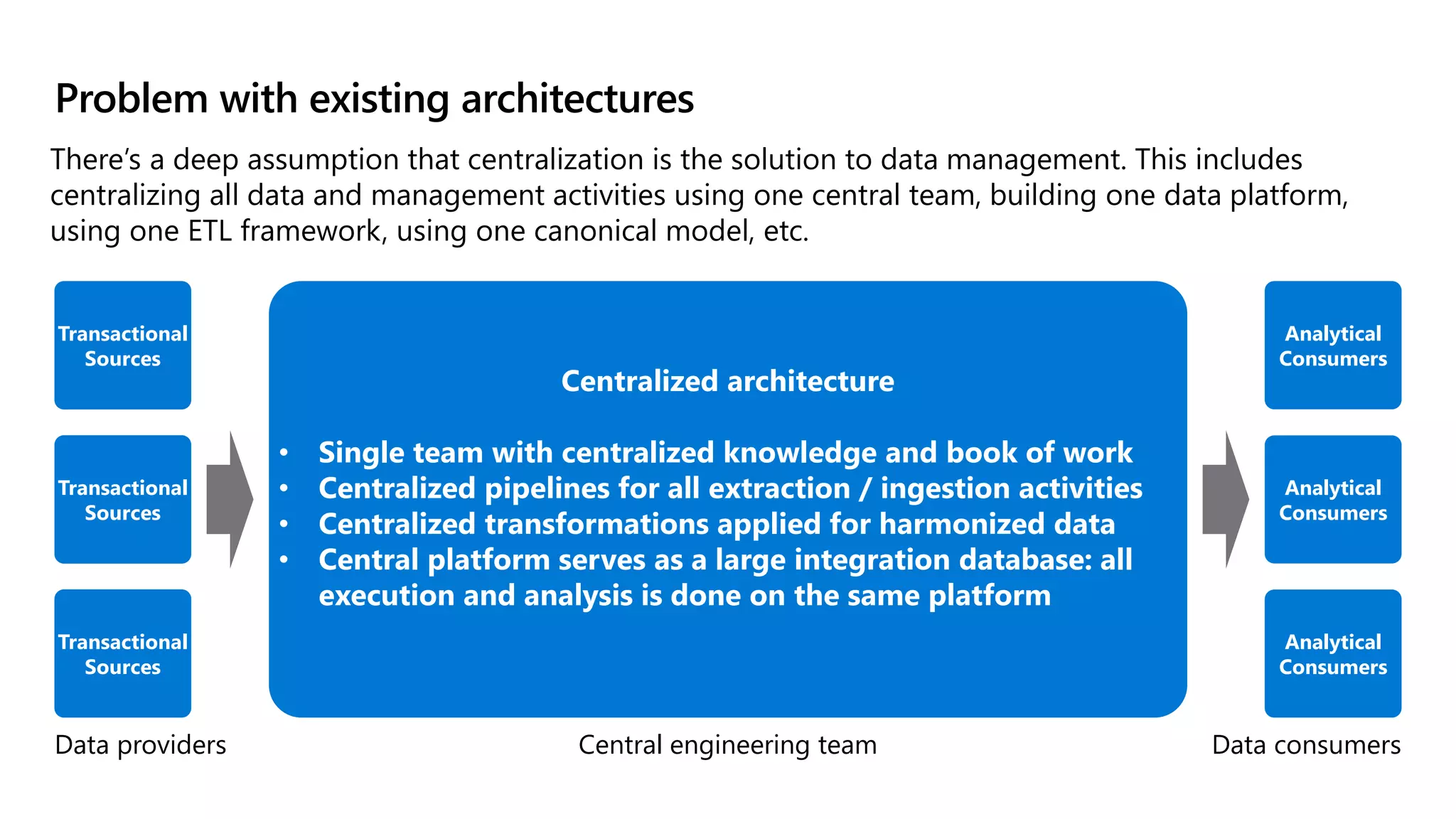

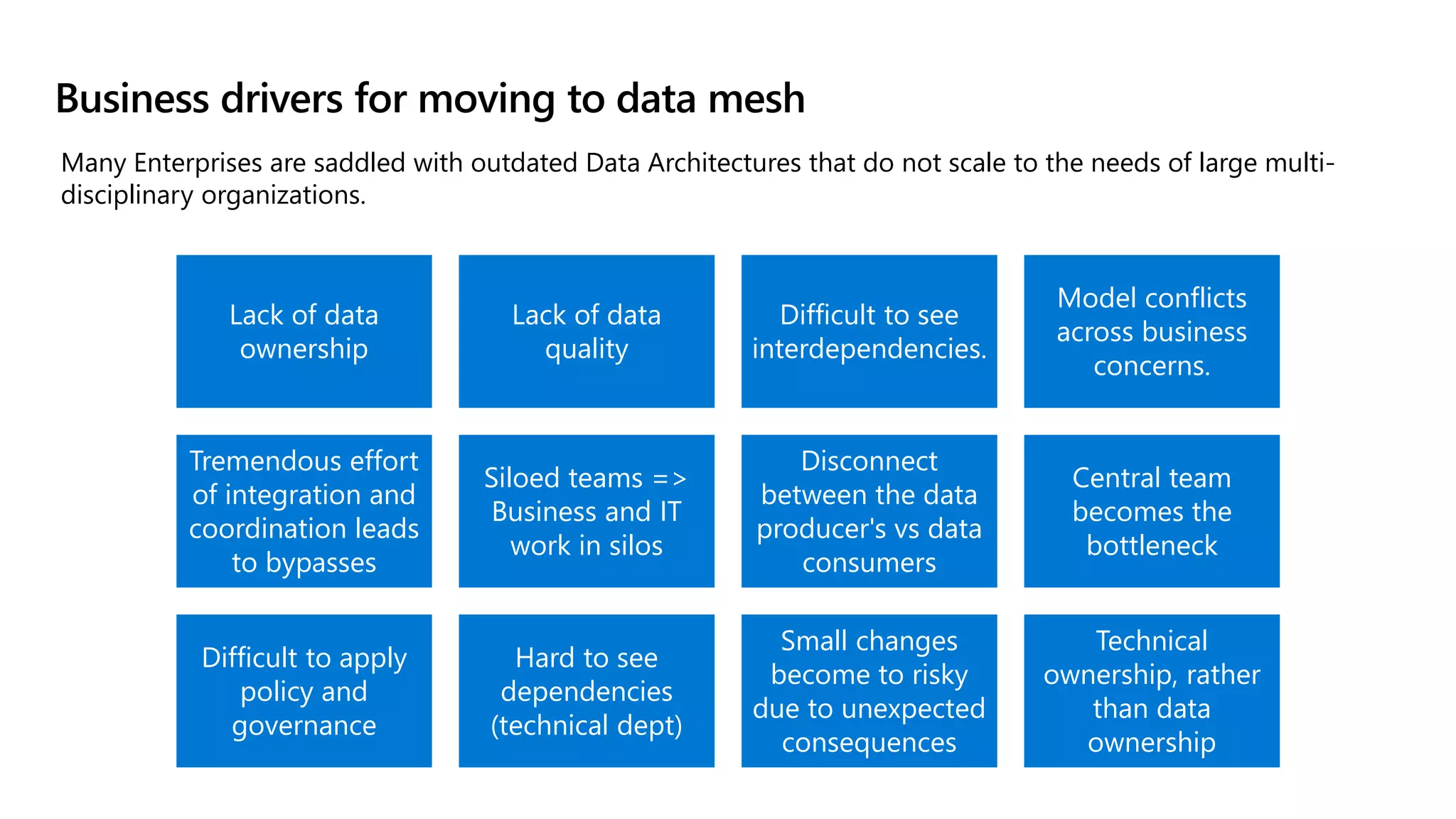

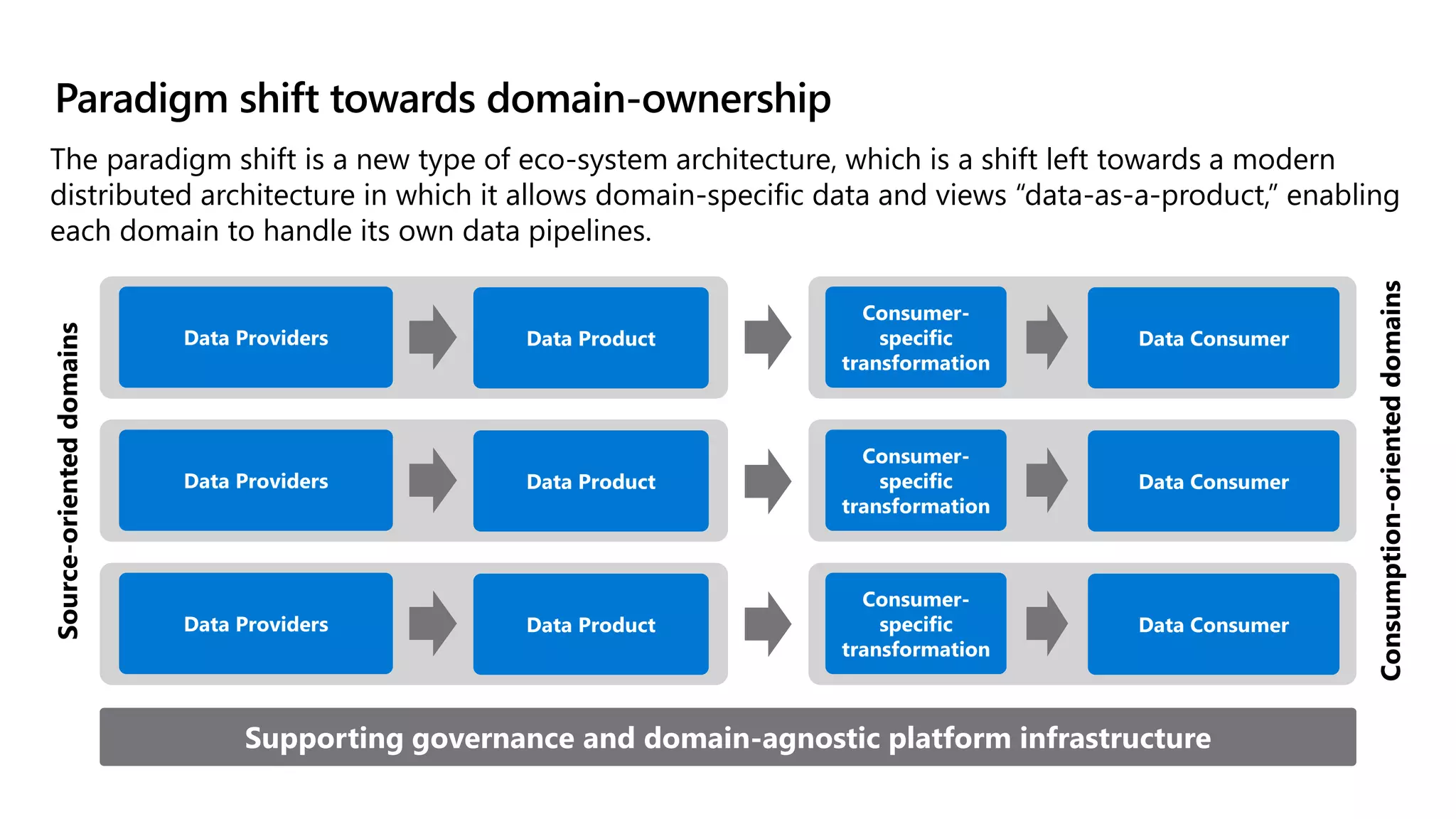

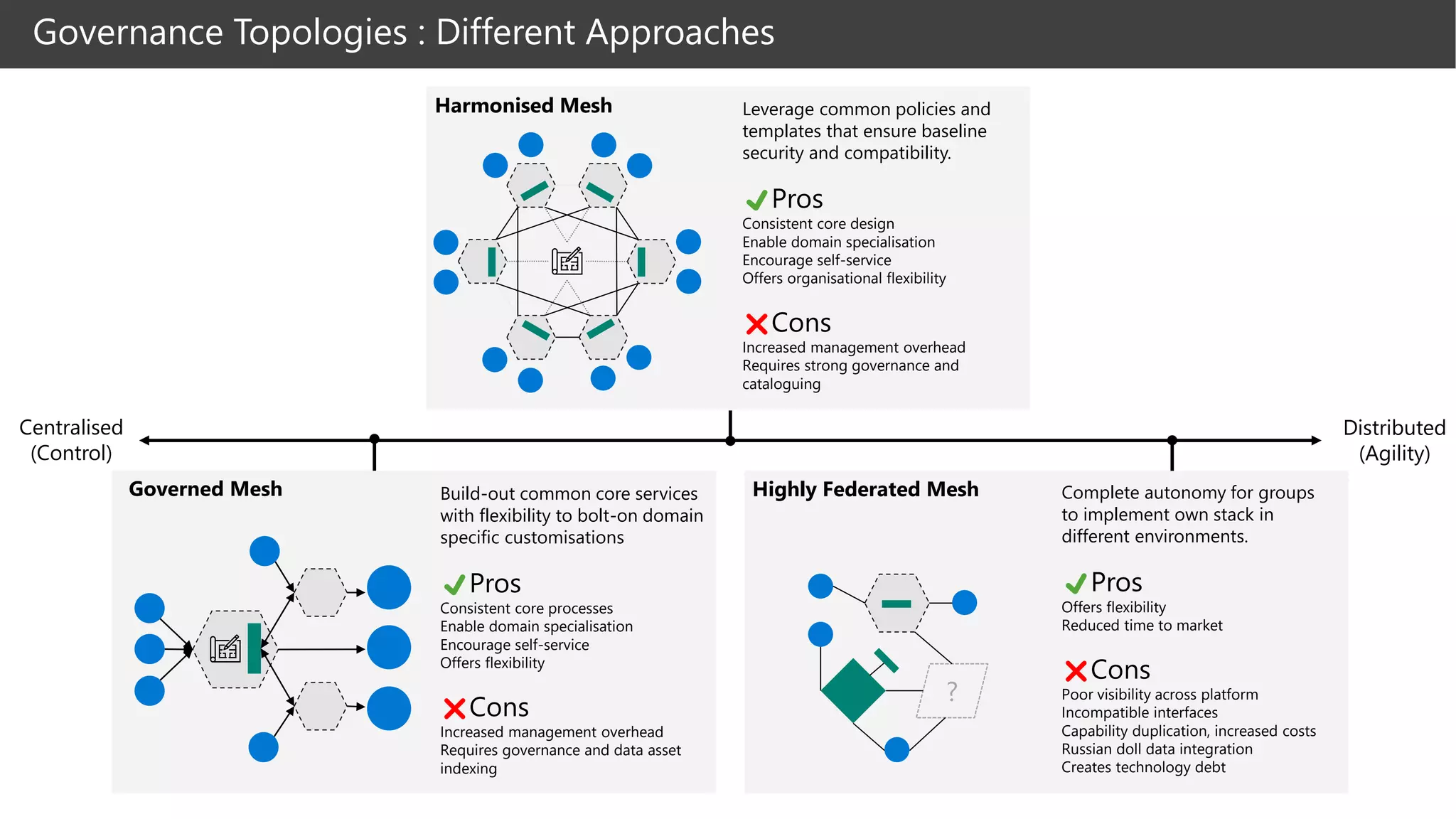

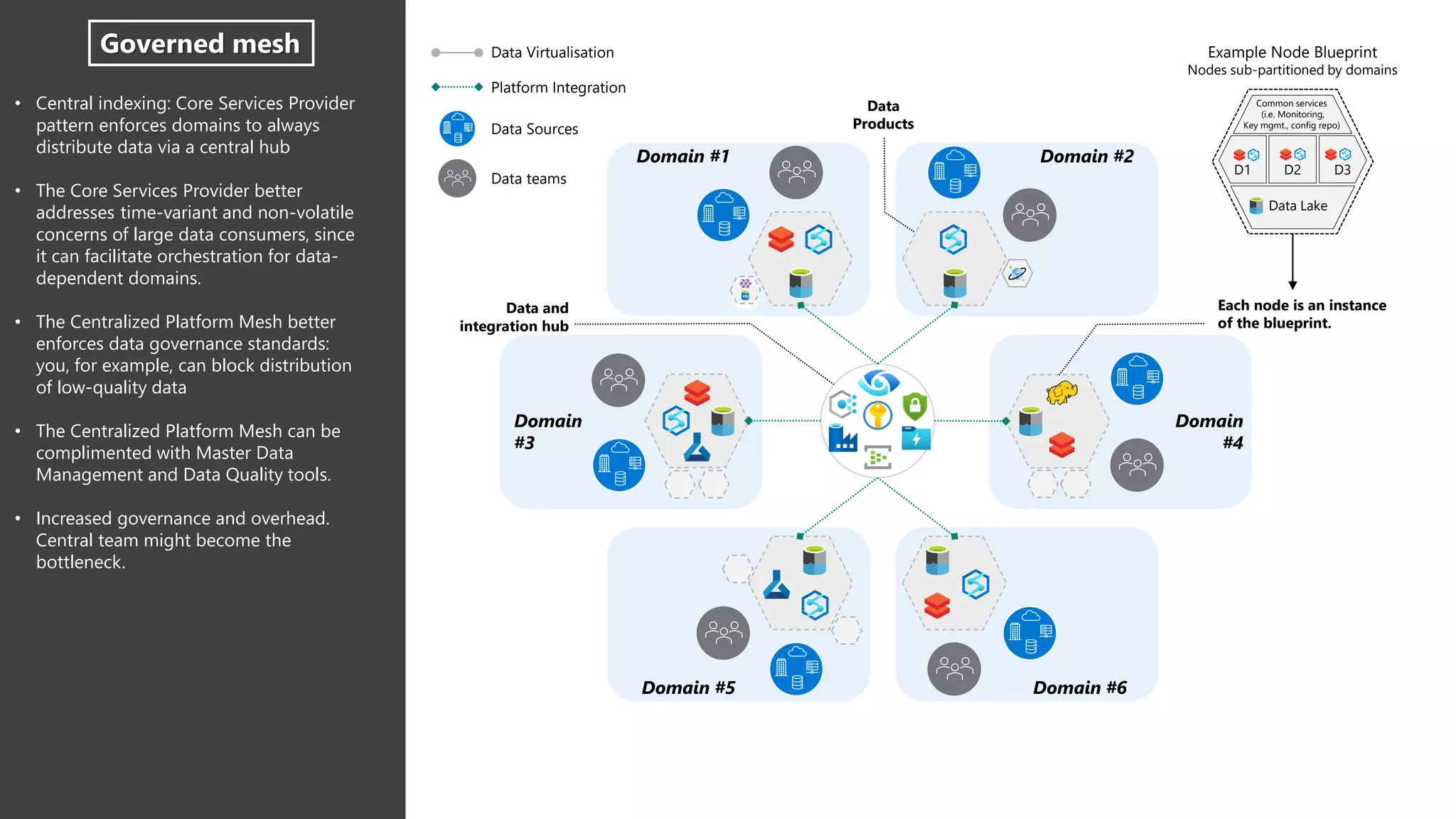

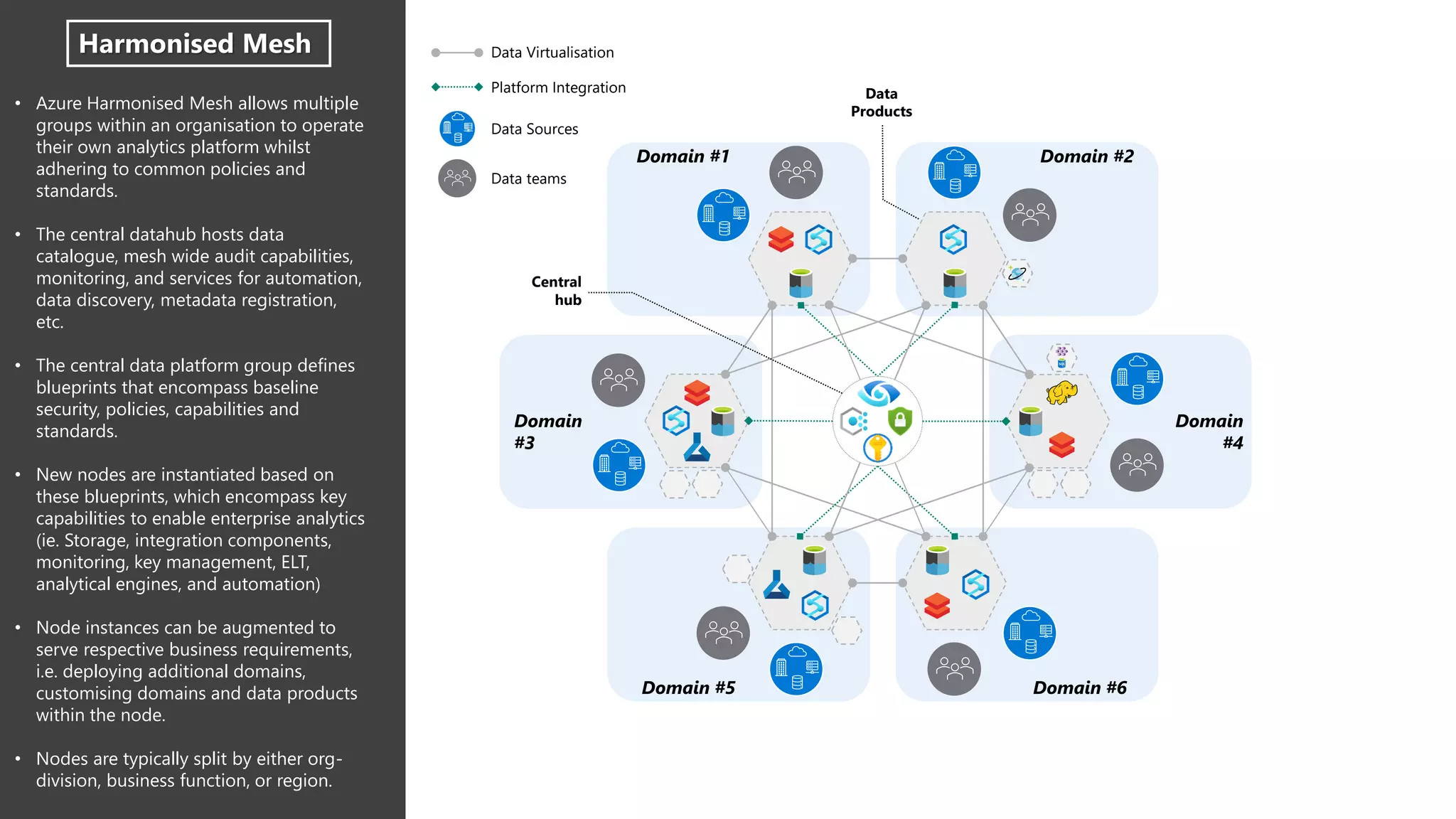

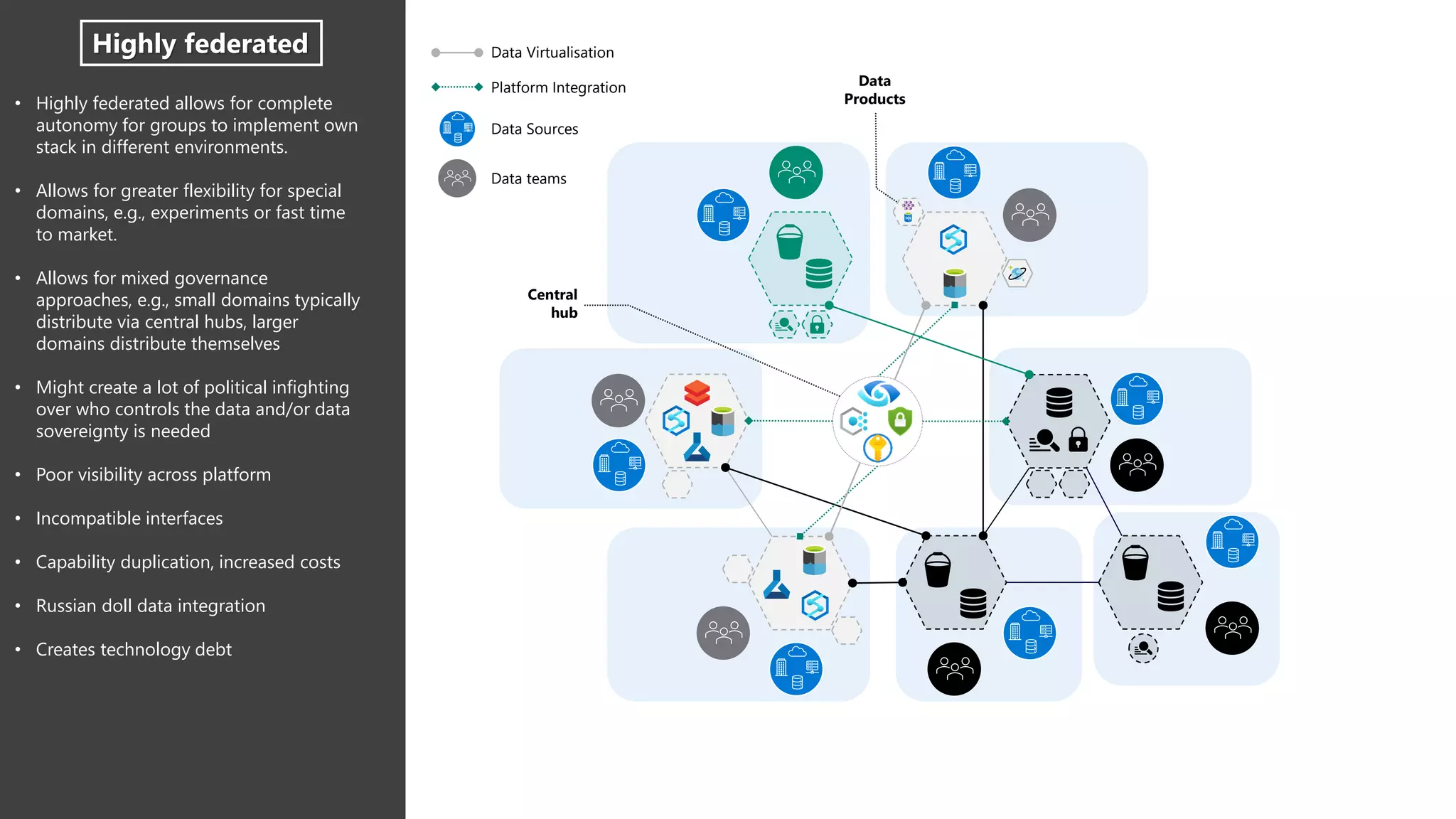

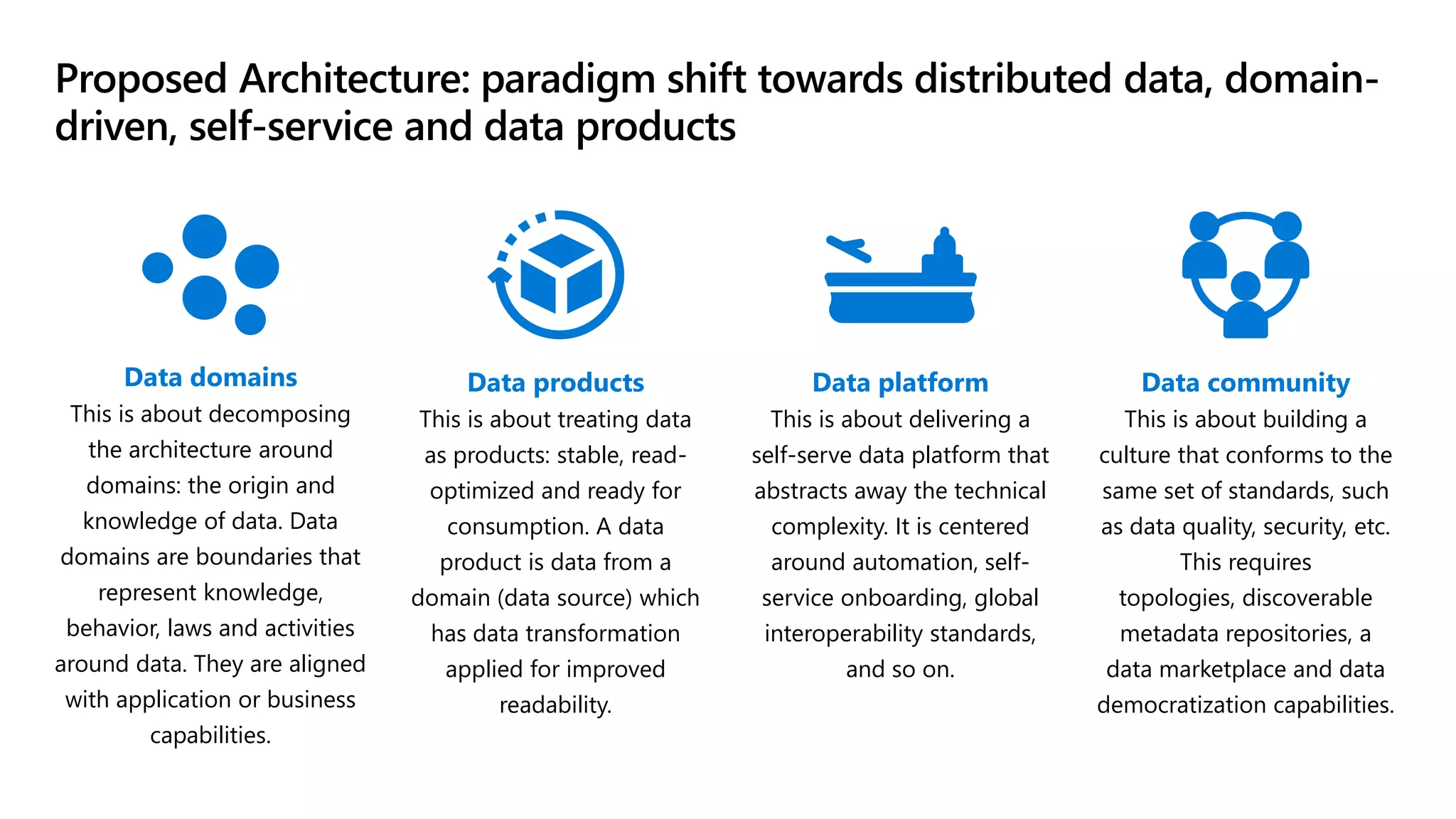

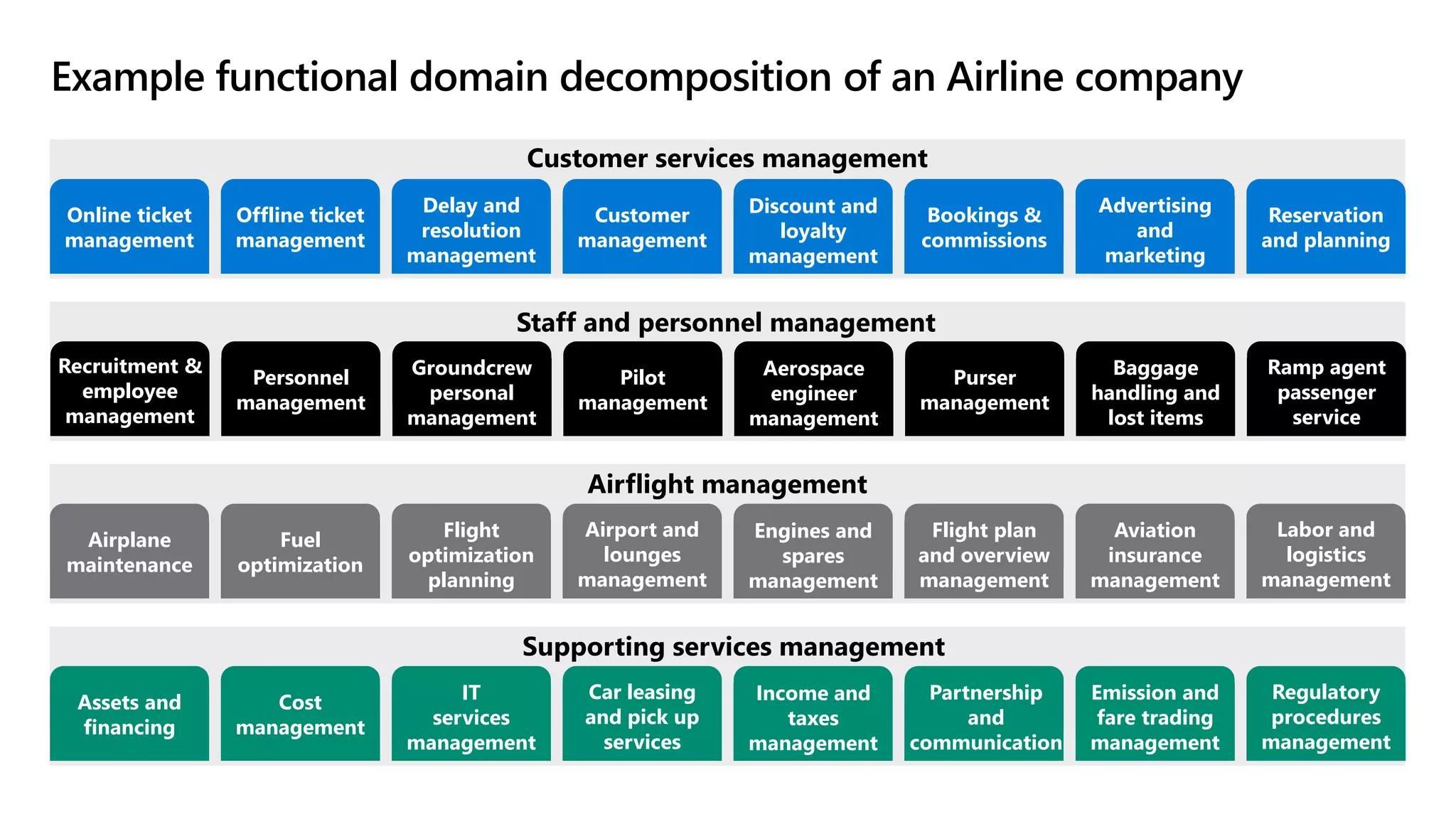

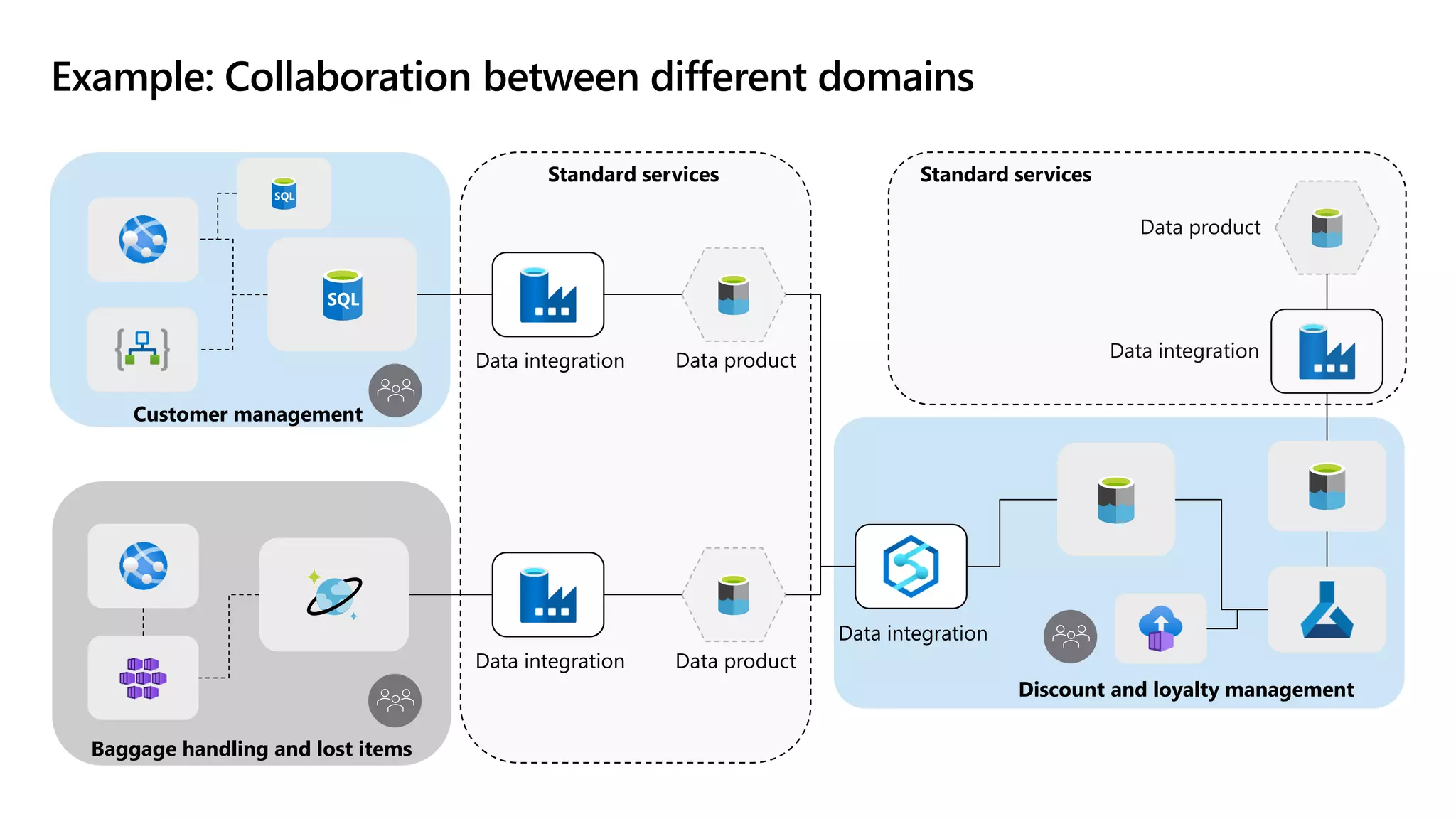

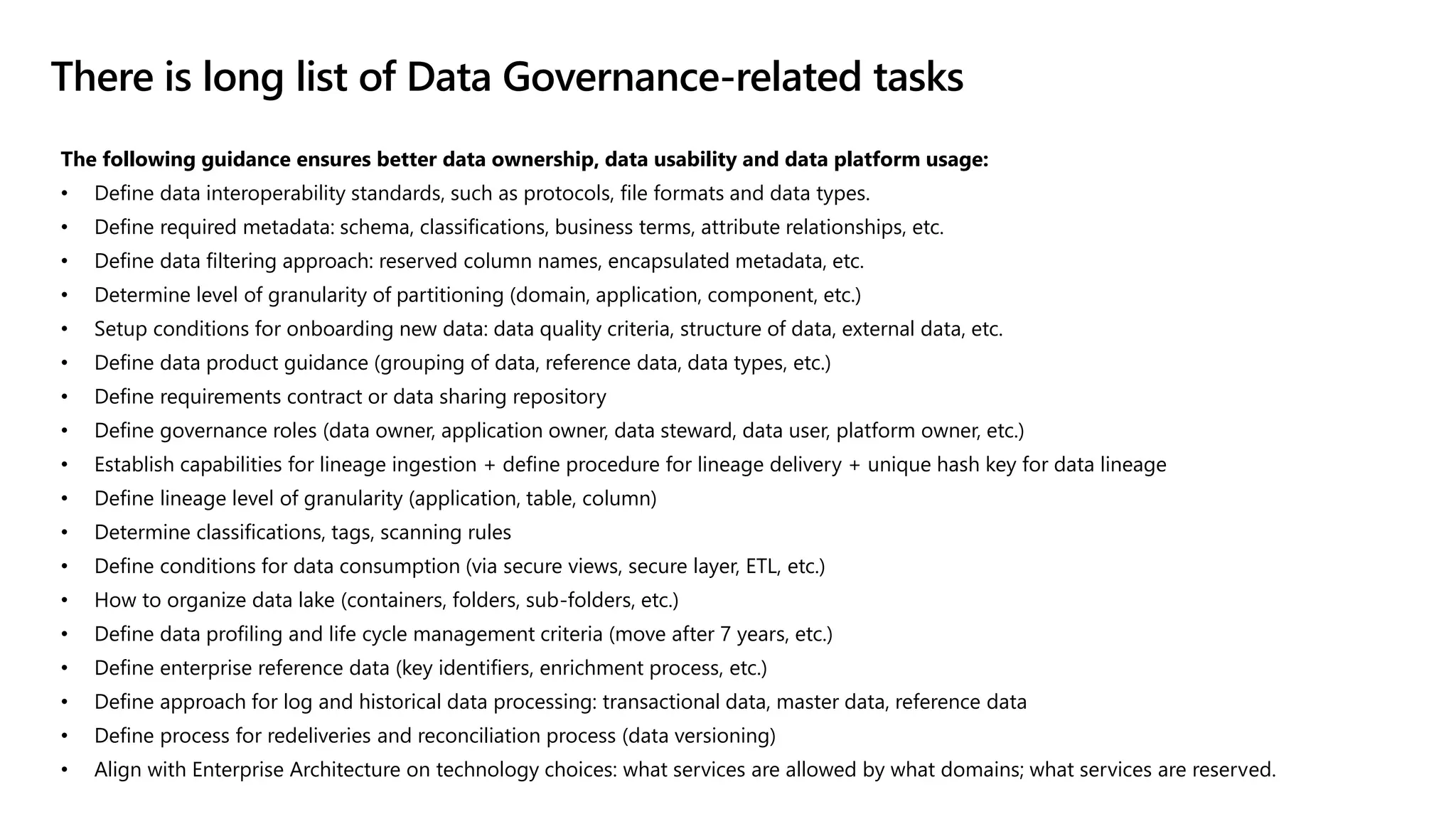

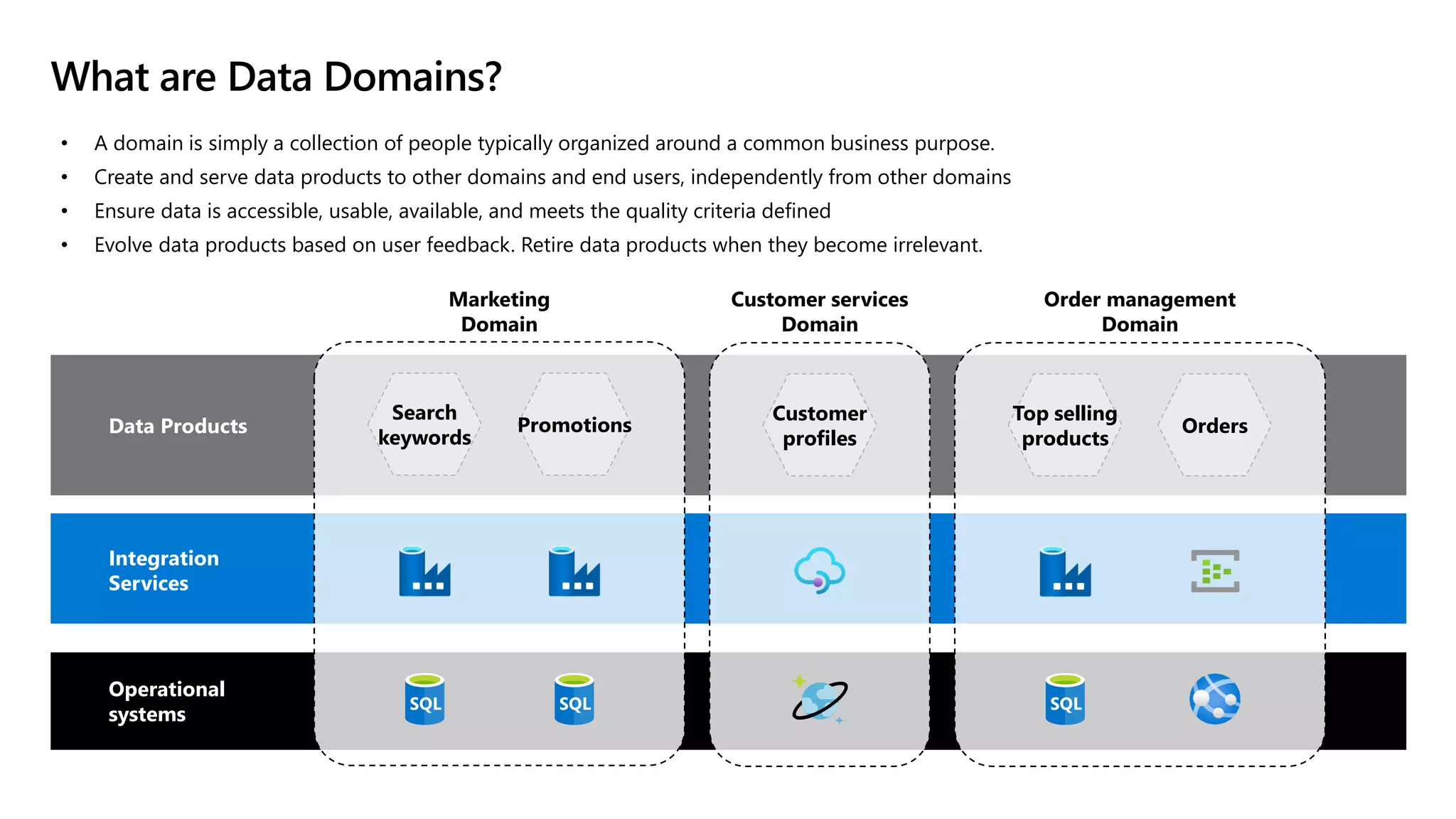

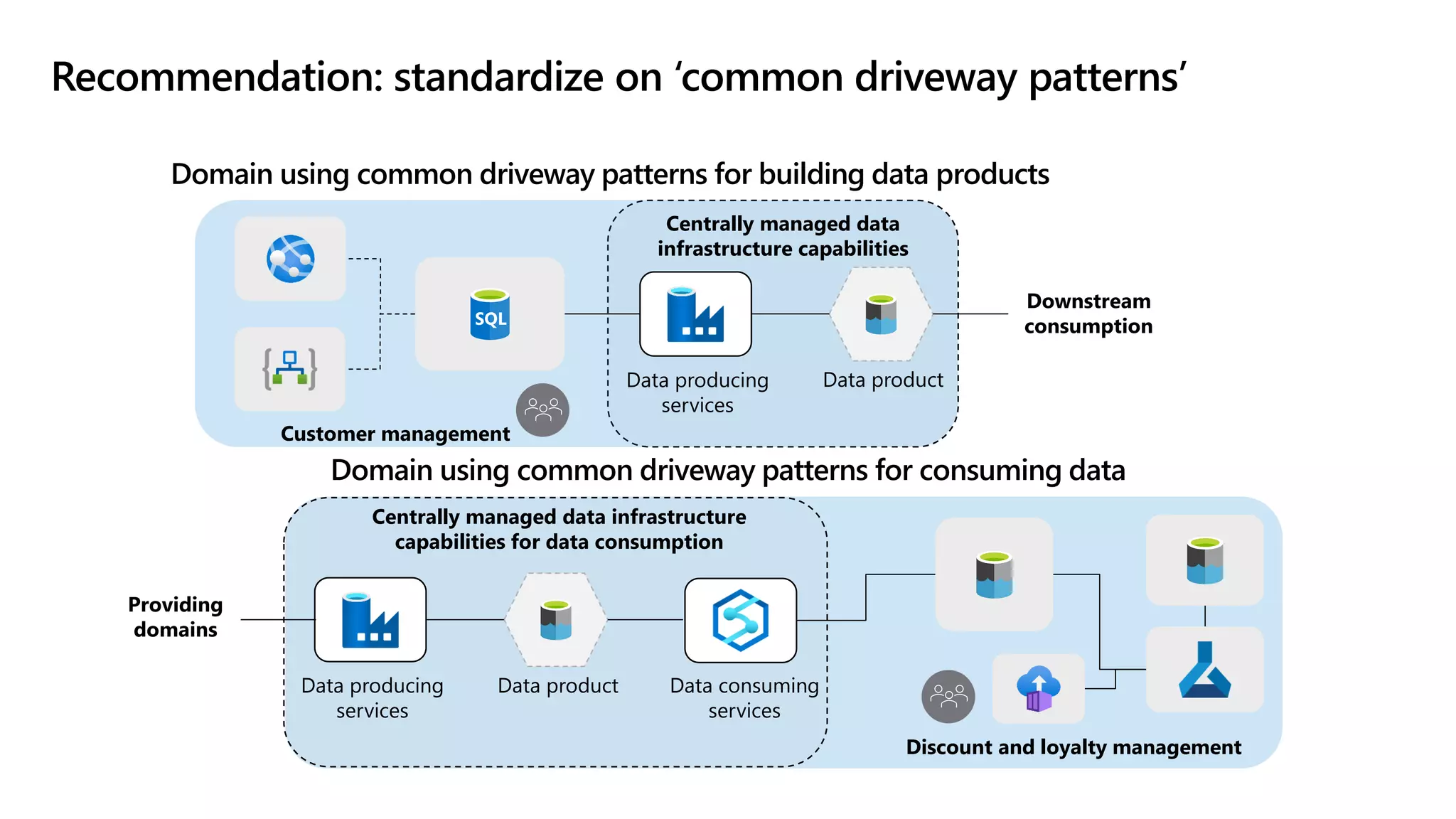

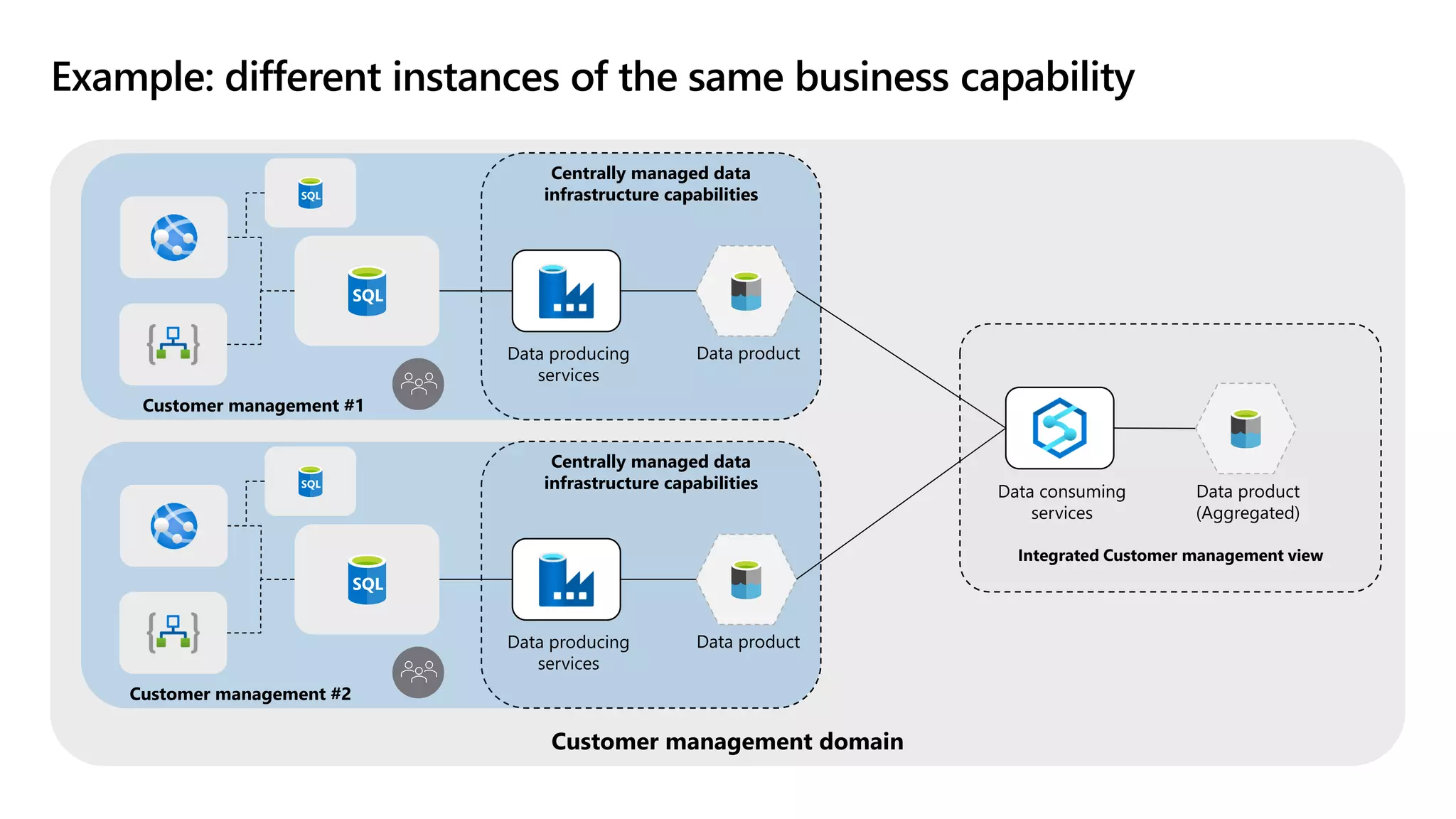

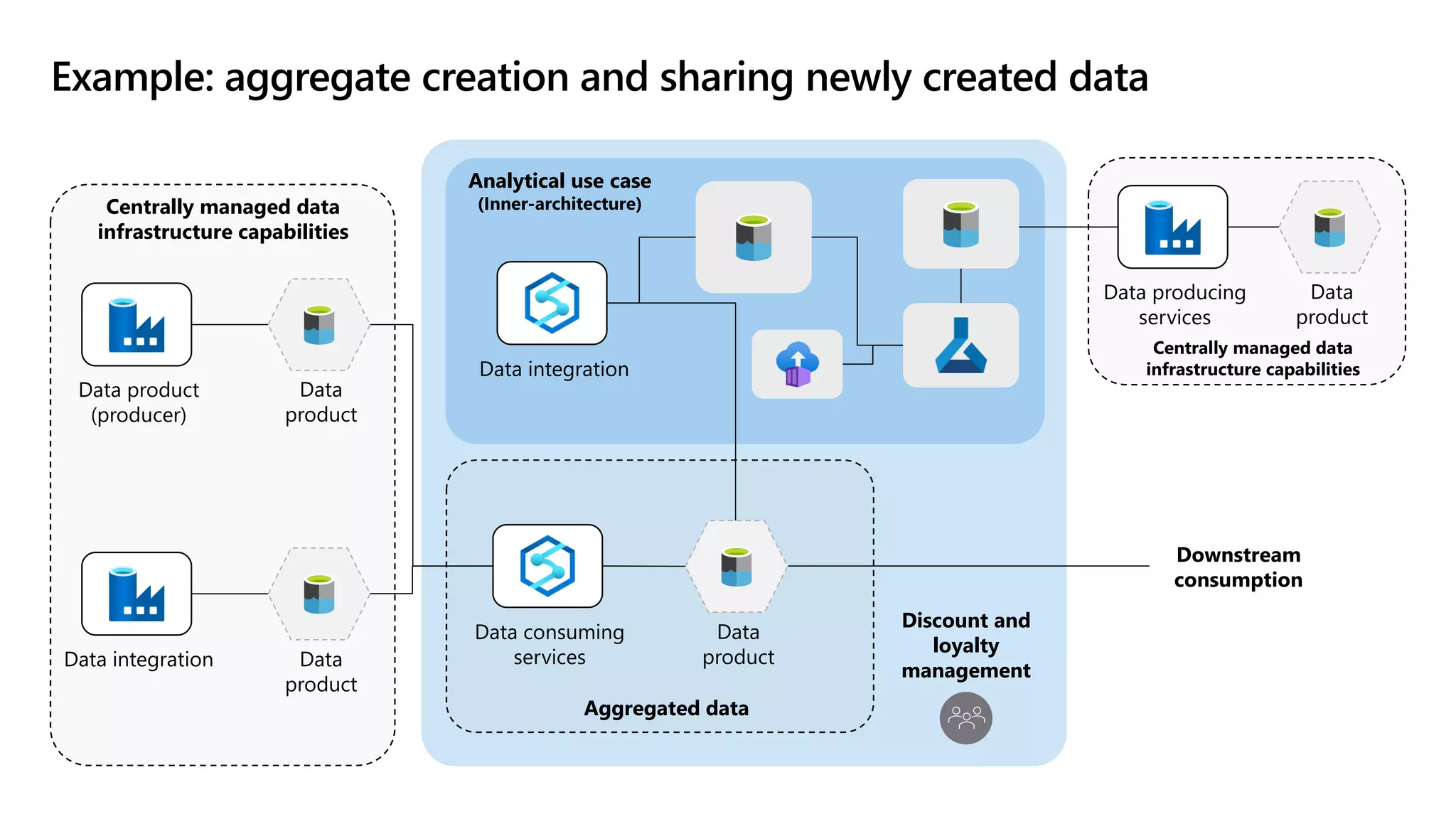

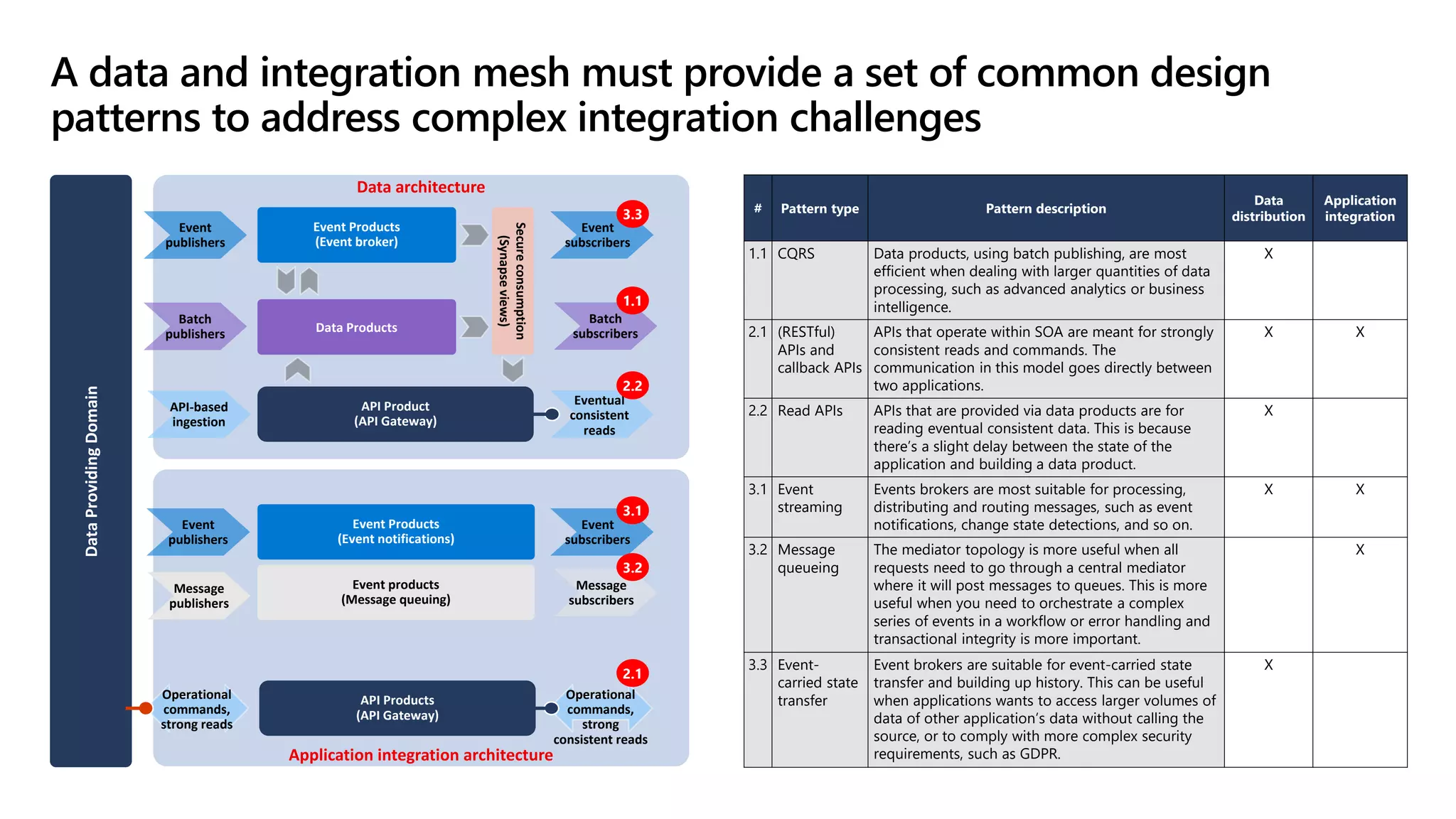

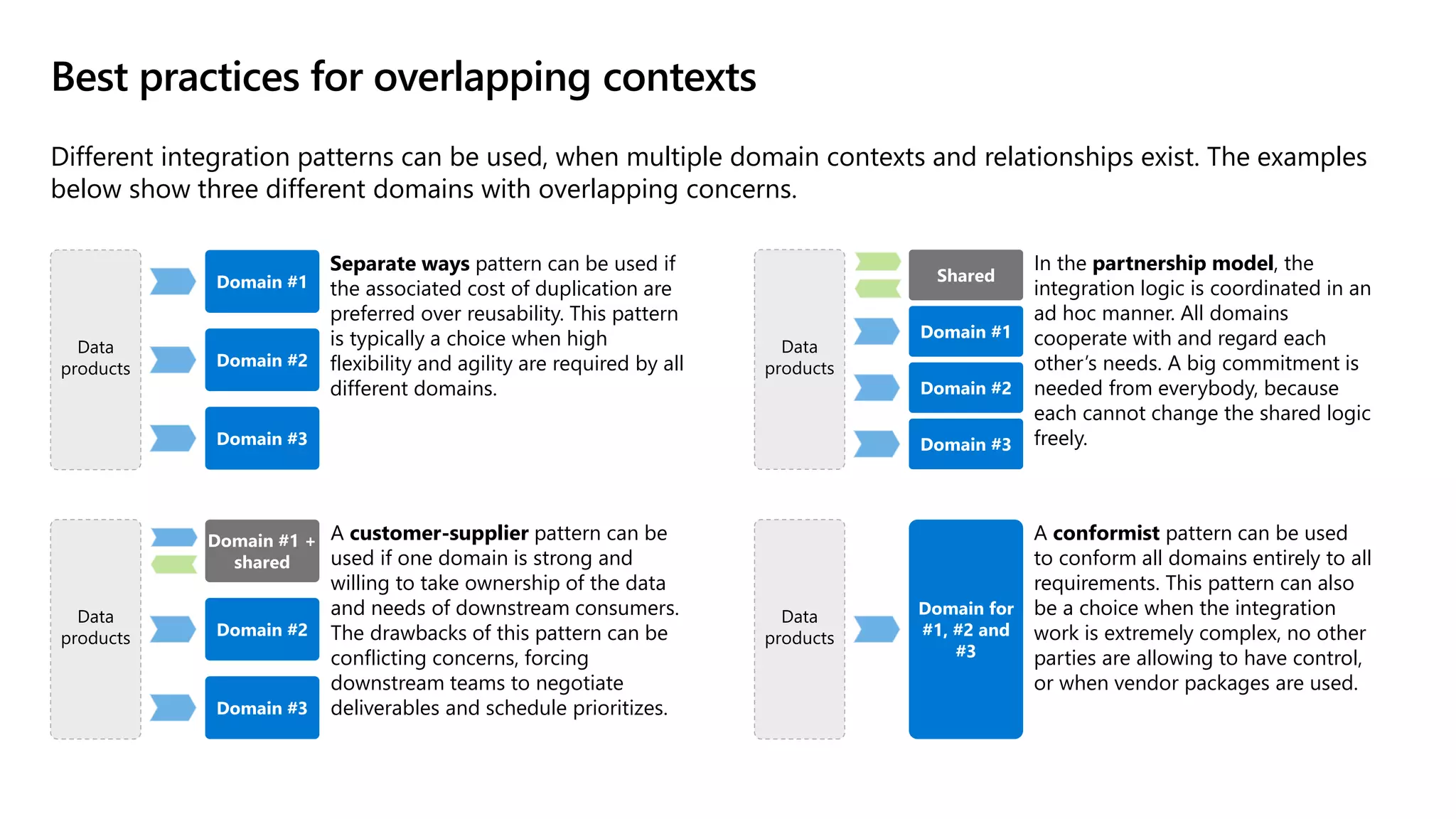

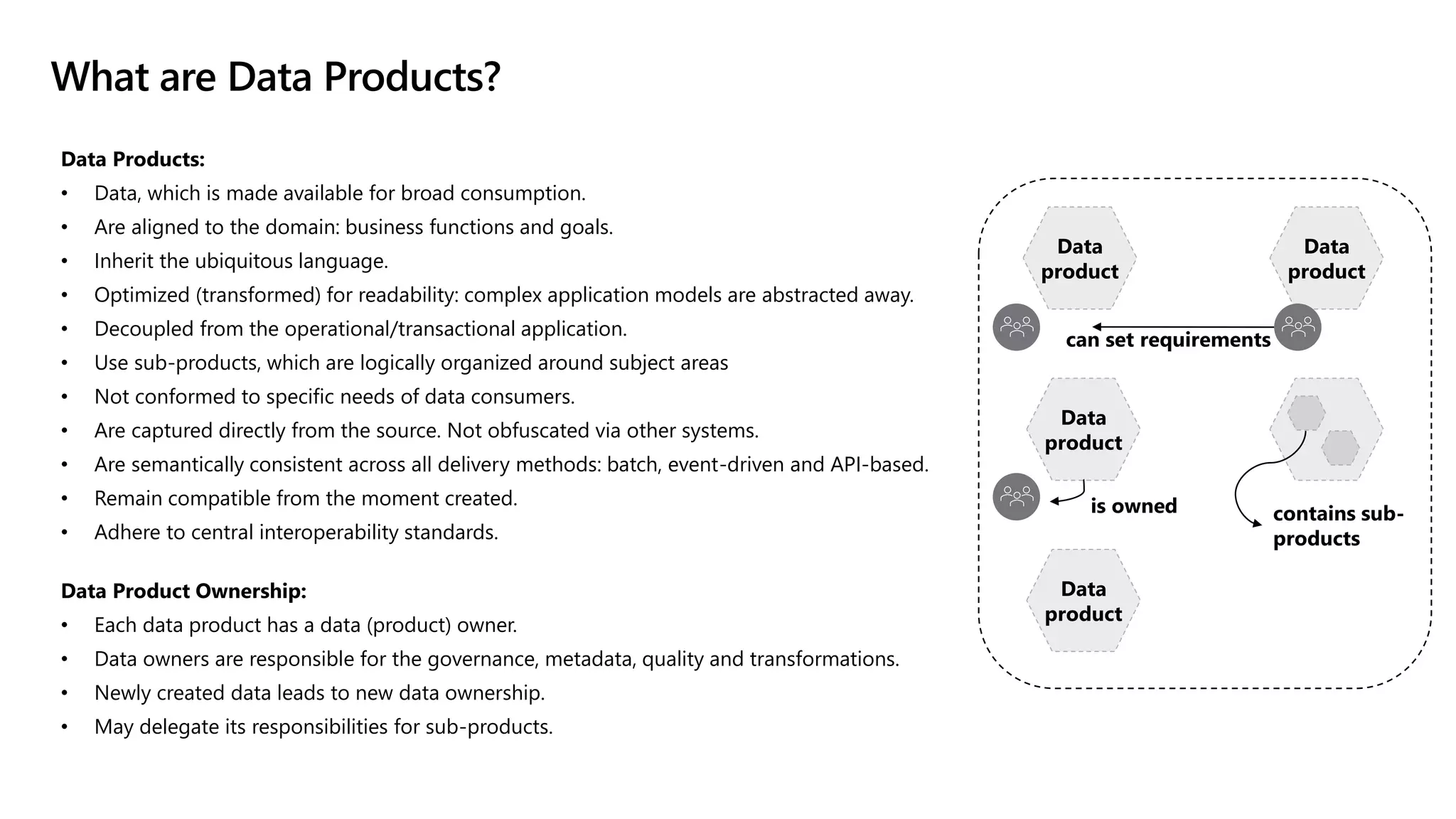

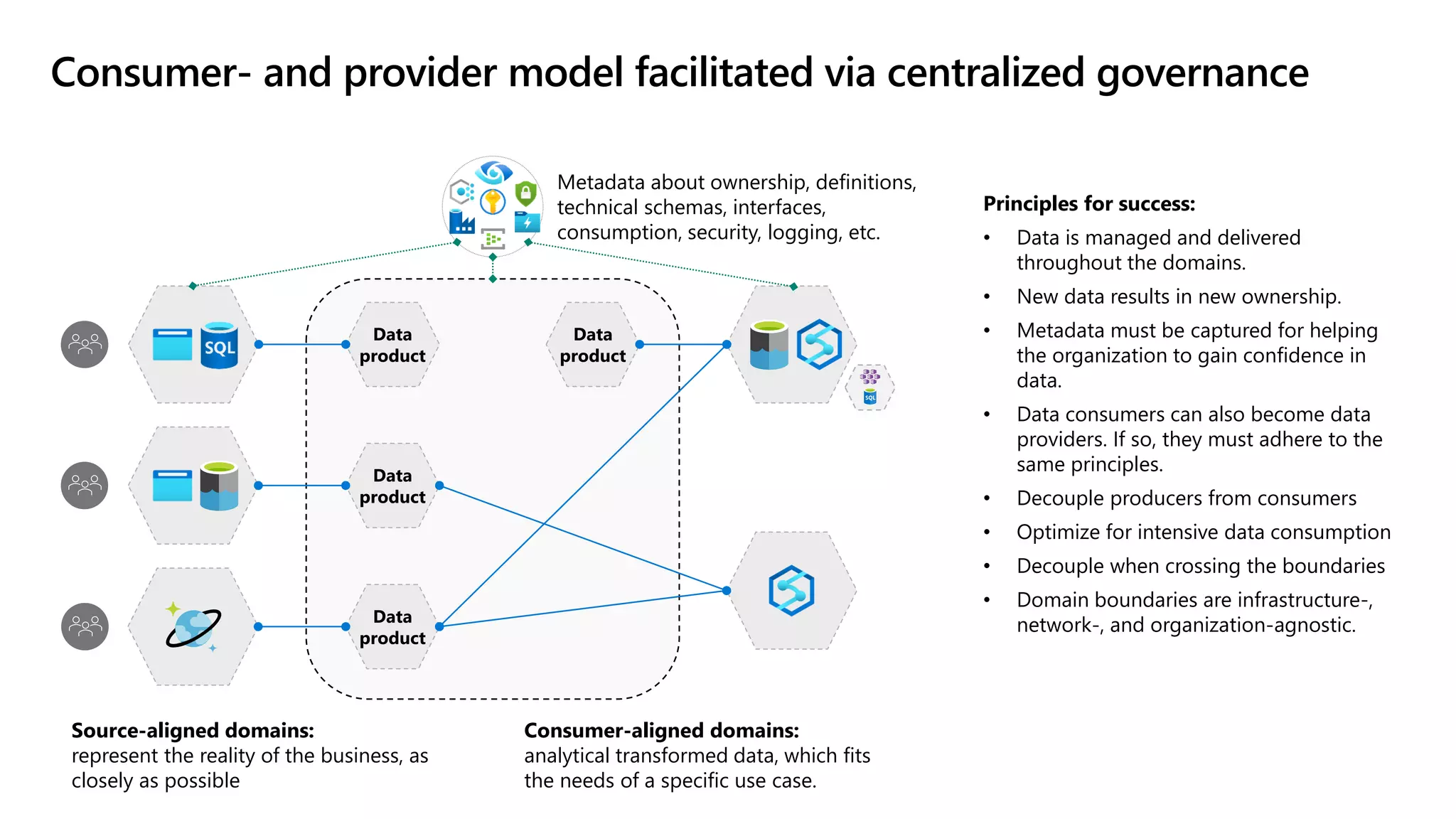

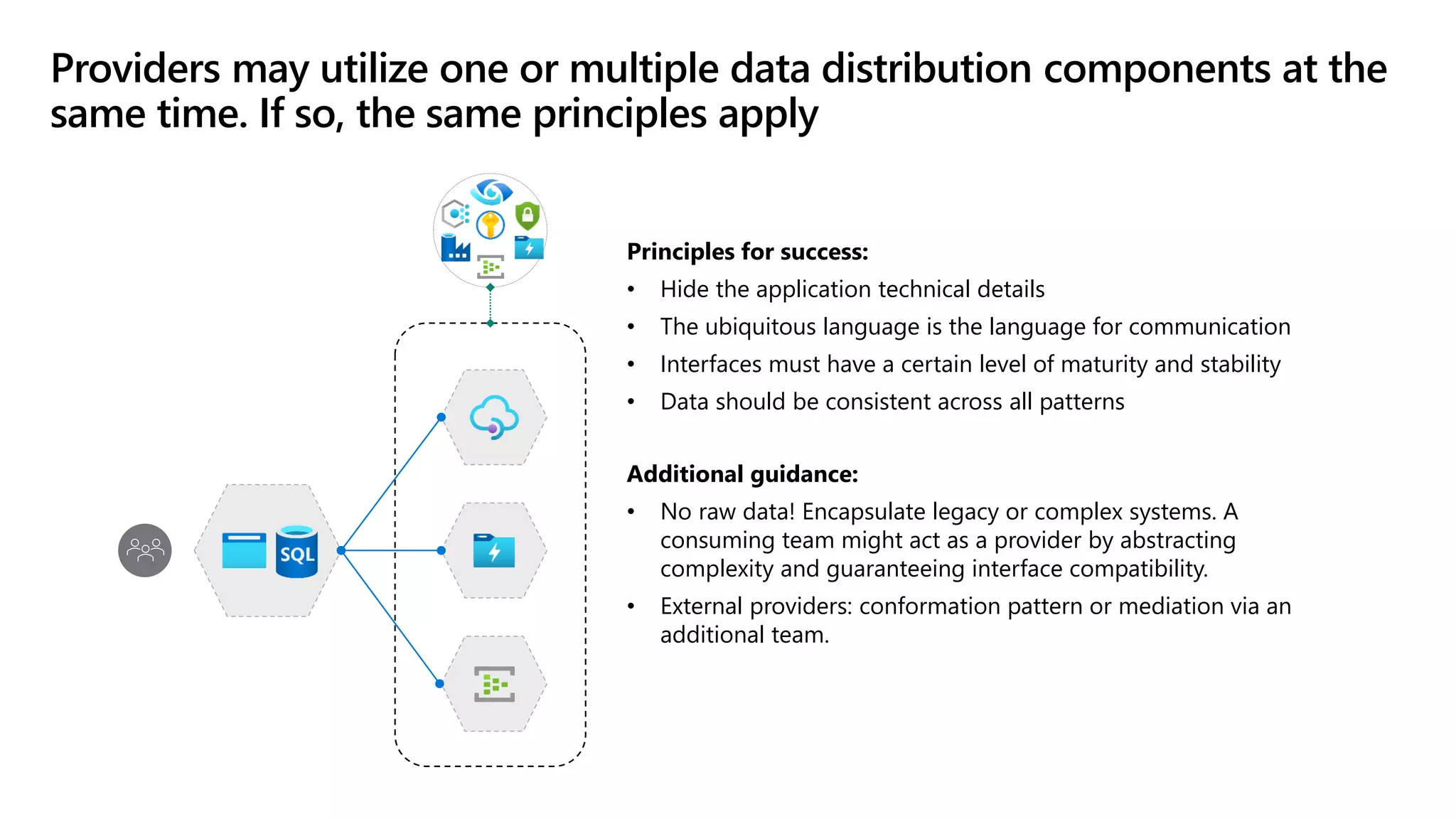

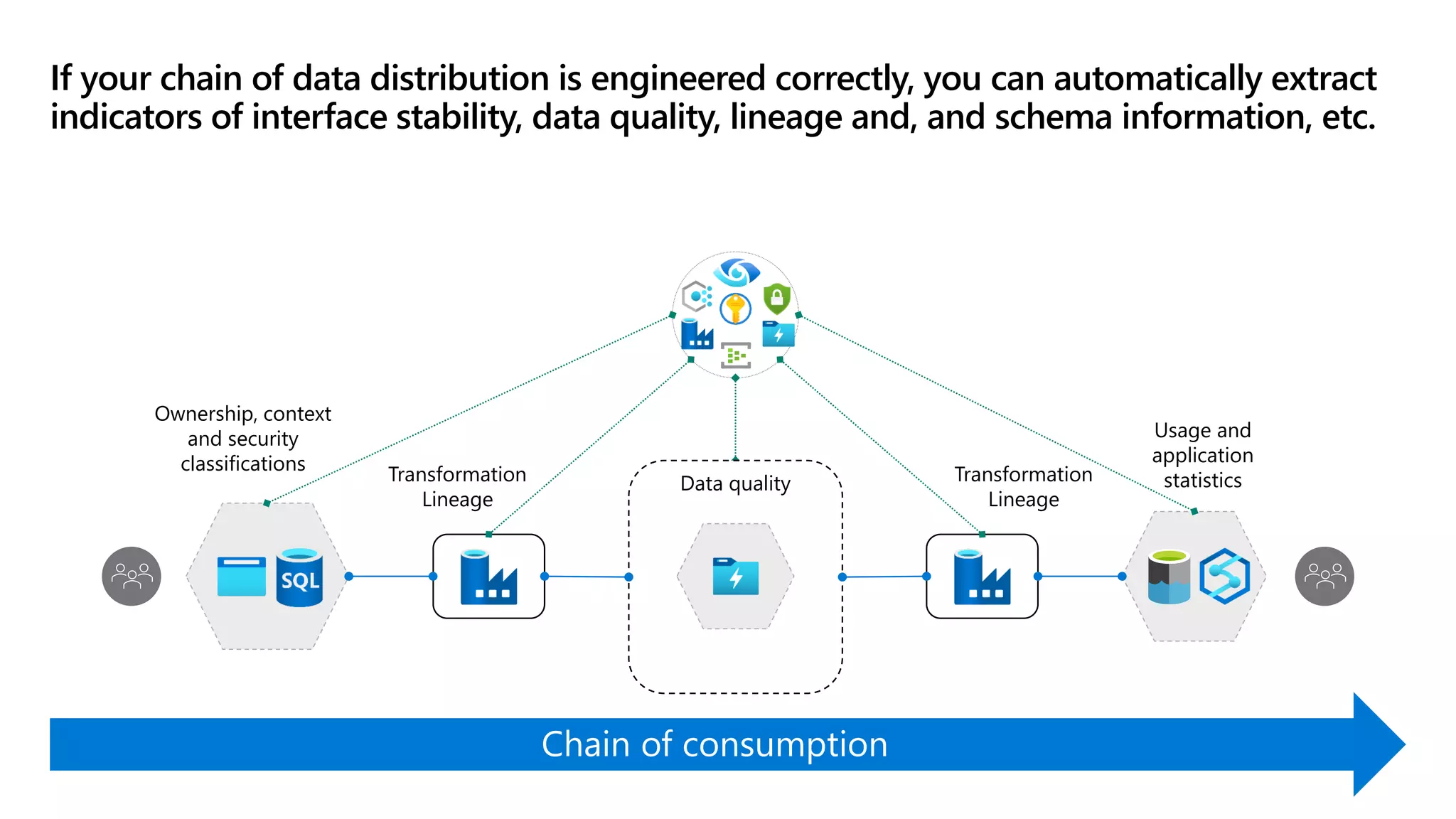

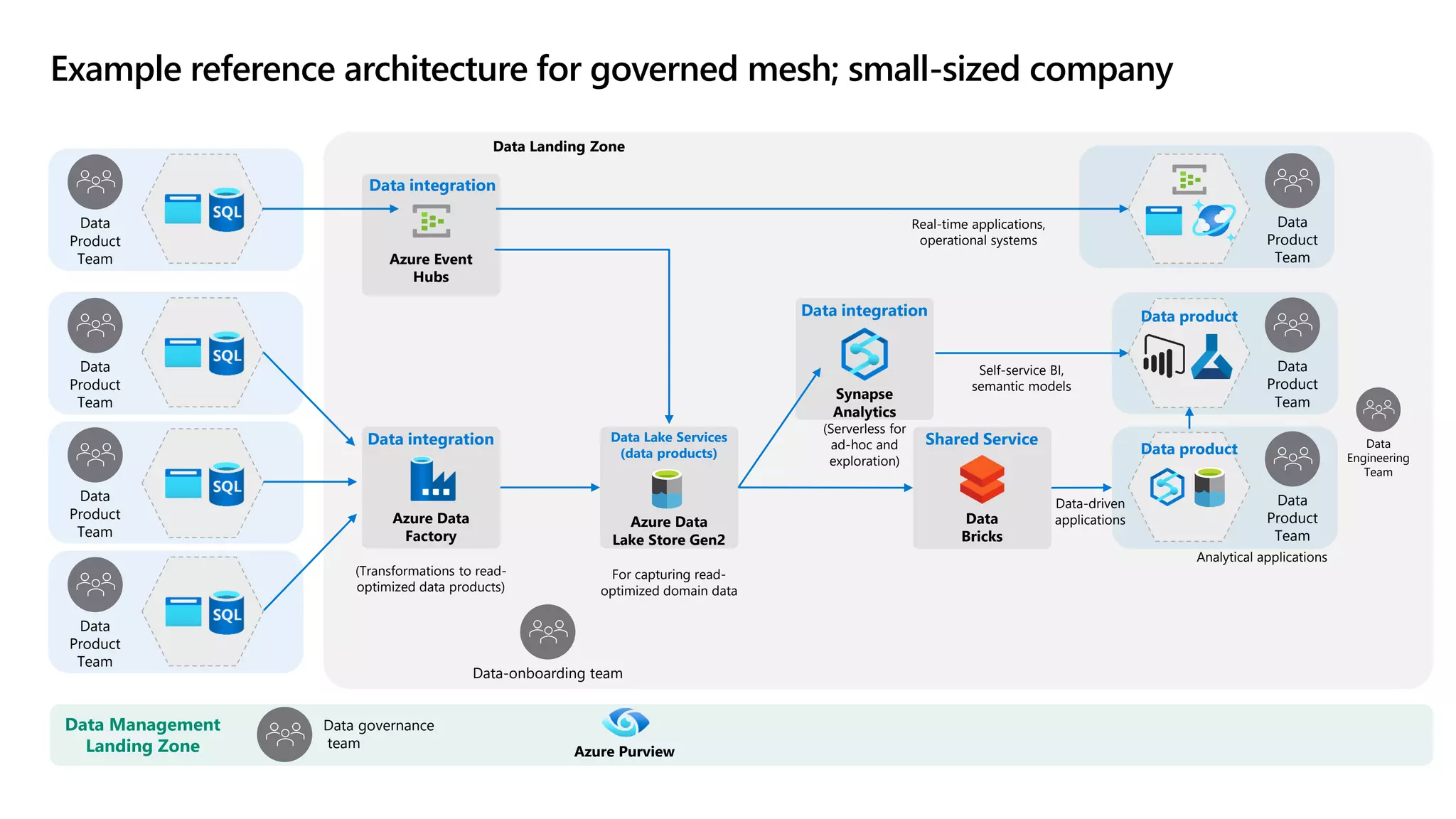

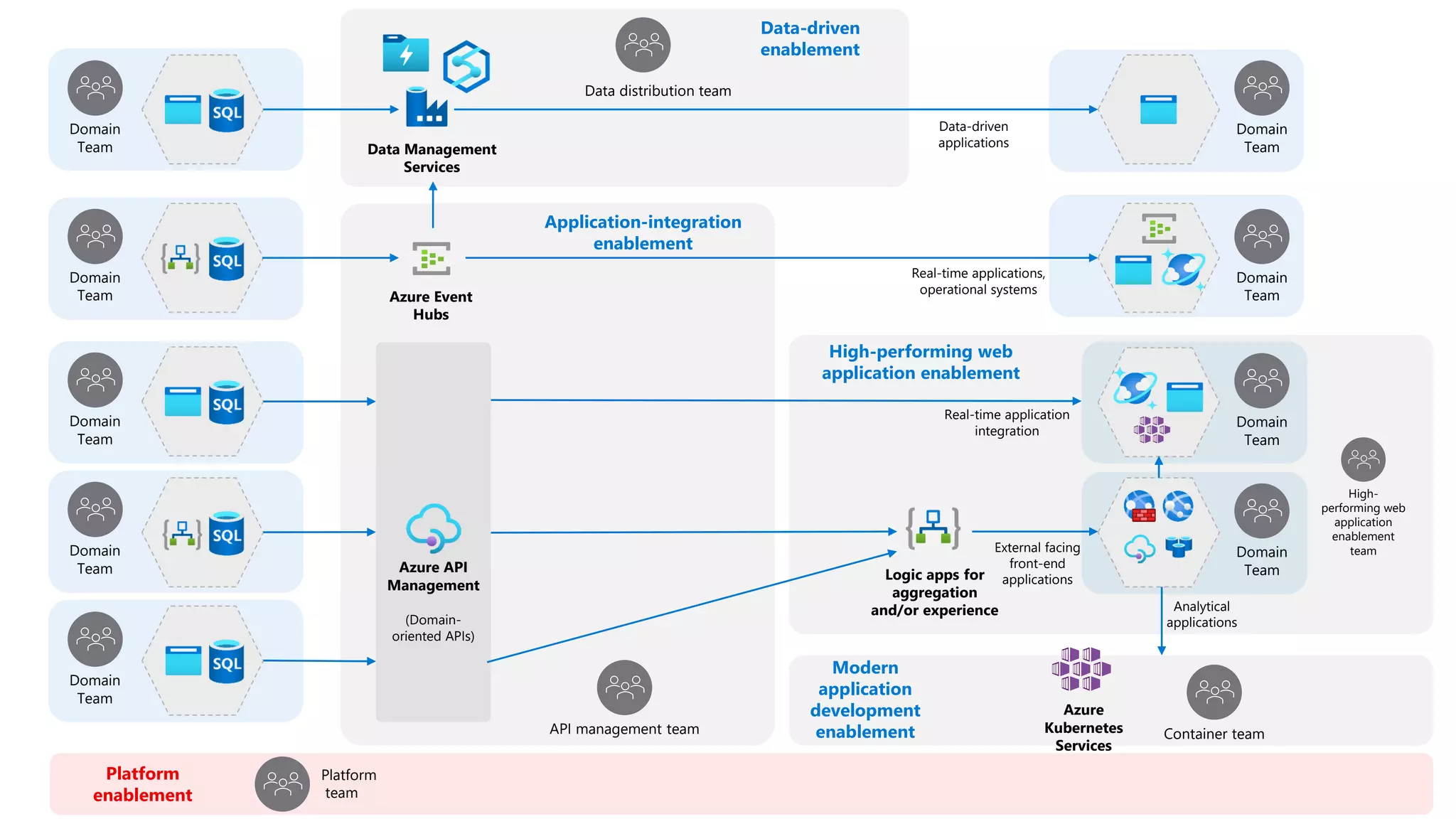

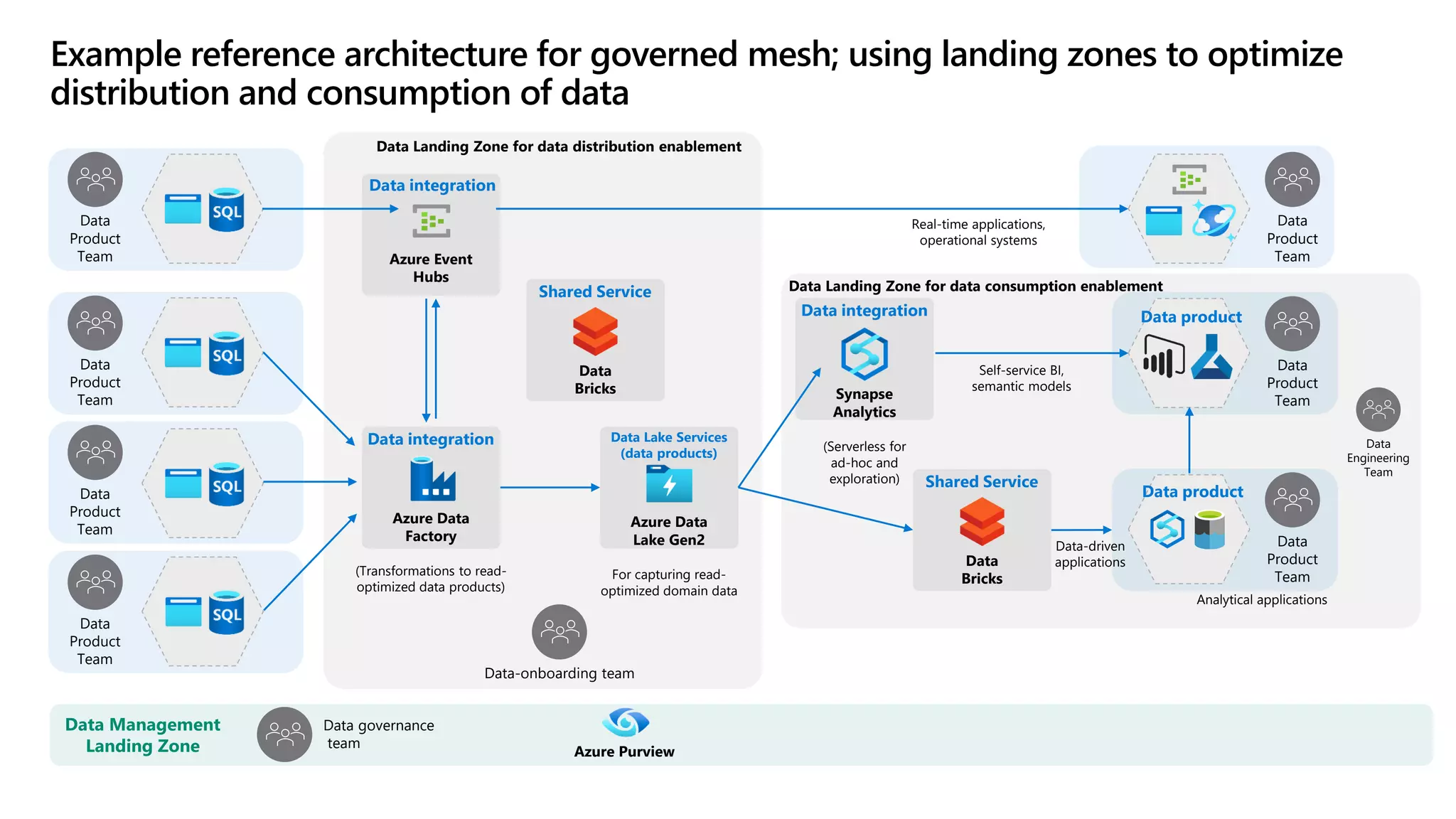

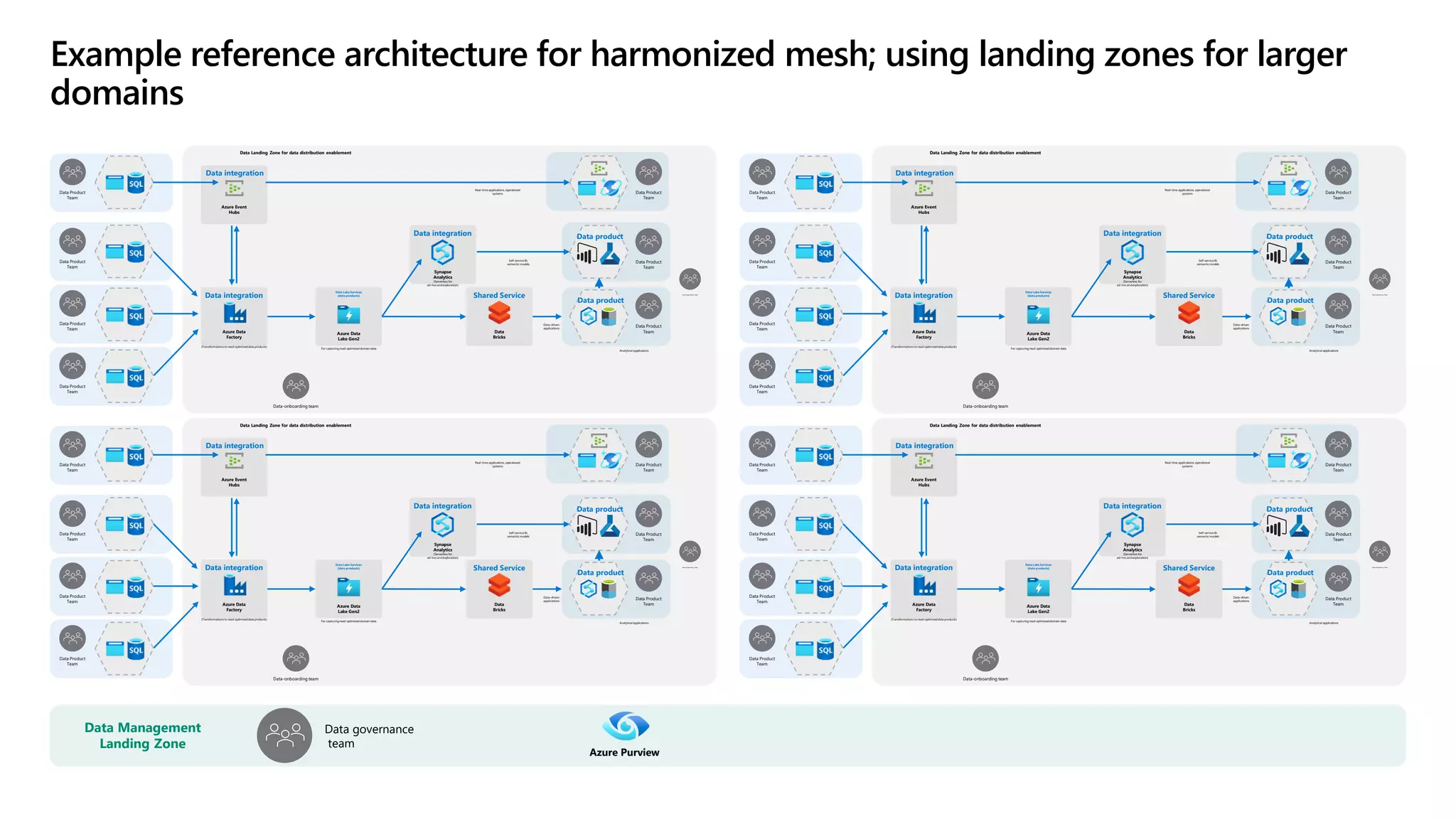

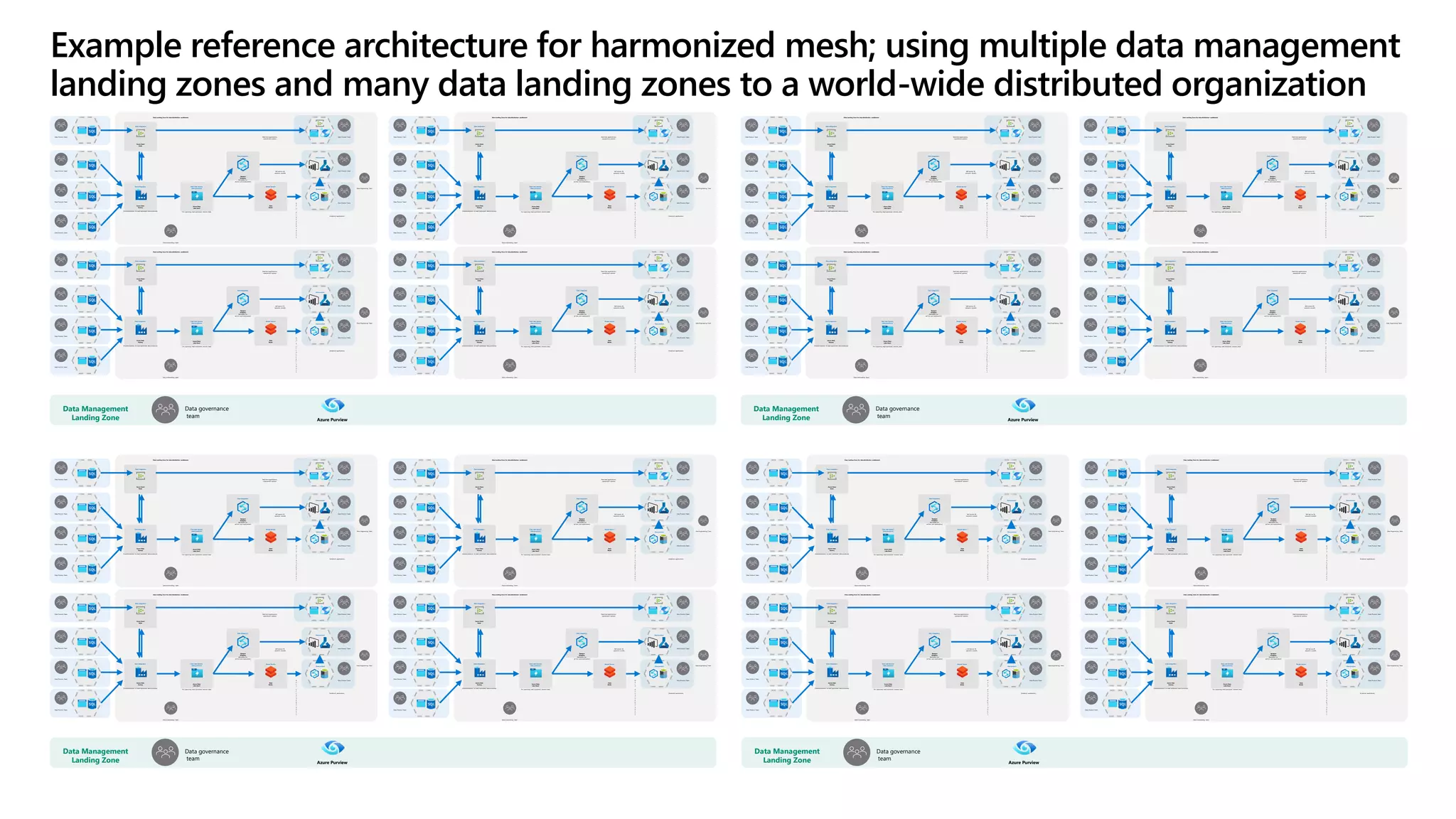

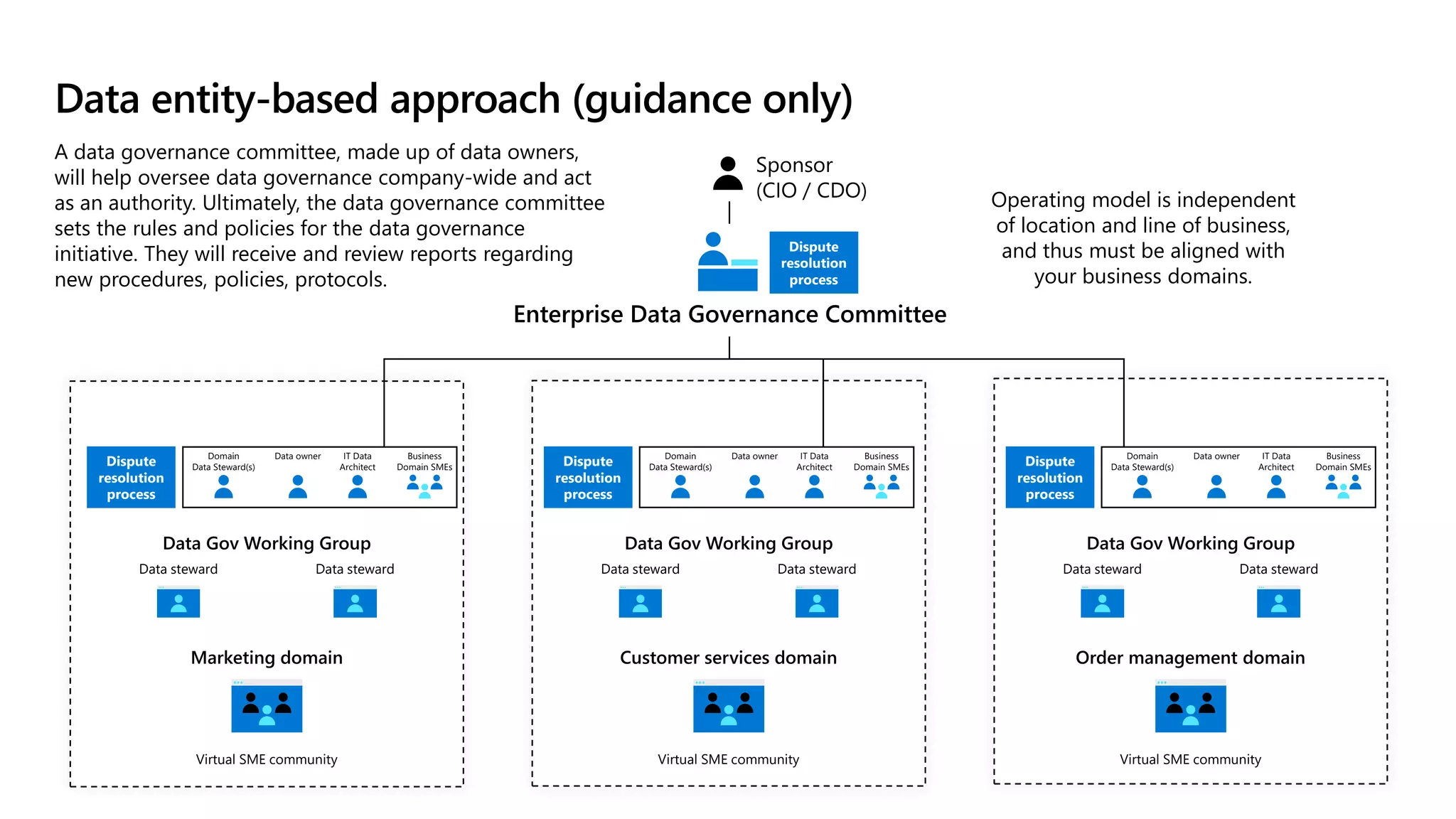

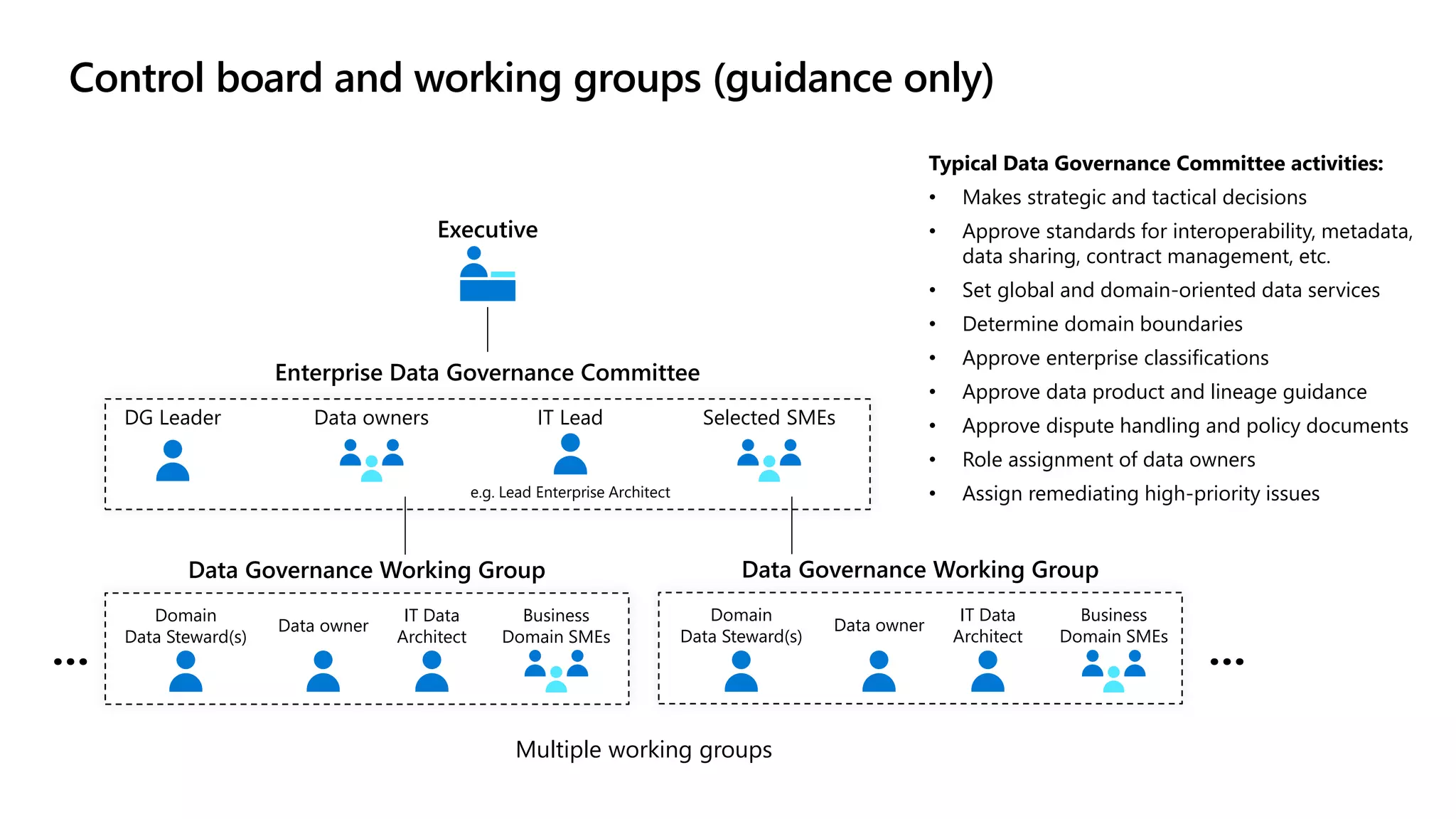

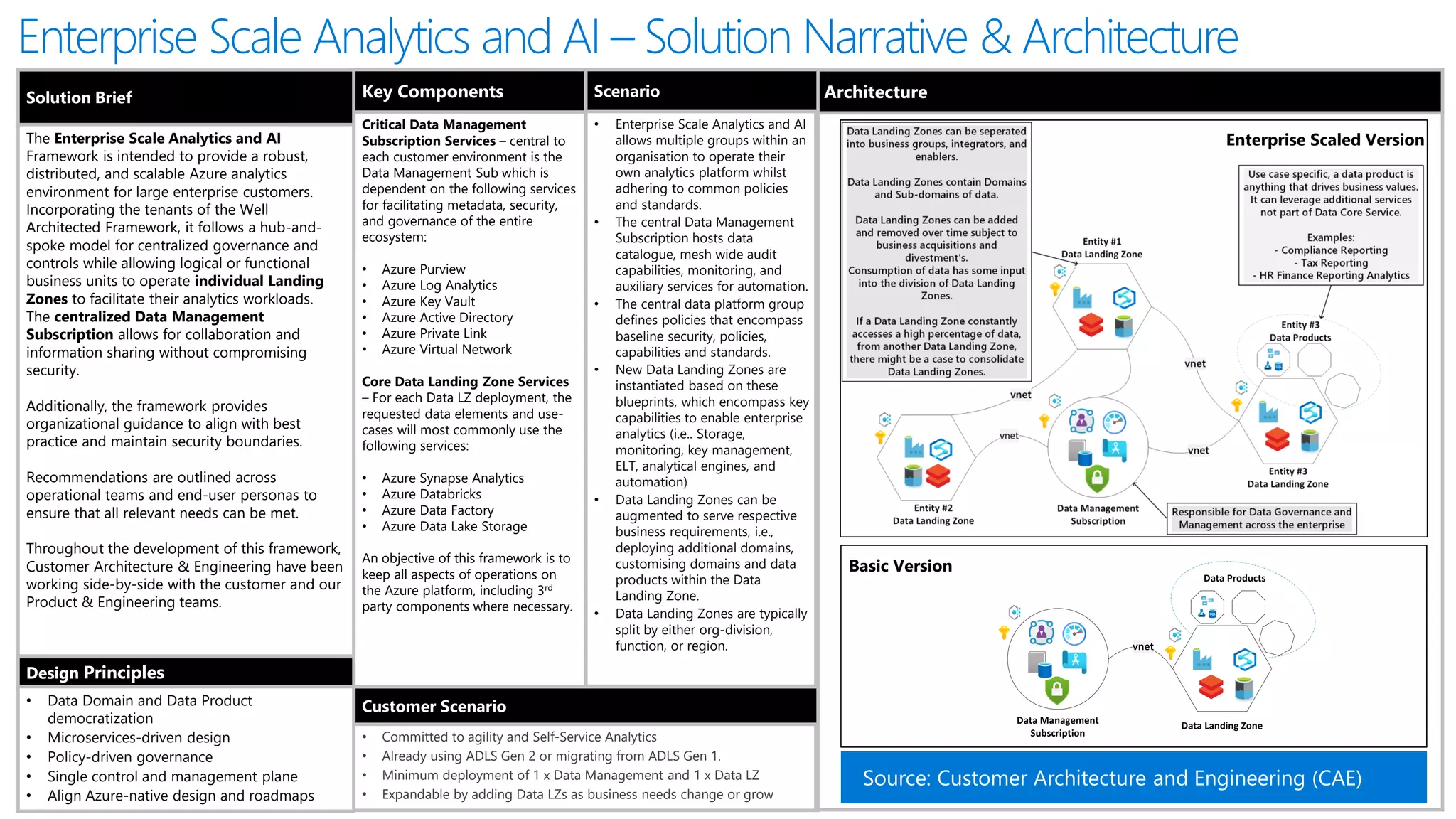

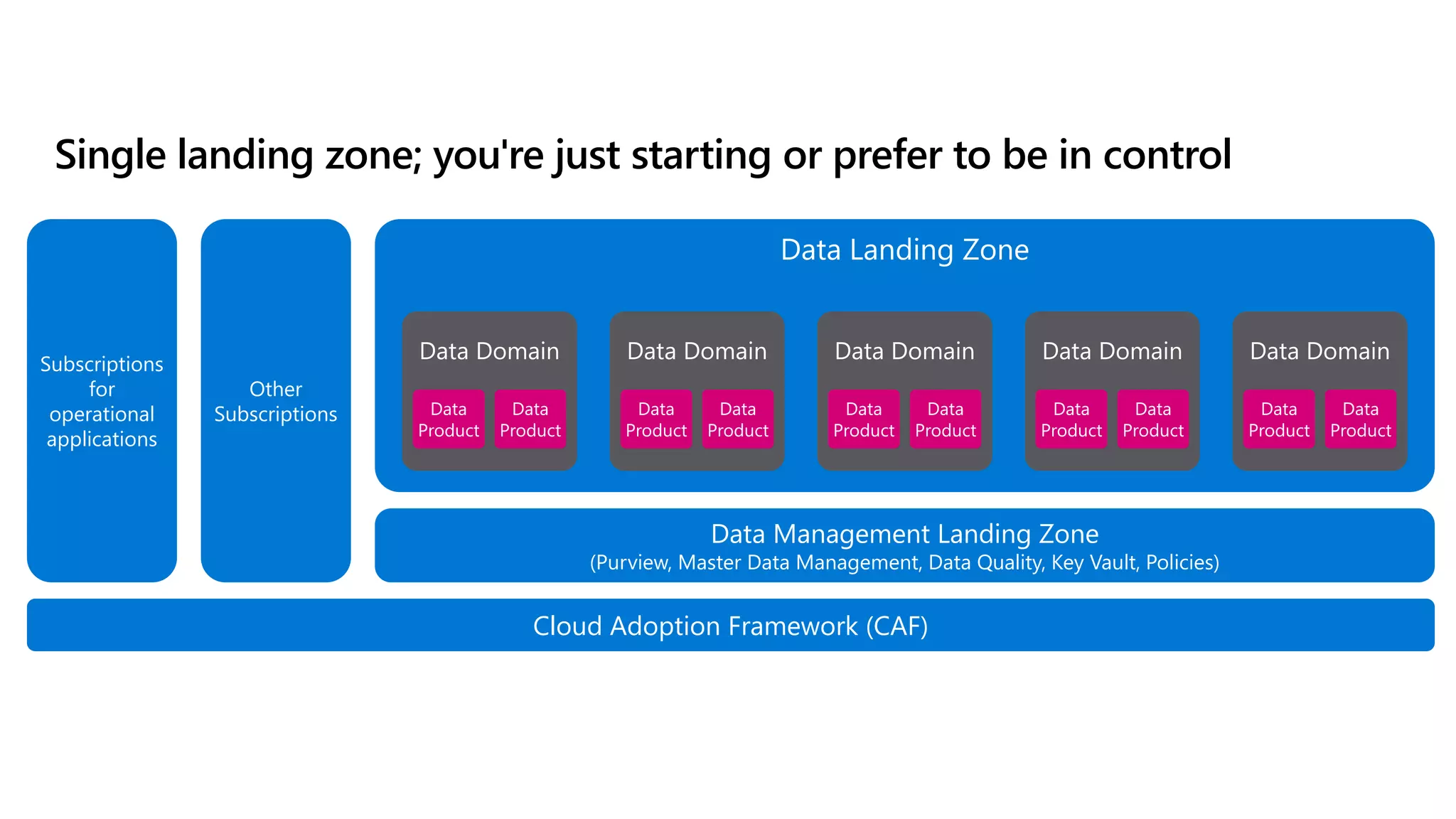

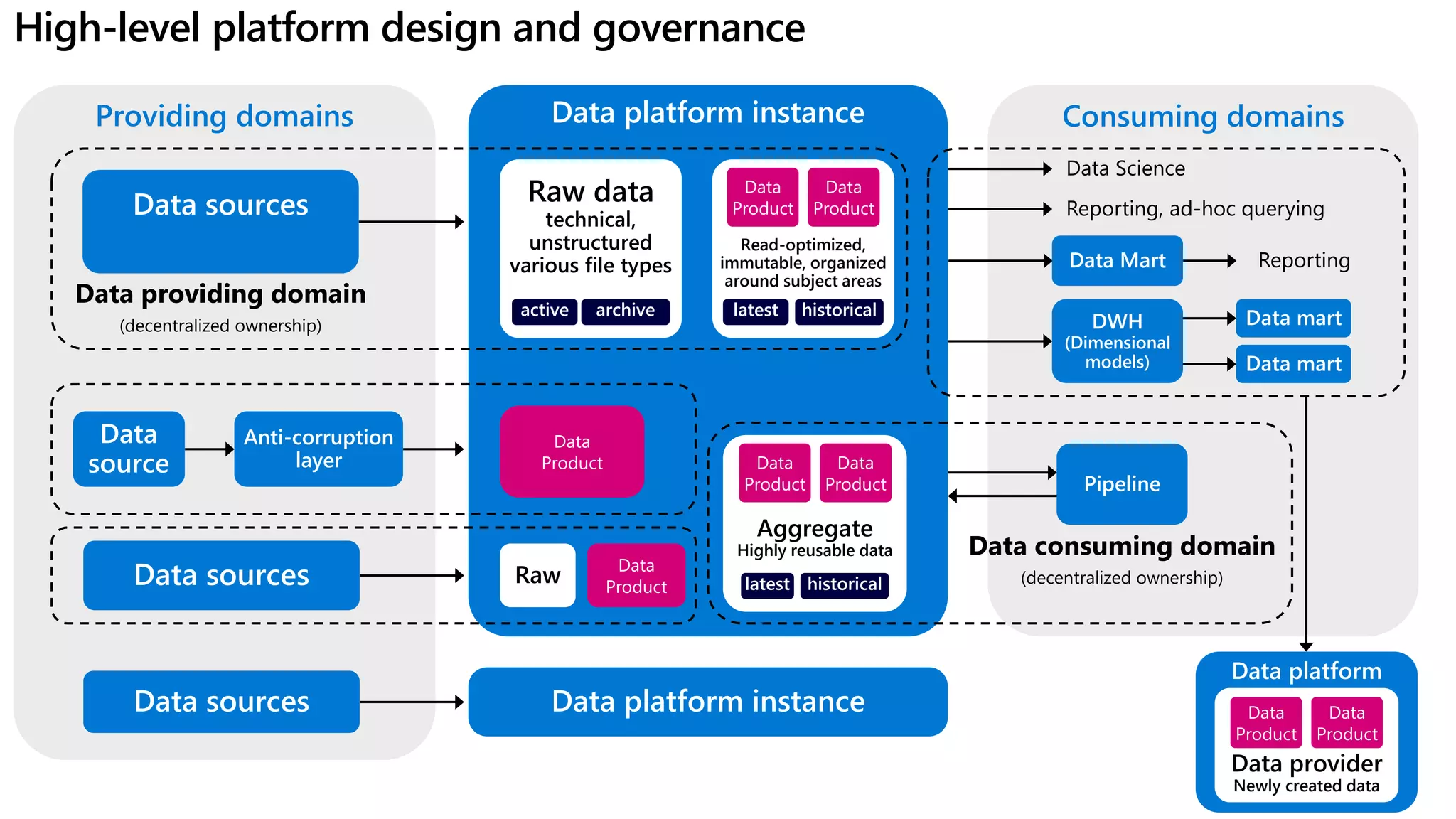

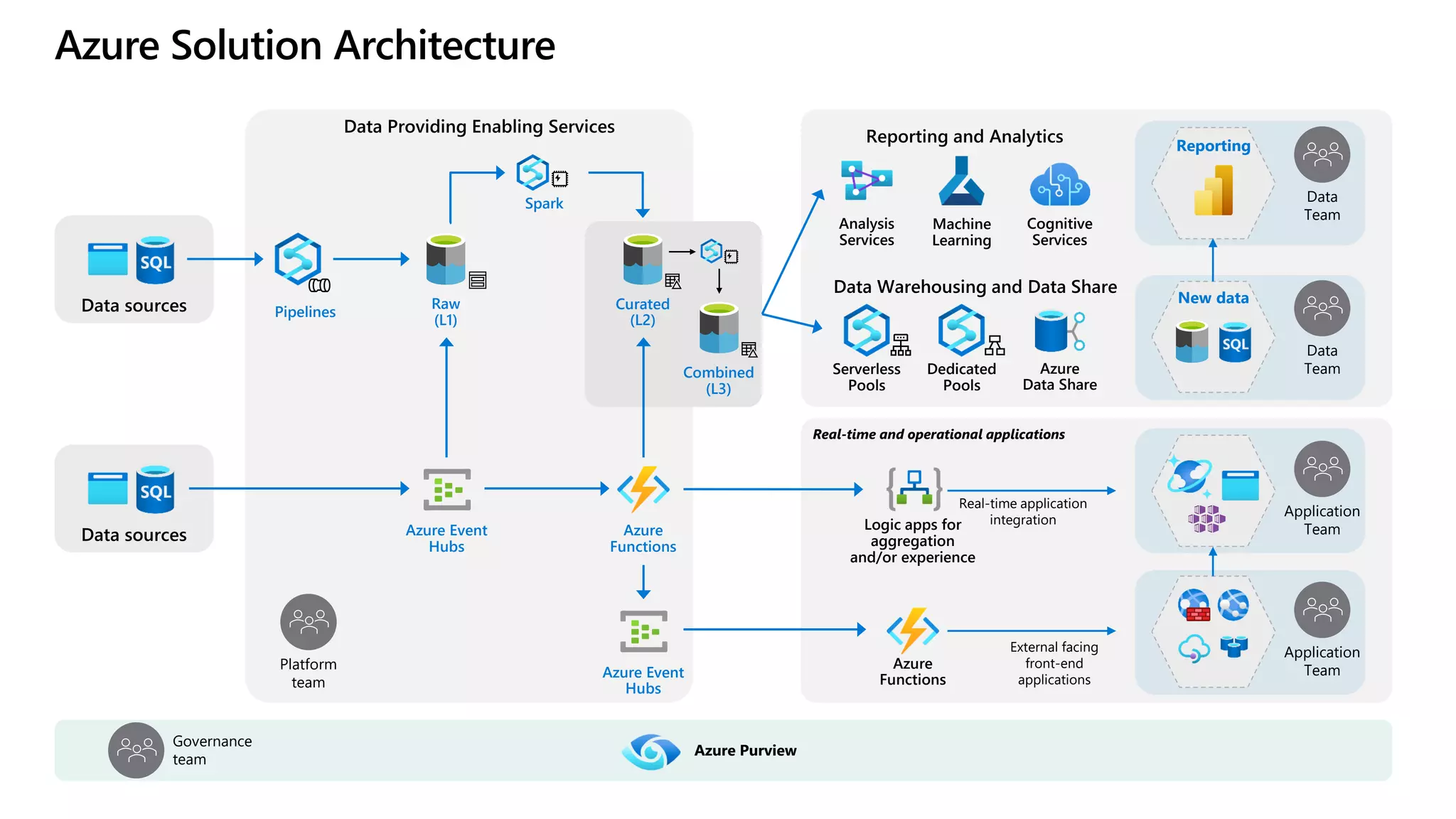

The document discusses the challenges of modern data management and ways to address them, viewed through the lens of data mesh architecture on Azure. It emphasizes decentralizing data ownership and treating data as a product to improve governance and usability across the organization. It also outlines best practices, stressing clear domain boundaries and interoperability standards as the basis for effective data consumption and management.