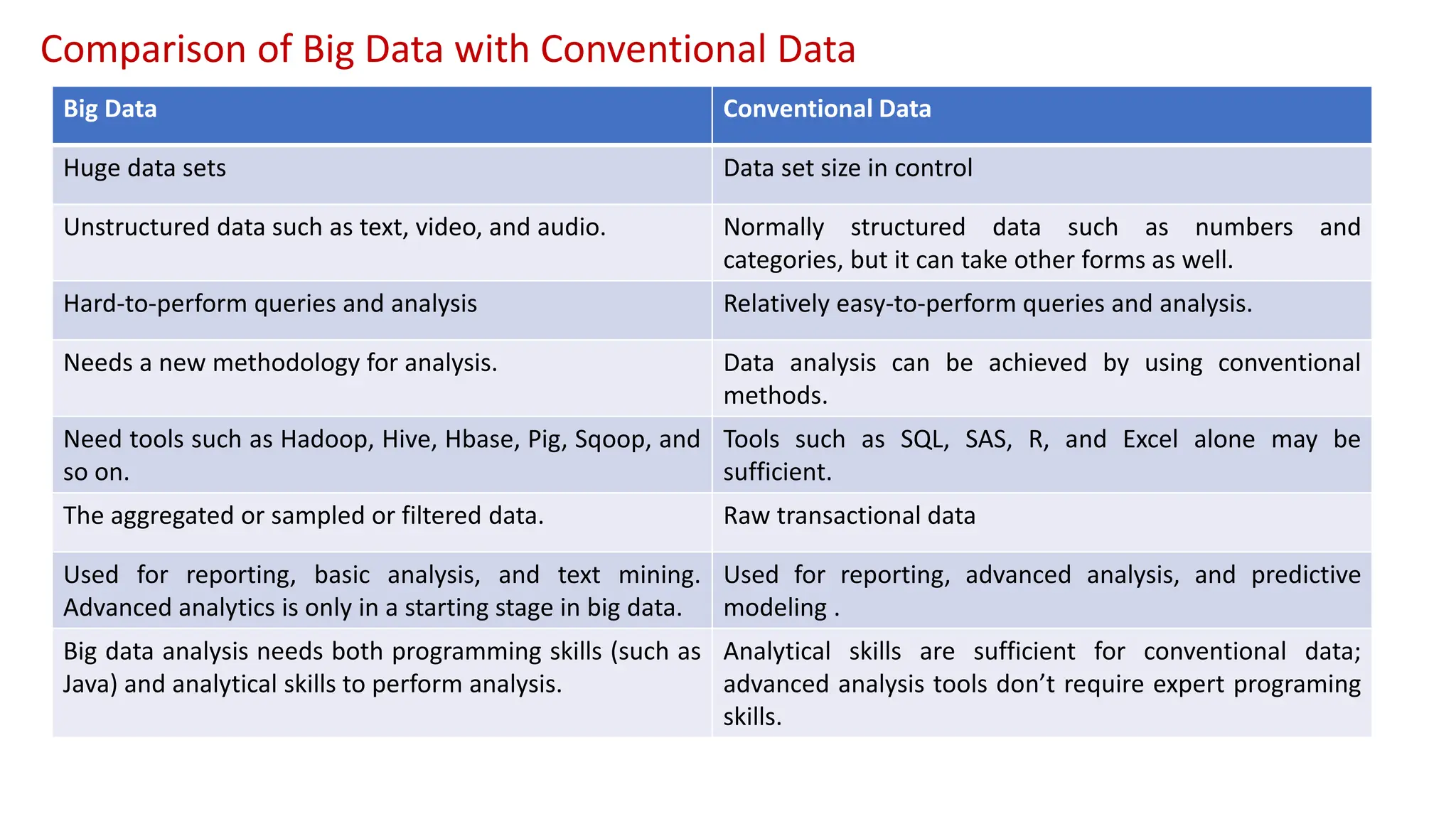

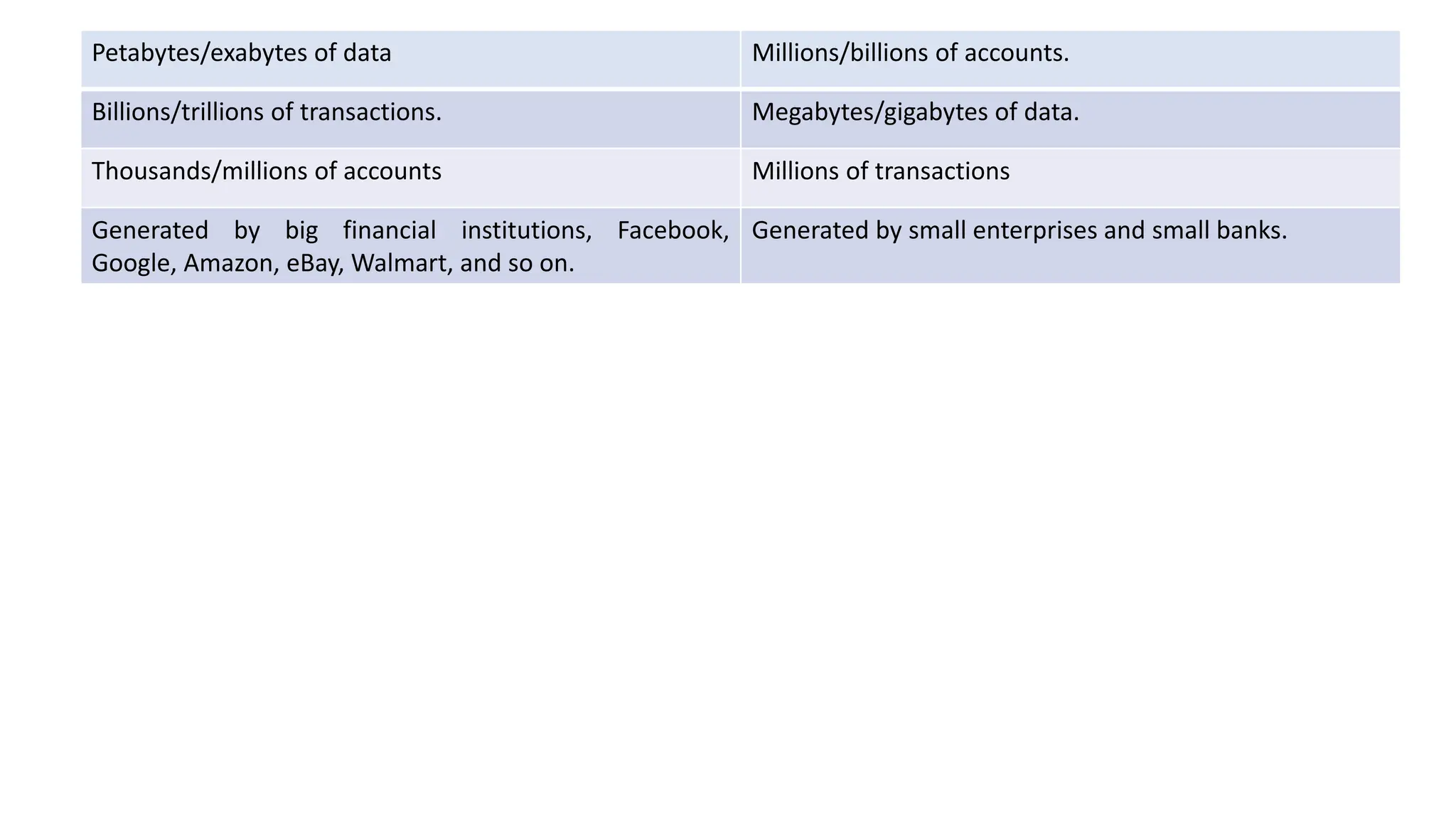

The document discusses the application of data analytics across industry verticals, highlighting specific challenges in sectors such as banking, healthcare, and education, and describes the six phases of the data analytics lifecycle. It contrasts traditional systems with big data approaches, emphasizing the limitations of conventional computing in handling large and complex datasets, and outlines the challenges of adopting big data solutions. It also addresses the talent gap and the need for efficient data management and analysis methodologies as big data evolves.