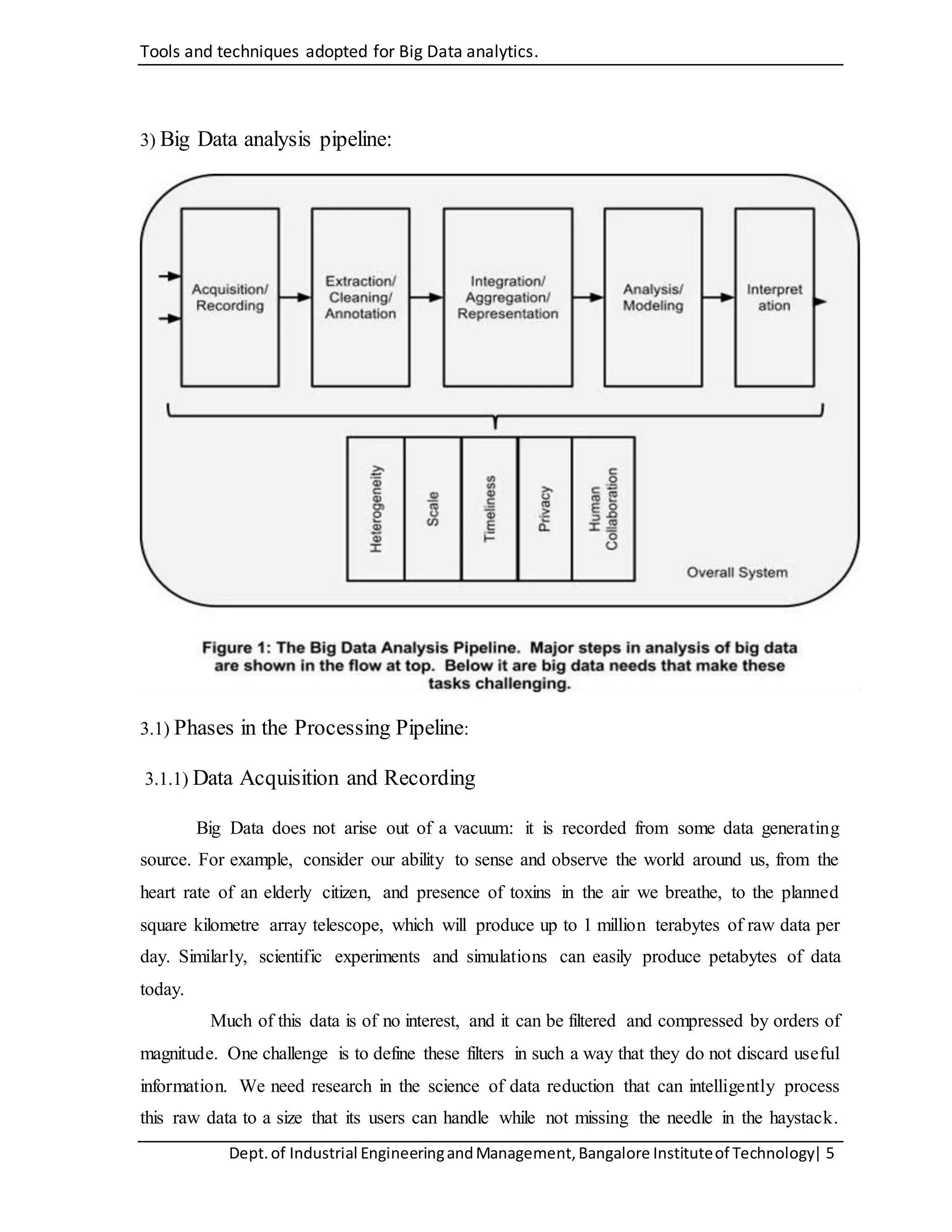

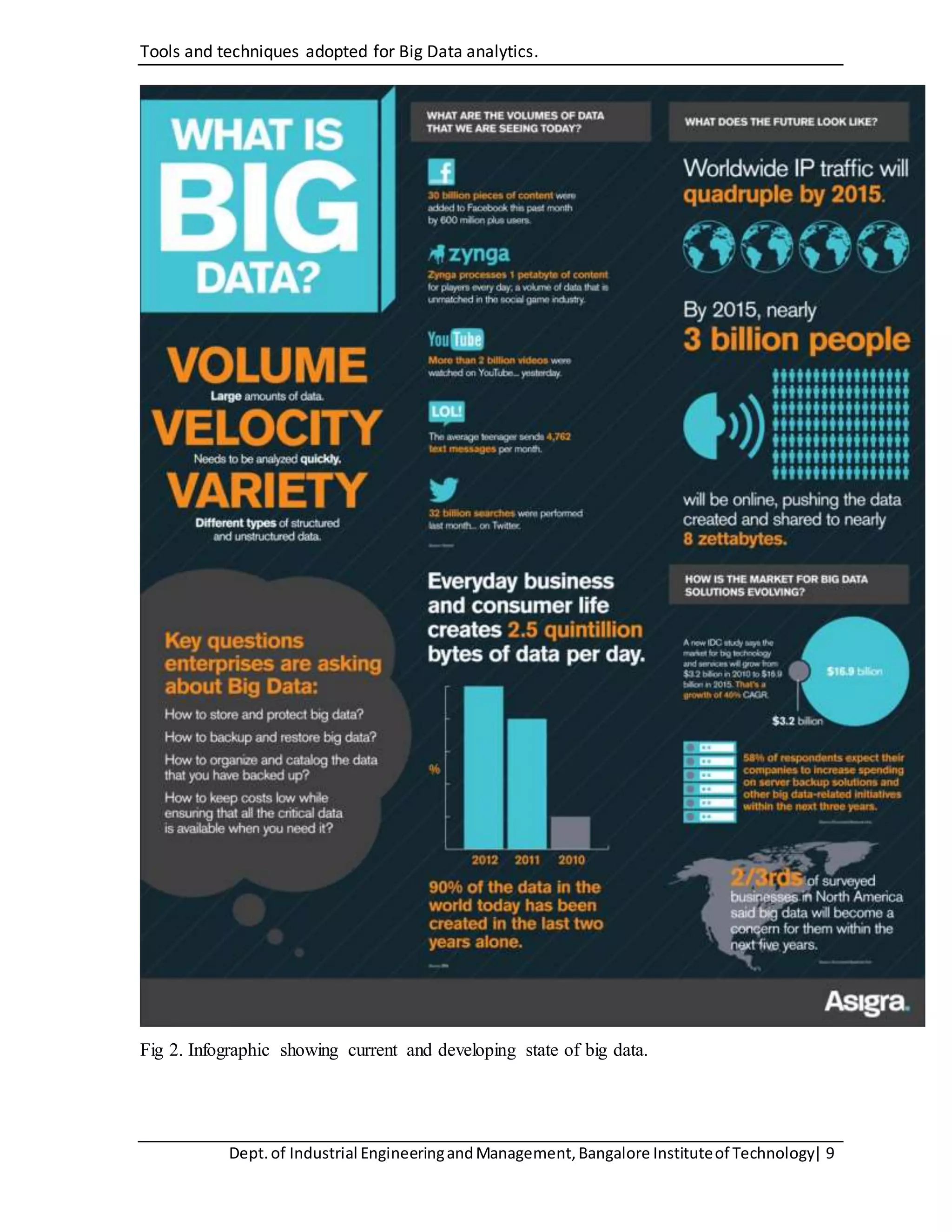

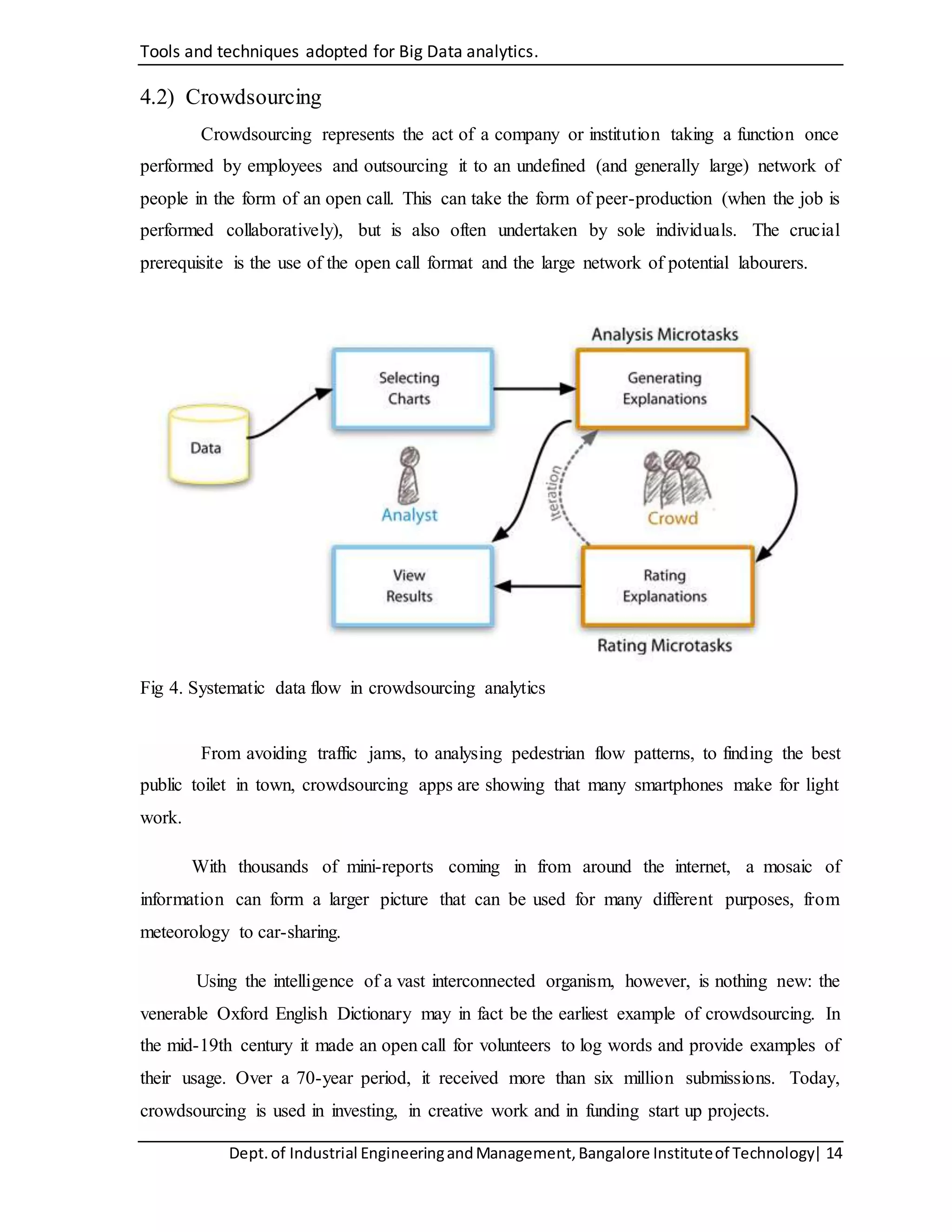

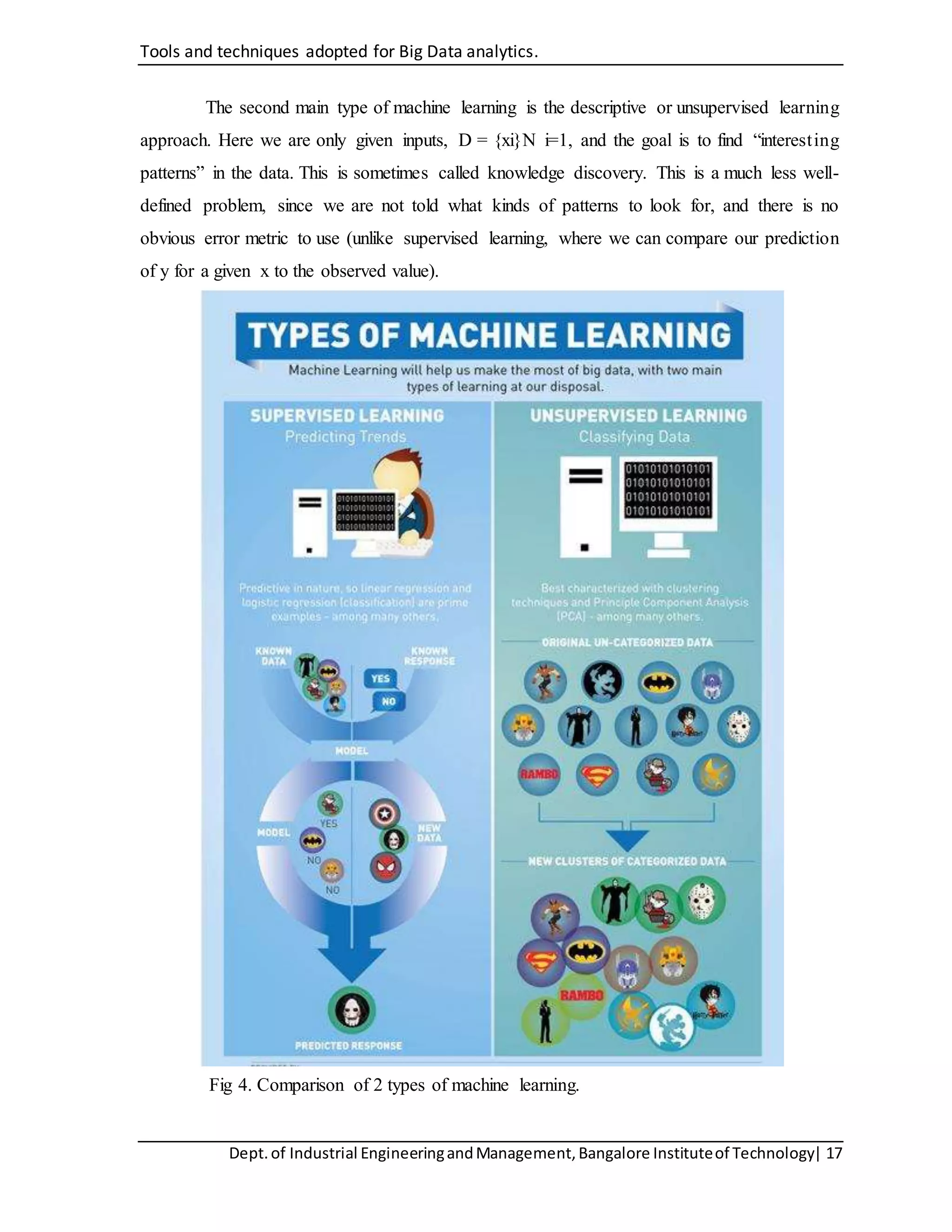

The document discusses tools and techniques for big data analytics, including A/B testing, crowdsourcing, machine learning, and data mining. It gives an overview of the big data analysis pipeline: data acquisition, information extraction, integration and representation, query processing and analysis, and interpretation. It also covers fields where big data is relevant, such as industry, healthcare, and research, and examines A/B testing, machine learning, and data mining in more detail.