Creative Interactive Browser Visualizations with Bokeh by Bryan Van de Ven

Bokeh is an interactive visualization library for Python that can be used to create browser-based plots, dashboards, and data applications. It produces static or live interactive visualizations for large datasets. Key features include high-level abstractions, interactive tools, linked views, streaming data support, and integration with Jupyter notebooks. The developer is seeking feedback to improve usability and to expand capabilities such as abstract rendering for millions of data points.
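Abstract rendering roughly means aggregating points on the server into a fixed-size grid. The browser then receives a small, image-sized array instead of every point. The sketch below illustrates only that idea in plain Python. It is not Bokeh's implementation or API, and `bin_points` is a hypothetical helper.

```python
import random
from typing import List, Sequence, Tuple


def bin_points(
    points: Sequence[Tuple[float, float]],
    width: int,
    height: int,
    x_range: Tuple[float, float],
    y_range: Tuple[float, float],
) -> List[List[int]]:
    """Count how many points fall into each cell of a width x height grid.

    Hypothetical helper: the output size depends only on the grid,
    not on how many input points there are.
    """
    x0, x1 = x_range
    y0, y1 = y_range
    grid = [[0] * width for _ in range(height)]
    for x, y in points:
        # Skip points outside the visible ranges.
        if not (x0 <= x <= x1 and y0 <= y <= y1):
            continue
        # Map coordinates to cell indices; clamp the upper edge into the last cell.
        col = min(int((x - x0) / (x1 - x0) * width), width - 1)
        row = min(int((y - y0) / (y1 - y0) * height), height - 1)
        grid[row][col] += 1
    return grid


if __name__ == "__main__":
    rng = random.Random(0)
    pts = [(rng.gauss(0, 1), rng.gauss(0, 1)) for _ in range(100_000)]
    grid = bin_points(pts, width=40, height=20, x_range=(-4, 4), y_range=(-4, 4))
    print(sum(map(sum, grid)), "points aggregated into", 40 * 20, "cells")
```

A renderer would then colour-map the grid counts. That is why this approach scales to millions of points: the payload sent to the browser stays the same size.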

Recommended

Spark Saturday: Spark SQL & DataFrame Workshop with Apache Spark 2.3

This introductory workshop is aimed at data analysts & data engineers new to Apache Spark and shows them how to analyze big data with Spark SQL and DataFrames.

In these partly instructor-led, partly self-paced labs, we will cover Spark concepts, and you’ll do labs for Spark SQL and DataFrames in Databricks Community Edition.

Toward the end, you’ll get a glimpse of the newly minted Databricks Developer Certification for Apache Spark: what to expect & how to prepare for it.

* Apache Spark Basics & Architecture

* Spark SQL

* DataFrames

* Brief Overview of Databricks Certified Developer for Apache Spark

Web-Scale Graph Analytics with Apache® Spark™

Graph analytics has a wide range of applications, from information propagation and network flow optimization to fraud and anomaly detection. The rise of social networks and the Internet of Things has given us complex web-scale graphs with billions of vertices and edges. However, in order to extract the hidden gems within those graphs, you need tools to analyze the graphs easily and efficiently.

At Spark Summit 2016, Databricks introduced GraphFrames, which implemented graph queries and pattern matching on top of Spark SQL to simplify graph analytics. In this talk, you’ll learn about work that has made graph algorithms in GraphFrames faster and more scalable. For example, new implementations like connected components have received algorithm improvements based on recent research, as well as performance improvements from Spark DataFrames. Discover lessons learned from scaling the implementation from millions to billions of nodes; compare its performance with other popular graph libraries; and hear about real-world applications.
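Connected components, mentioned above, labels every vertex with the component it belongs to. GraphFrames computes this in a distributed way on DataFrames. The single-machine union-find sketch below only illustrates what the algorithm outputs, not how GraphFrames implements it. It assumes every edge endpoint also appears in `vertices`.

```python
from typing import Dict, Hashable, Iterable, Tuple


def connected_components(
    vertices: Iterable[Hashable], edges: Iterable[Tuple[Hashable, Hashable]]
) -> Dict[Hashable, Hashable]:
    """Map each vertex to a representative vertex of its component (union-find)."""
    parent = {v: v for v in vertices}

    def find(v: Hashable) -> Hashable:
        # Path halving: point each visited node closer to its root.
        while parent[v] != v:
            parent[v] = parent[parent[v]]
            v = parent[v]
        return v

    for a, b in edges:
        ra, rb = find(a), find(b)
        if ra != rb:
            parent[rb] = ra  # merge the two components

    return {v: find(v) for v in parent}


if __name__ == "__main__":
    labels = connected_components("abcde", [("a", "b"), ("b", "c"), ("d", "e")])
    print(labels)
```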

Real Time analytics with Druid, Apache Spark and Kafka

Presentation from the Druid meetup in Tel Aviv, November 2019.

Presenting the architecture we've built at Outbrain for a real-time analytics dashboard based on Druid, Spark Streaming and Kafka.

Simplify Data Conversion from Spark to TensorFlow and PyTorch

In this talk, I would like to introduce an open-source tool built by our team that simplifies the data conversion from Apache Spark to deep learning frameworks.

Imagine you have a large dataset, say 20 GB, and you want to use it to train a TensorFlow model. Before feeding the data to the model, you need to clean and preprocess your data using Spark. Now you have your dataset in a Spark DataFrame. When it comes to the training part, you may run into the problem: how can I convert my Spark DataFrame to a format recognized by my TensorFlow model?

The existing data conversion process can be tedious. For example, to convert an Apache Spark DataFrame to a TensorFlow Dataset file format, you need to either save the Apache Spark DataFrame on a distributed filesystem in parquet format and load the converted data with third-party tools such as Petastorm, or save it directly in TFRecord files with spark-tensorflow-connector and load it back using TFRecordDataset. Both approaches take more than 20 lines of code to manage the intermediate data files, rely on different parsing syntax, and require extra attention for handling vector columns in the Spark DataFrames. In short, all these engineering frictions greatly reduce data scientists’ productivity.

The Databricks Machine Learning team contributed a new Spark Dataset Converter API to Petastorm to simplify these tedious data conversion process steps. With the new API, it takes a few lines of code to convert a Spark DataFrame to a TensorFlow Dataset or a PyTorch DataLoader with default parameters.

In the talk, I will use an example to show how to use the Spark Dataset Converter to train a TensorFlow model and how simple it is to go from single-node training to distributed training on Databricks.

Graph-Powered Machine Learning

Many powerful Machine Learning algorithms are based on graphs, e.g., PageRank (Pregel), Recommendation Engines (collaborative filtering), text summarization, and other NLP tasks. Also, recent developments in Graph Neural Networks connect the worlds of Graphs and Machine Learning even further.

Data pre-processing and feature engineering, both vital tasks in Machine Learning pipelines, extend this relationship across the entire ecosystem. In this session, we will investigate the entire range of Graphs and Machine Learning with many practical exercises.
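PageRank, the first algorithm listed, can be computed by power iteration. Here is a minimal sketch of the textbook formulation, not the Pregel implementation referenced above. It assumes a damping factor of 0.85, that nodes with no out-links spread their rank evenly over all nodes, and that every link target is also a key of `graph`.

```python
from typing import Dict, List


def pagerank(
    graph: Dict[str, List[str]], damping: float = 0.85, iterations: int = 100
) -> Dict[str, float]:
    """Power-iteration PageRank over an adjacency list {node: [out-neighbours]}."""
    nodes = list(graph)
    n = len(nodes)
    rank = {v: 1.0 / n for v in nodes}
    for _ in range(iterations):
        # Rank held by dangling nodes (no out-links) is redistributed uniformly.
        dangling = sum(rank[v] for v in nodes if not graph[v])
        new = {v: (1.0 - damping) / n + damping * dangling / n for v in nodes}
        for v in nodes:
            out = graph[v]
            for w in out:
                # Each node splits its damped rank equally among its out-links.
                new[w] += damping * rank[v] / len(out)
        rank = new
    return rank


if __name__ == "__main__":
    print(pagerank({"a": ["c"], "b": ["c"], "c": []}))
```

The ranks always sum to 1, so they can be read as the probability that a random surfer is on each node.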

Graph based data models

This is an introduction to and overview of the latest developments in NoSQL and graph-based data models.

dbt Python models - GoDataFest by Guillermo Sanchez

Apache Spark GraphX & GraphFrame Synthetic ID Fraud Use Case

Power of Graph Analytics and Synthetic ID Fraud Detection using Apache Spark, GraphX, GraphFrames

Large Scale Graph Analytics with JanusGraph

Slides from my DataWorks Summit presentation on JanusGraph

Data visualization

A walk through the maze of understanding Data Visualization using several tools such as Python, R, KNIME and Google Data Studio.

This workshop is hands-on, and this set of presentations serves as the workshop agenda.

DBpedia InsideOut

Introduction to DBpedia, the most popular and interconnected source of Linked Open Data. Part of EXPLORING WIKIDATA AND THE SEMANTIC WEB FOR LIBRARIES at METRO http://metro.org/events/598/

Key-Value NoSQL Database

This presentation describes the NoSQL data model and consistency, and introduces key-value databases.

01 Introduction to Knowledge Engineering

'Introduction to Knowledge Engineering' is the first lecture of 'Knowledge Engineering' course at Baku Engineering University, 2019 Fall

OSA Con 2022 - Apache Iceberg_ An Architectural Look Under the Covers - Alex ...

OSA Con 2022: Apache Iceberg: An Architectural Look Under the Covers

Alex Merced - Dremio

The data lakehouse is one of the most exciting trends in the data space promising to merge the best aspects of data lakes and data warehouses without either of their problems. Open source tech is making this promise a reality and in this talk Dremio Developer Advocate, Alex Merced, explores these technologies.

In this talk Alex Merced will cover:

- What is a Data Lakehouse?

- Why open matters in preserving the promise of lakehouses (better costs, vendor freedom, data freedom)

- Which technologies enable lakehouses, such as Apache Iceberg, Apache Parquet, Apache Arrow and Project Nessie

Introduction to ML with Apache Spark MLlib

Machine learning is overhyped nowadays. There is a strong belief that this area is exclusively for data scientists with a deep mathematical background who leverage the Python (scikit-learn, Theano, TensorFlow, etc.) or R ecosystems and use specific tools like Matlab, Octave or similar. Of course, there is a big grain of truth in this statement, but we Java engineers can also take the best of the machine learning universe from an applied perspective by using our native language and familiar frameworks like Apache Spark. During this introductory presentation, you will get acquainted with the simplest machine learning tasks and algorithms, like regression, classification and clustering, widen your outlook, and use Apache Spark MLlib to distinguish pop music from heavy metal and simply have fun.

Source code: https://github.com/tmatyashovsky/spark-ml-samples

Design by Yarko Filevych: http://filevych.com/

Flux and InfluxDB 2.0 by Paul Dix

In this InfluxDays NYC 2019 talk, InfluxData Founder & CTO Paul Dix will outline his vision around the platform and its new data scripting and query language Flux, and he will give the latest updates on InfluxDB time series database. This talk will walk through the vision and architecture with demonstrations of working prototypes of the projects.

DVC - Git-like Data Version Control for Machine Learning projects

DVC is an open-source tool for versioning datasets, artifacts, and models in Machine Learning projects.

This extremely powerful tool allows you to leverage an intuitive git-like interface to seamlessly

1. track dataset version updates

2. have reproducible and shareable machine learning pipelines (e.g., model training)

3. compare model performance scores

4. integrate your data and model versioning with git

5. deploy the desired version of your trained models
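Git-like dataset versioning rests on content addressing. A file is identified by a hash of its bytes, and only that small hash needs to live in git. DVC records an MD5 checksum for tracked outputs in its `.dvc` metafiles. The sketch below is not DVC code; it only shows how a content hash detects that a dataset changed. `file_md5` is a hypothetical helper.

```python
import hashlib
import os
import tempfile


def file_md5(path: str, chunk_size: int = 1 << 20) -> str:
    """Return the hex MD5 digest of a file, reading it in chunks to bound memory."""
    digest = hashlib.md5()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        data = os.path.join(tmp, "data.csv")
        with open(data, "w") as f:
            f.write("id,label\n1,cat\n")
        v1 = file_md5(data)
        with open(data, "a") as f:
            f.write("2,dog\n")  # any edit to the bytes changes the hash
        v2 = file_md5(data)
        print("changed" if v1 != v2 else "unchanged")
```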

Spark SQL Deep Dive @ Melbourne Spark Meetup

Michael gave a talk at Melbourne Spark Meetup on Spark SQL Deep Dive.

Koalas: Making an Easy Transition from Pandas to Apache Spark

Koalas is an open-source project that aims at bridging the gap between big data and small data for data scientists and at simplifying Apache Spark for people who are already familiar with pandas library in Python. Pandas is the standard tool for data science and it is typically the first step to explore and manipulate a data set, but pandas does not scale well to big data.

Interactive Visualization With Bokeh (SF Python Meetup)

Bokeh is an interactive web visualization framework for Python, in the spirit of D3 but designed for non-Javascript programmers, and architected to be driven by server-side data and object model changes. Learn more about it and play with online demos at http://bokeh.pydata.org.

These slides are from a talk at San Francisco Python Meetup on September 10, 2014

Anaconda and PyData Solutions

Using Anaconda and PyData to Rapidly Build Big-Data Analytics and Visualization Solutions.

Viewers also liked

Python as the Zen of Data Science

A description of how Python and particularly Anaconda helps achieve Data Science Nirvana.

Python Visualisation for Data Science

My personal usage of Python Visualisation Libraries for Data Science

Bids talk 9.18

Using Anaconda to light up dark data. My talk given to the Berkeley Institute of Data Science describing Anaconda and the Blaze ecosystem for bringing a virtual analytical database to your data.

The Top Skills That Can Get You Hired in 2017

We analyzed all the recruiting activity on LinkedIn this year and identified the Top Skills employers seek. Starting Oct 24, learn these skills and much more for free during the Week of Learning.

#AlwaysBeLearning https://learning.linkedin.com/week-of-learning

Similar to Creative Interactive Browser Visualizations with Bokeh by Bryan Van de Ven

PyData London Bokeh Tutorial - Bryan Van de Ven

PyData London 2014 Bokeh Tutorial given by Bryan Van de Ven.

Using Python with Power BI

Learn the basics to get started using Python with Power BI. We show you how to set up the software and what libraries are needed. Learn how to use Python scripts to create data, connect to an existing data source, build a report and transform data with Power Query Editor.

See the video and download this deck: https://senturus.com/resources/python-with-power-bi/

Senturus offers a full spectrum of services for business analytics. Our resource library has hundreds of free live and recorded webinars, blog posts, demos and unbiased product reviews available on our website at: https://senturus.com/resources/

Continuum Analytics and Python

Talk given to the Philly Python Users Group (PUG) on October 1, 2015: http://www.meetup.com/phillypug/ Thanks SIG (http://www.sig.com) for hosting!

Reveal's Advanced Analytics: Using R & Python

Learn how you can use Reveal’s R & Python scripting capability to bring advanced data preparation, deeper analytics, and richer visualizations to your users!

H2O Deep Water - Making Deep Learning Accessible to Everyone

Deep Water is H2O's integration with multiple open source deep learning libraries such as TensorFlow, MXNet and Caffe. On top of the performance gains from GPU backends, Deep Water naturally inherits all H2O properties in scalability, ease of use and deployment. In this talk, I will go through the motivation and benefits of Deep Water. After that, I will demonstrate how to build and deploy deep learning models with or without programming experience using H2O's R/Python/Flow (Web) interfaces.

Jo-fai (or Joe) is a data scientist at H2O.ai. Before joining H2O, he was in the business intelligence team at Virgin Media in the UK, where he developed data products to enable quick and smart business decisions. He also worked remotely for Domino Data Lab in the US as a data science evangelist promoting products via blogging and giving talks at meetups. Joe has a background in water engineering. Before his data science journey, he was an EngD research engineer at the STREAM Industrial Doctorate Centre working on machine learning techniques for drainage design optimization. Prior to that, he was an asset management consultant specializing in data mining and constrained optimization for the utilities sector in the UK and abroad. He also holds an MSc in Environmental Management and a BEng in Civil Engineering.

Project "Deep Water"

This is my Deep Water talk for the TensorFlow Paris meetup.

Deep Water is H2O's integration with multiple open source deep learning libraries such as TensorFlow, MXNet and Caffe. On top of the performance gains from GPU backends, Deep Water naturally inherits all H2O properties in scalability, ease of use and deployment.

Open web platform talk by daniel hladky at rif 2012 (19 april 2012 moscow)

My talk under W3C Russia at the RIF2012 conference in Moscow/Russia.

Open Source Junction: Apache Wookie and W3C Widgets

Presentation at Open Source Junction in Oxford, 30 March 2011

Open Source DataViz with Apache Superset

Open Source DataViz with Apache Superset. Presentation for Data Science Monterrey Meetup

Streaming Visualization

Most data visualisation solutions today still work on data sources that are stored persistently in a data store, using the so-called “data at rest” paradigm. More and more data sources today provide a constant stream of data, from IoT devices to social media streams. These data streams publish at high velocity, and messages often have to be processed as quickly as possible. Stream processing solutions are available for processing and analysing this data, but they provide minimal or no visualisation capabilities. One way is to first persist the data into a data store and then use a traditional data visualisation solution to present it.

If latency is not an issue, such a solution might be good enough. Another question is which data store is needed to keep up with the high write and read load. If it is not an RDBMS but a NoSQL database, not all traditional visualisation tools may integrate with that specific data store. Another option is to use a streaming visualisation solution. These are built specifically for streaming data and often do not support batch data. A much better solution would be a single tool capable of handling both batch and streaming data. This talk presents different architecture blueprints for integrating data visualisation into a fast data solution and highlights some of the products available to implement these blueprints.

Big Data and NoSQL for Database and BI Pros

Big Data and NoSQL for Database and BI Pros - PASS Business Analytics Conference 2013

Intro to H2O Machine Learning in Python - Galvanize Seattle

Erin LeDell presents Intro to H2O Machine Learning in Python at Galvanize Seattle, 02.02.16

- Powered by the open source machine learning software H2O.ai. Contributors welcome at: https://github.com/h2oai

- To view videos on H2O open source machine learning software, go to: https://www.youtube.com/user/0xdata

More from PyData

Michal Mucha: Build and Deploy an End-to-end Streaming NLP Insight System | P...

At this workshop, you will build your own messaging insights system - data ingestion from a live data source (Reddit), queueing, deploying a machine learning model, and serving messages with insights to your mobile phone!

Unit testing data with marbles - Jane Stewart Adams, Leif Walsh

In the same way that we need to make assertions about how code functions, we need to make assertions about data, and unit testing is a promising framework. In this talk, we'll explore what is unique about unit testing data, and see how Two Sigma's open source library Marbles addresses these unique challenges in several real-world scenarios.
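Making assertions about data, rather than code, can be illustrated with the standard `unittest` module. This is not the Marbles API. The records and rules below (unique ids, non-negative amounts, known currency codes) are hypothetical examples.

```python
import unittest
from typing import Dict, List

# Hypothetical records standing in for a dataset loaded from a file or table.
RECORDS = [
    {"id": 1, "amount": 12.5, "currency": "USD"},
    {"id": 2, "amount": 0.0, "currency": "EUR"},
    {"id": 3, "amount": 7.25, "currency": "USD"},
]

ALLOWED_CURRENCIES = {"USD", "EUR", "GBP"}


def check_records(records: List[Dict]) -> List[str]:
    """Return human-readable rule violations; an empty list means the data passed."""
    problems = []
    ids = [r["id"] for r in records]
    if len(ids) != len(set(ids)):
        problems.append("duplicate ids")
    for r in records:
        if r["amount"] < 0:
            problems.append(f"id {r['id']}: negative amount {r['amount']}")
        if r["currency"] not in ALLOWED_CURRENCIES:
            problems.append(f"id {r['id']}: unknown currency {r['currency']!r}")
    return problems


class TestRecords(unittest.TestCase):
    def test_records_pass_all_rules(self):
        # The failure message lists every violation, not just the first one.
        problems = check_records(RECORDS)
        self.assertEqual(problems, [], "\n".join(problems))
```

Run it with `python -m unittest`. The rules sit in one plain function, so they can also be reused as a validation step outside the test suite.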

The TileDB Array Data Storage Manager - Stavros Papadopoulos, Jake Bolewski

TileDB is an open-source storage manager for multi-dimensional sparse and dense array data. It has a novel architecture that addresses some of the pain points in storing array data on “big-data” and “cloud” storage architectures. This talk will highlight TileDB’s design and its ability to integrate with analysis environments relevant to the PyData community such as Python, R, Julia, etc.

Using Embeddings to Understand the Variance and Evolution of Data Science... ...

In this talk I will discuss exponential family embeddings, which are methods that extend the idea behind word embeddings to other data types. I will describe how we used dynamic embeddings to understand how data science skill-sets have transformed over the last 3 years using our large corpus of jobs. The key takeaway is that these models can enrich analysis of specialized datasets.

Deploying Data Science for Distribution of The New York Times - Anne Bauer

How many newspapers should be distributed to each store for sale every day? The data science group at The New York Times addresses this optimization problem using custom time series modeling and analytical solutions, while also incorporating qualitative business concerns. I'll describe our modeling and data engineering approaches, written in Python and hosted on Google Cloud Platform.
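The allocation question above resembles the classic newsvendor problem. The abstract does not say this is the Times' method; it is shown only as the textbook baseline. That baseline stocks the demand quantile at the critical ratio (p - c) / (p - s), where p is the sale price, c the unit cost, and s the salvage value of an unsold copy. The demand history and prices in the example are made up.

```python
import math
from typing import Sequence


def newsvendor_quantity(
    demand_samples: Sequence[int], price: float, cost: float, salvage: float = 0.0
) -> int:
    """Order quantity at the critical-ratio quantile of empirical demand."""
    underage = price - cost   # profit lost per unit of unmet demand
    overage = cost - salvage  # loss per unsold unit
    ratio = underage / (underage + overage)
    ordered = sorted(demand_samples)
    # Smallest sample q such that the empirical P(demand <= q) >= ratio.
    index = max(math.ceil(ratio * len(ordered)) - 1, 0)
    return ordered[index]


if __name__ == "__main__":
    history = [12, 9, 15, 11, 14, 10, 13, 16, 8, 12]  # made-up daily sales at one store
    print(newsvendor_quantity(history, price=3.0, cost=1.0))
```

A higher margin relative to the cost of unsold copies pushes the ratio toward 1, so the store is stocked closer to its highest observed demand.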

Graph Analytics - From the Whiteboard to Your Toolbox - Sam Lerma

However, the graph theory jargon can make graph analytics seem more intimidating for self-study than is necessary. In this talk, the audience will be exposed to some of the basic concepts of graph theory (no prerequisite math knowledge needed!) and a few of the Python tools available for graph analysis.

Do Your Homework! Writing tests for Data Science and Stochastic Code - David ...

To productionize data science work (and have it taken seriously by software engineers, CTOs, clients, or the open source community), you need to write tests! Except… how can you test code that performs nondeterministic tasks like natural language parsing and modeling? This talk presents an approach to testing probabilistic functions in code, illustrated with concrete examples written for Pytest.
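A common way to test nondeterministic code, not necessarily the approach presented in the talk, has two parts. First, fix the random seed so results are reproducible. Second, assert on statistical properties within a tolerance rather than on exact values. Below is a pytest-style sketch in which `noisy_mean_estimate` is a hypothetical function under test.

```python
import random
import statistics


def noisy_mean_estimate(n: int, rng: random.Random) -> float:
    """Hypothetical function under test: mean of n draws from Normal(5, 2)."""
    return statistics.fmean([rng.gauss(5.0, 2.0) for _ in range(n)])


def test_estimate_is_reproducible_with_seed():
    # Same seed, same output, so reruns never flake.
    a = noisy_mean_estimate(100, random.Random(42))
    b = noisy_mean_estimate(100, random.Random(42))
    assert a == b


def test_estimate_is_close_to_true_mean():
    # Standard error is 2 / sqrt(10_000) = 0.02, so 0.1 is a 5-sigma tolerance.
    estimate = noisy_mean_estimate(10_000, random.Random(7))
    assert abs(estimate - 5.0) < 0.1
```

The tolerance is derived from the standard error rather than picked by eye. A failure then signals a real bug, not bad luck.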

RESTful Machine Learning with Flask and TensorFlow Serving - Carlo Mazzaferro

Those of us who use TensorFlow often focus on building the model that's most predictive, not the one that's most deployable. So how to put that hard work to work? In this talk, we'll walk through a strategy for taking your machine learning models from Jupyter Notebook into production and beyond.

Mining dockless bikeshare and dockless scootershare trip data - Stefanie Brod...

In September 2017, dockless bikeshare joined the transportation options in the District of Columbia. In March 2018, scooter share followed. During the pilot of these technologies, Python has helped the District Department of Transportation answer some critical questions. This talk will discuss how Python was used to answer research questions and how it supported the evaluation of this demonstration.

Avoiding Bad Database Surprises: Simulation and Scalability - Steven Lott

There are many stories of developers creating databases that don't operate at scale. The application is good, but the database won't work with realistic volumes of data. It's like a horror movie where they never looked behind the door, ran into the dark forest at night, and discovered the database was the monster killing their application. How can we leverage Python to avoid scaling problems?
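One way to "look behind the door" early is to load the schema with synthetic data at a realistic volume and measure how queries behave. Below is a minimal sketch using the standard-library `sqlite3` module. The `orders` table, row counts, and index are hypothetical, and SQLite stands in for whatever database you actually deploy.

```python
import random
import sqlite3
import time


def build_orders(conn: sqlite3.Connection, rows: int, seed: int = 0) -> None:
    """Create a hypothetical orders table and fill it with synthetic rows."""
    rng = random.Random(seed)
    conn.execute(
        "CREATE TABLE orders (id INTEGER PRIMARY KEY, customer INTEGER, total REAL)"
    )
    conn.executemany(
        "INSERT INTO orders (customer, total) VALUES (?, ?)",
        ((rng.randrange(10_000), round(rng.uniform(1, 500), 2)) for _ in range(rows)),
    )
    conn.commit()


def time_lookup(conn: sqlite3.Connection, customer: int) -> float:
    """Seconds taken to total one customer's orders."""
    start = time.perf_counter()
    conn.execute(
        "SELECT SUM(total) FROM orders WHERE customer = ?", (customer,)
    ).fetchone()
    return time.perf_counter() - start


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    build_orders(conn, rows=100_000)
    before = time_lookup(conn, 42)
    conn.execute("CREATE INDEX idx_orders_customer ON orders (customer)")
    after = time_lookup(conn, 42)
    # Absolute timings vary by machine; compare them at the volume you expect.
    print(f"full scan: {before:.4f}s, with index: {after:.4f}s")
```

Seeding the generator keeps the synthetic dataset identical between runs, so timing differences reflect schema changes rather than different data.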

Words in Space - Rebecca Bilbro

Machine learning often requires us to think spatially and make choices about what it means for two instances to be close or far apart. So which is best - Euclidean? Manhattan? Cosine? It all depends! In this talk, we'll explore open source tools and visual diagnostic strategies for picking good distance metrics when doing machine learning on text.
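The three metrics named above differ in what they treat as "close". The stdlib sketch below uses toy term-count vectors for two hypothetical documents, where the second is the first repeated twice. It shows why cosine distance is often preferred for text: it ignores document length and compares only direction.

```python
import math
from typing import Sequence


def euclidean(a: Sequence[float], b: Sequence[float]) -> float:
    """Straight-line distance; grows with differences in document length."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def manhattan(a: Sequence[float], b: Sequence[float]) -> float:
    """Sum of absolute per-term differences."""
    return sum(abs(x - y) for x, y in zip(a, b))


def cosine_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """1 - cosine similarity; only the angle matters. Vectors must be non-zero."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / norm


if __name__ == "__main__":
    doc, doc_twice = [2, 0, 1], [4, 0, 2]  # toy term counts
    print(euclidean(doc, doc_twice), manhattan(doc, doc_twice), cosine_distance(doc, doc_twice))
```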

End-to-End Machine learning pipelines for Python driven organizations - Nick ...

The recent advances in machine learning and artificial intelligence are amazing! Yet, in order to have real value within a company, data scientists must be able to get their models off of their laptops and deployed within a company’s data pipelines and infrastructure. In this session, I'll demonstrate how one-off experiments can be transformed into scalable ML pipelines with minimal effort.

Pydata beautiful soup - Monica Puerto

We will be using Beautiful Soup to Webscrape the IMDB website and create a function that will allow you to create a dictionary object on specific metadata of the IMDB profile for any IMDB ID you pass through as an argument.

1D Convolutional Neural Networks for Time Series Modeling - Nathan Janos, Jef...

This talk describes an experimental approach to time series modeling using 1D convolution filter layers in a neural network architecture. This approach was developed at System1 for forecasting marketplace value of online advertising categories.

Extending Pandas with Custom Types - Will Ayd

Pandas v.0.23 brought to life a new extension interface through which you can extend NumPy's type system. This talk will explain what that means in more detail and provide practical examples of how the new interface can be leveraged to drastically improve your reporting.

Measuring Model Fairness - Stephen Hoover

Machine learning models are increasingly used to make decisions that affect people’s lives. With this power comes a responsibility to ensure that model predictions are fair. In this talk I’ll introduce several common model fairness metrics, discuss their tradeoffs, and finally demonstrate their use with a case study analyzing anonymized data from one of Civis Analytics’s client engagements.

What's the Science in Data Science? - Skipper Seabold

The gold standard for validating any scientific assumption is to run an experiment. Data science isn’t any different. Unfortunately, it’s not always possible to design the perfect experiment. In this talk, we’ll take a realistic look at measurement using tools from the social sciences to conduct quasi-experiments with observational data.

Applying Statistical Modeling and Machine Learning to Perform Time-Series For...

Forecasting time-series data has applications in many fields, including finance, health, etc. There are potential pitfalls when applying classic statistical and machine learning methods to time-series problems. This talk will give folks the basic toolbox to analyze time-series data and perform forecasting using statistical and machine learning models, as well as interpret and convey the outputs.

Solving very simple substitution ciphers algorithmically - Stephen Enright-Ward

A historical text may now be unreadable, because its language is unknown, or its script forgotten (or both), or because it was deliberately enciphered. Deciphering needs two steps: Identify the language, then map the unknown script to a familiar one. I’ll present an algorithm to solve a cartoon version of this problem, where the language is known, and the cipher is alphabet rearrangement.

The Face of Nanomaterials: Insightful Classification Using Deep Learning - An...

Artificial intelligence is emerging as a new paradigm in materials science. This talk describes how physical intuition and (insightful) machine learning can solve the complicated task of structure recognition in materials at the nanoscale.


Creative Interactive Browser Visualizations with Bokeh by Bryan Van de ven

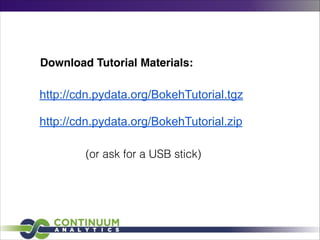

- 1. Download Tutorial Materials (or ask for a USB stick): http://cdn.pydata.org/BokehTutorial.tgz • http://cdn.pydata.org/BokehTutorial.zip

- 2. Creating interactive browser visualizations with Bokeh (Feb 21, 2014)

- 3. About Me • Employee at Continuum Analytics • Open-source contributor (Bokeh, Chaco, NumPy) • Work in scientific, financial, and engineering domains using Python, C, C++, etc. • Interactive visualization of “Big Data” • Background in Physics, Mathematics

- 4. About Continuum • Founded in 2012 by Travis Oliphant and Peter Wang • Headquartered in Austin, TX • Products, consulting, training • “big data” analytics • scientific & high-performance computing • interactive visualization, dashboards, web apps • collaborative analysis

- 5. Visualization • Bokeh: Interactive, browser-based visualization for big data, driven from Python (and others!) • http://bokeh.pydata.org

- 6. Bokeh • Object-oriented JS runtime library for dynamic, novel, interactive web graphics • Python interfaces to output static plots or drive live ones • Interop with IPython Notebook • Interactive web viz without JavaScript
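
Slide 6 describes a split: a JavaScript runtime does the drawing, and Python describes what to draw. Below is a minimal sketch of that idea. Python model objects are serialized to JSON and embedded in a standalone HTML page, which a browser-side library would then render. The `Line`/`Plot` models, `standalone_html()`, and the JS entry point `renderPlot` are all hypothetical and are not Bokeh's API. In current Bokeh releases this role is filled by `bokeh.plotting`, `bokeh.io.output_file`/`show`, and BokehJS, whose API differs from the 2014 version presented here.

```python
import html
import json
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class Line:
    """Hypothetical glyph model: a polyline whose x/y are data vectors."""
    x: List[float]
    y: List[float]
    line_color: str = "navy"
    kind: str = "Line"  # tells the (hypothetical) JS side which glyph to build


@dataclass
class Plot:
    """Hypothetical top-level model holding a title and its glyphs."""
    title: str
    glyphs: List[Line] = field(default_factory=list)

    def to_json(self) -> str:
        # asdict() recurses into the nested Line objects, giving a plain JSON tree.
        return json.dumps(asdict(self))


def standalone_html(plot: Plot) -> str:
    """Embed the serialized model in a self-contained HTML page.

    `renderPlot` is a hypothetical browser-side entry point; it is not
    defined here and stands in for a real JS runtime library.
    """
    # Escape "</" so a data value can never close the <script> element early.
    payload = plot.to_json().replace("</", "<\\/")
    return (
        "<!DOCTYPE html>\n"
        f"<html><head><title>{html.escape(plot.title)}</title></head><body>\n"
        '<div id="plot"></div>\n'
        f'<script type="application/json" id="plot-spec">{payload}</script>\n'
        "<script>renderPlot(JSON.parse("
        "document.getElementById('plot-spec').textContent));</script>\n"
        "</body></html>\n"
    )
```

Because the Python side only produces a declarative description, the same model could in principle be written once to a static file or kept in sync with a live session. That is the distinction the slide draws between static and live output.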

- 7. Bokeh • Language-based (instead of GUI) visualization system • High-level expressions of data binding, statistical transforms, interactivity and linked data • Easy to learn, but expressive depth for power users • Interactive • Data space configuration as well as data selection • Specified from high-level language constructs • Web as first class interface target • Support for large datasets via intelligent downsampling (“abstract rendering”)
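
The "intelligent downsampling" on slide 7 works by aggregating points into a pixel-sized grid before anything is drawn, instead of sending every point to the browser. Below is a minimal sketch of that aggregation step in plain Python. It assumes a fixed rectangular viewport and simple per-cell counts. The function name `aggregate_counts` is hypothetical. The Abstract Rendering project linked on slide 18 supports richer aggregators and is not reproduced here.

```python
from typing import Iterable, List, Tuple


def aggregate_counts(
    points: Iterable[Tuple[float, float]],
    x_range: Tuple[float, float],
    y_range: Tuple[float, float],
    width: int,
    height: int,
) -> List[List[int]]:
    """Bin (x, y) points into a height x width grid of counts.

    Points outside the viewport are dropped, so the output size depends only
    on the screen resolution, not on how many points were supplied.
    Row 0 corresponds to the bottom of y_range.
    """
    x0, x1 = x_range
    y0, y1 = y_range
    if x1 <= x0 or y1 <= y0:
        raise ValueError("ranges must have positive extent")
    grid = [[0] * width for _ in range(height)]
    for x, y in points:
        if not (x0 <= x <= x1 and y0 <= y <= y1):
            continue  # outside the visible region
        # Map data coordinates to integer cell indices; clamp the upper edge.
        col = min(int((x - x0) / (x1 - x0) * width), width - 1)
        row = min(int((y - y0) / (y1 - y0) * height), height - 1)
        grid[row][col] += 1
    return grid
```

The grid always has `width × height` cells, whatever the input size. Re-running the aggregation with new ranges after a zoom or pan is one way the downsampling could be made "dynamic".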

- 8. Bokeh • Rich interactivity over large datasets • HTML5 Canvas (faster than SVG for large numbers of elements) • Handles realtime streaming and updating data • Novel & custom visualizations • Integration with Google Maps • No need to learn JavaScript: easy interfaces from Python & other languages • http://bokeh.pydata.org
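
Slide 8 mentions realtime streaming. In current Bokeh releases, `ColumnDataSource.stream(new_data, rollover=...)` appends new rows to a column-oriented data source and can discard the oldest ones. The sketch below imitates those append-and-rollover semantics in plain Python, so the behaviour can be seen without a browser. The `StreamingColumns` class is hypothetical and not part of Bokeh.

```python
from typing import Dict, List, Optional


class StreamingColumns:
    """Hypothetical column-oriented buffer with append-and-rollover updates."""

    def __init__(self, data: Dict[str, List[float]]):
        if len({len(v) for v in data.values()}) > 1:
            raise ValueError("all columns must have the same length")
        self.data = {k: list(v) for k, v in data.items()}

    def stream(self, new_data: Dict[str, List[float]], rollover: Optional[int] = None) -> None:
        """Append new rows; if rollover is set, keep only the last `rollover` rows."""
        if set(new_data) != set(self.data):
            raise ValueError("new_data must provide exactly the existing columns")
        if len({len(v) for v in new_data.values()}) > 1:
            raise ValueError("all new columns must have the same length")
        for name, values in new_data.items():
            column = self.data[name]
            column.extend(values)
            if rollover is not None and len(column) > rollover:
                del column[: len(column) - rollover]  # drop the oldest rows
```

With this design, only the appended rows need to travel to the browser on each update, and the `rollover` bound keeps memory flat for long-running feeds.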

- 9. Bokeh Interface Concepts • Plots are based on glyphs • All or almost all visual elements of a glyph can be attached to a vector of data.
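
The central idea of slide 9 is that each visual property of a glyph (position, size, color, and so on) can be either a single value or a column of data. Below is a minimal sketch of how such property specs might be resolved into concrete per-point values. The `Field` marker and the `resolve_glyph()` helper are hypothetical. Modern Bokeh expresses the same idea by naming columns of a `ColumnDataSource`, but this is not that code.

```python
from dataclasses import dataclass
from typing import Any, Dict, List, Sequence


@dataclass(frozen=True)
class Field:
    """Marks a glyph property as bound to a named data column."""
    name: str


def resolve_glyph(props: Dict[str, Any], source: Dict[str, Sequence[Any]]) -> List[Dict[str, Any]]:
    """Expand glyph properties into one dict of concrete values per data point.

    A property given as Field("col") takes the i-th value of that column;
    any other value is a constant broadcast to every point.
    """
    bound = [v.name for v in props.values() if isinstance(v, Field)]
    if not bound:
        raise ValueError("at least one property must be bound to a column")
    n = len(source[bound[0]])
    if any(len(source[name]) != n for name in bound):
        raise ValueError("bound columns must all have the same length")
    return [
        {key: (source[v.name][i] if isinstance(v, Field) else v) for key, v in props.items()}
        for i in range(n)
    ]
```

For example, binding `x`, `y`, and `size` to columns while giving `color` a constant yields one point per row, all sharing the same color.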

- 16. Coming soon • Abstract Rendering: dynamic downsampling and data shading for millions of points • Constraint-based layout system • Interactive tool improvements and additional tools • Matplotlib compatibility: use Bokeh from pandas, ggplot.py, Seaborn • Language bindings: Scala underway, more later • Widget interactors and plugins
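
Slide 16 pairs downsampling with "data shading". Once points have been aggregated into per-pixel counts, those counts still have to be turned into colors. Below is a minimal sketch of one common choice: a logarithmic mapping from counts onto a two-color ramp. The `shade()` helper and its white-to-navy default ramp are hypothetical. Other transfer functions, such as linear or histogram-equalized mappings, are also widely used, and this is not the Abstract Rendering implementation.

```python
import math
from typing import List, Tuple

RGB = Tuple[int, int, int]


def shade(
    counts: List[List[int]],
    low: RGB = (255, 255, 255),
    high: RGB = (0, 0, 128),
) -> List[List[RGB]]:
    """Map a grid of counts to colors on a log scale between `low` and `high`.

    Empty cells get `low`; the densest cell gets `high`. The log keeps a few
    very dense cells from washing out everything else.
    """
    max_count = max((c for row in counts for c in row), default=0)
    scale = math.log1p(max_count) or 1.0  # avoid division by zero on an empty grid

    def lerp(t: float) -> RGB:
        # Linear interpolation between the two endpoint colors.
        return tuple(round(a + (b - a) * t) for a, b in zip(low, high))

    return [[lerp(math.log1p(c) / scale) for c in row] for row in counts]
```
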

- 17. But don’t forget • Usability improvements • Discoverable parameters • Informative error messaging • Expanded live gallery • “Do the right thing” when it is possible, and expose capability when it’s not • Need feedback from users (you!)
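
Slide 17 lists discoverable parameters and informative error messages as usability goals. One way to approach both is sketched below with a hypothetical `GlyphProps` class. It rejects unknown keyword arguments, suggests the closest valid names using the standard library's `difflib.get_close_matches(word, possibilities, n, cutoff)`, and lists every valid property in the error. This illustrates the idea only and is not Bokeh's implementation.

```python
import difflib
from typing import Any, Dict


class GlyphProps:
    """Hypothetical property container that rejects unknown names helpfully."""

    VALID = ("x", "y", "size", "fill_color", "line_color", "alpha")

    def __init__(self, **kwargs: Any):
        self.values: Dict[str, Any] = {}
        for name, value in kwargs.items():
            if name not in self.VALID:
                # Suggest near-misses so a typo like "fill_colr" points at the real name.
                hints = difflib.get_close_matches(name, self.VALID, n=3, cutoff=0.6)
                msg = f"unexpected property {name!r} for {type(self).__name__}"
                if hints:
                    msg += f"; did you mean: {', '.join(hints)}?"
                # Listing every valid name makes the parameters discoverable from the error alone.
                msg += f" (valid properties: {', '.join(self.VALID)})"
                raise AttributeError(msg)
            self.values[name] = value
```
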

- 18. More information and Contributing • Public GitHub repos: https://github.com/ContinuumIO/bokeh, https://github.com/JosephCottam/AbstractRendering • Videos: Python & the Future of Data Analysis; Bokeh Workshop • Blogs: http://continuum.io/blog/index, http://continuum.io/blog/painless_streaming_plots_w_bokeh, http://continuum.io/blog/realtime-analytics-twitter