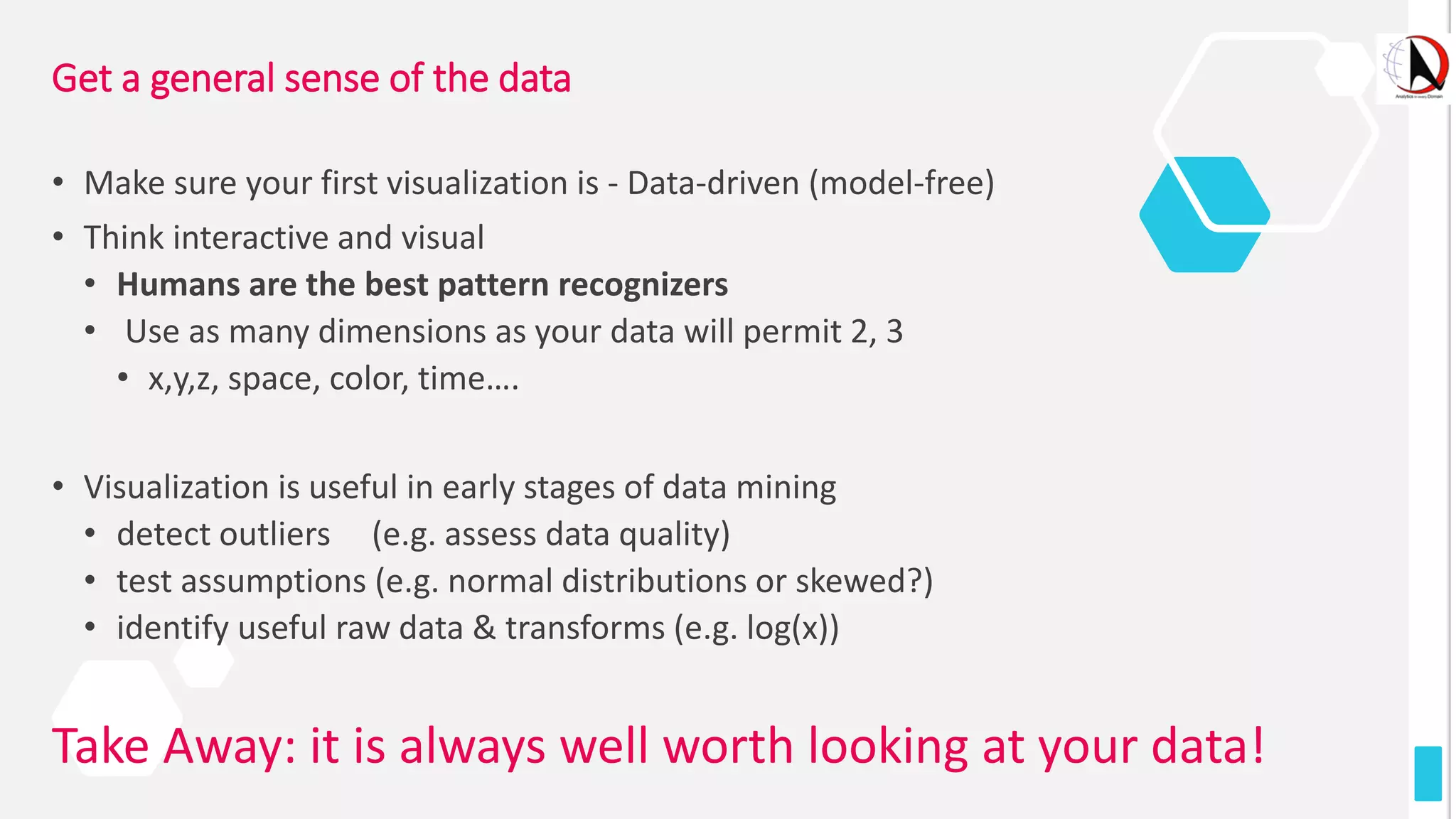

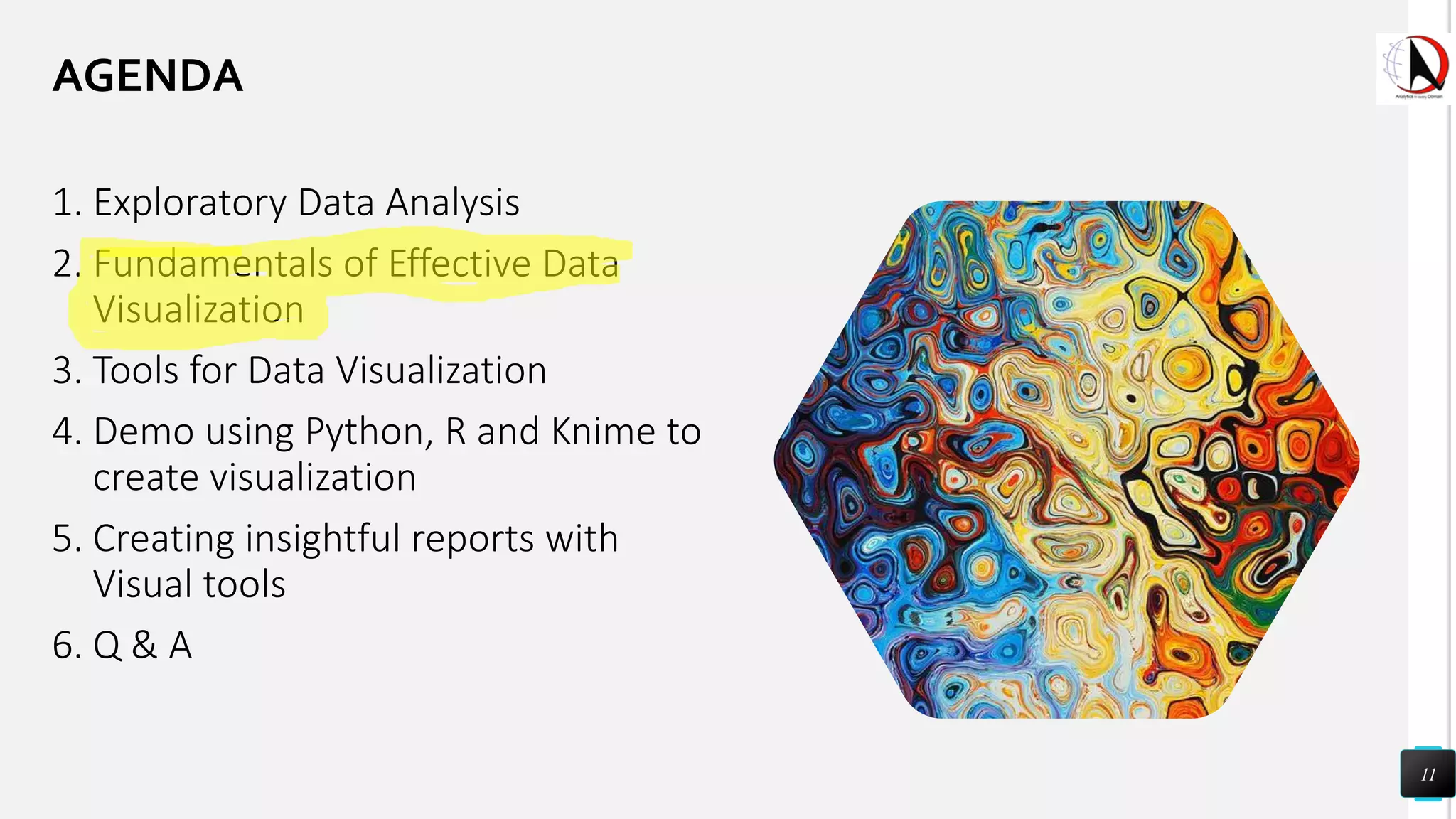

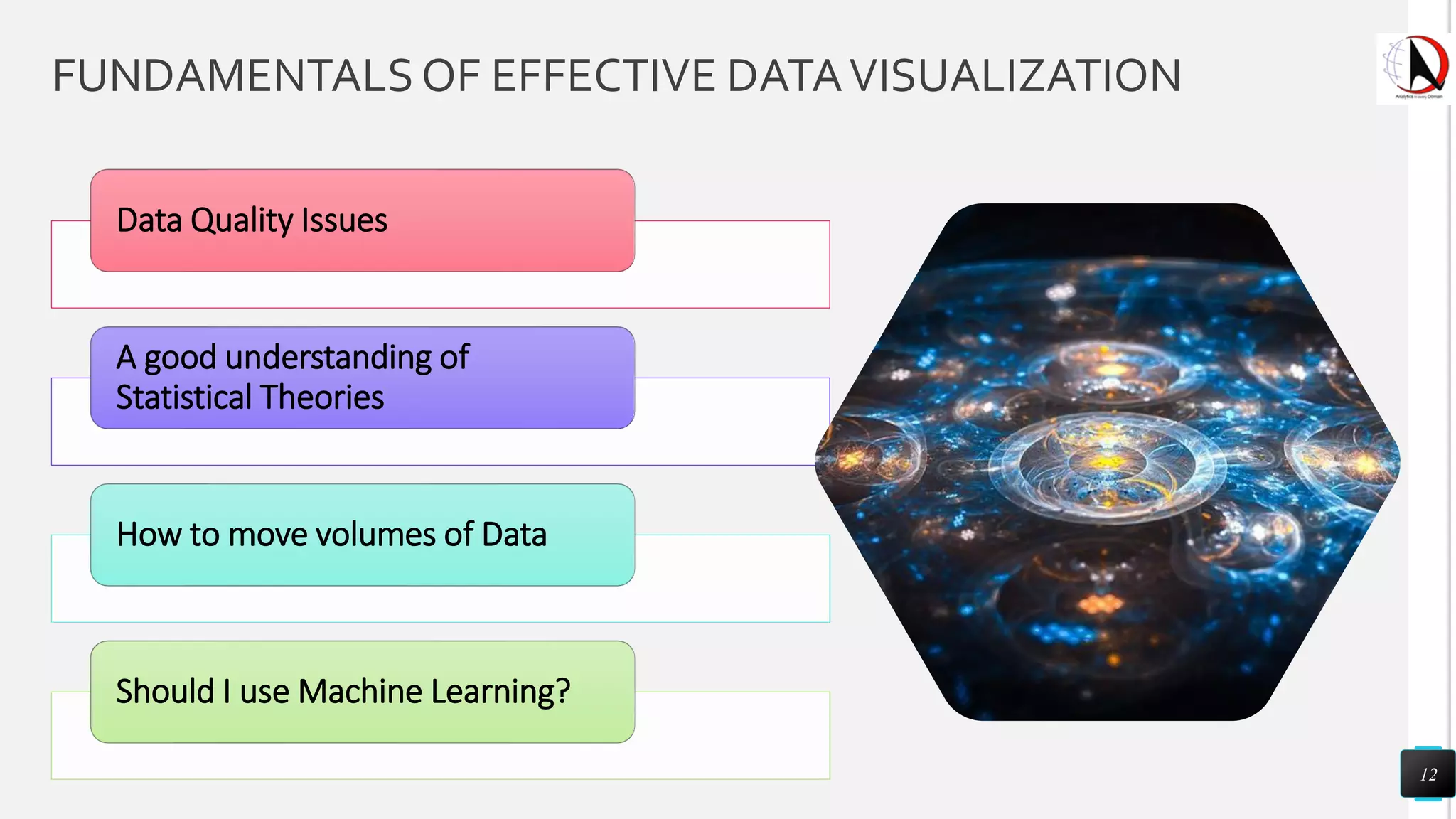

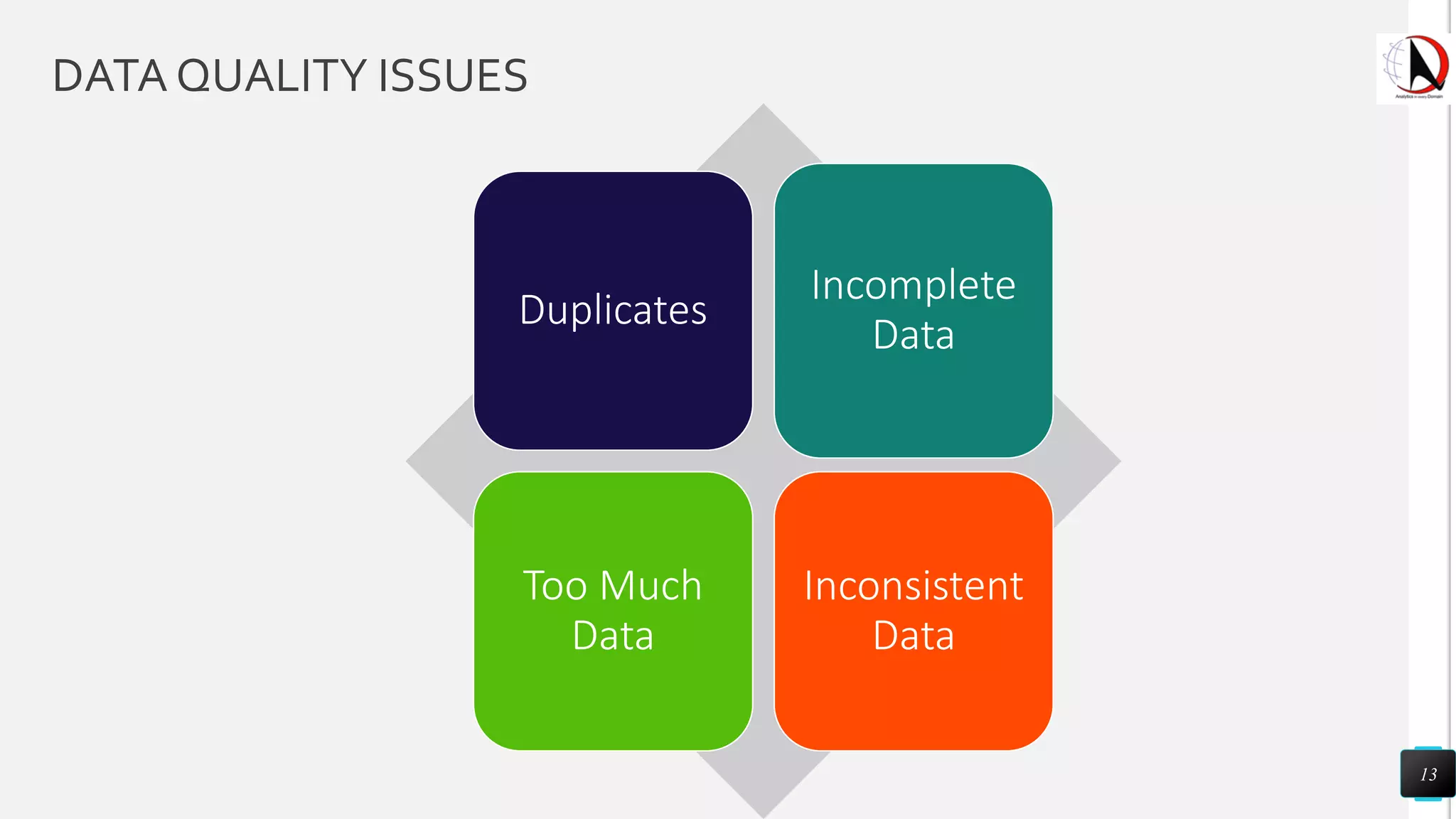

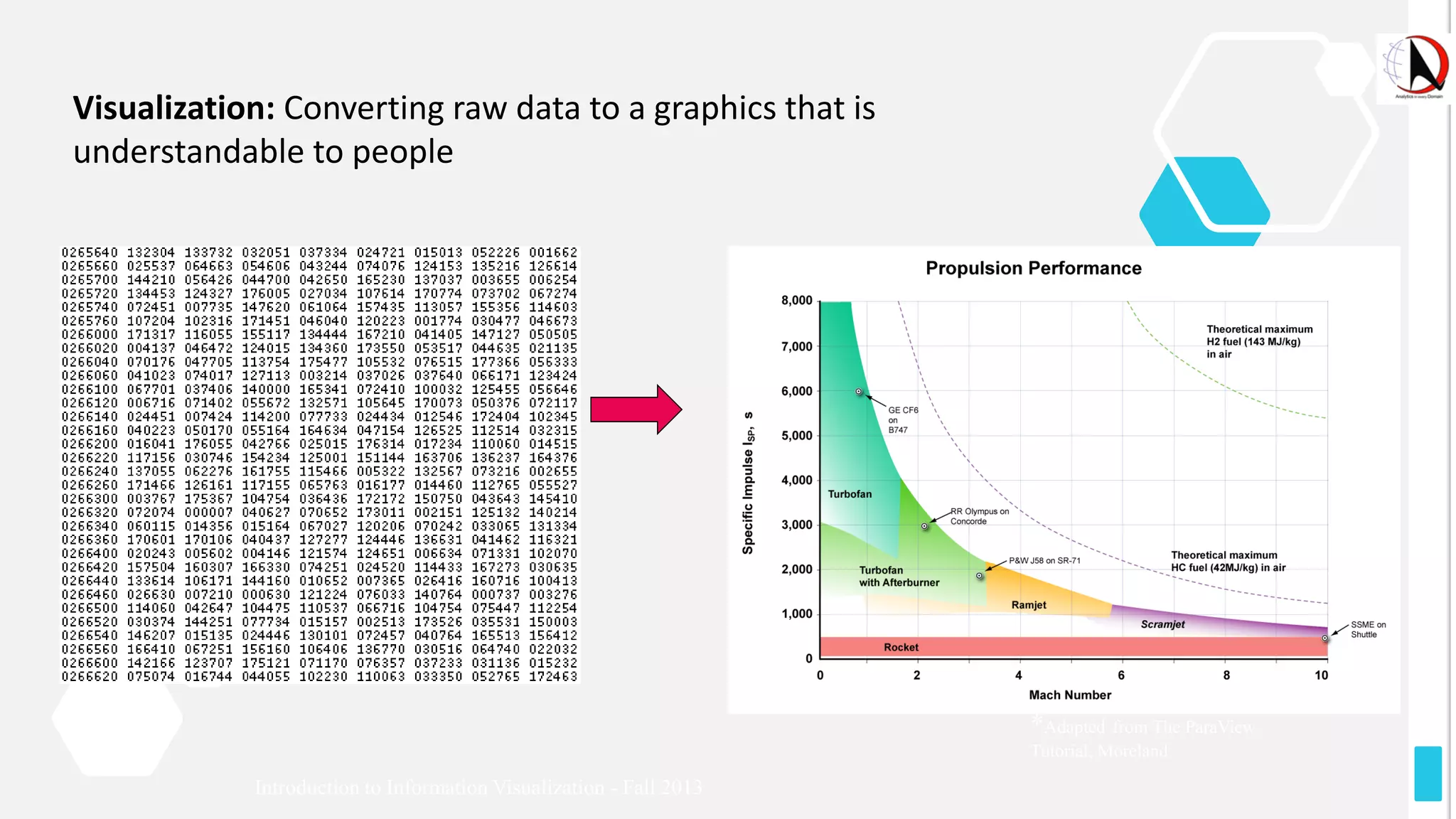

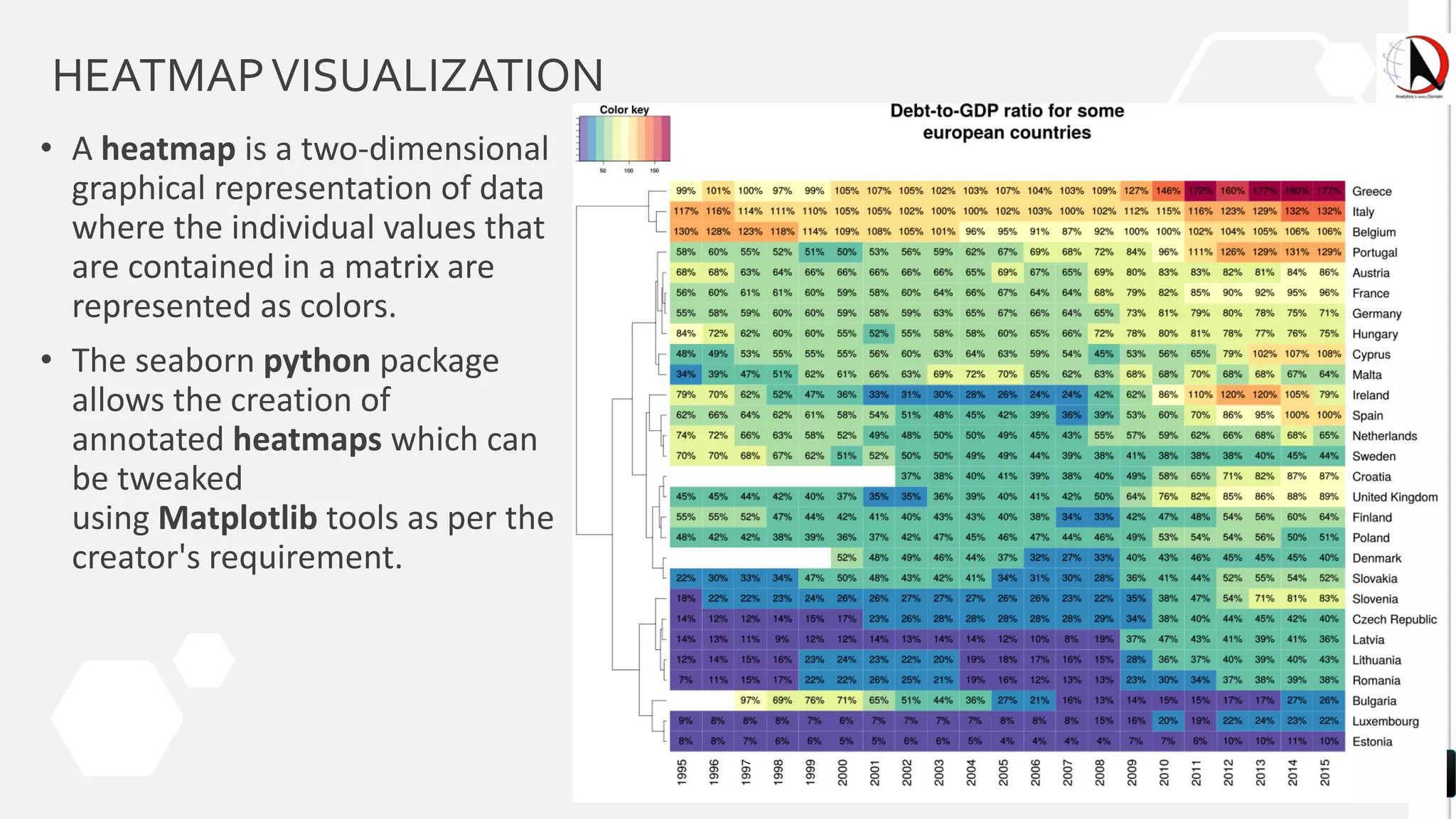

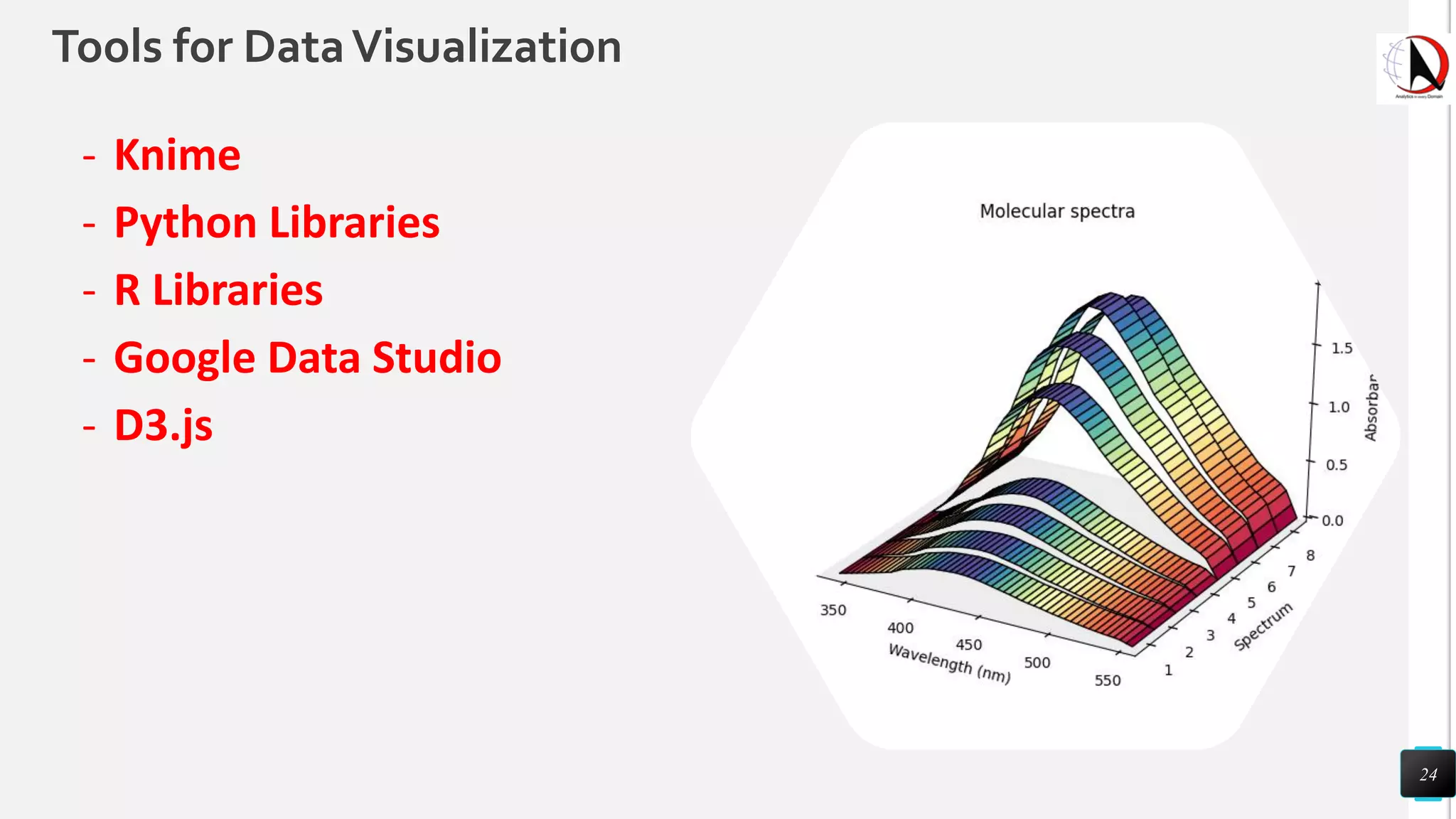

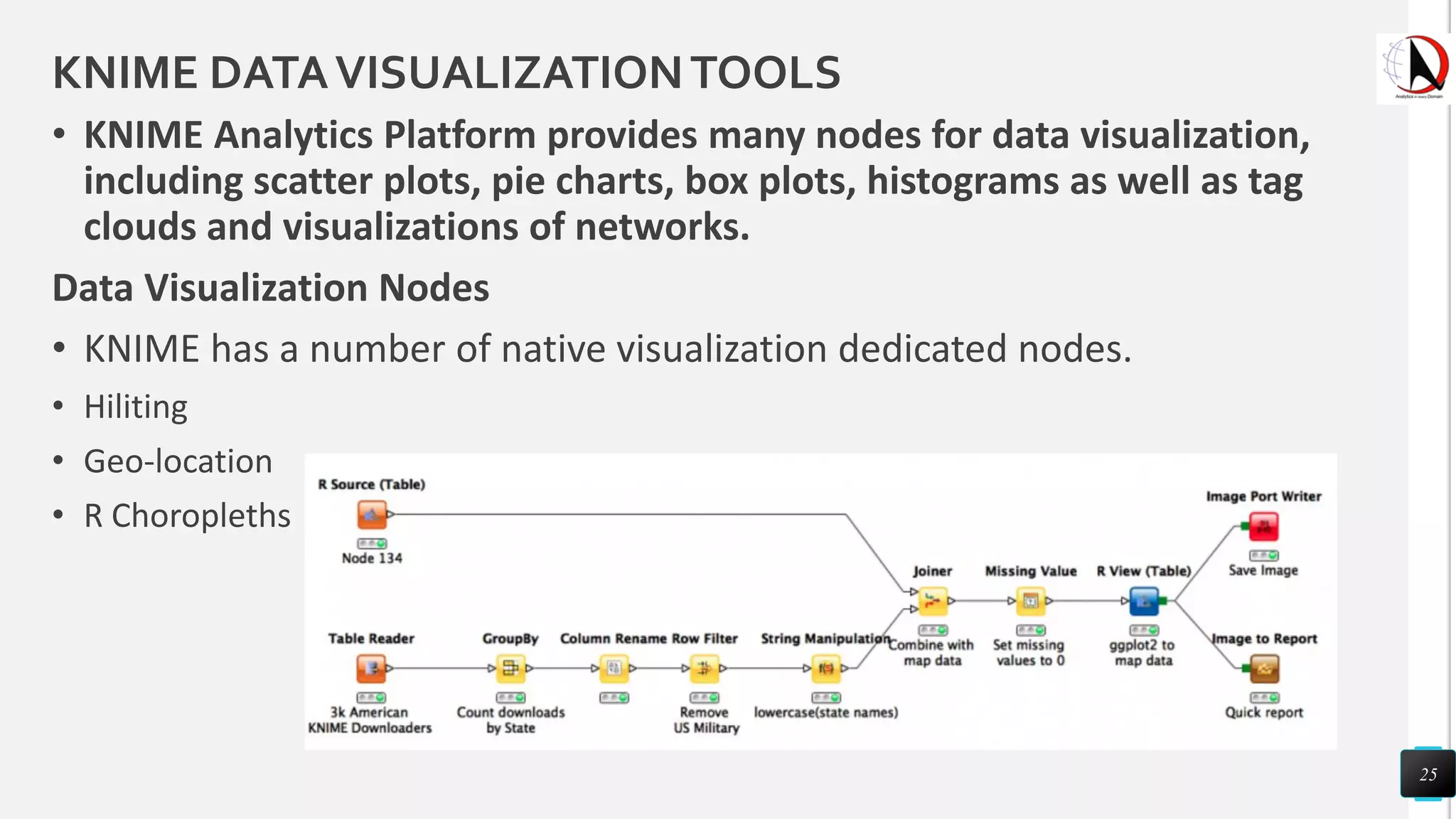

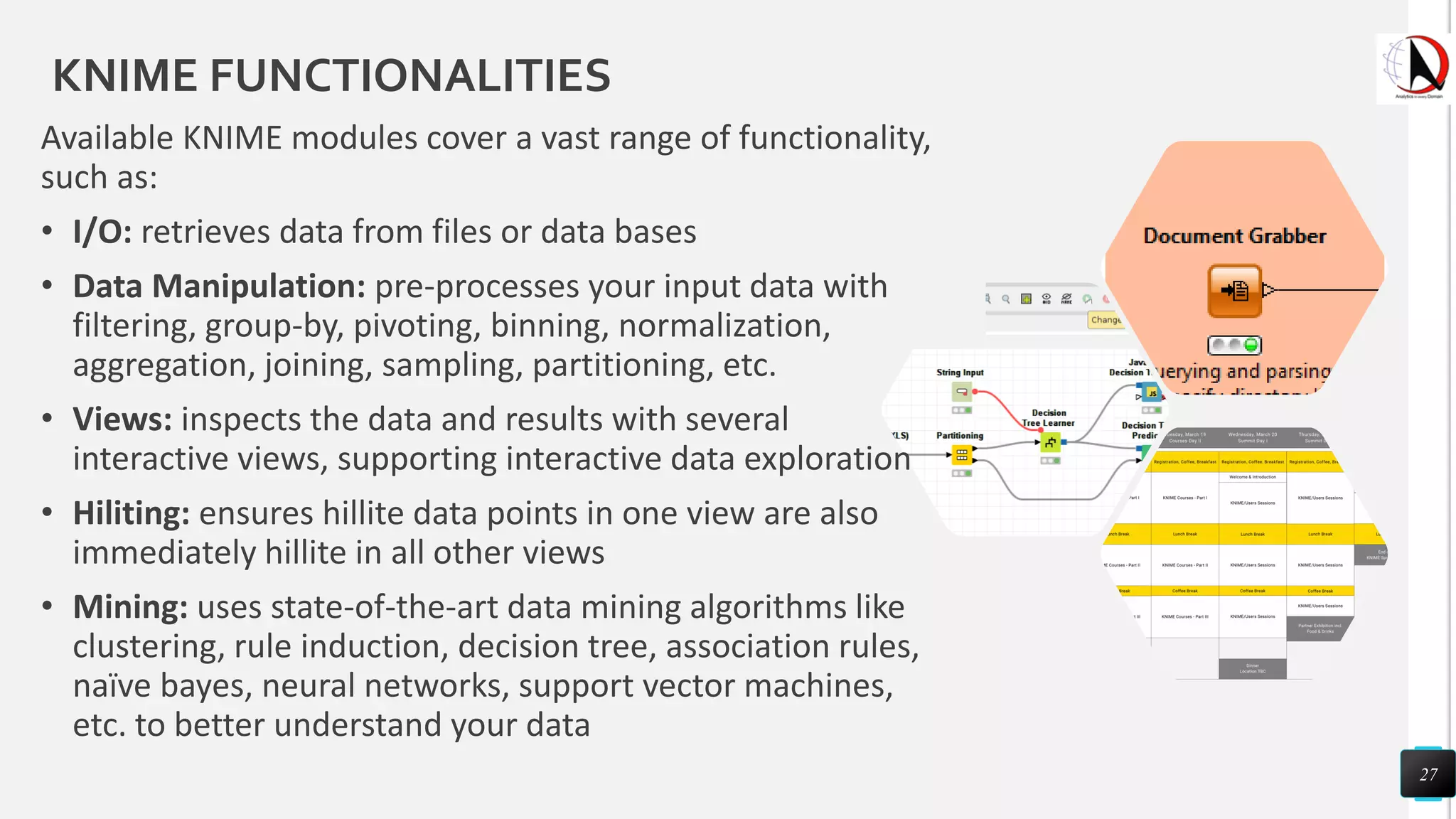

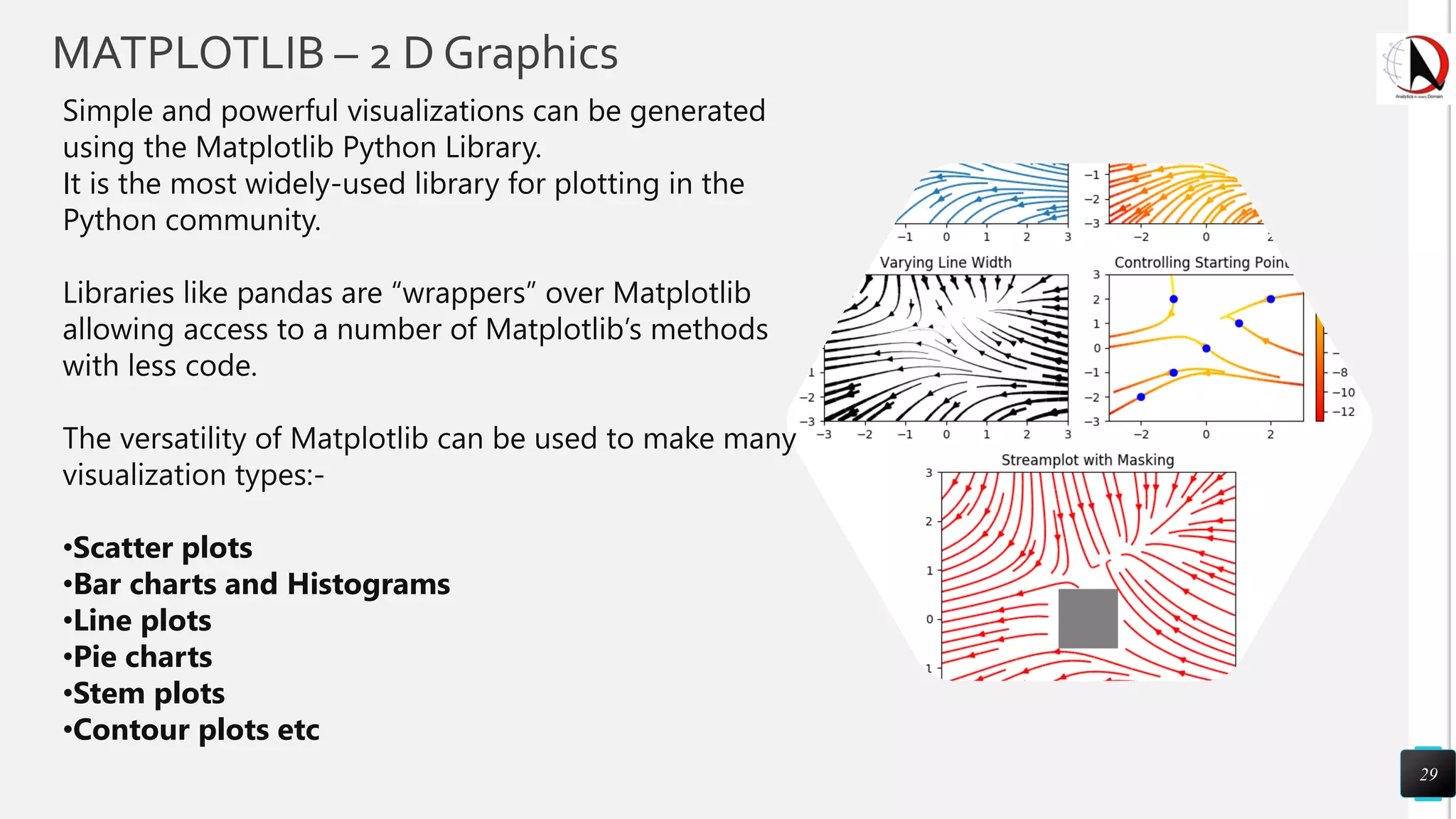

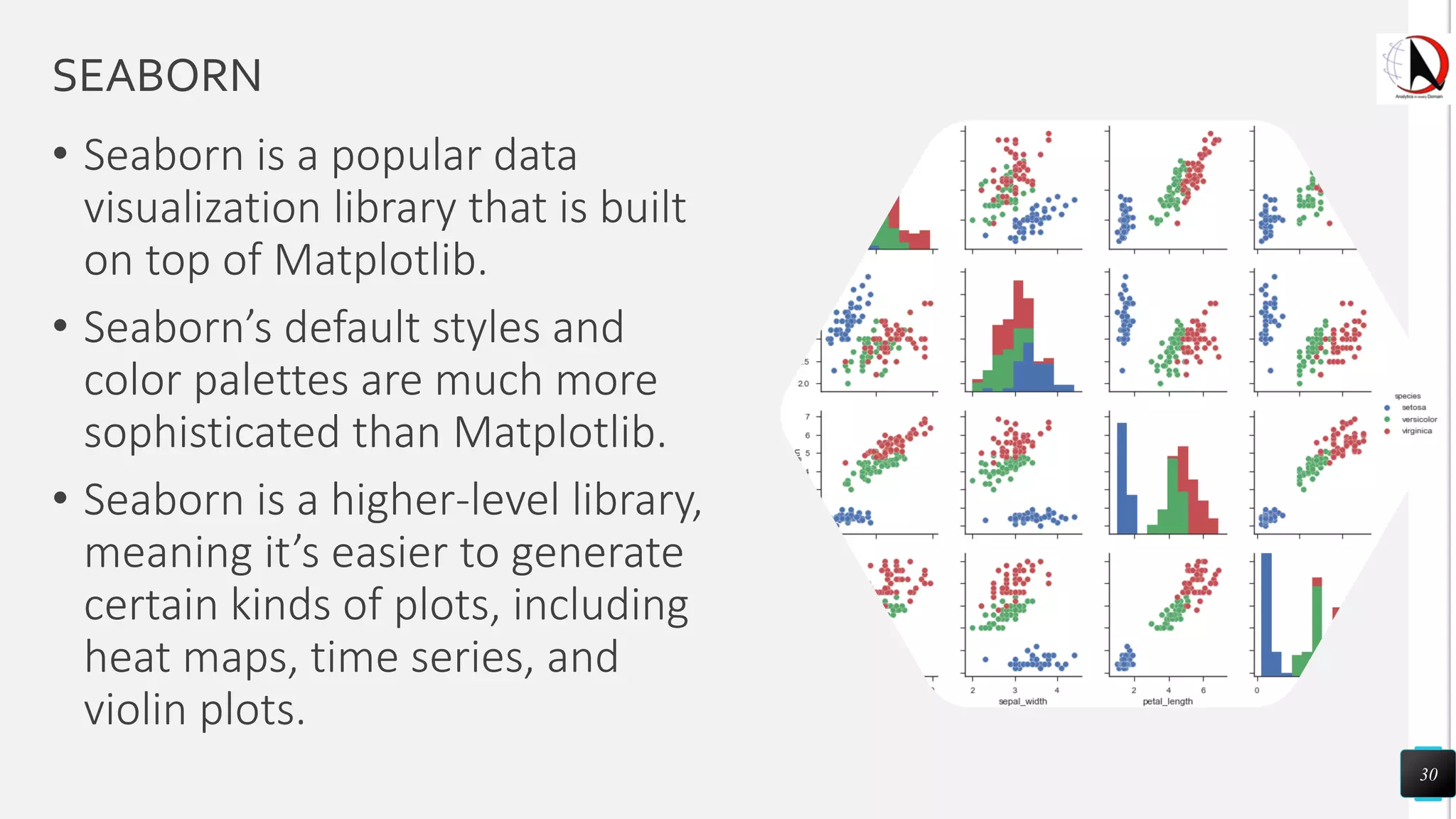

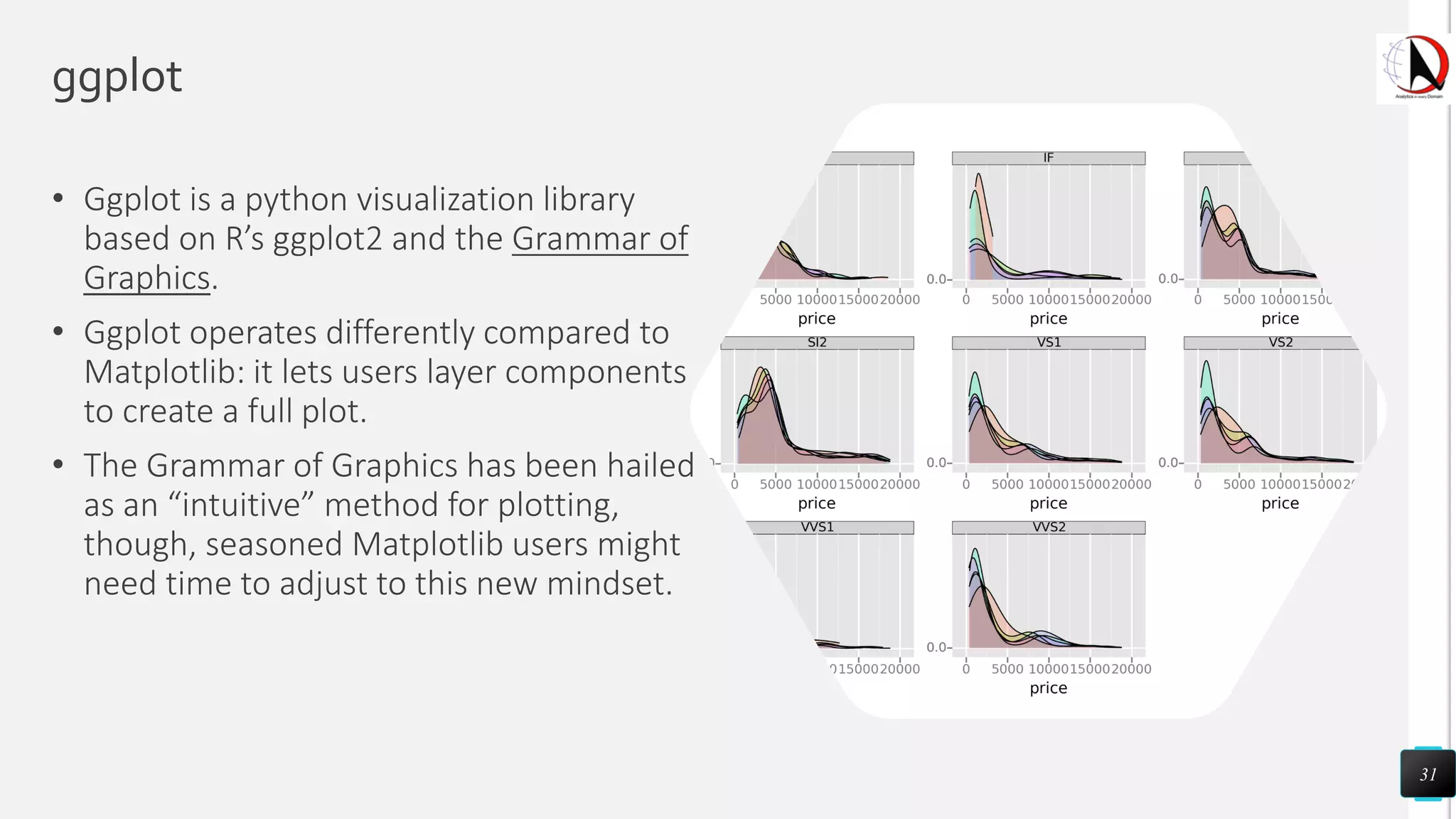

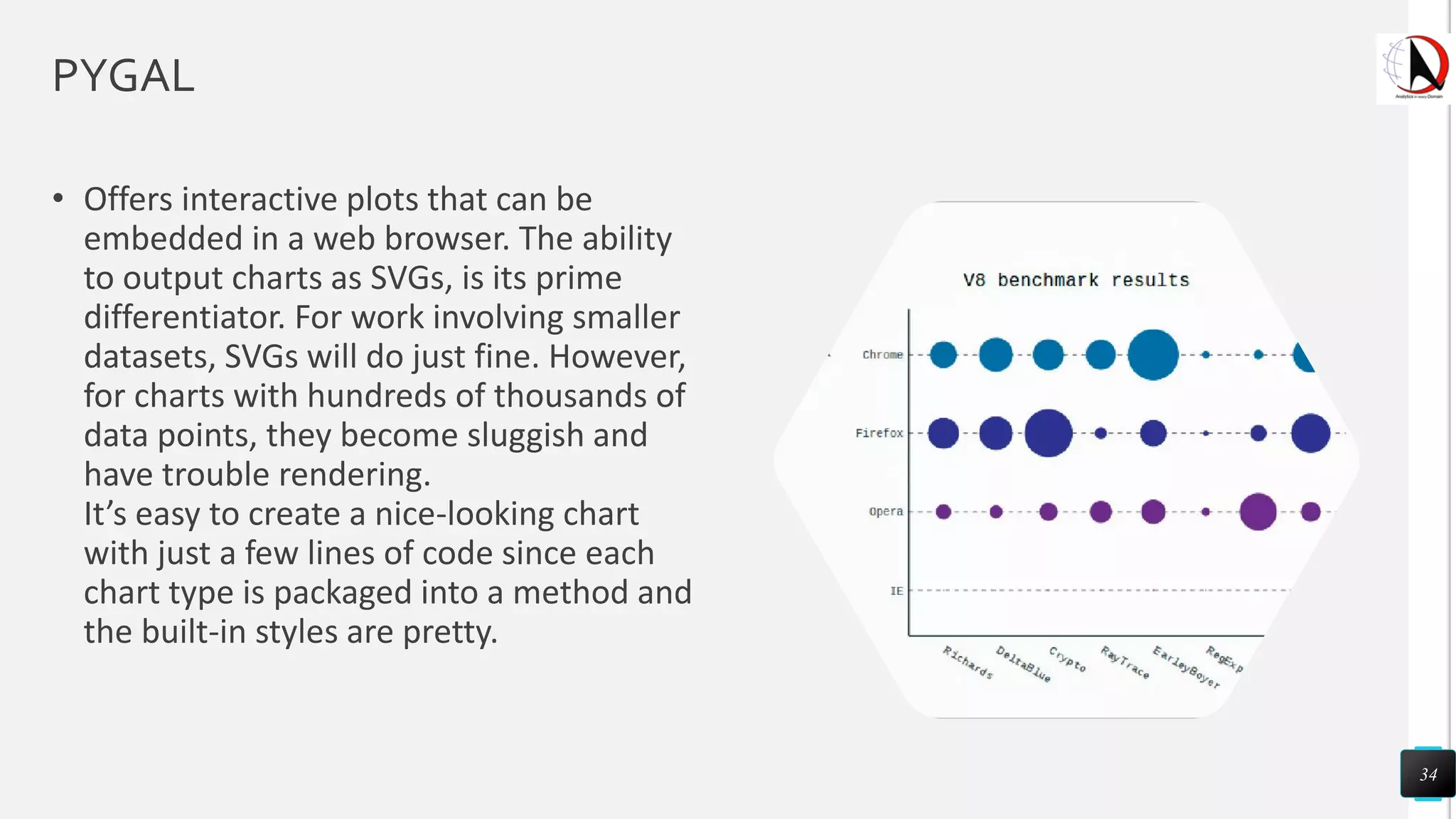

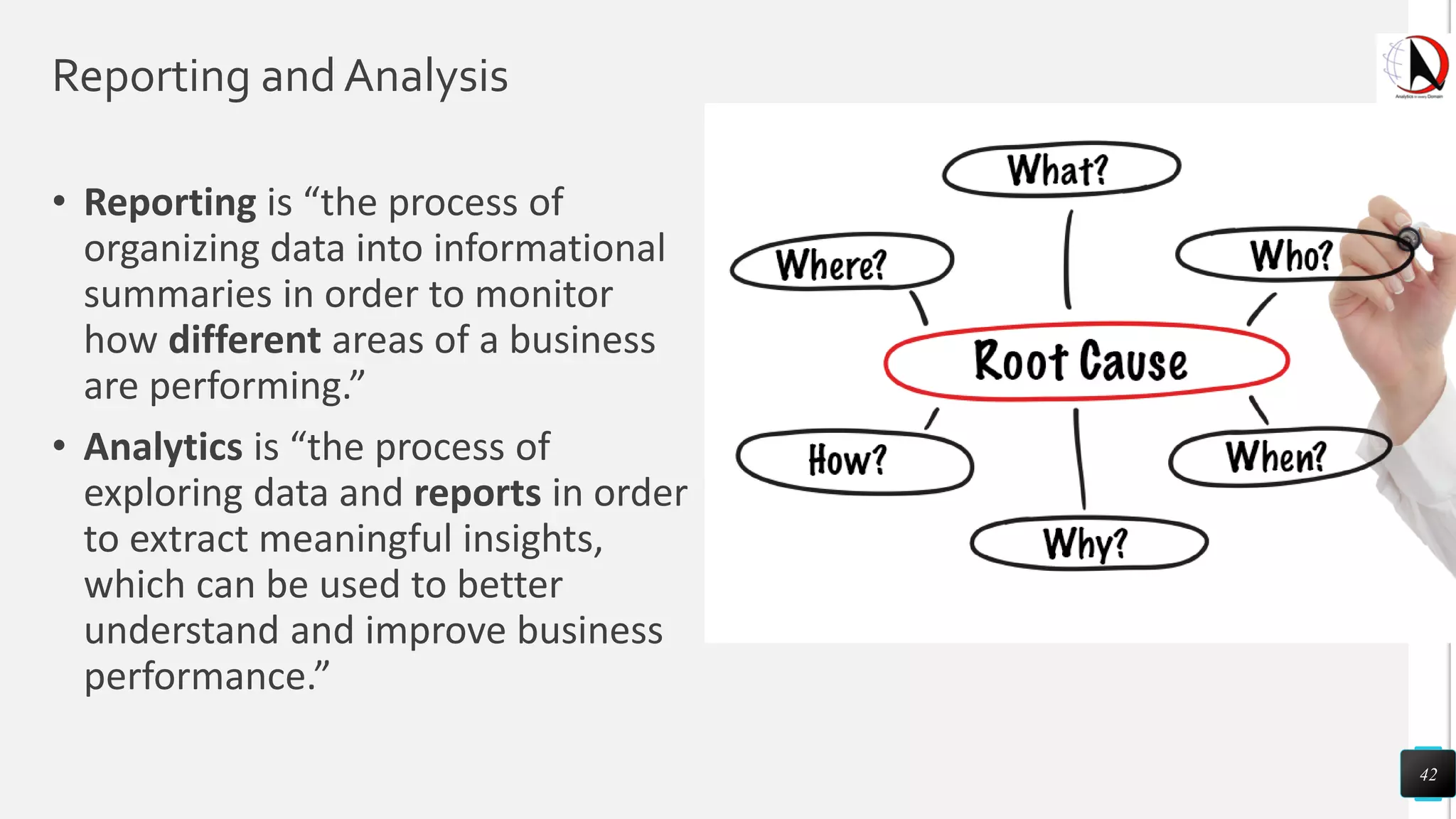

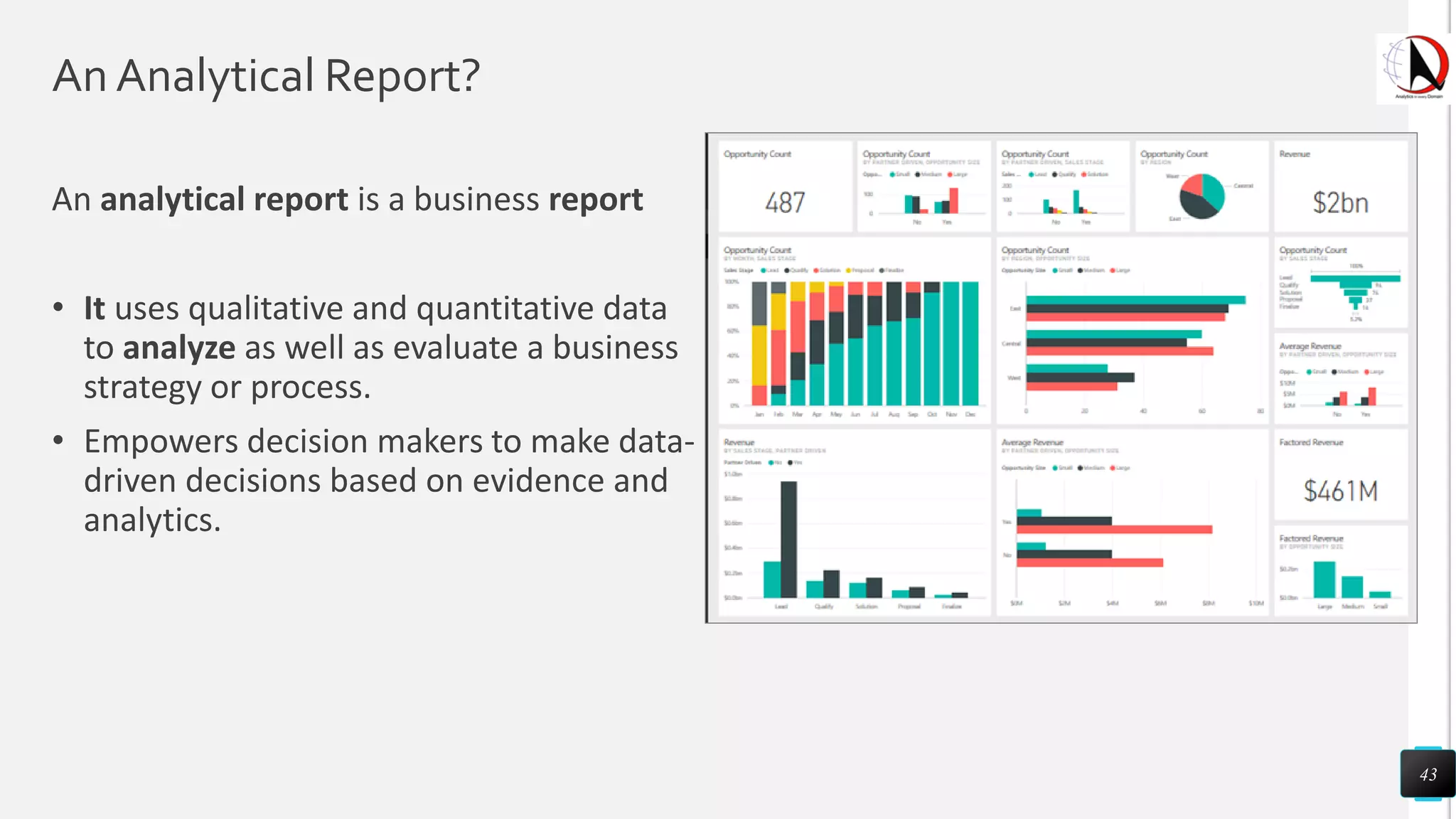

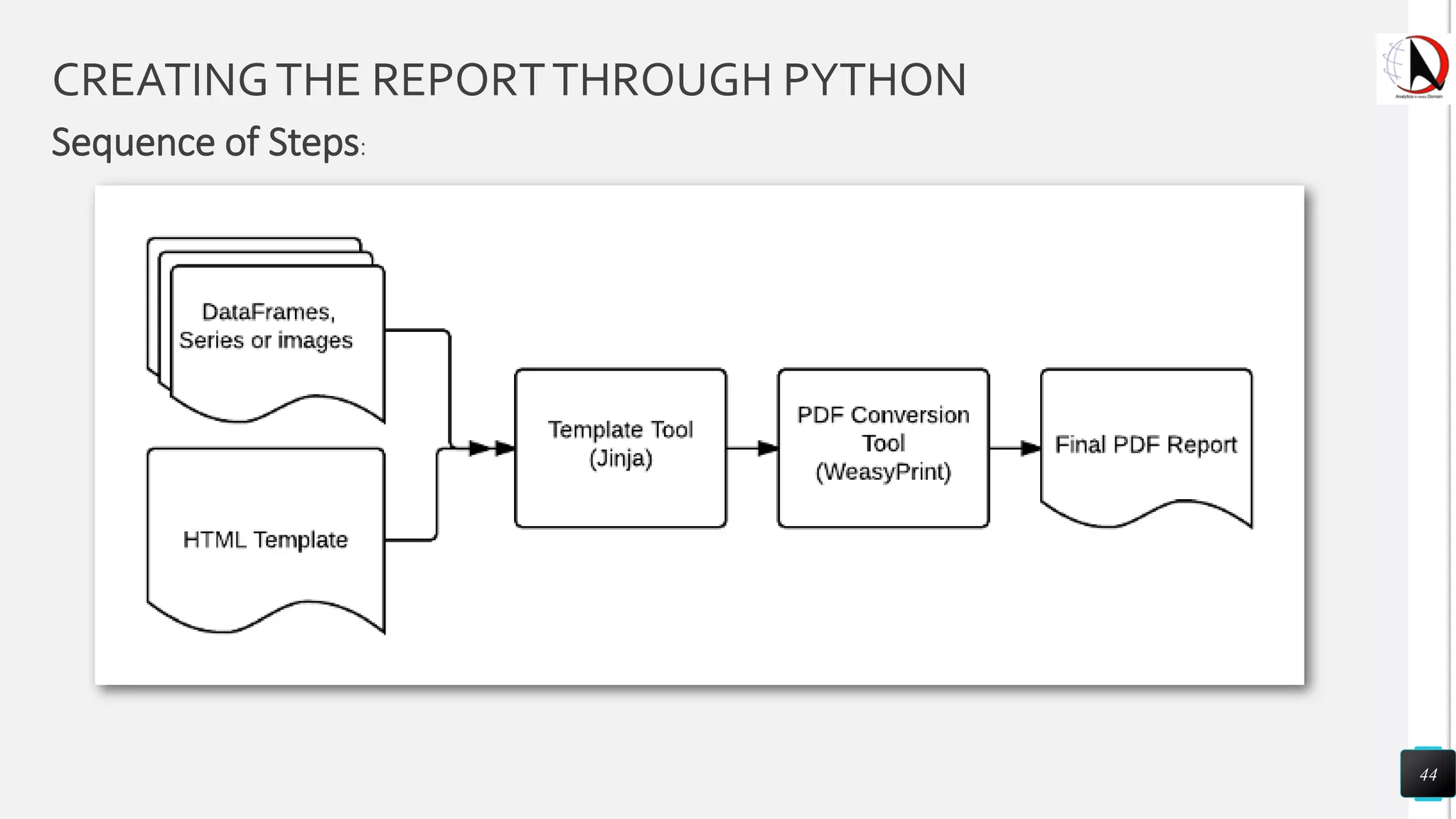

This document outlines an educational session on data visualization. It covers exploratory data analysis (EDA), the fundamentals of effective visualization, and tools such as Python, R, and KNIME. It emphasizes understanding the dataset, handling data quality issues, and turning findings into actionable insights through reports and visualizations. It also discusses several data visualization libraries and their functionality, along with the process of creating meaningful analytical reports.
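As a rough illustration of the data quality checks that an EDA pass usually starts with, the sketch below counts missing values per column and summarizes one numeric column. It is not taken from the session itself: the `rows` records, the column names, and the helper functions `missing_counts` and `numeric_summary` are all hypothetical, and only the Python standard library is used so that the example runs without extra packages.

```python
import statistics

# Hypothetical toy records for illustration only; None marks a missing value.
rows = [
    {"region": "north", "sales": 120.0},
    {"region": "south", "sales": None},
    {"region": "north", "sales": 95.5},
    {"region": None, "sales": 130.0},
]


def missing_counts(records):
    """Count missing (None) entries per column."""
    counts = {}
    for record in records:
        for column, value in record.items():
            counts[column] = counts.get(column, 0) + (value is None)
    return counts


def numeric_summary(records, column):
    """Summarize the non-missing numeric values of one column."""
    values = [r[column] for r in records if r[column] is not None]
    return {
        "count": len(values),
        "mean": statistics.mean(values),
        "min": min(values),
        "max": max(values),
    }


missing = missing_counts(rows)
summary = numeric_summary(rows, "sales")
print(missing)
print(summary)
```

In practice a session like this one would more likely rely on a dataframe library for these checks; the plain-Python version is used here only to keep the idea visible without assuming any particular library's API.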