This document presents a study on the use of an optical tomography system to measure the concentration of gas bubbles in a water column. The system employs a hydraulic flow rig and a hybrid back-projection algorithm to generate two-dimensional images of bubble distributions, which are relevant to applications in fields such as medicine and gas handling. Results demonstrate the capability of the system to measure and visualize gas concentrations at different flow rates and bubble sizes.

![ISA Transactions 51 (2012) 821–826


Concentration measurements of bubbles in a water column using an optical tomography system

S. Ibrahim a,*, Mohd Amri.Md Yunus a, R.G. Green b, K. Dutton b

a Faculty of Electrical Engineering, Universiti Teknologi Malaysia, 81310 Skudai, Johor, Malaysia

b Materials and Engineering Research Institute, Sheffield Hallam University, City Campus, Sheffield, S1 1WB, United Kingdom

Article info

Article history: Received 21 June 2011; received in revised form 27 April 2012; accepted 27 April 2012; available online 22 May 2012.

Keywords: Bubbles; Concentration; Optical tomography; Optical fibre sensors; Tomography

Abstract

Optical tomography provides a means for the determination of the spatial distribution of materials with different optical density in a volume by non-intrusive means. This paper presents results of concentration measurements of gas bubbles in a water column using an optical tomography system. A hydraulic flow rig is used to generate vertical air–water two-phase flows with controllable bubble flow rate. Two approaches are investigated. The first aims to obtain an average gas concentration at the measurement section; the second aims to obtain a gas distribution profile by using tomographic imaging. A hybrid back-projection algorithm is used to calculate concentration profiles from measured sensor values to provide a tomographic image of the measurement cross-section. The algorithm combines the characteristic of an optical sensor as a hard field sensor and the linear back projection algorithm.

© 2012 ISA. Published by Elsevier Ltd. All rights reserved.

1. Introduction

In multi-phase flow measurement, both the phase distribution and the velocity profiles vary significantly with temporal and spatial resolution. This is due to the different phases arranging themselves in various ways. For a multi-phase flow, the flow patterns are primarily functions of the volumetric fluxes of all phases. The flow patterns are functions of superficial velocities or pressure drops and are depicted in flow profiles. Hence it is important to have a knowledge of the flow profiles in order to design heat or heat and mass transfer equipment and to design fluid-based conveying processes [1].

Information on gas concentration is vital in various applications. In the medical field, it is important to have information on anaesthetic gas, oxygen or heliox concentration. In gas metering, the exact heating value of the gas must be recorded in order to produce an accurate bill. In gas burner appliances, the flame is optimised for efficiency and emissions by regulating the mixing ratio of air and gas. Generally, accurate gas concentration measurement is vital in many gas handling applications [2].

Many techniques have been employed for gas or bubble detection in two-phase flows; both intrusive and non-intrusive measurement techniques were developed for this purpose. However, it is important that sensors being used for measurement purposes do not in any way perturb the quantity being measured. Point sensors are not generally suitable as they disturb the flow field. Non-intrusive techniques possess the advantage of not modifying the flow field and they are suitable for laboratory tests [3].

Corresponding author. Tel.: +60 19 7411 434; fax: +60 7 55 66 272. E-mail addresses: salleh@fke.utm.my (S. Ibrahim), mus_utm@yahoo.com (M.Amri.M. Yunus), r.g.green@shu.ac.uk (R.G. Green), k.dutton@shu.ac.uk (K. Dutton).

The word tomography comes from the Greek words ‘tomos’, which means a cut or slice, and ‘graphein’, which means to write [4]. Process tomography is a methodology in which the internal characteristics of process vessel reactions or pipeline flows are acquired from measurements on or outside the domain of interest in a non-invasive fashion [5]. This paper describes an optical tomography system which is used to reconstruct an image from measurements obtained from several sensors placed around the measurement section of a hydraulic flow rig. Light travelling through a transparent medium suffers attenuation for various reasons, including scattering and absorption. Different materials cause varying levels of attenuation and it is this phenomenon that forms the basis of optical tomography. The voltage generated by the optical sensors is proportional to the level of received light. It is related to the amount of attenuation in the path of the light beam caused by the flow regime [6]. Information about the optical characteristics of a flow can be obtained if a view, consisting of an optical emitter and detector pair, is positioned on either side of the measurement section. A larger area can be interrogated if several views are combined to form a projection. The image of the flow can be reconstructed if several different projections are utilised [7].


http://dx.doi.org/10.1016/j.isatra.2012.04.010](https://image.slidesharecdn.com/concentrationmeasurementsofbubblesinawatercolumn-131121154835-phpapp02/75/Concentration-measurements-of-bubbles-in-a-water-column-using-an-optical-tomography-system-1-2048.jpg)

![

S. Ibrahim et al. / ISA Transactions 51 (2012) 821–826

2. The measurement system

In an optical tomography system, several groups of transmitter and receiver pairs are used to provide better resolution and to minimise the aliasing which occurs when two particles intercept the same view [8]. In this project, four dichroic halogen bulbs act as light projectors. The light receivers consist of an array of optical fibre sensors arranged in a combination of two orthogonal and two rectilinear projections (Fig. 1). The orthogonal projections each consist of an 8 × 8 array of optical fibre sensors whereas the rectilinear projections consist of an 11 × 11 array of optical fibre sensors (the number of sensors is chosen so that they will give a balanced sensitivity). Thus the total number of optical fibre sensors used is thirty-eight. Ideally the two orthogonal and two rectilinear projections should be in the same plane. However, if they were, they would overlap each other, and so two of the projections have to be placed in a separate plane. These planes are separated by only a few millimetres, with the two orthogonal projections placed on top of the rectilinear projections with respect to the direction of flow. Each optical fibre receiver has a length of 200 cm from the flow pipe to the electronic circuit.

The receiver circuit is designed for signal conditioning using amplifiers and filters. The final outputs of the circuit are electrical signals consisting of a rectified voltage and an averaged voltage. The rectified voltage enables unipolar data acquisition and subsequent signal processing using a PC. The output of each amplifier should be proportional to the gas flow rate passing the associated sensor. If all the averaged voltage amplifier outputs are summed, the sum should be proportional to the gas flow rate indicated by the gas rotameter. All the electronic circuits are placed in an earthed metal box to minimise electrical noise pick-up. The rectified analogue signals from an array of optical transducers, covering a cross-section of the pipe, are converted into digital form by the Keithley Instruments DAS-1800HC data acquisition system and passed into an image reconstruction system. For concentration measurements, a sampling frequency of 500 Hz per channel is chosen as the velocities and flow rates associated with this project are relatively low (i.e. 0.2–0.3 m/s). Two hundred and twenty-two points are collected for each of the thirty-eight channels, which corresponds to about 0.44 s of flow data.
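The acquisition figures quoted above can be cross-checked with a few lines of arithmetic. The sketch below is illustrative only: the constant and function names are hypothetical, and the per-sample travel distance assumes a bubble moving at the quoted 0.2–0.3 m/s.

```python
# Acquisition figures quoted in the text (names are hypothetical).
SAMPLE_RATE_HZ = 500               # sampling frequency per channel
SAMPLES_PER_CHANNEL = 222          # points collected per channel
CHANNELS = 38                      # one channel per optical fibre sensor
FLOW_VELOCITIES_M_S = (0.2, 0.3)   # velocity range quoted in the text


def record_duration_s(samples: int, rate_hz: float) -> float:
    """Time span covered by `samples` points taken at `rate_hz`."""
    return samples / rate_hz


duration = record_duration_s(SAMPLES_PER_CHANNEL, SAMPLE_RATE_HZ)
total_points = SAMPLES_PER_CHANNEL * CHANNELS

# Axial distance a bubble covers during one full record, per velocity.
travel_m = [v * duration for v in FLOW_VELOCITIES_M_S]
# Axial distance a bubble covers between two consecutive samples.
step_mm = [1000 * v / SAMPLE_RATE_HZ for v in FLOW_VELOCITIES_M_S]

print(f"record duration: {duration:.3f} s")
print(f"total points over all channels: {total_points}")
print("travel per record (m):", [round(d, 4) for d in travel_m])
print("travel per sample (mm):", [round(s, 2) for s in step_mm])
```

Under these assumptions a bubble moves less than a millimetre between consecutive samples, which is consistent with the statement that 500 Hz is adequate for these low velocities.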

Data acquired by the receiver circuit is processed using the hybrid

linear back projection algorithm, which has been described in a

recent paper by Ibrahim et al. [9] in order to generate two-dimensional images of the bubbles in water. The algorithm incorporates

both a priori knowledge and linear back projection (LBP) in order to

improve the accuracy of the image reconstruction. Since optical

sensors are hard field sensors, the material in the flow is assumed

only to vary the intensity of the received signal. This enables a priori

knowledge from the optical sensors to be used as a constraint in the

reconstruction. The optical sensor signal is conditioned so when no

Projector 2

Flow Pipe

Projector 1

Top Aluminium Plate

Optical Fibre Receivers

Optical Fibre

Receivers

Perspex

Optical Fibre Receivers

Projector 3

Perspex

Bottom Aluminium

Plate

Projector 4

Fig. 1. Arrangement of the projectors and optical fibres around the flow pipe—isometric view.](https://image.slidesharecdn.com/concentrationmeasurementsofbubblesinawatercolumn-131121154835-phpapp02/75/Concentration-measurements-of-bubbles-in-a-water-column-using-an-optical-tomography-system-2-2048.jpg)

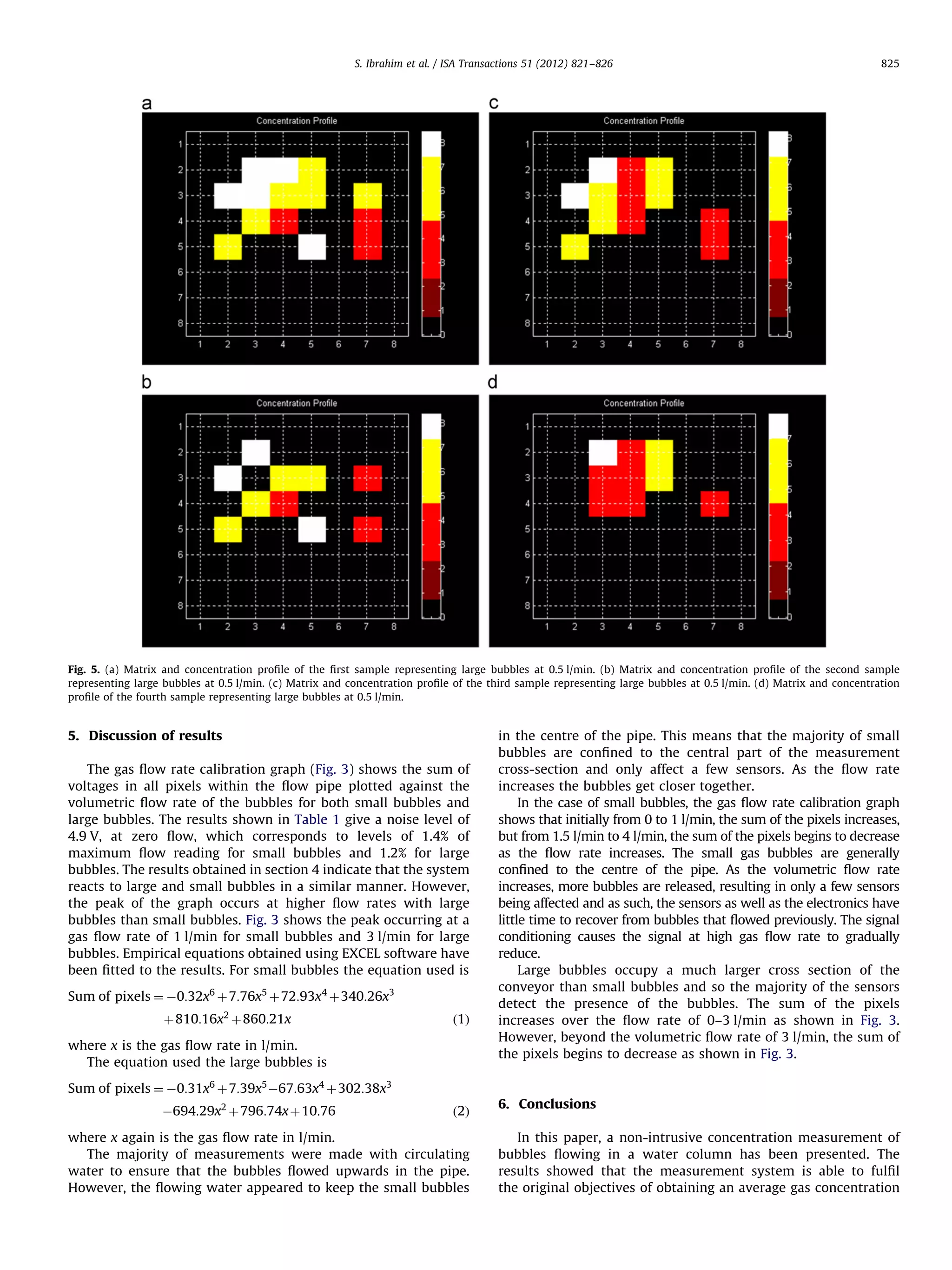

![S. Ibrahim et al. / ISA Transactions 51 (2012) 821–826

objects block the path from light transmitter to the receiver the

sensor will produce a zero output value, neglecting the effect of noise

inherent in the system.
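To make the idea concrete, the following is a minimal sketch of how a zero-reading constraint can be combined with linear back projection. It is not the implementation of Ref. [9]: it assumes only two orthogonal projections on a small square grid, one beam per pixel row or column, unit sensitivity, and a hypothetical `threshold` parameter standing in for the noise floor.

```python
from typing import List

Grid = List[List[float]]


def linear_back_projection(row_v: List[float], col_v: List[float]) -> Grid:
    """Smear each beam reading back along its path and sum the contributions.

    row_v[i] is the reading of the beam crossing pixel row i,
    col_v[j] is the reading of the beam crossing pixel column j.
    """
    return [[r + c for c in col_v] for r in row_v]


def hybrid_back_projection(
    row_v: List[float], col_v: List[float], threshold: float = 0.0
) -> Grid:
    """LBP with a hard-field constraint (illustrative assumption).

    A beam reading at or below `threshold` is taken to mean that nothing
    blocks that path, so every pixel on the path is forced to zero.
    """
    lbp = linear_back_projection(row_v, col_v)
    return [
        [lbp[i][j] if (r > threshold and c > threshold) else 0.0
         for j, c in enumerate(col_v)]
        for i, r in enumerate(row_v)
    ]


# One object at pixel (row 1, column 2): only those two beams respond.
rows = [0.0, 1.0, 0.0, 0.0]
cols = [0.0, 0.0, 1.0, 0.0]

lbp_image = linear_back_projection(rows, cols)
hybrid_image = hybrid_back_projection(rows, cols)

for line in lbp_image:
    print(line)
print()
for line in hybrid_image:
    print(line)
```

In this toy case plain LBP leaves a cross-shaped smear along row 1 and column 2, while the constrained version keeps only the pixel where both beams report an obstruction.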

The system was tested on the hydraulic flow rig shown in Fig. 2. The measurement system is built around a vertical pipe 1.27 m long with a circular cross-section. Control of the water flow is effected by the use of a pump and by various valves installed in the rig. Bubbles are injected into the measurement section through two bubble injectors placed at the base of the vertical section. The two small air injectors are utilised to blow different sized gas bubbles from the bottom of the pipe. Small bubbles are generated by a porous plug in the base of the flow rig, producing bubbles which visually appear to be in the range 10 mm. Large bubbles are produced by direct gas injection into the flowing water. These bubbles are about 20 mm in diameter. When the bubbles rise up and pass through the imaging cross-section, the two-phase distribution over the imaging plane can be measured. The time history of bubbles rising up through the measurement section can be obtained off-line from the data stored on the hard disk. Control of bubble flow is achieved through the use of two valves linked to the two bubble injectors. The valves can control the size of the bubbles as well as generate various flow regimes. The air pressure supply to the bubble injectors can be varied from 0 to 420 kPa. Throughout the experiment, gas is injected at a constant pressure of 50 kPa. The bubbles collapse as they reach the surface of the water.

The flow pipe is made of perspex to enable visual observation of the flow. The lower measurement section, consisting of thirty-eight sensors, is placed 62 cm above the gas injection points, and the second sensing array, which also consists of thirty-eight sensors, is placed 15 cm downstream of the former. The measurement section is of modular construction and comprises a series of perspex blocks 90 mm square with an 80 mm diameter central bore so that, when bolted together, they provide a continuous 80 mm diameter internal flow passage. In order to reduce optical distortion and to allow optical observation, a flat, square perspex window is used [10]. The flow rig is equipped with two rotameters: a water rotameter (0–7 l/min) and a gas rotameter (0–7 l/min). The rotameters provide direct readings of the total flow rates of water and bubbles, respectively. For the experiments described in this paper, water always formed the continuous phase and the gas flow was always in the bubbly regime. The volumetric flow rate of bubbles ranged from 0 to 7 l/min.
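The rig dimensions given above allow two quick derived quantities to be estimated. The sketch below is a worked example rather than part of the reported method: it computes the superficial gas velocity (volumetric flow rate divided by pipe cross-sectional area) at the top of the rotameter range, and the time a bubble would need to travel between the two sensing arrays if it moved at the 0.2–0.3 m/s quoted in Section 2. The function and constant names are hypothetical.

```python
import math

PIPE_DIAMETER_M = 0.080    # 80 mm internal bore
ARRAY_SPACING_M = 0.15     # second sensing array is 15 cm downstream
MAX_GAS_FLOW_L_MIN = 7.0   # upper end of the gas rotameter range


def superficial_velocity_m_s(flow_l_min: float, diameter_m: float) -> float:
    """Volumetric flow rate divided by the full pipe cross-sectional area."""
    area_m2 = math.pi * (diameter_m / 2) ** 2
    flow_m3_s = flow_l_min / 1000 / 60   # litres per minute -> m^3 per second
    return flow_m3_s / area_m2


def transit_time_s(spacing_m: float, velocity_m_s: float) -> float:
    """Time to travel between the two arrays at a constant velocity."""
    return spacing_m / velocity_m_s


v_gas_max = superficial_velocity_m_s(MAX_GAS_FLOW_L_MIN, PIPE_DIAMETER_M)
transit = [transit_time_s(ARRAY_SPACING_M, v) for v in (0.2, 0.3)]

print(f"superficial gas velocity at 7 l/min: {v_gas_max:.4f} m/s")
print("transit time between arrays (s):", [round(t, 2) for t in transit])
```

The superficial velocity is an area-averaged quantity and is therefore much lower than the velocity of an individual rising bubble, so the two results should not be compared directly.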

3. Average concentration measurement

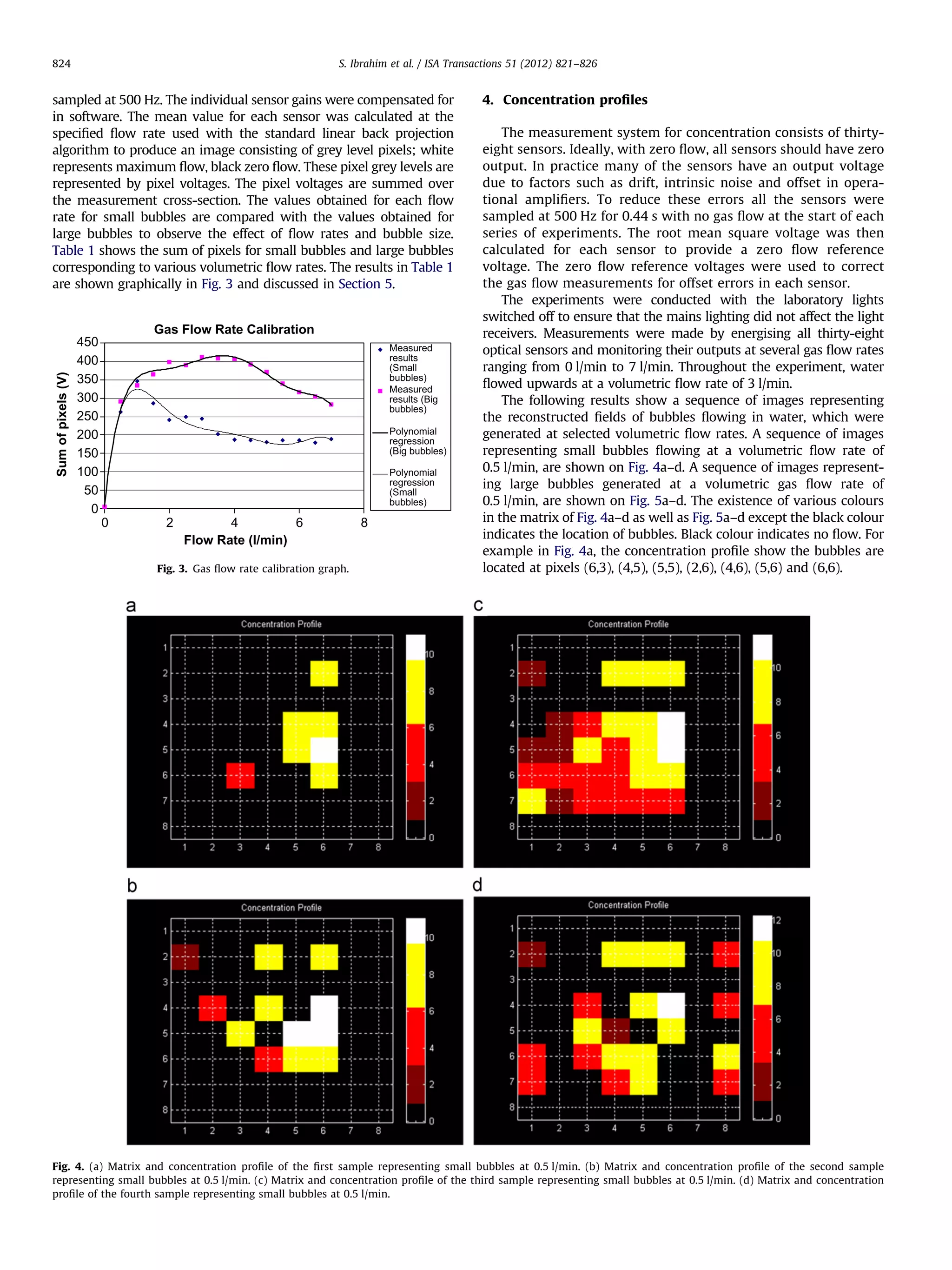

The measurements presented in this section consider the results

from each sensor as a continuous sample of the gas concentration

within its sensing field. The method was to obtain two hundred and

twenty-two samples for all thirty-eight sensors; each sensor was

Table 1
The flow rates of bubbles and the corresponding sum of pixel voltages for small bubbles and large bubbles.

| Flow rate (l/min) | Sum of pixel voltages, small bubbles (V) | Error percentage, small bubbles (%) | Sum of pixel voltages, large bubbles (V) | Error percentage, large bubbles (%) |
|---|---|---|---|---|
| 0 | 4.9 | 2 | 4.9 | 5.1 |
| 0.5 | 262.2 | 6.4 | 291.1 | 4.0 |
| 1 | 345.6 | 3.2 | 334.2 | 5.9 |
| 1.5 | 285.4 | 17.3 | 365.5 | 1.2 |
| 2 | 240.5 | 30.3 | 397.9 | 0.1 |
| 2.5 | 249.3 | 27.7 | 389.3 | 0.7 |
| 3 | 243.8 | 29.3 | 412 | 0.5 |
| 3.5 | 201.6 | 40.6 | 408.4 | 0.9 |
| 4 | 188 | 45.5 | 406.4 | 0.9 |
| 4.5 | 185.5 | 46.2 | 390.8 | 1.1 |
| 5 | 180.1 | 47.8 | 371.4 | 1.0 |
| 5.5 | 186.4 | 46.0 | 340.3 | 17.4 |
| 6 | 185.4 | 46.3 | 316.1 | 23.3 |
| 6.5 | 179.3 | 48.0 | 303.9 | 26.2 |
| 7 | 188.2 | 45.4 | 282.4 | 31.5 |

Fig. 2. The hydraulic flow rig.](https://image.slidesharecdn.com/concentrationmeasurementsofbubblesinawatercolumn-131121154835-phpapp02/75/Concentration-measurements-of-bubbles-in-a-water-column-using-an-optical-tomography-system-3-2048.jpg)
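The summed pixel voltages in Table 1 can be inspected with a short script. The sketch below only locates the flow rate at which each summed voltage is largest; it does not reproduce the error percentages, whose definition is not given in this excerpt. The list and function names are hypothetical.

```python
from typing import List, Tuple

# Values transcribed from Table 1 (flow rates 0 to 7 l/min in 0.5 steps).
FLOW_L_MIN = [0.5 * k for k in range(15)]
SMALL_V = [4.9, 262.2, 345.6, 285.4, 240.5, 249.3, 243.8, 201.6,
           188.0, 185.5, 180.1, 186.4, 185.4, 179.3, 188.2]
LARGE_V = [4.9, 291.1, 334.2, 365.5, 397.9, 389.3, 412.0, 408.4,
           406.4, 390.8, 371.4, 340.3, 316.1, 303.9, 282.4]


def peak(flow: List[float], volts: List[float]) -> Tuple[float, float]:
    """Return (flow rate, voltage) at the largest summed pixel voltage."""
    i = max(range(len(volts)), key=volts.__getitem__)
    return flow[i], volts[i]


small_peak = peak(FLOW_L_MIN, SMALL_V)
large_peak = peak(FLOW_L_MIN, LARGE_V)

print("small bubbles peak:", small_peak)
print("large bubbles peak:", large_peak)
```

For the tabulated data the summed voltage rises with flow rate only up to a point (about 1 l/min for small bubbles and 3 l/min for large bubbles) and then levels off or falls.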

![

and gas distribution profiles. For future work, it is suggested that experiments be conducted over a larger range of flow regimes. Ideally, in an industrial environment, it is preferable to use a laser as the light source due to its monochromatic and coherent characteristics. The resolution can be increased by increasing the number of views per light source for each pixel. Further investigation using other types of reconstruction algorithms and different forms of filtering techniques should be performed. The use of multi-modality tomography should be investigated, in which the optical tomographic system is combined with other types of sensing with the aim of comparing the accuracy of the measurements and increasing the understanding of the flow process.

Acknowledgement

The authors wish to acknowledge the assistance of Universiti

Teknologi Malaysia for providing the funds and resources in

carrying out this research.

References

[1] Dyakowski T. Process tomography applied to multi-phase flow measurement. Measurement Science & Technology 1996;7:343–53.

[2] http://www.sensirion.com/en/pdf/RD-notes/RD-note_February2012.pdf

[3] Rossi GL. Error analysis based development of a bubble velocity measurement chain. Flow Measurement and Instrumentation 1996;7(1):39–47.

[4] Northrop RB. Noninvasive instrumentation and measurement in medical diagnosis. Boca Raton, FL: CRC Press; 2002.

[5] Wang M. Process tomography (editorial). Measurement Science and Technology 2006;17.

[6] Abdul Rahim R, Kok San C. Optical tomography imaging in pneumatic conveyor. Sensors and Transducers Journal 2008;95(8):40–8.

[7] Daniels AR. Dual modality tomography for the monitoring of constituent volumes in multi-component flow. PhD thesis. Sheffield Hallam University; 1996.

[8] Abdul Rahim R, Fea PJ, Kok San C. Optical tomography sensor configuration using two orthogonal and two rectilinear projection arrays. Flow Measurement and Instrumentation 2005;16:327–40.

[9] Ibrahim S, Green RG, Dutton K, Evans K, Goude A, Abdul Rahim R. Optical sensor configurations for process tomography. Measurement Science & Technology 1999;10:1079–86.

[10] King NW, Purfit GL, Pursley WC. A laboratory facility for the study of mixing

phenomena in water/oil flows. In: Proceedings of the symposium flow

metering and proving techniques in the offshore oil industry (Aberdeen);

1983: p. 233–41.](https://image.slidesharecdn.com/concentrationmeasurementsofbubblesinawatercolumn-131121154835-phpapp02/75/Concentration-measurements-of-bubbles-in-a-water-column-using-an-optical-tomography-system-6-2048.jpg)