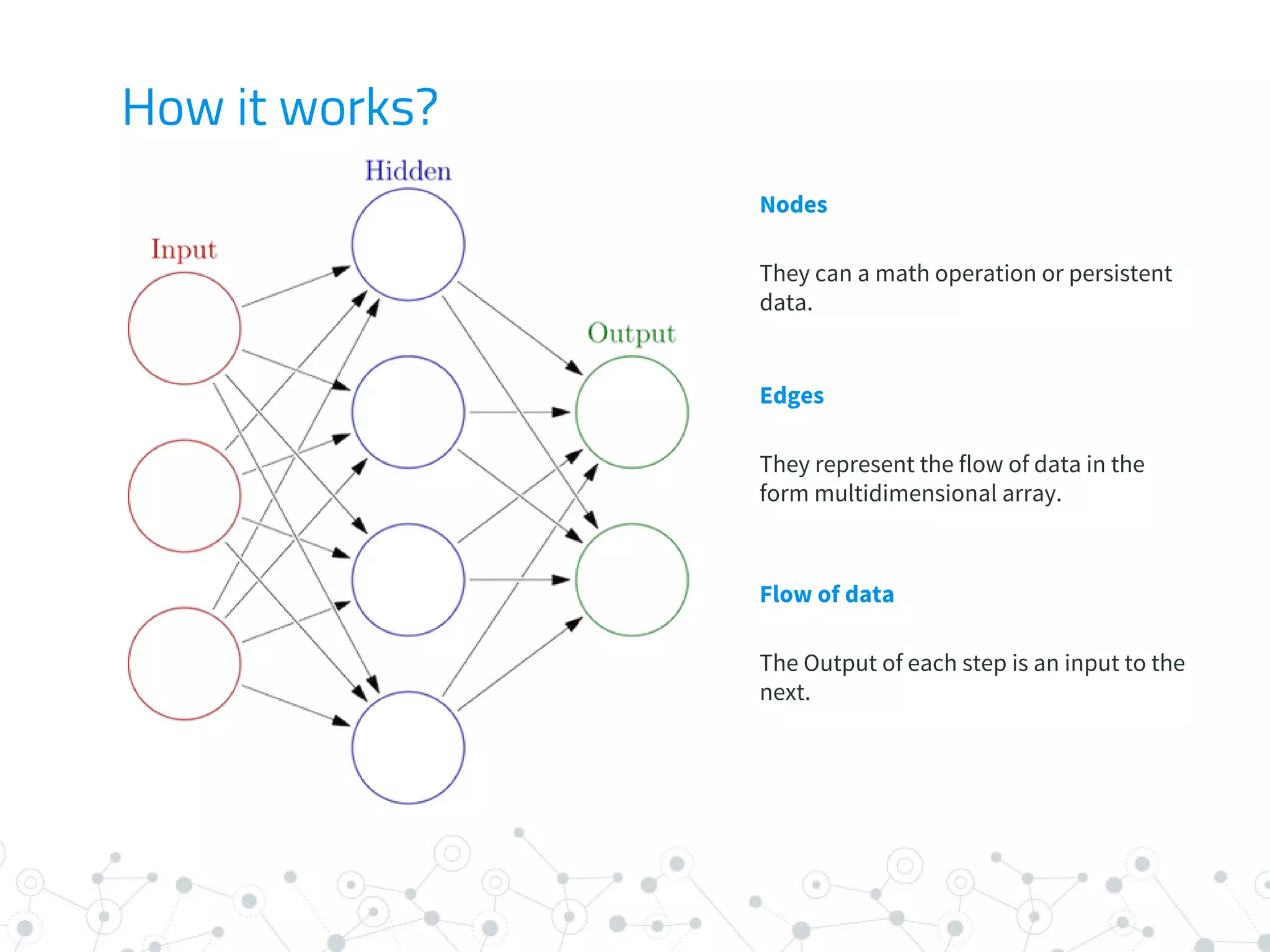

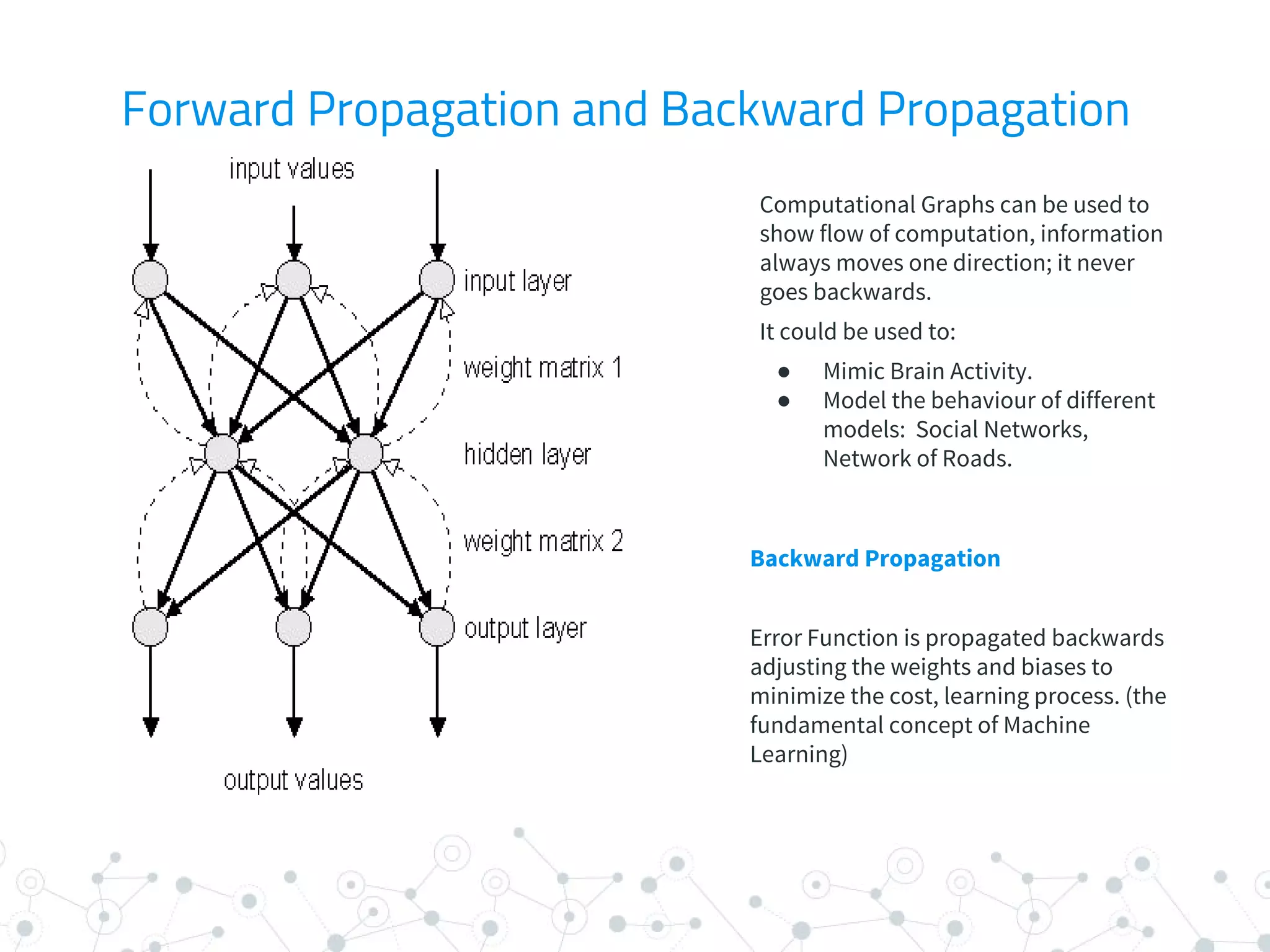

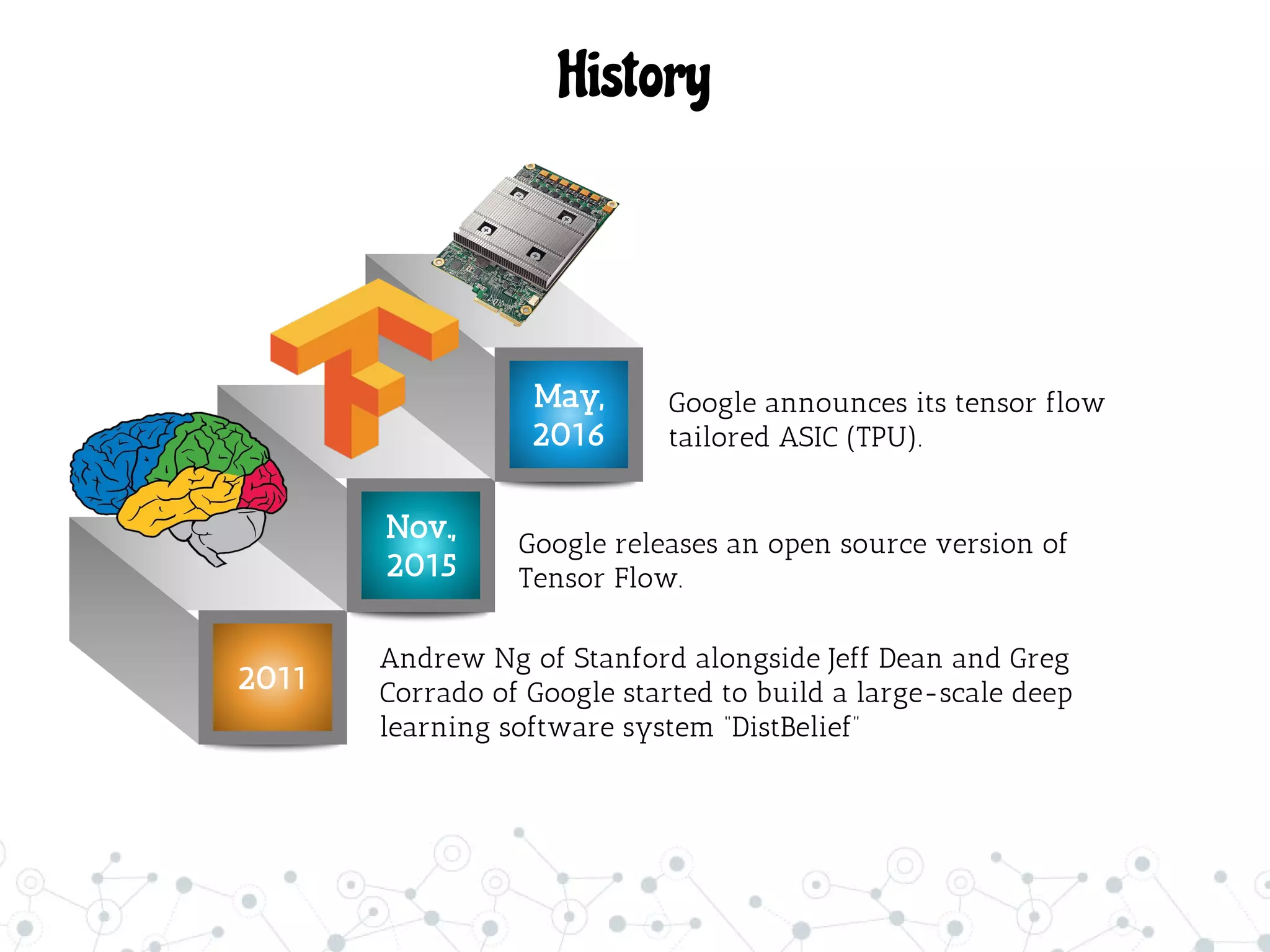

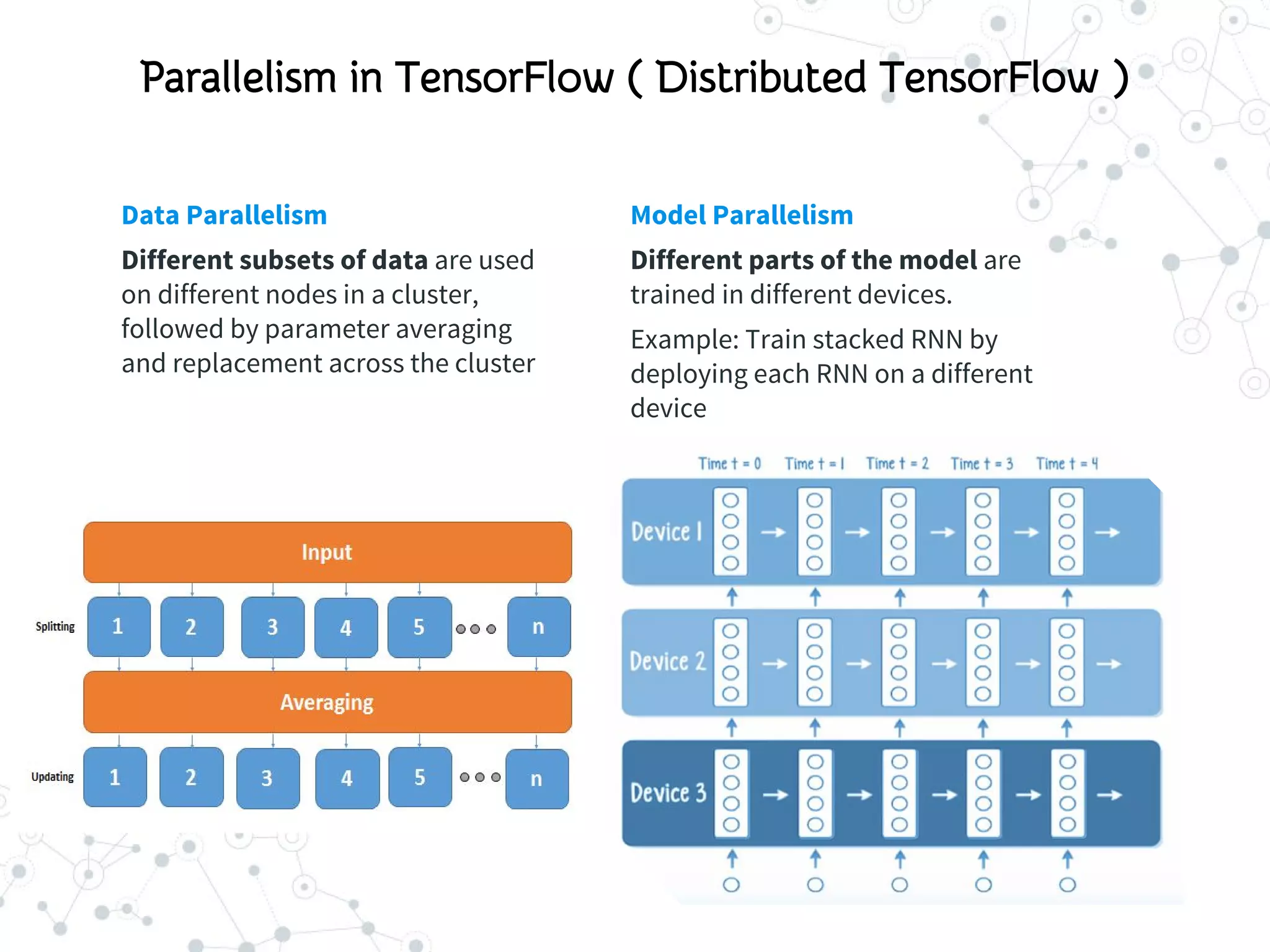

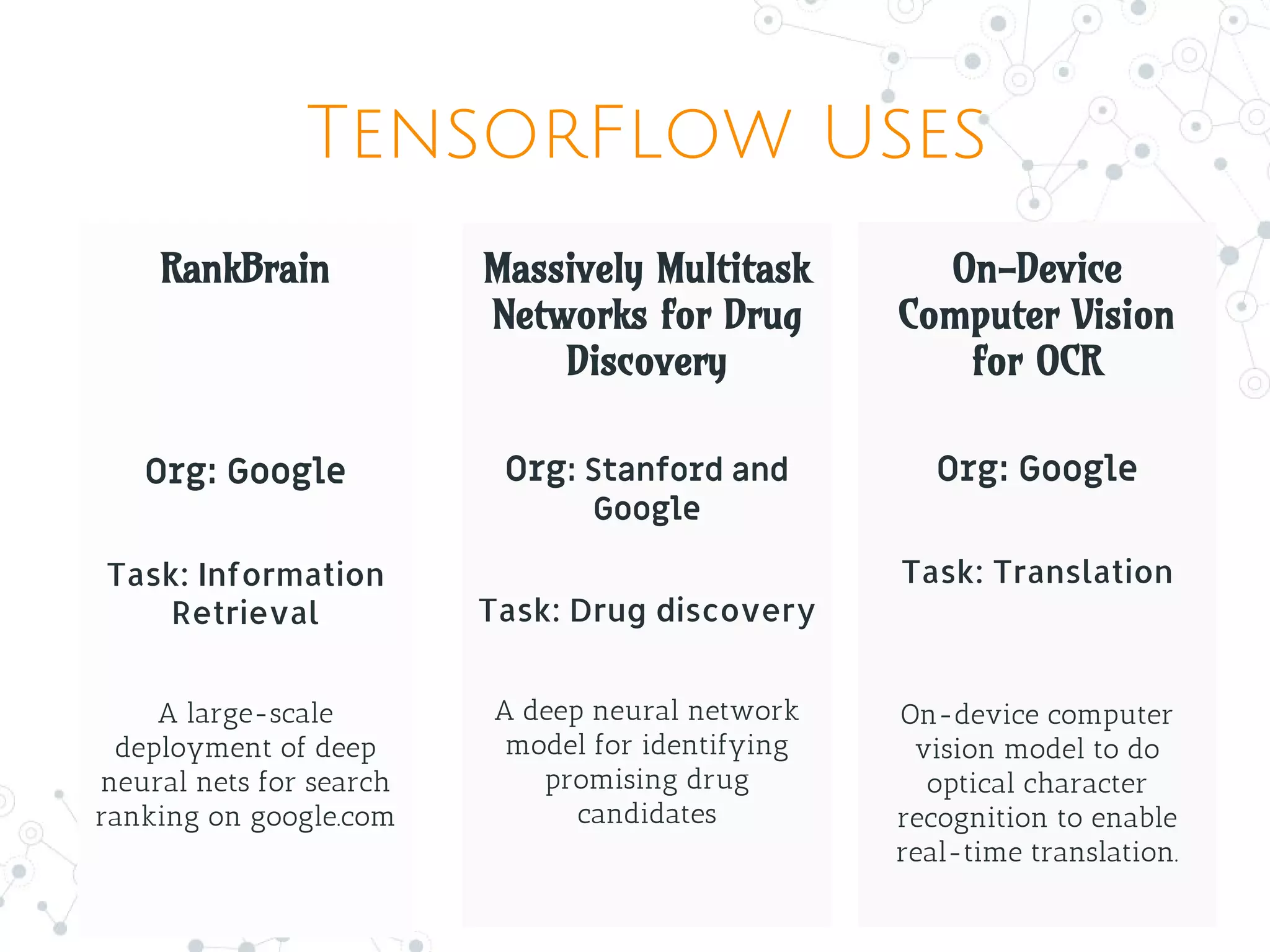

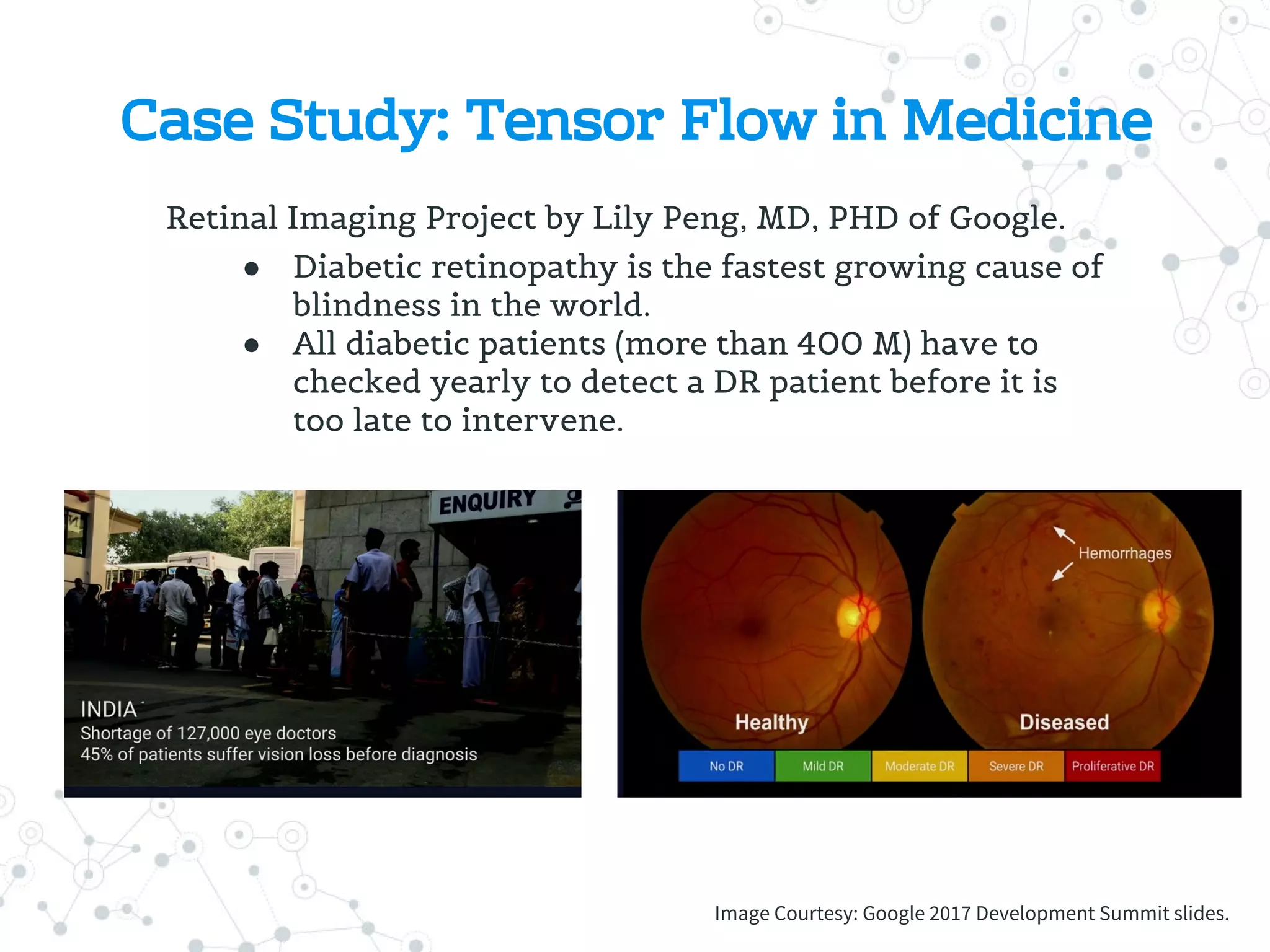

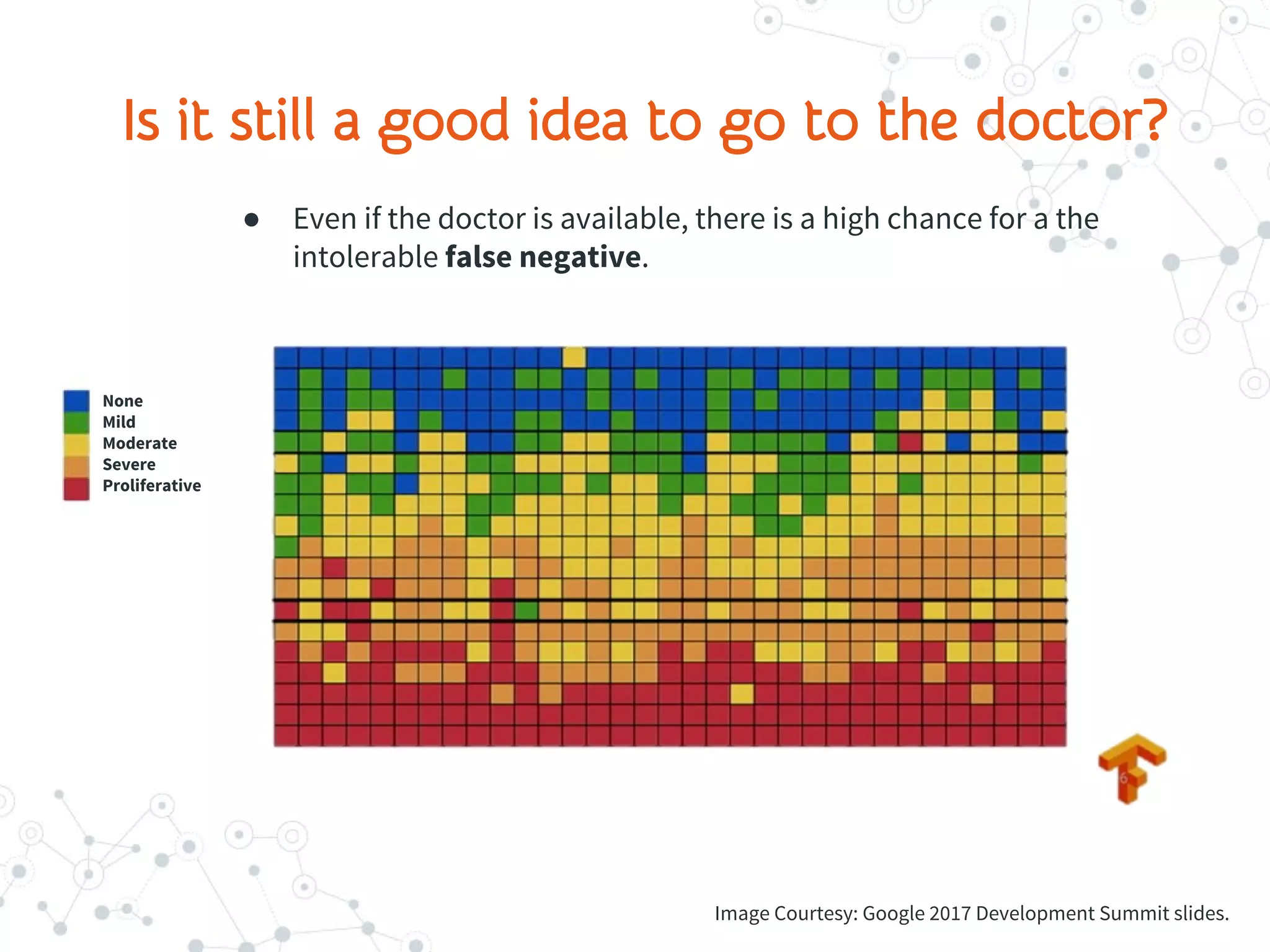

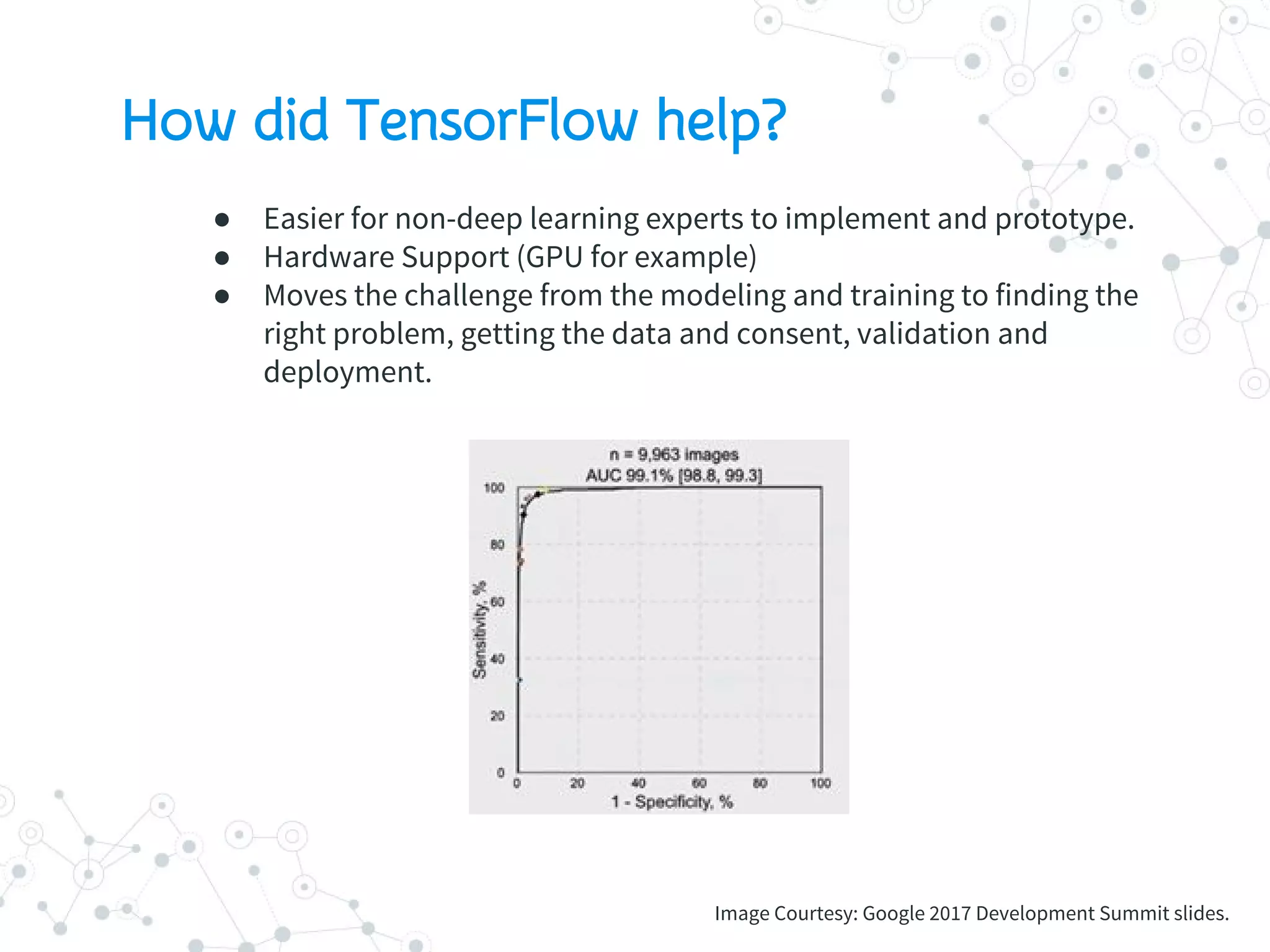

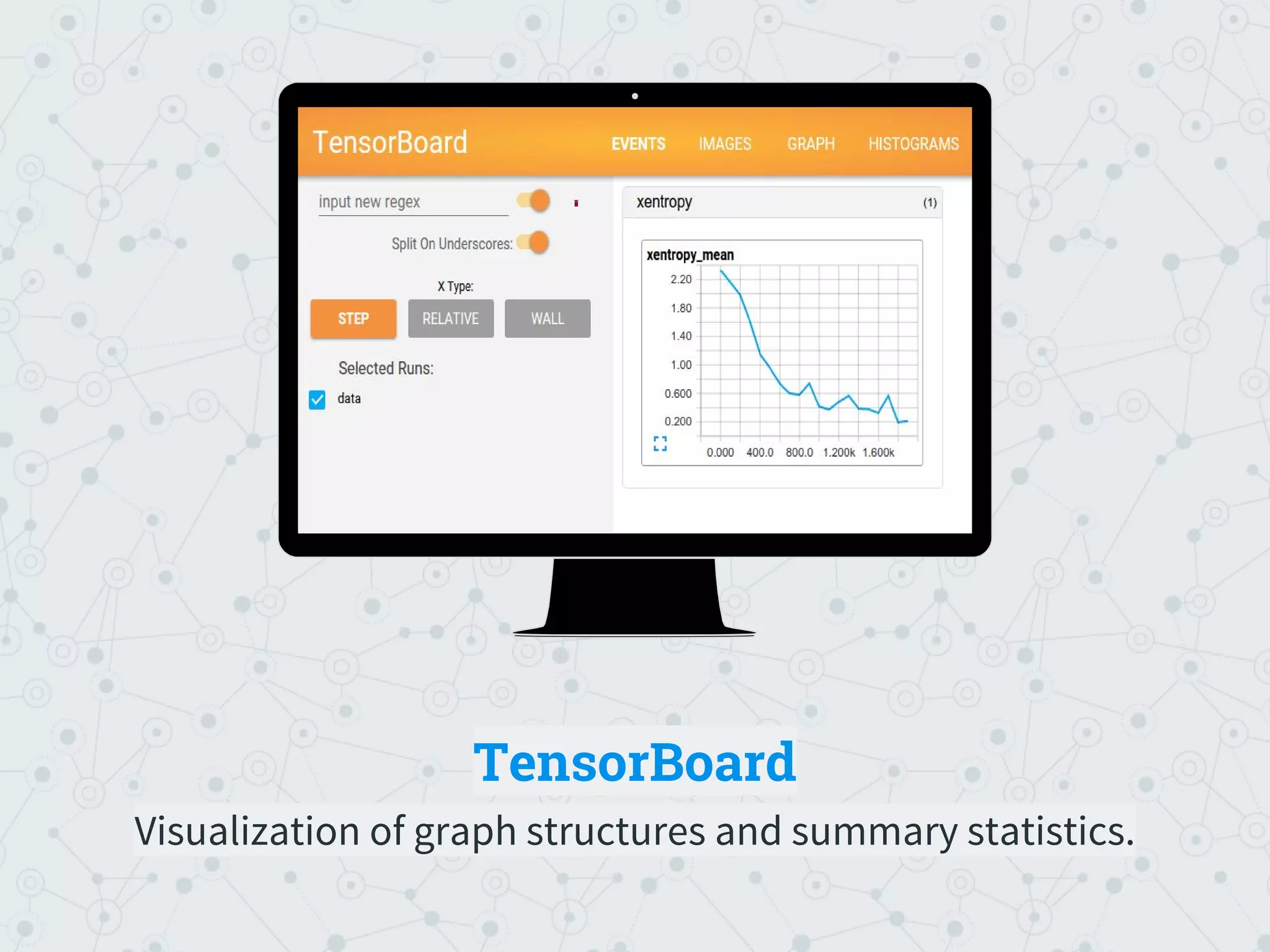

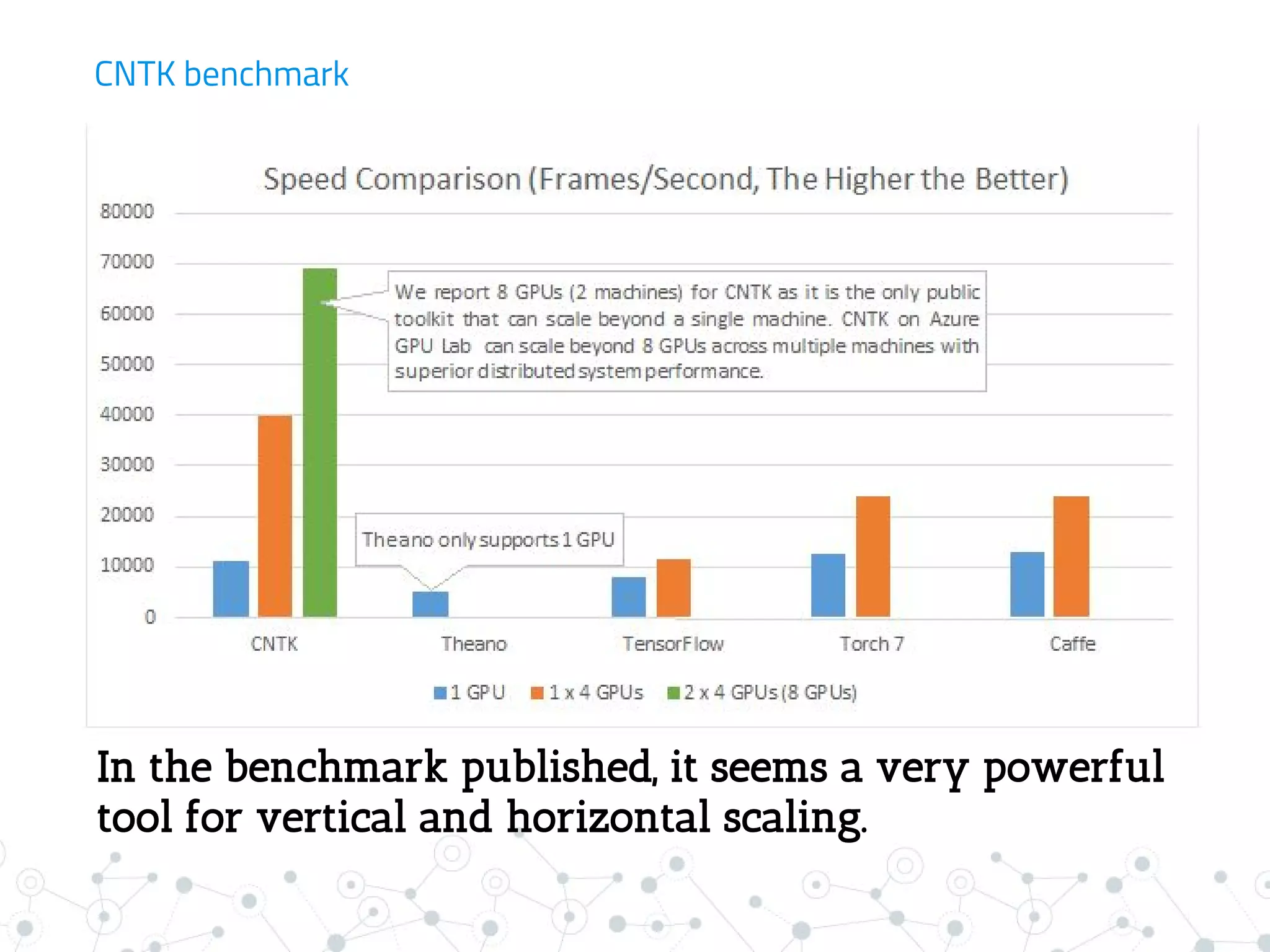

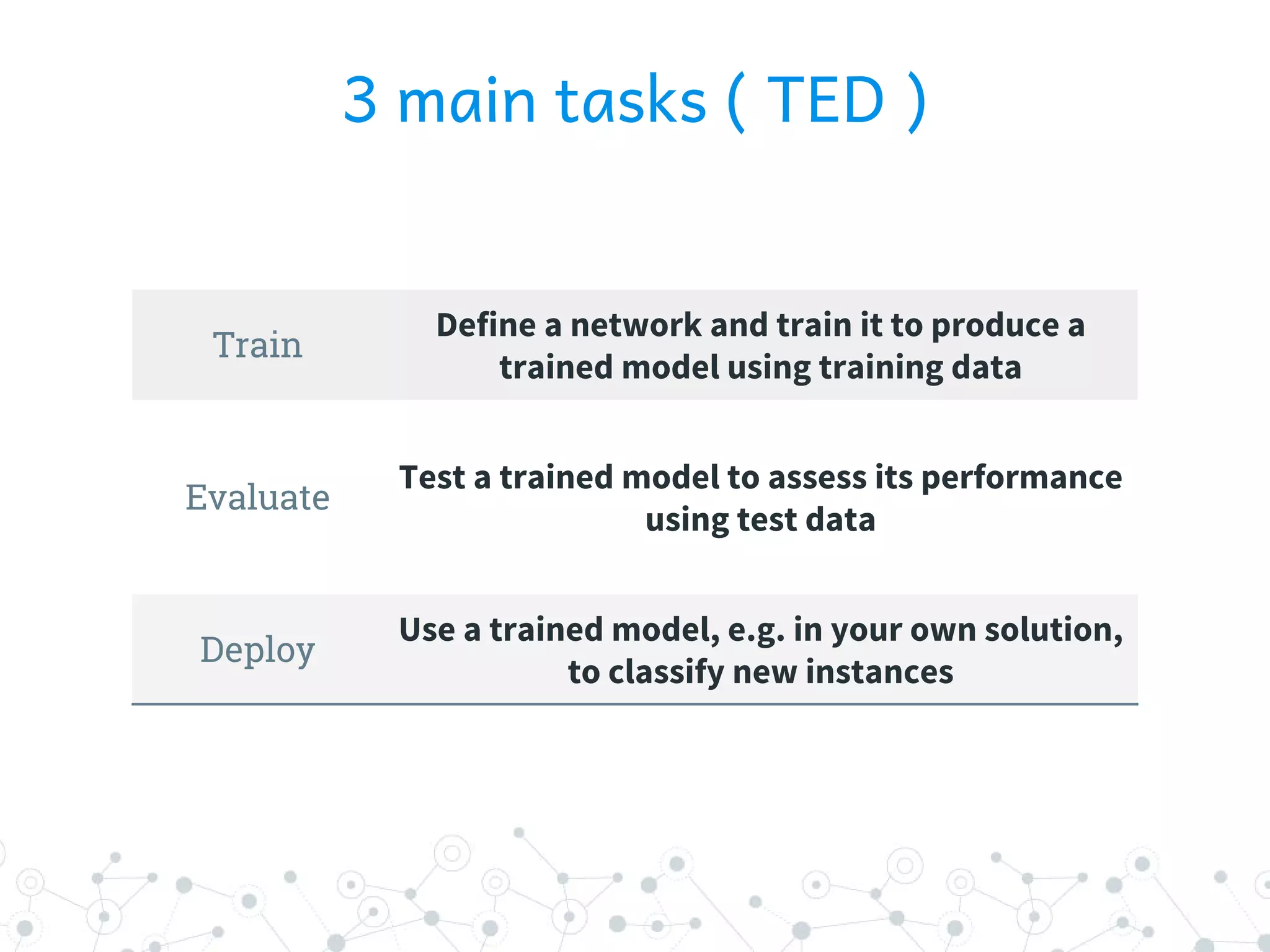

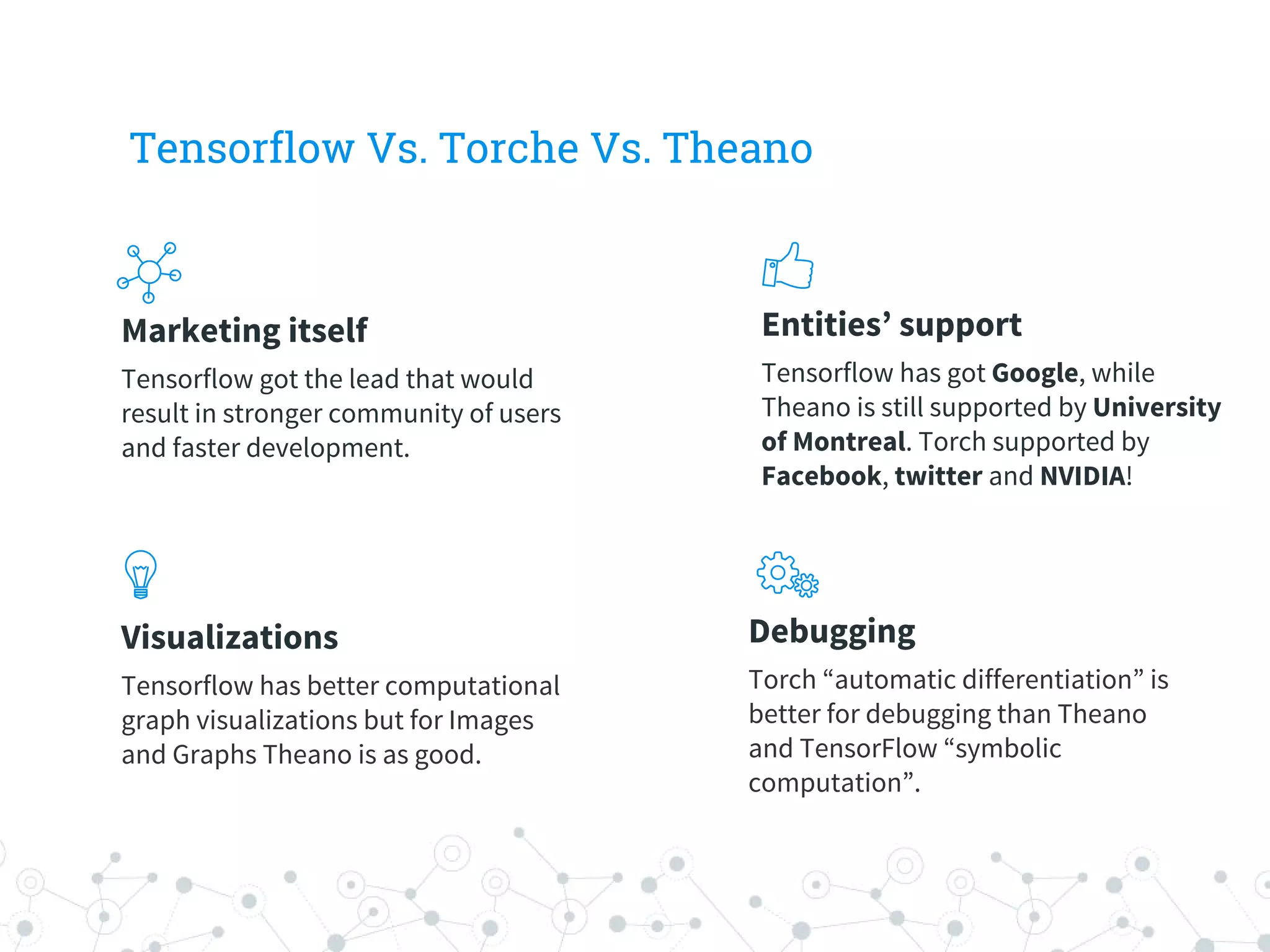

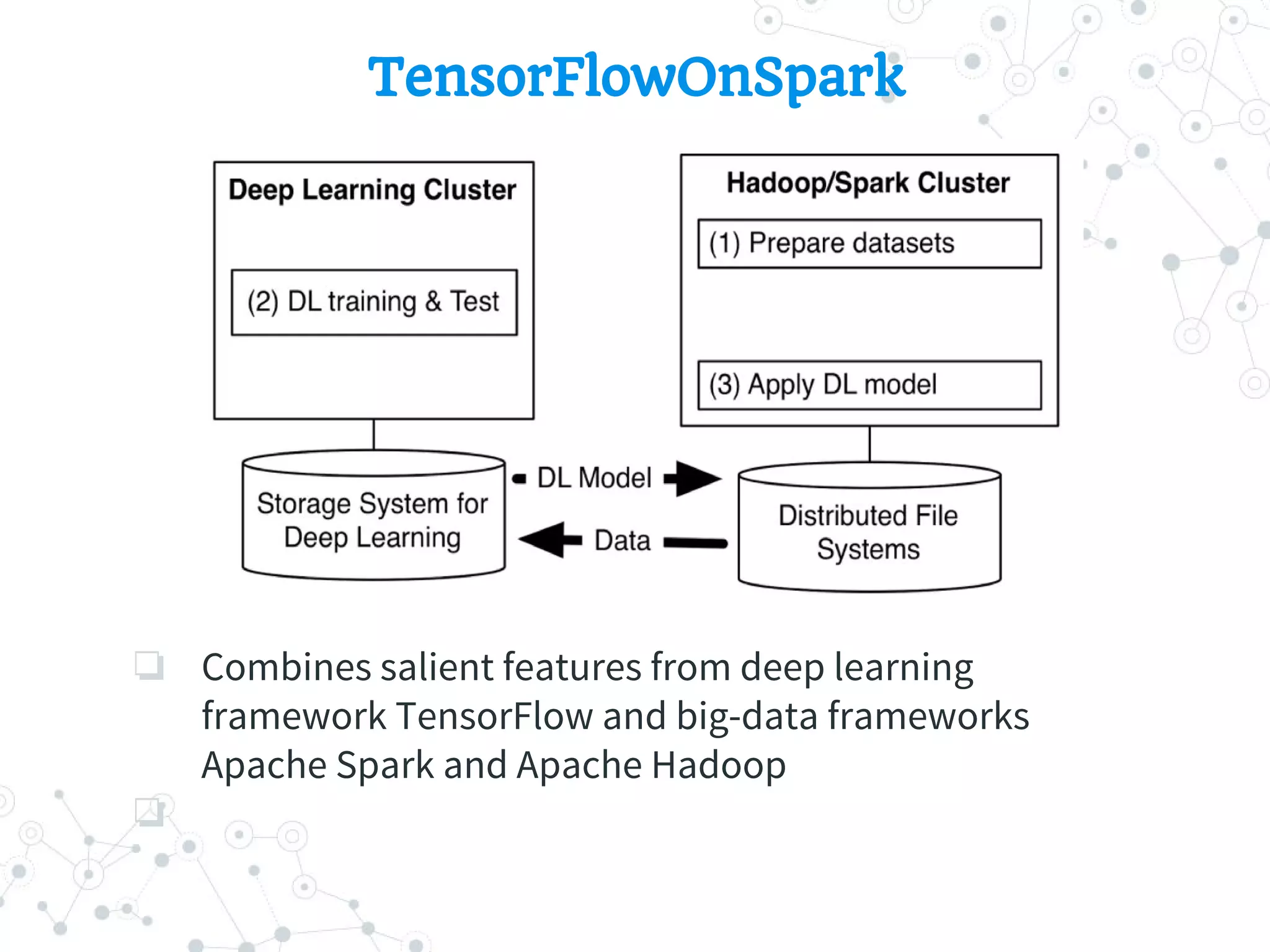

This document gives an overview of computational graphs and of several deep learning frameworks built on them. A computational graph represents a function as connected nodes, with data propagating along the edges between them. Frameworks such as TensorFlow, CNTK, and PyTorch use these graphs to carry out forward and backward propagation in neural networks. TensorFlow is highlighted as an open-source framework developed by Google that supports data and model parallelism across CPUs, GPUs, and custom hardware. CNTK is introduced as a Microsoft framework that describes neural networks as directed graphs.
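To make the idea of forward and backward propagation through a graph concrete, here is a minimal sketch in plain Python. It is not the API of TensorFlow, CNTK, or PyTorch; the names `Node`, `add`, `mul`, and `backward` are hypothetical and chosen only for illustration. Each node stores its value (the forward pass) and the local gradients to its parents, which the backward pass combines with the chain rule.

```python
class Node:
    """One vertex of the graph: a value plus edges to the nodes it was built from."""

    def __init__(self, value, parents=()):
        self.value = value      # result of the forward computation
        self.parents = parents  # tuple of (parent_node, local_gradient) pairs
        self.grad = 0.0         # d(output)/d(this node), filled in by backward()


def add(a, b):
    # d(a + b)/da = 1 and d(a + b)/db = 1
    return Node(a.value + b.value, ((a, 1.0), (b, 1.0)))


def mul(a, b):
    # d(a * b)/da = b and d(a * b)/db = a
    return Node(a.value * b.value, ((a, b.value), (b, a.value)))


def backward(output):
    """Propagate gradients from the output back to every node that feeds it."""
    order, seen = [], set()

    def visit(node):
        # Depth-first traversal that lists parents before their children.
        if id(node) in seen:
            return
        seen.add(id(node))
        for parent, _ in node.parents:
            visit(parent)
        order.append(node)

    visit(output)
    output.grad = 1.0
    # Walk from the output towards the inputs, applying the chain rule on each edge.
    for node in reversed(order):
        for parent, local_grad in node.parents:
            parent.grad += node.grad * local_grad


# Forward pass: building the graph for z = x * y + x also computes its value.
x = Node(2.0)
y = Node(3.0)
z = add(mul(x, y), x)

# Backward pass: dz/dx = y + 1 and dz/dy = x.
backward(z)
print(z.value, x.grad, y.grad)  # 8.0 4.0 2.0
```

Because `x` feeds two different nodes, its gradient is accumulated from both edges. That accumulation is why the backward pass visits nodes in reverse topological order rather than in arbitrary order.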