Chapter 3: Transport Layer

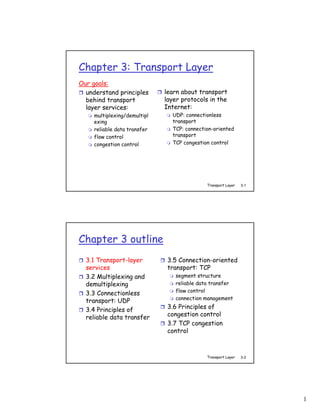

- 1. Chapter 3: Transport Layer
  - Our goals:
    - understand the principles behind transport-layer services: multiplexing/demultiplexing, reliable data transfer, flow control, congestion control
    - learn about transport-layer protocols in the Internet: UDP (connectionless transport), TCP (connection-oriented transport), TCP congestion control
  - Chapter 3 outline
    - 3.1 Transport-layer services
    - 3.2 Multiplexing and demultiplexing
    - 3.3 Connectionless transport: UDP
    - 3.4 Principles of reliable data transfer
    - 3.5 Connection-oriented transport: TCP (segment structure, reliable data transfer, flow control, connection management)
    - 3.6 Principles of congestion control
    - 3.7 TCP congestion control

- 2. Transport services and protocols
  - provide logical communication between app processes running on different hosts
  - transport protocols run in end systems
    - send side: breaks app messages into segments, passes them to the network layer
    - rcv side: reassembles segments into messages, passes them to the app layer
  - more than one transport protocol is available to apps; in the Internet: TCP and UDP
  - Transport vs. network layer
    - network layer: logical communication between hosts
    - transport layer: logical communication between processes; relies on, and enhances, network-layer services
    - Household analogy: 12 kids sending letters to 12 kids
      - processes = kids
      - app messages = letters in envelopes
      - hosts = houses
      - transport protocol = Ann and Bill
      - network-layer protocol = postal service

- 3. Internet transport-layer protocols
  - reliable, in-order delivery (TCP): congestion control, flow control, connection setup
  - unreliable, unordered delivery (UDP): no-frills extension of “best-effort” IP
  - services not available: delay guarantees, bandwidth guarantees

- 4. Multiplexing/demultiplexing
  - Multiplexing at send host: gathering data from multiple sockets, enveloping data with a header (later used for demultiplexing)
  - Demultiplexing at rcv host: delivering received segments to the correct socket
  - [Figure: processes P1 to P4 and their sockets on host 1, host 2 and host 3, each with application, transport, network, link and physical layers]
  - How demultiplexing works
    - host receives IP datagrams
      - each datagram has a source IP address and a destination IP address
      - each datagram carries 1 transport-layer segment
      - each segment has a source and a destination port number
    - host uses IP addresses and port numbers to direct the segment to the appropriate socket
    - TCP/UDP segment format (32 bits wide): source port #, dest port #; other header fields; application data (message)

- 5. Connectionless demultiplexing
  - Create sockets with port numbers:
    - DatagramSocket mySocket1 = new DatagramSocket(9111);
    - DatagramSocket mySocket2 = new DatagramSocket(9222);
  - UDP socket identified by two-tuple: (dest IP address, dest port number)
  - When host receives UDP segment:
    - checks destination port number in segment
    - directs UDP segment to the socket with that port number
  - IP datagrams with different source IP addresses and/or source port numbers are directed to the same socket
  - Connectionless demux (cont.)
    - DatagramSocket serverSocket = new DatagramSocket(6428);
    - [Figure: client IP A sends SP 9157, DP 6428 and client IP B sends SP 5775, DP 6428 to server IP C; the server replies with SP 6428 and DP 9157 or DP 5775]
    - SP provides the “return address”

- 6. Connection-oriented demux
  - TCP socket identified by 4-tuple: source IP address, source port number, dest IP address, dest port number
  - recv host uses all four values to direct segment to appropriate socket
  - Server host may support many simultaneous TCP sockets: each socket identified by its own 4-tuple
  - Web servers have different sockets for each connecting client; non-persistent HTTP will have a different socket for each request
  - Connection-oriented demux (cont.)
    - [Figure: server IP C runs processes P4, P5 and P6. Segments arriving at C: (S-IP A, SP 9157, D-IP C, DP 80), (S-IP B, SP 9157, D-IP C, DP 80), (S-IP B, SP 5775, D-IP C, DP 80)]
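
The difference between the UDP two-tuple and the TCP 4-tuple can be sketched with two lookup tables. This is only an illustration under stated assumptions: the class `DemuxSketch`, its methods and the socket labels are hypothetical names, and hosts are identified by the letters A, B, C used in the figures rather than by real IP addresses.

```java
import java.util.HashMap;
import java.util.Map;

/** Illustrative demultiplexing tables; class, method and socket names are hypothetical. */
final class DemuxSketch {
    // UDP: sockets are keyed by (dest IP, dest port) only.
    private final Map<String, String> udpSockets = new HashMap<>();
    // TCP: sockets are keyed by the full four-tuple.
    private final Map<String, String> tcpSockets = new HashMap<>();

    void bindUdp(String destIp, int destPort, String socketName) {
        udpSockets.put(destIp + ":" + destPort, socketName);
    }

    void addTcpConnection(String srcIp, int srcPort, String destIp, int destPort, String socketName) {
        tcpSockets.put(srcIp + ":" + srcPort + ">" + destIp + ":" + destPort, socketName);
    }

    /** The source fields are ignored for the lookup; they only serve as the return address. */
    String demuxUdp(String srcIp, int srcPort, String destIp, int destPort) {
        return udpSockets.get(destIp + ":" + destPort);
    }

    /** Returns null when no connection matches all four values. */
    String demuxTcp(String srcIp, int srcPort, String destIp, int destPort) {
        return tcpSockets.get(srcIp + ":" + srcPort + ">" + destIp + ":" + destPort);
    }

    public static void main(String[] args) {
        DemuxSketch serverC = new DemuxSketch();
        serverC.bindUdp("C", 6428, "serverSocket");
        serverC.addTcpConnection("A", 9157, "C", 80, "socketForA");
        serverC.addTcpConnection("B", 9157, "C", 80, "socketForB");
        // Two different UDP senders reach the same socket.
        System.out.println(serverC.demuxUdp("A", 9157, "C", 6428));
        System.out.println(serverC.demuxUdp("B", 5775, "C", 6428));
        // Two TCP clients using the same source port reach different sockets.
        System.out.println(serverC.demuxTcp("A", 9157, "C", 80));
        System.out.println(serverC.demuxTcp("B", 9157, "C", 80));
    }
}
```

String keys keep the sketch short; a real stack would match on binary header fields.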

- 7. Connection-oriented demux: Threaded Web Server
  - [Figure: server IP C runs a single server process P4. Segments arriving at C: (S-IP A, SP 9157, D-IP C, DP 80), (S-IP B, SP 9157, D-IP C, DP 80), (S-IP B, SP 5775, D-IP C, DP 80)]

- 8. UDP: User Datagram Protocol [RFC 768]
  - “no frills,” “bare bones” Internet transport protocol
  - “best effort” service; UDP segments may be:
    - lost
    - delivered out of order to app
  - connectionless:
    - no handshaking between UDP sender, receiver
    - each UDP segment handled independently of others
  - Why is there a UDP?
    - no connection establishment (which can add delay)
    - simple: no connection state at sender, receiver
    - small segment header
    - no congestion control: UDP can blast away as fast as desired
  - UDP: more
    - often used for streaming multimedia apps: loss tolerant, rate sensitive
    - other UDP uses: DNS, SNMP
    - reliable transfer over UDP: add reliability at application layer; application-specific error recovery!
    - UDP segment format (32 bits wide): source port #, dest port #; length, checksum; application data (message). Length is in bytes of the UDP segment, including header.

- 9. UDP checksum
  - Goal: detect “errors” (e.g., flipped bits) in transmitted segment
  - Sender:
    - treat segment contents as a sequence of 16-bit integers
    - checksum: addition (1’s complement sum) of segment contents
    - sender puts checksum value into UDP checksum field
  - Receiver:
    - compute checksum of received segment
    - check if computed checksum equals checksum field value:
      - NO - error detected
      - YES - no error detected. But maybe errors nonetheless? More later.
  - Internet Checksum Example
    - Note: when adding numbers, a carryout from the most significant bit needs to be added to the result
    - Example: add two 16-bit integers
      - first integer: 1110011001100110
      - second integer: 1101010101010101
      - raw sum, with a carryout to wrap around: 1 1011101110111011
      - sum after wraparound: 1011101110111100
      - checksum (1’s complement of the sum): 0100010001000011
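
The checksum example can be reproduced with a few lines of Java. This is a minimal sketch, not a UDP implementation: the class `InternetChecksum` and its method names are made up for illustration, the input is assumed to be already split into 16-bit words, and the UDP pseudo-header is left out.

```java
/** Minimal 16-bit one's complement checksum; class and method names are illustrative. */
final class InternetChecksum {
    /** Adds 16-bit words, folding any carryout back into the low 16 bits. */
    static int onesComplementSum(int[] words) {
        int sum = 0;
        for (int w : words) {
            sum += w & 0xFFFF;
            sum = (sum & 0xFFFF) + (sum >>> 16); // wraparound of the carryout
        }
        return sum & 0xFFFF;
    }

    /** The checksum is the bitwise complement of the one's complement sum. */
    static int checksum(int[] words) {
        return ~onesComplementSum(words) & 0xFFFF;
    }

    /** Receiver check: words plus checksum must sum to all ones. */
    static boolean verify(int[] words, int checksum) {
        int[] all = new int[words.length + 1];
        System.arraycopy(words, 0, all, 0, words.length);
        all[words.length] = checksum;
        return onesComplementSum(all) == 0xFFFF;
    }

    public static void main(String[] args) {
        // The two 16-bit integers from the example: 1110011001100110 and 1101010101010101.
        int[] words = {0b1110011001100110, 0b1101010101010101};
        System.out.println(Integer.toBinaryString(onesComplementSum(words)));
        System.out.println(Integer.toBinaryString(checksum(words)));
        System.out.println(verify(words, checksum(words)));
    }
}
```

Note that `Integer.toBinaryString` drops leading zeros, so the printed checksum has 15 digits rather than 16.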

- 10. Principles of Reliable data transfer
  - important in app., transport, link layers
  - top-10 list of important networking topics!
  - characteristics of unreliable channel will determine complexity of reliable data transfer protocol (rdt)

- 12. Reliable data transfer: getting started
  - send side:
    - rdt_send(): called from above (e.g., by app.); passed data to deliver to receiver upper layer
    - udt_send(): called by rdt, to transfer packet over unreliable channel to receiver
  - receive side:
    - rdt_rcv(): called when packet arrives on rcv-side of channel
    - deliver_data(): called by rdt to deliver data to upper layer
  - We’ll:
    - incrementally develop sender, receiver sides of reliable data transfer protocol (rdt)
    - consider only unidirectional data transfer, but control info will flow in both directions!
    - use finite state machines (FSM) to specify sender, receiver
  - FSM notation: an arrow from state 1 to state 2 is labelled with the event causing the state transition and the actions taken on the transition; when in a given state, the next state is uniquely determined by the next event

- 13. Rdt1.0: reliable transfer over a reliable channel
  - underlying channel perfectly reliable: no bit errors, no loss of packets
  - separate FSMs for sender, receiver:
    - sender sends data into underlying channel
    - receiver reads data from underlying channel
  - sender (single state, Wait for call from above): on rdt_send(data): packet = make_pkt(data); udt_send(packet)
  - receiver (single state, Wait for call from below): on rdt_rcv(packet): extract(packet,data); deliver_data(data)
  - Rdt2.0: channel with bit errors
    - underlying channel may flip bits in packet; checksum to detect bit errors
    - the question: how to recover from errors:
      - acknowledgements (ACKs): receiver explicitly tells sender that pkt received OK
      - negative acknowledgements (NAKs): receiver explicitly tells sender that pkt had errors
      - sender retransmits pkt on receipt of NAK
    - new mechanisms in rdt2.0 (beyond rdt1.0):
      - error detection
      - receiver feedback: control msgs (ACK, NAK) rcvr->sender

- 14. rdt2.0: FSM specification
  - sender
    - Wait for call from above: on rdt_send(data): sndpkt = make_pkt(data, checksum); udt_send(sndpkt); go to Wait for ACK or NAK
    - Wait for ACK or NAK:
      - on rdt_rcv(rcvpkt) && isNAK(rcvpkt): udt_send(sndpkt); stay in this state
      - on rdt_rcv(rcvpkt) && isACK(rcvpkt): no action (Λ); go to Wait for call from above
  - receiver (single state, Wait for call from below)
    - on rdt_rcv(rcvpkt) && corrupt(rcvpkt): udt_send(NAK)
    - on rdt_rcv(rcvpkt) && notcorrupt(rcvpkt): extract(rcvpkt,data); deliver_data(data); udt_send(ACK)
  - rdt2.0: operation with no errors: sender sends sndpkt; receiver finds it not corrupt, delivers the data and returns ACK; sender returns to Wait for call from above

- 15. rdt2.0: error scenario
  - sender sends sndpkt; receiver finds it corrupt and returns NAK; sender retransmits sndpkt and stays in Wait for ACK or NAK until an ACK arrives
  - rdt2.0 has a fatal flaw!
    - What happens if ACK/NAK corrupted?
      - sender doesn’t know what happened at receiver!
      - can’t just retransmit: possible duplicate
    - Handling duplicates:
      - sender retransmits current pkt if ACK/NAK garbled
      - sender adds sequence number to each pkt
      - receiver discards (doesn’t deliver up) duplicate pkt
    - stop and wait: sender sends one packet, then waits for receiver response

- 16. rdt2.1: sender, handles garbled ACK/NAKs
  - Wait for call 0 from above: on rdt_send(data): sndpkt = make_pkt(0, data, checksum); udt_send(sndpkt); go to Wait for ACK or NAK 0
  - Wait for ACK or NAK 0:
    - on rdt_rcv(rcvpkt) && (corrupt(rcvpkt) || isNAK(rcvpkt)): udt_send(sndpkt); stay in this state
    - on rdt_rcv(rcvpkt) && notcorrupt(rcvpkt) && isACK(rcvpkt): Λ; go to Wait for call 1 from above
  - Wait for call 1 from above: on rdt_send(data): sndpkt = make_pkt(1, data, checksum); udt_send(sndpkt); go to Wait for ACK or NAK 1
  - Wait for ACK or NAK 1:
    - on rdt_rcv(rcvpkt) && (corrupt(rcvpkt) || isNAK(rcvpkt)): udt_send(sndpkt); stay in this state
    - on rdt_rcv(rcvpkt) && notcorrupt(rcvpkt) && isACK(rcvpkt): Λ; go to Wait for call 0 from above
  - rdt2.1: receiver, handles garbled ACK/NAKs
    - Wait for 0 from below:
      - on rdt_rcv(rcvpkt) && notcorrupt(rcvpkt) && has_seq0(rcvpkt): extract(rcvpkt,data); deliver_data(data); sndpkt = make_pkt(ACK, chksum); udt_send(sndpkt); go to Wait for 1 from below
      - on rdt_rcv(rcvpkt) && corrupt(rcvpkt): sndpkt = make_pkt(NAK, chksum); udt_send(sndpkt); stay in this state
      - on rdt_rcv(rcvpkt) && notcorrupt(rcvpkt) && has_seq1(rcvpkt): sndpkt = make_pkt(ACK, chksum); udt_send(sndpkt); stay in this state
    - Wait for 1 from below:
      - on rdt_rcv(rcvpkt) && notcorrupt(rcvpkt) && has_seq1(rcvpkt): extract(rcvpkt,data); deliver_data(data); sndpkt = make_pkt(ACK, chksum); udt_send(sndpkt); go to Wait for 0 from below
      - on rdt_rcv(rcvpkt) && corrupt(rcvpkt): sndpkt = make_pkt(NAK, chksum); udt_send(sndpkt); stay in this state
      - on rdt_rcv(rcvpkt) && notcorrupt(rcvpkt) && has_seq0(rcvpkt): sndpkt = make_pkt(ACK, chksum); udt_send(sndpkt); stay in this state

- 17. rdt2.1: discussion
  - Sender:
    - seq # added to pkt
    - two seq. #’s (0,1) will suffice. Why?
    - must check if received ACK/NAK corrupted
    - twice as many states: state must “remember” whether “current” pkt has 0 or 1 seq. #
  - Receiver:
    - must check if received packet is duplicate: state indicates whether 0 or 1 is expected pkt seq #
    - note: receiver cannot know if its last ACK/NAK was received OK at sender
  - rdt2.2: a NAK-free protocol
    - same functionality as rdt2.1, using ACKs only
    - instead of NAK, receiver sends ACK for last pkt received OK; receiver must explicitly include seq # of pkt being ACKed
    - duplicate ACK at sender results in same action as NAK: retransmit current pkt
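
The NAK-free receiver behaviour can be sketched as a small state holder. This is an illustrative sketch only: the class `AltBitReceiver` and its methods are hypothetical, corruption is passed in as a flag instead of being derived from a checksum, and the returned integer stands for the sequence number carried in the ACK.

```java
import java.util.ArrayList;
import java.util.List;

/** Illustrative alternating-bit receiver in the style of rdt2.2; names are hypothetical. */
final class AltBitReceiver {
    private int expectedSeq = 0;
    private final List<String> delivered = new ArrayList<>();

    /**
     * Handles one arriving packet and returns the sequence number placed in the ACK.
     * A corrupt or duplicate packet is answered with an ACK for the last packet received OK.
     */
    int onPacket(int seq, String data, boolean corrupt) {
        if (!corrupt && seq == expectedSeq) {
            delivered.add(data);           // deliver_data(data)
            expectedSeq = 1 - expectedSeq; // now wait for the other sequence number
            return seq;                    // ACK for the packet just accepted
        }
        return 1 - expectedSeq;            // re-ACK the last packet received OK
    }

    List<String> delivered() {
        return delivered;
    }

    public static void main(String[] args) {
        AltBitReceiver receiver = new AltBitReceiver();
        System.out.println(receiver.onPacket(0, "a", false)); // accepted
        System.out.println(receiver.onPacket(0, "a", false)); // duplicate, not delivered again
        System.out.println(receiver.onPacket(1, "b", true));  // corrupt, answered with the old ACK
        System.out.println(receiver.onPacket(1, "b", false)); // accepted
        System.out.println(receiver.delivered());
    }
}
```

Before any packet has been accepted, the sketch answers with ACK 1, which a sender waiting for ACK 0 treats like a NAK.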

- 18. rdt2.2: sender, receiver fragments
  - sender FSM fragment
    - Wait for call 0 from above: on rdt_send(data): sndpkt = make_pkt(0, data, checksum); udt_send(sndpkt); go to Wait for ACK 0
    - Wait for ACK 0:
      - on rdt_rcv(rcvpkt) && (corrupt(rcvpkt) || isACK(rcvpkt,1)): udt_send(sndpkt); stay in this state
      - on rdt_rcv(rcvpkt) && notcorrupt(rcvpkt) && isACK(rcvpkt,0): Λ; leave this state
  - receiver FSM fragment
    - Wait for 0 from below: on rdt_rcv(rcvpkt) && (corrupt(rcvpkt) || has_seq1(rcvpkt)): udt_send(sndpkt); stay in this state
    - transition into Wait for 0 from below: on rdt_rcv(rcvpkt) && notcorrupt(rcvpkt) && has_seq1(rcvpkt): extract(rcvpkt,data); deliver_data(data); sndpkt = make_pkt(ACK1, chksum); udt_send(sndpkt)
  - rdt3.0: channels with errors and loss
    - New assumption: underlying channel can also lose packets (data or ACKs)
      - checksum, seq. #, ACKs, retransmissions will be of help, but not enough
    - Approach: sender waits “reasonable” amount of time for ACK
      - retransmits if no ACK received in this time
      - if pkt (or ACK) just delayed (not lost): retransmission will be duplicate, but use of seq. #’s already handles this
      - receiver must specify seq # of pkt being ACKed
      - requires countdown timer

- 19. rdt3.0 sender
  - Wait for call 0 from above:
    - on rdt_send(data): sndpkt = make_pkt(0, data, checksum); udt_send(sndpkt); start_timer; go to Wait for ACK0
    - on rdt_rcv(rcvpkt): Λ
  - Wait for ACK0:
    - on rdt_rcv(rcvpkt) && (corrupt(rcvpkt) || isACK(rcvpkt,1)): Λ
    - on timeout: udt_send(sndpkt); start_timer
    - on rdt_rcv(rcvpkt) && notcorrupt(rcvpkt) && isACK(rcvpkt,0): stop_timer; go to Wait for call 1 from above
  - Wait for call 1 from above:
    - on rdt_send(data): sndpkt = make_pkt(1, data, checksum); udt_send(sndpkt); start_timer; go to Wait for ACK1
    - on rdt_rcv(rcvpkt): Λ
  - Wait for ACK1:
    - on rdt_rcv(rcvpkt) && (corrupt(rcvpkt) || isACK(rcvpkt,0)): Λ
    - on timeout: udt_send(sndpkt); start_timer
    - on rdt_rcv(rcvpkt) && notcorrupt(rcvpkt) && isACK(rcvpkt,1): stop_timer; go to Wait for call 0 from above
  - rdt3.0 in action
    - [Figure: rdt3.0 in action]

- 20. rdt3.0 in action
  - [Figure: rdt3.0 in action, continued]
  - Performance of rdt3.0
    - rdt3.0 works, but performance stinks
    - example: 1 Gbps link, 15 ms e-e prop. delay, 1KB packet:
      - $T_{transmit} = \frac{L}{R} = \frac{8\ \text{kb/pkt}}{10^{9}\ \text{b/sec}} = 8\ \text{microsec}$, where L is the packet length in bits and R is the transmission rate in bps
      - $U_{sender}$: utilization, the fraction of time the sender is busy sending
      - $U_{sender} = \frac{L/R}{RTT + L/R} = \frac{0.008}{30.008} = 0.00027$
    - 1KB pkt every 30 msec -> 33kB/sec thruput over 1 Gbps link
    - network protocol limits use of physical resources!

- 21. rdt3.0: stop-and-wait operation
  - timeline between sender and receiver:
    - first packet bit transmitted, t = 0
    - last packet bit transmitted, t = L / R
    - first packet bit arrives at receiver
    - last packet bit arrives, receiver sends ACK
    - ACK arrives, sender sends next packet, t = RTT + L / R
  - $U_{sender} = \frac{L/R}{RTT + L/R} = \frac{0.008}{30.008} = 0.00027$
  - Pipelined protocols
    - Pipelining: sender allows multiple, “in-flight”, yet-to-be-acknowledged pkts
      - range of sequence numbers must be increased
      - buffering at sender and/or receiver
    - Two generic forms of pipelined protocols: go-Back-N, selective repeat
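
The utilization figure can be checked numerically. The sketch below assumes the example's numbers (8000-bit packet, 1 Gbps link, 30 ms RTT); the class `Utilization`, the method name and the `packetsInFlight` parameter are illustrative additions, with `packetsInFlight = 1` corresponding to stop-and-wait and larger values to a pipelined sender.

```java
/** Sender utilization for stop-and-wait and simple pipelining; names are illustrative. */
final class Utilization {
    /**
     * U = (n * L/R) / (RTT + L/R), capped at 1.
     *
     * @param packetBits      L, packet length in bits
     * @param rateBps         R, transmission rate in bits per second
     * @param rttSeconds      round-trip time in seconds
     * @param packetsInFlight n, packets sent before waiting for an ACK
     */
    static double senderUtilization(double packetBits, double rateBps,
                                    double rttSeconds, int packetsInFlight) {
        double transmitTime = packetBits / rateBps; // L / R
        double u = packetsInFlight * transmitTime / (rttSeconds + transmitTime);
        return Math.min(1.0, u);
    }

    public static void main(String[] args) {
        // 1 KB packet (8000 bits), 1 Gbps link, 15 ms one-way delay (30 ms RTT).
        System.out.println(senderUtilization(8000, 1e9, 0.030, 1)); // stop-and-wait
        System.out.println(senderUtilization(8000, 1e9, 0.030, 3)); // three packets in flight
    }
}
```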

- 22. Pipelining: increased utilization
  - timeline between sender and receiver:
    - first packet bit transmitted, t = 0
    - last bit transmitted, t = L / R
    - first packet bit arrives at receiver
    - last packet bit arrives, receiver sends ACK
    - last bit of 2nd packet arrives, receiver sends ACK
    - last bit of 3rd packet arrives, receiver sends ACK
    - ACK arrives, sender sends next packet, t = RTT + L / R
  - Increase utilization by a factor of 3!
  - $U_{sender} = \frac{3 \cdot L/R}{RTT + L/R} = \frac{0.024}{30.008} = 0.0008$
  - Go-Back-N
    - Sender:
      - k-bit seq # in pkt header
      - “window” of up to N consecutive unack’ed pkts allowed
      - ACK(n): ACKs all pkts up to, including seq # n - “cumulative ACK”
        - may receive duplicate ACKs (see receiver)
      - timer for each in-flight pkt
      - timeout(n): retransmit pkt n and all higher seq # pkts in window

- 23. GBN: sender extended FSM
  - initially: base=1; nextseqnum=1
  - on rdt_send(data): if (nextseqnum < base+N) { sndpkt[nextseqnum] = make_pkt(nextseqnum,data,chksum); udt_send(sndpkt[nextseqnum]); if (base == nextseqnum) start_timer; nextseqnum++ } else refuse_data(data)
  - on timeout: start_timer; udt_send(sndpkt[base]); udt_send(sndpkt[base+1]); …; udt_send(sndpkt[nextseqnum-1])
  - on rdt_rcv(rcvpkt) && corrupt(rcvpkt): Λ
  - on rdt_rcv(rcvpkt) && notcorrupt(rcvpkt): base = getacknum(rcvpkt)+1; if (base == nextseqnum) stop_timer else start_timer
  - GBN: receiver extended FSM
    - initially: expectedseqnum=1; sndpkt = make_pkt(expectedseqnum,ACK,chksum)
    - default: udt_send(sndpkt)
    - on rdt_rcv(rcvpkt) && notcorrupt(rcvpkt) && hasseqnum(rcvpkt,expectedseqnum): extract(rcvpkt,data); deliver_data(data); sndpkt = make_pkt(expectedseqnum,ACK,chksum); udt_send(sndpkt); expectedseqnum++
    - ACK-only: always send ACK for correctly-received pkt with highest in-order seq #
      - may generate duplicate ACKs
      - need only remember expectedseqnum
    - out-of-order pkt:
      - discard (don’t buffer) -> no receiver buffering!
      - Re-ACK pkt with highest in-order seq #
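
The sender FSM translates fairly directly into code. The following is a sketch under simplifying assumptions, not a full protocol: the class `GbnSender` is hypothetical, the channel is replaced by a log of transmitted sequence numbers, the timer is a boolean flag, sequence numbers never wrap, and ACKs outside the current window are ignored (a guard that the FSM itself does not spell out).

```java
import java.util.ArrayList;
import java.util.List;

/** Illustrative Go-Back-N sender state; the channel is modelled as a log of sent seq numbers. */
final class GbnSender {
    private final int windowSize;          // N
    private int base = 1;
    private int nextSeqNum = 1;
    private boolean timerRunning = false;
    private final List<Integer> sentLog = new ArrayList<>();

    GbnSender(int windowSize) {
        this.windowSize = windowSize;
    }

    /** rdt_send: returns false (refuse_data) when the window is full. */
    boolean send() {
        if (nextSeqNum >= base + windowSize) {
            return false;
        }
        sentLog.add(nextSeqNum);           // udt_send(sndpkt[nextseqnum])
        if (base == nextSeqNum) {
            timerRunning = true;           // start_timer for the oldest unACKed packet
        }
        nextSeqNum++;
        return true;
    }

    /** Cumulative ACK: everything up to and including ackNum is acknowledged. */
    void onAck(int ackNum) {
        if (ackNum < base || ackNum >= nextSeqNum) {
            return;                        // assumption: ignore ACKs outside the window
        }
        base = ackNum + 1;
        timerRunning = base != nextSeqNum; // stop_timer if nothing is outstanding
    }

    /** Timeout: resend every packet from base up to nextSeqNum - 1. */
    void onTimeout() {
        timerRunning = base != nextSeqNum;
        for (int seq = base; seq < nextSeqNum; seq++) {
            sentLog.add(seq);
        }
    }

    List<Integer> sentLog() { return sentLog; }
    int base() { return base; }
    boolean timerRunning() { return timerRunning; }
}
```

With a window of 3, a fourth `send()` is refused until an ACK advances `base`, and a timeout resends all outstanding packets in order.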

- 24. GBN in action
  - [Figure: GBN in action]
  - Selective Repeat
    - receiver individually acknowledges all correctly received pkts
      - buffers pkts, as needed, for eventual in-order delivery to upper layer
    - sender only resends pkts for which ACK not received
      - sender timer for each unACKed pkt
    - sender window
      - N consecutive seq #’s
      - again limits seq #s of sent, unACKed pkts

- 25. Selective repeat: sender, receiver windows
  - [Figure: selective repeat sender and receiver windows]
  - Selective repeat
    - sender
      - data from above: if next available seq # in window, send pkt
      - timeout(n): resend pkt n, restart timer
      - ACK(n) in [sendbase,sendbase+N-1]:
        - mark pkt n as received
        - if n smallest unACKed pkt, advance window base to next unACKed seq #
    - receiver
      - pkt n in [rcvbase, rcvbase+N-1]:
        - send ACK(n)
        - out-of-order: buffer
        - in-order: deliver (also deliver buffered, in-order pkts), advance window to next not-yet-received pkt
      - pkt n in [rcvbase-N,rcvbase-1]: ACK(n)
      - otherwise: ignore

- 26. Selective repeat in action
  - [Figure: selective repeat in action]
  - Selective repeat: dilemma
    - Example: seq #’s: 0, 1, 2, 3; window size=3
    - receiver sees no difference in two scenarios!
    - incorrectly passes duplicate data as new in (a)
    - Q: what relationship between seq # size and window size?
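
One way to explore the question is to test, for a given sequence-number space and window size, whether a retransmitted old packet could land inside the receiver's advanced window. The sketch below is an assumption-laden illustration: the class `SelectiveRepeatWindow` and its method are hypothetical, and it only models the worst case in which the receiver has accepted a full window while every ACK was lost.

```java
/** Illustrative check for the selective repeat sequence-number ambiguity. */
final class SelectiveRepeatWindow {
    /**
     * Worst case: the sender may still resend seq numbers 0..window-1, while the
     * receiver, having accepted them all, now expects window..2*window-1 (mod seqSpace).
     * If those two ranges share a sequence number, old data can be mistaken for new.
     * Assumes 1 <= window <= seqSpace.
     */
    static boolean isAmbiguous(int seqSpace, int window) {
        for (int k = window; k < 2 * window; k++) {
            if (k % seqSpace < window) {
                return true;
            }
        }
        return false;
    }

    public static void main(String[] args) {
        System.out.println(isAmbiguous(4, 3)); // the example: seq #'s 0..3, window size 3
        System.out.println(isAmbiguous(4, 2)); // same seq #'s, window size 2
    }
}
```

Under this model the overlap disappears exactly when the window size is at most half the size of the sequence-number space.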

- 27. TCP: Overview (RFCs: 793, 1122, 1323, 2018, 2581)
  - point-to-point: one sender, one receiver
  - reliable, in-order byte stream: no “message boundaries”
  - pipelined: TCP congestion and flow control set window size
  - send & receive buffers
  - full duplex data:
    - bi-directional data flow in same connection
    - MSS: maximum segment size
  - connection-oriented: handshaking (exchange of control msgs) init’s sender, receiver state before data exchange
  - flow controlled: sender will not overwhelm receiver
  - [Figure: application writes data through the socket door into the TCP send buffer; segments carry it to the TCP receive buffer, from which the application reads data through its socket door]

- 28. TCP segment structure (32 bits wide)
  - source port #, dest port #
  - sequence number, acknowledgement number: counting by bytes of data (not segments!)
  - head len, not used, flag bits U A P R S F, Receive window (# bytes rcvr willing to accept)
  - checksum (Internet checksum, as in UDP), Urg data pnter
  - Options (variable length)
  - application data (variable length)
  - flag bits:
    - URG: urgent data (generally not used)
    - ACK: ACK # valid
    - PSH: push data now (generally not used)
    - RST, SYN, FIN: connection estab (setup, teardown commands)
  - TCP seq. #’s and ACKs
    - Seq. #’s: byte stream “number” of first byte in segment’s data
    - ACKs: seq # of next byte expected from other side; cumulative ACK
    - Q: how receiver handles out-of-order segments? A: TCP spec doesn’t say - up to implementor
    - simple telnet scenario:
      - User at Host A types ‘C’: A sends Seq=42, ACK=79, data = ‘C’
      - Host B ACKs receipt of ‘C’, echoes back ‘C’: B sends Seq=79, ACK=43, data = ‘C’
      - Host A ACKs receipt of echoed ‘C’: A sends Seq=43, ACK=80

- 29. TCP Round Trip Time and Timeout
  - Q: how to set TCP timeout value?
    - longer than RTT, but RTT varies
    - too short: premature timeout, unnecessary retransmissions
    - too long: slow reaction to segment loss
  - Q: how to estimate RTT?
    - SampleRTT: measured time from segment transmission until ACK receipt
      - ignore retransmissions
    - SampleRTT will vary, want estimated RTT “smoother”
      - average several recent measurements, not just current SampleRTT
  - EstimatedRTT = (1 - α)*EstimatedRTT + α*SampleRTT
    - Exponential weighted moving average
    - influence of past sample decreases exponentially fast
    - typical value: α = 0.125

- 30. Example RTT estimation
  - [Figure: RTT from gaia.cs.umass.edu to fantasia.eurecom.fr; x-axis: time (seconds); y-axis: RTT (milliseconds); series: SampleRTT, EstimatedRTT]
  - TCP Round Trip Time and Timeout: setting the timeout
    - EstimatedRTT plus “safety margin”
      - large variation in EstimatedRTT -> larger safety margin
    - first estimate how much SampleRTT deviates from EstimatedRTT:
      - DevRTT = (1-β)*DevRTT + β*|SampleRTT-EstimatedRTT| (typically, β = 0.25)
    - then set timeout interval:
      - TimeoutInterval = EstimatedRTT + 4*DevRTT
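
The three formulas combine into a small estimator. This is a sketch with assumptions stated up front: the class `RttEstimator` is hypothetical, DevRTT starts at 0, times are plain doubles in milliseconds, and DevRTT is updated with the previous EstimatedRTT before EstimatedRTT itself is updated (the formulas above do not fix the order).

```java
/** Illustrative EWMA-based RTT estimator; class name and initial values are assumptions. */
final class RttEstimator {
    static final double ALPHA = 0.125; // weight of the newest sample in EstimatedRTT
    static final double BETA = 0.25;   // weight of the newest deviation in DevRTT

    private double estimatedRtt;
    private double devRtt = 0.0;

    RttEstimator(double initialRtt) {
        this.estimatedRtt = initialRtt;
    }

    /** Feeds one SampleRTT (from a segment that was not retransmitted). */
    void addSample(double sampleRtt) {
        // Assumption: the deviation is measured against the estimate before this sample.
        devRtt = (1 - BETA) * devRtt + BETA * Math.abs(sampleRtt - estimatedRtt);
        estimatedRtt = (1 - ALPHA) * estimatedRtt + ALPHA * sampleRtt;
    }

    double estimatedRtt() { return estimatedRtt; }
    double devRtt() { return devRtt; }

    /** TimeoutInterval = EstimatedRTT + 4*DevRTT. */
    double timeoutInterval() {
        return estimatedRtt + 4 * devRtt;
    }

    public static void main(String[] args) {
        RttEstimator estimator = new RttEstimator(100.0); // milliseconds
        estimator.addSample(140.0);
        System.out.println(estimator.estimatedRtt());
        System.out.println(estimator.devRtt());
        System.out.println(estimator.timeoutInterval());
    }
}
```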

- 31. TCP reliable data transfer
  - TCP creates rdt service on top of IP’s unreliable service
  - Pipelined segments
  - Cumulative acks
  - TCP uses single retransmission timer
  - Retransmissions are triggered by:
    - timeout events
    - duplicate acks
  - Initially consider simplified TCP sender:
    - ignore duplicate acks
    - ignore flow control, congestion control

- 32. TCP sender events
  - data rcvd from app:
    - create segment with seq #
    - seq # is byte-stream number of first data byte in segment
    - start timer if not already running (think of timer as for oldest unacked segment)
    - expiration interval: TimeOutInterval
  - timeout:
    - retransmit segment that caused timeout
    - restart timer
  - Ack rcvd: if it acknowledges previously unacked segments
    - update what is known to be acked
    - start timer if there are outstanding segments
  - TCP sender (simplified)
    - NextSeqNum = InitialSeqNum; SendBase = InitialSeqNum
    - loop (forever) { switch(event)
      - event: data received from application above: create TCP segment with sequence number NextSeqNum; if (timer currently not running) start timer; pass segment to IP; NextSeqNum = NextSeqNum + length(data)
      - event: timer timeout: retransmit not-yet-acknowledged segment with smallest sequence number; start timer
      - event: ACK received, with ACK field value of y: if (y > SendBase) { SendBase = y; if (there are currently not-yet-acknowledged segments) start timer }
    - } /* end of loop forever */
    - Comment: SendBase-1 is the last cumulatively ack’ed byte. Example: SendBase-1 = 71; y = 73, so the rcvr wants 73+; y > SendBase, so that new data is acked

- 33. TCP: retransmission scenarios
  - lost ACK scenario: Host A sends Seq=92, 8 bytes data; Host B’s ACK=100 is lost (X); A’s timeout expires and it resends Seq=92, 8 bytes data; B sends ACK=100 again; SendBase = 100
  - premature timeout: Host A sends Seq=92, 8 bytes data, then Seq=100, 20 bytes data; Host B sends ACK=100 and ACK=120; the Seq=92 timeout expires before the ACK arrives, so A resends Seq=92, 8 bytes data; SendBase = 100, then SendBase = 120; B sends ACK=120
  - TCP retransmission scenarios (more)
    - cumulative ACK scenario: Host A sends Seq=92, 8 bytes data, then Seq=100, 20 bytes data; ACK=100 is lost (X), but ACK=120 arrives before the timeout; SendBase = 120

- 34. TCP ACK generation [RFC 1122, RFC 2581]
  - Event at receiver: arrival of in-order segment with expected seq #; all data up to expected seq # already ACKed
    - TCP receiver action: delayed ACK. Wait up to 500ms for next segment. If no next segment, send ACK
  - Event at receiver: arrival of in-order segment with expected seq #; one other segment has ACK pending
    - TCP receiver action: immediately send single cumulative ACK, ACKing both in-order segments
  - Event at receiver: arrival of out-of-order segment with higher-than-expected seq. #; gap detected
    - TCP receiver action: immediately send duplicate ACK, indicating seq. # of next expected byte
  - Event at receiver: arrival of segment that partially or completely fills gap
    - TCP receiver action: immediately send ACK, provided that segment starts at lower end of gap
  - Fast Retransmit
    - Time-out period often relatively long: long delay before resending lost packet
    - Detect lost segments via duplicate ACKs
      - Sender often sends many segments back-to-back
      - If segment is lost, there will likely be many duplicate ACKs
    - If sender receives 3 ACKs for the same data, it supposes that segment after ACKed data was lost:
      - fast retransmit: resend segment before timer expires

- 35. Fast retransmit algorithm:
  - event: ACK received, with ACK field value of y
    - if (y > SendBase) { SendBase = y; if (there are currently not-yet-acknowledged segments) start timer }
    - else { increment count of dup ACKs received for y; if (count of dup ACKs received for y = 3) { resend segment with sequence number y } }
  - the else branch handles a duplicate ACK for an already ACKed segment; the resend is the fast retransmit
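
The ACK-handling event can be sketched in Java as follows. This is an illustration rather than TCP itself: the class `FastRetransmitSender` is hypothetical, the retransmission is recorded in a list instead of being sent, the timer is omitted, and only ACKs equal to SendBase are counted as duplicates (a single counter is kept, which is a simplification of "count of dup ACKs received for y").

```java
import java.util.ArrayList;
import java.util.List;

/** Illustrative duplicate-ACK counting for fast retransmit; names are hypothetical. */
final class FastRetransmitSender {
    private long sendBase;
    private int dupAckCount = 0;
    private final List<Long> retransmitted = new ArrayList<>();

    FastRetransmitSender(long initialSeqNum) {
        this.sendBase = initialSeqNum;
    }

    /** Handles an ACK whose ACK field value is y. */
    void onAck(long y) {
        if (y > sendBase) {
            sendBase = y;        // new data acknowledged
            dupAckCount = 0;     // duplicates are counted per ACK value
            // timer restart omitted in this sketch
        } else if (y == sendBase) {
            dupAckCount++;
            if (dupAckCount == 3) {
                retransmitted.add(y); // fast retransmit: resend segment with seq # y
            }
        }
    }

    long sendBase() { return sendBase; }
    List<Long> retransmitted() { return retransmitted; }

    public static void main(String[] args) {
        FastRetransmitSender sender = new FastRetransmitSender(92);
        sender.onAck(100);           // new ACK
        for (int i = 0; i < 3; i++) {
            sender.onAck(100);       // three duplicate ACKs
        }
        System.out.println(sender.retransmitted());
    }
}
```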

- 36. TCP Flow Control
  - receive side of TCP connection has a receive buffer
  - app process may be slow at reading from buffer
  - flow control: sender won’t overflow receiver’s buffer by transmitting too much, too fast
  - speed-matching service: matching the send rate to the receiving app’s drain rate
  - TCP Flow control: how it works
    - (Suppose TCP receiver discards out-of-order segments)
    - spare room in buffer = RcvWindow = RcvBuffer - [LastByteRcvd - LastByteRead]
    - Rcvr advertises spare room by including value of RcvWindow in segments
    - Sender limits unACKed data to RcvWindow
      - guarantees receive buffer doesn’t overflow
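
The window arithmetic is small enough to write out. In this sketch the class `ReceiveWindow` and the `senderMaySend` helper are illustrative names, and all quantities are byte counts passed in as plain numbers rather than read from a real TCP connection.

```java
/** Illustrative flow-control arithmetic; class and method names are assumptions. */
final class ReceiveWindow {
    /** RcvWindow = RcvBuffer - (LastByteRcvd - LastByteRead). */
    static long rcvWindow(long rcvBuffer, long lastByteRcvd, long lastByteRead) {
        return rcvBuffer - (lastByteRcvd - lastByteRead);
    }

    /** The sender keeps unACKed data, including the next segment, within RcvWindow. */
    static boolean senderMaySend(long lastByteSent, long lastByteAcked,
                                 long segmentLength, long rcvWindow) {
        return (lastByteSent - lastByteAcked) + segmentLength <= rcvWindow;
    }

    public static void main(String[] args) {
        // 4096-byte buffer holding 2000 bytes the application has not read yet.
        long window = rcvWindow(4096, 3000, 1000);
        System.out.println(window);
        // 1000 bytes already in flight: does another 1000-byte segment fit?
        System.out.println(senderMaySend(5000, 4000, 1000, window));
    }
}
```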

- 37. TCP Connection Management
  - Recall: TCP sender, receiver establish “connection” before exchanging data segments
  - initialize TCP variables:
    - seq. #s
    - buffers, flow control info (e.g. RcvWindow)
  - client: connection initiator
    - Socket clientSocket = new Socket("hostname", portNumber);
  - server: contacted by client
    - Socket connectionSocket = welcomeSocket.accept();
  - Three way handshake:
    - Step 1: client host sends TCP SYN segment to server
      - specifies initial seq #
      - no data
    - Step 2: server host receives SYN, replies with SYNACK segment
      - server allocates buffers
      - specifies server initial seq. #
    - Step 3: client receives SYNACK, replies with ACK segment, which may contain data

- 38. TCP Connection Management (cont.)
  - Closing a connection: client closes socket: clientSocket.close();
  - Step 1: client end system sends TCP FIN control segment to server
  - Step 2: server receives FIN, replies with ACK. Closes connection, sends FIN.
  - Step 3: client receives FIN, replies with ACK.
    - Enters “timed wait” - will respond with ACK to received FINs
  - Step 4: server receives ACK. Connection closed.
  - Note: with small modification, can handle simultaneous FINs.
  - [Figure: client sends FIN (close); server replies with ACK, then sends its own FIN (close); client replies with ACK and enters timed wait; both sides end up closed]

- 39. TCP Connection Management (cont.)
  - [Figures: TCP client lifecycle; TCP server lifecycle]

- 40. Principles of Congestion Control
  - Congestion: informally: “too many sources sending too much data too fast for network to handle”
  - different from flow control!
  - manifestations:
    - lost packets (buffer overflow at routers)
    - long delays (queueing in router buffers)
  - a top-10 problem!
  - Causes/costs of congestion: scenario 1
    - two senders, two receivers
    - one router, infinite buffers
    - no retransmission
    - [Figure: Host A and Host B send original data at rate λin into unlimited shared output link buffers; λout is the rate delivered to the receivers]
    - large delays when congested
    - maximum achievable throughput

- 41. Causes/costs of congestion: scenario 2
  - one router, finite buffers
  - sender retransmission of lost packet
  - [Figure: Host A and Host B send into finite shared output link buffers; λin: original data; λ'in: original data, plus retransmitted data; λout: delivered data]
  - always: λin = λout (goodput)
  - “perfect” retransmission only when loss: λ'in > λout
  - retransmission of delayed (not lost) packet makes λ'in larger (than perfect case) for same λout
  - [Figure: three plots (a, b, c) of λout against λin, with axis marks at R/2, R/3 and R/4]
  - “costs” of congestion:
    - more work (retrans) for given “goodput”
    - unneeded retransmissions: link carries multiple copies of pkt

- 42. Causes/costs of congestion: scenario 3
  - four senders
  - multihop paths
  - timeout/retransmit
  - Q: what happens as λin and λ'in increase?
  - [Figure: Host A and Host B among senders whose multihop paths share finite output link buffers; λin: original data; λ'in: original data, plus retransmitted data; λout: delivered data]
  - Another “cost” of congestion: when a packet is dropped, any upstream transmission capacity used for that packet was wasted!

- 43. Approaches towards congestion control
    - Two broad approaches towards congestion control:
        - End-end congestion control:
            - no explicit feedback from network
            - congestion inferred from end-system observed loss, delay
            - approach taken by TCP
        - Network-assisted congestion control:
            - routers provide feedback to end systems
            - single bit indicating congestion (SNA, DECbit, TCP/IP ECN, ATM)
            - explicit rate sender should send at

    Case study: ATM ABR congestion control
    - ABR: available bit rate:
        - “elastic service”
        - if sender’s path “underloaded”: sender should use available bandwidth
        - if sender’s path congested: sender throttled to minimum guaranteed rate
    - RM (resource management) cells:
        - sent by sender, interspersed with data cells
        - bits in RM cell set by switches (“network-assisted”)
            - NI bit: no increase in rate (mild congestion)
            - CI bit: congestion indication
        - RM cells returned to sender by receiver, with bits intact

- 44. Case study: ATM ABR congestion control
    - two-byte ER (explicit rate) field in RM cell
        - congested switch may lower ER value in cell
        - sender’s send rate is thus the minimum supportable rate on the path
    - EFCI bit in data cells: set to 1 in congested switch
        - if data cell preceding RM cell has EFCI set, receiver sets CI bit in returned RM cell
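The ER mechanism amounts to taking a minimum along the path. The following is a minimal sketch under that reading only; the function name `abr_explicit_rate`, its parameters, and the numeric rates are hypothetical and are not taken from the ATM specification.

```python
def abr_explicit_rate(initial_er, supportable_rates):
    """Return the ER value that comes back to the sender in the RM cell.

    initial_er: rate the sender writes into the RM cell (hypothetical units).
    supportable_rates: rate each switch on the path can support, in path order.
    """
    er = initial_er
    for rate in supportable_rates:
        # A congested switch may lower ER; in this sketch it never raises it.
        er = min(er, rate)
    return er
```

For instance, `abr_explicit_rate(100, [80, 40, 60])` yields the rate of the tightest switch on the path.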

- 45. TCP congestion control: additive increase, multiplicative decrease
    - Approach: increase transmission rate (window size), probing for usable bandwidth, until loss occurs
        - additive increase: increase CongWin by 1 MSS every RTT until loss detected
        - multiplicative decrease: cut CongWin in half after loss
    - Figure: congestion window size against time (y-axis marks at 8, 16 and 24 Kbytes); sawtooth behavior: probing for bandwidth

    TCP Congestion Control: details
    - sender limits transmission: LastByteSent - LastByteAcked ≤ CongWin
    - roughly, rate = CongWin/RTT bytes/sec
    - CongWin is dynamic, function of perceived network congestion
    - How does sender perceive congestion?
        - loss event = timeout or 3 duplicate ACKs
        - TCP sender reduces rate (CongWin) after loss event
    - three mechanisms:
        - AIMD
        - slow start
        - conservative after timeout events
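The sawtooth can be reproduced with a few lines of code. This is an idealised sketch, not a TCP implementation: it assumes CongWin is measured in MSS units, is updated once per RTT, and that the rounds in which a loss is detected are supplied by the caller. The name `aimd_trace` and its parameters are made up for illustration.

```python
def aimd_trace(loss_rounds, rounds, start=8, mss=1):
    """Return CongWin (in MSS units) at the start of each RTT round."""
    congwin = start
    trace = []
    for r in range(rounds):
        trace.append(congwin)
        if r in loss_rounds:
            # Multiplicative decrease: halve, but never go below 1 MSS.
            congwin = max(mss, congwin / 2)
        else:
            # Additive increase: one MSS per RTT.
            congwin += mss
    return trace


# Window climbs by 1 MSS per RTT, then halves after the loss in round 3.
print(aimd_trace({3}, 6))
```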

- 46. TCP Slow Start
    - When connection begins, CongWin = 1 MSS
        - Example: MSS = 500 bytes & RTT = 200 msec
        - initial rate = 20 kbps
    - available bandwidth may be >> MSS/RTT
        - desirable to quickly ramp up to respectable rate
    - When connection begins, increase rate exponentially fast until first loss event

    TCP Slow Start (more)
    - When connection begins, increase rate exponentially until first loss event:
        - double CongWin every RTT
        - done by incrementing CongWin for every ACK received
    - Summary: initial rate is slow but ramps up exponentially fast
    - Figure: Host A / Host B timeline; one segment sent in the first RTT, two segments in the second, four segments in the third
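The slide's numbers can be checked directly: one 500-byte segment per 200 msec RTT is 20 kbps, and adding one MSS per ACK doubles the window each RTT. The sketch below assumes no loss and one ACK per segment; the function names are illustrative only.

```python
def initial_rate_bps(mss_bytes, rtt_ms):
    """Rate when CongWin = 1 MSS: one segment per RTT, in bits per second."""
    return mss_bytes * 8 * 1000 / rtt_ms


def slow_start_windows(rounds, mss_bytes=500):
    """CongWin in bytes at the start of each RTT, assuming no loss."""
    congwin = mss_bytes
    windows = []
    for _ in range(rounds):
        windows.append(congwin)
        acks = congwin // mss_bytes   # one ACK per segment sent this RTT
        congwin += acks * mss_bytes   # +1 MSS per ACK doubles the window
    return windows


print(initial_rate_bps(500, 200))   # bits per second for the slide's example
print(slow_start_windows(4))        # bytes in flight in RTTs 1 to 4
```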

- 47. Refinement
    - Q: When should the exponential increase switch to linear?
    - A: When CongWin gets to 1/2 of its value before timeout.
    - Implementation:
        - Variable Threshold
        - At loss event, Threshold is set to 1/2 of CongWin just before loss event

    Refinement: inferring loss
    - After 3 dup ACKs:
        - CongWin is cut in half
        - window then grows linearly
    - But after timeout event:
        - CongWin instead set to 1 MSS;
        - window then grows exponentially to a threshold, then grows linearly
    - Philosophy:
        - 3 dup ACKs indicates network capable of delivering some segments
        - timeout indicates a “more alarming” congestion scenario

- 48. Summary: TCP Congestion Control
    - When CongWin is below Threshold, sender is in slow-start phase, window grows exponentially.
    - When CongWin is above Threshold, sender is in congestion-avoidance phase, window grows linearly.
    - When a triple duplicate ACK occurs, Threshold set to CongWin/2 and CongWin set to Threshold.
    - When timeout occurs, Threshold set to CongWin/2 and CongWin is set to 1 MSS.

    TCP sender congestion control

    | State | Event | TCP Sender Action | Commentary |
    | --- | --- | --- | --- |
    | Slow Start (SS) | ACK receipt for previously unacked data | CongWin = CongWin + MSS; if (CongWin > Threshold) set state to “Congestion Avoidance” | Resulting in a doubling of CongWin every RTT |
    | Congestion Avoidance (CA) | ACK receipt for previously unacked data | CongWin = CongWin + MSS * (MSS/CongWin) | Additive increase, resulting in increase of CongWin by 1 MSS every RTT |
    | SS or CA | Loss event detected by triple duplicate ACK | Threshold = CongWin/2; CongWin = Threshold; set state to “Congestion Avoidance” | Fast recovery, implementing multiplicative decrease. CongWin will not drop below 1 MSS. |
    | SS or CA | Timeout | Threshold = CongWin/2; CongWin = 1 MSS; set state to “Slow Start” | Enter slow start |
    | SS or CA | Duplicate ACK | Increment duplicate ACK count for segment being acked | CongWin and Threshold not changed |
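The sender table maps naturally onto a small event-driven state machine. The class below is a teaching sketch of that table only; the class and method names, the default `mss` and `threshold` values, and the decision to fold the triple-duplicate-ACK case into `on_dup_ack` are assumptions made here, and real TCP stacks differ in many details.

```python
class TcpCongestionControl:
    """Toy model of the slide's sender table (all sizes in bytes)."""

    def __init__(self, mss=1000, threshold=8000):
        self.mss = mss
        self.congwin = mss          # connection starts with CongWin = 1 MSS
        self.threshold = threshold  # hypothetical initial Threshold
        self.state = "SS"           # "SS" = slow start, "CA" = congestion avoidance
        self.dup_acks = 0

    def on_new_ack(self):
        """ACK receipt for previously unacked data."""
        self.dup_acks = 0
        if self.state == "SS":
            self.congwin += self.mss
            if self.congwin > self.threshold:
                self.state = "CA"
        else:
            # Additive increase: about 1 MSS per RTT in total.
            self.congwin += self.mss * self.mss / self.congwin

    def on_dup_ack(self):
        """Duplicate ACK; the third one counts as a loss event."""
        self.dup_acks += 1
        if self.dup_acks == 3:
            self.threshold = self.congwin / 2
            # CongWin will not drop below 1 MSS.
            self.congwin = max(self.threshold, self.mss)
            self.state = "CA"
            self.dup_acks = 0

    def on_timeout(self):
        """Timeout: fall back to slow start from 1 MSS."""
        self.threshold = self.congwin / 2
        self.congwin = self.mss
        self.state = "SS"
        self.dup_acks = 0
```

Feeding the object a sequence of `on_new_ack()`, `on_dup_ack()` and `on_timeout()` calls and reading `congwin` after each one reproduces the exponential, linear, halving and reset behaviours listed in the summary.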

- 49. TCP throughput
    - What’s the average throughput of TCP as a function of window size and RTT?
        - Ignore slow start
    - Let W be the window size when loss occurs.
    - When window is W, throughput is W/RTT
    - Just after loss, window drops to W/2, throughput to W/(2 RTT).
    - Average throughput: 0.75 W/RTT

    TCP Futures
    - Example: 1500 byte segments, 100ms RTT, want 10 Gbps throughput
    - Requires window size W = 83,333 in-flight segments
    - Throughput in terms of loss rate: $\dfrac{1.22 \cdot MSS}{RTT \sqrt{L}}$
    - ➜ $L = 2 \cdot 10^{-10}$ Wow
    - New versions of TCP for high-speed needed!
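The TCP Futures numbers can be reproduced from the two formulas on the slides. The sketch below assumes the window figure comes from throughput = W · MSS / RTT (without the 0.75 averaging factor) and inverts throughput = 1.22 · MSS / (RTT · √L) for the loss rate; the function names are illustrative.

```python
def average_throughput(w_bits, rtt_s):
    """Average of the sawtooth between W/2 and W: 0.75 * W / RTT."""
    return 0.75 * w_bits / rtt_s


def window_for_rate(rate_bps, rtt_s, mss_bytes):
    """Segments that must be in flight to sustain rate_bps."""
    return rate_bps * rtt_s / (mss_bytes * 8)


def loss_rate_for_rate(rate_bps, rtt_s, mss_bytes):
    """Invert throughput = 1.22 * MSS / (RTT * sqrt(L)) for L."""
    return (1.22 * mss_bytes * 8 / (rtt_s * rate_bps)) ** 2


print(window_for_rate(10e9, 0.1, 1500))     # roughly 83,333 segments
print(loss_rate_for_rate(10e9, 0.1, 1500))  # roughly 2e-10
```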

- 50. TCP Fairness
    - Fairness goal: if K TCP sessions share same bottleneck link of bandwidth R, each should have average rate of R/K
    - Figure: TCP connection 1 and TCP connection 2 sharing a bottleneck router of capacity R

    Why is TCP fair?
    - Two competing sessions:
        - Additive increase gives slope of 1, as throughput increases
        - multiplicative decrease decreases throughput proportionally
    - Figure: Connection 2 throughput against Connection 1 throughput (both axes to R) with the equal bandwidth share line; the trajectory alternates between “congestion avoidance: additive increase” and “loss: decrease window by factor of 2”
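The convergence argument can be watched numerically. This is a simplified sketch, assuming both sessions see the same RTT, both detect loss in the same round whenever their combined rate exceeds the link capacity, and rates are in arbitrary units; `two_flow_aimd` is a made-up name.

```python
def two_flow_aimd(x1, x2, capacity, rounds):
    """Evolve two AIMD flows sharing one bottleneck; return final rates."""
    for _ in range(rounds):
        if x1 + x2 > capacity:
            # Loss: both flows halve, which also halves the gap between them.
            x1, x2 = x1 / 2, x2 / 2
        else:
            # Congestion avoidance: both add 1, so the gap is unchanged.
            x1, x2 = x1 + 1, x2 + 1
    return x1, x2


a, b = two_flow_aimd(1, 50, capacity=100, rounds=500)
print(abs(a - b))  # gap between the flows shrinks toward zero
```

Additive increase keeps the difference between the two rates constant while multiplicative decrease halves it, so each loss event moves the pair closer to the equal-share line.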

- 51. Fairness (more)
    - Fairness and UDP
        - Multimedia apps often do not use TCP
            - do not want rate throttled by congestion control
        - Instead use UDP:
            - pump audio/video at constant rate, tolerate packet loss
        - Research area: TCP friendly
    - Fairness and parallel TCP connections
        - nothing prevents app from opening parallel connections between 2 hosts.
        - Web browsers do this
        - Example: link of rate R supporting 9 connections;
            - new app asks for 1 TCP, gets rate R/10
            - new app asks for 11 TCPs, gets about R/2 (11 of 20 connections)!

    Delay modeling
    - Q: How long does it take to receive an object from a Web server after sending a request?
    - Ignoring congestion, delay is influenced by:
        - TCP connection establishment
        - data transmission delay
        - slow start
    - Notation, assumptions:
        - Assume one link between client and server of rate R
        - S: MSS (bits)
        - O: object size (bits)
        - no retransmissions (no loss, no corruption)
    - Window size:
        - First assume: fixed congestion window, W segments
        - Then dynamic window, modeling slow start
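The parallel-connection example is plain proportional sharing. A small sketch, assuming every TCP connection on the link ends up with an equal share of R; `app_share` is an illustrative name.

```python
from fractions import Fraction


def app_share(app_connections, other_connections):
    """Fraction of link rate R obtained by an app, with equal per-connection shares."""
    total = app_connections + other_connections
    return Fraction(app_connections, total)


print(app_share(1, 9))    # one new connection next to 9 existing ones
print(app_share(11, 9))   # eleven new connections next to 9 existing ones
```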

- 52. Fixed congestion window (1)
    - First case: WS/R > RTT + S/R: ACK for first segment in window returns before window’s worth of data sent
    - delay = 2RTT + O/R

    Fixed congestion window (2)
    - Second case: WS/R < RTT + S/R: wait for ACK after sending window’s worth of data
    - delay = 2RTT + O/R + (K-1)[S/R + RTT - WS/R], where K is the number of windows that cover the object
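Both fixed-window cases fit in one function. The sketch below assumes K = ⌈O/(WS)⌉, which is how "number of windows that cover the object" is read here, and it treats the boundary case WS/R = RTT + S/R as having no stall; the function name and argument order are illustrative.

```python
import math


def fixed_window_delay(O, S, R, rtt, W):
    """Delay to fetch an object of O bits with a fixed window of W segments.

    O: object size (bits), S: MSS (bits), R: link rate (bits/sec),
    rtt: round-trip time (sec), W: window size (segments).
    """
    stall = S / R + rtt - W * S / R     # idle time after each window
    if stall <= 0:
        # First case: ACKs return before the window is exhausted.
        return 2 * rtt + O / R
    # Second case: server stalls after every window except the last.
    K = math.ceil(O / (W * S))          # assumed definition of K
    return 2 * rtt + O / R + (K - 1) * stall


# 10 segments of 8000 bits over a 1 Mbps link with a 100 msec RTT
print(fixed_window_delay(80000, 8000, 1e6, 0.1, W=4))
print(fixed_window_delay(80000, 8000, 1e6, 0.1, W=20))
```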

- 53. TCP Delay Modeling: Slow Start (1)
    - Now suppose window grows according to slow start
    - Will show that the delay for one object is:

    $$\text{Latency} = 2\,RTT + \frac{O}{R} + P\left[RTT + \frac{S}{R}\right] - (2^{P} - 1)\frac{S}{R}$$

    - where P is the number of times TCP idles at server: $P = \min\{Q, K-1\}$
        - where Q is the number of times the server idles if the object were of infinite size.
        - and K is the number of windows that cover the object.

    TCP Delay Modeling: Slow Start (2)
    - Delay components:
        - 2 RTT for connection estab and request
        - O/R to transmit object
        - time server idles due to slow start
    - Server idles: P = min{K-1, Q} times
    - Example:
        - O/S = 15 segments
        - K = 4 windows
        - Q = 2
        - P = min{K-1, Q} = 2
        - Server idles P = 2 times
    - Figure: client/server timeline (time at client, time at server): initiate TCP connection; request object; first window = S/R; second window = 2S/R; third window = 4S/R; fourth window = 8S/R; complete transmission; object delivered

- 54. TCP Delay Modeling (3)
    - $\frac{S}{R} + RTT$ = time from when server starts to send segment until server receives acknowledgement
    - $2^{k-1}\frac{S}{R}$ = time to transmit the kth window
    - $\left[\frac{S}{R} + RTT - 2^{k-1}\frac{S}{R}\right]^{+}$ = idle time after the kth window

    $$
    \begin{aligned}
    \text{delay} &= \frac{O}{R} + 2\,RTT + \sum_{p=1}^{P} \text{idleTime}_p \\
    &= \frac{O}{R} + 2\,RTT + \sum_{k=1}^{P}\left[\frac{S}{R} + RTT - 2^{k-1}\frac{S}{R}\right] \\
    &= \frac{O}{R} + 2\,RTT + P\left[RTT + \frac{S}{R}\right] - (2^{P} - 1)\frac{S}{R}
    \end{aligned}
    $$

    TCP Delay Modeling (4)
    - Recall K = number of windows that cover object
    - How do we calculate K?

    $$
    \begin{aligned}
    K &= \min\{k : 2^{0}S + 2^{1}S + \cdots + 2^{k-1}S \ge O\} \\
    &= \min\{k : 2^{0} + 2^{1} + \cdots + 2^{k-1} \ge O/S\} \\
    &= \min\{k : 2^{k} - 1 \ge O/S\} \\
    &= \min\{k : k \ge \log_2(O/S + 1)\} \\
    &= \left\lceil \log_2\left(\frac{O}{S} + 1\right) \right\rceil
    \end{aligned}
    $$

    - Calculation of Q, number of idles for infinite-size object, is similar (see HW).
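The slow-start latency formula can be evaluated for the slides' example (O/S = 15, K = 4, Q = 2, P = 2). The sketch below computes K from the closed form and finds Q by counting the windows whose idle time S/R + RTT - 2^(k-1) S/R is positive, which is one way to do the calculation the slides leave as homework. The function names and the sample values S = 1000 bits, R = 1000 bps, RTT = 2 s are hypothetical, chosen only so that Q = 2.

```python
import math


def num_windows(O, S):
    """K: number of slow-start windows that cover an object of O bits."""
    return math.ceil(math.log2(O / S + 1))


def num_idles_infinite(S, R, rtt):
    """Q: number of times the server would idle for an infinitely large object."""
    q = 0
    # Idle time after window k = q + 1 is S/R + RTT - 2^q * S/R.
    while S / R + rtt - 2 ** q * S / R > 0:
        q += 1
    return q


def slow_start_latency(O, S, R, rtt):
    """Latency = 2 RTT + O/R + P [RTT + S/R] - (2^P - 1) S/R."""
    K = num_windows(O, S)
    Q = num_idles_infinite(S, R, rtt)
    P = min(Q, K - 1)
    return 2 * rtt + O / R + P * (rtt + S / R) - (2 ** P - 1) * S / R


print(num_windows(15000, 1000))          # K for a 15-segment object
print(num_idles_infinite(1000, 1000, 2)) # Q for S/R = 1 s, RTT = 2 s
print(slow_start_latency(15000, 1000, 1000, 2))
```

With these sample values the idle times after the first two windows are 2 s and 1 s, so the latency is the 4 s of setup plus 15 s of transmission plus 3 s of idling.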

- 55. HTTP Modeling
    - Assume Web page consists of:
        - 1 base HTML page (of size O bits)
        - M images (each of size O bits)
    - Non-persistent HTTP:
        - M+1 TCP connections in series
        - Response time = (M+1)O/R + (M+1)2RTT + sum of idle times
    - Persistent HTTP:
        - 2 RTT to request and receive base HTML file
        - 1 RTT to request and receive M images
        - Response time = (M+1)O/R + 3RTT + sum of idle times
    - Non-persistent HTTP with X parallel connections
        - Suppose M/X integer.
        - 1 TCP connection for base file
        - M/X sets of parallel connections for images.
        - Response time = (M+1)O/R + (M/X + 1)2RTT + sum of idle times

    HTTP Response time (in seconds)
    - RTT = 100 msec, O = 5 Kbytes, M = 10 and X = 5
    - Figure: bar chart of response time (0 to 20 seconds) for non-persistent, persistent, and parallel non-persistent HTTP at 28 Kbps, 100 Kbps, 1 Mbps and 10 Mbps
    - For low bandwidth, connection & response time dominated by transmission time.
    - Persistent connections only give minor improvement over parallel connections.
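The three response-time formulas are easy to compare side by side. In this sketch the slow-start idle time is passed in as a plain number (`idle_sum`, defaulting to zero) rather than computed, so the printed values are lower bounds on the modelled response time; the function names are illustrative, and 5 Kbytes is taken as 40,000 bits.

```python
def nonpersistent(M, O, R, rtt, idle_sum=0.0):
    """M+1 TCP connections in series."""
    return (M + 1) * O / R + (M + 1) * 2 * rtt + idle_sum


def persistent(M, O, R, rtt, idle_sum=0.0):
    """2 RTT for the base file, 1 RTT for all M images."""
    return (M + 1) * O / R + 3 * rtt + idle_sum


def parallel_nonpersistent(M, X, O, R, rtt, idle_sum=0.0):
    """One connection for the base file, then M/X sets of X parallel connections."""
    return (M + 1) * O / R + (M / X + 1) * 2 * rtt + idle_sum


# Slide parameters: RTT = 100 msec, O = 5 Kbytes, M = 10, X = 5, at 1 Mbps
O_BITS, RTT, M, X, R = 40_000, 0.1, 10, 5, 1e6
print(nonpersistent(M, O_BITS, R, RTT))
print(persistent(M, O_BITS, R, RTT))
print(parallel_nonpersistent(M, X, O_BITS, R, RTT))
```

All three share the transmission term (M+1)O/R, so the schemes differ only in how many round trips they spend, which is why the gap between them matters most when the link is fast.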